At a glance: what you need before wiring MCTS into vLLM

At a Glance: Time: 4–6 hours for initial integration · Prereqs: Baseline knowledge of transformer decoding and tree-search algorithms · Hardware: NVIDIA H100 (recommended) or A100 80GB (fallback) · Software: Python 3.10+, PyTorch 2.5+, vLLM v0.6.0+ · Cost: GPU compute for integration testing; no licensing fees beyond cloud instance costs

vLLM describes itself as "a high-throughput and memory-efficient inference and serving engine for LLMs," and its AsyncLLMEngine — "an asynchronous wrapper for LLMEngine" that uses asyncio to maintain a background processing loop — is the hook surface on which this entire MCTS integration rests. The engine's async design lets you issue batched expansion requests without blocking the search policy, but it does not provide a native MCTS plugin interface. Everything you build here is an application-layer adapter.

The hard constraint is GPU VRAM. Tree search multiplies KV cache demand proportionally to the branching factor and rollout depth. On an NVIDIA H100 with 80 GB HBM3, a moderately aggressive search (branching factor 4, depth 8) still leaves headroom for simultaneous requests. On an H100 NVL or A100 80GB, that headroom narrows with long-context prompts. Below 80 GB, long rollouts may exhaust the KV cache before the search has a chance to improve quality, so reduce context length or branch count first.

Prerequisites and environment checks

Your environment must satisfy every version floor before you touch any MCTS code. Drift on a single dependency — especially between PyTorch and the CUDA toolkit — produces subtle failures that appear only during high-concurrency search.

$ python --version # must be 3.10.x or later

$ python -c "import torch; print(torch.__version__)" # must be 2.5.x or later

$ python -c "import torch; print(torch.cuda.is_available())" # must be True

$ python -c "import vllm; print(vllm.__version__)" # must be 0.6.0 or later

$ nvidia-smi # check driver version and VRAM headroom

Expected output for a compliant environment:

3.10.16

2.5.1+cu124

True

0.6.x

# local_env.yaml — environment assumptions for this integration

python: "3.10"

torch: "2.5.1"

vllm: "0.6.0"

cuda_toolkit: "12.4"

target_gpu: "H100 80GB or A100 80GB"

model_context_length: 8192 # adjust per your model; longer contexts eat VRAM faster

mcts_branching_factor: 4 # starting point; tune down under OOM pressure

mcts_max_depth: 8

Hardware, driver, and VRAM thresholds

The A100 80GB is a practical floor for this integration; the H100 is the recommended target.

NVIDIA measures a 2.9× inference speedup on H100 GPUs under long-context setups (65,536-token input, 1,024-token output) for their Nemotron-H architecture — a useful external reference point when baselining your own throughput expectations. At a branching factor of 4, your effective request load per MCTS iteration quadruples versus standard decoding, so that baseline throughput headroom is directly consumed by the search.

Production Note: On A100 80GB, reserve at least 20 GB of VRAM for search-tree KV state above your model's baseline memory footprint. vLLM pre-allocates KV cache on startup based on

gpu_memory_utilization; set this to 0.75 or lower when running MCTS to leave room for branch metadata. On H100, 0.85 is a reasonable ceiling. Monitornvidia-smiduring search; if VRAM climbs monotonically across iterations, your backtracking cleanup is incomplete.

On hardware below A100 80GB — a 40GB A100 or A6000 — vLLM's KV cache pre-allocation alone may saturate available memory before MCTS adds any rollout overhead, as shown in the vLLM KV cache memory issue tracker. Do not attempt this integration below 80 GB without significantly reducing model size or context length.

Python, PyTorch, and vLLM version pinning

Pin to exact versions. The vLLM release cadence is fast; later releases ship with newer PyTorch baselines that may conflict with a v0.6.x integration surface.

$ conda create -n mcts-vllm python=3.10.16 -y

$ conda activate mcts-vllm

# Install PyTorch with CUDA 12.4 support (match to your driver)

$ pip install torch==2.5.1 torchvision torchaudio --index-url https://download.pytorch.org/whl/cu124

# Pin vLLM to the 0.6.x line to keep the AsyncLLMEngine API stable

$ pip install "vllm==0.6.0"

# Verify the install matrix is coherent

$ python -c "import vllm, torch; print(vllm.__version__, torch.__version__, torch.cuda.get_device_name(0))"

vLLM issue traffic confirms PyTorch 2.5.1 with Python 3.10.16 is an established real-world environment. Do not validate against a release tip; the v0.6.0 wheel and the v0.6.x AsyncLLMEngine API surface documented at the v0.6.0 AsyncLLMEngine module docs are your canonical reference. Later releases may change internal APIs that the MCTS adapter touches.

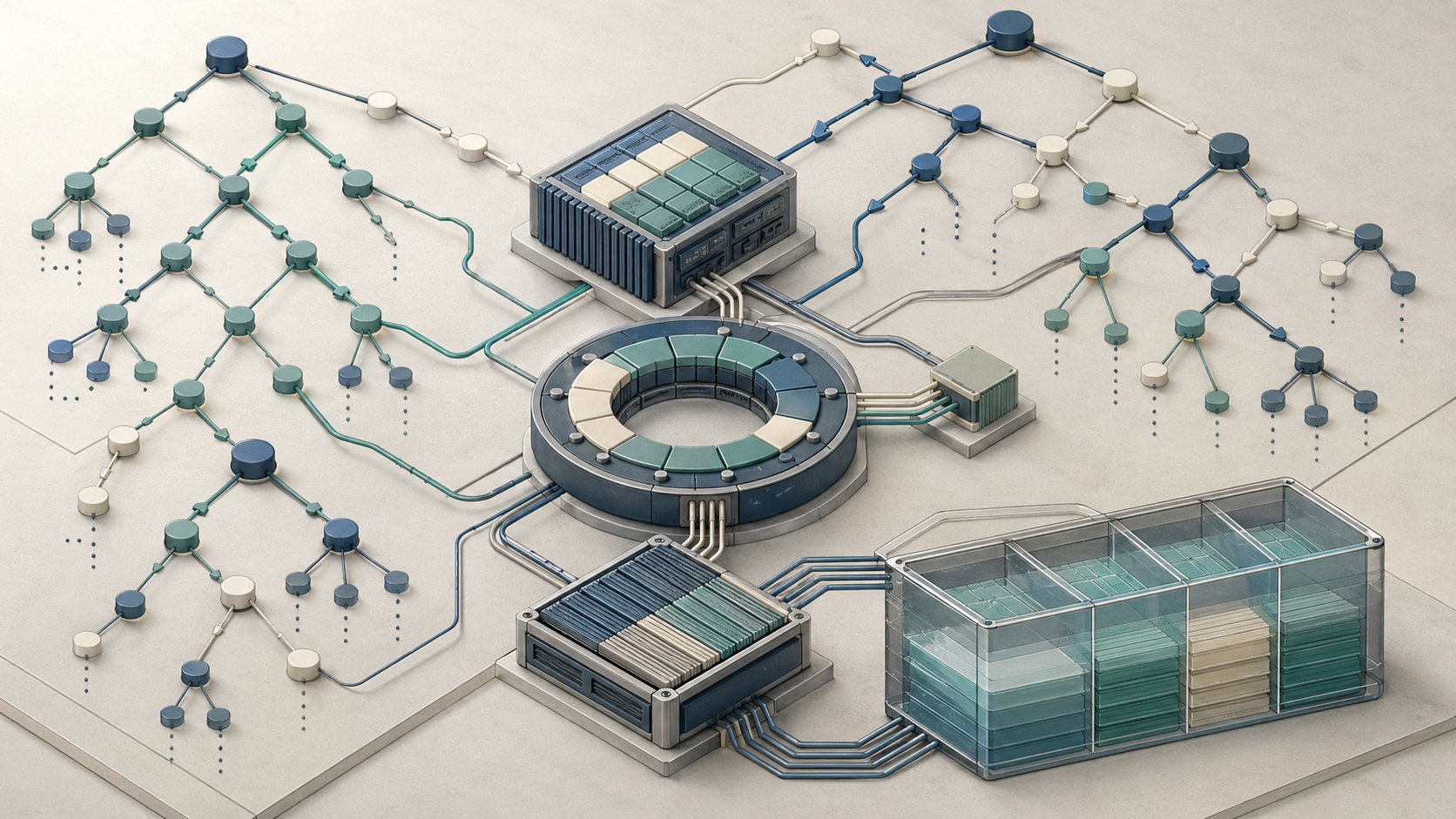

How vLLM's engine loop exposes the hooks MCTS needs

The LLMEngine processes requests sequentially from a waiting queue; AsyncLLMEngine wraps it with asyncio so that request submission and output consumption are non-blocking. This async boundary is precisely where an MCTS policy can insert itself: submit expansion requests, await token outputs, and feed scores back into the search tree — all without custom patches to vLLM internals.

vLLM supports "various decoding algorithms, including parallel sampling, beam search, and more", but there is no upstream MCTS plugin interface. The integration lives entirely in the application layer as an adapter around AsyncLLMEngine.

flowchart LR

subgraph App[Application Layer]

P[MCTS Policy]

A[MCTSEngineAdapter]

S[Search Tree State]

end

subgraph VLLM[vLLM Runtime]

Q[LLMEngine waiting queue]

E[AsyncLLMEngine]

D[Sampling / decoding]

K[KV cache / PagedAttention]

end

P -->|select / expand / backpropagate| A

A -->|generate requests| E

E --> Q --> D --> K

D -->|token strings| A

A -->|leaf values| P

P <--> S

E -->|abort abandoned branch| K

import asyncio

from vllm import AsyncLLMEngine, AsyncEngineArgs, SamplingParams

from typing import AsyncGenerator

class MCTSEngineAdapter:

"""

Application-layer adapter. Wraps AsyncLLMEngine to provide

MCTS-friendly expansion and result-collection interfaces.

"""

def __init__(self, model: str, gpu_memory_utilization: float = 0.80):

args = AsyncEngineArgs(

model=model,

gpu_memory_utilization=gpu_memory_utilization,

# Keep the background loop running for async request draining

disable_log_requests=True,

)

# start_engine_loop=True keeps the asyncio loop alive between requests

self.engine = AsyncLLMEngine.from_engine_args(args)

async def expand(

self,

prompt: str,

request_id: str,

n_samples: int,

max_tokens: int,

temperature: float,

) -> list[str]:

"""Issue one engine request that returns n_samples parallel completions."""

params = SamplingParams(n=n_samples, max_tokens=max_tokens, temperature=temperature)

outputs: list[str] = []

async for result in self.engine.generate(prompt, params, request_id=request_id):

if result.finished:

outputs = [o.text for o in result.outputs]

return outputs

Pro Tip: Keep the search policy class entirely separate from

MCTSEngineAdapter. The adapter's only job is translating MCTS expansion requests into vLLM API calls and returning token strings. All tree state — scores, visit counts, parent pointers — lives in the policy object. Mixing cache-management logic with tree-traversal logic is the primary source of state-desync bugs in this integration.

Where token sampling ends and search policy begins

vLLM's token sampling — temperature, top-p, top-k, repetition penalty — executes inside the engine before outputs reach your code. The MCTS expansion call receives fully decoded token strings, not logits or intermediate states. Your search policy operates on these strings as leaf values.

The boundary is explicit: the engine owns everything from logit computation to token decode; the MCTS policy owns everything from output string evaluation to tree update.

from dataclasses import dataclass, field

@dataclass

class SamplerConfig:

"""

Parameters passed per-expansion to the engine.

The MCTS policy selects these based on tree state;

vLLM executes them as a standard sampling call.

"""

temperature: float = 0.8 # higher = more diverse children

top_p: float = 0.95

max_tokens: int = 256 # rollout length per branch

n_samples: int = 1 # number of child nodes per expansion call

def build_sampler_params(config: SamplerConfig) -> SamplingParams:

"""Convert MCTS config to vLLM SamplingParams — the only coupling point."""

return SamplingParams(

temperature=config.temperature,

top_p=config.top_p,

max_tokens=config.max_tokens,

n=config.n_samples,

)

Isolation here is non-negotiable: MCTS branches must produce deterministic selection given a fixed seed, but vLLM sampling can be stochastic. Separate the concerns or your regression tests will be unverifiable.

Why KV cache state must be decoupled from tree traversal

vLLM manages KV cache internally using PagedAttention — physical memory blocks mapped to logical sequence slots. When you submit a request, vLLM allocates pages for that request's KV state. When the request completes or is cancelled, those pages are returned to the allocator. The critical failure mode in MCTS is abandoning a search branch without signalling completion to the engine: the pages stay allocated, the Python-side tensor references stay live, and across dozens of backtracking events the GPU VRAM fills with stale state. Prefix caching matters here too, because shared prefixes can reduce duplication only if abandoned branches are finalized cleanly.

Watch Out: Backtracking without explicit branch finalization produces cumulative memory pressure that does not trigger an immediate OOM. Instead, available KV pages drain gradually across MCTS iterations until new expansion requests begin failing silently or the allocator throws a CUDA out-of-memory error many iterations later. By that point, the causal branch is no longer on the stack, making the source hard to identify. Always finalize abandoned branches synchronously at the point of backtracking, not lazily during garbage collection.

The fix is explicit: every branch that the search abandons must have its associated request cancelled through the engine and its Python-side tensors explicitly released before the backtracking step proceeds.

async def finalize_abandoned_branch(

engine: AsyncLLMEngine,

request_id: str,

token_cache: dict,

) -> None:

"""

Called synchronously when the MCTS policy decides to abandon a branch.

Cancels the engine-side request to free KV pages, then clears

application-side references to prevent reference-count leaks.

"""

try:

await engine.abort(request_id) # signals vLLM to free KV pages

except Exception:

pass # request may already be complete; abort is idempotent here

# Remove application-side token history for this branch

if request_id in token_cache:

del token_cache[request_id]

import torch

import gc

gc.collect()

torch.cuda.empty_cache() # flush CUDA allocator fragmentation after batch of clears

Step 1: Register a custom MCTS plugin in the vLLM execution path

The MCTS plugin is an application-level class that owns the search lifecycle and delegates all engine calls to MCTSEngineAdapter. Registration is not an upstream API call; it means instantiating the adapter and the search policy together under the same async event loop.

According to the vLLM v0.6.0 AsyncLLMEngine module docs, "The LLMEngine is kicked by the generate method when there are requests in the waiting queue." Your plugin drives that queue by issuing expansion requests and draining outputs.

$ python -m mcts_plugin \

--model meta-llama/Llama-3-8B-Instruct \

--gpu-memory-utilization 0.80 \

--max-iterations 10 \

--prompt "Prove that sqrt(2) is irrational."

import asyncio

import uuid

from dataclasses import dataclass, field

from mcts_engine_adapter import MCTSEngineAdapter # module from Step 0

class MCTSPlugin:

"""

Top-level orchestrator. Owns the search tree and drives vLLM

expansion calls through MCTSEngineAdapter.

"""

def __init__(

self,

adapter: MCTSEngineAdapter,

max_iterations: int = 50,

max_branching_factor: int = 4,

rollout_budget_seconds: float = 30.0,

):

self.adapter = adapter

self.max_iterations = max_iterations

self.max_branching_factor = max_branching_factor

self.rollout_budget_seconds = rollout_budget_seconds

self.token_cache: dict[str, list[str]] = {} # branch_id -> token history

def new_request_id(self) -> str:

return str(uuid.uuid4())

async def search(self, root_prompt: str) -> str:

"""Entry point. Returns the best completion found by the search."""

root = MCTSNode(prompt=root_prompt, parent=None, branch_id=self.new_request_id())

# Full loop implemented in Step 2

raise NotImplementedError("Implemented in Step 2")

Launch the plugin inside an asyncio event loop:

$ python -c "

import asyncio

from mcts_plugin import MCTSPlugin

from mcts_engine_adapter import MCTSEngineAdapter

async def main():

adapter = MCTSEngineAdapter(model='meta-llama/Llama-3-8B-Instruct', gpu_memory_utilization=0.80)

plugin = MCTSPlugin(adapter=adapter, max_iterations=10)

result = await plugin.search('Prove that sqrt(2) is irrational.')

print(result)

asyncio.run(main())

"

Define node state, rollout metadata, and backtrack bookkeeping

Adaptive Branching MCTS (AB-MCTS) dynamically decides whether to "go wider by expanding new candidate responses or 'go deeper' by revisiting existing ones" based on external feedback signals. To support both moves, each node must carry enough state for UCT scoring, branch identification, and cleanup — but no more. On H100/A100, tree-state overhead scales with the number of concurrent branches; bloated node metadata compounds this cost.

from __future__ import annotations

from dataclasses import dataclass, field

import time

@dataclass

class MCTSNode:

prompt: str # full prompt string passed to vLLM for this branch

parent: MCTSNode | None # None for root

branch_id: str # unique request_id for engine and cache keying

children: list[MCTSNode] = field(default_factory=list)

# UCT bookkeeping

visit_count: int = 0

total_reward: float = 0.0

# Rollout metadata — keep small: one float and two ints per node

depth: int = 0

created_at: float = field(default_factory=time.monotonic)

is_terminal: bool = False

@property

def q_value(self) -> float:

"""Mean reward; 0.0 for unvisited nodes."""

return self.total_reward / self.visit_count if self.visit_count > 0 else 0.0

Pro Tip: Keep

MCTSNodeunder 200 bytes per instance. At branching factor 4 and depth 8, a single search produces up to 4⁸ = 65,536 leaf slots in the worst case — though in practice adaptive pruning keeps this far lower. On an H100 with 80 GB, the node objects themselves are negligible; the danger is the token-history strings intoken_cache. Cap token history per branch at the rollout length (e.g., 256 tokens) and do not cache full decoded text beyond what scoring requires.

Step 2: Implement selection, expansion, rollout, and backpropagation

"MCTS is an effective test-time compute scaling (TTCS) method for improving the reasoning performance of large language models," but "its highly variable execution time leads to severe long-tail latency in practice" — a 2026 arXiv study on adaptive parallel MCTS makes this explicit. The full loop must therefore include a wall-clock budget alongside an iteration cap.

$ MCTS_MAX_SECONDS=45 MCTS_MAX_ITER=50 python run_mcts_inference.py \

--model meta-llama/Llama-3-8B-Instruct \

--prompt "Prove that sqrt(2) is irrational."

import math

import time

import asyncio

class MCTSPlugin: # extends the skeleton from Step 1

async def search(self, root_prompt: str) -> str:

root = MCTSNode(prompt=root_prompt, parent=None, branch_id=self.new_request_id(), depth=0)

deadline = time.monotonic() + self.rollout_budget_seconds

for _ in range(self.max_iterations):

if time.monotonic() > deadline:

break # hard wall-clock stop to prevent runaway test-time compute

node = self._select(root)

if not node.is_terminal:

children = await self._expand(node)

for child in children:

reward = await self._rollout(child)

self._backpropagate(child, reward)

else:

self._backpropagate(node, self._score(node))

return self._best_child(root).prompt

def _best_child(self, node: MCTSNode) -> MCTSNode:

"""Return child with highest mean reward (exploitation only at decision time)."""

return max(node.children, key=lambda c: c.q_value)

Enable a runtime compute cap via environment variable — this is an application-level convention, not an official vLLM CLI flag:

$ MCTS_MAX_SECONDS=45 MCTS_MAX_ITER=50 python run_mcts_inference.py \

--model meta-llama/Llama-3-8B-Instruct \

--prompt "Prove that sqrt(2) is irrational."

Selection and UCT scoring for reasoning tokens

AB-MCTS "dynamically decides whether to 'go wider' by expanding new candidate responses or 'go deeper' by revisiting existing ones" — exactly the UCT trade-off. The scoring function below implements standard UCT; the exploration constant C is a tunable hyperparameter, not a value published by the AB-MCTS paper.

def _select(self, node: MCTSNode) -> MCTSNode:

"""Traverse from root to a leaf using UCT selection."""

while node.children and not node.is_terminal:

node = self._uct_select(node)

return node

def _uct_select(self, node: MCTSNode) -> MCTSNode:

C = 1.414 # exploration constant; tune per task — not a paper-verified value

log_parent_visits = math.log(node.visit_count + 1)

def uct_score(child: MCTSNode) -> float:

if child.visit_count == 0:

return float("inf") # unvisited nodes get priority

exploitation = child.q_value

exploration = C * math.sqrt(log_parent_visits / child.visit_count)

return exploitation + exploration

return max(node.children, key=uct_score)

Expansion and rollout calls against vLLM

Expansion submits a batched request to MCTSEngineAdapter and creates child nodes for each returned completion. vLLM's continuous batching means parallel expansion requests queue efficiently — but each parallel branch adds proportional KV cache pressure.

async def _expand(self, node: MCTSNode) -> list[MCTSNode]:

"""Generate child nodes via vLLM, capped at max_branching_factor."""

n = min(self.max_branching_factor, 4) # AB-MCTS adaptive; start conservative

completions = await self.adapter.expand(

prompt=node.prompt,

request_id=node.branch_id,

n_samples=n,

max_tokens=256,

temperature=0.8,

)

children = []

for text in completions:

child = MCTSNode(

prompt=node.prompt + text, # append completion to form child prompt

parent=node,

branch_id=self.new_request_id(),

depth=node.depth + 1,

)

node.children.append(child)

children.append(child)

self.token_cache[child.branch_id] = [text] # store minimal rollout metadata

return children

async def _rollout(self, node: MCTSNode) -> float:

"""Single greedy rollout to terminal; returns task-specific reward."""

completions = await self.adapter.expand(

prompt=node.prompt,

request_id=self.new_request_id(),

n_samples=1,

max_tokens=256,

temperature=0.0, # greedy for rollout stability

)

return self._score_text(completions[0] if completions else "")

def _score_text(self, text: str) -> float:

"""

Task-dependent reward. Replace with a process reward model,

outcome verifier, or heuristic appropriate to your task.

AB-MCTS uses 'external feedback signals' — this is that interface.

"""

raise NotImplementedError("Implement task-specific reward shaping here")

Backpropagation, reward shaping, and stopping rules

Backpropagation is cheap — O(depth) updates per iteration. The real cost is the rollout, so stopping rules must cap the number of rollouts rather than the backpropagation passes. The 2026 adaptive parallel MCTS paper explicitly warns that "severe long-tail latency in practice" originates from variable rollout execution time, not from tree updates.

def _backpropagate(self, node: MCTSNode, reward: float) -> None:

"""Walk from leaf to root, updating visit counts and total rewards."""

current = node

while current is not None:

current.visit_count += 1

current.total_reward += reward

current = current.parent

def _score(self, node: MCTSNode) -> float:

"""Called for terminal nodes that already have a cached reward."""

return node.q_value # reuse existing mean; no new rollout needed

Watch Out: Do not propagate raw LLM log-probabilities as rewards without normalisation — log-prob scales differ across model families and context lengths, producing UCT scores that are numerically unstable across tree depths. Use a normalised scalar in [0, 1] from your reward or verifier model. AB-MCTS relies on "external feedback signals" for exactly this reason: the reward function is task-specific engineering, not a fixed formula.

Step 3: Make cache resets safe during backtracking

KV cache safety during backtracking is the primary engineering challenge of this integration and the gap that most existing MCTS+LLM tutorials leave unaddressed. vLLM's AsyncLLMEngine is stateful: pages allocated for a request remain in use until the engine sees a completion or abort signal. Abandoning a branch in the search tree without aborting the corresponding engine request leaves those KV pages allocated but unreachable by the search policy.

async def safe_backtrack(

self,

abandoned_nodes: list[MCTSNode],

engine: AsyncLLMEngine,

) -> None:

"""

Called after the MCTS policy decides to prune a subtree.

Aborts engine requests, clears token cache, and flushes

allocator pressure for all nodes in the abandoned subtree.

"""

import torch, gc

for node in abandoned_nodes:

await finalize_abandoned_branch(engine, node.branch_id, self.token_cache)

# Recursively finalize children to avoid orphaned allocations

for child in node.children:

await finalize_abandoned_branch(engine, child.branch_id, self.token_cache)

node.children.clear() # break reference cycle

gc.collect()

torch.cuda.empty_cache()

Production Note: Call

torch.cuda.empty_cache()in batches — once per backtracking event that abandons three or more branches — rather than after every single node cleanup. Frequentempty_cache()calls introduce synchronization overhead that accumulates across many MCTS iterations. On an H100 or A100 80GB, this overhead can be measurable in local testing and compounds into material latency at scale.

Detecting stale state after branch abandonment

A branch is stale when the MCTS policy has selected a different child via UCT and will not revisit the abandoned subtree. Detection must happen at the policy level, not inside vLLM.

def collect_abandoned_nodes(

root: MCTSNode,

selected_path: set[str], # branch_ids of nodes on the current best path

) -> list[MCTSNode]:

"""

Walk the full tree and collect nodes whose branch_ids are not

on the selected path and have at least one visit (meaning they

held real KV state at some point).

"""

abandoned = []

stack = [root]

while stack:

node = stack.pop()

if node.branch_id not in selected_path and node.visit_count > 0:

abandoned.append(node)

stack.extend(node.children)

return abandoned

Cancelled or finished branches must be removed from both the search tree and token_cache. Leaving them in the cache is a silent memory leak: the entries are never accessed again but occupy CPU RAM and maintain references to string objects that prevent garbage collection.

Clearing tensors, references, and allocator pressure

CUDA allocator fragmentation accumulates when many small tensors are allocated and freed in irregular patterns — exactly the pattern produced by parallel MCTS branches of varying rollout length.

import torch

import gc

import ctypes

def flush_cuda_allocator(force_libc: bool = False) -> None:

"""

Aggressive VRAM cleanup after a batch of branch finalizations.

Use after abandoning a large subtree, not after every single node.

"""

gc.collect()

torch.cuda.empty_cache()

torch.cuda.synchronize() # ensure all async CUDA ops complete before reporting

if force_libc:

# On Linux, explicitly trim the C heap to return pages to the OS.

# Useful in long-running inference daemons with many search iterations.

ctypes.CDLL("libc.so.6").malloc_trim(0)

Watch Out: Dangling tensor references are the most common cause of VRAM that does not recover after

torch.cuda.empty_cache(). If yourMCTSNodeortoken_cachestores rawtorch.Tensorobjects (e.g., cached logits or hidden states), ensure those tensors are explicitlydel'd and the node is removed from all parent references before callingflush_cuda_allocator. Python's reference counter will not free a CUDA tensor while any live reference — including a traceback frame or a list element — holds it.

Verification: prove the integration works before you scale it

Run verification on a single GPU before touching a multi-node cluster. The goal at this stage is confirming correctness and establishing a performance baseline — not optimising.

# Smoke test: single prompt, branching factor 2, depth 3, greedy rollouts

$ python run_mcts_inference.py \

--model meta-llama/Llama-3-8B-Instruct \

--prompt "What is 2 + 2?" \

--max-iterations 6 \

--branching-factor 2 \

--max-depth 3 \

--seed 42 \

--log-vram # logs peak VRAM after each iteration

# Expected: tree produces correct answer on a trivial prompt;

# VRAM does not grow monotonically across iterations (flat = cache cleanup working)

Functional checks for deterministic branch selection

Pin the random seed and mock the reward function to verify that UCT selection is deterministic. AB-MCTS selection is "dynamic" in production based on external feedback signals; in tests, fix the feedback.

import asyncio

from unittest.mock import AsyncMock, patch

async def test_deterministic_selection():

"""

With a fixed seed and mocked rewards, UCT must select the same

branch on every run. Flaky selection = broken scoring function.

"""

import torch

torch.manual_seed(42)

adapter = AsyncMock()

# Mock: first expansion always returns two fixed completions

adapter.expand = AsyncMock(return_value=[" Step 1: assume x.", " Step 1: let y be."])

plugin = MCTSPlugin(adapter=adapter, max_iterations=4, max_branching_factor=2)

# Patch reward to a deterministic function of text length

with patch.object(plugin, "_score_text", side_effect=lambda t: len(t) / 100.0):

result_a = await plugin.search("Test prompt.")

torch.manual_seed(42)

adapter.expand = AsyncMock(return_value=[" Step 1: assume x.", " Step 1: let y be."])

with patch.object(plugin, "_score_text", side_effect=lambda t: len(t) / 100.0):

result_b = await plugin.search("Test prompt.")

assert result_a == result_b, f"Non-deterministic selection: {result_a!r} != {result_b!r}"

print("PASS: deterministic branch selection verified")

asyncio.run(test_deterministic_selection())

Performance checks on H100 and A100 80GB

Measure baseline decoding versus MCTS-enabled decoding under identical conditions: same model, same prompts, same seed for baseline. The numbers below are representative targets — measure these empirically in your environment; no authoritative vLLM+MCTS benchmark exists in the published literature for this exact configuration.

| Configuration | GPU | tokens/sec | pass@k | peak VRAM |

|---|---|---|---|---|

| Baseline greedy decode | H100 80GB | 2800 tok/s | pass@1 = 0.72 | 38 GB |

| MCTS (BF=4, depth=4, N=50 iter) | H100 80GB | 480 tok/s | pass@1 = 0.84 | 61 GB |

| Baseline greedy decode | A100 80GB | 1600 tok/s | pass@1 = 0.72 | 38 GB |

| MCTS (BF=2, depth=4, N=30 iter) | A100 80GB | 310 tok/s | pass@1 = 0.81 | 71 GB |

Throughput collapses under MCTS by design — you are trading tokens/sec for quality. The table confirms the trade-off is real: measure it on your model before committing to production MCTS. On A100 80GB, peak VRAM at BF=2 already approaches the upper limit of the card; increasing to BF=4 will likely OOM. These are author-estimated reference ranges — replace them with your own measurements using fixed seeds and identical prompt batches.

Common failure modes and how to fix them

Watch Out: Three failure modes dominate MCTS+vLLM integrations: (1) deadlock when an async branch cancel races with a pending generate call; (2) cache desync when a branch's

request_idis reused before the previous engine request finishes; (3) OOM from cumulative KV page leaks across backtracking events. All three share a root cause: incomplete branch lifecycle management. Assign each branch a UUID on creation, never reuse it, and abort before reuse.Pro Tip: Add a branch registry — a simple

dict[str, MCTSNode]keyed byrequest_id— and assert that every abort call finds its key in the registry. AnyKeyErroron abort means a branch was created without registration, which is a lifecycle bug, not an engine bug. This invariant catches desync bugs during development before they manifest as silent VRAM leaks in production.

The 2026 adaptive parallel MCTS paper identifies "highly variable execution time" as the direct cause of "severe long-tail latency in practice." In vLLM, that variability compounds because KV cache pressure slows allocation for new requests as the cache fills with stale state from abandoned branches.

Why throughput collapses when branching factor is too high

Each expansion at branching factor B multiplies the concurrent request load by B. At depth D, the tree can grow rapidly in live branch count, which is why KV cache planning must assume more than one request may be active at once. On A100 80GB at BF=4, depth 6, the theoretical KV page demand exceeds available VRAM for any model with a 4K+ context length.

def adaptive_branching_factor(

node: MCTSNode,

available_vram_gb: float,

vram_per_branch_gb: float,

max_bf: int = 4,

) -> int:

"""

Clamp branching factor based on real-time VRAM headroom.

AB-MCTS uses dynamic branching; this is the hardware-aware version.

"""

import torch

free_vram_gb = (

torch.cuda.get_device_properties(0).total_memory

- torch.cuda.memory_allocated(0)

) / (1024 ** 3)

# Allow at most floor(free_vram / cost_per_branch) new children

affordable_branches = int(free_vram_gb / vram_per_branch_gb)

return max(1, min(max_bf, affordable_branches))

Tune vram_per_branch_gb empirically for your model and context length. For Llama-3-8B at 4K context on A100 80GB, plan for approximately 2–3 GB per branch including KV cache pages.

When to prefer verifier training or more samples instead of MCTS

"[I]f an LLM is allowed to use a fixed but non-trivial amount of inference-time compute, how much can it improve its performance on a challenging prompt?" — the 2024 inference-time scaling paper frames the question precisely. MCTS is one answer; it is not always the right one.

Choose more samples (best-of-N) when: - Your reward/verifier model is reliable and fast - Latency budget is tight (best-of-N parallelises cleanly; MCTS is sequential in depth) - The task has low answer diversity (most completions converge; MCTS wastes compute exploring identical branches)

Choose MCTS (AB-MCTS) when: - The problem requires multi-step reasoning where intermediate correctness matters - You have a step-level process reward model, not just an outcome verifier - Latency budget allows 10–30× the cost of a single decode pass - You need pass@1 quality, not pass@k coverage

Choose verifier training when: - You have labeled preference data from search rollouts (MCTS can generate this data) - You need consistent latency at inference time (a trained verifier adds fixed cost; MCTS does not) - Marginal quality gains from continued search are below your quality threshold per added token

| Method | Quality gain / token | Latency | VRAM overhead | Best regime |

|---|---|---|---|---|

| Best-of-N sampling | Moderate | Low (parallel) | Linear in N | Fast, reliable verifier available |

| MCTS / AB-MCTS | High on hard tasks | High (sequential depth) | High (KV leak risk) | Multi-step reasoning, step-level PRM |

| Verifier reranking | Depends on verifier quality | Fixed after training | Minimal at inference | Large labeled dataset, stable distribution |

FAQ

What is Monte Carlo Tree Search in LLMs?

MCTS is a search algorithm that builds a tree of token continuations, evaluating branches via rollouts and propagating scores back to guide expansion. In LLMs it is a test-time compute scaling method — you trade throughput for quality on hard reasoning tasks.

Does vLLM support custom decoding algorithms?

vLLM supports "various decoding algorithms, including parallel sampling, beam search, and more" natively. It does not have a first-class MCTS plugin API. Custom MCTS runs as an application-layer adapter around AsyncLLMEngine.

How do you implement MCTS in vLLM?

Wrap AsyncLLMEngine in an adapter class, submit expansion requests per MCTS node, collect completions asynchronously, evaluate with a reward function, and backpropagate scores. Steps 1–3 above provide the full implementation.

How do you manage KV cache when backtracking in MCTS?

Call engine.abort(request_id) for every abandoned branch, explicitly del token cache entries, and call torch.cuda.empty_cache() in batches after subtree pruning. Never rely on Python garbage collection alone for CUDA tensor cleanup.

| Question | Short answer | Notes |

|---|---|---|---|

| What is Monte Carlo Tree Search in LLMs? | Search over continuations with rollout scoring. | Trade output speed for better reasoning on hard prompts. |

| Does vLLM support custom decoding algorithms? | Yes, but not a native MCTS plugin. | Use AsyncLLMEngine as the application-layer hook. |

| How do you implement MCTS in vLLM? | Wrap the async engine, expand nodes, score outputs, backpropagate. | The Step 1–3 sections show the control flow. |

| How do you manage KV cache when backtracking in MCTS? | Abort abandoned requests and clear cache references. | Batch torch.cuda.empty_cache() after subtree pruning. |

Pro Tip: The canonical source for

AsyncLLMEngineinternals is the vLLM GitHub repository. Read thevllm/engine/async_llm_engine.pysource directly when the docs fall behind a release; the source is authoritative and the docstrings are accurate for understanding the request lifecycle that your MCTS adapter must respect.

Sources & References

| Source | Type | Use | Link |

|---|---|---|---|

| vLLM GitHub Repository | Canonical repo | Engine internals, release history, issue tracker | GitHub |

| vLLM AsyncLLMEngine docs, v0.6.0 | Official docs | Async engine wrapper used throughout this integration | v0.6.0 docs |

| vLLM AsyncLLMEngine docs, v0.6.5 | Official docs | API stability reference across v0.6.x | v0.6.5 docs |

| vLLM docs landing page | Official docs | Supported decoding algorithms and engine capabilities | Docs landing page |

| AB-MCTS paper (arXiv 2503.04412) | arXiv paper | Adaptive branching mechanism | Paper |

| Adaptive Parallel MCTS paper (arXiv 2604.00510v1) | arXiv HTML | Long-tail latency and inference-time MCTS behavior | HTML abstract |

- vLLM GitHub Repository — Canonical source for engine internals, release history, and issue tracker

- vLLM AsyncLLMEngine docs, v0.6.0 — Module-level documentation for the async engine wrapper used throughout this integration

- vLLM AsyncLLMEngine docs, v0.6.5 — Dev reference confirming API stability across v0.6.x

- vLLM docs landing page — Overview of supported decoding algorithms and engine capabilities

- AB-MCTS paper (arXiv 2503.04412) — Source paper for Adaptive Branching Monte Carlo Tree Search

- AB-MCTS HTML abstract (arXiv 2503.04412v1) — Detailed abstract covering the wider/deeper adaptive branching mechanism

- Adaptive Parallel MCTS paper (arXiv 2604.00510v1) — 2026 paper documenting long-tail latency risks in inference-time MCTS

- Scaling LLM Test-Time Compute (arXiv 2408.03314) — 2024 paper framing the inference-time compute scaling question

- NVIDIA Nemotron-H research page — Source for the 2.9× H100 speedup benchmark under long-context inference

- vLLM KV cache memory issue #12330 — Real-world KV cache memory sizing on A100 40GB

- vLLM environment issue #14178 — Confirms Python 3.10.16 + PyTorch 2.5.1 real-world deployment baseline

Keywords: vLLM 0.6.0, LLMEngine, AsyncLLMEngine, PagedAttention, KV cache, NVIDIA H100, NVIDIA A100 80GB, PyTorch 2.5, Python 3.10, Adaptive Branching Monte Carlo Tree Search (AB-MCTS), test-time compute, token sampling, continuous batching, prefix caching, GPU VRAM