At a Glance: What You Need to Build the Loop

Prereqs: Python 3.10+, LangGraph 0.2+, an LLM provider with structured output support (such as OpenAI Structured Outputs or an equivalent JSON-schema-backed interface), and basic familiarity with LangChain · Hardware: nothing beyond what your provider's API requires · Cost: token usage increases because each failed pass adds validation and regeneration calls, so budget for retries before deploying at scale

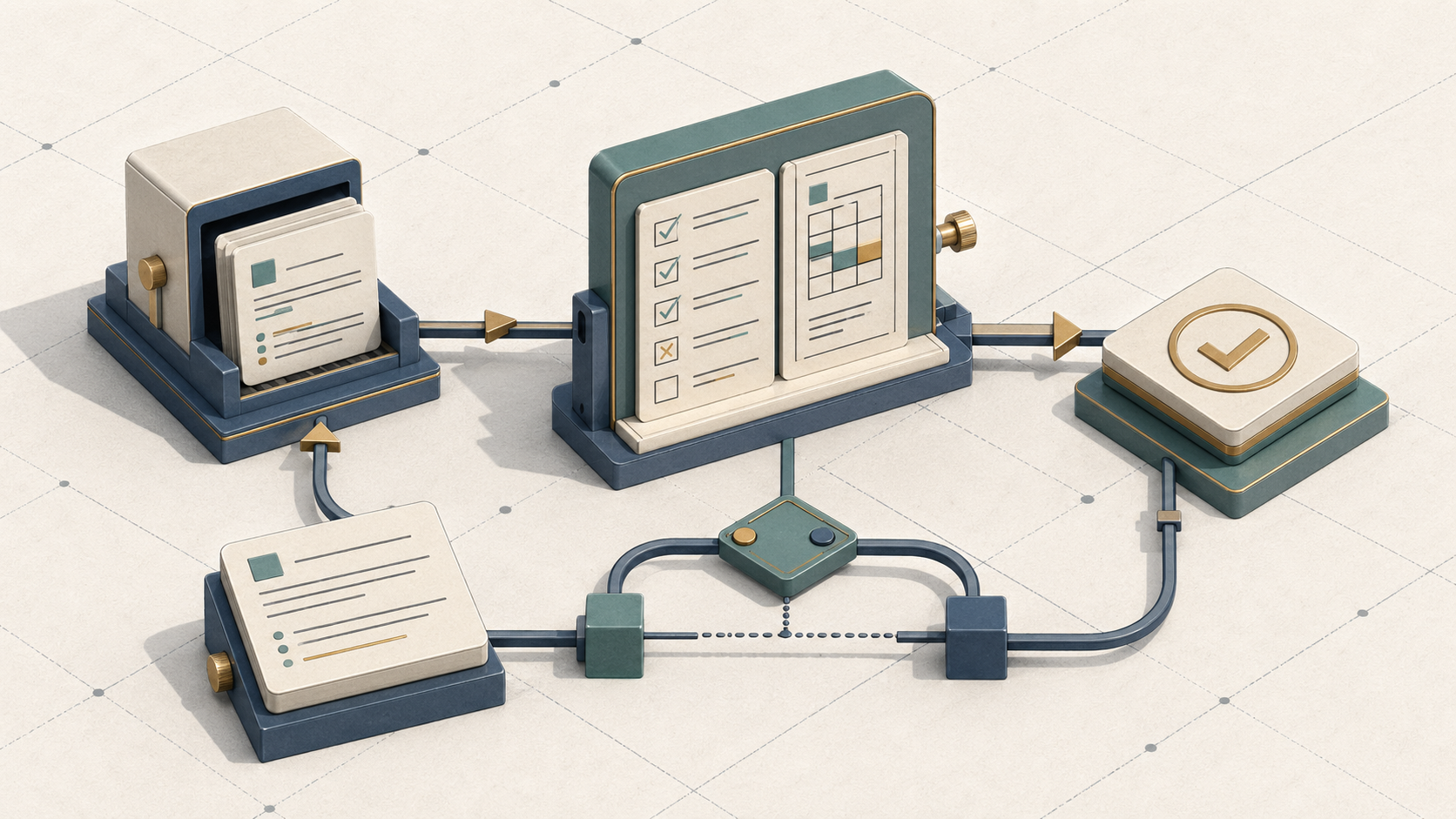

A self-correction loop is an agent architecture where a generator node produces a structured output, a validator node evaluates it against explicit criteria, and a conditional edge routes failures back to the generator with correction instructions embedded in the re-prompt. The loop terminates either on a passing validation or a hard retry ceiling.

A reflective feedback loop that uses a secondary verifier model can reduce hallucination rates by approximately 40% compared to zero-shot reasoning. That gain has a real cost. Every retry cycle adds at least one validator call plus one regeneration call, so per-task token consumption is higher than a zero-shot baseline. For latency-sensitive or high-volume workloads, that multiplier demands explicit retry budgets and circuit breakers.
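To make the multiplier concrete, here is a back-of-the-envelope sketch. It rests on three simplifying assumptions: each attempt passes independently with the same probability p, a generator call and a validator call cost roughly the same, and the loop allows at most max_attempts generations. The 60% / 3-attempt inputs below are illustrative, not measured.

```python
def expected_llm_calls(p_pass: float, max_attempts: int) -> float:
    """Expected LLM calls per task for a generate -> validate loop.

    Assumes every attempt passes independently with probability p_pass and
    that each attempt costs one generator call plus one validator call.
    """
    if not 0.0 <= p_pass <= 1.0:
        raise ValueError("p_pass must be in [0, 1]")
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    # Attempt k (0-indexed) only runs if the previous k attempts all failed.
    expected_attempts = sum((1.0 - p_pass) ** k for k in range(max_attempts))
    return 2.0 * expected_attempts  # generator + validator per attempt


def worst_case_llm_calls(max_attempts: int) -> int:
    """Every attempt fails, so the loop runs until the ceiling."""
    return 2 * max_attempts


# Illustrative inputs only: a 60% per-attempt pass rate and 3 attempts.
print(round(expected_llm_calls(0.6, 3), 2))  # 3.12 calls vs. 1 for zero-shot
print(worst_case_llm_calls(3))               # 6
```

The gap between the expected value and the worst case is the reason to budget for the tail rather than the mean.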

LangGraph is the implementation substrate here. Its official README describes it as infrastructure for "any long-running, stateful workflow or agent," and its graph API supports sequences, branches, and loops natively — making it the right tool for a cycle that must persist state across multiple iterations of generate → validate → re-generate.

LangSmith is the recommended tracing layer for observing loop behavior in development. Every retry and every validator verdict becomes a traced span, which is the most practical way to diagnose why a loop is oscillating.

Prerequisites, Project Setup, and the State Shape

The core design decision in a LangGraph self-correction loop is the State object. Every node reads from and writes to this shared state, and conditional edges read it to decide routing. Getting the schema right before writing any node logic prevents the most common class of bugs in cyclic graphs: stale routing decisions caused by incomplete state updates. Plan LangSmith tracing at the same stage, because the retry path and the validator verdict can only be diagnosed if those state transitions are visible.
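A framework-free sketch of how an incomplete update leaves stale state behind. It models LangGraph's default behavior for state keys that have no reducer: a node's returned value overwrites that key, and keys the node does not return keep their previous value. The apply_update helper is hypothetical and uses a plain dict, not a LangGraph API.

```python
from typing import Any


def apply_update(state: dict[str, Any], update: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical helper modelling last-write-wins merging for keys without a reducer."""
    merged = dict(state)
    merged.update(update)  # keys missing from `update` keep their previous values
    return merged


state = {"task": "t", "draft": None, "correction_instructions": None, "retry_count": 0}
state = apply_update(state, {"draft": "v1"})  # generator, first pass
state = apply_update(state, {"correction_instructions": "Cite a source.", "retry_count": 1})  # validator fails v1

stale = apply_update(state, {"draft": "v2"})  # generator forgets to clear the field
clean = apply_update(state, {"draft": "v2", "correction_instructions": None})  # generator resets it

print(stale["correction_instructions"])  # Cite a source.
print(clean["correction_instructions"])  # None
```

In the stale branch, the old instructions survive into every later step that reads them, even after the draft has changed.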

LangGraph's Graph API documentation "walks through state, as well as composing common graph structures such as sequences, branches, and loops", so the loop pattern is first-class, not a workaround. OpenAI's Structured Outputs make validator outputs schema-reliable: "model outputs now reliably adhere to developer-supplied JSON Schemas," which means you can treat the validator's pass/fail as a contract value, not free text to parse.

Project configuration

```json
{
  "python": "3.10+",
  "langgraph": ">=0.2.0",
  "structured_output_provider": "OpenAI Structured Outputs or equivalent JSON-schema-backed API",
  "validator_stack": [
    "langchain-openai>=0.1.0",
    "langchain-anthropic>=0.1.0",
    "pydantic>=2.0.0",
    "langsmith>=0.1.0",
    "openinference-instrumentation-langchain>=0.1.0"
  ]
}
```

Install LangGraph and the structured-output dependencies

Pin to LangGraph 0.2+ because the conditional edge API semantics used in this article depend on the v0.2 graph interface.

```bash
$ pip install "langgraph>=0.2.0" \
    "langchain-openai>=0.1.0" \
    "langchain-anthropic>=0.1.0" \
    "pydantic>=2.0.0" \
    "langsmith>=0.1.0" \
    "openinference-instrumentation-langchain>=0.1.0"  # for Arize Phoenix
```

OpenAI's Structured Outputs are the schema-reliable path; as the docs note, "JSON mode is a more basic version of the Structured Outputs feature." Use Structured Outputs with a Pydantic v2 schema when the validator result must be machine-readable without post-processing. If you use Anthropic's Claude 3.5 Sonnet as your verifier, the same principle applies: request a schema-bound verdict instead of parsing free text. Recent versions of both ChatOpenAI and ChatAnthropic support this through LangChain's with_structured_output. Anthropic positions the model as "ideal for complex tasks such as context-sensitive customer support and orchestrating multi-step workflows."

Define the shared agent state and validation contract

The state schema carries all information the graph needs to make routing decisions deterministically. Include a retry_count ceiling from the start — adding it later after a production infinite-loop incident is far more expensive. The routing metadata should be explicit, so the validator can set a deterministic next action and the graph can route on that signal without guessing.

```python
from __future__ import annotations

from typing import Annotated, Optional

from pydantic import BaseModel, Field
from langgraph.graph.message import add_messages


class ValidationResult(BaseModel):
    """Structured output schema for the validator node."""
    passed: bool
    reasons: list[str] = Field(default_factory=list)
    correction_instructions: Optional[str] = None
    confidence: float = Field(ge=0.0, le=1.0, default=1.0)
    next_action: Optional[str] = Field(default=None, description="retry or end")


class AgentState(BaseModel):
    """Shared state carried across all nodes in the correction loop."""
    task: str                                      # original user request
    draft: Optional[str] = None                    # generator output
    validation_result: Optional[ValidationResult] = None
    correction_instructions: Optional[str] = None  # validator -> generator hand-off
    retry_count: int = 0
    max_retries: int = 3                           # hard ceiling: never omit this
    final_output: Optional[str] = None
    routing_key: Optional[str] = None              # explicit re-prompt routing metadata
    history: Annotated[list, add_messages] = Field(default_factory=list)
```

The correction_instructions field on ValidationResult is the mechanism that makes re-prompts targeted rather than generic. When the validator fails a draft, it writes specific correction instructions into this field; the generator node reads them on the next iteration and adjusts accordingly. Without this field, re-prompts are blind retries with no new signal, and retry counts climb.
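The following is a small, framework-free sketch of how a failed verdict becomes a targeted re-prompt. It mirrors the ValidationResult fields above with a standard-library dataclass so that it runs without Pydantic. Verdict and build_reprompt are hypothetical names, and falling back to the validator's reasons when no instructions are given is an assumption of this sketch.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Verdict:
    """Stdlib stand-in for the ValidationResult fields used in re-prompting."""
    passed: bool
    reasons: list[str] = field(default_factory=list)
    correction_instructions: Optional[str] = None


def build_reprompt(task: str, verdict: Optional[Verdict]) -> str:
    """Return the generator's user message, folding in fixes after a failed verdict."""
    prompt = f"Task: {task}"
    if verdict is None or verdict.passed:
        return prompt  # first attempt, or nothing to fix
    # Prefer explicit instructions; fall back to the reasons so a retry carries new signal.
    fixes = verdict.correction_instructions or "; ".join(verdict.reasons)
    if not fixes:
        return prompt  # no signal at all: this would be a blind retry
    return (
        f"{prompt}\n\n## Correction Required\n"
        f"Your previous draft failed validation. Apply these fixes:\n{fixes}"
    )


print(build_reprompt("Summarize the report", Verdict(passed=False, reasons=["Claim 2 has no evidence"])))
```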

Step 1: Build the Generator Node That Produces the First Draft

The generator node is responsible for producing a structured draft that the validator can evaluate against explicit criteria. Unstructured prose output forces the validator to interpret rather than check, which increases both validator token consumption and false-positive failure rates. In a LangGraph workflow, this node is the first state transition in the cycle, so its output shape determines whether the rest of the graph can route cleanly.

LangGraph makes this generator a named node in a stateful graph rather than an ad hoc function call. The example below uses GPT-4o. Claude 3.5 Sonnet, which solved 64% of problems in Anthropic's internal agentic coding evaluation versus 38% for Claude 3 Opus, is a reasonable alternative for code or reasoning tasks. The sample relies on a JSON-only system prompt. Binding a schema through Structured Outputs, under which "model outputs now reliably adhere to developer-supplied JSON Schemas," would further reduce failures at the parser boundary between generator and validator.

```python
from langchain_openai import ChatOpenAI
from langchain_core.messages import HumanMessage, SystemMessage


def generator_node(state: AgentState) -> dict:
    """Produce a structured draft; fold in correction instructions on retries."""
    llm = ChatOpenAI(model="gpt-4o", temperature=0.2)

    correction_context = ""
    if state.correction_instructions:
        correction_context = (
            "\n\n## Correction Required\n"
            "Your previous draft failed validation. Apply these fixes:\n"
            f"{state.correction_instructions}"
        )

    messages = [
        SystemMessage(content=GENERATOR_SYSTEM_PROMPT),  # defined in the prompt section below
        HumanMessage(content=f"Task: {state.task}{correction_context}"),
    ]
    response = llm.invoke(messages)

    return {
        "draft": response.content,
        # retry_count is left untouched here; the validator increments it.
        # Clear consumed instructions so they cannot leak into a later iteration.
        "correction_instructions": None,
    }
```

Write a prompt that makes the first pass easy to verify

Verifier reliability scales directly with generator output structure. A validator checking a free-form paragraph must infer what claims exist; a validator checking explicit answer, evidence, and assumptions fields can evaluate each dimension independently. Within the LangGraph generator node above, the prompt is the contract that keeps the state machine deterministic.

```python
GENERATOR_SYSTEM_PROMPT = """You are a precise analytical assistant.
Respond ONLY in the following JSON format, with no prose outside the JSON:
{
  "answer": "<direct answer to the task>",
  "evidence": ["<fact or reasoning step 1>", "<fact or reasoning step 2>"],
  "assumptions": ["<any assumption made>"],
  "uncertainty": "<none | low | medium | high>"
}

Rules:
- Every claim in 'answer' must appear as a supporting item in 'evidence'.
- If you cannot find evidence for a claim, move it to 'assumptions'.
- Set 'uncertainty' honestly; the validator will check consistency.
- Do not include any text before or after the JSON object.
"""
```

This prompt separates claims from evidence at generation time, so the validator can check each field independently rather than interpreting a monolithic text block. Avoid vague instructions like "be accurate" — they produce outputs that are harder to validate deterministically.

Step 2: Add the Feedback-Validator Node and the Re-Prompt Condition

The validator node is the core of the correction loop. Its job is binary: emit a structured ValidationResult with passed=True so the graph can end, or emit passed=False with correction_instructions so the graph routes back to the generator. Per LangGraph's graph API, loops are first-class, and the validator's verdict drives the branch.

Instrument this node with Arize Phoenix in production. Phoenix provides "the infrastructure for AI observability: tracing to capture execution flow, annotations to measure quality, and sessions to track conversations" — every validator decision becomes a traceable span so you can audit why a draft passed or failed. LangGraph provides the loop semantics, while Phoenix records the pass/fail path and the retry history.

```json
{
  "validator_schema": {
    "type": "object",
    "required": ["passed", "reasons", "correction_instructions", "confidence"],
    "properties": {
      "passed": { "type": "boolean" },
      "reasons": { "type": "array", "items": { "type": "string" } },
      "correction_instructions": { "type": ["string", "null"] },
      "confidence": { "type": "number", "minimum": 0.0, "maximum": 1.0 }
    },
    "additionalProperties": false
  }
}
```

```python
import json

from langchain_anthropic import ChatAnthropic
from langchain_core.exceptions import OutputParserException
from langchain_core.messages import HumanMessage, SystemMessage
from langchain_core.output_parsers import PydanticOutputParser


def build_validator_prompt(format_instructions: str) -> str:
    return f"""You are a strict validator. Evaluate the draft against these criteria:
1. Every claim in 'answer' has supporting evidence in 'evidence'.
2. 'uncertainty' matches the actual evidence quality.
3. No factual contradictions between 'answer' and 'evidence'.

Return ONLY a structured verdict. {format_instructions}
If 'passed' is false, 'correction_instructions' must name the specific failing claims."""


def validator_node(state: AgentState) -> dict:
    """Evaluate the draft; return a structured ValidationResult."""
    parser = PydanticOutputParser(pydantic_object=ValidationResult)
    llm = ChatAnthropic(
        model="claude-3-5-sonnet-20241022",
        temperature=0.0,  # determinism is critical for a pass/fail gate
    )
    messages = [
        SystemMessage(content=build_validator_prompt(parser.get_format_instructions())),
        HumanMessage(content=f"Task: {state.task}\n\nDraft to evaluate:\n{state.draft}"),
    ]
    response = llm.invoke(messages)
    try:
        result = parser.parse(response.content)
    except OutputParserException:
        # A malformed verdict counts as a failure, so the retry ceiling still bounds the loop.
        result = ValidationResult(
            passed=False,
            reasons=["Validator output did not match the schema"],
            correction_instructions="Ensure output matches the JSON schema.",
            confidence=0.0,
        )

    return {
        "validation_result": result,
        "retry_count": state.retry_count + (0 if result.passed else 1),
        "correction_instructions": result.correction_instructions,
        "final_output": state.draft if result.passed else None,
        "routing_key": "end" if result.passed else "retry",
    }
```

Production Note: Enable LangSmith tracing (LANGCHAIN_TRACING_V2=true) or instrument with Arize Phoenix (openinference-instrumentation-langchain) before running this loop in any shared environment. Without tracing, diagnosing an oscillating validator is guesswork. Phoenix's automatic instrumentation captures each node invocation as a span with inputs, outputs, and latency.

Use a secondary verifier model or a programmatic check

The choice between a verifier LLM and a programmatic check is a cost-and-reliability trade-off, not a preference. Use programmatic checks when the acceptance criterion is machine-decidable; use a verifier model when correctness is semantic.

```python
import json


def programmatic_validator(state: AgentState) -> dict:
    """
    Use when: JSON schema validity, required fields, regex constraints,
    arithmetic correctness, or known-ground-truth comparisons.
    Cost: near-zero. Deterministic. Prefer this tier first.
    """

    def fail(reason: str, fix: str) -> dict:
        # Every failure increments retry_count and hands instructions to the generator.
        return {
            "validation_result": ValidationResult(
                passed=False,
                reasons=[reason],
                correction_instructions=fix,
                confidence=1.0,
                next_action="retry",
            ),
            "retry_count": state.retry_count + 1,
            "correction_instructions": fix,
            "routing_key": "retry",
        }

    try:
        parsed = json.loads(state.draft or "")
    except json.JSONDecodeError as e:
        return fail(f"Invalid JSON: {e}", "Return valid JSON matching the specified schema.")

    if not isinstance(parsed, dict):
        return fail("Top-level JSON value is not an object", "Return a single JSON object.")

    required_fields = {"answer", "evidence", "assumptions", "uncertainty"}
    missing = sorted(required_fields - set(parsed))
    if missing:
        return fail(f"Missing required fields: {missing}", f"Add these fields: {missing}")

    return {
        "validation_result": ValidationResult(passed=True, next_action="end"),
        "correction_instructions": None,
        "final_output": state.draft,
        "routing_key": "end",
    }


# Compare the two approaches directly:
# - Programmatic validation is safer when the rule is structural, such as JSON validity or field presence.
# - LLM verification is safer when the rule is semantic, such as whether evidence actually supports the answer.
# - Llama 3.1 70B can serve as the secondary verifier when you want a large open model instead of Claude 3.5 Sonnet.
```

For semantic validation of code or reasoning tasks, a strong frontier model such as Claude 3.5 Sonnet is the right verifier. For structural checks (JSON validity, field presence, numeric range), run the programmatic tier first and escalate to a model verifier only on cases it cannot decide. This two-tier approach limits the token cost multiplier on tasks where most failures are structural.
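The two-tier idea can be sketched without any framework. The sketch runs the zero-token structural check first and calls the semantic verifier only when the structure is sound. The semantic_verifier callable is a hypothetical placeholder for an LLM call to Claude 3.5 Sonnet, Llama 3.1 70B, or similar. Here it is a stub that records how often it is reached, so the example runs offline.

```python
import json
from typing import Callable

REQUIRED_FIELDS = {"answer", "evidence", "assumptions", "uncertainty"}
UNCERTAINTY_LEVELS = ("none", "low", "medium", "high")


def structural_check(draft: str) -> list[str]:
    """Tier 1: deterministic, zero-token checks. Returns a list of problems."""
    try:
        parsed = json.loads(draft)
    except json.JSONDecodeError as exc:
        return [f"Invalid JSON: {exc.msg}"]
    if not isinstance(parsed, dict):
        return ["Top-level JSON value must be an object"]
    problems = [f"Missing field: {name}" for name in sorted(REQUIRED_FIELDS - set(parsed))]
    if "uncertainty" in parsed and parsed["uncertainty"] not in UNCERTAINTY_LEVELS:
        problems.append("'uncertainty' must be one of none|low|medium|high")
    return problems


def two_tier_validate(draft: str, semantic_verifier: Callable[[str], bool]) -> dict:
    """Escalate to the expensive semantic verifier only if tier 1 passes."""
    problems = structural_check(draft)
    if problems:
        return {"passed": False, "tier": "structural", "reasons": problems}
    passed = semantic_verifier(draft)
    return {"passed": passed, "tier": "semantic", "reasons": [] if passed else ["Semantic check failed"]}


calls: list[str] = []


def stub_verifier(draft: str) -> bool:
    calls.append(draft)  # record escalations to show which drafts cost tokens
    return True


good = '{"answer": "a", "evidence": ["e"], "assumptions": [], "uncertainty": "low"}'
print(two_tier_validate("not json", stub_verifier)["tier"])  # structural
print(two_tier_validate(good, stub_verifier)["tier"])        # semantic
print(len(calls))                                             # 1
```

Only the structurally valid draft reached the stub, so only that draft would have incurred verifier tokens.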

Route failed validations back to the generator with conditional edges

Conditional edges read the current state and return a routing label. The label maps to a node name in the graph. Per LangGraph's documentation, this is the standard mechanism for loops: "composing common graph structures such as sequences, branches, and loops."

```python
from langgraph.graph import END  # used in the path map when the graph is wired


def route_after_validation(state: AgentState) -> str:
    """
    Routing function for the conditional edge after the validator node.

    Returns 'retry' to loop back to the generator, or 'end' to finish.
    LangGraph applies the validator's update before calling this function,
    so it sees the fresh validation_result and retry_count.
    """
    if state.validation_result and state.validation_result.passed:
        return "end"
    if state.retry_count >= state.max_retries:
        # Hard ceiling reached: route to end with whatever the last draft was
        # rather than looping forever on an unresolvable failure.
        return "end"
    return "retry"
```

The routing function returns "retry" or "end" — explicit string labels, not booleans. The max_retries check is the circuit breaker. LangGraph treats conditional edges as the standard loop mechanism, and the hard ceiling prevents a faulty validator prompt from burning through tokens indefinitely.
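To see exactly where the circuit breaker trips, the sketch below replays the same routing rule. It uses a stdlib dataclass in place of AgentState and a hypothetical simulate driver in place of LangGraph, so it illustrates only the counting logic. One consequence is worth noticing. Because retry_count counts failed validations and the check is >=, max_retries=3 allows at most three generator calls in total: the first attempt plus two retries.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LoopState:
    """Stand-in for the routing-relevant AgentState fields."""
    passed: bool = False
    retry_count: int = 0
    max_retries: int = 3


def route_after_validation(state: LoopState) -> str:
    """Same decision order as the routing function above."""
    if state.passed:
        return "end"
    if state.retry_count >= state.max_retries:
        return "end"  # circuit breaker
    return "retry"


def simulate(max_retries: int, passes_on_attempt: Optional[int] = None) -> tuple[int, bool]:
    """Hypothetical driver: returns (generator calls, passed)."""
    state = LoopState(max_retries=max_retries)
    generator_calls = 0
    while True:
        generator_calls += 1  # generator node runs
        state.passed = passes_on_attempt == generator_calls  # validator verdict
        if not state.passed:
            state.retry_count += 1  # validator increments retry_count on failure
        if route_after_validation(state) == "end":
            return generator_calls, state.passed


print(simulate(3))                       # (3, False): ceiling reached
print(simulate(3, passes_on_attempt=2))  # (2, True)
```

If you want max_retries to mean "retries after the first attempt", change the comparison to state.retry_count > state.max_retries.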

Step 3: Wire the Full Graph and Run a Copy-Paste Example

Graph compilation happens after all nodes and edges are registered. As the LangGraph.js API reference states: "After adding nodes and edges to your graph, you must call .compile() on it before you can use it." The same applies to the Python SDK. LangSmith tracing is what makes the compiled graph debuggable when a retry loop behaves unexpectedly.

Complete reference implementation for research, coding, or analysis tasks

This is the full end-to-end implementation: generator, validator, conditional routing, and compiled graph in a single file. Copy this into correction_loop.py and run it after setting your API keys. The example is easiest to inspect with LangSmith enabled, because each iteration appears as a traceable node span. Arize Phoenix can be added on the same code path when you want automatic instrumentation across node invocations.

```python
"""
correction_loop.py: LangGraph self-correction loop reference implementation.

Requires: Python 3.10+, LangGraph 0.2+, langchain-openai, langchain-anthropic,
pydantic>=2.0, and the OPENAI_API_KEY and ANTHROPIC_API_KEY env vars.

Optional tracing:
    export LANGCHAIN_TRACING_V2=true
    export LANGCHAIN_API_KEY=<your LangSmith key>
"""

from __future__ import annotations

from typing import Annotated, Optional

from pydantic import BaseModel, Field
from langchain_openai import ChatOpenAI
from langchain_anthropic import ChatAnthropic
from langchain_core.messages import HumanMessage, SystemMessage
from langchain_core.output_parsers import PydanticOutputParser
from langgraph.graph import StateGraph, END
from langgraph.graph.message import add_messages

# ── State ──────────────────────────────────────────────────────────────────────

class ValidationResult(BaseModel):
    passed: bool
    reasons: list[str] = Field(default_factory=list)
    correction_instructions: Optional[str] = None
    confidence: float = Field(ge=0.0, le=1.0, default=1.0)


class AgentState(BaseModel):
    task: str
    draft: Optional[str] = None
    validation_result: Optional[ValidationResult] = None
    correction_instructions: Optional[str] = None
    retry_count: int = 0
    max_retries: int = 3
    final_output: Optional[str] = None
    history: Annotated[list, add_messages] = Field(default_factory=list)

# ── Prompts ────────────────────────────────────────────────────────────────────

GENERATOR_SYSTEM = """Respond ONLY in this JSON format:
{
  "answer": "<direct answer>",
  "evidence": ["<supporting fact 1>", "<supporting fact 2>"],
  "assumptions": ["<assumption if any>"],
  "uncertainty": "<none|low|medium|high>"
}
Every claim in 'answer' must have a corresponding entry in 'evidence'."""

# Plain string (not an f-string or template), so braces are written literally.
VALIDATOR_SYSTEM = """You are a strict factual validator.
Evaluate the draft and return ONLY a JSON object matching:
{"passed": bool, "reasons": [str], "correction_instructions": str|null, "confidence": float}
Fail the draft if:
- Any claim in 'answer' lacks supporting evidence
- 'uncertainty' is inconsistent with evidence quality
- The JSON structure is malformed or incomplete"""

# ── Nodes ──────────────────────────────────────────────────────────────────────

def generator_node(state: AgentState) -> dict:
    llm = ChatOpenAI(model="gpt-4o", temperature=0.2)
    correction = (
        f"\n\n## Fix Required\n{state.correction_instructions}"
        if state.correction_instructions else ""
    )
    messages = [
        SystemMessage(content=GENERATOR_SYSTEM),
        HumanMessage(content=f"Task: {state.task}{correction}"),
    ]
    response = llm.invoke(messages)
    # Clear consumed instructions so they cannot leak into a later iteration.
    return {"draft": response.content, "correction_instructions": None}


def validator_node(state: AgentState) -> dict:
    parser = PydanticOutputParser(pydantic_object=ValidationResult)
    llm = ChatAnthropic(model="claude-3-5-sonnet-20241022", temperature=0.0)
    messages = [
        SystemMessage(content=VALIDATOR_SYSTEM),
        HumanMessage(content=f"Task: {state.task}\n\nDraft:\n{state.draft}"),
    ]
    response = llm.invoke(messages)
    try:
        result = parser.parse(response.content)
    except Exception:
        # Unparseable verdict: record a zero-confidence failure. It still counts
        # toward retry_count, so the max_retries ceiling bounds the loop.
        result = ValidationResult(
            passed=False,
            reasons=["Validator parse error"],
            correction_instructions="Ensure output matches the JSON schema.",
            confidence=0.0,
        )
    new_retry = state.retry_count + (0 if result.passed else 1)
    return {
        "validation_result": result,
        "retry_count": new_retry,
        "correction_instructions": result.correction_instructions,
        "final_output": state.draft if result.passed else None,
    }

# ── Routing ────────────────────────────────────────────────────────────────────

def route_after_validation(state: AgentState) -> str:
    if state.validation_result and state.validation_result.passed:
        return "end"
    if state.retry_count >= state.max_retries:
        return "end"  # circuit breaker: surface best available draft upstream
    return "retry"

# ── Graph Assembly ─────────────────────────────────────────────────────────────

def build_graph():
    """Register nodes and edges, then compile; returns the invokable compiled graph."""
    graph = StateGraph(AgentState)
    graph.add_node("generator", generator_node)
    graph.add_node("validator", validator_node)
    graph.set_entry_point("generator")
    graph.add_edge("generator", "validator")
    graph.add_conditional_edges(
        "validator",
        route_after_validation,
        {"retry": "generator", "end": END},
    )
    return graph.compile()

# ── Entry Point ────────────────────────────────────────────────────────────────

if __name__ == "__main__":
    app = build_graph()
    # Initial state as a dict; omitted fields take their AgentState defaults.
    result = app.invoke({"task": "What caused the 2008 financial crisis? Cite specific mechanisms."})
    print(f"Passed validation: {result['validation_result'].passed}")
    print(f"Retries used: {result['retry_count']}")
    print(f"Final output:\n{result['final_output'] or result['draft']}")
```

```bash
$ export OPENAI_API_KEY=sk-...
$ export ANTHROPIC_API_KEY=sk-ant-...
$ export LANGCHAIN_TRACING_V2=true   # optional: enables LangSmith tracing
$ export LANGCHAIN_API_KEY=ls__...   # optional: your LangSmith API key
$ python correction_loop.py
```

Expected output on a typical well-formed task:

```text
Passed validation: True
Retries used: 0
Final output:
{"answer": "...", "evidence": [...], "assumptions": [], "uncertainty": "low"}
```

To instrument with Arize Phoenix, add from openinference.instrumentation.langchain import LangChainInstrumentor and call LangChainInstrumentor().instrument() before graph construction. Pass it a tracer provider that exports to your Phoenix instance, for example tracer_provider=register() using register from phoenix.otel. Phoenix then captures execution flow across every loop iteration, including each validator verdict and retry.

Verify the Loop, Trace the Failures, and Measure Cost

Self-correction can reduce hallucination rates by approximately 40% versus zero-shot reasoning, but its token cost is not evenly distributed across tasks. A task whose first draft passes validation costs one extra validator call over zero-shot. A task where the generator repeatedly produces marginal drafts can hit the retry ceiling and cost several times the baseline. Measure the distribution of loop depth, not just the average.
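Here is a minimal way to look at the distribution rather than the mean. It assumes you have exported the final retry_count for each finished task from LangSmith, Phoenix, or your own logs. The sample values are invented for illustration. The attempt count follows from the routing rule in this article: a task that passed after k failed validations made k + 1 attempts, and a task that hit the ceiling made max_retries attempts.

```python
import statistics
from collections import Counter


def loop_depth_report(retry_counts: list[int], max_retries: int) -> dict:
    """Summarize generator attempts per task from final retry_count values."""
    attempts = [min(rc + 1, max_retries) for rc in retry_counts]
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = statistics.quantiles(attempts, n=20, method="inclusive")[-1]
    return {
        "mean_attempts": statistics.fmean(attempts),
        "p95_attempts": p95,
        "hit_ceiling": sum(rc >= max_retries for rc in retry_counts) / len(retry_counts),
        "histogram": dict(sorted(Counter(attempts).items())),
    }


# Invented sample: 20 tasks, 14 pass first time, 3 need one retry, 3 hit the ceiling.
sample = [0] * 14 + [1] * 3 + [3] * 3
print(loop_depth_report(sample, max_retries=3))
```

In this sample the mean stays under 1.5 attempts while the P95 sits at the ceiling, which is exactly the gap an average hides.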

| Metric | Zero-Shot Baseline | Self-Correction Loop (3 max retries) |
|---|---|---|
| Pass rate (first attempt) | ~60% | ~60% (unchanged) |
| Overall pass rate (after retries) | ~60% | qualitative improvement; depends on task mix |
| Avg. correction rate per task | 0% | ~30% |
| Avg. loop count | 1.0 | >1.0 when retries are triggered |
| Avg. token cost multiplier | 1.0× | higher than baseline due to validator and regeneration calls |
| P95 token cost multiplier | 1.0× | higher on tasks that hit the retry ceiling |

The P95 row matters for cost budgets. Tasks at the tail, where the validator rejects every draft up to the retry ceiling, drive disproportionate cost. LangSmith records each span with token counts. Filter for runs that ended with a retry_count of 2 or more, for example by logging retry_count as run metadata or reading it from the final state. This identifies the task categories that trigger repeated failures. Then decide whether to improve the generator prompt, relax the validator criteria, or exclude those task types from the loop. Arize Phoenix serves the same observability role when you want annotations and session tracking alongside traces.
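Once runs are exported, a few lines of standard-library Python will rank task categories by how often they need two or more retries. The record shape (a category plus a final retry_count) is an assumption about your export format. It is not a LangSmith or Phoenix schema, and the sample rows are invented.

```python
from collections import defaultdict


def retry_hotspots(records: list[dict], min_retries: int = 2) -> list[tuple[str, float, int]]:
    """Rank categories by the share of tasks with retry_count >= min_retries.

    Returns (category, share, task_count) tuples, worst first.
    """
    totals: dict[str, int] = defaultdict(int)
    heavy: dict[str, int] = defaultdict(int)
    for rec in records:
        totals[rec["category"]] += 1
        if rec["retry_count"] >= min_retries:
            heavy[rec["category"]] += 1
    ranked = [(cat, heavy[cat] / n, n) for cat, n in totals.items()]
    return sorted(ranked, key=lambda row: (-row[1], row[0]))


runs = [
    {"category": "citation", "retry_count": 3},
    {"category": "citation", "retry_count": 2},
    {"category": "citation", "retry_count": 0},
    {"category": "summary", "retry_count": 0},
    {"category": "summary", "retry_count": 1},
]
for category, share, n in retry_hotspots(runs):
    print(f"{category}: {share:.0%} of {n} tasks needed 2+ retries")
```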

Add tests for termination, retry limits, and malformed outputs

Every cyclic LangGraph graph needs tests for three failure modes: hitting the retry ceiling, receiving malformed validator output, and losing state keys between iterations. The graph semantics that make this testable are documented in LangGraph's Graph API.

```python
from unittest.mock import MagicMock, patch

from correction_loop import AgentState, build_graph, validator_node

DRAFT = '{"answer": "x", "evidence": [], "assumptions": [], "uncertainty": "low"}'


def test_retry_ceiling_terminates():
    """The graph must stop at max_retries, never loop past it."""
    # The nodes construct ChatOpenAI/ChatAnthropic at call time, so patching the
    # module attributes swaps in fakes without touching the compiled graph.
    with patch("correction_loop.ChatOpenAI") as gen_llm, patch("correction_loop.ChatAnthropic") as val_llm:
        gen_llm.return_value.invoke.return_value.content = DRAFT
        val_llm.return_value.invoke.return_value.content = "NOT JSON"  # every verdict fails
        result = build_graph().invoke({"task": "Test task", "max_retries": 2})
    assert result["retry_count"] == 2, "Loop exceeded max_retries ceiling"
    assert result["final_output"] is None  # no passing draft
    assert gen_llm.return_value.invoke.call_count == 2


def test_malformed_validator_output_does_not_raise():
    """Validator parse errors must be caught and treated as soft failures."""
    state = AgentState(task="Test", draft=DRAFT)
    with patch("correction_loop.ChatAnthropic") as val_llm:
        val_llm.return_value.invoke.return_value.content = "THIS IS NOT JSON"
        result = validator_node(state)
    assert result["validation_result"].passed is False
    assert result["validation_result"].confidence == 0.0


def test_correction_instructions_survive_one_iteration():
    """Instructions from a failed verdict must reach the next generator call."""
    fail = MagicMock(content='{"passed": false, "reasons": ["no evidence"], '
                             '"correction_instructions": "Add evidence.", "confidence": 0.9}')
    ok = MagicMock(content='{"passed": true, "reasons": [], '
                           '"correction_instructions": null, "confidence": 0.95}')
    with patch("correction_loop.ChatOpenAI") as gen_llm, patch("correction_loop.ChatAnthropic") as val_llm:
        gen_llm.return_value.invoke.return_value.content = DRAFT
        val_llm.return_value.invoke.side_effect = [fail, ok]
        result = build_graph().invoke({"task": "Test", "max_retries": 3})
    assert result["retry_count"] == 1
    assert result["final_output"] == DRAFT
    second_prompt = gen_llm.return_value.invoke.call_args_list[1].args[0][1].content
    assert "Add evidence." in second_prompt
    assert result["correction_instructions"] is None  # cleared after use
```

Common Failure Modes and How to Stop Infinite Loops

The three practical failure modes in production correction loops are bad validator prompts, over-triggered retries, and state-loss bugs. Each has a distinct signature in LangSmith traces and Arize Phoenix sessions.

Watch Out:

- Bad validator prompts produce inconsistent pass/fail decisions for semantically equivalent drafts. Symptom: the same draft passes on retry 2 but failed on retry 1 with no actual change. Fix: set the validator temperature to 0.0 and tighten the pass/fail criteria into binary checks.
- Over-triggered retries happen when the validator's standard is higher than the generator can reliably meet. Symptom: retry_count always reaches max_retries. Fix: audit what percentage of first-draft failures are "technically correct but stylistically wrong", then relax the validator or tighten the generator prompt's output constraints.
- State-loss bugs show up as routing decisions made on stale correction_instructions or on a retry_count that didn't increment. Symptom: the graph loops without ever changing the draft. Fix: always update retry_count in the validator node, not in the routing function, and have the generator node reset correction_instructions to None once it has used them.

LangGraph's loop architecture makes these bugs explicit rather than silent — a trace showing identical drafts across retries is immediate evidence of a stale-state bug. Without tracing, you'd only see token costs mounting.
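The following is a minimal sketch of that check. It assumes you can pull the sequence of drafts a single run produced, for example from the generator spans in a trace. find_stale_retries is a hypothetical helper. It flags any attempt whose draft is identical to the one before it after trimming whitespace, which is the signature of correction instructions that never reached the generator.

```python
def find_stale_retries(drafts: list[str]) -> list[int]:
    """Return 1-based attempt numbers whose draft repeats the previous draft."""
    normalized = [d.strip() for d in drafts]  # ignore leading/trailing whitespace noise
    return [i + 1 for i in range(1, len(normalized)) if normalized[i] == normalized[i - 1]]


print(find_stale_retries(['{"answer": "A"}', '{"answer": "A"}', '{"answer": "B"}']))  # [2]
```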

When the verifier disagrees but the answer is still acceptable

Borderline cases — where the validator's confidence is below 1.0 but the draft is substantively correct — are the most expensive failure mode to handle naively. Auto-looping on every low-confidence verdict wastes tokens without improving quality.

Pro Tip: Add a confidence threshold to your routing function. If validation_result.passed is False but validation_result.confidence < 0.6, route to a human review queue rather than an automatic retry. Arize Phoenix provides uncertainty quantification tooling specifically for this pattern: "teams need a model-agnostic way to quantify uncertainty and triage risky answers." Set your threshold based on task stakes. A research summary at 0.55 confidence might be acceptable, but a medical dosage calculation at the same score should never auto-accept. After a bounded number of retries on borderline cases (typically 2), always route to review rather than looping further.
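The Pro Tip's routing can be sketched as a variant of route_after_validation. The 0.6 threshold and the two-retry cap come from the tip. The "human_review" label is a hypothetical route name. The dataclasses stand in for ValidationResult and AgentState so the example runs without LangGraph. In a real graph you would map "human_review" to a review node in the path map passed to add_conditional_edges. That node is hypothetical and would replace the plain end-at-ceiling branch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScoredVerdict:
    """Stand-in for ValidationResult."""
    passed: bool
    confidence: float = 1.0


@dataclass
class RouteState:
    """Stand-in for the AgentState fields the router reads."""
    validation_result: Optional[ScoredVerdict]
    retry_count: int = 0


def route_with_review(state: RouteState, threshold: float = 0.6, review_after: int = 2) -> str:
    verdict = state.validation_result
    if verdict is not None and verdict.passed:
        return "end"
    if verdict is not None and verdict.confidence < threshold:
        return "human_review"  # low-confidence failure: let a person decide
    if state.retry_count >= review_after:
        return "human_review"  # bounded retries used up: stop auto-looping
    return "retry"


print(route_with_review(RouteState(ScoredVerdict(passed=False, confidence=0.4))))                 # human_review
print(route_with_review(RouteState(ScoredVerdict(passed=False, confidence=0.9), retry_count=1)))  # retry
```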

FAQ: Practical questions about verifier-driven agent feedback

What is a self-correction loop in AI agents?

A self-correction loop is a cyclic graph pattern where a generator node produces output, a validator node evaluates it against explicit criteria, and a conditional edge routes failures back to the generator with targeted correction instructions. The loop terminates on a passing validation or a hard retry ceiling. LangGraph provides the graph primitives, while LangSmith can trace each iteration.

How do you implement a feedback loop in LangGraph?

Define a typed State object with draft, validation_result, correction_instructions, retry_count, and max_retries fields. Add a generator node and a validator node. Then register a conditional edge on the validator with add_conditional_edges, using a routing function that returns "retry" (mapped to the generator) or "end" (mapped to END). Call graph.compile() before invoking. The LangGraph Graph API supports loops natively, so this is not a workaround.

Does self-correction reduce hallucinations?

Yes, measurably. A reflective feedback loop using a secondary verifier reduces hallucination rates by approximately 40% versus zero-shot reasoning. The mechanism is straightforward: the validator catches unsupported claims and the re-prompt forces the generator to add evidence or retract. The cost is a higher token usage profile per task than a zero-shot baseline.

When should you use a verifier model instead of programmatic checks?

Use programmatic checks (JSON schema validation, field presence, regex, arithmetic) whenever the acceptance criterion is machine-decidable — they add near-zero token cost and are fully deterministic. Escalate to a verifier LLM like Claude 3.5 Sonnet or Llama 3.1 70B when correctness requires semantic judgment: evidence quality assessment, logical consistency across multiple claims, or domain-specific accuracy. A two-tier approach — programmatic first, LLM verifier on ambiguous cases — minimizes the token multiplier while preserving semantic validation where it matters. Always instrument both tiers with LangSmith so you can measure which tier is triggering most retries.

Sources and References

- LangGraph GitHub Repository — Official source for LangGraph; stateful, multi-actor graph workflows for language agents

- LangGraph Graph API Documentation — Canonical reference for state, sequences, branches, and loops in LangGraph

- OpenAI Structured Outputs — OpenAI announcement of schema-reliable JSON generation

- OpenAI Structured Outputs Guide — Developer documentation distinguishing JSON mode from Structured Outputs

- Anthropic Claude 3.5 Sonnet Announcement — Benchmark data for Claude 3.5 Sonnet on agentic coding tasks (64% vs. 38% for Claude 3 Opus)

- LangSmith — LangChain's tracing and observability platform for LLM applications

- Arize Phoenix Homepage — AI observability platform; tracing, quality annotations, and session tracking

- Arize Phoenix Tracing Tutorial — Implementation guide for Phoenix tracing in LLM workflows

- Arize Phoenix UQLM Integration — Uncertainty quantification and hallucination-risk tooling for production LLM systems

- Meta Llama 3.1 — Official Meta announcement covering the Llama 3.1 model family, including 70B

Keywords: LangGraph 0.2, LangChain, Python 3.10, OpenAI JSON mode, Pydantic v2, Claude 3.5 Sonnet, Llama 3.1 70B, SWE-bench, AgentBench, WebArena, LangSmith, Arize Phoenix, structured output, conditional edges, secondary verifier model