What the latest model-merging benchmarks actually show

A systematic study published at ICLR 2025 and available on OpenReview and arXiv (2410.03617) is among the most comprehensive benchmarks of model merging at scale. It covers four merge methods, model sizes from 1B to 64B parameters, and up to eight expert models. Its central finding challenges a common practitioner assumption: at scale, the method you pick matters far less than the quality of the checkpoints you start with and how many of them you merge.

Bottom Line: The benchmark's five headline findings, in order of practical weight:

1. Base model quality dominates. Merging works better when experts come from strong base models with good zero-shot performance. This is the single largest lever.
2. Larger models are easier to merge. Merge behavior stabilizes as parameter count grows from 1B to 64B.
3. Merging consistently improves generalization. Merged models outperform individual experts on held-out tasks across the study's evaluation suite.
4. Larger models can absorb more experts. Notably, on zero-shot held-out tasks, merging eight large expert models often generalizes better than a dedicated multitask-trained model.
5. Method differences shrink at scale. Averaging, Task Arithmetic, Dare-TIES, and TIES-Merging converge in behavior as model size grows, so investing heavily in method tuning at large scale returns diminishing value.

Strongest practical implication: Spend your budget on selecting or training stronger base model checkpoints and collecting more high-quality expert fine-tunes before you spend time tuning merge hyperparameters.

The benchmark's value is that it isolates how merge quality changes with base model quality, model size, expert count, and method choice.

How the benchmark study was set up

The study's scope is defined precisely: the researchers merged fully fine-tuned models across model sizes from 1B to 64B parameters and merged up to eight different expert models, comparing four methods throughout. As the arXiv abstract states directly: "We experiment with merging fully fine-tuned models using 4 popular merging methods -- Averaging, Task Arithmetic, Dare, and TIES -- across model sizes ranging from 1B-64B parameters and merging up to 8 different expert models."

The 64× parameter spread gives the benchmark real resolution on how merge behavior tracks with scale — not just whether merging works at a single size, but whether the dynamics change as you move from small to large models. The expert count goes from 2 through 8, answering the practitioner question of whether adding more fine-tuned checkpoints keeps paying off.

| Dimension | Range | Notes |
|---|---|---|
| Model size | 1B – 64B parameters | 64× spread; both small and large regimes covered |
| Expert count | Up to 8 | Tests incremental gains from adding more fine-tunes |
| Merge methods | 4 | Averaging, Task Arithmetic, Dare-TIES, TIES-Merging |
| Evaluation | Held-in + zero-shot held-out | Both in-distribution and unseen task generalization |

The design answers "how many models can you merge?" not just in principle but empirically: up to eight, with the caveat that larger models handle higher expert counts more reliably.

Model sizes, expert counts, and merge methods covered

All four merge methods were applied uniformly across the full scale range, making method comparisons consistent rather than cherry-picked at favorable scales.

| Method | Parameter scales tested | Expert counts tested | Category |
|---|---|---|---|
| Averaging | 1B – 64B | 2 – 8 | Baseline; no learned weighting |
| Task Arithmetic | 1B – 64B | 2 – 8 | Task-vector addition |
| TIES-Merging | 1B – 64B | 2 – 8 | Trim, elect sign, merge |
| Dare-TIES | 1B – 64B | 2 – 8 | Drop-and-rescale + TIES |

Each method was applied to the same set of experts at each scale. Differences in results therefore reflect method behavior rather than confounded checkpoint selection. The abstract does not report per-task numbers; precise per-cell results are in the full paper PDF.

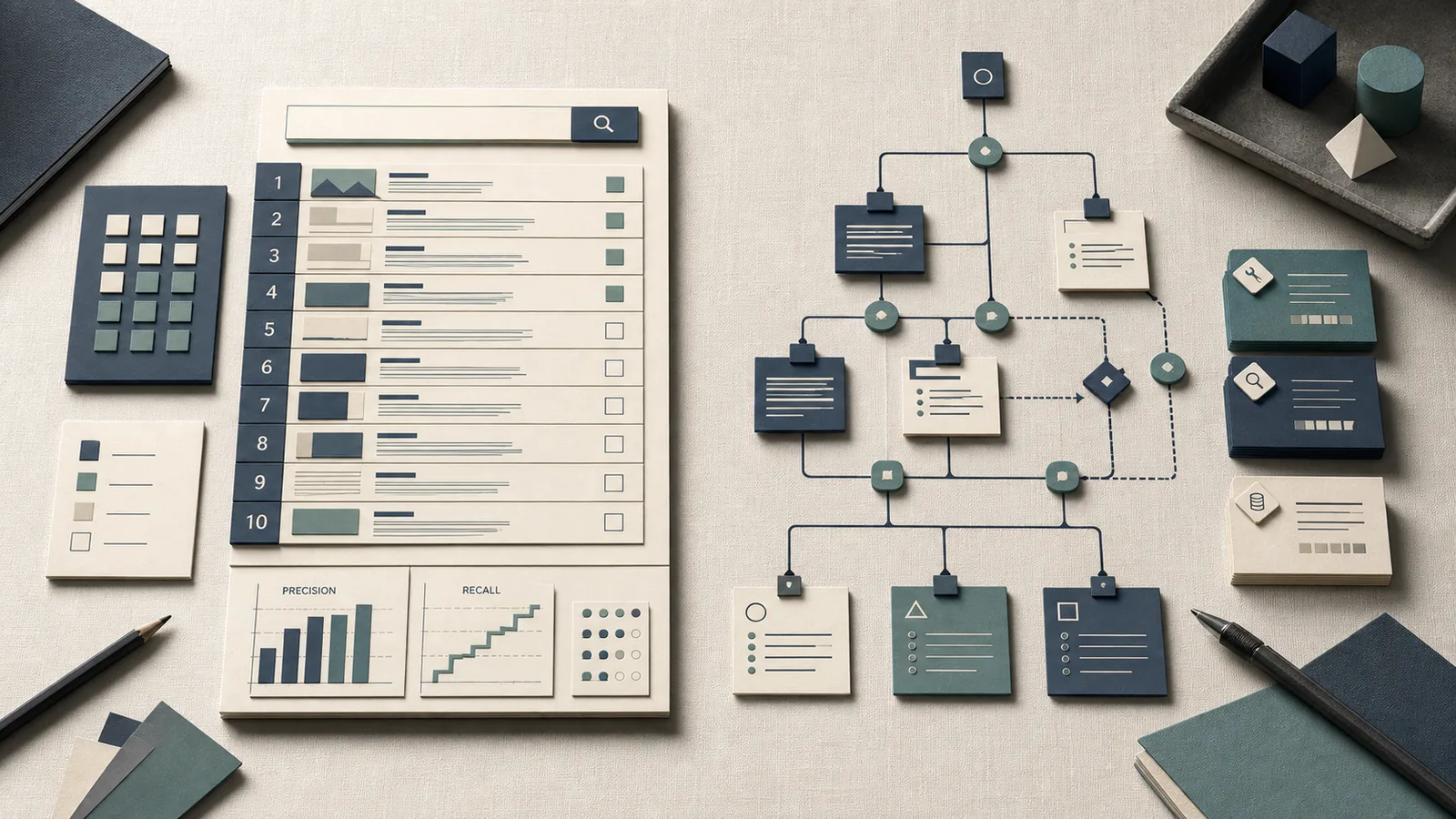

What the evaluation measured and why held-out tasks matter

The study evaluated merged models on two distinct evaluation tracks: held-in tasks (the tasks the expert models were fine-tuned on) and zero-shot generalization to unseen held-out tasks. The OpenReview PDF states this directly: "We evaluate the merged models on both held-in tasks, i.e., the expert's training tasks, and zero-shot generalization to unseen held-out tasks."

Held-in scores confirm that merging does not catastrophically destroy task-specific capability. But held-out scores are the more diagnostic signal: they tell you whether the merged model acquired something beyond a weighted average of its constituent fine-tunes. The benchmark reports that "merging consistently improves generalization capabilities," which is a held-out finding, not a held-in one.

| Evaluation track | What it measures | Why it matters |
|---|---|---|
| Held-in tasks | Performance on each expert's training tasks | Checks that task-specific capability survives merging |
| Zero-shot held-out tasks | Generalization to unseen tasks | Shows whether merging adds transferable capability |

Pro Tip: When evaluating a merge in your own pipeline, never report only held-in accuracy. A merged model that scores well on its constituent tasks but poorly on benchmarks like MMLU or GPQA may be interpolating fine-tune knowledge rather than genuinely generalizing. Zero-shot held-out scores are the signal that separates capacity expansion from mere task averaging — and they are what this study's most important claims rest on.

Practitioners who evaluate only on the tasks their experts were trained on will systematically overestimate the practical value of their merges. The held-out evaluation design is why this benchmark is a more useful reference point than ablations that test only held-in recovery.
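Below is a minimal sketch of reporting both tracks side by side. It assumes you already have per-task accuracies from your own evaluation harness. The function name `merge_report`, the task names, and the scores used with it are hypothetical. The "gap" is a simple illustrative diagnostic, not a metric defined by the study.

```python
from statistics import mean


def merge_report(held_in: dict[str, float], held_out: dict[str, float]) -> dict[str, float]:
    """Summarize a merged model on both evaluation tracks (hypothetical helper).

    held_in:  accuracy on each expert's own training tasks
    held_out: zero-shot accuracy on tasks no expert was trained on
    """
    if not held_in or not held_out:
        # Refuse to produce a one-sided report.
        raise ValueError("report both tracks; one of them is empty")
    in_avg = mean(held_in.values())
    out_avg = mean(held_out.values())
    return {
        "held_in_mean": in_avg,
        "held_out_mean": out_avg,
        # A large positive gap suggests the merge mostly recovers its experts'
        # tasks rather than generalizing to unseen ones.
        "gap": in_avg - out_avg,
    }
```

For example, `merge_report({"task_a": 0.80, "task_b": 0.70}, {"task_c": 0.50, "task_d": 0.60})` reports a held-in mean of 0.75 against a held-out mean of 0.55. Tracking that gap across merges makes held-in-only reporting hard to slip into.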

Why base model quality changes merge outcomes

Base model quality is the study's primary finding and the variable with the largest observed effect on merge success. The OpenReview abstract states: "First, merging works better when experts are constructed from strong base models with good zero-shot performance."

The abstract does not state a mechanism, but one plausible explanation fits what practitioners observe. Model merging operates in weight space. If the base weight geometry is already well structured for general-purpose reasoning (reflected in strong zero-shot scores), task-specific fine-tunes may sit as relatively small perturbations off that base. Such perturbations would be easier to compose without destructive interference than perturbations built on a weak or poorly calibrated base. A strong zero-shot base also gives the merged model better base-level generalization to fall back on when no single expert's knowledge applies directly to a held-out query.
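To make the perturbation intuition concrete, the sketch below computes two simple diagnostics on flattened weights:

- each task vector's norm relative to the base;
- the pairwise cosine similarity between task vectors.

This is an illustrative assumption about how you might probe interference, not an analysis the study reports. `task_vector_diagnostics` is a hypothetical helper, and real checkpoints would need to be flattened per tensor or streamed rather than held as single arrays.

```python
import numpy as np


def task_vector_diagnostics(base: np.ndarray, experts: list[np.ndarray]) -> dict:
    """Illustrative interference diagnostics on flattened weights (hypothetical).

    Assumes every expert was fine-tuned from `base` and has the same shape,
    and that no task vector is all zeros (cosine would divide by zero).
    """
    taus = [e - base for e in experts]  # task vectors: expert minus base
    base_norm = np.linalg.norm(base)
    # Size of each fine-tune's perturbation relative to the base weights.
    rel_norms = [float(np.linalg.norm(t) / base_norm) for t in taus]
    # Pairwise cosine similarity; strongly negative values mean two experts
    # push the same weights in opposite directions.
    cosines = []
    for i in range(len(taus)):
        for j in range(i + 1, len(taus)):
            denom = np.linalg.norm(taus[i]) * np.linalg.norm(taus[j])
            cosines.append(float(taus[i] @ taus[j] / denom))
    return {"relative_norms": rel_norms, "pairwise_cosines": cosines}
```

Read small relative norms as "small perturbations". Cosines near zero suggest the experts occupy mostly separate directions, while cosines near -1 flag experts that would partly cancel under averaging.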

| Base model zero-shot quality | Merge outcome (directional) | Practical implication |
|---|---|---|
| Strong zero-shot generalist | Better post-merge held-out scores; more stable across methods | Prioritize selecting top-tier base checkpoints |
| Moderate zero-shot capability | Moderate merge gains; higher variance across methods | Method choice becomes more relevant as a compensator |
| Weak or misaligned base | Merging can amplify capability gaps | Avoid merging; fix the base first |

The study does not publish a numeric cutoff for what constitutes "strong" — the conclusion is directional.

Why strong zero-shot performance before merging is a good sign

Treating pre-merge zero-shot scores as a quality gate is directly supported by the benchmark's first finding. If a checkpoint struggles with zero-shot reasoning before fine-tuning, merging multiple fine-tuned versions of it does not recover that capability gap — it distributes a weakness across the merged weight space.

Watch Out: Merging can amplify weaknesses as readily as it combines strengths. Your expert checkpoints may come from a base model that is poorly instruction-tuned, undertrained on general reasoning tasks, or significantly misaligned in output format. In that case, expect post-merge quality on held-out tasks to be bounded by that weak base. The benchmark finds that merging works better from strong zero-shot bases, so treat strong zero-shot performance as a prerequisite rather than a bonus. Do not rely on merge methods to compensate for weak bases.

The selection implication is concrete: before running any merge, evaluate each candidate base model (not just the fine-tuned experts) on a held-out zero-shot benchmark. Strong base-level scores predict better merge outcomes in this study's framework.
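Here is a minimal sketch of that gate. It assumes you have already scored each candidate base on a held-out zero-shot suite. The study publishes no numeric cutoff, so `min_score` is a hypothetical, project-specific threshold. The checkpoint names in the tests are placeholders.

```python
def rank_bases(zero_shot: dict[str, float], min_score: float) -> list[str]:
    """Return candidate base checkpoints that pass a zero-shot gate, best first.

    min_score is a hypothetical threshold you choose for your own project;
    the benchmark reports the base-quality effect only directionally.
    """
    passing = [(name, score) for name, score in zero_shot.items() if score >= min_score]
    # Highest zero-shot score first; Python's sort is stable, so ties keep input order.
    passing.sort(key=lambda item: item[1], reverse=True)
    return [name for name, _ in passing]
```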

Why larger models are easier to merge

Across the full 1B-to-64B range studied, larger models consistently proved easier to merge. The OpenReview abstract states this as the study's second headline finding: "Second, larger models facilitate easier merging."

The 64× parameter spread in the benchmark is significant because it means the trend is observable across a genuine range, not just between two adjacent sizes. The practical meaning of the benchmark's size sweep is that merge behavior becomes more tractable as parameter count increases.

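| Parameter scale | Merge stability | Method sensitivity | Max reliable expert count |
|---|---|---|---|
| 1B – 7B | Lower | Higher | Fewer experts before degradation |
| Over 7B, under 30B | Moderate | Moderate | Intermediate |
| 30B – 64B | Higher | Lower | Up to 8 experts studied |

These scale bands are illustrative groupings of the study's 1B–64B range, not its exact model sizes, and the trends in each row are directional.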

This does not mean that larger models always produce better absolute task scores — the benchmark is about mergeability, not raw capability. A 64B merge does not trivially outperform a 7B expert on every task. What improves is the stability and reliability of the merge operation itself: weight-space interference is less destructive, and the merged model generalizes more predictably.

What changes when the model size grows from 1B to 64B

The 1B-to-64B range matters for more than compute cost. It plausibly changes how the weight space responds to a superposition of task vectors. One intuition is that at larger parameter counts, each task-specific fine-tune is a smaller fractional perturbation of the total weight space. That would leave more room for multiple perturbations to coexist without overwriting each other. The benchmark's fourth finding is consistent with this: "Fourth, we can better merge more expert models when working with larger models." Merging eight experts reliably is most feasible at the larger end of the scale range.
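The "more room" intuition has a simple geometric analogue. Independent random directions in high-dimensional space are nearly orthogonal, and their average absolute cosine shrinks roughly in proportion to 1/sqrt(dim). The toy below measures this for random Gaussian vectors. It rests on a strong assumption (real task vectors are structured, not isotropic noise), so treat it as an analogy, not evidence from the study.

```python
import numpy as np


def mean_abs_cosine(dim: int, n_vectors: int = 8, trials: int = 200, seed: int = 0) -> float:
    """Average |cosine| between independent random 'task vectors' of size `dim`.

    Toy model only: the vectors are isotropic Gaussian, unlike real task vectors.
    """
    rng = np.random.default_rng(seed)
    total, count = 0.0, 0
    for _ in range(trials):
        v = rng.standard_normal((n_vectors, dim))
        v /= np.linalg.norm(v, axis=1, keepdims=True)  # unit-normalize each row
        gram = v @ v.T                                 # all pairwise cosines
        iu = np.triu_indices(n_vectors, k=1)           # distinct pairs only
        total += np.abs(gram[iu]).sum()
        count += iu[0].size
    return total / count
```

Comparing `mean_abs_cosine(16)` with `mean_abs_cosine(4096)` shows the average overlap between eight random directions dropping sharply as dimension grows. In this toy, that is the sense in which a larger space leaves more room for several perturbations to coexist.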

Pro Tip: Scale improves merge stability, not task performance per se. A larger model that merges cleanly can still underperform a smaller specialist on a specific narrow task. Treat larger scale as a prerequisite for running high-expert-count merges reliably — not as a guarantee that every merged output metric will be better than a smaller fine-tune. Held-out generalization is where the scale benefit is most consistently observed.

How expert count affects zero-shot generalization

In this benchmark, adding more expert models improved zero-shot generalization on held-out tasks, up to and including eight experts, with the clearest gains for large models. The OpenReview abstract reports: "Notably, when merging eight large expert models, the merged models often generalize better compared to the multitask trained models." The feasibility of merging eight experts is itself scale-dependent: the study's fourth finding is that merging more experts becomes more tractable as model size increases.

| Expert count | Held-out zero-shot trend | Condition |
|---|---|---|
| 2 experts | Modest generalization gain over individual expert | Any scale |
| 4 experts | Consistent held-out improvement | Moderate to large scale |
| 8 experts | Can exceed multitask-trained model on held-out tasks | Large scale (30B–64B range); strong base required |

The practical message is directional: more experts reliably help generalization at large scale. Eight is the limit of what this study tested, not a demonstrated ceiling for the effect.

Why eight large experts can beat multitask training

The finding that eight large merged experts can outperform a multitask-trained model on held-out tasks is the benchmark's most practically significant result. Multitask training requires orchestrating joint optimization across tasks, balancing gradient conflicts, and tuning loss weights — a nontrivial engineering cost. Merging eight independently fine-tuned experts sidesteps that cost while achieving equivalent or better held-out generalization in the study's setting.

A likely mechanism is that each expert's fine-tuning is unconstrained by other tasks' gradients, so each expert captures its task-specific signal cleanly. The merge then superposes those signals in weight space. The OpenReview abstract lists improved generalization among the benefits of merging: "Model merging aims to combine multiple expert models into a more capable single model, offering benefits such as reduced storage and serving costs, improved generalization, and support for decentralized model development."
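The superposition step can be sketched for the two simplest methods the study compares, Averaging and Task Arithmetic. The sketch operates on dictionaries of NumPy arrays standing in for model state dicts. It is a minimal illustration, not MergeKit's or the paper's implementation, and the helper names are hypothetical. It uses a single scalar `lam` for Task Arithmetic; per-task weights are a common variant.

```python
import numpy as np

# Hypothetical stand-in for a model state dict: parameter name -> weights.
StateDict = dict[str, np.ndarray]


def average_merge(experts: list[StateDict]) -> StateDict:
    """Averaging: element-wise mean of each parameter across experts."""
    return {k: np.mean([e[k] for e in experts], axis=0) for k in experts[0]}


def task_arithmetic_merge(base: StateDict, experts: list[StateDict], lam: float) -> StateDict:
    """Task Arithmetic: base + lam * sum of task vectors (expert - base)."""
    merged = {}
    for k, w in base.items():
        # Task vector of each expert for this parameter, summed across experts.
        task_sum = sum(e[k] - w for e in experts)
        merged[k] = w + lam * task_sum
    return merged
```

When all n experts share the same base, Task Arithmetic with `lam = 1/n` reduces exactly to Averaging, since base + (1/n)·Σ(expertᵢ − base) = (1/n)·Σ expertᵢ. Larger `lam` values amplify the task vectors instead of diluting them.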

Watch Out: The eight-expert-beats-multitask result is qualified by "large" models and "strong" bases. The abstract specifies "merging eight large expert models", so the finding should not be assumed to transfer to small-model recipes (1B–7B range) or to low-quality base checkpoints. Practitioners running merges on Mistral 7B-scale models with a weak base should not expect this outcome. Applying the large-scale finding to small-scale merges is an easy overclaim to make about this research.

Why merge method differences shrink at larger scale

At large parameter counts, Averaging, Task Arithmetic, Dare-TIES, and TIES-Merging produce very similar results. The OpenReview abstract states this as the fifth headline finding: "Fifth, different merging methods behave very similarly at larger scales." This matters because practitioners often spend their time selecting and tuning merge methods. At the scales where merging is most useful, that effort returns little compared to checkpoint selection.

| Method | Small-scale differentiation | Large-scale differentiation | Primary mechanism |
|---|---|---|---|
| Averaging | Baseline | Converges toward other methods | Simple parameter mean |
| Task Arithmetic | Modest edge at small scale | Converges | Task-vector addition; scale λ per task |
| TIES-Merging | Handles sign conflicts | Converges | Trim + elect sign + merge |
| Dare-TIES | Handles redundancy | Converges | Sparse rescaling + TIES |

Exact method-by-method numeric deltas are in the paper's result tables and figures — the abstract does not enumerate them. The directional conclusion is clear: method choice is a second-order variable at large scale, whereas base model quality and expert count are first-order.
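The mechanisms in the table's last column can be sketched on flattened weight arrays:

- TIES trims each task vector to its largest-magnitude entries.
- It then elects a per-parameter sign from the summed mass.
- It averages only the entries that agree with the elected sign.
- DARE randomly drops task-vector entries and rescales the survivors to preserve the expected update.

Dare-TIES is shown here as DARE followed by the TIES sign election and disjoint mean, which is one common combination. The study's exact settings (density, drop rate, scaling) may differ, and the default values below are assumptions.

```python
import numpy as np


def trim_top_k(tau: np.ndarray, density: float) -> np.ndarray:
    """TIES 'trim': keep the largest-magnitude `density` fraction of entries (0 < density <= 1)."""
    k = int(np.ceil(density * tau.size))
    if k == 0:
        return np.zeros_like(tau)
    # Threshold at the k-th largest magnitude; entries tied at the threshold are kept.
    thresh = np.sort(np.abs(tau).ravel())[-k]
    return np.where(np.abs(tau) >= thresh, tau, 0.0)


def dare(tau: np.ndarray, drop_p: float, rng: np.random.Generator) -> np.ndarray:
    """DARE: drop entries with probability drop_p, rescale survivors by 1 / (1 - drop_p)."""
    if not 0.0 <= drop_p < 1.0:
        raise ValueError("drop_p must be in [0, 1)")
    keep = rng.random(tau.shape) >= drop_p
    return np.where(keep, tau / (1.0 - drop_p), 0.0)


def elect_and_merge(taus: list[np.ndarray]) -> np.ndarray:
    """TIES 'elect sign' + disjoint mean over entries agreeing with the elected sign."""
    stacked = np.stack(taus)
    elected = np.sign(stacked.sum(axis=0))  # sign of the summed mass per parameter
    agree = (np.sign(stacked) == elected) & (stacked != 0)
    counts = agree.sum(axis=0)
    summed = np.where(agree, stacked, 0.0).sum(axis=0)
    # Parameters where no expert agrees (or the sum is exactly zero) stay at zero.
    return np.divide(summed, counts, out=np.zeros_like(summed), where=counts > 0)


def ties_merge(base: np.ndarray, experts: list[np.ndarray], density: float = 0.2, lam: float = 1.0) -> np.ndarray:
    taus = [trim_top_k(e - base, density) for e in experts]
    return base + lam * elect_and_merge(taus)


def dare_ties_merge(base: np.ndarray, experts: list[np.ndarray], drop_p: float = 0.9, lam: float = 1.0, seed: int = 0) -> np.ndarray:
    rng = np.random.default_rng(seed)
    taus = [dare(e - base, drop_p, rng) for e in experts]
    return base + lam * elect_and_merge(taus)
```

Consider two task vectors `[1, -2]` and `[3, 1]` on a zero base with no trimming. TIES yields `[2, -2]`, because the second coordinate keeps only the expert that agrees with the elected negative sign. Plain averaging would give `[2, -0.5]`, and that kind of gap is where the extra machinery can pay off at small scale.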

When Averaging is close enough and when it is not

At large scale (roughly 30B+ in this study's range), simple parameter averaging produces results close enough to the more sophisticated methods that the additional complexity of TIES-Merging or Dare-TIES is difficult to justify on benchmark performance alone. The fifth finding makes this explicit.

Pro Tip: If you are running merges with models at the 30B–64B scale, start with Averaging via MergeKit before investing time in TIES or Dare-TIES hyperparameter tuning. The benchmark evidence supports treating Averaging as the baseline that more complex methods must beat; at large scale, they often do not beat it by a meaningful margin. Reserve TIES-Merging and Dare-TIES for smaller-scale merges, where sign conflicts and parameter redundancy create larger gaps relative to Averaging.

Averaging is more likely to fall short at small scale, where method-specific mechanisms (sign conflict resolution in TIES, sparse rescaling in Dare) can separate results meaningfully. The crossover is probably not a fixed parameter count, since it may shift with base model quality and expert count. Still, the benchmark's evidence points to large scale as the regime where the simpler method is defensible.

What the study does not prove

The benchmark provides strong directional evidence across its evaluation setup, but the OpenReview abstract is explicit about scope: "Overall, our findings shed light on some interesting properties of model merging while also highlighting some limitations. We hope that this study will serve as a reference point for large-scale merging for upcoming research." "Reference point" and "some limitations" are meaningful qualifications — this is not a universal theory of merging.

Merging does improve performance within this benchmark's scope. But the benchmark does not prove that merging will outperform alternative approaches in every model family, every task distribution, or every compute budget. The held-out generalization gains depend on the specific evaluation suite used. Gains on the paper's held-out tasks do not guarantee equivalent gains on general benchmarks such as MMLU or GPQA, or on the domain-specific downstream tasks you care about.

Watch Out: The study's benchmark gains should not be extrapolated to architectures, training recipes, or task domains outside its evaluation scope. The paper explicitly positions itself as a reference point, not a final word. Practitioners who treat its findings as universal deployment guarantees risk poor outcomes when their specific model families, fine-tuning data distributions, or target tasks differ from the study's setup. Always validate merge quality on your own held-out evaluation suite before shipping.

Compute, reproducibility, and scope limits to keep in mind

The benchmark's scope is bounded by its design: four merge methods, 1B–64B model sizes, and up to eight experts. Full replication requires the paper's appendix for complete dataset names, task sets, and hyperparameter configurations — the abstract does not expose those details.

Production Note: The abstract and preprint do not disclose the full hardware configuration or training budget used to produce the expert fine-tunes evaluated in the benchmark. Practitioners attempting to reproduce results should consult the full paper PDF for task and dataset specifics. The abstract does not state the infrastructure used, and clusters for this parameter range vary. Merging itself requires no gradient computation, so at the 64B scale its main cost is memory and I/O for loading the checkpoints. The heavy compute lies in fine-tuning the expert checkpoints and running evaluations.

What practitioners should do with these benchmark results

The benchmark yields a clear priority ordering for practitioners planning open-weights merges. Base model quality, model scale, and expert count are the primary levers, in that order. Merge method is a secondary concern: it matters most at small scale and much less as you move toward the 30B–64B range.

| Situation | Base quality | Scale | Expert count | Recommended strategy |
|---|---|---|---|---|
| Strong base, large scale | High | 30B–64B | 4–8 | Start with Averaging; add experts aggressively |
| Strong base, small scale | High | 1B–7B | 2–4 | Use TIES-Merging or Dare-TIES; method choice matters more |
| Moderate base, large scale | Moderate | 30B–64B | 2–4 | Use Task Arithmetic or TIES; validate held-out carefully |
| Weak base, any scale | Low | Any | Any | Fix the base before merging; do not expect merging to recover |
| Targeting held-out generalization | High | 30B–64B | 6–8 | Prefer merging over multitask training; per the benchmark, eight large experts often generalize better |

The selection decision is to evaluate the base first, then choose scale-appropriate methods only after that signal is clear. Expert count and scale matter more than fine distinctions among merging algorithms once you are in the larger regime.

A simple selection rule for merge projects

The benchmark's five findings collapse into a practical if-then heuristic that covers the majority of open-weights merge decisions:

Bottom Line:

- If your base model has strong zero-shot performance (evaluate before fine-tuning, not after) and you are working at ≥30B parameters → start with Averaging, collect as many high-quality expert fine-tunes as feasible (up to 8 shows consistent gains in this benchmark), and expect meaningful held-out generalization improvement.
- If your base is weak or you are at ≤7B parameters → fix the base or use a stronger one before merging; invest in TIES-Merging or Dare-TIES rather than simple averaging; keep expert count low until you validate each addition.
- In all cases: measure zero-shot held-out performance, not just held-in task recovery, as your primary merge quality signal.

This heuristic is derived from one benchmark study and should be treated as an informed starting point, validated against your specific task domain before deployment.
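The heuristic can be encoded as a small planning function for merge projects. This is a sketch of this article's rule of thumb, not a procedure from the paper. The 7B and 30B cut points come from the heuristic above, and the range between them is left undecided because the heuristic does not cover it. `MergePlan` and `plan_merge` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class MergePlan:
    method: str  # suggested starting method, or "none" / "undecided"
    note: str    # guidance on expert count and validation


def plan_merge(base_is_strong: bool, params_billions: float) -> MergePlan:
    """Encode the article's merge heuristic (illustrative thresholds, not from the paper)."""
    if not base_is_strong:
        return MergePlan("none", "fix or replace the base before merging")
    if params_billions >= 30:
        return MergePlan("averaging", "collect up to 8 high-quality experts; gate on held-out zero-shot scores")
    if params_billions <= 7:
        return MergePlan("ties or dare_ties", "keep expert count low; validate each addition")
    # Between 7B and 30B the heuristic gives no default.
    return MergePlan("undecided", "outside both regimes: compare methods on held-out tasks")
```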

Questions readers ask about model merging at scale

What is model merging in machine learning?

Model merging combines multiple independently fine-tuned expert models into a single model by operating directly in weight space — no additional training required. As the OpenReview abstract defines it: "Model merging aims to combine multiple expert models into a more capable single model, offering benefits such as reduced storage and serving costs, improved generalization, and support for decentralized model development." Common methods include Averaging, Task Arithmetic, TIES-Merging, and Dare-TIES, all executable via tools like MergeKit.

Does model merging actually improve performance?

Within the benchmark's evaluation setup, yes — merging consistently improves zero-shot generalization on held-out tasks. At large scale with strong bases and eight experts, merged models can exceed multitask-trained models on held-out tasks. The gains are not universal across all architectures, task domains, or base quality levels, and the paper explicitly notes limitations alongside its positive findings.

How many models can you merge together?

The benchmark tested up to eight expert models. The key condition is that higher expert counts require larger models to work reliably — the study's fourth finding is that merging more experts becomes more feasible as model scale increases. Whether more than eight experts continue to improve generalization is not established by this study.

Which model merging method is best?

At large scale (roughly 30B–64B parameters), no method clearly dominates; they converge to similar behavior. At small scale, TIES-Merging and Dare-TIES can offer advantages over Averaging by handling sign conflicts and parameter redundancy. Method selection matters most when base quality is moderate and model size is small.

What matters most for model merging at scale?

Base model quality is the primary determinant of merge success. Model scale comes second, enabling both more stable merges and higher expert counts. Expert count is the third lever. Merge method choice is fourth — relevant at small scale, nearly irrelevant at large scale.

Sources and references

- What Matters for Model Merging at Scale? — OpenReview (ICLR 2025) — Primary benchmark study; source for all five headline findings and verified quotes in this article

- arXiv preprint 2410.03617 — Preprint version of the same paper; source for experimental design details including scale range and method list

- OpenReview PDF (full paper) — Full paper including evaluation protocol for held-in and zero-shot held-out tasks

- MergeKit — Arcee AI — Open-source library implementing Averaging, Task Arithmetic, TIES-Merging, and Dare-TIES for HuggingFace-compatible models
