Bottom line up front: when MoE serving is worth the operational overhead

Bottom Line: MoE serving earns its keep when three conditions hold simultaneously: the model is large enough that dense serving saturates memory bandwidth per GPU, sustained request volume keeps all expert ranks busy, and the team has the infrastructure maturity to operate expert-parallel routing without accumulating invisible cost in imbalance, communication overhead, and debugging time. If any of these conditions fails — low or bursty traffic, a small or mid-size model, limited GPU topology control, or a thin platform team — a dense baseline is cheaper and less risky on every TCO dimension. The MoE serving TCO advantage is real at scale; it is not automatic.

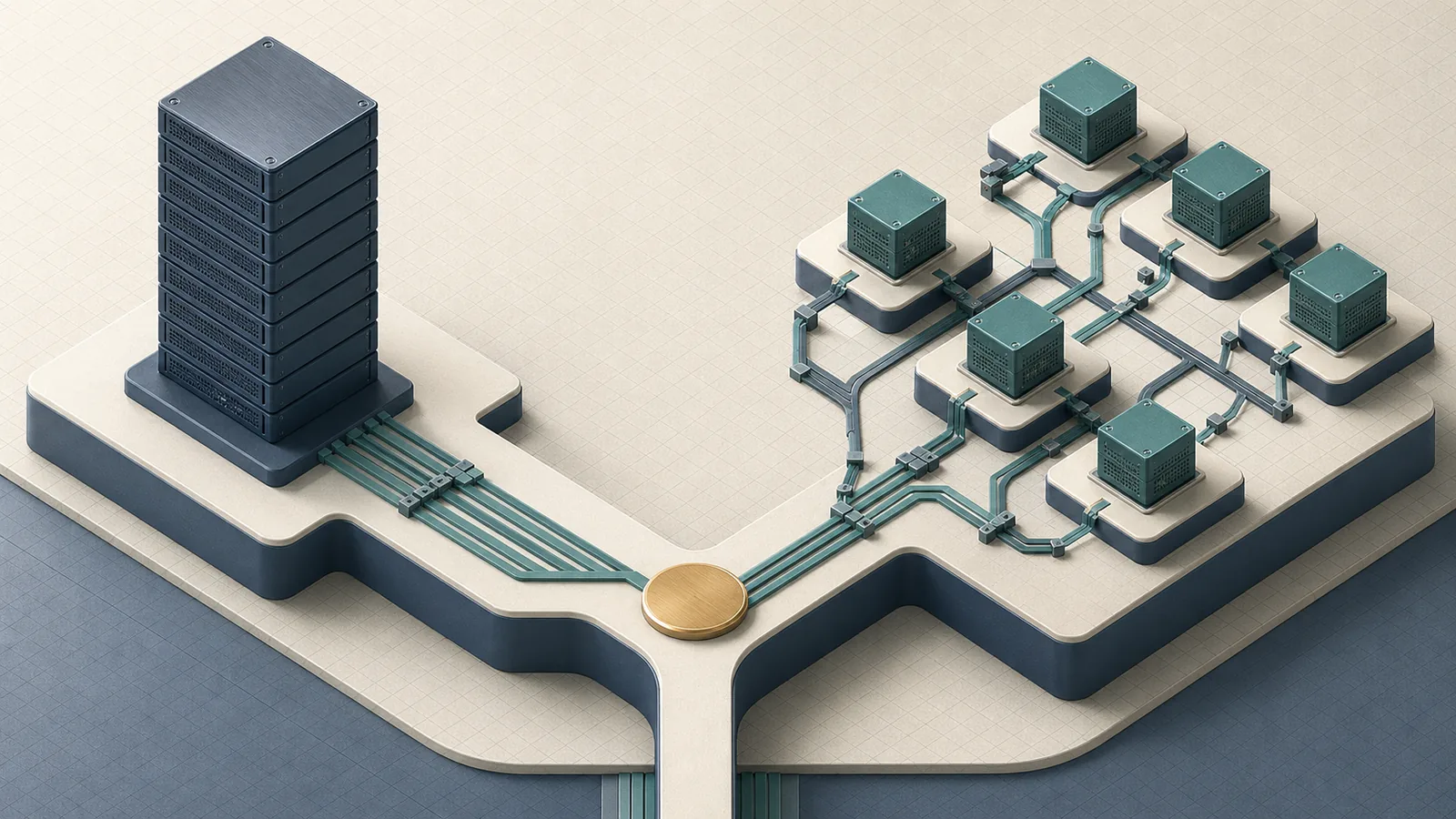

The core arithmetic is concrete. DeepSeek-V3 carries 671B total parameters but activates only 37B per token at inference time. That sparsity gap is the entire economic argument for MoE serving. But the gap only converts to actual dollar savings when routing cost, inter-GPU communication, and expert load imbalance do not consume the saved FLOPs. The dense-vs-MoE decision is therefore not a model quality question; it is a systems cost question.

vLLM's Expert Parallelism (EP) formalizes the production path: "Expert Parallelism (EP) ... allows experts in Mixture-of-Experts (MoE) models to be deployed on separate GPUs, increasing locality, efficiency, and throughput overall." That benefit is conditional on the deployment meeting the locality and utilization prerequisites EP assumes.

Traffic shape, model scale, and the infrastructure forces that change the answer

MoE outperforms dense serving on TCO only in a specific parameter space. The table below maps the key input variables to a concrete recommendation.

| Condition | Dense favored | MoE favored |
|---|---|---|
| Model scale | < 30B parameters | ≥ 70B, especially sparse configs like 671B/37B active |
| Request volume | Low to moderate or bursty | High and sustained; keeps EP ranks loaded |
| Traffic regularity | Unpredictable prompt lengths/domains | Homogeneous or predictable prompt distribution |
| GPU memory bandwidth pressure | Manageable on single-node | Memory-bound at scale; sparsity offloads pressure |
| Interconnect quality | Commodity Ethernet, PCIe-only | NVLink or InfiniBand between GPU nodes |
| Ops maturity | Small platform team, limited observability | Dedicated infra team, per-expert monitoring in place |

The GPU memory bandwidth constraint is the hidden forcing function. At large model scale, serving cost is dominated by memory movement (loading weights and KV-cache entries), not raw arithmetic. A dense 70B model must move all 70B parameters' worth of weights through bandwidth-constrained memory paths on every forward pass. An MoE model at 671B total / 37B active does not eliminate that pressure, but it concentrates it on the active experts and spreads the rest across GPUs. Whether that concentration helps or hurts depends entirely on how evenly traffic routes to experts and how fast GPUs can communicate results.
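To make the bandwidth argument concrete, a rough lower bound on decode step time is the bytes of weights read divided by memory bandwidth. The sketch below is a back-of-envelope illustration, not a measurement. The function name and both input values (2 bytes per parameter, 4e12 bytes/s of aggregate bandwidth) are assumptions to replace with your own figures, and the model ignores KV-cache reads, compute, and communication.

```python
def min_step_seconds(params_read: float, bytes_per_param: float,
                     mem_bandwidth_bytes_per_s: float) -> float:
    """Lower bound on one decode step if weight reads were the only cost."""
    if mem_bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return params_read * bytes_per_param / mem_bandwidth_bytes_per_s


# Illustrative inputs only (assumptions, not vendor-verified figures):
# 16-bit weights (2 bytes/param) and 4e12 B/s of aggregate memory bandwidth.
BYTES_PER_PARAM = 2.0
BANDWIDTH = 4e12

dense_70b = min_step_seconds(70e9, BYTES_PER_PARAM, BANDWIDTH)
moe_37b_active = min_step_seconds(37e9, BYTES_PER_PARAM, BANDWIDTH)

print(f"dense 70B lower bound:  {dense_70b * 1e3:.1f} ms")
print(f"MoE 37B-active bound:   {moe_37b_active * 1e3:.1f} ms")
```

The 37B figure applies per token. A batch whose tokens select different experts reads more expert weights in total, so the MoE number here is the optimistic end of the range.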

vLLM's large-scale serving deployment on CoreWeave with InfiniBand and ConnectX-7 NICs achieved 2.2k tokens per second per H200 GPU in a production-like multi-node setup, a figure that reflects a well-provisioned fabric. That number does not transfer to a cluster with slower interconnect or unbalanced expert placement.

Why token-level sparsity changes throughput economics

Pro Tip: Sparse activation creates real throughput headroom — not theoretical savings — only when routing overhead stays low enough to avoid dominating token processing time, and no single expert absorbs a disproportionate share of tokens across a sustained request window. Test both conditions under production traffic before committing the MoE serving TCO case to stakeholders.

DeepSeek-V3's 5.5% active-parameter fraction (37B of 671B) is the clearest published illustration of what token-level sparsity means at inference time: each token touches roughly one-eighteenth of the parameter set. The MoE inference literature formalizes why this matters: "Routed experts only process tokens selected by the gating mechanism." Fewer parameters touched per token means fewer memory reads and fewer multiply-accumulate operations per forward pass — which, on GPU memory bandwidth-constrained workloads, translates directly to throughput.
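The 5.5% and one-eighteenth figures follow directly from the two published parameter counts. A minimal check, where the helper name is illustrative:

```python
def active_fraction(active_params: int, total_params: int) -> float:
    """Share of the parameter set that a single token activates."""
    if total_params <= 0:
        raise ValueError("total_params must be positive")
    return active_params / total_params


# Parameter counts as quoted in the text: 37B active of 671B total.
frac = active_fraction(37_000_000_000, 671_000_000_000)
one_in_n = 1 / frac

print(f"active fraction: {frac:.1%}, about 1 in {one_in_n:.0f}")
```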

The caveat is that sparsity reduces per-token reads, not resident footprint. Every expert must still live in GPU memory, which expert parallelism handles by sharding experts across a multi-GPU cluster, and the bandwidth savings shrink if routing introduces synchronization barriers that stall GPU pipelines. When expert dispatch requires cross-node all-to-all communication over InfiniBand, the communication latency subtracts from the FLOP savings. The MoE serving TCO case rests on ensuring the net of these effects remains positive.
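One way to keep that net effect honest is to write it down as a subtraction and feed it measured numbers. The sketch below is a simplified additive model under stated assumptions: the function and parameter names are hypothetical, the example timings are invented for illustration, and real systems overlap communication with compute, which this model does not capture.

```python
def net_step_gain_seconds(dense_step_s: float, moe_compute_s: float,
                          routing_s: float, all_to_all_s: float) -> float:
    """Positive result: the MoE step is faster than the dense step.

    Assumes routing and all-to-all time add serially to expert compute,
    which is pessimistic when the serving stack overlaps them.
    """
    return dense_step_s - (moe_compute_s + routing_s + all_to_all_s)


# Invented example timings (seconds), not measurements.
fast_fabric = net_step_gain_seconds(0.035, 0.0185, 0.001, 0.004)
slow_fabric = net_step_gain_seconds(0.035, 0.0185, 0.001, 0.020)

print(f"fast fabric net gain: {fast_fabric * 1e3:+.1f} ms")
print(f"slow fabric net gain: {slow_fabric * 1e3:+.1f} ms")
```

With the same compute savings, the slower all-to-all figure flips the sign, which is the failure case the paragraph above describes.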

Where dense serving stays the safer default

Watch Out: MoE introduces three structural cost sources that dense serving avoids entirely: variable token counts per expert, unpredictable routing skew across request types, and cross-GPU communication overhead proportional to expert placement. Any one of these, left unmanaged, can erase throughput gains and inflate operational engineering time. Teams that cannot instrument per-expert utilization in production are flying blind on all three.

The dense-vs-MoE choice tilts back toward dense whenever traffic is low-volume, irregular, or domain-diverse enough that routing skew is probable. As the MoE inference literature states directly: "the number of tokens per expert is variable and can be unpredictable, complicating load balancing and parallelization." Dense models execute all parameters uniformly on every token, which means latency and throughput are predictable and attributable. MoE removes that predictability unless the serving stack actively compensates.

The operational burden is also asymmetric. A dense serving deployment requires tuning batch size, tensor parallelism degree, and KV-cache allocation. An MoE expert-parallel deployment requires all of that plus: expert placement across GPUs, EPLB configuration, all-to-all communication tuning, and per-expert utilization monitoring. Teams with thin platform coverage find that the additional failure modes accumulate faster than the throughput gains.

The MoE serving landscape: frameworks, deployment patterns, and who they fit

Public sources describe individual framework capabilities but do not synthesize them into a decision framework. The table below does that for three production-relevant options, with Megascale-Infer listed as a related system.

| Framework | EP support | Operational intensity | Topology sensitivity | Best fit |
|---|---|---|---|---|
| vLLM | Native EP + DP composition; EPLB included | Moderate-high; EP/DP config required | Requires fast interconnect for multi-node EP | Teams already on vLLM; multi-model production fleets |
| TensorRT-LLM | Multi-GPU/multi-node; NVLink-optimized all-to-all | High; topology-aware kernel selection required | Strongest on NVLink-connected clusters (DGX B200, GB200 NVL72) | NVIDIA-certified HW stacks; latency-critical single-model deployments |
| llm-d | Disaggregated prefill/decode; EP-aware scheduling | High; requires distributed orchestration layer | Network topology must support disaggregated serving paths | Large-scale cloud-native deployments; organizations with k8s expertise |
| Megascale-Infer | Not treated as a primary production path here | Depends on deployment design | Depends on topology and routing strategy | A related system to evaluate alongside the frameworks above |

Dense-vs-MoE framework selection compounds with the underlying choice: a team that cannot manage vLLM's EP/DP tuning is not ready to operate TensorRT-LLM's topology-aware kernel path. The MoE serving TCO calculus must include which framework the team can actually run at production fidelity.

What vLLM adds for MoE serving

vLLM's Expert Parallelism is a serving-layer mechanism, not a training concept ported to inference. The framework distributes experts across GPUs and pairs EP with data parallelism to maximize locality: individual expert weights live on dedicated GPU(s), and requests route to those GPUs rather than broadcasting weights across all ranks.

Pro Tip: vLLM's Expert Parallel Load Balancer (EPLB) dynamically adjusts expert assignment across GPUs based on observed utilization. Enable it from the start of any production MoE deployment — not as a tuning step after you observe imbalance. Retroactively adding dynamic balancing to a hot-expert situation typically requires a restart cycle that surfaces in SLA metrics before the team diagnoses the cause.

A documented vLLM configuration for DeepSeek-V3-0324 uses 1-way tensor parallelism, 8-way attention data parallelism, and 8-way expert parallelism — a composition that reflects how the framework's EP and DP axes must be jointly sized against the model's expert structure and the available GPU count. The dense-vs-MoE complexity difference is visible in that configuration alone: a comparable dense model needs only TP and DP degrees.
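The joint sizing can be sanity-checked with a few lines of arithmetic before any cluster time is spent. The sketch below is not vLLM code and uses no vLLM API; the class and method names are hypothetical. The 256 routed-expert count is taken from the DeepSeek-V3 technical report rather than from this article, and the assumption that experts must divide evenly across EP ranks is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParallelLayout:
    tensor_parallel: int
    data_parallel: int
    expert_parallel: int
    routed_experts: int

    @property
    def gpus(self) -> int:
        # One rank per (TP, DP) coordinate.
        return self.tensor_parallel * self.data_parallel

    def experts_per_rank(self) -> int:
        # Simplifying assumption: experts divide evenly across EP ranks.
        if self.routed_experts % self.expert_parallel != 0:
            raise ValueError("routed experts do not divide evenly across EP ranks")
        return self.routed_experts // self.expert_parallel


# TP=1, DP=8, EP=8 as quoted in the text; 256 routed experts is an
# outside figure from the DeepSeek-V3 technical report.
layout = ParallelLayout(tensor_parallel=1, data_parallel=8,
                        expert_parallel=8, routed_experts=256)

print(layout.gpus, "GPUs,", layout.experts_per_rank(), "routed experts per EP rank")
```

A dense deployment would need only the first two fields, which is the complexity gap the paragraph above points to.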

Where TensorRT-LLM and topology-aware stacks matter

TensorRT-LLM's MoE optimization strategy centers on NVLink-connected hardware. NVIDIA's engineering blog for the framework introduces "NVLinkOneSided AlltoAll, the MoE communication kernels in TensorRT-LLM designed for NVLink-connected systems" targeting DGX B200 and GB200 NVL72 configurations. This is a deliberate design choice: all-to-all communication across experts is the binding constraint in multi-node MoE inference, and NVLink-connected systems are the environment those kernels are built for.

Watch Out: GPU memory bandwidth savings from MoE sparsity can be fully offset by all-to-all communication latency if the interconnect is slower than NVLink or if expert routing frequently crosses node boundaries. On clusters connected only by InfiniBand, MoE communication overhead can dominate end-to-end token latency in ways that do not appear on synthetic benchmarks using short or uniform prompts.

Teams evaluating TensorRT-LLM for MoE should treat topology as a first-class requirement, not a configuration detail. The MoE serving TCO case does not hold if the cluster cannot sustain the all-to-all bandwidth the model's expert structure demands.

Why production teams still keep dense baselines

Bottom Line: Dense serving is the correct default for any production team that cannot satisfy all three criteria: sustained high utilization across expert ranks, a GPU fabric with NVLink or high-bandwidth InfiniBand, and dedicated platform engineering capacity for expert-parallel observability and tuning. The dense baseline is not a fallback for unsophisticated teams; it is the operationally correct choice when the prerequisites for MoE serving economics are not met.

Dense serving avoids expert routing and cross-GPU dispatch entirely. That simplicity has real value: latency and throughput are predictable from batch size and model size alone, failure modes are well-understood, and attribution of cost to traffic is straightforward. The MoE literature and vLLM documentation both show that EP/DP composition, EPLB, and communication-aware placement are required for MoE to deliver its promise — meaning that the dense baseline is also the right control group before committing hardware budget to expert-parallel infrastructure. Teams running dense models should benchmark them rigorously before assuming MoE is worth the operational step-change.

Cost and ROI model for MoE serving decisions

No single public source provides a MoE-vs-dense dollar-per-million-tokens benchmark that generalizes across hardware and traffic. The table below synthesizes the cost dimensions from verified sources into a framework teams can populate with their own rates.

| Cost dimension | Dense serving | MoE serving | Scenario / tier |
|---|---|---|---|
| GPU spend | Higher at the same capacity target because all parameters are active | Lower at scale when sparse activation keeps active parameters limited | Small model / bursty traffic / low utilization: Dense wins; large sparse model / sustained load: MoE can win |
| Utilization efficiency | Predictable and roughly linear with batch size | Non-linear; depends on routing balance and expert saturation | Good balance: MoE improves efficiency; skewed balance: Dense is safer |
| Routing overhead | None | Recurring per-token communication and dispatch cost | Commodity interconnect: Dense wins; NVLink or strong InfiniBand: MoE is more viable |
| Staffing / ops cost | Lower; fewer failure modes | Higher; per-expert telemetry, tuning, and incident response required | Thin platform team: Dense wins; dedicated infra team: MoE can be supported |
| Interconnect / topology cost | Standard Ethernet or PCIe is often enough | NVLink or high-bandwidth InfiniBand is typically required | Mixed or fragmented topology pushes the decision back to Dense |

The GPU spend lever is real: DeepSeek-V3's 37B active parameters mean each token traverses roughly one-eighteenth of the total parameter set, which directly reduces memory bandwidth consumption per token relative to a hypothetical dense 671B model. vLLM's reported 2.2k tokens/s per H200 GPU reflects a well-tuned expert-parallel deployment on premium interconnect; treat it as the upper bound of what the MoE TCO case can claim, not the expected baseline.
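Teams populating the table with their own rates can convert a per-GPU throughput figure into a dollar-per-million-tokens number with one formula. The sketch below is illustrative: the function name is hypothetical, the $4.00 hourly rate is a placeholder rather than a quoted price, and the `utilization` factor is a simplifying assumption that scales delivered throughput linearly.

```python
def cost_per_million_tokens(gpu_hourly_usd: float,
                            tokens_per_s_per_gpu: float,
                            utilization: float = 1.0) -> float:
    """GPU cost per one million tokens at a given sustained utilization."""
    if tokens_per_s_per_gpu <= 0:
        raise ValueError("throughput must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_s_per_gpu * 3600 * utilization
    return gpu_hourly_usd / tokens_per_hour * 1_000_000


# 2,200 tokens/s per GPU is the figure quoted in the text.
# The hourly rate is a placeholder assumption, not a real price.
HOURLY_RATE_USD = 4.00
best_case = cost_per_million_tokens(HOURLY_RATE_USD, 2200)
half_loaded = cost_per_million_tokens(HOURLY_RATE_USD, 2200, utilization=0.5)

print(f"fully loaded: ${best_case:.2f} per 1M tokens")
print(f"50% utilized: ${half_loaded:.2f} per 1M tokens")
```

Halving utilization doubles the unit cost, which is why the sustained-traffic condition carries so much weight in the comparison.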

The hidden cost buckets that dense teams underestimate

Pro Tip: Before signing off on an MoE serving business case, add three line items that rarely appear in the initial proposal: (1) communication overhead — all-to-all traffic across EP ranks is a recurring per-token cost that grows with cluster size; (2) debugging and incident response time — expert imbalance failures look like throughput degradation with no obvious cause unless per-expert telemetry is in place; (3) capacity buffer for hot experts — GPU memory bandwidth pressure does not distribute evenly, so overprovisioning to handle routing skew is a real budget line, not a contingency.

Variable tokens per expert is not an edge case — it is the normal operating condition of MoE inference. The MoE inference literature is explicit: "the number of tokens per expert is variable and can be unpredictable, complicating load balancing and parallelization." This variability means the serving stack must continuously rebalance expert load, and when it fails to do so, latency spikes surface in production before the root cause is diagnosed. vLLM's EPLB exists precisely because this is a first-order production concern, not an academic edge case.

The staffing cost is also asymmetric in a direction dense teams routinely underestimate. Debugging a dense serving degradation typically involves batch size, KV-cache pressure, or GPU memory fragmentation — problems with known tooling and escalation paths. Debugging MoE imbalance requires per-expert utilization telemetry, routing distribution analysis, and often topology re-evaluation. Teams without that observability stack should budget the time to build it before counting MoE TCO savings.

A practical break-even rubric for production teams

The break-even question reduces to four inputs: traffic volume, model scale, interconnect quality, and ops maturity. The decision matrix below provides a direct yes/no framework.

| Input | MoE: Go | MoE: Wait / Stay Dense |
|---|---|---|
| Sustained request rate | High enough to keep all EP ranks well utilized over long windows | Low, bursty, or unpredictable |
| Model scale | ≥ 70B parameters; sparse config available (e.g., 671B/37B active) | < 30B; dense model fits in single-node TP without bandwidth saturation |
| Interconnect | NVLink intra-node + InfiniBand inter-node, or NVLink-only (NVL72) | PCIe-only, commodity Ethernet, or mixed/fragmented topology |
| Ops maturity | Per-expert utilization monitoring, EPLB enabled, dedicated infra team | No per-expert telemetry; small or shared platform team |
| All four conditions met? | Proceed with MoE EP deployment | Deploy dense baseline; re-evaluate at scale |

The rubric defaults to dense when any single row is in the "Stay Dense" column and the team cannot remediate it within the deployment timeline. This conservative default reflects the verified constraint: MoE benefits require the complete stack of model sparsity plus serving infrastructure. Architecture alone does not close the TCO gap.
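The rubric is simple enough to encode as a checklist that returns both the recommendation and the rows that blocked it. This is a minimal sketch with hypothetical field names; deciding whether each input is true still requires the measurements described in the matrix.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Readiness:
    sustained_traffic: bool    # EP ranks stay well utilized over long windows
    large_sparse_model: bool   # large model with a sparse configuration
    fast_interconnect: bool    # NVLink and/or InfiniBand between GPUs
    ops_maturity: bool         # per-expert telemetry and dedicated infra team


def recommend(r: Readiness) -> tuple[str, list[str]]:
    """Return ("moe" or "dense", names of the unmet conditions)."""
    gaps = [name for name, ok in asdict(r).items() if not ok]
    return ("moe" if not gaps else "dense"), gaps


decision, gaps = recommend(Readiness(
    sustained_traffic=True,
    large_sparse_model=True,
    fast_interconnect=False,
    ops_maturity=True,
))
print(decision, gaps)
```

Returning the gaps alongside the decision gives the team a remediation list to weigh against the deployment timeline.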

Risks, counterarguments, and failure modes that can flip the decision

Watch Out: The four failure modes most likely to invert a favorable MoE serving decision mid-deployment are: (1) routing skew — gating networks concentrate tokens on a small expert subset under real prompt distributions, saturating those experts while others sit idle; (2) noisy neighbor effects — on shared GPU clusters, expert dispatch latency varies with co-tenant traffic, making SLA commitments unreliable; (3) hardware overbuying — teams provision NVLink-connected H100/H200 clusters for theoretical MoE gains before proving that expert imbalance and communication costs remain controlled at production traffic; (4) benchmark-to-production gap — synthetic benchmark suites often use uniform prompt distributions that hide hot-expert behavior visible only under real request mixes.

The root cause of routing skew is architectural: gating mechanisms route tokens based on learned affinity, not uniform distribution. As the MoE inference literature states, "Routed experts only process tokens selected by the gating mechanism," and the selection is not guaranteed to be balanced across the expert set under arbitrary input. Training-time balancing helps: DeepSeek-V3 reports an auxiliary-loss-free strategy based on per-expert bias adjustments, supplemented by a small sequence-wise balance loss, while many other MoE models rely on auxiliary load-balancing losses. In either case, training-time balance does not guarantee inference-time balance across all production prompt types.
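A toy router shows how affinity-based selection produces uneven load. The sketch below is a generic top-k selection over made-up scores, not the gating function of DeepSeek-V3 or any specific model; the function names and score values are invented for illustration.

```python
from collections import Counter


def top_k_experts(scores: list[float], k: int) -> list[int]:
    """Indices of the k highest-scoring experts for one token."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:k]


def expert_load(token_scores: list[list[float]], k: int) -> list[int]:
    """Number of tokens assigned to each expert across a batch."""
    num_experts = len(token_scores[0])
    counts = Counter(e for scores in token_scores
                     for e in top_k_experts(scores, k))
    return [counts[e] for e in range(num_experts)]


# Made-up affinity scores: 3 tokens, 4 experts, top-2 routing.
batch = [
    [0.9, 0.1, 0.3, 0.2],
    [0.8, 0.4, 0.1, 0.2],
    [0.7, 0.2, 0.6, 0.1],
]
load = expert_load(batch, k=2)
print(load)
```

Every token in this batch prefers expert 0, so it receives three assignments while expert 3 receives none, even though nothing in the router is malfunctioning.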

TensorRT-LLM's NVLink-specific all-to-all optimization is a direct response to the communication overhead risk: by exploiting NVLink, the kernel reduces the latency penalty of cross-expert dispatch. Teams on non-NVLink topologies cannot use this optimization and face higher communication overhead as a structural cost.

Hardware overbuying is the financial risk version of the failure mode. GPU memory bandwidth advantages from sparsity are only realized at high sustained utilization; teams that provision premium multi-node clusters at projected-peak traffic before validating expert balance at actual traffic may find that idle GPUs are paying for unproven throughput gains.

When expert imbalance becomes the bottleneck

Expert imbalance degrades throughput in a non-obvious way: the slowest expert in an EP group determines the effective batch completion time for the entire group, regardless of how efficiently other experts process their tokens. A single hot expert that receives 40% of tokens while each of the remaining experts receives 5–10% will stall the entire forward pass at that layer.
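The straggler effect can be quantified as the ratio of mean load to peak load. The sketch below assumes one expert per rank and processing time linear in token count, both simplifications; the function name and the nine-expert example split are illustrative, chosen to match the 40% hot-expert case described above.

```python
def ep_efficiency(tokens_per_expert: list[float]) -> float:
    """Mean load divided by peak load; 1.0 means perfectly balanced.

    Assumes step time is set by the busiest expert and scales
    linearly with the number of tokens it receives.
    """
    if not tokens_per_expert:
        raise ValueError("need at least one expert")
    peak = max(tokens_per_expert)
    if peak <= 0:
        raise ValueError("no tokens were routed")
    mean = sum(tokens_per_expert) / len(tokens_per_expert)
    return mean / peak


# One hot expert at 40% of tokens, eight others at 7.5% each.
skewed = ep_efficiency([40.0] + [7.5] * 8)
balanced = ep_efficiency([100 / 9] * 9)

print(f"skewed efficiency:   {skewed:.2f}")
print(f"slowdown vs balance: {1 / skewed:.1f}x")
```

Under these assumptions the layer runs at roughly 28% of its balanced throughput, because eight of nine experts spend most of the step waiting.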

Pro Tip: Benchmark traffic must mirror the domain and prompt-length distribution of production traffic — not a synthetic uniform set — before trusting any MoE throughput measurement for ROI purposes. A balanced benchmark set that distributes tokens evenly across experts will show near-theoretical throughput gains and hide a hot-expert problem that only surfaces under real user queries. Run at least two weeks of shadow traffic through per-expert utilization monitoring before committing MoE serving TCO estimates to finance.

vLLM's EPLB exists as a mitigation, not a cure. Dynamic load balancing adjusts expert assignment across GPUs at serving time, but it cannot redistribute tokens mid-batch to an already-saturated expert. The correct intervention is upstream: audit token distribution across experts during load testing, identify hot experts, and either adjust routing capacity or revisit whether MoE is the right architecture for the specific request mix.
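The audit step can start from a plain log of which expert each token was routed to during load testing. The sketch below flags experts whose share exceeds a multiple of the mean; the function name, the log format, and the 2x threshold are all assumptions to adapt to whatever telemetry the serving stack actually exposes.

```python
from collections import Counter


def hot_experts(expert_ids: list[int], num_experts: int,
                threshold: float = 2.0) -> list[int]:
    """Experts receiving more than threshold x the mean token count."""
    counts = Counter(expert_ids)
    mean = len(expert_ids) / num_experts
    return sorted(e for e in range(num_experts)
                  if counts[e] > threshold * mean)


# Synthetic routing log: expert 0 takes 70 of 100 token assignments.
routing_log = [0] * 70 + [1] * 10 + [2] * 10 + [3] * 10
flagged = hot_experts(routing_log, num_experts=4)
print(flagged)
```

Running the same check per layer and per traffic segment shows whether the skew is a property of the model or of a specific request mix.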

ROI analysis should separate model quality gains from infrastructure efficiency gains explicitly. Teams that attribute throughput improvements entirely to MoE sparsity, without isolating the effect of expert imbalance mitigation, risk overstating the architecture's contribution and underestimating the infrastructure investment required to sustain it.

When the safer answer is to stay dense

Bottom Line: Stay dense when any of the following apply: the platform team cannot instrument per-expert GPU utilization in production; the cluster topology does not include NVLink or equivalent high-bandwidth interconnect between GPU nodes; traffic is bursty or domain-diverse enough to cause routing skew; or the organization needs predictable operations more than marginal throughput gains at the current scale. Dense serving is not an admission of technical limitation — it is the correct engineering choice when MoE's prerequisites are not met and the MoE serving TCO case cannot be closed without assuming away the hard parts.

The vLLM and MoE inference sources together make the dependency chain explicit: MoE benefits require expert parallelism, which requires DP/EP composition, which requires EPLB for dynamic balance, which requires per-expert telemetry to verify that balance is being maintained. Removing any link in that chain degrades the serving economics back toward or below dense baselines. Teams without the staffing or observability to close that chain should treat dense serving as the current-state correct answer and revisit MoE when prerequisites are in place.

FAQ

When is mixture of experts better than dense models?

At scale (≥ 70B parameters), with sustained high-utilization traffic and high-bandwidth GPU interconnect. MoE's per-token active-parameter reduction, such as DeepSeek-V3's 37B active parameters out of 671B total, only converts to cost savings under those conditions.

Why is MoE faster than dense?

Each token activates a small fraction of total parameters — roughly 5.5% in DeepSeek-V3's configuration — reducing per-token memory bandwidth consumption and FLOPs. The model's total capacity remains large; the per-token compute cost drops.

What are the disadvantages of mixture of experts?

Variable token counts per expert create routing skew and load imbalance. Expert-parallel serving requires high-bandwidth interconnect, EPLB configuration, and per-expert observability. Communication overhead from all-to-all dispatch can offset or erase sparsity gains on slower topologies.

How does expert parallelism work in vLLM?

vLLM's EP distributes expert weights across dedicated GPUs. Tokens route to the GPU holding their assigned expert, improving data locality. EP is combined with data parallelism (DP) to balance request load. EPLB dynamically adjusts expert-to-GPU assignment based on observed utilization.

Does MoE always need NVLink?

Not strictly, but NVLink provides the bandwidth headroom that makes all-to-all expert dispatch economically viable. TensorRT-LLM's MoE kernels are specifically optimized for NVLink-connected systems (DGX B200, GB200 NVL72). InfiniBand is the minimum viable interconnect for multi-node EP; PCIe-only clusters will see communication overhead consume most of the sparsity benefit.

Sources and references

- Official documentation: vLLM Expert Parallel Deployment Documentation — authoritative source for EP, DP/EP composition, and EPLB in production serving.

- Official engineering blog: vLLM Large-Scale Serving Blog — source for the 2.2k tokens/s per H200 GPU throughput figure on a CoreWeave InfiniBand cluster.

- Official engineering blog: NVIDIA TensorRT-LLM MoE Communication Blog — source for NVLinkOneSided AlltoAll kernels and topology-sensitive MoE communication.

- Official repositories: vLLM GitHub Repository and TensorRT-LLM GitHub Repository — canonical references for serving stack capabilities and deployment patterns.

- arXiv technical reports: DeepSeek-V3 Technical Report (arXiv:2412.19437) — primary source for 671B/37B active-parameter architecture and sparse-activation economics; Scaling Multi-Node Mixture-of-Experts Inference Using Expert Parallelism (arXiv:2604.23150) — source for variable-tokens-per-expert load balancing analysis and routing overhead characterization.

Keywords: vLLM, TensorRT-LLM, DeepSeek-V3, Mixtral, Switch Transformer, Expert Parallelism (EP), Expert Parallel Load Balancer (EPLB), PagedAttention, GPU memory bandwidth, NVIDIA H100, NVLink, InfiniBand, Megascale-Infer, llm-d, DeepSeek-R1