What Qwen3-Coder-Next changes for coding-agent benchmarks

Qwen3-Coder-Next is an open-weight language model from the Qwen team, designed specifically around agentic coding tasks rather than generic language modeling. The technical report frames it explicitly as an agent-centric model evaluated against a suite of coding-agent benchmarks: SWE-Bench Verified, SWE-Bench Multilingual, SWE-Bench Pro, Terminal-Bench 2.0, and Aider. This evaluation posture separates it from models optimized for HumanEval or MBPP: the claim is task-family fit for agents, not single-turn completion accuracy.

Most existing coverage repeats the abstract's claim without asking what the benchmark movement actually demonstrates about instruction-tuning design, or whether the reported gains say anything about agentic generalization beyond the evaluation suites themselves. This article addresses those questions.

Bottom Line: Qwen3-Coder-Next reports competitive performance across agentic coding benchmarks relative to its active parameter count, per the arXiv abstract. The accessible source pages support a claim of strong task-family fit on SWE-Bench and Terminal-Bench variants, but they do not establish which instruction-tuning design choices caused those gains, what training compute was used, or whether the gains generalize beyond the five benchmark suites in Figure 1.

Why SWE-Bench and Terminal-Bench became the right evaluation pair

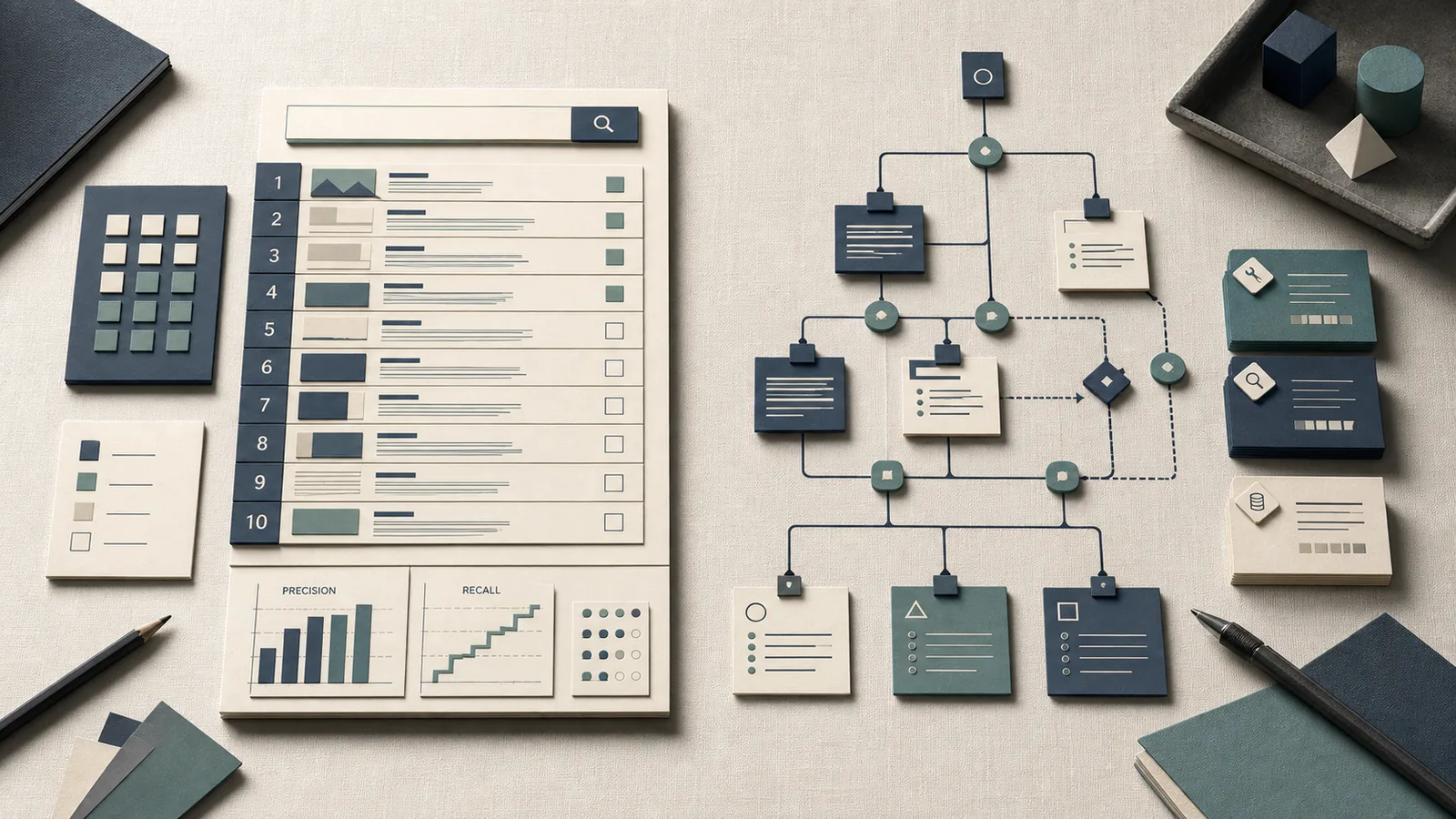

Generic code-generation benchmarks measure single-turn completion quality — pass@k on isolated function stubs. Agentic coding models must do something harder: maintain multi-turn context across tool calls, locate the relevant file in a real repository, and produce a patch that satisfies existing test infrastructure they did not write. SWE-Bench and Terminal-Bench together stress both sides of that competence: patch generation against real repository tests, and autonomous terminal-use behavior.

Terminal-Bench is used to measure whether an agent can execute multi-step terminal tasks in a real shell environment, not just complete isolated commands. That makes it a direct test of command sequencing, state tracking, and recovery from intermediate failures.

| Benchmark | What it measures | Task count | Task format | Scaffold dependency |
|---|---|---|---|---|
| SWE-Bench Verified | Patch generation on real GitHub issues | 500 (human-verified) | Given codebase + issue → generate patch → run repo tests | High: the agent scaffold mediates file access |
| Terminal-Bench 2.0 | Autonomous agent behavior in real terminal environments | 89 tasks | Goal + terminal environment → multi-step execution | Medium: direct terminal interaction |
| Aider Polyglot | Instruction-following code editing across 6 languages | 225 exercises | Natural-language request → code that passes unit tests | Low: single model, minimal scaffolding |

SWE-bench evaluates models by applying their generated patches to real-world repositories and running each repository's tests to verify whether the issue is resolved. SWE-Bench Verified is a human-filtered subset of 500 instances created in collaboration with OpenAI, selected because human annotators confirmed those instances are solvable. The filtering reduces false failures caused by ambiguously specified issues, which had made scores on the full benchmark understate what models could actually do.

Terminal-Bench targets a distinct competence: terminal agents operating in real shell environments across 89 curated tasks. As the Terminal-Bench paper abstract notes, "AI agents may soon become capable of autonomously completing valuable, long-horizon tasks in diverse domains", and Terminal-Bench 2.0 was designed explicitly because, as the authors state, "current benchmarks either do not measure real-world tasks, or are not sufficiently difficult to meaningfully measure frontier models." That framing helps explain why the Qwen3-Coder-Next paper would use Terminal-Bench rather than a simpler shell-command completion test.

Aider's polyglot benchmark uses 225 challenging Exercism exercises across C++, Go, Java, JavaScript, Python, and Rust — it "evaluates an LLM's ability to follow instructions and edit code successfully without human intervention," making it a proxy for instruction-following fidelity under code-editing conditions rather than agent autonomy per se.

Using all three in combination gives the paper a triangulated view: patch generation accuracy, terminal autonomy, and instruction-following code editing. No single benchmark covers the full competency surface.

How the paper positions instruction-tuning for agentic coding models

The Qwen3-Coder-Next technical report positions instruction-tuning not as a generic RLHF or SFT improvement on chat quality, but as a design choice aimed specifically at the task families in those five benchmarks. The arXiv HTML page for 2603.00729 shows the primary comparison figure (Figure 1) contrasting Qwen3-Coder-Next against other open-weight models on the full benchmark suite — this is the evidence the paper offers for the quality of its instruction-tuning approach.

Strictly speaking, the paper supports correlation, not causation: a benchmark gain cannot be attributed to instruction tuning unless ablations isolate the training recipe from base model capacity, data mixture, and scaffold choice.
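One way to make that condition concrete is to treat each evaluation run as a configuration record and attribute a score difference to a factor only when two runs differ in exactly that factor. The sketch below is hypothetical: the `Run` fields, the `isolated_effect` helper, and every number are illustrative assumptions, not settings or results from the Qwen3-Coder-Next paper.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass(frozen=True)
class Run:
    # Hypothetical configuration factors, each of which can move a benchmark score.
    base_model: str
    data_mixture: str
    tuning_recipe: str
    scaffold: str
    resolve_rate: float  # percent of benchmark instances resolved

FACTORS = ("base_model", "data_mixture", "tuning_recipe", "scaffold")

def isolated_effect(a: Run, b: Run) -> Optional[Tuple[str, float]]:
    """Return (factor, b - a score delta) only if the runs differ in exactly one factor."""
    differing = [f for f in FACTORS if getattr(a, f) != getattr(b, f)]
    if len(differing) != 1:
        return None  # identical or confounded: no single-factor attribution is possible
    return differing[0], round(b.resolve_rate - a.resolve_rate, 2)

baseline = Run("base-A", "mix-1", "sft-only", "scaffold-X", 60.0)
ablation = Run("base-A", "mix-1", "sft+agent-traces", "scaffold-X", 63.4)
cross_paper = Run("base-B", "mix-2", "sft+agent-traces", "scaffold-Y", 66.0)

print(isolated_effect(baseline, ablation))     # ('tuning_recipe', 3.4)
print(isolated_effect(baseline, cross_paper))  # None
```

The cross-paper comparison shows a larger raw gap, yet it says nothing specific about the tuning recipe because three other factors changed at the same time.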

What the paper framing implies, but does not prove from accessible source pages alone, is that the instruction-tuning recipe was calibrated to the agentic task families rather than to single-turn coding accuracy. The benchmark selection reinforces that interpretation: choosing SWE-Bench Multilingual and SWE-Bench Pro alongside the Verified set suggests the team tested cross-repo and cross-language generalization within the SWE-Bench family, not just optimizing on the most common leaderboard cut.

Pro Tip: When reading benchmark deltas from a technical report, separate the claim "our model scores higher on benchmark X" from the claim "our instruction-tuning design caused that gain." The first is directly verifiable from a results table; the second requires ablation studies that isolate the instruction-tuning contribution from base model capacity, training data composition, and evaluation scaffold. From the accessible source pages for arXiv 2603.00729, only the first type of claim is verifiable.

What changes in the training recipe are actually visible from the paper page

The accessible arXiv HTML page confirms that Figure 1 exists and positions Qwen3-Coder-Next in an open-weight comparison on SWE-Bench Verified, SWE-Bench Multilingual, SWE-Bench Pro, Terminal-Bench 2.0, and Aider. That is the extent of what the accessible source pages expose about the training design. The paper is a full technical report, but the accessible excerpts do not surface training data mixture proportions, instruction-tuning stage details, optimizer configuration, RL reward design, or tool-use template specifics.

Watch Out: Do not infer the training recipe from the benchmark choices alone. Papers that evaluate on SWE-Bench and Terminal-Bench may have used reinforcement learning from code execution feedback, SFT on agent trajectories, or a multi-stage pipeline; the benchmark selection does not distinguish between these. No training hardware, dataset-mixture proportions, optimizer settings, instruction-tuning stage boundaries, or compute budget details were visible in the accessible source pages for arXiv 2603.00729. Any reproduction attempt that relies on the abstract alone has no specification to work from.

Why active parameters matter less than agentic task fit

The abstract's claim — "Across agent-centric benchmarks including SWE-Bench and Terminal-Bench, Qwen3-Coder-Next achieves competitive performance relative to its active parameter count" — deliberately anchors the comparison to active parameter count rather than total parameter count. This framing matters for MoE architectures where total parameters can be large while active inference cost is much lower.
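A rough sketch of why the distinction matters, using the common approximation that a forward pass costs about 2 FLOPs per active parameter per generated token (attention and routing overhead ignored). The model names and parameter counts below are hypothetical placeholders, not Qwen3-Coder-Next's configuration.

```python
def forward_flops_per_token(active_params: float) -> float:
    """Rough forward-pass cost per generated token: about 2 FLOPs per active parameter."""
    return 2.0 * active_params

# Hypothetical models with the same total size but different active sizes.
MODELS = {
    "dense-30B": {"total": 30e9, "active": 30e9},
    "moe-30B-A3B": {"total": 30e9, "active": 3e9},
}

for name, p in MODELS.items():
    gflops = forward_flops_per_token(p["active"]) / 1e9
    print(f"{name}: total {p['total'] / 1e9:.0f}B, active {p['active'] / 1e9:.0f}B, ~{gflops:.0f} GFLOPs/token")
```

Under this approximation the two hypothetical models differ tenfold in per-token compute despite equal total size. Memory is a different matter: every expert still has to be stored, so weight memory tracks total parameters, and an active-parameter normalization speaks to inference compute rather than deployment footprint.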

The more important framing is whether parameter-normalized performance holds across task families. SWE-bench measures patch generation against repository tests; Terminal-Bench measures terminal agent tasks. These are different enough that a model can be strong on one and weak on the other, depending on whether instruction-tuning emphasized patch-format generation or multi-step tool-use execution.

The accessible source pages do not expose the exact numeric values from Figure 1, so this section cannot present a concrete results table for Qwen3-Coder-Next. What the benchmark sources do establish is the evaluation structure: SWE-Bench Verified has 500 verified instances, Terminal-Bench 2.0 has 89 tasks, and Aider Polyglot has 225 exercises. That structure tells you where the model was tested, not how much it outscored its peers.

| Benchmark | Official size (tasks) | Task family | Why it matters for agentic coding |
|---|---|---|---|
| SWE-Bench Verified | 500 | Patch generation + repo tests | Tests whether the model can fix real GitHub issues in an existing codebase |
| Terminal-Bench 2.0 | 89 | Terminal-use autonomy | Tests whether the model can complete multi-step shell goals under real terminal conditions |
| Aider Polyglot | 225 | Instruction-following code editing | Tests whether the model can follow editing instructions across multiple languages |

Watch Out: The exact numeric scores from the paper's Figure 1 were not accessible in the scraped source pages, so the table above intentionally reports official benchmark sizes and task families rather than Qwen3-Coder-Next's specific results. Retrieve the complete PDF or HTML paper at arxiv.org/html/2603.00729 for the numeric results.
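A quick way to read those sizes is by score granularity: how many percentage points a single task is worth on each suite. This is plain arithmetic on the official sizes in the table, not a Qwen3-Coder-Next result; the dictionary and helper name are illustrative.

```python
# Official benchmark sizes cited in this article.
BENCHMARK_SIZES = {
    "SWE-Bench Verified": 500,
    "Terminal-Bench 2.0": 89,
    "Aider Polyglot": 225,
}

def points_per_task(n_tasks: int) -> float:
    """Percentage points of score contributed by one task on a benchmark of n_tasks."""
    return 100.0 / n_tasks

for name, n in BENCHMARK_SIZES.items():
    print(f"{name}: one task = {points_per_task(n):.2f} points")
# SWE-Bench Verified: one task = 0.20 points
# Terminal-Bench 2.0: one task = 1.12 points
# Aider Polyglot: one task = 0.44 points
```

The same headline delta therefore means very different things: a 1-point move is five issues on SWE-Bench Verified but less than one task on Terminal-Bench 2.0.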

What the benchmark movement says about real coding-agent behavior

Benchmark gains on SWE-Bench and Terminal-Bench are meaningful signals about two different slices of coding-agent behavior: repository patching and terminal execution. A model that improves on SWE-Bench is better at locating relevant code, shaping a patch, and satisfying existing tests. A model that improves on Terminal-Bench is better at multi-step command use, interpreting terminal output, and recovering from intermediate failures. Taken together, those movements suggest stronger end-to-end agentic behavior than either benchmark can show alone.

That said, the path from "higher score on these two suites" to "better coding agent for your repository" still has gaps practitioners routinely close by assumption. The benchmark movement is suggestive, not proof: it indicates broader operational competence, but not guaranteed transfer to your codebase, your environment, or your tool stack.

SWE-Bench tests patch generation on 500 human-verified GitHub issues. A model that scores well here has demonstrated it can locate relevant code, generate syntactically valid patches, and satisfy existing test suites in real repositories. That is genuinely useful signal. It does not, however, test whether the model can reason about newly introduced dependencies, handle ambiguous requirements, or maintain coherent state across sessions longer than a typical SWE-Bench trajectory. The benchmark measures one well-defined subtask within repository-level software engineering.

Terminal-Bench 2.0's 89 tasks measure a different competence: executing multi-step goals in a real terminal environment. A model that improves on Terminal-Bench has demonstrated better tool invocation and command sequencing autonomy. What it does not demonstrate is that this autonomy transfers to the specific terminal context of your codebase — installation environments, custom scripts, internal tooling — which Terminal-Bench cannot enumerate.

The practical consequence is that simultaneous improvement on both benchmarks is stronger evidence of general agentic progress than improvement on either alone. A model that gains on SWE-Bench by overfitting patch formats has no particular reason to gain on Terminal-Bench's open-ended execution tasks, and vice versa, so correlated movement is harder to explain away as format overfitting. Movement across the full suite in Figure 1 of the Qwen3-Coder-Next paper therefore carries more weight than a single-benchmark gain.

Pro Tip: Before accepting a benchmark gain as a model gain, check whether the scaffold changed. SWE-Bench scores are highly sensitive to the scaffolding agent (SWE-agent, Agentless, Moatless, etc.) used to run the model. Two papers reporting results on "SWE-Bench Verified" can use different scaffolds and produce non-comparable numbers. The accessible source pages do not state which scaffold produced the Figure 1 numbers for Qwen3-Coder-Next, so before comparing its score against numbers from other papers, verify that the scaffolds match.
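In practice, that check amounts to grouping reported numbers by scaffold and ranking only within each group. The sketch below assumes you have already collected results by hand; the `Reported` record, the model and scaffold names, and the scores are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple

class Reported(NamedTuple):
    model: str
    scaffold: str
    score: float  # percent resolved on SWE-Bench Verified
    source: str

def rank_within_scaffold(results: List[Reported]) -> Dict[str, List[Reported]]:
    """Group results by scaffold and sort by score inside each group only."""
    groups: Dict[str, List[Reported]] = defaultdict(list)
    for r in results:
        groups[r.scaffold].append(r)
    return {s: sorted(rs, key=lambda r: r.score, reverse=True) for s, rs in groups.items()}

results = [
    Reported("model-A", "scaffold-X", 62.0, "paper 1"),
    Reported("model-B", "scaffold-Y", 65.0, "paper 2"),
    Reported("model-C", "scaffold-X", 58.5, "leaderboard"),
]
ranked = rank_within_scaffold(results)
for scaffold, rs in ranked.items():
    print(scaffold, [r.model for r in rs])
# scaffold-X ['model-A', 'model-C']
# scaffold-Y ['model-B']
```

Model-B's 65.0 is never ranked against Model-A's 62.0, because the two numbers come from different scaffolds.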

Where SWE-Bench aligns with software engineering work

"SWE-bench is a benchmark for evaluating large language models on real world software issues collected from GitHub," and task success requires generating a patch that resolves the described problem and passes the repository's existing test suite. The evaluation process "applies their generated patches to real-world repositories and runs the repository's tests to verify if the issue is resolved." This makes SWE-Bench one of the most ecologically valid benchmarks for issue-fixing behavior in coding agents — the task format directly mirrors a GitHub issue workflow.


| Aspect | SWE-Bench Verified | Real-world issue fixing |
|---|---|---|
| Issue source | Real GitHub issues, human-verified solvable | Real GitHub issues, variable solvability |
| Success criterion | Patch passes repo test suite | Patch passes review + tests + deployment |
| Codebase scope | Single snapshot, repo at issue creation | Evolving codebase, merge conflicts possible |
| Ambiguity | Filtered for well-specified issues | Frequently underspecified |
| Size | 500 instances | Unbounded |

The 500-instance Verified subset exists because the full 2,294-instance benchmark contains many issues that turn out to be ambiguously specified, that require context not present in the issue text, or whose tests reject reasonable fixes. By filtering to verified-solvable instances, the benchmark reduces false negatives where the model's patch was reasonable but the tests were overly specific or checked behavior the issue never described.
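The pass/fail rule behind that evaluation can be sketched briefly. SWE-bench task instances list FAIL_TO_PASS tests, which a correct fix should turn from failing to passing, and PASS_TO_PASS tests, which must keep passing; an instance counts as resolved only if both sets pass after the patch is applied. The function below is a simplified illustration of that rule, not the official harness code, and the `status` dictionary format and test identifiers are assumptions.

```python
from typing import Dict, Iterable

def is_resolved(status: Dict[str, str], fail_to_pass: Iterable[str], pass_to_pass: Iterable[str]) -> bool:
    """Simplified SWE-bench-style resolution check after applying a candidate patch.

    `status` maps test identifiers to outcomes ("PASSED", "FAILED", ...) observed
    after the patch is applied; a test missing from `status` counts as not passing.
    """
    def passed(test: str) -> bool:
        return status.get(test) == "PASSED"

    fixes_issue = all(passed(t) for t in fail_to_pass)       # the reported bug is fixed
    no_regressions = all(passed(t) for t in pass_to_pass)    # nothing that worked is broken
    return fixes_issue and no_regressions

# Hypothetical outcomes for one instance after applying a model's patch.
status = {
    "tests/test_parser.py::test_issue_1234": "PASSED",
    "tests/test_parser.py::test_existing_behavior": "FAILED",
}
print(is_resolved(status,
                  ["tests/test_parser.py::test_issue_1234"],
                  ["tests/test_parser.py::test_existing_behavior"]))  # False: a regression
```

The regression check is why an overly specific or unrelated test in either list can fail a patch that a human reviewer would accept, which is the noise the Verified filtering targets.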

Where Terminal-Bench captures terminal-use autonomy

Terminal-Bench 2.0's 89 tasks are designed to test AI agents in real terminal environments rather than synthetic command-completion scenarios. The benchmark targets what the authors describe as "long-horizon tasks in diverse domains" — the kind of multi-step execution sequences that appear in deployment scripts, environment setup, and debugging workflows.

Terminal autonomy is not the same as end-to-end repository reasoning. The distinction from SWE-Bench is task structure: SWE-Bench gives a model a codebase and an issue, then evaluates the patch it generates. Terminal-Bench gives an agent a goal and a terminal, then evaluates whether the agent completes that goal through a sequence of shell interactions. This requires maintained state across steps, correct interpretation of command output, and recovery from intermediate failures — capabilities that patch generation alone does not exercise.
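The goal-plus-terminal structure can be sketched as a loop: a policy proposes a command, the command runs in a persistent working directory, the output is fed back, and a separate check judges the final state. This is a hypothetical illustration, not the Terminal-Bench harness; `run_episode`, `Step`, and `scripted_policy` are invented names, and the scripted policy stands in for a model. It executes real shell commands in a temporary directory, so only run it with trusted commands.

```python
import subprocess
import tempfile
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

@dataclass
class Step:
    command: str
    returncode: int
    output: str

# A policy sees the goal plus every prior step and returns the next command, or None to stop.
Policy = Callable[[str, List[Step]], Optional[str]]

def run_episode(goal: str, policy: Policy, check_command: str, max_steps: int = 10) -> Tuple[bool, List[Step]]:
    history: List[Step] = []
    with tempfile.TemporaryDirectory() as workdir:  # state persists across steps via the filesystem
        for _ in range(max_steps):
            command = policy(goal, history)
            if command is None:
                break
            proc = subprocess.run(command, shell=True, cwd=workdir,
                                  capture_output=True, text=True, timeout=30)
            history.append(Step(command, proc.returncode, proc.stdout + proc.stderr))
        # Success is judged by a separate check on the final state, not by the agent's own claim.
        verdict = subprocess.run(check_command, shell=True, cwd=workdir,
                                 capture_output=True, text=True, timeout=30)
    return verdict.returncode == 0, history

def scripted_policy(goal: str, history: List[Step]) -> Optional[str]:
    """Hypothetical stand-in for a model: reads prior output and recovers from a failed step."""
    if not history:
        return "cat config.txt"  # fails: the file does not exist yet
    last = history[-1]
    if last.command == "cat config.txt" and last.returncode != 0:
        return "echo 'mode=prod' > config.txt"  # recovery step after reading the failure
    if len(history) < 3:
        return "grep -q mode=prod config.txt && echo ok"
    return None

ok, steps = run_episode("ensure config.txt sets mode=prod", scripted_policy, "grep -q mode=prod config.txt")
print(ok, [s.command for s in steps])
```

Even this toy episode exercises the three capabilities named above: state carried across steps, interpretation of a non-zero exit code, and a recovery action.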

Watch Out: A strong Terminal-Bench score does not imply strong repository-level reasoning. Terminal competence — navigating a file system, running build tools, interpreting command output — is a necessary but not sufficient condition for end-to-end repository work. A model can perform well on Terminal-Bench's 89 tasks by mastering shell semantics without ever needing to understand a large codebase. Conflating terminal autonomy with full coding-agent competence overstates what the benchmark measures.

How Qwen3-Coder-Next compares with neighboring open coding models

The arXiv HTML page for 2603.00729 confirms that Figure 1 presents a comparison of Qwen3-Coder-Next against other open-weight models across SWE-Bench Verified, SWE-Bench Multilingual, SWE-Bench Pro, Terminal-Bench 2.0, and Aider. The comparison set includes models in the same open-weight coding category that practitioners currently evaluate alongside Qwen3-Coder-Next.

The accessible source pages do not expose the numeric values from Figure 1 in retrievable text form. The table below therefore shows the comparison structure the paper uses, while being explicit that exact scores require the full paper.

| Model | Comparison basis | Benchmark suite used | Score status in accessible sources |
|---|---|---|---|
| Qwen3-Coder-Next | Primary subject | SWE-Bench Verified/Multilingual/Pro, Terminal-Bench 2.0, Aider | Stated as competitive relative to active parameter count |
| DeepSeek-V3.2 | Open-weight peer | Included in Figure 1 comparison | Exact score not surfaced in accessible snippets |
| GLM-4.7 | Open-weight peer | Included in Figure 1 comparison | Exact score not surfaced in accessible snippets |

The paper's decision to compare on five benchmarks rather than one reflects a legitimate methodological choice: no single benchmark provides complete coverage of agentic coding competence, and models that look equivalent on SWE-Bench Verified can diverge significantly on Terminal-Bench's execution tasks.

What the current rank order does and does not imply

Qwen3-Coder-Next should be read as competitive on SWE-Bench Verified, not as holding a permanent leaderboard position. The accessible sources support a strong benchmark showing, but the precise rank order on SWE-Bench Verified was not surfaced in retrievable text. Practitioners reading leaderboard comparisons should treat rank order as time-sensitive and scaffold-dependent: the SWE-bench leaderboard itself offers one view that compares LMs under mini-SWE-agent and another that shows all agents, and a model's position can differ between those views and across submission dates.

With 500 instances, a 2-point movement in resolve rate represents 10 instances. This means small changes in scaffold configuration, runtime environment, or the specific model checkpoint evaluated can shift apparent ranking without reflecting meaningful differences in underlying model capability.
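Sampling error alone makes the same point. A minimal sketch, assuming each instance is an independent pass/fail trial and using the normal approximation to the binomial; the 350-of-500 figure is hypothetical.

```python
import math

def resolve_rate_ci(resolved: int, n: int, z: float = 1.96):
    """Resolve rate, standard error, and normal-approximation 95% interval, in percentage points."""
    p = resolved / n
    se = math.sqrt(p * (1 - p) / n)
    return 100 * p, 100 * se, (100 * (p - z * se), 100 * (p + z * se))

# Hypothetical: 350 of 500 SWE-Bench Verified instances resolved (70%).
rate, se, (lo, hi) = resolve_rate_ci(350, 500)
print(f"rate={rate:.1f}%  SE={se:.2f} pts  95% CI=[{lo:.1f}, {hi:.1f}]")
# rate=70.0%  SE=2.05 pts  95% CI=[66.0, 74.0]
```

A 2-point gap is roughly one standard error here, too small to separate from sampling noise on its own. The approximation is rough near 0% or 100%, and it ignores run-to-run nondeterminism, which adds further variance.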

Pro Tip: Check the evaluation date and scaffold version before drawing conclusions from any SWE-Bench leaderboard position. The SWE-bench leaderboard updates continuously as new scaffolds and model checkpoints are submitted. A rank claimed in a paper published in early 2026 may not reflect the current leaderboard state. For Qwen3-Coder-Next specifically, retrieve the current SWE-bench leaderboard with the same scaffold configuration the paper used before concluding where it stands today.

Limitations that stop benchmark wins from becoming blanket claims

Three structural limitations constrain how far the Qwen3-Coder-Next benchmark story can be extrapolated.

Sample size. SWE-Bench Verified contains 500 instances and Terminal-Bench 2.0 contains 89 tasks. At 500 instances, a 2-percentage-point difference in resolve rate equals 10 issues, a margin comparable to the noise introduced by nondeterministic generation, scaffold retry behavior, and environment setup variance. Terminal-Bench's 89 tasks make the sample-size problem more acute: a single task is worth about 1.1 percentage points. As the SWE-bench documentation puts it, "SWE-bench Verified is a human-filtered subset of 500 instances"; human filtering improves signal quality but does not expand the sample.
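When two models are run on the same tasks, a paired test uses the sample more efficiently than comparing two headline rates. The sketch below applies an exact McNemar test, a standard paired test for pass/fail outcomes that we swap in for illustration (the paper is not known to report it); the per-task outcomes for "model A" and "model B" are hypothetical.

```python
from math import comb
from typing import Sequence

def mcnemar_exact_p(a: Sequence[bool], b: Sequence[bool]) -> float:
    """Two-sided exact McNemar p-value for paired pass/fail outcomes on the same tasks."""
    if len(a) != len(b):
        raise ValueError("outcome lists must cover the same tasks")
    only_a = sum(1 for x, y in zip(a, b) if x and not y)
    only_b = sum(1 for x, y in zip(a, b) if y and not x)
    n = only_a + only_b  # only discordant tasks carry information about the difference
    if n == 0:
        return 1.0
    k = min(only_a, only_b)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n  # Binomial(n, 0.5) lower tail
    return min(1.0, 2 * tail)

# Hypothetical 89-task run: both pass 36, only A passes 4, only B passes 8, both fail 41.
a = [True] * 36 + [True] * 4 + [False] * 8 + [False] * 41
b = [True] * 36 + [False] * 4 + [True] * 8 + [False] * 41
print(f"A={sum(a)} B={sum(b)} p={mcnemar_exact_p(a, b):.2f}")  # A=40 B=44 p=0.39
```

A four-task lead on 89 tasks (about 4.5 points) is far from statistically distinguishable in this example, because only the 12 discordant tasks inform the comparison.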

Scaffold entanglement. SWE-Bench scores are not model scores in isolation — they are (model × scaffold) scores. The scaffolding agent controls file retrieval strategy, retry logic, context window management, and patch formatting. Two models with identical intrinsic capabilities can produce meaningfully different SWE-Bench numbers depending on whether the scaffold was tuned for that model. The accessible source pages do not isolate the Qwen3-Coder-Next base model contribution from scaffold contributions.
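If both models are run under both scaffolds, a 2×2 grid separates the average model effect, the average scaffold effect, and their interaction. The scores below are hypothetical and chosen to show the failure case: the model ranking flips depending on the scaffold, so the interaction term is larger than the model effect itself.

```python
from typing import Dict, Tuple

Scores = Dict[Tuple[str, str], float]

def decompose(scores: Scores, models: Tuple[str, str], scaffolds: Tuple[str, str]) -> Tuple[float, float, float]:
    """Split a 2x2 (model x scaffold) grid into average main effects and an interaction term."""
    (m1, m2), (s1, s2) = models, scaffolds
    # Average gap between the two models, taken across both scaffolds.
    model_effect = ((scores[(m2, s1)] - scores[(m1, s1)]) + (scores[(m2, s2)] - scores[(m1, s2)])) / 2
    # Average gain from switching scaffolds, taken across both models.
    scaffold_effect = ((scores[(m1, s2)] - scores[(m1, s1)]) + (scores[(m2, s2)] - scores[(m2, s1)])) / 2
    # How much the model gap itself changes when the scaffold changes.
    interaction = (scores[(m2, s2)] - scores[(m1, s2)]) - (scores[(m2, s1)] - scores[(m1, s1)])
    return model_effect, scaffold_effect, interaction

# Hypothetical resolve rates (%): model-A leads under scaffold-X, model-B leads under scaffold-Y.
scores = {
    ("model-A", "scaffold-X"): 60.0,
    ("model-A", "scaffold-Y"): 64.0,
    ("model-B", "scaffold-X"): 58.0,
    ("model-B", "scaffold-Y"): 67.0,
}
print(decompose(scores, ("model-A", "model-B"), ("scaffold-X", "scaffold-Y")))  # (0.5, 6.5, 5.0)
```

A single (model × scaffold) number cannot reveal this structure; only runs across scaffolds can.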

Benchmark freshness and coverage. As the Terminal-Bench paper states directly, "current benchmarks either do not measure real-world tasks, or are not sufficiently difficult to meaningfully measure frontier models." This self-critical framing from the benchmark creators themselves acknowledges that Terminal-Bench 2.0 represents a point-in-time effort to close the gap — not a permanent ceiling test. Models tuned extensively against agentic benchmarks risk fitting to the specific task formats those benchmarks contain rather than generalizing to genuinely novel terminal and repository scenarios.

Watch Out: The accessible source pages for arXiv 2603.00729 do not quantify benchmark leakage risk or overfitting to specific agent suites for Qwen3-Coder-Next. Without ablation studies on held-out agentic tasks or third-party evaluations using different scaffolds, the paper's benchmark story cannot be extended to a blanket claim of superior coding-agent generalization. Treat the results as strong initial evidence requiring corroboration from independent evaluation runs.

What practitioners should take from the paper if they build instruction-following models

The practical signal from Qwen3-Coder-Next's benchmark positioning is about evaluation strategy, not just model selection. The paper's evaluation design implies that instruction-tuning for agentic coding should be validated against task-family-specific benchmarks: patch generation, terminal execution, and instruction-following code editing are distinct enough that gains on one should not be assumed to transfer to the others. This has direct implications for teams building instruction-following models from base checkpoints.

The three benchmark families in the paper map to three different instruction-tuning targets:

- SWE-Bench fit requires the model to understand repository structure, locate relevant files, and format patches that pass an existing test suite — this points toward training data that includes real issue/patch pairs and agent trajectories through real codebases.

- Terminal-Bench fit requires multi-step command sequencing, output interpretation, and state tracking across shell interactions — this points toward training data that includes terminal session traces and tool-use demonstrations.

- Aider polyglot fit requires accurate instruction-following under tight code-edit constraints across multiple languages — this points toward high-quality, instruction-diverse coding examples evaluated by test execution.

How to weight each benchmark family when reading results like these:

- Weight SWE-Bench results most heavily when you are selecting among open-weight coding models for an agentic issue-fixing pipeline, your target task involves repository patching against existing tests, and your evaluation scaffold is comparable to the one used in the paper.

- Weight Terminal-Bench results most heavily when you need multi-step terminal execution, shell command recovery, and environment manipulation rather than repository patch generation.

- Weight Aider Polyglot results most heavily when your product depends on instruction-following code edits across several languages and you care about unit-test pass rates on small code changes.

- Treat all three as insufficient on their own when you need performance guarantees on a task family these benchmarks do not cover, or when you are deploying with a substantially different scaffold or environment.

- Require additional evidence before committing when the benchmark delta is under 3 percentage points on SWE-Bench Verified or under 2 task completions on Terminal-Bench 2.0, you cannot access the full paper table to verify scaffold configuration, or you need to know whether the gains came from instruction-tuning or base model pretraining.
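The last rule can be written as a checklist function that returns every reason to hold off. The thresholds come from the list above; the function and parameter names are hypothetical, and deltas are taken as absolute differences between two models.

```python
from typing import List, Optional

def evidence_gaps(swe_verified_delta_pts: Optional[float],
                  terminal_bench_delta_tasks: Optional[int],
                  scaffold_verified: bool,
                  need_tuning_attribution: bool) -> List[str]:
    """Return the reasons, if any, to require more evidence before committing."""
    reasons = []
    if swe_verified_delta_pts is not None and abs(swe_verified_delta_pts) < 3:
        reasons.append("SWE-Bench Verified delta under 3 points")
    if terminal_bench_delta_tasks is not None and abs(terminal_bench_delta_tasks) < 2:
        reasons.append("Terminal-Bench 2.0 delta under 2 tasks")
    if not scaffold_verified:
        reasons.append("scaffold configuration not verified against the full paper")
    if need_tuning_attribution:
        reasons.append("attributing gains to instruction tuning vs. pretraining needs ablations")
    return reasons

print(evidence_gaps(2.4, 3, scaffold_verified=True, need_tuning_attribution=False))
# ['SWE-Bench Verified delta under 3 points']
```

An empty list means none of the listed red flags applies; it does not by itself establish that the gain transfers to your codebase.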

Which follow-up questions matter before you trust the benchmark story

What is Qwen3-Coder-Next?

An open-weight language model from the Qwen team (Alibaba), reported in arXiv technical report 2603.00729, designed for agentic coding tasks and evaluated on SWE-Bench Verified, SWE-Bench Multilingual, SWE-Bench Pro, Terminal-Bench 2.0, and Aider.

How good is Qwen3-Coder-Next on SWE-Bench Verified?

The abstract describes competitive performance relative to its active parameter count. Exact numeric scores were not surfaced in the accessible source snippets. Retrieve the full paper at arxiv.org/html/2603.00729 and cross-reference it with the current SWE-bench leaderboard, filtered to the same scaffold configuration.

What is Terminal-Bench used for?

Terminal-Bench is a benchmark for AI agents operating in real terminal environments, containing 89 curated tasks in Terminal-Bench 2.0. It tests multi-step command execution and terminal autonomy — capabilities distinct from patch generation.

Does benchmark performance prove better coding-agent generalization?

No — not on its own. SWE-Bench Verified covers 500 instances of a specific patch-generation task format, and Terminal-Bench covers 89 terminal execution tasks. Strong performance across both is meaningful positive evidence, but generalization to novel agentic scenarios, different repositories, or different terminal environments requires independent validation. The sample sizes and scaffold dependencies described above constrain the inference.

Which scaffold was used in the Figure 1 comparison?

The accessible source pages do not expose this detail in retrievable text. This is the first question to answer before comparing Qwen3-Coder-Next's Figure 1 scores against any external leaderboard number.

Is SWE-Bench Multilingual different from SWE-Bench Verified?

Yes. SWE-Bench Multilingual extends the patch-generation task to programming languages beyond Python. SWE-Bench Verified is a 500-instance human-filtered subset of the original Python-centric SWE-bench. The paper evaluates Qwen3-Coder-Next on both, which suggests, without proving, that cross-language patch generation was a design target.

Sources and references for the paper and benchmark ecosystem

- Qwen3-Coder-Next Technical Report, arXiv abstract (2603.00729): primary source for the model's benchmark claims and evaluation framing

- Qwen3-Coder-Next Technical Report, arXiv HTML full paper (2603.00729): full paper page, including the Figure 1 comparison

- SWE-bench overview: official benchmark definition, task format, and dataset sizes

- SWE-bench evaluation guide: how patch evaluation against repository test suites works

- SWE-bench FAQ: authoritative source for dataset variant sizes (Full: 2,294; Lite: 300; Verified: 500)

- SWE-bench Verified page: details on the human-filtered 500-instance subset

- SWE-bench leaderboard: current model rankings with a scaffold filter

- Terminal-Bench homepage: official benchmark for terminal agents

- Terminal-Bench GitHub (harbor-framework/terminal-bench): repository with task definitions and evaluation harness

- Terminal-Bench paper abstract, arXiv (2601.11868): methodology and motivation for the 89-task Terminal-Bench 2.0 design

- Aider leaderboards: polyglot benchmark covering 225 Exercism exercises across 6 languages
