What ReAct and Plan-and-Execute are trying to solve

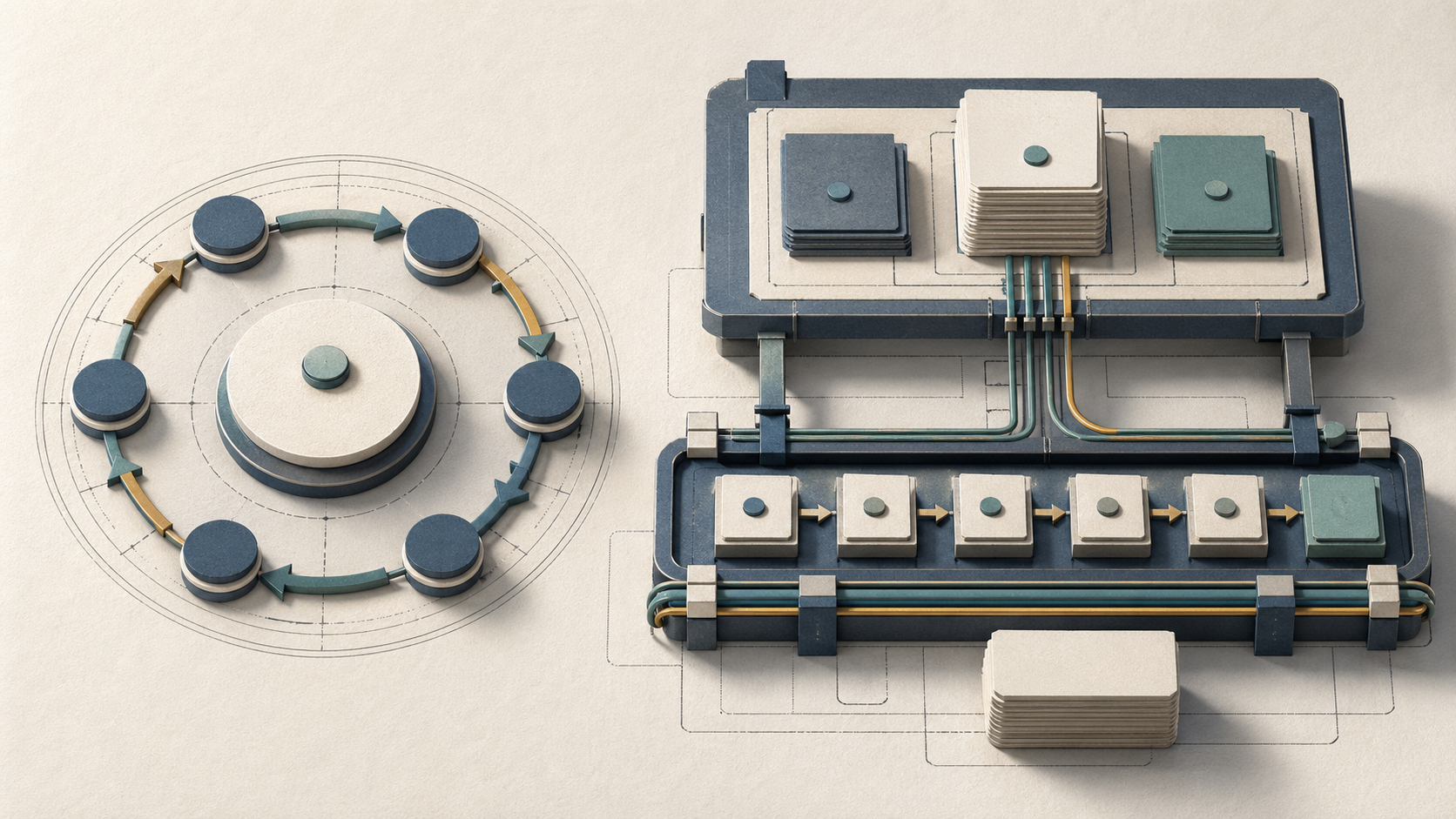

Both architectures attack the same fundamental problem — an LLM completing multi-step tasks that require real-world tool use — but they make opposite bets about where reasoning structure should live. ReAct fuses reasoning traces and tool actions into a single interleaved loop, keeping the model adaptive at every step. LangChain's plan-and-execute family, popularized in part by AutoGPT-style systems, separates upfront strategic decomposition from downstream execution entirely.

Bottom Line: ReAct is an interleaved reasoning-action loop, while Plan-and-Execute separates planning from execution into distinct stages with persistent task state. That structural difference matters most on long-horizon tasks, where maintaining task structure and step history becomes operationally important.

The ReAct paper (Yao et al., arXiv:2210.03629) described its decision-making environments, ALFWorld and WebShop, as "complex environments that require agents to act over long horizons with sparse rewards." It reported absolute success-rate gains of 34% on ALFWorld and 10% on WebShop over imitation- and reinforcement-learning baselines, using only one or two in-context examples. Those results are real. However, "long horizon" there means bounded benchmark episodes. The results show the interleaved loop's adaptability paying off at that scale; they do not by themselves show that it holds on much longer production workflows.

How we compared the two agent patterns

The comparison here is structural, not a single-run benchmark. The analysis draws on the ReAct paper, LangChain's plan-and-execute documentation, LangGraph's graph API docs, and the LangGraphJS plan-and-execute notebook. The criteria below are the dimensions that matter operationally: how the reasoning loop is shaped, where state persists (or doesn't), where latency accumulates, how tools get invoked, and how the pattern fails under stress.

Frameworks like LangChain and CrewAI both implement variants of these two patterns, but the architecture comparison exists at the prompt-and-control-flow level — independent of which framework hosts it. Framework choice affects API ergonomics and observability tooling; it does not change the structural guarantees of the underlying reasoning pattern.

| Criterion | ReAct | Plan-and-Execute |
|---|---|---|
| Reasoning loop | Interleaved: thought → action → observation per turn | Separated: plan phase then execute phase |
| State retention | Implicit in prompt history only | Explicit plan object passed through graph state |
| Latency placement | Amortized across every tool-call turn | Front-loaded to planning; lighter per executor step |
| Tool-use cadence | Opportunistic; tools chosen per thought | Scheduled; tools follow a predetermined step list |
| Failure mode | Context erosion, goal drift, infinite loops | Stale plan, over-committed steps, replanner overhead |

At-a-glance structural comparison

ReAct and Plan-and-Execute differ not just in workflow order but in their fundamental data model. ReAct keeps the task in the conversation context, while Plan-and-Execute keeps an explicit plan object that persists across executor invocations.

| Dimension | ReAct | Plan-and-Execute |
|---|---|---|
| Global task representation | None; task re-derived from context each turn | Explicit ordered step list, updated by replanner |
| Reasoning and acting | Same LLM call; interleaved in one prompt | Separate LLM calls for planner and executor |
| Plan mutation | Ad hoc; any thought can change direction | Controlled; replanner node decides when to rewrite |
| Traceability | Per-turn thoughts visible, but no plan diff | Plan versions are discrete, diffable state objects |
| Statefulness boundary | Prompt window | Persistent graph state (e.g., LangGraph StateGraph) |

As LangChain's blog frames it: "These agent frameworks attempt to separate higher level planning from shorter term execution." That separation is the whole structural bet of Plan-and-Execute.

Reasoning loop shape

The topological difference is the clearest signal. ReAct runs a flat cycle: thought → action → observation → thought. Every iteration re-reads the current conversation history to decide the next move. Plan-and-Execute runs a directed graph with two distinct node types: the planner (a high-context, long-output call that writes steps) and the executor (a lower-context call that works one step at a time).

| Loop property | ReAct | Plan-and-Execute |
|---|---|---|
| Control structure | Uniform cycle; one node type | Cyclic graph with distinct planner / executor / replanner nodes |
| Context per step | Grows monotonically with history | Executor sees only current step + minimal context |
| Plan visibility to executor | None explicit | Current step is injected from global plan object |
| Loop exit condition | Model emits a final answer action | All steps complete, or replanner decides task done |

The ReAct paper's title — ReAct: Synergizing Reasoning and Acting in Language Models — encodes the monolithic loop in the word "synergizing": reasoning and acting are deliberately fused, not separated.

Where state lives during execution

Most comparisons gloss over this difference. ReAct carries its state in the prompt context window. The basic pattern exposes no shared plan memory as a separate artifact. As a result, step structure is reconstructed from conversation history rather than read from a durable plan object.

Plan-and-Execute externalizes plan state. In LangGraph, this is structural: LangGraph's StateGraph passes a typed state object to every node, and reducers can accumulate fields like LLM call counts across the whole execution. The plan itself lives in that state object — it persists regardless of how many tool-call turns the executor takes.

CrewAI uses task and crew abstractions with explicit responsibilities, which makes it a useful comparison point for role separation, without requiring the same state-machine semantics as LangGraph.

| State artifact | ReAct | Plan-and-Execute |
|---|---|---|
| Plan document | Not present | Structured list in graph state |
| Completed steps | Inferred from conversation | Tracked as explicit state field |
| Surviving context truncation | No; history is the only record | Yes; plan object is independent of prompt |
| Step injection mechanism | None; next action from full context | Current step injected into executor prompt |

"State in LangGraph persists throughout the agent's execution." — LangGraph Quickstart

Latency, cost, and step-count shape

The latency profiles are inverse. ReAct distributes reasoning overhead across every turn: each tool call requires a reasoning-capable LLM call before the action is issued. Plan-and-Execute front-loads one large planning call, then issues executor calls that each carry only the current step plus a tool-result context — typically shorter and cheaper per turn.

LangGraph's Quickstart exposes this as a first-class instrumentation concern: the llmCalls field uses a reducer to accumulate call counts across the graph, giving you the raw material to compute cost-per-task. Repeated-loop agents such as AutoGPT-style systems can accumulate substantial step counts on ambiguous tasks, which is why budgeting and loop detection matter in production.

| Cost dimension | ReAct | Plan-and-Execute |
|---|---|---|
| Planning cost | No dedicated call; absorbed per turn | One large upfront call; replanning adds more |
| Executor call cost | High (full reasoning each turn) | Lower (step-scoped context) |
| Step count predictability | Low; unbounded on open-ended tasks | Higher; plan length sets a baseline, though replanning can extend it |
| Cost on task failure | Pays full reasoning cost until the loop terminates | Planner/replanner cost is bounded if replans are capped; executor stops early |
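A back-of-envelope model of total prompt (input) tokens makes the inverse latency profiles concrete. It rests on hypothetical assumptions. Every ReAct turn re-sends the task plus all prior turns. Each Plan-and-Execute executor call sees a fixed, step-scoped context. Output tokens are ignored, and all token sizes are illustrative, not measurements.

```python
# Hypothetical cost helpers: counts prompt tokens only, sizes are illustrative.

def react_prompt_tokens(turns: int, task: int, per_turn: int) -> int:
    """Total prompt tokens when each ReAct turn re-sends task + full history.

    Turn k (1-indexed) carries the task plus (k - 1) earlier turns, so the
    total is task * turns + per_turn * turns * (turns - 1) / 2 (quadratic).
    """
    return sum(task + (k - 1) * per_turn for k in range(1, turns + 1))


def plan_execute_prompt_tokens(steps: int, planner: int, step_ctx: int,
                               replans: int = 0, replanner: int = 0) -> int:
    """Total prompt tokens for one planner call, one step-scoped executor
    call per step, and optional replanner calls (linear in steps)."""
    return planner + steps * step_ctx + replans * replanner


# Hypothetical sizes: 500-token task, 400 tokens added per ReAct turn,
# 800-token planner prompt, 900-token executor prompt, one 2,000-token replan.
react_total = react_prompt_tokens(turns=10, task=500, per_turn=400)
pe_total = plan_execute_prompt_tokens(steps=10, planner=800, step_ctx=900,
                                      replans=1, replanner=2000)
print(react_total, pe_total)  # 23000 11800
```

The crossover depends entirely on the assumed sizes. On very short tasks ReAct comes out ahead because it skips the planner call: `react_prompt_tokens(2, 500, 400)` is 1,400 tokens, against 2,600 for a two-step plan with no replanning.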

ReAct as an interleaved reasoning-action loop

ReAct's strength is that it makes no prior commitment. The model observes the environment, reasons about what to do next, and acts — all in one call. This makes it robust to tasks where the path is genuinely unknown: if a search returns unexpected results, the very next thought can change direction without any plan invalidation machinery. The 34% absolute gain on ALFWorld and 10% on WebShop demonstrate strong results on those sparse-reward benchmarks.

The weakness is structural, not incidental. Without a durable plan object, the agent must reconstruct task context from the conversation every turn. On short tasks (under ~5–7 steps), the conversation history fits comfortably and the full task framing stays in context. On longer tasks, tool-call observations crowd out the original instruction, and the model may begin optimizing for the most recently visible context rather than the original goal.

| Dimension | ReAct |
|---|---|
| Strengths | Adaptive, no upfront planning cost, handles unknown search spaces, strong on short-horizon tasks |
| Weaknesses | Context erosion at scale, no step budget, goal drift under long history, no plan diff visibility |
| Ideal tasks | Open-ended exploration, question answering, short-horizon tool use with uncertain branching |
| Poor fit | Multi-phase projects, tasks requiring step accountability, production agents needing auditability |

How the prompt cycle works step by step

Each ReAct turn follows a strict pattern derived from chain-of-thought prompting extended with an action vocabulary. The model receives the task plus all prior turns, then emits a Thought: block reasoning about the current state, an Action: block naming the tool and its arguments, and awaits an Observation: block containing the tool result — which is then appended to the prompt for the next turn.

The key property is that the Thought: block is generated inline with the Action: selection. In a LangChain LCEL chain or a function-calling agent, this maps directly to a single model call: the model simultaneously decides what to think and what to do. There is no separate planning call, no step commitment, and no external structure governing whether the action makes progress toward the original goal.
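The cycle described above can be sketched as a plain-Python loop. This is a minimal, framework-free illustration, not LangChain's agent implementation. `run_react`, the regex parser, the scripted stand-in model, and the `inventory` tool are all hypothetical. Each turn makes one model call that emits both the Thought: and the Action:, and each Observation: is appended to the growing prompt.

```python
import re
from typing import Callable

# Hypothetical parser for the Action / Action Input lines of one completion.
ACTION_RE = re.compile(r"Action:\s*(\S+)\s*\nAction Input:\s*(.*)")
FINAL_RE = re.compile(r"Final Answer:\s*(.*)", re.DOTALL)


def run_react(task: str, model: Callable[[str], str],
              tools: dict[str, Callable[[str], str]], max_turns: int = 8) -> str:
    """Minimal ReAct loop: one model call per turn, then at most one tool call."""
    prompt = f"Task: {task}\n"
    for _ in range(max_turns):
        completion = model(prompt)       # Thought + Action come from ONE call
        prompt += completion + "\n"
        final = FINAL_RE.search(completion)
        if final:
            return final.group(1).strip()
        action = ACTION_RE.search(completion)
        if action is None:
            prompt += "Observation: could not parse an action\n"
            continue
        name, arg = action.group(1), action.group(2).strip()
        tool = tools.get(name)
        result = tool(arg) if tool else f"unknown tool {name!r}"
        prompt += f"Observation: {result}\n"  # history grows every turn
    return "stopped: turn budget exhausted"


def scripted_model(outputs: list[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: returns canned completions in order."""
    it = iter(outputs)
    return lambda prompt: next(it)


model = scripted_model([
    "Thought: I should check stock.\nAction: inventory\nAction Input: widget-42",
    "Thought: The observation answers it.\nFinal Answer: widget-42 is in stock",
])
tools = {"inventory": lambda sku: "in stock" if sku == "widget-42" else "unknown"}
answer = run_react("Is widget-42 in stock?", model, tools)
print(answer)  # widget-42 is in stock
```

The `max_turns` budget is the only thing stopping a model that never emits Final Answer:. The basic pattern has no plan to check progress against.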

Pro Tip: Keep the interleaved Thought: → Action: → Observation: pattern intact if you want a true ReAct loop rather than a plain tool-calling wrapper.

| Turn component | Content | Visibility |
|---|---|---|
| Thought: | Free-form reasoning about current state | Appended to prompt history; not sent to tools |
| Action: | Tool name + parameters | Executable by the tool harness |
| Observation: | Tool return value | Appended to prompt; grows history |
| Implicit task goal | Original instruction at top of prompt | Can be displaced by long history |

Why ReAct can drift or loop on long tasks

As tool-call rounds accumulate, Observation: blocks from early steps fill the context window. The original task instruction is the only representation of the goal, and it ends up far from the current turn in token position. Long-context models often attend less reliably to distant instructions than to recent text. The agent then starts responding to the most recent tool output rather than to the task goal.

Drift also shows up as repetition. The model may re-issue identical searches, re-read the same documents, or oscillate between two partial strategies, which gets expensive when tool calls are slow or costly. AgentBench, which evaluates 29 LLMs across 8 distinct environments for reasoning and decision-making, surfaces this behavior systematically: weaker models loop on tasks that stronger models complete in fewer steps. WebArena, with its realistic multi-step browser tasks, is particularly diagnostic because its tasks span many navigation events before a terminal condition is reachable.

Watch Out: ReAct agents on long-horizon tasks show two failure signatures:

- Context erosion: the original task goal drops out of the effective attention range, and the agent starts free-associating from recent observations.
- Loop entrapment: the model calls the same tool with nearly identical arguments across multiple consecutive turns, because it cannot distinguish "I already tried this" from "this is the right next action."

The agent has no global plan to compare against, so neither failure is reliably caught from inside the loop. Both need external checks such as loop detection and step budgets.
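External loop detection can be as simple as counting repeated tool calls in a sliding window. The sketch below is a hypothetical helper, not a library API. It normalizes arguments only by case and whitespace, so it catches trivially re-spelled repeats but not semantic paraphrases. It addresses loop entrapment only, not context erosion.

```python
from collections import deque


class LoopDetector:
    """Flag repeated tool calls within a sliding window (hypothetical helper)."""

    def __init__(self, window: int = 4, max_repeats: int = 2):
        self.recent: deque = deque(maxlen=window)  # last `window` call keys
        self.max_repeats = max_repeats

    @staticmethod
    def _key(tool: str, args: str) -> tuple[str, str]:
        # Normalize case and whitespace so "ReAct  paper" == "react paper".
        return tool, " ".join(args.lower().split())

    def record(self, tool: str, args: str) -> bool:
        """Record one call; True means it already appeared max_repeats times."""
        key = self._key(tool, args)
        repeats = sum(1 for seen in self.recent if seen == key)
        self.recent.append(key)
        return repeats >= self.max_repeats


detector = LoopDetector()
calls = [("search", "ReAct paper"), ("search", "react  PAPER"),
         ("open", "doc-1"), ("search", "react paper")]
flags = [detector.record(tool, args) for tool, args in calls]
print(flags)  # [False, False, False, True]
```

When `record` returns True, a harness might inject an Observation: telling the model the call is a repeat, or stop the run and escalate.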

Plan-and-Execute as separated planning and execution

Plan-and-Execute addresses ReAct's state problem by making the task plan a durable, externally managed object. LangChain's own summary of the pattern reads: "Build reliable AI agents with Plan-and-Execute framework. Separate planning from execution for complex tasks with fewer errors." The reliability argument is architectural. When the plan lives in LangGraph graph state rather than in the prompt, it is carried through the workflow as an explicit state value.

The LangGraph graph API supports this structurally: sequences, branches, and loops are explicit control-flow edges in the graph rather than implicit behaviors inside a monolithic prompt chain. Each node receives a typed state object, so the executor always has access to the current plan object.

| Component | Responsibility |
|---|---|
| Planner | Generates ordered step list from the task description |
| Executor | Works through one step at a time using available tools |
| Replanner | Evaluates progress and rewrites plan if a step fails or new information requires restructuring |

Planner, executor, and replanner roles

The plan-execute-replan pattern has three distinct roles with different state visibility and different prompt requirements. The LangGraphJS plan-and-execute notebook describes it directly: "The core idea is to first come up with a multi-step plan, and then go through that plan one item at a time." The replanner extends this: "After accomplishing a particular task, you can then revisit the plan and modify as appropriate."

CrewAI expresses similar role separation through its crew/task architecture, where tasks are assigned to agents with explicit dependencies.

| Role | State visibility | Output | Trigger |
|---|---|---|---|
| Planner | Full task description only | Ordered step list | Task start |
| Executor | Current step + minimal tool context | Tool result or step completion | Each step in plan |
| Replanner | Completed steps + remaining plan + last result | Updated step list or final answer | After each step in the reference pattern; can be gated to step failures or unexpected results |

The replanner is what distinguishes plan-and-execute from a simple batch processor. Without it, any step failure terminates the task. With it, the system can recover from partial failures, decompose ambiguous steps further, or short-circuit if earlier steps already satisfy the final goal.
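The three roles can be wired together in a small driver loop. This is a hypothetical, framework-free sketch, not the LangGraphJS notebook's code. `planner`, `executor`, and `replanner` are plain callables that you would back with LLM calls. This variant calls the replanner only when a step fails, and it caps the number of replans so replanner cost stays bounded.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional, Union


@dataclass
class PlanState:
    """Hypothetical explicit plan state carried across executor calls."""
    task: str
    plan: list[str] = field(default_factory=list)           # remaining steps
    completed: list[tuple[str, str]] = field(default_factory=list)
    replans: int = 0
    answer: Optional[str] = None


def plan_and_execute(
    task: str,
    planner: Callable[[str], list[str]],
    executor: Callable[[str], tuple[bool, str]],
    replanner: Callable[[PlanState, str], Union[list[str], str]],
    max_replans: int = 2,
) -> PlanState:
    """Plan once, execute one step at a time, replan (capped) on failure."""
    state = PlanState(task=task, plan=planner(task))
    while state.plan:
        step = state.plan.pop(0)
        ok, result = executor(step)
        if ok:
            state.completed.append((step, result))
            continue
        if state.replans >= max_replans:
            state.answer = f"gave up after {state.replans} replans at: {step}"
            return state
        state.replans += 1
        revised = replanner(state, result)
        if isinstance(revised, str):   # replanner decided to answer directly
            state.answer = revised
            return state
        state.plan = list(revised)     # new step list replaces the old one
    state.answer = "; ".join(out for _, out in state.completed)
    return state


def executor(step: str) -> tuple[bool, str]:
    """Hypothetical executor: the 'parse data' step always fails."""
    if step == "parse data":
        return False, "malformed CSV"
    return True, f"done: {step}"


result = plan_and_execute(
    "build report",
    planner=lambda task: ["fetch data", "parse data", "summarize"],
    executor=executor,
    replanner=lambda state, err: ["clean data", "summarize"],
)
print(result.replans, result.answer)
# 1 done: fetch data; done: clean data; done: summarize
```

The failed step never lands in `completed`. The replanner's new list replaces the remaining plan, and execution resumes from it, which is the recovery path a batch script lacks.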

Global plan context and state transitions

The state-machine semantics of Plan-and-Execute are what support its long-horizon reliability. LangGraph's Graph API guide covers state alongside "common graph structures such as sequences, branches, and loops." In a plan-and-execute graph those structures are literal: the plan object in the state schema moves between discrete phases. The phase labels below are descriptive names, not LangGraph identifiers.

| State transition | Trigger | State mutation |
|---|---|---|
| PENDING → PLANNING | Task submitted | Planner writes step list to state |
| PLANNING → EXECUTING[n] | Plan ready | Executor receives step n from state |
| EXECUTING[n] → EXECUTING[n+1] | Step n succeeds | Completed steps list updated |
| EXECUTING[n] → REPLANNING | Step n fails or result invalidates remaining steps | Replanner receives full plan + failure context |
| REPLANNING → EXECUTING[m] | Replanner rewrites plan | New step list replaces old; execution resumes |
| EXECUTING[final] → DONE | All steps complete | Final answer synthesized from state |

LangGraph makes these transitions explicit as conditional graph edges. A ReAct loop has no equivalent phase structure. Even when it is hosted in a graph, it cycles between a model step and a tool step with no notion of plan progress.
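One way to make these phases checkable is to encode the transition table as data and validate recorded traces against it. The `Phase` enum, `ALLOWED` map, and `first_invalid` function below are hypothetical. They use the descriptive labels from the table, with EXECUTING[n] collapsed into a single EXECUTING phase. They also add REPLANNING → DONE for the case where the replanner returns a final answer, which the replanner role allows. In LangGraph, the same rules would live in nodes and conditional edges rather than a separate table.

```python
from enum import Enum, auto
from typing import Optional


class Phase(Enum):
    """Hypothetical phase labels mirroring the transition table."""
    PENDING = auto()
    PLANNING = auto()
    EXECUTING = auto()      # EXECUTING[n] for any step index n
    REPLANNING = auto()
    DONE = auto()


# Legal next phases for each phase; EXECUTING -> EXECUTING is "step n+1".
ALLOWED: dict[Phase, set[Phase]] = {
    Phase.PENDING: {Phase.PLANNING},
    Phase.PLANNING: {Phase.EXECUTING},
    Phase.EXECUTING: {Phase.EXECUTING, Phase.REPLANNING, Phase.DONE},
    Phase.REPLANNING: {Phase.EXECUTING, Phase.DONE},
    Phase.DONE: set(),
}


def first_invalid(trace: list[Phase]) -> Optional[int]:
    """Index of the first illegal transition in a recorded trace, else None."""
    for i, (src, dst) in enumerate(zip(trace, trace[1:]), start=1):
        if dst not in ALLOWED[src]:
            return i
    return None


good = [Phase.PENDING, Phase.PLANNING, Phase.EXECUTING, Phase.EXECUTING,
        Phase.REPLANNING, Phase.EXECUTING, Phase.DONE]
bad = [Phase.PENDING, Phase.EXECUTING, Phase.DONE]
print(first_invalid(good), first_invalid(bad))  # None 1
```

A check like this is useful in tracing. A run that jumps from PENDING straight to EXECUTING has skipped planning, which is exactly the kind of drift a ReAct loop cannot express.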

Where asynchronous scheduling changes the control flow

Async scheduling matters most in Plan-and-Execute because the executor loop is the natural place to parallelize independent steps. If a plan contains independent steps, an async executor can dispatch them concurrently rather than serializing them.

LangGraph explicitly supports async nodes: "If you are running LangGraph in async workflows, you may want to create the nodes to be async by default." CrewAI exposes this through its asynchronous task execution mode, where tasks without dependencies can run in parallel within a crew.

| Scheduling mode | ReAct | Plan-and-Execute |
|---|---|---|
| Serial execution | Default; each thought waits for prior observation | Default; steps execute in plan order |
| Parallel execution | Not applicable; thought depends on prior observation | Possible for independent plan steps |
| Async benefit | Minimal (tool I/O latency reduction only) | Significant (step parallelism reduces wall time) |
| Async risk | Race conditions in shared tool state | Plan step ordering must encode real dependencies |

Running a ReAct loop asynchronously, as some AutoGPT-style systems do, tends to add coordination complexity without much parallelism, because the sequential reasoning chain cannot be broken apart safely. Plan-and-Execute's explicit step graph makes the parallelism opportunities structurally visible.
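A minimal asyncio sketch of that idea dispatches every step whose dependencies are already satisfied in a single `asyncio.gather` call, one layer at a time. The dependency map, the `run_step` stand-in, and the `execute_plan` driver are hypothetical. A real executor would call an LLM or tool inside `run_step`, and the plan must encode true dependencies for this to be safe.

```python
import asyncio


async def run_step(name: str, log: list) -> str:
    """Hypothetical stand-in for an async executor call."""
    log.append(("start", name))
    await asyncio.sleep(0.01)          # simulated tool or LLM I/O
    log.append(("end", name))
    return f"{name}: ok"


async def execute_plan(deps: dict[str, set[str]], log: list) -> dict[str, str]:
    """Run every step whose dependencies are done concurrently, layer by layer."""
    done: dict[str, str] = {}
    pending = dict(deps)
    while pending:
        ready = [step for step, needs in pending.items() if needs.issubset(done)]
        if not ready:
            raise ValueError(f"cyclic or unsatisfiable dependencies: {sorted(pending)}")
        results = await asyncio.gather(*(run_step(step, log) for step in ready))
        for step, result in zip(ready, results):
            done[step] = result
            del pending[step]
    return done


deps = {"fetch A": set(), "fetch B": set(), "merge": {"fetch A", "fetch B"}}
log: list = []
results = asyncio.run(execute_plan(deps, log))
print(results["merge"], log[:2])
# merge: ok [('start', 'fetch A'), ('start', 'fetch B')]
```

Both fetch steps start before either finishes, so the first layer costs roughly one step's latency rather than two. Layer-by-layer gathering is the simplest scheme. Its limitation is that each layer waits for its slowest step before the next layer starts.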

When each architecture wins in practice

The honest answer to "Is ReAct better than plan-and-execute?" is: it depends on task horizon length, uncertainty level, tool reliability, and whether the operator needs post-hoc traceability. Neither pattern dominates the other across all regimes.

Choose ReAct when:

- The task path is unknown in advance and environmental feedback determines the next action
- Tasks are short (≤ 5–7 tool-use steps) and fit comfortably in context
- Speed of initial response matters more than structured accountability
- Tool calls are fast and reliable; observation quality is high

Choose Plan-and-Execute when:

- Tasks have a predictable decomposition into sequential or semi-parallel steps
- Horizon length exceeds what fits cleanly in a single ReAct prompt's attention range
- Step-level accountability and auditability are required (compliance, debugging, human-in-the-loop review)
- Tool failures are possible and the system must recover gracefully without restarting from scratch
- LangGraph's persistent state, streaming, and debugging capabilities are priorities in production

Choose hybrid when:

- The task has a knowable top-level structure but individual steps require adaptive sub-agent behavior
- A single planner step may expand into an unknown number of tool invocations

LangChain and CrewAI both support orchestrating multiple agents with different reasoning patterns, making hybrid architectures deployable within existing tooling.

| Task variable | Favors ReAct | Favors Plan-and-Execute |
|---|---|---|
| Task horizon | Short (1–7 steps) | Long (8+ steps) |
| Path uncertainty | High | Low–medium |
| Tool reliability | High | Any; replanner handles failures |
| Traceability requirement | Low | High |
| Step parallelism opportunity | None | High |
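If you want the table as a first-pass checklist in code, a simple tally is one possible encoding. This is a hypothetical heuristic, not an established method. Each row votes for exactly one pattern, and the 7/8-step threshold comes from the table. Tool reliability is left out because the Plan-and-Execute column accepts any level. A tie is read as a cue to consider the hybrid pattern.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    """Hypothetical task description matching the table's rows."""
    expected_steps: int
    path_uncertainty: str   # "low", "medium", or "high"
    needs_audit_trail: bool
    parallel_steps: bool


def suggest_pattern(t: TaskProfile) -> str:
    """Tally the table rows; each row votes for exactly one pattern."""
    pe_votes = sum([
        t.expected_steps >= 8,                      # task horizon
        t.path_uncertainty in ("low", "medium"),    # path uncertainty
        t.needs_audit_trail,                        # traceability
        t.parallel_steps,                           # parallelism
    ])
    react_votes = 4 - pe_votes
    if pe_votes > react_votes:
        return "plan-and-execute"
    if react_votes > pe_votes:
        return "react"
    return "hybrid"   # split decision: consider P&E outer loop + ReAct steps


print(suggest_pattern(TaskProfile(3, "high", False, False)))   # react
print(suggest_pattern(TaskProfile(12, "low", True, True)))     # plan-and-execute
print(suggest_pattern(TaskProfile(12, "high", True, False)))   # hybrid
```

The third profile is long and needs an audit trail, but its path is uncertain and its steps are serial. A split vote like that is where an outer plan with adaptive inner steps tends to fit.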

Research, coding, and browsing tasks

WebArena benchmarks realistic multi-step browser navigation tasks across four categories of web environments designed to emulate human problem-solving. These tasks require sustained goal tracking in a realistic browser setting, which is exactly the regime where plan context retention matters.

SWE-bench — and specifically SWE-bench Verified, its human-filtered 500-instance subset — tests software engineering tasks that require reading code, proposing patches, and validating changes. These tasks have clear pre-conditions and post-conditions, which makes them natural fits for Plan-and-Execute's structured decomposition. Short exploratory sub-tasks within a software fix may fit a ReAct-style search step inside a larger plan.

| Task family | Benchmark | Recommended pattern | Rationale |
|---|---|---|---|
| Browser automation | WebArena | Plan-and-Execute | Long navigation chains; state anchoring reduces drift |
| Software engineering | SWE-bench | Plan-and-Execute + ReAct sub-agent | Structured repair loop; adaptive code search within steps |
| Open-ended research Q&A | n/a | ReAct | Unknown path; adaptive tool selection at each step |
| Data analysis pipelines | n/a | Plan-and-Execute | Deterministic step sequence; step failures are recoverable |

Hybrid patterns that combine both

The natural hybrid is a Plan-and-Execute outer loop where individual executor steps dispatch a ReAct sub-agent. The planner writes high-level steps ("gather background on topic X," "synthesize findings," "write section Y"); each executor step invokes a ReAct loop that handles the search and retrieval mechanics adaptively. The replanner only fires when an executor step fails to produce usable output.

LangGraph supports this structurally: conditional edges between planner, executor, and replanner nodes can be configured so the replanner triggers only on step failure, while the executor node itself is a sub-graph containing a ReAct cycle. AutoGPT's task-management layer approximates this model, though without the formal state-machine guarantees of a LangGraph StateGraph.

Choose hybrid when:

- Top-level task structure is known, but individual steps require open-ended tool exploration
- You want Plan-and-Execute's auditability at the macro level and ReAct's adaptability at the micro level
- The replanner overhead is acceptable; triggering it only on step failure keeps cost bounded
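The hybrid can be sketched as an outer plan loop whose executor runs a small, budgeted ReAct-style sub-loop for each step. Everything here is hypothetical and framework-free. `policy` stands in for the inner LLM call, and a sub-agent that exhausts its turn budget counts as a step failure. `hybrid_run` calls the replanner only on such failures and caps replanning at one attempt by default. In LangGraph, the inner loop would be a subgraph rather than a Python function.

```python
from typing import Callable

# Hypothetical step policy: maps (step, observations) to ("tool", name, arg)
# or ("answer", text). It stands in for the LLM call inside a ReAct sub-agent.
Policy = Callable[[str, list], tuple]


def react_subagent(step: str, policy: Policy, tools: dict,
                   max_turns: int = 5) -> tuple[bool, str]:
    """Adaptive inner loop for ONE plan step, with its own turn budget."""
    observations: list = []
    for _ in range(max_turns):
        decision = policy(step, observations)
        if decision[0] == "answer":
            return True, decision[1]
        _, name, arg = decision
        observations.append(tools[name](arg))
    return False, f"turn budget exhausted on: {step}"


def hybrid_run(plan: list[str], policy: Policy, tools: dict,
               replanner: Callable[[str, str], list[str]],
               max_replans: int = 1) -> list[str]:
    """Outer plan loop; the replanner fires only when a sub-agent fails."""
    queue, outputs, replans = list(plan), [], 0
    while queue:
        step = queue.pop(0)
        ok, out = react_subagent(step, policy, tools)
        if ok:
            outputs.append(out)
        elif replans < max_replans:
            replans += 1
            queue = replanner(step, out) + queue   # splice in replacement steps
        else:
            outputs.append(out)                     # record failure, move on
    return outputs


def policy(step: str, observations: list) -> tuple:
    """Hypothetical policy: search once, then answer (deep dives never converge)."""
    if step.startswith("deep dive") or not observations:
        return ("tool", "search", step)
    return ("answer", f"summary of {observations[-1]}")


tools = {"search": lambda q: f"notes on {q}"}
outputs = hybrid_run(["gather background", "deep dive", "write section"],
                     policy, tools,
                     replanner=lambda step, why: ["narrower search"])
print(outputs)
```

Only the non-converging "deep dive" step reaches the replanner. The other steps stay inside their own adaptive loops, which keeps replanner calls, and their cost, rare.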

Prompt templates and tracing signals to watch

Prompt structure is where the architectural difference becomes operational. The planner prompt and the ReAct prompt encode fundamentally different control contracts — get this wrong and you implement the wrong architecture regardless of which graph topology you declared. LangGraph provides persistence, streaming, and debugging as built-in infrastructure; but the prompts determine whether the agent actually behaves as a planner, executor, or interleaved reasoner.

CrewAI follows similar conventions for its agent role descriptions, which function as the prompt layer for its executor agents within a task crew.

| Prompt component | ReAct | Plan-and-Execute |
|---|---|---|
| Task framing | Full task + tool list at top; refreshed every turn | Full task at planner; current step only at executor |
| Output format contract | Thought: / Action: / Action Input: interleaved | Planner: JSON/YAML step list; Executor: tool call only |
| Plan reference | None | Explicit current_step injected from state |
| Termination signal | Final Answer: action type | All steps COMPLETE in state, or replanner returns answer |
| History management | Full history in prompt (grows with turns) | Executor history bounded to current step |

Pro Tip: Instrument llmCalls as a reducer field in your LangGraph state from day one. LangGraph's Quickstart accumulates it as (x, y) => x + y, giving you per-task step counts. Treat unusual call inflation as a heuristic signal to inspect the turn where the agent starts repeating tools or shrinking its reasoning trace.
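In LangGraph's Python API, reducers are attached to state keys with `typing.Annotated` (for example `Annotated[int, operator.add]`), the counterpart of the JS `(x, y) => x + y` reducer. The sketch below reproduces that merge behavior in plain Python so it runs without LangGraph installed. `AgentState`, `llm_calls`, `reducers_for`, and `apply_update` are hypothetical names. Real LangGraph performs this merge internally when a node returns a partial update.

```python
import operator
from typing import Annotated, Callable, TypedDict, get_type_hints


class AgentState(TypedDict):
    """Hypothetical state schema."""
    llm_calls: Annotated[int, operator.add]  # reducer: sum node updates
    current_step: str                        # no reducer: last write wins


def reducers_for(schema: type) -> dict[str, Callable]:
    """Collect reducer functions attached to state keys via Annotated metadata."""
    hints = get_type_hints(schema, include_extras=True)
    found: dict[str, Callable] = {}
    for key, hint in hints.items():
        metadata = getattr(hint, "__metadata__", ())
        if metadata and callable(metadata[0]):
            found[key] = metadata[0]
    return found


def apply_update(state: dict, update: dict, schema: type = AgentState) -> dict:
    """Hypothetical merge of one node's partial update, honoring reducers."""
    reducers = reducers_for(schema)
    merged = dict(state)
    for key, value in update.items():
        if key in reducers and key in merged:
            merged[key] = reducers[key](merged[key], value)
        else:
            merged[key] = value
    return merged


state = {"llm_calls": 0, "current_step": ""}
# Each node reports only the calls it made; the reducer accumulates them.
for update in [{"llm_calls": 1, "current_step": "plan"},
               {"llm_calls": 2, "current_step": "execute step 1"},
               {"llm_calls": 1, "current_step": "replan"}]:
    state = apply_update(state, update)
print(state)  # {'llm_calls': 4, 'current_step': 'replan'}
```

With this design, no node needs to read the running total. Each node reports its own increment, and the final value is the per-task call count that the reporting metrics rely on.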

ReAct prompt template

A minimal ReAct prompt must enforce the interleaved format while keeping the task goal visible at the top. The chain-of-thought prompting structure is central: the Thought: block must precede every Action: block, and the model must be instructed never to act without reasoning. LangChain's classic text-based ReAct agent prompt follows this pattern, with tool descriptions injected into the prompt template.

The critical design decision is how to handle history truncation. Since the task goal is the only anchor in a ReAct prompt, truncation strategies must preserve the opening task description.

```text
System: You are an agent that answers questions using tools.
Available tools: {tool_descriptions}
Always reason before acting. Format:

Thought: <your reasoning about the current state>
Action: <tool_name>
Action Input: <tool_arguments>
Observation: <tool result, provided by the harness>
... (repeat until you can answer)
Final Answer: <your final response>

Task: {task_description}
{conversation_history}
```

The {conversation_history} block grows with every turn, so the Task: line must stay at the top regardless of truncation. The format encodes the ReAct paper's core idea, which is to have the model "generate both reasoning traces and task-specific actions in an interleaved manner." Removing Thought: from the format degrades the pattern to a plain function-calling loop with no reasoning visibility.
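A truncation policy that honors that rule keeps the task header verbatim and drops the oldest turns first. This is a hypothetical helper, not a LangChain utility. It approximates tokens by whitespace-separated words, an assumption you would replace with your model's tokenizer.

```python
from typing import Callable


def approx_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words (an assumption)."""
    return len(text.split())


def truncate_history(task_header: str, turns: list[str], budget: int,
                     count: Callable[[str], int] = approx_tokens) -> str:
    """Hypothetical helper: keep the task header, then the newest turns that fit."""
    remaining = budget - count(task_header)
    if remaining < 0:
        raise ValueError("task header alone exceeds the budget")
    kept: list[str] = []
    for turn in reversed(turns):          # newest first
        cost = count(turn)
        if cost > remaining:
            break                         # stop at the first turn that won't fit
        kept.append(turn)
        remaining -= cost
    return "\n".join([task_header, *reversed(kept)])


header = "Task: find the cheapest flight"
turns = ["Thought: search fares", "Observation: 40 results",
         "Thought: filter by price", "Observation: $120 fare found"]
print(truncate_history(header, turns, budget=13))
# Task: find the cheapest flight
# Thought: filter by price
# Observation: $120 fare found
```

Dropping turns one at a time can separate a Thought: from its Observation:. In practice you would drop whole Thought/Action/Observation groups.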

Plan-and-Execute prompt template

The planner prompt serves a different contract: it must produce an explicit, enumerated step list that the executor can consume one item at a time. LangGraph's official description of the pattern is "a planner that generates a multi-step task list, an executor that invokes the tools in the plan, and a replanner that responds or generates an updated plan." The role split is a design pattern that keeps responsibilities clear.

CrewAI achieves the same role separation through its agent backstory and task description structure, where the planner agent's role description emphasizes producing structured task lists rather than executing tool calls.

```text
# Planner prompt
System: You are a planner. Given a task, produce an ordered list of
concrete steps to complete it. Each step must be independently
executable by a tool-calling agent. Output JSON: {"steps": ["step1", ...]}.

Task: {task_description}

# Executor prompt (injected per step)
System: You are an executor. Complete exactly the following step using
available tools. Do not do more than this step.

Current step: {current_step}
Tools: {tool_descriptions}

# Replanner prompt
System: You are a replanner. Given the original task, completed steps,
remaining steps, and the result of the last step, decide: return a final
answer or return an updated step list.

Task: {task_description}
Completed: {completed_steps}
Last result: {last_result}
Remaining: {remaining_steps}
```

The replanner prompt is the mechanism that distinguishes plan-and-execute from a batch script. Without it, the pattern cannot recover from step failure or adapt to new information discovered mid-execution.
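Because the planner's contract is machine-readable JSON, its output should be validated before any executor runs. The sketch below is hypothetical. It assumes a replanner convention in which `{"response": ...}` ends the run and `{"steps": [...]}` continues it; that convention is an assumption, since the template above only says "final answer or updated step list." It also fills the executor template with `str.format`.

```python
import json
from typing import Union

# Executor template with {placeholders} for str.format (hypothetical wording).
EXECUTOR_TEMPLATE = (
    "System: You are an executor. Complete exactly the following step using\n"
    "available tools. Do not do more than this step.\n\n"
    "Current step: {current_step}\n"
    "Tools: {tool_descriptions}"
)


def parse_plan(raw: str) -> list[str]:
    """Validate planner output shaped like {"steps": ["...", ...]}."""
    data = json.loads(raw)             # JSONDecodeError is a ValueError
    steps = data.get("steps") if isinstance(data, dict) else None
    if not isinstance(steps, list) or not steps:
        raise ValueError("planner must return a non-empty 'steps' list")
    if not all(isinstance(s, str) and s.strip() for s in steps):
        raise ValueError("every step must be a non-empty string")
    return [s.strip() for s in steps]


def parse_replan(raw: str) -> Union[str, list[str]]:
    """Assumed replanner contract: {"response": ...} ends, {"steps": [...]} continues."""
    data = json.loads(raw)
    if isinstance(data, dict) and "response" in data:
        return str(data["response"])
    return parse_plan(raw)


steps = parse_plan('{"steps": ["locate the failing test", "write a patch"]}')
prompt = EXECUTOR_TEMPLATE.format(current_step=steps[0],
                                  tool_descriptions="search_code, run_tests")
print(prompt.splitlines()[-2])  # Current step: locate the failing test
```

Rejecting malformed plans up front is cheaper than discovering them mid-execution. A caller can catch `ValueError` and re-prompt the planner once before failing the task.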

Tracing signals that show a pattern is stalling

Stall patterns are distinct between the two architectures, but both are detectable through step-count instrumentation. LangGraph's cumulative llmCalls field provides the raw signal; the interpretation differs by pattern.

AgentBench — evaluating 29 LLMs across 8 environments — provides a reference for what systematic stalls look like across architectures: weaker models exhibit significantly higher step counts with lower success rates, a pattern that step-count tracing can expose before task failure.


Benchmark signals and evaluation criteria

Three benchmark families commonly used to evaluate agent architectures (AgentBench, SWE-bench, and WebArena) each stress different structural properties. A common mistake is conflating task decomposition performance with state-machine management quality. An agent can score well on AgentBench's shorter-horizon environments with a pure ReAct loop and still fail to carry those results over to WebArena's long navigation tasks.

| Benchmark | Environment type | Primary signal | What it stresses |
|---|---|---|---|
| AgentBench | 8 environments; diverse task types | Success rate across task families | General reasoning + decision-making breadth |
| SWE-bench Verified | 500 software engineering instances | Patch resolution rate | Multi-step code reasoning; plan-like task structure |
| WebArena | Realistic browser navigation | Task completion rate | Long-horizon control; drift resistance |

What benchmark families reveal about reasoning structure

AgentBench — "a multi-dimensional benchmark that consists of 8 distinct environments to assess LLM-as-Agent's reasoning and decision-making abilities" — is best used to compare loop quality in isolation: short-horizon environments in the suite isolate whether a reasoning loop makes coherent per-step decisions. High AgentBench scores on short-horizon sub-tasks do not predict WebArena performance, because WebArena's tasks require sustained goal tracking across many navigation events.

WebArena is the most diagnostic benchmark for the ReAct-vs-Plan-and-Execute question specifically. "WebArena creates websites from four popular categories with functionality and data mimicking their real-world equivalents" — these tasks require sustained control over browser state, which is exactly the regime where plan context retention separates the two architectures.

SWE-bench Verified (500 human-filtered instances) benchmarks a structured task type: find the bug, produce the patch, verify the fix. This task structure maps naturally to a Plan-and-Execute decomposition (locate → understand → patch → test), but the code-search phase within each step is adaptive — which is why hybrid architectures tend to fit here.

| Benchmark | Best reveals | Blind spot |
|---|---|---|
| AgentBench | Loop quality, per-step decision coherence | Long-horizon state management |
| WebArena | Drift resistance, long-horizon plan retention | Code-specific tool use |
| SWE-bench | Structured multi-step task completion | Open-ended exploration tasks |

How to report step-count and cost-per-task honestly

Honest step-count reporting requires three numbers per task family:

- median step count at success
- median step count at failure
- the ratio of tool-call steps to reasoning steps, meaning steps with a substantive Thought: block

A rising ratio in a ReAct agent suggests the Thought: block has degraded, with the agent calling tools without meaningful deliberation.

SWE-bench Lite exists as "a subset curated for less costly evaluation," a reminder that evaluation cost is a real constraint, and per-task cost grows with step count. For every architecture you evaluate, report:

- (a) task success rate
- (b) median total LLM calls per completed task
- (c) median total LLM calls per failed task
- (d) token cost per task at the median call count

LangGraph's llmCalls accumulator captures (b) and (c) directly. For (d), multiply by average tokens per call and the per-token price at your model tier, and document the result as an approximation rather than a benchmark standard.

| Metric | What it reveals | Collection method |
|---|---|---|
| Success rate | Architecture effectiveness on task family | Benchmark standard |
| Median LLM calls (success) | Baseline efficiency | llmCalls reducer |
| Median LLM calls (failure) | Drift/loop severity | llmCalls reducer |
| Token cost per task | Operational cost at scale | Calls × average tokens × model price |
| Plan rewrite rate (P&E only) | Planner quality | Replanner trigger counter in state |
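Once each run records its final call count, the (a) to (d) protocol and the tool-to-reasoning ratio fit in a few lines. The `Run` record and `report` function are hypothetical. `llm_calls` would come from an llmCalls-style reducer. The cost figure is explicitly an approximation (median calls × average tokens per call × price), and the prices in the example are placeholders, not real model rates.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Run:
    """Hypothetical per-run record."""
    success: bool
    llm_calls: int        # final value of an llmCalls-style reducer
    tool_steps: int       # turns that issued a tool call
    reasoning_steps: int  # turns with a substantive Thought: block


def report(runs: list[Run], avg_tokens_per_call: float,
           price_per_1k_tokens: float) -> dict[str, Optional[float]]:
    """Summarize one architecture on one task family."""
    ok = [r.llm_calls for r in runs if r.success]
    failed = [r.llm_calls for r in runs if not r.success]
    median_calls = median(r.llm_calls for r in runs)
    reasoning = sum(r.reasoning_steps for r in runs)
    return {
        "success_rate": len(ok) / len(runs),                              # (a)
        "median_calls_success": median(ok) if ok else None,               # (b)
        "median_calls_failure": median(failed) if failed else None,       # (c)
        "approx_cost_per_task": median_calls * avg_tokens_per_call
                                * price_per_1k_tokens / 1000,              # (d)
        "tool_to_reasoning_ratio": (sum(r.tool_steps for r in runs) / reasoning
                                    if reasoning else None),
    }


runs = [Run(True, 6, 5, 5), Run(True, 8, 7, 7), Run(False, 20, 18, 6),
        Run(True, 7, 6, 6)]
summary = report(runs, avg_tokens_per_call=2000, price_per_1k_tokens=0.01)
print(summary["success_rate"], summary["median_calls_success"],
      summary["median_calls_failure"])  # 0.75 7 20
```

In this example the failed run used almost three times as many calls as the successful median. That is the kind of gap the failure-side median is meant to expose, and a success-only average would hide it.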

Sources & References

- ReAct: Synergizing Reasoning and Acting in Language Models (Yao et al.): primary source for the ReAct architecture.
- ar5iv mirror of the ReAct paper: full paper with quotes and benchmarks.
- LangChain Plan-and-Execute Agents blog: official plan-and-execute framing.
- LangGraph Quickstart (JS): state persistence and llmCalls instrumentation.
- LangGraph Use Graph API: state machine transitions; sequences, branches, loops.
- LangGraph GitHub README: async nodes; StateGraph documentation.
- LangGraphJS plan-and-execute notebook: planner/executor/replanner implementation reference.
- AgentBench (Liu et al.): multi-environment agent benchmark.
- SWE-bench official site: software engineering benchmark; Verified and Lite subsets.
- WebArena: realistic browser navigation benchmark.

Questions readers ask next

What is the difference between ReAct and plan-and-execute?

ReAct interleaves reasoning and tool use in a single loop. Plan-and-Execute separates the work into a planning stage that writes steps and an execution stage that carries them out.

Is ReAct better than plan-and-execute?

Neither is universally better. ReAct fits short, uncertain tasks where immediate adaptation matters; Plan-and-Execute fits longer tasks with clearer decomposition, state retention needs, and a stronger audit trail.

How does plan-and-execute work in LangChain?

In the LangChain framing, planning is separated from execution so a planner can create a step list and an executor can follow it. LangGraph adds explicit state management and graph control flow around that pattern.

Why does ReAct fail on long-horizon tasks?

The structural risk is that the task must stay in the prompt history instead of in a separate plan artifact. As the conversation grows, the agent has less room for the original task framing and more chance to repeat or drift.

What is the plan-execute-replan pattern?

It is a three-role setup: a planner generates the initial steps, an executor works through them, and a replanner updates the plan when a step fails or new information changes the remaining work.

How do I detect when an agent is stalling?

Watch for repeated tool calls, unusually high call counts, shrinking reasoning traces, and — for plan-and-execute systems — excessive plan rewrites. Those are the practical signals surfaced by step-count instrumentation.

Which benchmarks reveal long-horizon control problems?

WebArena is the clearest long-horizon browser benchmark here, because it forces sustained control over realistic web state. AgentBench is useful for isolating per-step decision quality, and SWE-bench captures structured software tasks.

When should I use a hybrid pattern?

Use a hybrid when the top-level task is plannable but individual steps need adaptive search. That gives you macro-level accountability with micro-level flexibility.

How does AutoGPT relate to these patterns?

AutoGPT is often discussed alongside agent loops that revisit actions repeatedly. In this comparison, it is most useful as a reminder that repeated-loop systems need explicit budgeting, tracing, and loop controls when they operate on open-ended tasks.
