Why SmoothQuant exists for W8A8 LLM inference

Bottom Line: SmoothQuant is a training-free post-training quantization method that makes simultaneous 8-bit weight and 8-bit activation (W8A8) quantization feasible for large language models by relocating quantization difficulty from activation tensors to weight tensors through a mathematically equivalent channel-wise rescaling. Naïve INT8 quantization applied to activations collapses accuracy on outlier-heavy models like OPT-175B and BLOOM-176B; SmoothQuant's offline smoothing step eliminates those outliers before any quantization kernel runs, enabling W8A8 INT8 inference across the full matrix-multiplication stack without retraining. The trade-off is an additional preprocessing path and a modified model artifact that production serving stacks must explicitly support.

As Guangxuan Xiao et al. state in the original paper: "We propose SmoothQuant, a training-free, accuracy-preserving, and general-purpose post-training quantization (PTQ) solution to enable 8-bit weight, 8-bit activation (W8A8) quantization for LLMs."

The W8A8 target distinguishes SmoothQuant from weight-only methods like AWQ or GPTQ. Quantizing only weights leaves activations in FP16 at runtime, so matrix-multiply kernels still operate at FP16 throughput. True INT8 GEMM kernels — available on V100, Turing, Ampere, Ada Lovelace, and Hopper hardware — require both operands in INT8, which is exactly what W8A8 delivers. The problem SmoothQuant solves is that activations in transformer LLMs are structurally difficult to quantize: a small number of channels carry extreme magnitudes that a naive INT8 grid cannot represent without catastrophic rounding error across all other channels.

The GitHub repository confirms this scope: SmoothQuant enables INT8 quantization of both weights and activations for all matrix multiplications in LLMs, including OPT-175B, BLOOM-176B, GLM-130B, and MT-NLG 530B.

How activation outliers break naïve INT8 quantization

Activation quantization fails on modern LLMs because outlier channels — specific hidden-state dimensions that consistently emit magnitudes an order of magnitude larger than the median — force the INT8 scale factor to accommodate their range. When that scale is wide enough for the outliers, the quantization step size becomes too coarse to represent the majority of non-outlier activations accurately. Every channel that isn't an outlier loses resolution proportional to the outlier's dominance.

The SmoothQuant paper identifies this asymmetry precisely: weights are easy to quantize while activations are not. Weight tensors in transformer linear layers exhibit smooth per-channel distributions. Activation tensors do not — outliers concentrate in specific input channels and persist across tokens, a pattern that calibration-based methods without smoothing cannot fix because the outlier structure is architectural, not statistical noise.

For OPT-175B specifically, the paper demonstrates that naïve W8A8 per-tensor dynamic quantization produces accuracy loss that SmoothQuant recovers even under an aggressive quantization configuration called O3. BLOOM-176B is more forgiving in the paper's evaluation, but the key deployment lesson is unchanged: outlier structure varies by model family, so assuming all large LLMs are equally smooth or equally outlier-heavy is incorrect.

Watch Out: The outlier distribution is channel-specific and model-family-specific. A smoothing factor calibrated on OPT will not transfer directly to Falcon or Mistral. Every new model requires its own calibration pass before INT8 inference can be trusted. Skipping calibration and using a borrowed smoothing factor degrades accuracy in ways that perplexity on a small calibration set may not fully expose.

The failure mode in practice: a per-tensor INT8 quantization of activations clips the outlier channels (clipping introduces bias) or over-scales the range (coarse step size introduces variance for all other channels). Per-channel activation quantization partially mitigates this but introduces overhead incompatible with fused GEMM kernels that expect a single per-tensor scale. SmoothQuant breaks this deadlock offline.

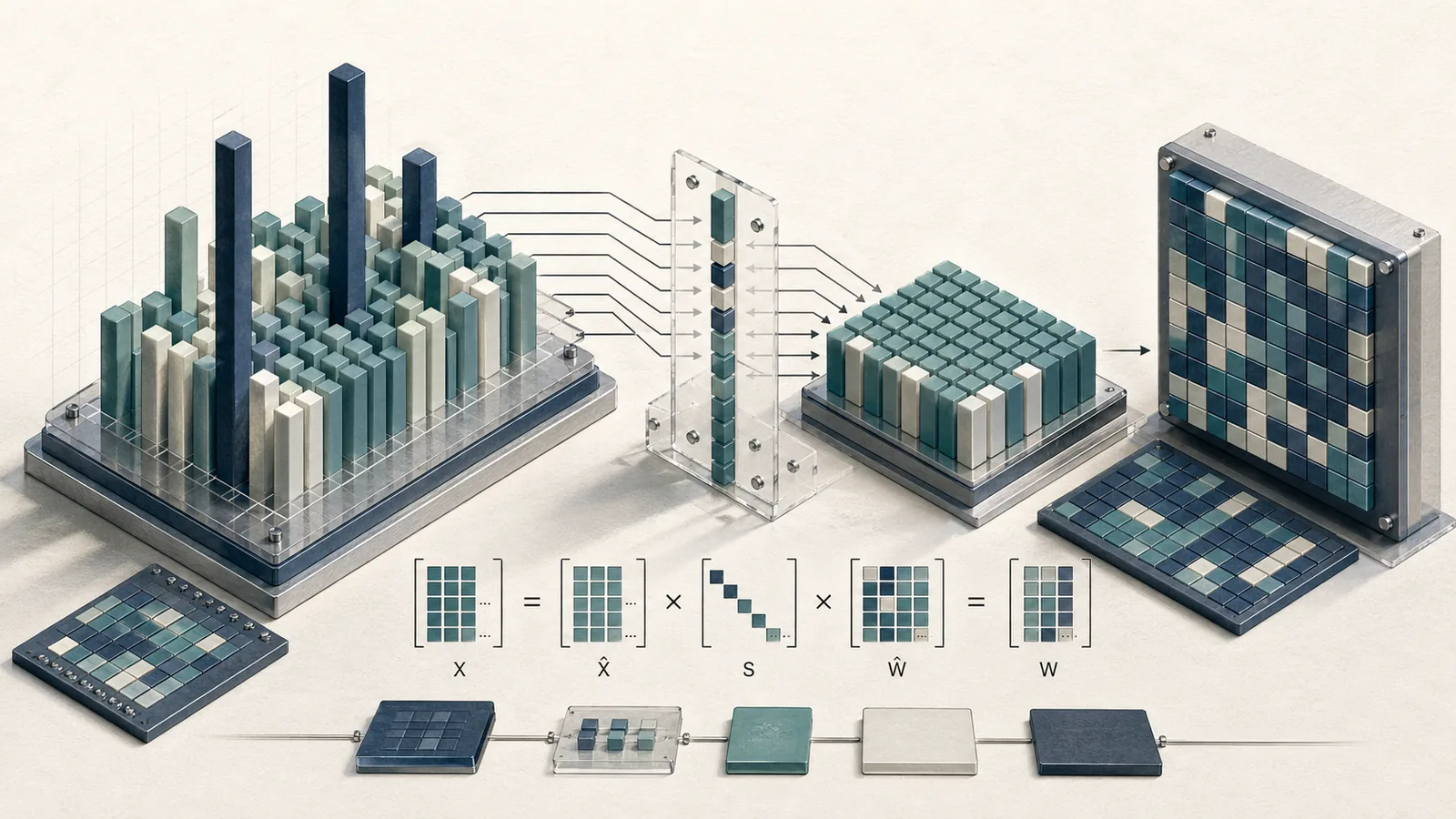

SmoothQuant's smoothing transformation at a glance

SmoothQuant applies a per-channel scale vector (\mathbf{s} \in \mathbb{R}^{C_{\text{in}}}) to the input activations before quantization and absorbs the inverse of that scale into the weight matrix. The smoothed activation (\hat{\mathbf{X}}) and rescaled weight (\hat{\mathbf{W}}) satisfy:

$(\hat{\mathbf{X}} = \mathbf{X} \cdot \text{diag}(\mathbf{s})^{-1}, \quad \hat{\mathbf{W}} = \text{diag}(\mathbf{s}) \cdot \mathbf{W})$

so that the matrix product is unchanged:

$(\hat{\mathbf{X}} \hat{\mathbf{W}} = \mathbf{X} \cdot \text{diag}(\mathbf{s})^{-1} \cdot \text{diag}(\mathbf{s}) \cdot \mathbf{W} = \mathbf{X} \mathbf{W})$

The smoothing factor (\mathbf{s}) is obtained on calibration samples and the entire transformation is performed offline. At runtime, the activations are already smooth — no scaling operation executes in the forward pass. The paper confirms the offline path and the migration-strength parameterization in the PDF.

This is the architectural consequence competitors miss: SmoothQuant does not add a runtime scaling kernel. The scale is absorbed into the weight tensor permanently during preprocessing. The serving path sees a modified weight matrix and smooth activations — both quantizable to INT8 with standard per-tensor or per-token schemes.

What gets scaled, and why the rescaling is mathematically equivalent

The rescaling targets the input channel dimension of each linear layer. Each element of (\mathbf{s}) corresponds to one input feature dimension. Dividing the activation by (s_j) shrinks the outlier in channel $j$; multiplying the $j$-th row of (\mathbf{W}) by (s_j) restores the numerical identity of the full product.

$(Y_{ij} = \sum_k X_{ik} W_{kj} = \sum_k \frac{X_{ik}}{s_k} \cdot (s_k W_{kj}) = \sum_k \hat{X}{ik} \hat{W})$

Equivalence holds exactly in floating point; in INT8, both operands are quantized independently after smoothing, so small rounding errors accumulate from both sides. The key insight is that smoothing makes those rounding errors symmetric and small rather than asymmetric and large (which is what outlier-dominated activations produce). The serving stack must preserve the absorbed scale in (\hat{\mathbf{W}}) — if (\mathbf{s}) is lost or incorrectly applied, the weight matrix is numerically wrong and inference silently produces garbage outputs.

How the alpha smooth factor changes the activation-versus-weight burden

Choosing (\mathbf{s}) involves a scalar trade-off controlled by the migration strength hyperparameter (\alpha \in [0, 1]):

$(s_j = \max(|\mathbf{X}|_j)^\alpha \cdot \max(|\mathbf{W}|_j)^{-(1-\alpha)})$

where (\max(|\mathbf{X}|_j)) is the per-channel activation magnitude observed on calibration data and (\max(|\mathbf{W}|_j)) is the per-channel weight magnitude. The paper introduces (\alpha) explicitly as a hyper-parameter, migration strength α that controls how much quantization difficulty is shifted from activations to weights.

At (\alpha = 0), all difficulty stays in the activations (no smoothing). At (\alpha = 1), all difficulty moves to the weights. At (\alpha = 0.5), difficulty is balanced evenly. The paper reports (\alpha = 0.8) in its LLaMA W8A8 evaluation.

$(\alpha \uparrow \Rightarrow \hat{\mathbf{X}} \text{ is easier to quantize, } \hat{\mathbf{W}} \text{ is harder})$

The right (\alpha) is model-dependent and calibration-set-dependent. There is no universal default; the paper's (\alpha = 0.8) for LLaMA is a starting point, not a specification. Weight quantization is more forgiving of added difficulty because weight distributions are per-channel-smooth; that asymmetry is precisely what justifies pushing (\alpha) above 0.5 for most transformer LLMs.

What changes inside DecoderLayer after smoothing

Before smoothing, a standard transformer DecoderLayer contains FP16 RMSNorm and nn.Linear modules operating on full-precision tensors. After SmoothQuant preprocessing and module replacement, those modules are rewritten as quantized counterparts that carry INT8 weights, quantization scales, and the absorbed smoothing factors baked into the weight tensors.

LMDeploy's SmoothQuant documentation describes this precisely: the workflow smooths the weights of an LLM and then replaces DecoderLayer modules such as RSMNorm and nn.Linear with quantized Q modules (QRSMNorm and QLinear) before saving the quantized model. The docs also use the spelling RSMNorm, which should be preserved when matching the implementation notes.

flowchart LR

subgraph FP16_Model["FP16 DecoderLayer (pre-smoothing)"]

A[RMSNorm] --> B[nn.Linear - QKV]

B --> C[Attention]

C --> D[nn.Linear - Proj]

D --> E[RMSNorm]

E --> F[nn.Linear - FFN Gate]

F --> G[nn.Linear - FFN Down]

end

subgraph Calibration["Offline calibration"]

H[Calibration samples] --> I[Compute s per channel]

I --> J[Absorb s into weights]

J --> K[Determine alpha per layer]

end

subgraph INT8_Model["INT8 DecoderLayer (post-smoothing)"]

L[QRSMNorm] --> M[QLinear - QKV, INT8 weights + scales]

M --> N[Attention]

N --> O[QLinear - Proj, INT8 weights + scales]

O --> P[QRSMNorm]

P --> Q[QLinear - FFN Gate, INT8 weights + scales]

Q --> R[QLinear - FFN Down, INT8 weights + scales]

end

FP16_Model --> Calibration

Calibration --> INT8_Model

The replacement is not a wrapper — QLinear holds INT8-quantized weight tensors with the smoothing scale already absorbed, and QRSMNorm integrates the normalization with the activation quantization step needed to produce INT8 inputs for downstream QLinear layers. The post-smoothing model cannot be loaded as a standard Hugging Face checkpoint because the module class names, weight dtypes, and parameter shapes differ from the original architecture.

Where QRSMNorm and QLinear fit in the post-smoothing path

The quantization pipeline in LMDeploy divides into two distinct stages: an offline preprocessing stage driven by lmdeploy lite smooth_quant and an online serving stage using the PyTorch backend.

sequenceDiagram

participant User

participant CLI as lmdeploy lite smooth_quant

participant Calib as Calibration Engine

participant Model as Model Rewriter

participant Disk as Quantized Checkpoint

participant Backend as LMDeploy PyTorch Backend

User->>CLI: Provide FP16 model + calibration data

CLI->>Calib: Run forward passes, collect activation statistics

Calib->>Model: Compute per-channel s vectors and alpha

Model->>Model: Absorb s into weights, replace RMSNorm → QRSMNorm, nn.Linear → QLinear

Model->>Disk: Save quantized model (INT8 weights + scales)

User->>Backend: Load quantized checkpoint

Backend->>Backend: Map QRSMNorm and QLinear to INT8 GEMM kernels

Backend-->>User: Serve INT8 inference

QRSMNorm handles the normalization and produces a quantized activation tensor (INT8) as output rather than FP16. QLinear consumes that INT8 activation alongside its INT8 weight matrix and dispatches to hardware INT8 GEMM kernels. The quantization scales for both tensors are stored as module parameters alongside the quantized weights. The PyTorch backend in LMDeploy understands these module types natively; a generic PyTorch runtime does not.

Why the saved model is no longer a plain FP16 checkpoint

Production Note: The output of

lmdeploy lite smooth_quantis a modified model artifact, not a FP16 checkpoint with an attached config file. Weight tensors are stored as INT8, smoothing scales and quantization parameters are embedded as module-level attributes, and the module class map (RMSNorm → QRSMNorm,nn.Linear → QLinear) is baked into the serialized model graph. Loading this artifact requires LMDeploy's model classes to be present in the Python environment. Any serving system that loads the checkpoint with a stocktransformers.AutoModelForCausalLMcall will fail at class resolution or silently mis-load the weights. Production deployment pipelines must pin LMDeploy versions, verify the quantized module class registry, and treat the quantized checkpoint as a first-class serving artifact separate from the source FP16 model.

LMDeploy's SmoothQuant documentation says the transformed model is saved after module replacement and quantization, rather than kept as a vanilla FP16 checkpoint.

Where SmoothQuant works well and where it breaks

SmoothQuant's success claims are tied to large, outlier-heavy LLMs where naïve INT8 fails most severely. The ICML/PMLR proceedings page for the paper states: "We can quantize the 3 largest, openly available LLM models into INT8 without degrading the accuracy." The named models are OPT-175B, BLOOM-176B, and GLM-130B. MT-NLG 530B is also listed in the GitHub repository as a supported target.

| Model | Outlier severity | Naïve W8A8 outcome | SmoothQuant W8A8 outcome |

|---|---|---|---|

| OPT-175B | High | Significant accuracy degradation | Accuracy preserved (paper O3 config) |

| BLOOM-176B | Moderate | Accuracy degradation | Accuracy preserved |

| GPT-J-6B | Low–moderate | Minor degradation | Matches or near-matches FP16 |

| OPT-13B (demo) | Moderate | Baseline fails | SmoothQuant matches FP16 |

These results come from the paper's own calibration and evaluation setup. They are not a universal guarantee for arbitrary fine-tuned variants, quantized bases, or domain-shifted deployments.

Why smaller GPT-style models are friendlier than large outlier-heavy models

Outlier severity scales with model size in the OPT and BLOOM families: larger models exhibit more persistent and larger-magnitude outlier channels. Smaller GPT-style models like GPT-J-6B carry fewer dominant outlier channels, making per-tensor INT8 quantization less catastrophic even without smoothing — and making SmoothQuant's benefits harder to observe as a dramatic accuracy jump.

The smoothquant GitHub README notes that OPT-30B was used for single-GPU demonstrations specifically because it is "the largest model we can run both the FP16 and INT8 inference on a single A100 GPU" — a practical constraint that illustrates the memory reduction W8A8 enables, not a claim about model quality.

| Model size range | Expected outlier density | SmoothQuant benefit magnitude | Recommended alpha starting point |

|---|---|---|---|

| 6B–13B (GPT-J, OPT-13B) | Low–moderate | Moderate | 0.5–0.7 |

| 30B–70B (OPT-30B, LLaMA-70B) | Moderate–high | High | 0.7–0.8 |

| 130B–175B (OPT-175B, BLOOM-176B) | High | Very high | 0.8 |

Alpha values above are informed by the paper's reported configurations and should be treated as calibration starting points, not fixed values. Empirical tuning per model and calibration set remains necessary.

What accuracy loss to watch for on long-context and code-heavy workloads

Watch Out: Perplexity on a standard language-modeling benchmark (WikiText-2, PTB) is the primary evaluation metric in the SmoothQuant paper, but perplexity systematically under-represents degradation on long-context tasks, instruction-following benchmarks, and code generation. A model that matches FP16 perplexity within 0.1 nats may still show measurable regression on HumanEval, MBPP, or needle-in-a-haystack retrieval. W8A8 INT8 quantization introduces symmetric rounding noise across all activations; for tasks that depend on precise token probability mass at long range (retrieval, multi-step reasoning, code with deep call stacks), this noise compounds across layers in ways that short-context perplexity does not capture. Before deploying a SmoothQuant-quantized model to a code-generation or long-document summarization endpoint, run the full downstream eval suite on your target task — not just perplexity.

What LMDeploy adds beyond the original SmoothQuant paper

The SmoothQuant paper specifies an algorithm and a reference implementation. LMDeploy layers a production serving stack on top: a CLI preprocessing command (lmdeploy lite smooth_quant), a quantized module registry (QRSMNorm, QLinear), and a PyTorch backend capable of dispatching to hardware INT8 and FP8 kernels. The paper proves the method works; LMDeploy operationalizes it into a deployment artifact with hardware-specific kernel dispatch.

| Dimension | SmoothQuant paper | LMDeploy implementation |

|---|---|---|

| Algorithm | Offline smoothing from calibration samples | Integrated via lmdeploy lite smooth_quant |

| Module replacement | Reference implementation only | RSMNorm / QRSMNorm and nn.Linear / QLinear |

| Saved artifact | Quantized weights after smoothing | Quantized checkpoint with module-class rewrite |

| Serving backend | Not in scope | PyTorch backend with INT8 dispatch |

| FP8 support | Not addressed | On H100-class hardware |

| Hardware coverage | Not specified | V100 through Hopper |

INT8 support across NVIDIA V100, Turing, Ampere, and Ada Lovelace

LMDeploy's documentation confirms INT8 inference support across NVIDIA GPU generations including V100 (sm70), Turing-class cards (sm75, including the T4), Ampere (A100, A10), and Ada Lovelace (RTX 40-series, L40). This breadth is significant: V100 instances are common in cloud infrastructure where GPU upgrades are constrained by cost or availability, and Turing T4 instances are the default inference GPU on several major cloud providers' cost-optimized SKUs.

| GPU generation | INT8 inference support |

|---|---|

| V100 / Volta | ✅ |

| Turing / T4 | ✅ |

| Ampere / A100, A10 | ✅ |

| Ada Lovelace / L40, RTX 40-series | ✅ |

INT8 remains the validated path across these generations; FP8 support is a separate capability and should not be assumed from the INT8 matrix.

Why FP8 on Hopper H100 changes the deployment conversation

LMDeploy's documentation states that for GPUs such as the NVIDIA H100, it supports 8-bit floating point (FP8) inference in addition to INT8. FP8 (specifically E4M3 or E5M2 formats) provides a larger dynamic range than INT8 for the same bit width, which reduces the activation outlier problem at the data-type level rather than through offline smoothing. On H100 hardware with Transformer Engine support, FP8 GEMM throughput is comparable to INT8 GEMM throughput while requiring less aggressive smoothing to maintain accuracy.

Production Note: LMDeploy docs state that for GPUs such as Nvidia H100, LMDeploy also supports 8-bit floating point (FP8).

| Precision | Dynamic range | Outlier sensitivity | Hardware requirement | SmoothQuant dependency |

|---|---|---|---|---|

| INT8 | Limited (256 levels) | High | V100 and newer | Required for outlier-heavy LLMs |

| FP8 (E4M3) | Higher (~448 max) | Moderate | Ada Lovelace, Hopper only | Reduced but not eliminated |

| FP16 | Full | None | Any | Not applicable |

For teams deploying on H100, the choice between SmoothQuant W8A8 INT8 and native FP8 inference is a real architectural decision that the original SmoothQuant paper predates. FP8 does not make SmoothQuant obsolete — it changes the trade-off surface — but engineers on H100 clusters should benchmark both paths before committing to the INT8 smoothing pipeline.

What practitioners should verify before choosing SmoothQuant

SmoothQuant is described in its abstract as "training-free, accuracy-preserving", but both qualifiers are conditional. "Training-free" holds: no gradient updates are required. "Accuracy-preserving" is benchmark- and calibration-dependent. The paper's evaluations are conducted on specific zero-shot benchmarks with controlled calibration sets; production workloads differ in task distribution, sequence length, and calibration data availability.

Verification before production deployment requires four checks:

- Perplexity delta on a held-out language-modeling set. Establish an acceptable regression budget (e.g., ≤0.5 nats on WikiText-2) and verify the smoothed model stays within it at the target alpha.

- Downstream task accuracy on at least one task relevant to your deployment. If the model serves code, run HumanEval. If it serves instruction-following, run MT-Bench or an equivalent suite.

- Per-layer activation statistics. Inspect maximum activation magnitudes before and after smoothing to confirm outliers are suppressed rather than redistributed.

- Latency and throughput on the target hardware. INT8 GEMM throughput gains vary by batch size, sequence length, and GPU generation; validate the speedup assumption on your actual serving configuration.

When SmoothQuant is the right fit versus AWQ or GPTQ

-

Choose SmoothQuant W8A8 when your serving hardware supports INT8 GEMM kernels (V100 through Hopper), your target model is a large outlier-heavy LLM (OPT, BLOOM, LLaMA-scale), and your throughput requirement benefits from true weight-and-activation INT8 rather than FP16 activation with dequantized weights. SmoothQuant wins when both tensor operands in GEMM need to be INT8.

-

Choose AWQ or GPTQ when your primary constraint is GPU memory rather than GEMM throughput, you are targeting a weight-only INT4 regime, or your serving stack does not support fused INT8 GEMM kernels (common on older deployment pipelines with only FP16 kernel support). AWQ and GPTQ quantize weights to INT4 while keeping activations in FP16; this halves the weight memory footprint but does not accelerate the arithmetic the way W8A8 does.

-

Choose FP8 on Hopper when you have access to H100 or Ada Lovelace hardware and your model family is well-served by FP8 kernels. FP8's larger dynamic range reduces the need for aggressive offline smoothing while delivering comparable memory and throughput benefits to INT8.

| Criterion | SmoothQuant W8A8 | AWQ / GPTQ INT4 | FP8 (H100) |

|---|---|---|---|

| GEMM acceleration | ✅ Both operands INT8 | ❌ Dequant to FP16 at runtime | ✅ Both operands FP8 |

| Weight memory reduction | ~2× vs FP16 | ~4× vs FP16 | ~2× vs FP16 |

| Activation quantization | ✅ INT8 | ❌ FP16 | ✅ FP8 |

| Hardware breadth | V100+ | Any (no kernel dependency) | Ada + Hopper only |

| Outlier handling mechanism | Offline smoothing | Weight-only clipping/grouping | Larger FP8 range |

The checks you should run on perplexity and downstream evals

The SmoothQuant paper reports both zero-shot benchmark averages and perplexity, indicating that no single metric is sufficient for production validation. A practical evaluation plan for a W8A8 SmoothQuant deployment should cover:

| Eval dimension | Recommended metric | Acceptable regression threshold |

|---|---|---|

| Language modeling | WikiText-2 perplexity | ≤0.5 nats vs FP16 baseline |

| General reasoning | ARC-Challenge, HellaSwag (zero-shot) | ≤1% absolute accuracy drop |

| Code generation | HumanEval pass@1 | ≤2% absolute pass rate drop |

| Long context | Needle-in-a-haystack, SCROLLS | ≤3% task-specific metric drop |

| Instruction following | MT-Bench score | ≤0.2 point drop on 1–10 scale |

Thresholds above are illustrative; your deployment SLA determines the acceptable budget. The critical operational practice is running all five dimensions — not just perplexity — before promoting a smoothed checkpoint to production, because long-context and code metrics can degrade independently of perplexity.

FAQ about SmoothQuant and W8A8 inference

What is SmoothQuant in LLM quantization?

SmoothQuant is a post-training quantization method that enables W8A8 INT8 inference for LLMs without retraining. It applies a calibration-derived per-channel scale to activations and absorbs the inverse into the corresponding weight matrix, making both tensors amenable to INT8 quantization.

How does SmoothQuant work?

It computes a per-channel smoothing vector (\mathbf{s}) from calibration data, divides activation channels by (\mathbf{s}) (reducing outliers), and multiplies the weight matrix by (\mathbf{s}) (absorbing difficulty into weights). The product (\mathbf{X}\mathbf{W}) is numerically unchanged. Runtime sees smooth activations and rescaled weights, both quantized to INT8.

Why is activation quantization harder than weight quantization?

Weight tensors in transformer linear layers have smooth per-channel distributions with predictable ranges. Activation tensors develop persistent outlier channels — specific hidden dimensions that emit magnitudes far exceeding the rest — after scale. A single INT8 scale covering both outlier and non-outlier channels produces coarse quantization for the majority of values.

Does SmoothQuant improve accuracy?

Relative to naïve W8A8 INT8 quantization on outlier-heavy LLMs, yes — substantially. Relative to FP16 baseline, SmoothQuant is accuracy-preserving on the benchmarks reported in the paper for models like OPT-175B and BLOOM-176B. Results are calibration- and workload-dependent; long-context and code tasks require separate verification.

What models work well with SmoothQuant?

Large outlier-heavy models benefit most: OPT-175B, BLOOM-176B, GLM-130B, LLaMA-scale models. The GitHub repository ships pre-computed activation channel scales for Llama, Mistral, Mixtral, Falcon, OPT, and BLOOM families. Smaller GPT-style models (6B–13B) also work but show less dramatic improvement over naïve INT8 because their outlier structure is less severe.

Pro Tip: Use the pre-computed smoothing scales from the mit-han-lab/smoothquant repository as calibration starting points when your calibration dataset is small or domain-shifted. Verify that the provided scales were computed on a model checkpoint identical to yours — fine-tuned or instruction-tuned variants can shift activation statistics enough to require fresh calibration.

Sources & References

- SmoothQuant: Accurate and Efficient Post-Training Quantization for Large Language Models — arXiv:2211.10438 — Original paper by Guangxuan Xiao et al.; primary source for all algorithmic claims and mathematical formulations.

- SmoothQuant ICML/PMLR proceedings page — Published conference version; source for the "3 largest openly available LLM models" accuracy claim.

- mit-han-lab/smoothquant GitHub repository — Reference implementation; source for supported model families, pre-computed scale files, and OPT-30B demo constraints.

- LMDeploy W8A8 quantization documentation — Primary source for DecoderLayer module replacement (

QRSMNorm,QLinear),lmdeploy lite smooth_quantCLI, PyTorch backend, and hardware support matrix (V100 through H100).

Pro Tip: When citing specific perplexity or benchmark numbers from the SmoothQuant paper in your own evaluations, reference the versioned PDF directly (arXiv:2211.10438v7 or the PMLR version) rather than the abstract, as table values appear only in the full paper body and differ between preprint versions.

Keywords: SmoothQuant, W8A8, INT8 inference, LMDeploy, lmdeploy lite smooth_quant, PyTorch backend, DecoderLayer, RMSNorm, nn.Linear, QRSMNorm, QLinear, OPT-175B, BLOOM-176B, GPT-J-6B, NVIDIA V100, NVIDIA H100