How We Compared TensorRT-LLM's Expert Parallelism for MoE Traffic

TensorRT-LLM frames MoE inference as a first-order systems problem, not a kernel optimization. When a router selects top-k experts per token across hundreds of GPUs, the resulting traffic pattern determines whether your cluster spends cycles on computation or on waiting. This comparison evaluates TensorRT-LLM's expert parallelism design against tensor parallelism and vLLM-style MoE serving across three axes: hardware coupling, traffic balancing, and operational complexity.

The design explicitly targets NVLink-scale environments. As NVIDIA states, the implementation exists specifically "to leverage NVIDIA GB200 Multi-Node NVLink (MNNVL) HW features to implement high-performance communication kernels." That sentence sets the scope of the entire comparison: the performance model is tied to MNNVL-class fabric.

| Dimension | TensorRT-LLM Expert Parallelism | Tensor Parallelism (any stack) | vLLM MoE Serving |
|---|---|---|---|
| Hardware coupling | Tight: GB200/NVLink-optimized communication kernels | Moderate: works across NVLink and PCIe | Loose: portable across H100, A100, and other NVIDIA hardware |
| Traffic balancing | Online EPLB with expert replication (Wide-EP) | None: AllReduce splits weight matrices, not expert traffic | Expert-parallel sharding available; no online balancer |
| Operational complexity | High: placement config, EPLB workflow, weight redistribution | Low: standard tensor slicing | Low to Medium: simpler configuration, well-documented benchmarks |
| Target model scale | 100B+ sparse MoE (DeepSeek-V3, DeepSeek-R1 class) | Any dense or sparse MoE model | Mixtral to DeepSeek-V3 scale |
| Primary bottleneck addressed | Expert-load skew and cross-GPU communication | Memory bandwidth per expert shard | Throughput and latency across generalized GPU setups |

At-a-glance comparison of MoE serving approaches

For teams choosing a serving stack for Mixtral 8x7B or DeepSeek-V3, the decision space is narrower than it appears. TensorRT-LLM expert parallelism delivers the most NVLink-optimized path for models where expert-level imbalance measurably caps throughput. vLLM's own large-scale serving materials state the memory case plainly, presenting "the benefit over tensor parallelism" as a comparison of per-GPU memory usage for DeepSeek-V3 under tensor-parallel and expert-parallel sharding. Both stacks acknowledge EP's memory advantage over TP for sparse MoE. The difference is how aggressively they pursue load balancing and how tightly they tie that pursuit to specific hardware.

| Scenario | Best Fit | Why |
|---|---|---|
| DeepSeek-V3/R1 on GB200 NVL72, latency-critical | TensorRT-LLM EP + Wide-EP | NVLink kernels reduce communication cost; EPLB actively addresses skew |
| DeepSeek-V3 on H100/A100 clusters, mixed workloads | vLLM with EP sharding | Portable, well-benchmarked, lower operational overhead |
| Mixtral 8x7B, single-node or small multi-node | vLLM or TensorRT-LLM TP/EP | Expert count is small enough that EPLB overhead may not pay off |
| Research or fine-tuning environments with frequent model changes | vLLM | Simpler deployment; less placement reconfiguration needed |
| High-throughput production cluster, NVIDIA-dedicated | TensorRT-LLM EP | Interconnect optimization is a first-class requirement, not an afterthought |

What the comparison optimizes for

This comparison prioritizes a question that competing write-ups rarely address directly: whether NVIDIA-coupled load balancing on NVLink-scale systems is worth its operational cost. Kernel benchmarks and documentation excerpts miss this system-level decision. The real selection criterion is whether your infrastructure can justify the full EPLB workflow (statistics collection, placement generation, weight redistribution) in exchange for lower expert-load skew.

Bottom Line: If your cluster runs on NVLink-scale fabric (GB200 NVL72 or equivalent) and your model has 100B+ sparse parameters with persistent routing imbalance, TensorRT-LLM expert parallelism is the strongest fit. If you need hardware portability or simpler operations, the extra scheduling machinery costs more than it returns.

The hardware and workload assumptions behind the comparison

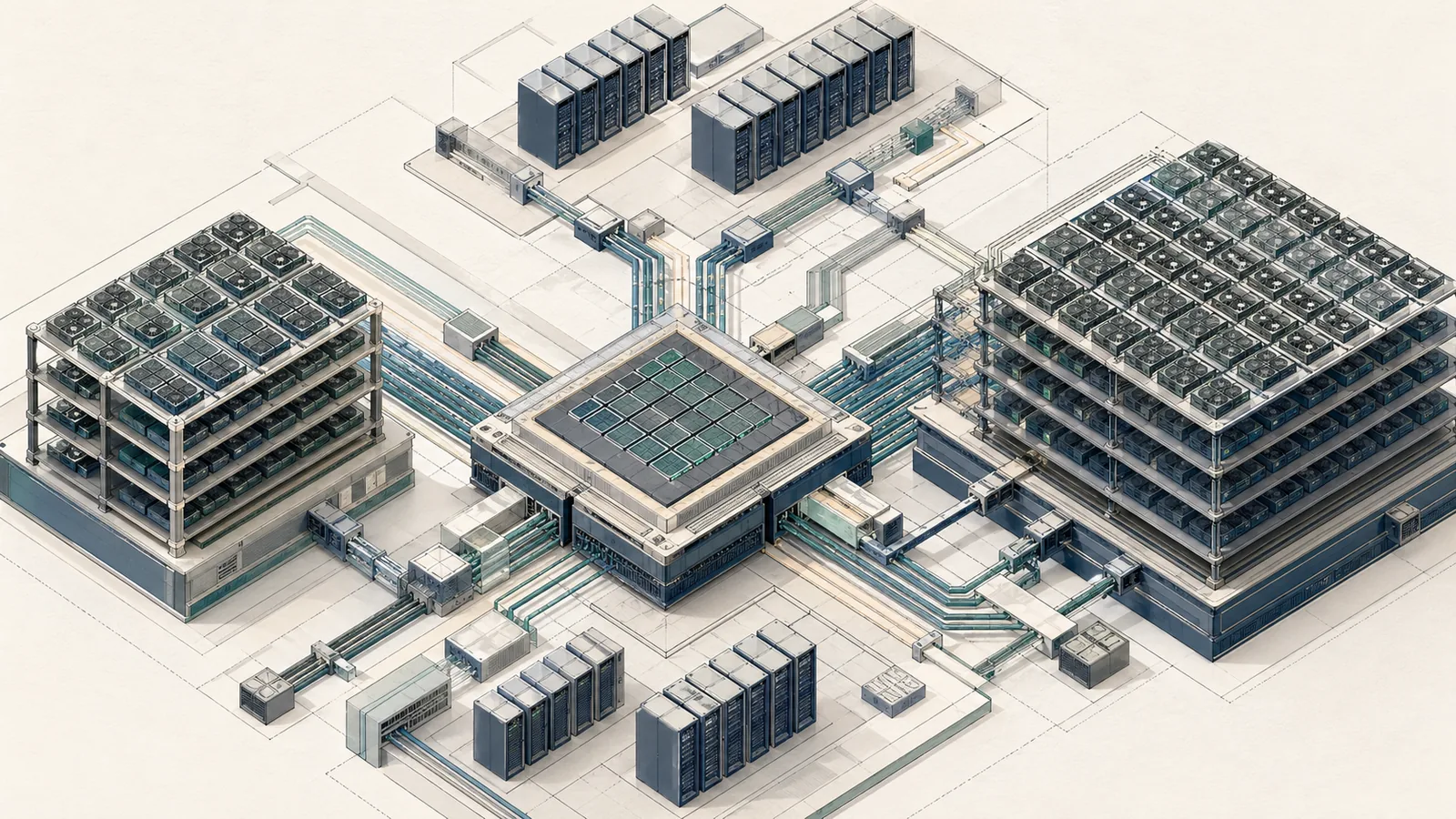

TensorRT-LLM's EP design assumes NVIDIA GB200 multi-node NVLink as the fabric. NVIDIA's EP blog is explicit that this is not a generic inter-GPU communication design. The workload assumption is equally specific. A model like DeepSeek-V3, with 671B total parameters and 37B activated per token, produces enough routing traffic and enough memory pressure that placement and communication patterns become dominant performance variables.

Production Note: TensorRT-LLM's expert parallelism requires the full MoE runtime machinery: mapping configuration, EPLB config generation, and weight redistribution steps. This is not a drop-in swap from tensor parallelism. Teams operating on mixed-vendor or non-NVLink GPU clusters should treat this design's performance model as inapplicable — the communication kernels are explicitly built for MNNVL fabric.

TensorRT-LLM expert parallelism for large-scale MoE

Expert parallelism in TensorRT-LLM distributes the expert weight matrices across GPU ranks so each GPU holds a subset of experts. The MoE router still selects top-k experts per token, but those experts may now reside on remote GPUs, turning what was a local GEMM into a cross-GPU communication event. This contrasts directly with tensor parallelism, which splits individual weight matrices across ranks regardless of expert boundaries, and with hybrid modes that combine both.

Wide-EP extends standard EP with an additional balancing layer. As the TensorRT-LLM documentation states: "Wide-EP is an advanced form of expert parallelism that addresses the inherent workload imbalance in large-scale MoE models through intelligent load balancing and expert replication." Expert replication means frequently-routed experts can exist on multiple GPUs simultaneously, reducing the probability that a single GPU becomes the bottleneck for a hot expert.

| Parallelism Mode | How weights are split | Communication primitive | Load balancing | Memory per GPU |
|---|---|---|---|---|
| Tensor Parallelism | Each weight matrix split across ranks | AllReduce / AllGather | None: all ranks process the same tokens | High (each rank holds a shard of every expert) |
| Standard Expert Parallelism | Each expert assigned to one rank | AllToAll | None | Lower (each rank holds a subset of experts) |
| Wide-EP (TensorRT-LLM) | Experts assigned, hot experts replicated | AllToAll + NVLink-aware kernels | Online EPLB with replication | Moderate (replication adds memory vs. standard EP) |
| Hybrid TP+EP | Matrix-level split within expert-parallel groups | AllReduce + AllToAll | Partial | Depends on TP and EP degree |

Why MoE traffic becomes the bottleneck

Sparse routing concentrates load on a small number of experts per forward pass, and the distribution is not uniform across token sequences. A router that consistently sends 40% of a batch's tokens to 10% of the experts creates GPU-level stragglers: the GPUs holding hot experts become compute-bound and communication-bound simultaneously, while GPUs holding cold experts sit idle. The aggregate throughput is determined by the slowest GPU.
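Here is a minimal sketch of the straggler arithmetic. It rests on three simplifying assumptions: a hypothetical static placement `expert_to_gpu`, per-expert token counts for one batch, and an MoE step time proportional to the tokens each GPU receives. Under those assumptions the step finishes only when the most-loaded GPU finishes, so the ratio of maximum to mean GPU load bounds the achievable efficiency. The counts are invented to match the 40%-to-10% example and are not measurements.

```python
def gpu_loads(expert_tokens, expert_to_gpu, num_gpus):
    """Sum routed tokens per GPU when each expert lives on exactly one GPU."""
    loads = [0] * num_gpus
    for expert, tokens in expert_tokens.items():
        loads[expert_to_gpu[expert]] += tokens
    return loads


def imbalance_ratio(loads):
    """Max GPU load divided by mean GPU load; 1.0 is perfectly balanced."""
    return max(loads) / (sum(loads) / len(loads))


def efficiency_vs_balanced(loads):
    """Fraction of balanced throughput if the slowest GPU sets step time."""
    return 1.0 / imbalance_ratio(loads)


# Hypothetical batch: 20 experts on 4 GPUs (5 per GPU). Experts 0 and 1,
# i.e. 10% of the experts, receive 40% of 1,800 token-expert assignments.
counts = {e: (360 if e < 2 else 60) for e in range(20)}
placement = {e: e // 5 for e in range(20)}

loads = gpu_loads(counts, placement, num_gpus=4)
print(loads)                          # [900, 300, 300, 300]
print(imbalance_ratio(loads))         # 2.0
print(efficiency_vs_balanced(loads))  # 0.5
```

In this layout both hot experts land on GPU 0. That GPU carries half of all traffic while the other three wait, so the step runs at half the speed a balanced placement would allow.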

As NVIDIA's EP blog part 2 states directly: "EP-level workload imbalance is common for large-scale EP inference across multiple datasets and has significant performance impacts." DeepSeek-V3's 671B/37B architecture makes this concrete: even though only 37B parameters activate per token, the routing pattern across 671B parameters determines which GPUs carry that load.

Watch Out: The dominant failure mode in large-scale EP is traffic skew that leaves cold-expert GPUs idle while hot-expert GPUs stall on communication. This failure is data-dependent — it emerges from the input distribution, not from configuration errors — and it worsens as EP degree increases because each GPU holds fewer experts, concentrating the skew effect.

How NVLink-aware communication changes the cost model

Without NVLink-class fabric, cross-node expert parallelism introduces a communication cost that can match or exceed computation time. The DeepSeek-V3 technical report quantifies this directly: "For DeepSeek-V3, the communication overhead introduced by cross-node expert parallelism results in an inefficient computation-to-communication ratio of approximately 1:1." If communication is not overlapped with computation, a 1:1 ratio means roughly half of wall-clock time is spent moving tokens to experts rather than computing on them.
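Whether a 1:1 ratio really costs half the wall-clock time depends on whether communication overlaps with computation. The sketch below models the two idealized extremes for a single MoE layer: fully serialized and perfectly overlapped. The 5 ms figures are hypothetical and were chosen only to produce the 1:1 ratio.

```python
def step_time_ms(compute_ms, comm_ms, overlap=False):
    """Idealized per-layer time.

    overlap=False: communication and computation run back to back.
    overlap=True:  communication is fully hidden, so the slower side dominates.
    """
    return max(compute_ms, comm_ms) if overlap else compute_ms + comm_ms


def comm_share(compute_ms, comm_ms):
    """Fraction of serialized step time spent on communication."""
    return comm_ms / (compute_ms + comm_ms)


# Hypothetical 1:1 compute-to-communication ratio.
print(comm_share(5.0, 5.0))                  # 0.5
print(step_time_ms(5.0, 5.0))                # 10.0
print(step_time_ms(5.0, 5.0, overlap=True))  # 5.0
```

Real systems fall between these extremes. Faster fabric shrinks `comm_ms` directly, which helps in both cases.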

TensorRT-LLM's EP implementation builds communication kernels specifically for GB200 MNNVL fabric, exploiting topology to reduce the cost of AllToAll operations that route tokens to remote experts.
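It helps to see what the AllToAll actually carries. The dispatch step can be modeled as a send-count matrix, where entry `[src][dst]` counts the token copies that rank `src` ships to rank `dst`. The sketch below builds that matrix from top-k routing decisions and an expert-to-rank placement. The function names and data layout are hypothetical, not TensorRT-LLM's API. The sketch also omits the combine phase that returns expert outputs, which roughly mirrors dispatch.

```python
def dispatch_matrix(topk_experts, token_rank, expert_to_rank, num_ranks):
    """Count token copies each source rank sends to each destination rank.

    topk_experts[t]: expert ids the router selected for token t.
    token_rank[t]:   rank that currently holds token t.
    expert_to_rank:  expert id -> rank hosting that expert.
    Counts one copy per (token, expert) pair; real kernels may send a token
    only once to a rank that hosts several of its selected experts.
    """
    sends = [[0] * num_ranks for _ in range(num_ranks)]
    for t, experts in enumerate(topk_experts):
        src = token_rank[t]
        for e in experts:
            sends[src][expert_to_rank[e]] += 1
    return sends


def cross_rank_share(sends):
    """Fraction of token copies that must leave their source rank."""
    total = sum(sum(row) for row in sends)
    local = sum(sends[r][r] for r in range(len(sends)))
    return (total - local) / total


# Toy example: 4 tokens, top-2 routing, 4 experts split over 2 ranks.
topk = [[0, 1], [0, 2], [1, 3], [2, 3]]
token_rank = [0, 0, 1, 1]
expert_to_rank = {0: 0, 1: 0, 2: 1, 3: 1}

sends = dispatch_matrix(topk, token_rank, expert_to_rank, num_ranks=2)
print(sends)                    # [[3, 1], [1, 3]]
print(cross_rank_share(sends))  # 0.25
```

Every off-diagonal entry crosses the interconnect. That is the traffic topology-aware kernels are built to accelerate.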

| Communication scenario | Compute:communication ratio | Implication |
|---|---|---|
| Cross-node EP on standard interconnects | ≈1:1 (per DeepSeek-V3 report) | Without overlap, roughly half of execution time is communication |
| EP on GB200 MNNVL fabric | Improved by topology-aware kernels; exact ratio is workload-dependent | Computation regains dominance if routing is balanced |
| Intra-node EP on NVLink | Better than cross-node; traffic stays within one NVLink domain | Strongest case for EP; communication cost minimized |
| After EPLB rebalancing | Lower skew, but added scheduling and redistribution overhead | Net benefit depends on imbalance severity before rebalancing |

The trade-off is not free. Reducing imbalance through EPLB adds scheduling and communication complexity. The NVLink-aware kernels help, but the architecture still imposes overhead that generic serving stacks avoid by not attempting dynamic redistribution at all.

Where Wide-EP fits relative to standard EP

Standard EP assigns each expert to exactly one GPU and relies on the router's natural distribution to keep load roughly balanced. Wide-EP adds two mechanisms on top: online load balancing via EPLB, and expert replication for persistently hot experts. Replication is the sharper instrument — it physically duplicates an expert's weights onto multiple GPUs so that routing traffic can be distributed across replicas rather than funneled to one.

Pro Tip: Expert replication and expert placement are distinct tuning levers. Placement (which GPU holds which expert) can be adjusted by EPLB without consuming extra memory. Replication reduces hot-expert skew further but increases memory footprint proportionally to the replication factor. Reach for placement optimization first; add replication only when placement alone cannot eliminate the bottleneck.
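The difference between the two levers is clearest when one expert alone receives more tokens than a balanced GPU should handle. In the hypothetical counts below, expert 0 receives 900 of 1,200 tokens on 4 GPUs, so whichever GPU hosts it carries at least 900 tokens no matter how the experts are placed. Replicating it three ways removes that bottleneck. The `wide` layout combines that replication with a new placement for the cold experts. The sketch assumes tokens split evenly across replicas and ignores per-GPU memory limits; both are simplifications, and the helper names are illustrative.

```python
def replica_loads(expert_tokens, replicas):
    """GPU -> token count when each expert's tokens split evenly over replicas.

    expert_tokens: expert id -> routed token count.
    replicas:      expert id -> list of GPUs holding a copy of that expert.
    """
    loads = {}
    for expert, tokens in expert_tokens.items():
        gpus = replicas[expert]
        share, remainder = divmod(tokens, len(gpus))
        for i, gpu in enumerate(gpus):
            loads[gpu] = loads.get(gpu, 0) + share + (1 if i < remainder else 0)
    return loads


def extra_copies(replicas):
    """Expert copies beyond one per expert, i.e. the memory cost of replication."""
    return sum(len(gpus) - 1 for gpus in replicas.values())


tokens = {0: 900, 1: 100, 2: 100, 3: 100}
static = {0: [0], 1: [1], 2: [2], 3: [3]}
wide = {0: [0, 1, 2], 1: [3], 2: [3], 3: [3]}  # hot expert on three GPUs

print(replica_loads(tokens, static))  # {0: 900, 1: 100, 2: 100, 3: 100}
print(replica_loads(tokens, wide))    # {0: 300, 1: 300, 2: 300, 3: 300}
print(extra_copies(wide))             # 2
```

The two extra copies of expert 0 are the memory price of the balanced layout. To estimate that price for a real model, multiply by the per-expert weight size, and by the number of MoE layers if replication is applied in every layer.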

Wide-EP is most relevant when the model's routing entropy is low — when a small fraction of experts consistently receives a disproportionate share of tokens. At high routing entropy (more uniform distribution), standard EP with optimized placement is often sufficient and avoids Wide-EP's additional coordination cost.
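Routing entropy can be computed directly from per-expert token counts. The sketch below uses Shannon entropy normalized by its maximum, log(number of experts), so 1.0 means perfectly uniform routing. The document gives no threshold for "low" entropy. Where to draw that line is a judgment call, best calibrated against measured throughput.

```python
import math


def normalized_routing_entropy(expert_tokens):
    """Shannon entropy of expert-selection shares, scaled to [0, 1].

    expert_tokens: token count per expert (at least two experts).
    1.0 means uniform routing; values near 0 mean a few experts take
    almost all of the traffic. Experts with zero tokens add no entropy.
    """
    total = sum(expert_tokens)
    shares = [c / total for c in expert_tokens if c > 0]
    entropy = -sum(p * math.log(p) for p in shares)
    return entropy / math.log(len(expert_tokens))


uniform = [100] * 8
skewed = [930] + [10] * 7
print(round(normalized_routing_entropy(uniform), 2))  # 1.0
print(round(normalized_routing_entropy(skewed), 2))   # 0.19
```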

The online expert workload balancer and EPLB workflow

TensorRT-LLM's Expert Parallelism Load Balancer (EPLB) is the system-level mechanism that separates the EP design from static expert assignment. The workflow has three sequential stages: collect expert routing statistics from live inference traffic, generate an EPLB placement configuration, and redistribute MoE weights across GPUs according to that configuration. This is an operational pipeline, not a runtime auto-tune — each stage requires explicit orchestration.

| EPLB Stage | What happens | System requirement |
|---|---|---|
| 1. Statistics collection | Runtime accumulates per-expert token counts across inference requests | Stable, representative traffic window; volatile inputs produce bad placements |
| 2. Configuration generation | EPLB algorithm computes new expert-to-GPU assignment and replication factors | Access to routing statistics and cluster topology |
| 3. Weight redistribution | MoE expert weights moved across GPUs per new placement; runtime remapped | Sufficient memory headroom; potential service interruption or warm cache invalidation |

NVIDIA frames EPLB as "a set of functionalities to address this issue," a deliberately operational description rather than a purely algorithmic one. The implication is that teams adopting EPLB are taking on a recurrent operational process, not a one-time configuration.

What statistics the balancer needs

EPLB requires per-expert token routing counts observed over a traffic window representative of the production workload. Because EP-level imbalance varies across datasets, a statistics window drawn from one request type may generate a placement that worsens performance for another. The runtime must observe enough routing history to distinguish persistent hot experts from transient spikes caused by a single unusual batch.

Pro Tip: Collect statistics over the longest stable window your SLA permits before triggering a placement update. A 10-minute window of mixed production traffic is likely to produce a more durable placement than a 60-second window of a single query type. Short observation windows are a common cause of EPLB placements that improve one metric while degrading another.
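One way to separate persistent hot experts from one-off spikes is to keep a sliding window of per-batch counts and flag only experts that are hot in most of the recorded batches. The class below is a hypothetical helper, not TensorRT-LLM's statistics format. The share threshold and minimum fraction are illustrative knobs.

```python
from collections import deque


class RoutingStatsWindow:
    """Sliding window of per-batch expert token counts (hypothetical helper)."""

    def __init__(self, num_experts, max_batches):
        self.num_experts = num_experts
        self.batches = deque(maxlen=max_batches)  # oldest batch drops off when full

    def record(self, batch_counts):
        if len(batch_counts) != self.num_experts:
            raise ValueError("expected one count per expert")
        self.batches.append(list(batch_counts))

    def totals(self):
        """Per-expert token counts summed over the window."""
        return [sum(b[e] for b in self.batches) for e in range(self.num_experts)]

    def persistent_hot(self, share_threshold, min_fraction):
        """Experts whose share of a batch exceeds share_threshold in at least
        min_fraction of the recorded batches."""
        hits = [0] * self.num_experts
        for batch in self.batches:
            total = sum(batch)
            for e, count in enumerate(batch):
                if total and count / total > share_threshold:
                    hits[e] += 1
        n = len(self.batches)
        if n == 0:
            return []
        return [e for e in range(self.num_experts) if hits[e] / n >= min_fraction]


window = RoutingStatsWindow(num_experts=4, max_batches=4)
window.record([70, 10, 10, 10])
window.record([60, 20, 10, 10])
window.record([10, 10, 70, 10])  # one-off spike on expert 2
window.record([65, 15, 10, 10])
print(window.totals())                   # [205, 55, 100, 40]
print(window.persistent_hot(0.5, 0.75))  # [0]
```

A window that held only the third batch would make expert 2 look like the bottleneck. That is exactly the short-window placement error described above.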

How EPLB changes expert placement over time

Before EPLB runs, expert assignment is static — the placement set at launch time persists until explicitly changed. Hot experts accumulate tokens on their assigned GPU, creating the skew described earlier. After EPLB generates a new configuration, the runtime remaps those hot experts to less-loaded GPUs or replicates them across multiple GPUs so that incoming routing traffic distributes across more compute resources.
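A placement-only rebalance can be sketched with the longest-processing-time (LPT) greedy heuristic. It visits experts from heaviest to lightest and gives each one to the least-loaded GPU that still has a free expert slot. LPT is a generic load-balancing heuristic chosen here for illustration. It is not claimed to be the algorithm TensorRT-LLM's EPLB runs, and the function names are hypothetical.

```python
import heapq


def greedy_placement(expert_tokens, num_gpus, slots_per_gpu):
    """Longest-processing-time greedy: heaviest expert to least-loaded GPU.

    Each GPU can host at most slots_per_gpu experts; one copy per expert.
    """
    if len(expert_tokens) > num_gpus * slots_per_gpu:
        raise ValueError("not enough expert slots")
    heap = [(0, gpu) for gpu in range(num_gpus)]  # (current load, gpu id)
    heapq.heapify(heap)
    used = [0] * num_gpus
    placement = {}
    for expert in sorted(expert_tokens, key=expert_tokens.get, reverse=True):
        load, gpu = heapq.heappop(heap)  # least-loaded GPU with a free slot
        placement[expert] = gpu
        used[gpu] += 1
        if used[gpu] < slots_per_gpu:    # full GPUs leave the heap for good
            heapq.heappush(heap, (load + expert_tokens[expert], gpu))
    return placement


def loads_for(placement, expert_tokens, num_gpus):
    """Tokens per GPU under a placement."""
    loads = [0] * num_gpus
    for expert, gpu in placement.items():
        loads[gpu] += expert_tokens[expert]
    return loads


# Hypothetical counts: experts 0 and 1 get 360 tokens each, the other 18 get 60.
counts = {e: (360 if e < 2 else 60) for e in range(20)}
new_placement = greedy_placement(counts, num_gpus=4, slots_per_gpu=5)
print(loads_for(new_placement, counts, 4))  # [600, 600, 300, 300]
```

Compared with a static layout that puts both hot experts on one GPU (900 tokens, a max-to-mean ratio of 2.0), the greedy pass separates them and brings the ratio down to about 1.33. It cannot go further. Each hot expert still shares a five-slot GPU with four cold ones, so that GPU carries at least 600 tokens. Past that point, replication is the remaining lever.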

| State | Expert assignment | Imbalance | Communication cost |
|---|---|---|---|
| Initial static placement | Each expert on one GPU, original mapping | High if router has low entropy | Baseline AllToAll |
| After placement-only EPLB | Experts redistributed to balance token counts | Reduced | Similar AllToAll, but better distributed |
| After placement + replication (Wide-EP) | Hot experts duplicated across GPUs | Lowest | Higher: more GPUs involved in routing decisions |
| After volatile traffic shift | Placement may no longer match traffic | Returns toward pre-EPLB levels | Requires another statistics cycle |

The critical constraint: placement changes can invalidate warm GPU caches and may require GPU weight memory to be resynchronized. In continuous-batching production deployments, triggering a redistribution without a maintenance window introduces risk of latency spikes during the remapping phase.
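Because the latency risk concentrates in the remapping phase, it helps to size a redistribution before triggering it. The sketch below diffs an old and a new expert-to-GPU placement for one MoE layer and totals the weight bytes that must move. It assumes one copy per expert and a uniform, hypothetical per-expert weight size. Real redistributions also handle replicas and repeat per layer.

```python
def migration_plan(old, new, bytes_per_expert):
    """Experts whose host GPU changes, plus total weight bytes to move.

    old, new: expert id -> GPU id for one MoE layer (no replicas).
    Returns a list of (expert, from_gpu, to_gpu) and the byte total.
    """
    moves = [(e, old.get(e), new[e]) for e in sorted(new) if old.get(e) != new[e]]
    return moves, len(moves) * bytes_per_expert


BYTES_PER_EXPERT = 44 * 2**20  # hypothetical 44 MiB per expert

old = {0: 0, 1: 0, 2: 1, 3: 1}
new = {0: 0, 1: 1, 2: 0, 3: 1}
moves, total = migration_plan(old, new, BYTES_PER_EXPERT)
print(moves)          # [(1, 0, 1), (2, 1, 0)]
print(total / 2**20)  # 88.0
```

To get a first estimate of how long the remapping window lasts, multiply the total by the number of MoE layers and compare it against the fabric bandwidth available while serving continues.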

What the balancer costs in practice

Online balancing adds three categories of overhead that static EP avoids entirely. First, statistics collection consumes runtime memory and requires coordination across EP ranks to aggregate per-expert counts. Second, EPLB configuration generation is a compute step that runs outside the critical inference path but still imposes latency before a new placement takes effect. Third, weight redistribution across GPUs introduces a synchronization point that can cause tail-latency spikes.

Watch Out: The EPLB overhead is not a one-time cost. Every time the traffic distribution shifts significantly, the existing placement degrades and the statistics/reconfiguration cycle must restart. On NVLink-coupled systems with stable traffic, this is manageable. On systems with volatile input distributions or non-NVLink fabrics, the balancer can introduce more instability than it removes.
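Because the cost recurs, a simple amortization check before each rebalance is useful. The throughput gained while the new placement stays valid must outweigh the throughput lost while weights are redistributed. The model below is a hypothetical simplification, and every number in it is illustrative rather than measured.

```python
def rebalance_pays_off(tps_before, tps_after, stable_window_s, stall_s,
                       tps_during_stall=0.0):
    """True if tokens gained after rebalancing exceed tokens lost during it.

    tps_*:            aggregate tokens/sec.
    stable_window_s:  how long the new placement keeps matching the traffic.
    stall_s:          redistribution time, during which throughput drops to
                      tps_during_stall (0.0 models a full pause).
    """
    gained = (tps_after - tps_before) * stable_window_s
    lost = (tps_before - tps_during_stall) * stall_s
    return gained > lost


# Stable traffic: a 20% gain held for 10 minutes outweighs a 30 s pause.
print(rebalance_pays_off(10_000, 12_000, stable_window_s=600, stall_s=30))  # True
# Volatile traffic: the same gain lasts only 1 minute before placement degrades.
print(rebalance_pays_off(10_000, 12_000, stable_window_s=60, stall_s=30))   # False
```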

TensorRT-LLM versus adjacent MoE serving stacks

The comparison between TensorRT-LLM expert parallelism and vLLM-style MoE serving reduces to a hardware coupling versus portability trade-off, with operational complexity as the tiebreaker. Both stacks support expert parallelism for sparse MoE models; the divergence is in how aggressively they tie that support to specific fabric and how much operational machinery they require.

vLLM's own materials make the memory argument for EP over TP: for DeepSeek-V3, EP sharding materially reduces per-GPU memory compared to tensor-parallel sharding. The stack is described as "a fast and easy-to-use library for LLM inference and serving" — a positioning that prioritizes deployment breadth over hardware-specific optimization depth.

| Dimension | TensorRT-LLM EP | vLLM (EP mode) |
|---|---|---|
| Hardware requirement | GB200/NVLink strongly preferred; MNNVL kernels are explicit design targets | H100, A100, broader NVIDIA GPU support |
| MoE routing support | Full: AllToAll, NVLink-aware kernels, GroupGEMM | Expert-parallel sharding; broad deployment support |
| Online load balancing | Yes: EPLB with statistics collection and weight redistribution | Portability-focused deployment model |
| Operational complexity | High: EPLB workflow, placement configuration, weight remapping | Low to Medium: standard deployment patterns |
| Ecosystem portability | Low: NVIDIA-coupled | High: broader deployment targets |
| Benchmark transparency | NVIDIA EP blogs describe the workflow; the sources cited here give no single headline tokens/sec figure | Throughput, TTFT, ITL, P99 latency published for H100/A100 |

When TensorRT-LLM wins

TensorRT-LLM expert parallelism delivers its strongest advantage on NVIDIA-dedicated clusters where NVLink bandwidth is the primary resource distinguishing that infrastructure from a commodity GPU cluster. The rack-scale GB200 NVL72, with its MNNVL fabric, is precisely the environment where NVLink-aware communication kernels pay off. It is also where the imbalance problems that appear on 100B+ sparse MoE models justify the EPLB workflow's operational overhead.

Bottom Line: Choose TensorRT-LLM expert parallelism when the cluster is NVIDIA-dedicated with NVLink/MNNVL fabric, the model is 100B+ sparse MoE (DeepSeek-V3/R1 class), routing imbalance has been measured and confirmed to cap throughput, and the team can sustain an EPLB operational workflow. This combination makes the hardware coupling and operational complexity worthwhile.

When another stack is the safer choice

vLLM is the safer choice when portability, ecosystem breadth, or reduced operational overhead is the primary constraint. Its expert-parallel mode addresses the same memory-per-GPU problem without requiring NVLink-specific kernels or the EPLB pipeline.

| Reason to choose vLLM or another stack | Detail |
|---|---|
| Mixed-vendor GPU cluster | TensorRT-LLM's NVLink kernels don't apply; the communication advantage disappears |
| Operational team size is small | EPLB workflow requires ongoing maintenance; vLLM's static deployment is simpler |
| Frequent model updates or A/B experiments | Expert placement reconfiguration on each model change adds friction |
| Portability across cloud providers | TensorRT-LLM's MNNVL assumptions are datacenter-hardware-specific |
| Workload is Mixtral 8x7B scale | Expert count is small enough that EPLB overhead may not improve net throughput |

Benchmarks and signals to watch before adopting expert parallelism

Before committing to TensorRT-LLM expert parallelism, measure the actual imbalance in your serving environment. NVIDIA's EP blog confirms that EP-level workload imbalance occurs across multiple datasets and has significant performance impacts — but the magnitude of skew is workload-specific. The decision to absorb EPLB's operational overhead must be grounded in measured skew, not assumed.

Available official sources emphasize qualitative behavior and operational workflow rather than a single public TensorRT-LLM EP tokens/sec figure. vLLM's large-scale serving benchmarks cover throughput, TTFT, ITL, and P99 latency on H100 and A100, providing a concrete baseline for cross-stack comparison on those platforms.

| Metric | What it reveals | How to collect |
|---|---|---|
| Per-expert token count variance | Routing skew, the primary signal for whether EPLB will help | Instrument the MoE router; log expert selection counts per batch |
| Tokens/sec (aggregate) | Overall serving efficiency before and after EP/EPLB | Standard serving benchmark at target batch size and sequence length |
| P99 tail latency | Straggler effect: one overloaded GPU creates latency spikes | Percentile latency under sustained load, not just the mean |
| GPU utilization variance across EP ranks | Direct measure of load imbalance | nvidia-smi or DCGM per-GPU utilization during inference |
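The collection methods above can feed a small summary function. The sketch assumes per-expert counts already come from an instrumented router and per-rank utilization from DCGM or nvidia-smi. The function name and report keys are hypothetical. The sketch also reports the coefficient of variation, which is not in the table: raw variance grows with batch size and is hard to compare across runs.

```python
import statistics


def skew_report(expert_counts, gpu_util_pct):
    """Summarize routing skew and rank-level imbalance for one window.

    expert_counts: tokens routed to each expert (from an instrumented router).
    gpu_util_pct:  sampled utilization per EP rank, e.g. from DCGM.
    """
    mean = statistics.fmean(expert_counts)
    return {
        "expert_count_variance": statistics.pvariance(expert_counts),
        # Coefficient of variation (std / mean) stays comparable across batch sizes.
        "expert_count_cv": statistics.pstdev(expert_counts) / mean,
        "gpu_util_spread_pct": max(gpu_util_pct) - min(gpu_util_pct),
    }


report = skew_report([250, 50, 50, 50], [95, 40, 42, 41])
print(report["expert_count_variance"])      # 7500
print(round(report["expert_count_cv"], 3))  # 0.866
print(report["gpu_util_spread_pct"])        # 55
```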

Which metrics matter most for MoE serving

Throughput alone is an insufficient signal for expert parallelism evaluation. A placement that improves mean throughput can simultaneously worsen P99 tail latency if it redistributes traffic in a way that creates new stragglers under burst conditions. Collect per-expert token counts, aggregate tokens/sec, P99 latency, and GPU utilization variance together — any single metric is gameable by the placement optimizer.
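The gap between mean and tail is easy to reproduce with invented numbers. The sketch below uses a nearest-rank percentile and hypothetical latency samples. In the samples, a placement change lowers mean latency while two requests hit a new straggler GPU.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile, pct in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def mean(samples):
    return sum(samples) / len(samples)


# Hypothetical per-request latencies (ms) before and after a placement change.
before = [50] * 100
after = [40] * 98 + [200] * 2  # faster on average, but two stragglers

print(mean(before), percentile(before, 99))  # 50.0 50
print(mean(after), percentile(after, 99))    # 43.2 200
```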

| Metric | Target signal | Why it matters for MoE |
|---|---|---|
| Per-expert token count variance | Low | High variance means EPLB has not converged to a good placement |
| Aggregate tokens/sec | Maximize for target batch size | Captures overall serving efficiency |
| P99 tail latency (ms) | Minimize; watch for regressions after EPLB update | Straggler GPUs show up in the tail, not the mean |
| GPU utilization variance across ranks | Low | Direct measure of whether placement is actually balanced |

How to interpret the results without overfitting to one model

DeepSeek-V3's 671B/37B architecture and Mixtral 8x7B represent materially different operating points. DeepSeek-V3 has a much larger expert pool, finer-grained routing, and substantially higher communication demands at scale. NVIDIA's expert-parallel documentation cites Mixtral as evidence of how widespread MoE has become: "Mixture of Experts (MoE) architectures have become widespread, with models such as Mistral Mixtral 8×7B." The EP concepts therefore apply to both models, but the performance profile does not transfer from one to the other without re-measurement.

Pro Tip: Separate model-specific gains from architecture-level trends. A placement strategy that removes imbalance on DeepSeek-V3 may show near-zero benefit on Mixtral 8x7B because the two models have different routing behavior and expert counts. Benchmark the specific model you intend to serve at the EP degree you intend to run; do not carry numbers from a different model's report into your infrastructure decision.

Decision matrix for MoE infrastructure teams

| Workload shape | Hardware | Ops tolerance | Best serving option |
|---|---|---|---|
| 100B+ sparse MoE, persistent routing imbalance confirmed | GB200 NVL72 or NVLink multi-node | High: team can run the EPLB pipeline | TensorRT-LLM EP + Wide-EP with EPLB |
| 100B+ sparse MoE, routing imbalance unknown or mild | H100/A100 cluster, NVLink intra-node | Medium | vLLM with EP sharding; measure before adding EPLB machinery |
| Mixtral 8x7B or similar small expert count | Any modern NVIDIA GPU | Low | vLLM TP or EP; TensorRT-LLM TP viable; EPLB overhead not justified |
| Mixed-model serving infrastructure | Multi-vendor or cloud-agnostic | Low | vLLM: portability and ecosystem breadth dominate |
| Research environment, frequent model swaps | Any | Low | vLLM: configuration simplicity, faster iteration |
| Dedicated inference cluster, NVIDIA-owned, latency SLA tight | GB200 NVL72 | High | TensorRT-LLM EP: NVLink kernels and EPLB justify the complexity |
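Teams that want to encode the matrix in capacity-planning tooling could start from a first-pass screen like the hypothetical function below. It collapses the rows into four questions and uses the table's 100B-parameter line. Because rows are merged, edge cases such as the research-environment row map only approximately. Treat it as a triage aid, not a substitute for measuring imbalance on your own cluster.

```python
def suggest_stack(total_params_b, imbalance_confirmed, nvlink_multinode,
                  nvidia_only, ops_tolerance):
    """First-pass screen that loosely mirrors the decision matrix.

    total_params_b:  total model parameters, in billions.
    ops_tolerance:   "low", "medium", or "high".
    """
    if not nvidia_only:
        return "vLLM"  # portability and ecosystem breadth dominate
    if total_params_b < 100:
        return "vLLM or TensorRT-LLM TP/EP, without EPLB"
    if nvlink_multinode and imbalance_confirmed and ops_tolerance == "high":
        return "TensorRT-LLM EP + Wide-EP with EPLB"
    return "vLLM with EP sharding; measure imbalance before adding EPLB"


print(suggest_stack(671, True, True, True, "high"))     # DeepSeek-V3 on NVL72
print(suggest_stack(671, False, False, True, "medium"))
print(suggest_stack(47, False, False, True, "low"))     # roughly Mixtral 8x7B size
```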

Choose TensorRT-LLM EP when the cluster is the product

When the serving cluster is NVIDIA-dedicated and NVLink bandwidth is a planned infrastructure asset rather than incidental, TensorRT-LLM expert parallelism is the strongest fit. At GB200 NVL72 scale, the NVLink-aware communication kernels directly exploit MNNVL topology — and the workloads that justify that hardware (DeepSeek-V3/R1-class models at production throughput) are exactly the workloads where EPLB's imbalance reduction pays off.

Bottom Line: TensorRT-LLM EP is the right choice when interconnect bandwidth and expert placement are treated as first-class infrastructure concerns — not configuration afterthoughts. If the team is already managing GB200 NVL72 clusters for large sparse MoE inference, the EPLB operational overhead is an incremental cost on top of infrastructure complexity that already exists.

Avoid EP-first designs when portability matters more than peak efficiency

The vendor coupling in TensorRT-LLM's EP design is not incidental — it is architectural. The communication kernels target MNNVL specifically. Any migration away from NVIDIA NVLink-class fabric invalidates the performance model. Teams that need to serve MoE models across multiple cloud providers, mixed GPU generations, or evolving hardware roadmaps will find that the EPLB tuning work they invest in today does not transfer.

Watch Out: EP-first designs on TensorRT-LLM introduce two categories of lock-in: hardware lock-in (NVLink/MNNVL) and operational lock-in (EPLB workflow, placement configuration, TensorRT-LLM runtime). If either becomes a constraint — hardware budget shifts, team knowledge concentration, regulatory data-residency requirements — the cost to migrate away from TensorRT-LLM EP is high because placements, statistics, and runtime machinery are non-portable. Calibrate the adoption decision against the expected infrastructure lifetime.

Frequently asked questions about TensorRT-LLM expert parallelism

| Question | Answer |
|---|---|
| What is expert parallelism in TensorRT-LLM? | Expert parallelism distributes MoE expert weight matrices across GPU ranks so each GPU holds a subset of experts. The router selects top-k experts per token; if those experts are on remote GPUs, the runtime issues AllToAll communication to route tokens. Wide-EP adds online load balancing and expert replication on top. |
| How does TensorRT-LLM balance MoE traffic? | Through the EPLB workflow: the runtime collects per-expert token routing statistics, generates a new placement configuration, and redistributes MoE weights across GPUs. Wide-EP can also replicate hot experts across multiple GPUs to split routing load. |
| What is the difference between tensor parallelism and expert parallelism? | Tensor parallelism splits individual weight matrices across GPU ranks (each rank participates in every computation via AllReduce). Expert parallelism assigns whole experts to individual ranks (each rank computes independently for its assigned experts, with AllToAll to route tokens). EP reduces per-GPU memory for sparse MoE; TP is simpler operationally. |
| Why is NVLink important for MoE inference? | Cross-node expert parallelism without high-bandwidth fabric produces compute-to-communication ratios of approximately 1:1, meaning that, without overlap, roughly half of execution time is spent moving data. NVLink on GB200 NVL72 provides fabric bandwidth that shifts this ratio back toward compute dominance, making large-scale EP viable. |
| Is TensorRT-LLM better than vLLM for MoE models? | It depends on hardware and operational constraints. TensorRT-LLM EP is the stronger fit on NVIDIA GB200 NVLink clusters serving large sparse MoE models with confirmed routing imbalance. vLLM is the safer choice for mixed hardware, portability requirements, or teams that cannot sustain the EPLB operational pipeline. Neither stack dominates universally. |

Sources and references

| Source type | Contribution | Why it matters |
|---|---|---|
| Official engineering blog | Describes end-to-end EP support, GB200 MNNVL communication kernels, and the EPLB workflow | Primary evidence for TensorRT-LLM's hardware-specific design choices |
| Official engineering blog, follow-up | Extends the EP discussion with EPLB detail, workload imbalance evidence across multiple datasets, and usability improvements | Confirms that imbalance is a recurring systems issue, not a one-off edge case |
| Official documentation | Defines expert parallelism, communication patterns, and placement concepts within TensorRT-LLM | Establishes the terms used throughout the comparison |
| Official documentation | Defines Wide-EP as load balancing plus expert replication; distinguishes it from standard EP | Supports the distinction between standard EP and Wide-EP |
| Technical report | Source for 671B/37B parameter counts and the 1:1 compute-to-communication ratio for cross-node EP | Grounds the workload and cost-model discussion in model-level evidence |
| Technical report PDF | PDF version of the technical report; source for the communication ratio quote | Verifies the direct quotation used in the article |
| Large-scale serving blog | vLLM's comparison of TP vs. EP memory usage for DeepSeek-V3; throughput/TTFT/ITL/P99 benchmark framing | Supplies the cross-stack comparison baseline on H100/A100 |
| GitHub repository | Source for vLLM's positioning as a fast, easy-to-use inference and serving library | Supports the portability and deployment-breadth discussion |

- NVIDIA TensorRT-LLM Scaling Expert Parallelism Blog (Part 1) — Primary engineering blog describing end-to-end EP support, GB200 MNNVL communication kernels, and the EPLB workflow

- NVIDIA TensorRT-LLM Scaling Expert Parallelism Blog (Part 2) — Extends Part 1 with EPLB detail, workload imbalance evidence across multiple datasets, and usability improvements

- NVIDIA TensorRT-LLM Expert Parallelism Documentation — Official docs defining expert parallelism, communication patterns, and placement concepts within TensorRT-LLM

- NVIDIA TensorRT-LLM Parallel Strategy Documentation — Defines Wide-EP as load-balancing plus expert replication; distinguishes from standard EP

- DeepSeek-V3 Technical Report (arXiv 2412.19437) — Source for 671B/37B parameter counts and the 1:1 compute-to-communication ratio for cross-node EP

- DeepSeek-V3 Technical Report PDF — PDF version; source for the communication ratio quote

- vLLM Large-Scale Serving Blog — vLLM's comparison of TP vs. EP memory usage for DeepSeek-V3; throughput/TTFT/ITL/P99 benchmark framing

- vLLM GitHub Repository — Source for vLLM's positioning as a fast, easy-to-use inference and serving library
