What problem agentic RAG with knowledge graphs solves

Basic RAG systems retrieve the top-k semantically similar chunks and hand them to the generator. That strategy works well for single-hop questions — "What is the refund policy?" — but collapses on queries that require chaining evidence across multiple sources or entities. Tang and Yang diagnosed this precisely in MultiHop-RAG (arXiv:2401.15391): "existing RAG systems are inadequate in answering multi-hop queries, which require retrieving and reasoning over..." multiple pieces of evidence. The failure is structural, not tunable: nearest-neighbor retrieval has no mechanism to follow a relationship from entity A to entity B to entity C when none of the individual chunks score high enough to surface all three in a single pass.

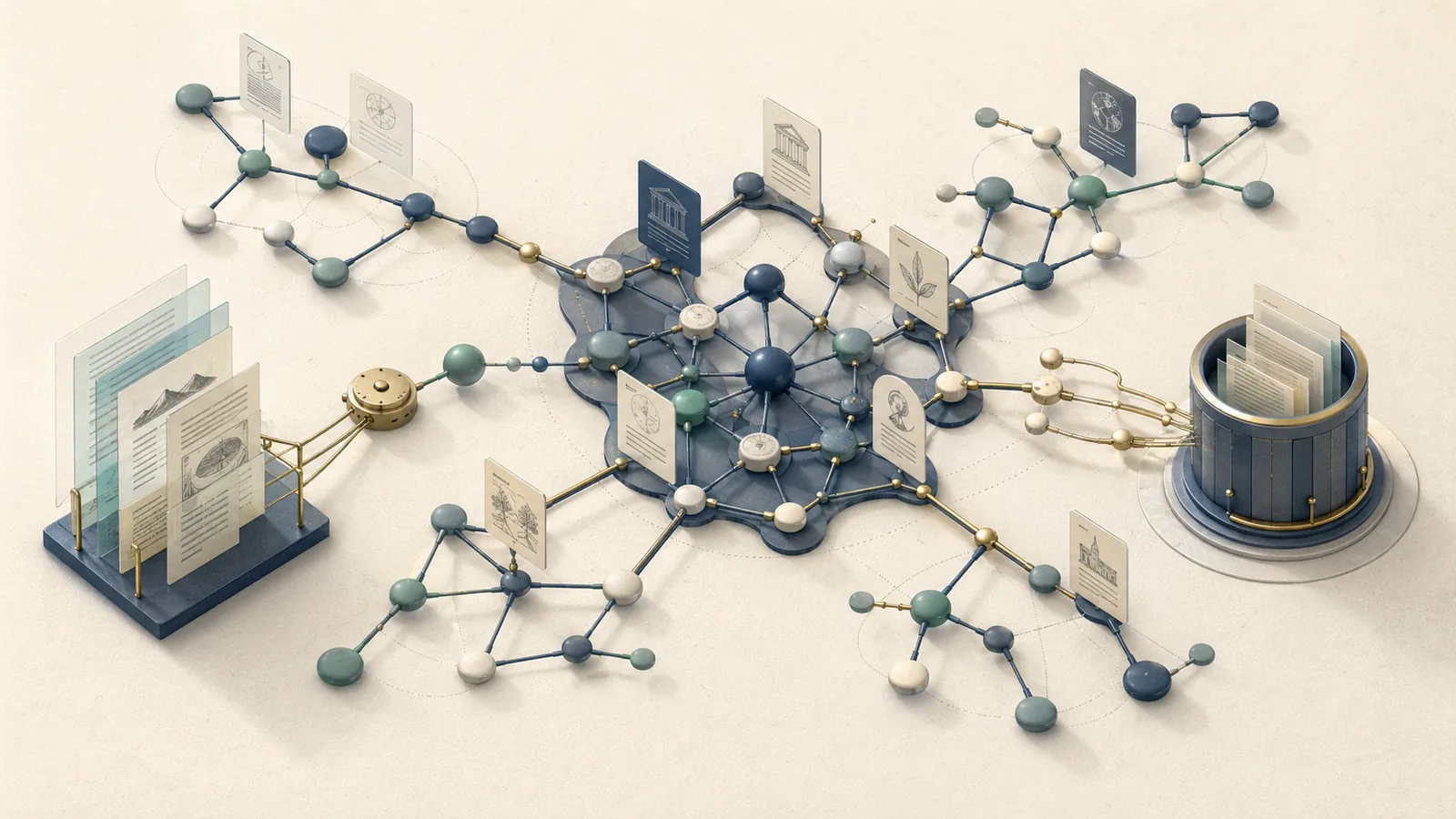

A knowledge graph changes the retrieval topology. Instead of chunks floating in embedding space, the system holds entities, typed relationships, and documents as first-class nodes. Retrieval becomes subgraph expansion: given a query entity, the system traverses edges to collect related evidence, then aggregates it before generation. Adding an agent loop — orchestrated with a framework such as LangGraph — enables the system to decompose multi-hop questions, issue targeted traversal queries, evaluate intermediate evidence, and retry when a hop returns insufficient results.

The cost is real: entity extraction, schema design, traversal policy, evidence scoring, and validation all add architectural layers that a flat vector retrieval system avoids. Noisy extraction does not merely reduce quality; it actively amplifies wrong paths because the agent will follow plausible-looking edges that lead to incorrect subgraphs. GraphRAG-Bench (arXiv:2506.02404) reinforces this by providing a holistic evaluation framework spanning graph construction, knowledge retrieval, answer generation, and rationale generation, which means GraphRAG quality must be measured across the entire pipeline rather than only final-answer correctness.

Bottom Line: Knowledge-graph agentic RAG is worth its complexity when queries structurally require chained evidence — financial, biomedical, legal, or enterprise knowledge domains where relationships between entities matter as much as the entities themselves. For predominantly single-hop question-answering over a well-chunked corpus, the overhead of graph construction and agentic orchestration is not justified.

How the retrieval loop changes from flat chunks to graph traversal

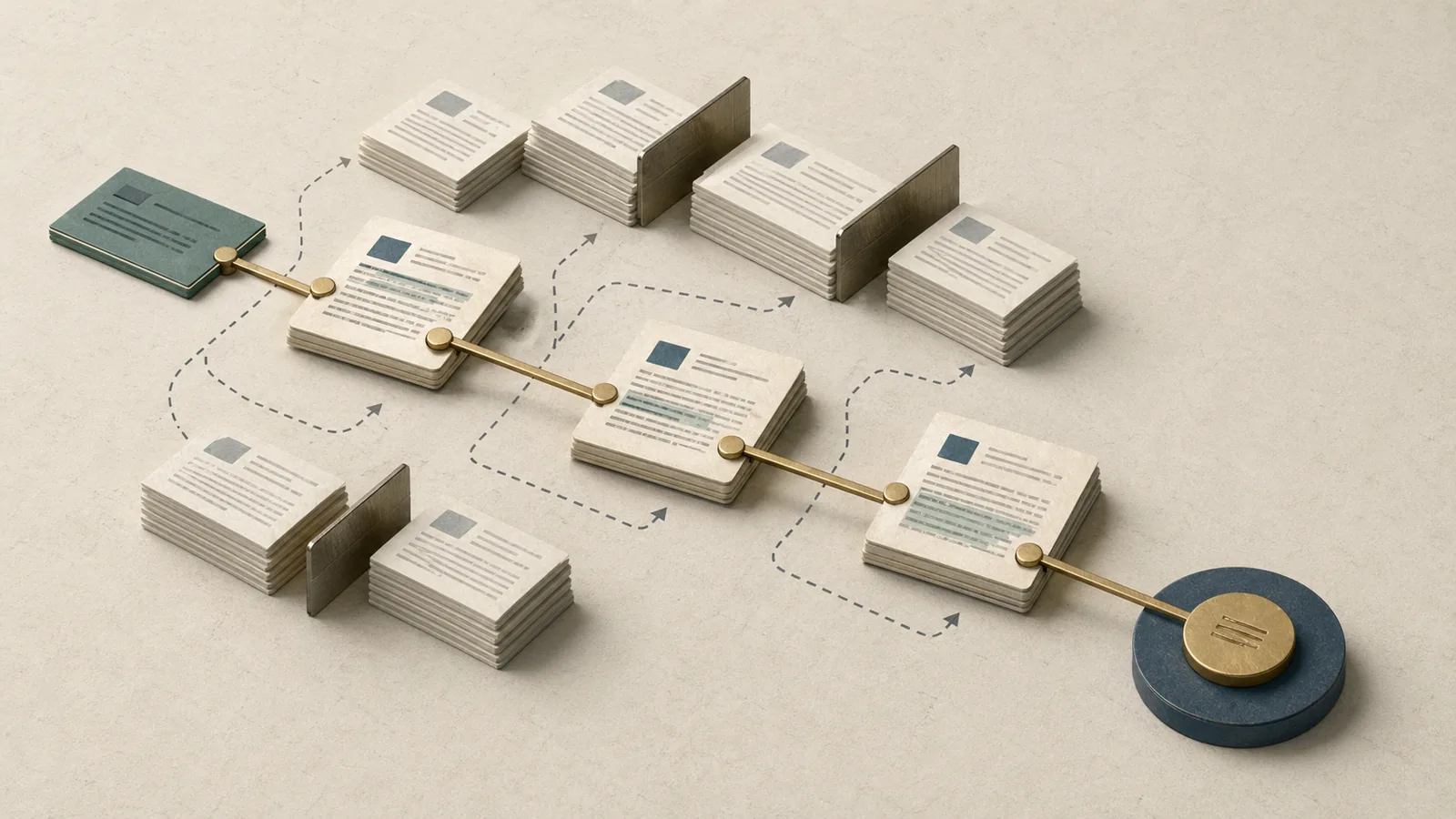

Flat RAG executes a fixed pipeline: embed query → ANN search → top-k chunks → generate. The graph-agentic loop replaces that pipeline with a stateful execution graph in which each node is a distinct operation — planning, entity linking, hop expansion, evidence scoring, synthesis — and the agent can revisit earlier nodes when intermediate results are insufficient.

LangGraph describes graphs as stateful execution flows with graph state and checkpointing, which is the control-plane mechanism used to orchestrate planner, retriever, and synthesis nodes. GraphRAG-Bench evaluates graph construction, retrieval, answer generation, and rationale generation as separate stages, which supports modeling them as distinct nodes rather than folding them into one monolithic pipeline step.

The diagram below maps the full loop:

    flowchart LR
        Q([User Query]) --> P[Query Planner\nDecompose into sub-questions]
        P --> EL[Entity Linker\nMap terms to canonical graph nodes]
        EL --> HE[Hop Expander\nTraverse edges per policy]
        HE --> ES[Evidence Scorer\nRank, deduplicate, detect contradictions]
        ES --> AG{Evidence\nsufficient?}
        AG -- No --> P
        AG -- Yes --> SY[Synthesis Node\nGenerate answer with citations]
        SY --> R([Response])
        subgraph GraphLayer["Graph Layer"]
            EL
            HE
        end
        subgraph Orchestration["Orchestration: LangGraph"]
            P
            ES
            AG
            SY
        end

The Query Planner decomposes the incoming question into sub-questions with explicit data dependencies. The Entity Linker maps natural-language terms to canonical nodes in Neo4j. The Hop Expander traverses typed relationships outward from those seed nodes following the configured traversal policy. The Evidence Scorer ranks and deduplicates the collected subgraph. The sufficiency check — implemented as a LangGraph conditional edge — either routes back to the planner for a revised strategy or forwards to synthesis. LangGraph's interrupt mechanism allows the loop to pause at this edge and accept external signals, which is useful for human-in-the-loop validation in high-stakes settings. As the LangGraph interrupts docs state, “Interrupts allow you to pause graph execution at specific points and wait for external input before continuing.”

The LangGraph subgraphs documentation adds a second control point: “Stateful subgraphs inherit the parent graph’s checkpointer, which enables interrupts, durable execution, and state inspection.” That is what makes replay and inspection practical without rebuilding the workflow from scratch.
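To make the control flow concrete, here is a minimal sketch of the loop in plain Python rather than real LangGraph nodes. Everything in it is an assumption made for illustration: the `State` fields, the toy `GRAPH`, the stub planner that simply widens the hop budget on each retry, and the sufficiency check that looks for one relationship type. Evidence scoring and synthesis are left out so the retry edge stays visible.

```python
from dataclasses import dataclass, field

# Toy graph (hypothetical data): node -> list of (relationship_type, neighbor).
GRAPH = {
    "compound_x": [("HAS_MECHANISM", "kinase_inhibition")],
    "kinase_inhibition": [("MECHANISM_OF", "drug_a"), ("MECHANISM_OF", "drug_b")],
}

@dataclass
class State:
    query: str
    hop_budget: int = 1
    seeds: list = field(default_factory=list)
    evidence: list = field(default_factory=list)
    iterations: int = 0

def plan(state):
    # Stand-in planner: every retry allows one more hop.
    state.iterations += 1
    state.hop_budget = state.iterations
    return state

def link_entities(state):
    # Stand-in linker: keep graph node names that literally appear in the query.
    q = state.query.lower().replace(" ", "_")
    state.seeds = [node for node in GRAPH if node in q]
    return state

def expand_hops(state):
    frontier, found = list(state.seeds), []
    for hop in range(1, state.hop_budget + 1):
        next_frontier = []
        for node in frontier:
            for rel, nbr in GRAPH.get(node, []):
                found.append((node, rel, nbr, hop))
                next_frontier.append(nbr)
        frontier = next_frontier
    state.evidence = found
    return state

def sufficient(state, needed_rel="MECHANISM_OF"):
    # Stand-in for the conditional edge: is the needed relation present?
    return any(rel == needed_rel for _, rel, _, _ in state.evidence)

def run(query, max_iterations=3):
    state = State(query=query)
    while state.iterations < max_iterations:
        state = expand_hops(link_entities(plan(state)))
        if sufficient(state):
            break  # route forward to synthesis
    return state

result = run("Which drugs share a mechanism with compound X?")
```

With this toy data the first pass stops at one hop and finds only the mechanism, so the loop routes back to the planner; the second pass reaches both drugs. In a real system each function would be a graph node and `State` would be the checkpointed graph state.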

From semantic similarity to relation-aware evidence expansion

Semantic similarity retrieval asks: "Which chunks are close to the query in embedding space?" Relation-aware retrieval asks: "Which entities are connected by a typed path that the query implies?" The two questions have different answers for multi-hop queries.

LlamaIndex's knowledge-graph RAG engine makes this concrete: "Graph RAG is a Knowledge-enabled RAG approach to retrieve information from Knowledge Graph on given task. Typically, this is to build context based on entities' SubGraph related to the task." The retriever does not score chunks; it expands a subgraph rooted at task entities and collects all evidence reachable within the traversal budget.

Neo4j positions this capability explicitly for multi-hop question answering: the graph layer holds facts about how entities relate, not just what they are. A semantic search over a document corpus cannot invent an edge between "Drug A" and "Clinical Trial B" if no individual chunk mentions both together. A graph that holds a TESTED_IN edge between those nodes surfaces the connection immediately, regardless of embedding proximity.

The boundary condition matters: graph traversal outperforms reranking when the answer depends on explicit relations or chained dependencies that span documents. Reranking can re-order a candidate set, but it cannot manufacture missing entity links. If the extraction pipeline failed to index the relevant relationship, neither reranking nor traversal will recover it — extraction quality is the gating constraint.

Pro Tip: Add graph traversal when your reranker consistently promotes chunks that are semantically close but causally disconnected from the answer. That symptom indicates the evidence chain requires a typed relationship the vector index cannot represent. Graph traversal should replace or augment reranking at that point, not sit on top of it.

Query planning and decomposition inside the agent loop

Before retrieval starts, the agent must determine what to retrieve. For a query like "Which drugs approved by the EMA after 2022 share a mechanism of action with compound X?", a single retrieval call cannot satisfy all three constraints simultaneously. The planner must decompose this into: (1) identify compound X's mechanism, (2) find drugs sharing that mechanism, (3) filter by EMA approval date.

LangGraph supports explicit planning nodes before tool selection. A LangChain forum discussion on dependency resolution states directly: "If you want explicit decomposition, add a planning node or a dedicated 'planner' agent before tool selection." A supervisor-style router alone may not surface hidden dependencies between sub-questions; the planner's role is to make those dependencies explicit so the hop expander can execute them in the correct order.

LangGraph's time-travel mechanism — "Replay: Retry from a prior checkpoint" — means the planner can revise its decomposition after observing that a sub-question returned empty results, without restarting the entire workflow from the query.

Pro Tip: Model sub-question dependencies as a directed acyclic graph, not a flat list. If sub-question B depends on an entity discovered in sub-question A, the planner must sequence them correctly. LangGraph's conditional edges and state passing are the natural implementation point for this dependency graph — store intermediate entity discoveries in graph state so downstream nodes can read them.
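As a small illustration of the dependency-graph idea, the sketch below orders the three sub-questions from the EMA example using the standard library's `graphlib`. The sub-question names are hypothetical labels, and a real planner would attach retrieval calls and discovered entities to each step.

```python
from graphlib import CycleError, TopologicalSorter

# Each sub-question maps to the set of sub-questions it depends on.
# The keys are hypothetical labels for the EMA example in the text.
dependencies = {
    "q1_mechanism_of_compound_x": set(),
    "q2_drugs_sharing_mechanism": {"q1_mechanism_of_compound_x"},
    "q3_filter_ema_approval_after_2022": {"q2_drugs_sharing_mechanism"},
}

def execution_order(deps):
    """Return sub-questions in an order that respects their dependencies.

    Raises ValueError if the plan contains a cycle and is therefore not a DAG.
    """
    try:
        return list(TopologicalSorter(deps).static_order())
    except CycleError as exc:
        raise ValueError(f"plan is not a DAG: {exc.args[1]}") from exc

order = execution_order(dependencies)
```

Rejecting cyclic plans up front is a cheap guard: a planner that emits mutually dependent sub-questions would otherwise stall the hop expander.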

Hop expansion policies: breadth-first, depth-first, and bounded search

The traversal policy controls which edges the hop expander follows and how far it goes. Three policies cover most use cases:

Breadth-first (BFS) expands all edges from seed nodes at hop 1 before moving to hop 2. BFS reaches every connected entity by its shortest path in hop count, which suits queries where the relevant evidence might be one or two hops away and you do not know which branch holds it.

Depth-first (DFS) follows a single edge chain as far as the hop limit allows before backtracking. DFS is efficient when the query implies a specific traversal direction (for example, "follow the supply chain from manufacturer to end customer") but brittle when the first edge is wrong: a single bad hop early in the chain spends the hop budget on an irrelevant branch, whereas BFS would have examined the sibling edges first.

Bounded search combines a hard hop cap (typically 2–4) with edge-type filters and confidence thresholds on edges. This is the operationally practical choice for production systems: it prevents both BFS explosion in dense graphs and DFS derailment in noisy ones.

MultiHop-RAG establishes that multi-hop queries require gathering evidence across several pieces of supporting material, but the traversal budget must remain finite. Because GraphRAG-Bench scores retrieval and answer generation as separate stages, the effect of a traversal policy on downstream answer quality can be measured, which makes tuning the hop cap and edge-type filters an ongoing evaluation task rather than a one-time configuration decision.

Watch Out: Over-connected graphs trigger path explosion even with BFS capped at hop 2. A seed node with 500 outgoing edges yields 500 candidates at hop 1; if each of those neighbors also has roughly 500 outgoing edges, hop 2 produces up to 500 × 500 = 250,000 candidate paths. Mitigate with: (1) edge-type allowlists that restrict which relationship types the expander will follow for a given query class, (2) confidence thresholds that prune low-quality edges before traversal begins, and (3) a hard candidate cap that truncates the frontier after N nodes regardless of how many remain. Without all three controls, traversal time and LLM context cost grow super-linearly with graph connectivity.
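The three controls can be sketched as a bounded breadth-first expansion over an in-memory adjacency list. This is an illustrative stand-in, not a Neo4j query: the graph layout, the default thresholds, and the function name `bounded_expand` are all assumptions.

```python
from collections import deque

def bounded_expand(graph, seeds, max_hops=2, allowed_types=None,
                   min_confidence=0.7, max_candidates=50):
    """Breadth-first expansion with a hop cap, edge filters, and a candidate cap.

    graph: node -> list of (rel_type, confidence, neighbor).
    Returns (neighbor, hop) pairs in BFS discovery order.
    """
    visited = set(seeds)
    queue = deque((seed, 0) for seed in seeds)
    results = []
    while queue:
        node, depth = queue.popleft()
        if depth >= max_hops:
            continue  # hop cap: do not expand past the budget
        for rel_type, confidence, neighbor in graph.get(node, []):
            if allowed_types is not None and rel_type not in allowed_types:
                continue  # edge-type allowlist
            if confidence < min_confidence or neighbor in visited:
                continue  # confidence threshold, plus cycle protection
            visited.add(neighbor)
            results.append((neighbor, depth + 1))
            if len(results) >= max_candidates:
                return results  # hard candidate cap
            queue.append((neighbor, depth + 1))
    return results

toy = {
    "a": [("TESTED_IN", 0.9, "b"), ("MENTIONS", 0.95, "c"), ("TESTED_IN", 0.4, "d")],
    "b": [("TESTED_IN", 0.8, "e")],
    "e": [("TESTED_IN", 0.9, "f")],
}
hits = bounded_expand(toy, ["a"], allowed_types={"TESTED_IN"})
```

On the toy graph, `c` is dropped by the allowlist, `d` by the confidence threshold, and `f` by the hop cap, so only `b` and `e` survive.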

Entity extraction and resolution as the gating layer

Graph traversal quality is determined before the agent issues its first query. If the extraction pipeline produced noisy entities, ambiguous labels, or missing relationships, the traversal will follow wrong paths or fail to reach the correct subgraph. This makes extraction the gating constraint for the entire architecture — not an upstream preprocessing detail.

GraphRAG-Bench makes this structural: "GraphRAG-Bench provides comprehensive assessment across the entire GraphRAG pipeline, including graph construction, knowledge retrieval, and answer generation." Graph construction is an evaluation dimension because bad construction cascades into bad retrieval and bad generation. The agent loop cannot repair an extraction failure post-hoc.

A minimal graph schema that represents the system's core entities looks like this:

    # Minimal graph schema, stored in the graph layer (e.g., Neo4j)
    nodes:
      Document:
        fields: [id, source_url, ingestion_timestamp, content_hash]
      Entity:
        fields: [canonical_id, label, type, aliases, confidence]
      Chunk:
        fields: [id, document_id, text, embedding_vector]
    relationships:
      MENTIONS:        # (Document)-[:MENTIONS]->(Entity)
      EXTRACTED_FROM:  # (Entity)-[:EXTRACTED_FROM]->(Chunk)
      RELATES_TO:      # (Entity)-[:RELATES_TO {type, confidence}]->(Entity)

This schema keeps three concerns separate: the source document, the discrete chunk used for semantic retrieval, and the resolved entity used for graph traversal. The RELATES_TO edge carries a typed label and a confidence score so the traversal policy can filter on both.

What the extraction pipeline must identify

The extraction pipeline must produce three outputs for each document: named entities of the types relevant to the domain, typed relationships between those entities, and provenance links that connect each extracted fact back to its source chunk.

LlamaIndex's knowledge-graph RAG documentation confirms this framing: Graph RAG builds context from entities' subgraphs, which means the pipeline must identify entities plus linking relations sufficient to reconstruct a relevant subgraph for any likely query. Neo4j centers its knowledge graph content on document, entity, and relationship modeling as the three pillars.

The practical mistake is extracting too much. Indexing every noun phrase produces thousands of low-confidence nodes connected by weakly typed edges. Traversal across that graph is computationally expensive and semantically noisy. Domain-scoped extraction — extracting only the entity types and relationship types that queries will actually traverse — produces a graph that is smaller, faster, and more accurate.

Production Note: Start with three to five entity types and two to three relationship types. Expand the schema only when evaluation shows that specific query classes consistently fail because the required entity type or relationship is absent. Schema sprawl is harder to reverse than schema gaps: adding a missing entity type is a re-extraction job; removing a noisy type requires cleaning existing graph data.

How entity resolution prevents duplicate or conflicting nodes

Entity resolution collapses surface-form variations of the same real-world entity into a single canonical node. Without it, "BERT," "bert-base-uncased," and "Google BERT" exist as three separate nodes in Neo4j, and traversal from one will not surface evidence attached to the other two. This fragments retrieval: the agent may find partial evidence on each node but never aggregate the complete picture.

Neo4j GraphAcademy describes the mechanism: "In GraphRAG, a knowledge graph provides contextual facts and relationships that ground a Large Language Model (LLM), helping to prevent hallucinations..." That grounding depends on the graph holding a single authoritative node for each real-world entity, not a cluster of near-duplicates.

Resolution operates at ingestion time. The pipeline computes a candidate canonical ID for each extracted entity — using string normalization, alias matching, and optionally a lightweight embedding similarity check against existing nodes. If the confidence that a new extraction matches an existing node exceeds a threshold (commonly 0.85–0.90), the pipeline merges the surface form into the existing node as an alias. Below that threshold, the extraction is flagged for review rather than automatically merged, because false merges (coalescing distinct entities) cause worse retrieval errors than false splits.

Pro Tip: Store aliases as a list property on the canonical node rather than as separate alias nodes with edges. This keeps alias lookup at ingestion to a property check on the candidate node instead of an extra traversal, and it prevents the graph from accumulating thousands of alias edges that slow query planning. Keep the merge threshold at 0.85 or higher; below that, prefer a manual review queue over automated merging.
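A minimal sketch of the merge-or-review decision follows, assuming an in-memory registry of canonical IDs and using `difflib.SequenceMatcher` as a stand-in for the embedding similarity check. The 0.5 lower bound that separates "send to review" from "create a new node" is a hypothetical addition, not something the text prescribes.

```python
from difflib import SequenceMatcher

def normalize(name):
    # Lowercase, treat hyphens and underscores as spaces, collapse whitespace.
    return " ".join(name.lower().replace("-", " ").replace("_", " ").split())

def resolve(surface, registry, merge_threshold=0.85, review_floor=0.5):
    """Decide what to do with an extracted surface form.

    registry: canonical_id -> set of normalized aliases (mutated on merge).
    Returns (decision, canonical_id or None), where decision is
    "merge", "review", or "new".
    """
    key = normalize(surface)
    best_id, best_score = None, 0.0
    for canonical_id, aliases in registry.items():
        if key in aliases:
            return "merge", canonical_id  # exact alias hit
        score = max(SequenceMatcher(None, key, alias).ratio() for alias in aliases)
        if score > best_score:
            best_id, best_score = canonical_id, score
    if best_score >= merge_threshold:
        registry[best_id].add(key)  # record the new surface form as an alias
        return "merge", best_id
    if best_score >= review_floor:
        return "review", best_id  # plausible match: queue for a human
    return "new", None

registry = {"bert": {"bert", "google bert"}}
decisions = [resolve(s, registry) for s in ("Google-BERT", "GoogleBERT", "bert large")]
```

Character-level similarity will not connect forms such as "bert-base-uncased" to "BERT" on its own, which is one reason curated alias lists and a review queue matter.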

Evidence aggregation across hops before generation

After the hop expander collects a subgraph, the system holds raw evidence — entity properties, relationship labels, chunk text, provenance links — distributed across multiple hops. That raw collection is not directly usable as a generation context. It must be scored, deduplicated, and compressed before it fits within the generator's context window and before contradictions mislead the synthesis step.

LangGraph's stateful graph model handles this: each node writes intermediate evidence to shared graph state, and the evidence scorer node reads the accumulated state, applies ranking signals, and produces a ranked evidence bundle. Stateful subgraphs inherit the parent graph's checkpointer, which means the aggregated evidence state is durable — the system can inspect it, debug it, or replay from it without re-running traversal. GraphRAG-Bench evaluates rationale generation in addition to final answer accuracy, which requires the model to synthesize evidence across multiple retrieved items into a coherent chain of reasoning — a task that demands clean, ranked evidence as input.

| Aggregation strategy | What it produces | Context quality | Context size | Suited for |
|---|---|---|---|---|
| Per-hop snippets | Raw chunk text from each hop, concatenated | Low: unranked, may duplicate | Large | Prototyping only |
| Stitched context | Ordered snippets with hop labels, deduped | Medium: ordered but uncompressed | Medium | Simple 2-hop queries |
| Ranked evidence bundle | Scored, deduplicated, contradiction-flagged items | High: compact and auditable | Small | Production multi-hop systems |

Scoring, deduplication, and contradiction handling

The evidence scorer applies three signals to rank collected items: relevance to the query (derived from embedding similarity of the chunk to the decomposed sub-question), path confidence (product of edge confidence scores along the traversal path), and source recency (ingestion timestamp relative to the query time).
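The text does not say how the three signals are combined, so the sketch below assumes a simple weighted sum with an exponential recency decay. The weights, the one-year half-life, and the function names are illustrative assumptions; only the product rule for path confidence comes from the description above.

```python
import math
from datetime import datetime, timedelta, timezone

def path_confidence(edge_confidences):
    # Product of edge confidences along the traversal path.
    return math.prod(edge_confidences)

def recency_weight(ingested_at, now, half_life_days=365.0):
    # Assumed decay: the weight halves every `half_life_days`.
    age_days = max((now - ingested_at).total_seconds() / 86400.0, 0.0)
    return 0.5 ** (age_days / half_life_days)

def score(relevance, edge_confidences, ingested_at, now, weights=(0.5, 0.3, 0.2)):
    # Assumed combination: weighted sum of the three signals, each in [0, 1].
    w_rel, w_path, w_rec = weights
    return (w_rel * relevance
            + w_path * path_confidence(edge_confidences)
            + w_rec * recency_weight(ingested_at, now))

now = datetime(2025, 1, 1, tzinfo=timezone.utc)
one_year_old = now - timedelta(days=365)
example = score(0.8, [0.9, 0.8], one_year_old, now)
```

Because path confidence is a product, every additional hop can only lower it, which naturally penalizes long chains built from uncertain edges.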

Deduplication must operate on semantic equivalence, not string matching. The same fact — "Drug A received EMA approval in 2023" — may appear in three documents with different wording. String deduplication preserves all three; semantic deduplication using embedding clustering collapses them to one canonical statement with the highest-confidence provenance.

MultiHop-RAG establishes that multi-hop systems must reason over multiple pieces of evidence, which makes the deduplication step evidence-quality-critical: over-counting the same fact inflates apparent confidence in a claim. GraphRAG-Bench explicitly evaluates answer generation and rationale generation, so the scorer has to prioritize and filter paths before synthesis rather than merely collect them.

Watch Out: Contradictory sources and stale edges are the two most dangerous evidence defects. A graph edge created from a document published in 2022 may contradict a document published in 2025. If the scorer does not apply a recency penalty, the agent can synthesize a fluent answer that rests on the stale source, or blends both, and is factually wrong. Explicitly tag each evidence item with its source timestamp, flag pairs with conflicting claims, and pass those flags to the generation prompt so the generator can acknowledge the contradiction rather than resolve it arbitrarily.
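One way to sketch deduplication and contradiction flagging is to assume the pipeline has already normalized each evidence item into a (subject, predicate, value) claim, so that equivalence becomes a key comparison instead of embedding clustering. That normalization step, the `Claim` fields, and the rule that a predicate holds a single value are all assumptions of this example.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Claim:
    subject: str
    predicate: str
    value: str
    source_id: str
    source_year: int
    confidence: float

def dedupe(claims):
    # Keep one claim per (subject, predicate, value): the highest-confidence one.
    best = {}
    for claim in claims:
        key = (claim.subject, claim.predicate, claim.value)
        if key not in best or claim.confidence > best[key].confidence:
            best[key] = claim
    return list(best.values())

def flag_contradictions(claims):
    # Assumes each predicate is single-valued; multi-valued predicates
    # (for example, TESTED_IN) would need to be excluded from this check.
    by_slot = defaultdict(list)
    for claim in claims:
        by_slot[(claim.subject, claim.predicate)].append(claim)
    flags = []
    for slot, group in by_slot.items():
        values = sorted({c.value for c in group})
        if len(values) > 1:
            newest = max(group, key=lambda c: c.source_year)
            flags.append({"slot": slot, "values": values,
                          "newest_source": newest.source_id})
    return flags

claims = [
    Claim("Drug A", "EMA_STATUS", "approved", "doc-1", 2023, 0.80),
    Claim("Drug A", "EMA_STATUS", "approved", "doc-2", 2023, 0.90),
    Claim("Drug A", "EMA_STATUS", "withdrawn", "doc-3", 2025, 0.85),
]
unique = dedupe(claims)
flags = flag_contradictions(unique)
```

The flags are meant to travel with the evidence bundle into the generation prompt, so the generator can state the conflict and which source is newer.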

Why the generation layer must cite multiple evidence items

If the synthesis step receives only a single-hop evidence snippet, it cannot generate a multi-hop answer: it can only produce what that snippet supports, plus whatever the model fills in from parametric memory. That parametric fill-in is where hallucination enters. Neo4j GraphAcademy states: "a knowledge graph provides contextual facts and relationships that ground a Large Language Model (LLM), helping to prevent hallucinations." That grounding is only effective if the full evidence chain reaches the generation prompt.

The synthesis prompt must instruct the generator to cite the specific evidence item supporting each claim in the answer. A generation prompt that says "Answer based on the following evidence items, and for each claim state which evidence item supports it" produces answers that can be audited for hallucination at the claim level. GraphRAG-Bench evaluates rationale generation as a separate dimension for exactly this reason.

Production Note: Structure the evidence bundle as a numbered list in the generation prompt, with each item formatted as `[1] <evidence text> (source: <document_id>, hop: <N>)`, and instruct the model to reference item numbers inline. This citation pattern makes post-generation hallucination audits deterministic: any claim not referencing an item number is either unsupported or hallucinated, and automated checkers can flag it without re-running the full pipeline.
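A rough sketch of both halves of that pattern, formatting the bundle and auditing the answer, might look like the following. The dictionary keys, the sentence-level granularity of the audit, and the function names are assumptions made for the example.

```python
import re

def format_evidence(items):
    """Render evidence items as a numbered list for the generation prompt.

    items: list of dicts with "text", "document_id", and "hop" keys.
    """
    return "\n".join(
        f"[{i}] {item['text']} (source: {item['document_id']}, hop: {item['hop']})"
        for i, item in enumerate(items, start=1)
    )

def audit_citations(answer, n_items):
    """Return the sentences that cite no valid evidence item number."""
    unsupported = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        if not sentence:
            continue
        cited = {int(num) for num in re.findall(r"\[(\d+)\]", sentence)}
        if not any(1 <= num <= n_items for num in cited):
            unsupported.append(sentence)
    return unsupported

items = [
    {"text": "Compound X inhibits kinase K", "document_id": "doc-1", "hop": 1},
    {"text": "Drug A inhibits kinase K", "document_id": "doc-7", "hop": 2},
]
prompt_block = format_evidence(items)
answer = ("Compound X inhibits kinase K [1]. Drug A shares that mechanism [2]. "
          "Drug A is the market leader.")
unsupported = audit_citations(answer, n_items=len(items))
```

The audit only checks that a citation is present and in range; whether the cited item actually supports the sentence still needs a separate entailment or human check.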

Trade-offs, latency costs, and failure modes

Agentic multi-hop retrieval is not a strictly better version of classic RAG. It is a different point in the trade-off space, and the wrong choice of architecture for a given query distribution inflates cost and latency without improving answer quality.

LangGraph's checkpointing, interrupt, and durable-execution features enable the stateful loop required for multi-hop retrieval, but the same orchestration features add extra coordination overhead compared with single-pass retrieval. GraphRAG-Bench frames graph construction, retrieval, and generation as separate evaluation dimensions, which is also a description of separate latency buckets: graph traversal, evidence scoring, and generation each consume wall time that single-pass RAG does not pay.

Decision matrix for choosing the right retrieval architecture:

- Choose classic RAG when queries are predominantly single-hop, the corpus is well-chunked and semantically cohesive, and latency or cost budgets are tight. Adding graph infrastructure to a single-hop workload adds maintenance cost with no retrieval lift.

- Choose GraphRAG without a full agent loop when queries require entity-centric retrieval and multi-hop reasoning is predictable enough to execute with a fixed traversal policy. This avoids agent orchestration overhead while gaining relation-aware retrieval. Suitable for structured knowledge domains with stable schemas.

- Choose agentic multi-hop retrieval with a knowledge graph when queries are genuinely ambiguous, require chained evidence across multiple entity types and sources, and where the cost of a wrong answer exceeds the latency and infrastructure cost of the agentic loop. Enterprise regulatory, biomedical, financial, and legal knowledge systems are the target regime.

The latency cost of the agentic loop is material. Planning, hop expansion, evidence scoring, and conditional retries each add round-trip time. In high-connectivity graphs, traversal alone can dominate query latency. Engineers shipping this architecture must budget for per-query latency in seconds, not milliseconds, and design UX accordingly.

Sparse graphs, inconsistent schemas, and missing edges

A sparse graph produces false negatives. When the extraction pipeline missed a relationship, the hop expander reaches a dead end at the node that should have connected to the relevant evidence. The agent either falls back (if fallback routing is implemented) or returns an incomplete answer without signaling the gap to the user.

arXiv:2603.14828 characterizes this precisely: "Graph Retrieval-Augmented Generation enhances multi-hop reasoning but relies on imperfect knowledge graphs that frequently suffer from inherent quality issues." Retrieval drift from spurious noise and retrieval hallucinations from incomplete information are both graph-quality failures, not agent failures.

Inconsistent schemas are equally damaging. If document batch A extracted relationships with the label MANUFACTURED_BY and document batch B used PRODUCED_BY for the same semantic relationship, traversal queries that filter on relationship type will miss half the evidence. Neo4j requires consistent edge labels and directionality for selective traversal — ambiguity in either dimension degrades query precision.
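One possible mitigation is to normalize relationship labels at ingestion, before edges are written to the graph. The sketch below assumes a hand-maintained synonym table; `MADE_BY` is a hypothetical extra synonym added for illustration.

```python
from collections import Counter

# Hand-maintained synonym table (assumption): variant label -> canonical label.
CANONICAL_REL = {
    "PRODUCED_BY": "MANUFACTURED_BY",
    "MADE_BY": "MANUFACTURED_BY",  # hypothetical extra synonym
}

def canonical_rel_type(label):
    # Uppercase and unify separators first, then map known synonyms.
    key = label.strip().upper().replace(" ", "_").replace("-", "_")
    return CANONICAL_REL.get(key, key)

def normalize_edges(edges):
    """edges: iterable of (source, rel_type, target) triples."""
    return [(src, canonical_rel_type(rel), dst) for src, rel, dst in edges]

raw_edges = [
    ("widget", "MANUFACTURED_BY", "acme"),   # batch A label
    ("gadget", "produced-by", "acme"),       # batch B label
]
edges = normalize_edges(raw_edges)
label_counts = Counter(rel for _, rel, _ in edges)
```

Counting labels after normalization also gives a quick consistency check: an unexpected label in `label_counts` points to a variant missing from the synonym table.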

Watch Out: A brittle hop chain fails silently. When DFS follows a single path that terminates at a missing edge, the agent receives an empty result set, which it may interpret as evidence that no answer exists rather than evidence that the graph is incomplete. Implement explicit graph-coverage metrics — track which entity types and relationship types have below-threshold coverage — and surface those metrics in the observability layer so sparse graph regions trigger alerts rather than silent failures.

Over-connected graphs and runaway agent loops

An over-connected graph creates the opposite failure: too much evidence, not too little. At hop 2 from a high-degree hub node, the candidate set can grow large enough that evidence scoring becomes the computational bottleneck, and the context window is overwhelmed before synthesis begins.

LangGraph supports replay from checkpoints and durable execution — capabilities that are necessary for resilient agent loops but that also enable the loop to run indefinitely if stopping conditions are absent. A LangChain forum discussion on dependency resolution illustrates the pattern: re-selection loops and deterministic expansion require explicit iteration budgets or they will retry indefinitely.

Watch Out: Set three hard stops before deploying any agentic retrieval loop: (1) a maximum hop count (typically 3–4), (2) a maximum iteration budget for the planning-retrieval cycle (typically 5–8 total loops), and (3) a confidence threshold above which the agent accepts its current evidence bundle as sufficient and proceeds to synthesis regardless of remaining budget. Without all three, an over-connected graph or an ambiguous query can drive the agent into a loop that exhausts both the token budget and the user's patience before producing an answer.
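The three stops can be expressed as a single routing decision evaluated after every retrieval pass. The sketch below is one possible arrangement, not LangGraph API: the default limits, the action names, and the choice to fall back once the iteration budget is spent are assumptions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LoopLimits:
    max_hops: int = 3
    max_iterations: int = 6
    accept_confidence: float = 0.8  # hypothetical acceptance threshold

def next_action(hops_used, iterations_used, bundle_confidence, limits=LoopLimits()):
    """Decide what the agent loop does after scoring an evidence bundle."""
    if bundle_confidence >= limits.accept_confidence:
        return "synthesize"  # good enough: stop even if budget remains
    if iterations_used >= limits.max_iterations:
        return "fallback"    # iteration budget exhausted
    if hops_used >= limits.max_hops:
        return "replan"      # cannot go deeper: try another decomposition
    return "expand"          # budget remains: take one more hop

actions = [
    next_action(hops_used=1, iterations_used=1, bundle_confidence=0.4),
    next_action(hops_used=3, iterations_used=2, bundle_confidence=0.4),
    next_action(hops_used=2, iterations_used=6, bundle_confidence=0.4),
    next_action(hops_used=1, iterations_used=1, bundle_confidence=0.9),
]
```

Checking the acceptance threshold first means a sufficient bundle always ends the loop, and checking the iteration budget second guarantees termination regardless of graph shape.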

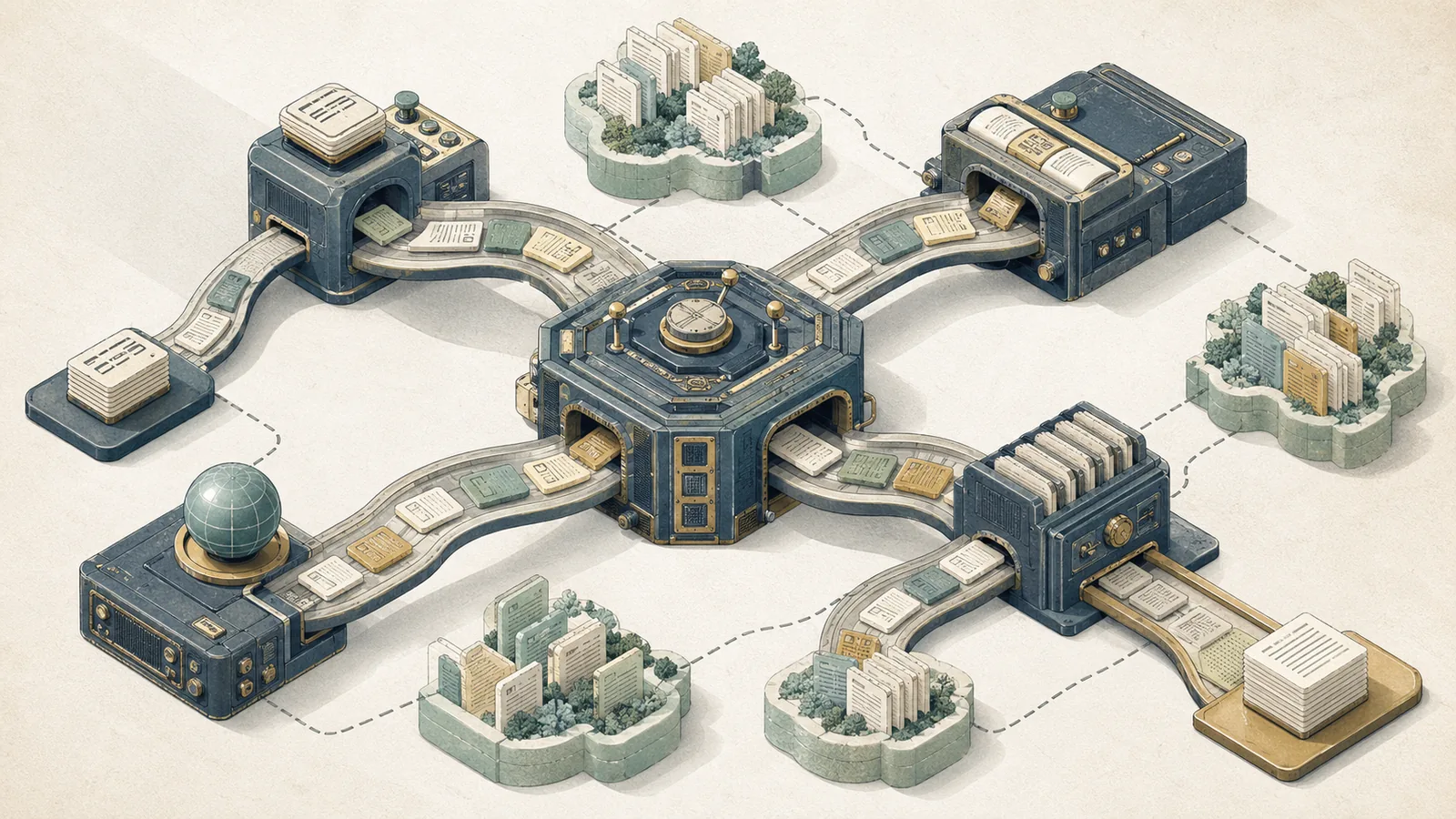

Production architecture for enterprise knowledge systems

Shipping this architecture to production requires four layers beyond the retrieval logic: validation, observability, fallback routing, and schema governance. Without them, graph errors propagate directly into user-visible answer errors with no recovery path.

Validation operates at two points: at ingestion (entity resolution confidence thresholds, schema conformance checks on extracted relations) and at query time (evidence bundle quality checks before synthesis). An evidence bundle that contains zero items above the confidence threshold should trigger fallback routing, not an empty generation.

Observability must track graph-layer metrics — traversal depth per query, hop expansion time, evidence bundle size, entity resolution confidence distribution — in addition to the standard LLM metrics of latency and token consumption. LangGraph supports state inspection via checkpoints, which provides a natural integration point for logging intermediate agent state to an observability backend.

Fallback routing to classic RAG or keyword search is not optional in production. When entity resolution confidence is below threshold or the graph is demonstrably sparse for a query's entity types, the system should route to a lower-complexity retrieval path rather than produce a confidently wrong answer from a bad traversal.
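A routing check along these lines could run before traversal and again before synthesis. The thresholds, the per-type coverage map, and the route names below are hypothetical; the sketch only shows where the two validation points plug in.

```python
def choose_route(linker_confidence, type_coverage, entity_types,
                 min_link_confidence=0.85, min_coverage=0.6):
    """Pick a retrieval path before traversal starts.

    type_coverage: entity type -> fraction of expected relationships present
    (an assumed graph-coverage metric produced by the observability layer).
    """
    if linker_confidence < min_link_confidence:
        return "vector_rag"
    if any(type_coverage.get(t, 0.0) < min_coverage for t in entity_types):
        return "vector_rag"  # graph is sparse for this query's entity types
    return "graph_traversal"

def bundle_is_usable(bundle_scores, min_score=0.5):
    # Query-time validation: at least one evidence item must clear the bar.
    return any(score >= min_score for score in bundle_scores)

coverage = {"Drug": 0.9, "ClinicalTrial": 0.3}
route_dense = choose_route(0.92, coverage, ["Drug"])
route_sparse = choose_route(0.92, coverage, ["Drug", "ClinicalTrial"])
route_unsure = choose_route(0.60, coverage, ["Drug"])
```

An empty or uniformly low-scoring bundle should take the same fallback path rather than reaching the generator.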

Production Note: Separate the planning and synthesis nodes in LangGraph into distinct subgraphs with independent checkpoints. This boundary allows the system to retry retrieval from a saved planning checkpoint without re-running synthesis, which is the most expensive node. It also isolates the failure domain: a retrieval failure does not corrupt the synthesis state, and a synthesis failure does not invalidate the gathered evidence.

Where Neo4j fits in the retrieval stack

Neo4j occupies the graph layer: it stores canonical entity nodes, typed relationship edges, and document provenance links, and it executes Cypher traversal queries issued by the hop expander. Its role is distinct from the vector database, which stores chunk embeddings for semantic search. In a hybrid retrieval stack, both are present: the vector database handles initial semantic recall for entity linking, and Neo4j handles relational traversal after entity seeds are established.

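The Cypher query model aligns naturally with hop expansion. A two-hop traversal from a seed entity is a single pattern match with a confidence filter applied to every relationship on the path: `MATCH p = (e:Entity {canonical_id: $seed})-[:RELATES_TO*1..2]->(n) WHERE all(r IN relationships(p) WHERE r.confidence > 0.7) RETURN n`. The threshold belongs in the WHERE clause because a property map on a relationship pattern can only express equality. Neo4j's native graph storage uses an index to locate the seed node and then follows stored relationship pointers outward, rather than computing table joins.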
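As a sketch of how the hop expander might assemble such a query, the helper below builds the Cypher text and keeps all values as parameters. It assumes the `Entity`, `canonical_id`, and `confidence` names from the schema earlier in this article; the upper bound of 4 hops and the function name are hypothetical, and the driver call is deliberately left out.

```python
def bounded_traversal_query(min_hops=1, max_hops=2, rel_type="RELATES_TO"):
    """Build a variable-length Cypher traversal with a per-edge confidence filter.

    Hop bounds and relationship types cannot be passed as Cypher parameters,
    so they are validated here and interpolated; values stay as $parameters.
    """
    if not (isinstance(min_hops, int) and isinstance(max_hops, int)
            and 0 <= min_hops <= max_hops <= 4):
        raise ValueError("hop bounds must be integers with 0 <= min <= max <= 4")
    if not rel_type.replace("_", "").isalnum():
        raise ValueError("unexpected relationship type")
    return (
        f"MATCH p = (e:Entity {{canonical_id: $seed}})"
        f"-[:{rel_type}*{min_hops}..{max_hops}]->(n:Entity)\n"
        "WHERE all(r IN relationships(p) WHERE r.confidence > $min_confidence)\n"
        "RETURN n.canonical_id AS id, min(length(p)) AS hops\n"
        "ORDER BY hops\n"
        "LIMIT $cap"
    )

query = bounded_traversal_query()
params = {"seed": "compound_x", "min_confidence": 0.7, "cap": 50}
```

The `LIMIT $cap` clause plays the role of the hard candidate cap, and returning the minimum path length per node gives the evidence scorer a hop count to work with.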

Pro Tip: Index on `canonical_id` for entity lookups, on `(type, confidence)` for relationship filtering, and on `ingestion_timestamp` for recency-weighted evidence scoring. Pay attention to relationship directionality in the schema: undirected traversal is more flexible but less selective. If the domain has clear directional semantics (`REGULATES`, `CITES`, `MANUFACTURES`), model them as directed edges and traverse in the correct direction. This keeps traversal selective and prevents the hop expander from following semantically inverted paths.

How LangGraph orchestrates retrieval, reflection, and retries

LangGraph provides the control plane for the entire agent loop. Its core primitives — stateful nodes, conditional edges, checkpoints, interrupts, and replay — map directly to the architectural requirements of agentic retrieval.

The planning node writes its sub-question decomposition to graph state. The entity linker reads sub-questions and writes resolved entity IDs. The hop expander reads entity IDs and writes raw traversal results. The evidence scorer reads raw results and writes a ranked evidence bundle. The conditional edge reads the bundle and routes either back to the planner (with a revised strategy written to state) or forward to synthesis. LangGraph's interrupt mechanism allows the system to pause at that conditional edge for human review before committing to synthesis in high-stakes workflows.

LangGraph supports time travel through checkpoints: "Replay: Retry from a prior checkpoint." This capability is what makes bounded reflection practical: if the agent's first traversal strategy fails, the planner can issue a revised decomposition from the same checkpoint without re-running entity linking for seeds that are already resolved, which is typically the most latency-sensitive step.

Production Note: Keep the planning node and the synthesis node in separate LangGraph subgraphs. Planning is a fast, low-token operation; synthesis is a high-token, high-cost operation. Separating them means the reflection loop — retry planning, re-run traversal, re-score evidence — can iterate without consuming synthesis tokens on intermediate attempts. Synthesis runs once, on the final approved evidence bundle.

Common questions about agentic retrieval and GraphRAG

Bottom Line: Agentic retrieval in RAG means the system orchestrates retrieval dynamically — planning, decomposing, retrieving, evaluating evidence, and retrying — rather than executing a fixed single-pass nearest-neighbor lookup. GraphRAG is a retrieval topology that uses a knowledge graph to expand evidence via entity subgraphs. Agentic RAG is a control strategy that wraps any retrieval topology — including GraphRAG — with a planning and reflection loop. The two compose: agentic multi-hop RAG over a knowledge graph is the combination of both.

What is agentic retrieval in RAG?

Agentic retrieval means the retrieval step is orchestrated by an agent that plans what to retrieve, executes retrieval, evaluates the result, and revises its strategy before committing to generation. Unlike single-pass retrieval — embed query, fetch top-k, generate — agentic retrieval treats the retrieval step as a loop with explicit stopping conditions.

LangGraph implements this with stateful nodes and checkpoint replay. The agent can observe that its first retrieval attempt returned insufficient evidence, revise its sub-question decomposition, and retry — all from a durable checkpoint without restarting the workflow.

Pro Tip: The practical distinction between iterative and single-pass retrieval is not complexity — it is query coverage. Single-pass retrieval is optimal for queries where the answer is contained in a single high-scoring chunk. Iterative retrieval is necessary when the answer requires synthesizing evidence from multiple sources that no single chunk covers. Use LangGraph's conditional edges to route between the two strategies at runtime based on a confidence signal from the first retrieval attempt, rather than committing all queries to the full agentic loop.

What is the difference between GraphRAG and agentic RAG?

GraphRAG is defined by what is retrieved: entity subgraphs from a knowledge graph rather than flat chunks from a vector index. Agentic RAG is defined by how retrieval is orchestrated: a planning and reflection loop rather than a fixed pipeline. The two are orthogonal and composable.

LlamaIndex states, "Graph RAG is an Knowledge-enabled RAG approach to retrieve information from Knowledge Graph on given task." Neo4j describes GraphRAG as knowledge-graph-centered retrieval that builds context from entity subgraphs, while LangGraph supplies the agent loop that plans, selects tools, and iterates.

| Dimension | GraphRAG | Agentic RAG | Combined |
|---|---|---|---|
| Retrieval topology | Subgraph expansion from entity seeds | Any (chunks, graphs, APIs) | Subgraph expansion with iterative planning |
| Control flow | Fixed traversal policy | Agent loop with reflection | Agent loop driving graph traversal |
| Requires knowledge graph | Yes (core mechanism) | No (topology-agnostic) | Yes |
| Requires agent orchestration | No (can run deterministically) | Yes (core mechanism) | Yes |
| Main failure mode | Sparse or noisy graph | Runaway loops, over-retrieval | Both (requires mitigation for each) |

LlamaIndex implements GraphRAG as a query engine over a knowledge graph without a full agent loop. Neo4j provides the graph storage layer for either variant. LangGraph provides the agent orchestration layer that upgrades a static GraphRAG pipeline into an iterative agentic system.
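
The "subgraph expansion from entity seeds" row of the comparison can be illustrated with a deterministic, agent-free sketch. This is not the LlamaIndex or Neo4j API: `expand_subgraph`, the adjacency-dict graph format, and the toy entities are hypothetical, and a breadth-first traversal with a hop limit is just one simple fixed traversal policy.

```python
from collections import deque


def expand_subgraph(graph, seeds, max_hops=2):
    """Breadth-first expansion: collect triples within max_hops of the seeds."""
    seen = set(seeds)
    frontier = deque((seed, 0) for seed in seeds)
    triples = []
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue                      # hop budget reached on this path
        for relation, neighbor in graph.get(node, []):
            triples.append((node, relation, neighbor))
            if neighbor not in seen:      # avoid revisiting entities in cycles
                seen.add(neighbor)
                frontier.append((neighbor, depth + 1))
    return triples


# Toy knowledge graph: entity -> list of (relation, neighbor) edges.
graph = {
    "Acme": [("SUPPLIED_BY", "Borealis")],
    "Borealis": [("OWNED_BY", "Corvid Holdings")],
    "Corvid Holdings": [("HEADQUARTERED_IN", "Oslo")],
}

one_hop = expand_subgraph(graph, ["Acme"], max_hops=1)
two_hop = expand_subgraph(graph, ["Acme"], max_hops=2)
```

An agentic wrapper would leave this function unchanged and vary its inputs between iterations, for example by choosing new seed entities or raising `max_hops` when the evidence is judged insufficient.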

Sources and references

- Algolia Engineering Blog: Agentic Retrieval — Primary source framing agentic retrieval as a planning, decomposition, and iteration architecture

- MultiHop-RAG (arXiv:2401.15391) — Tang and Yang; establishes that existing RAG systems are inadequate for multi-hop queries requiring reasoning across multiple evidence sources

- GraphRAG-Bench (arXiv:2506.02404) — Holistic evaluation framework for GraphRAG spanning graph construction, retrieval, answer generation, and rationale generation

- When to Use Graphs in RAG (arXiv:2506.05690) — Examines the shift from flat retrieval to relation-aware retrieval in text settings

- Mitigating KG Quality Issues in GraphRAG (arXiv:2603.14828) — Documents retrieval drift and hallucination from imperfect knowledge graphs

- LangGraph Documentation: Graph API — Stateful graph model, checkpointing, and orchestration primitives

- LangGraph Documentation: Time Travel and Replay — Checkpoint replay for iterative planning and retry loops

- LangGraph Documentation: Interrupts — Pausing graph execution for external input or human-in-the-loop validation

- LangGraph Documentation: Subgraphs — Stateful subgraph inheritance and durable execution

- Neo4j Knowledge Graph Blog — Entity-centric retrieval, multi-hop question answering, and graph schema patterns

- Neo4j GraphAcademy: GraphRAG — Knowledge graph grounding for hallucination mitigation in LLM systems

- LlamaIndex Knowledge Graph RAG Query Engine — Subgraph-based context construction for entity-centric retrieval

- LangChain Forum: Tool Dependency Resolution in LangGraph — Planning node patterns and explicit decomposition for dependent sub-questions

Pro Tip: For implementation details, start with the framework documentation (LangGraph, LlamaIndex). For evaluation methodology and failure-mode analysis, prefer the arXiv papers (especially arXiv:2401.15391, arXiv:2506.02404, and arXiv:2603.14828). For production patterns and graph schema design, Neo4j's engineering blog and GraphAcademy offer the most operationally grounded guidance.

Keywords: knowledge graph, Neo4j, LangGraph, LlamaIndex, LangChain, entity resolution, graph traversal, multi-hop retrieval, retrieval augmentation, evidence aggregation, hallucination mitigation, agentic retrieval, graph schema, relation extraction, vector database