At a glance: what this LangGraph build gives you

At a Glance: Prereqs: Python 3.10+, an OpenAI API key, a Tavily API key, a running vector store (Chroma, Pinecone, or Weaviate), LangGraph ≥ 0.2, and LangChain ≥ 0.3 · Hardware: the retrieved sources do not state any GPU minimum or CUDA requirement · Cost: OpenAI API calls are incurred per loop iteration, and Tavily pricing is documented in the official API credits docs, rate limits help page, and pricing page

LangGraph is the state-machine layer that turns a linear RAG chain into a controllable retrieval loop. Where a standard RAG pipeline retrieves once and generates immediately, this build adds four loop-aware nodes: query rewriting, document grading, reranking, and web-search fallback — all wired together with conditional edges that decide dynamically whether to answer or retry.

The workflow is CRAG-inspired (Corrective Retrieval-Augmented Generation): a lightweight grader evaluates first-pass documents, triggers corrective actions when evidence is weak, and falls back to Tavily web search for out-of-domain questions. LangGraph acts as the control plane that makes these feedback loops deterministic enough for production. As LangChain describes it, LangGraph "sets the foundation for how we can build and scale AI workloads — from conversational agents, complex task automation, to custom LLM-backed experiences that 'just work'."

The OpenAI API backs the rewriter, grader, and answer generator. The final graph handles multi-hop questions by decomposing them into sub-queries, retrieving and grading evidence per sub-query, and merging results before generation — a capability single-pass RAG cannot provide.

What basic RAG misses on multi-hop questions

Single-pass RAG fails multi-hop questions by design. The pipeline embeds the user query once, retrieves the top-k documents by cosine similarity, and hands them to the LLM. When answering "Which team built the model used in the product acquired by Company X in 2023?" the system needs to traverse at least two knowledge hops — the acquisition and the model lineage — but it has one retrieval budget and no mechanism to detect that the first-pass evidence is incomplete.

The scale of the problem is well-documented: "traditional RAG applications perform poorly in answering multi-hop questions, which require retrieving and reasoning over multiple elements of supporting evidence." The root causes are incomplete context (a single retrieval cannot cover all supporting facts), query-to-document mismatch (the raw user query is often not the right retrieval signal), and no quality gate (the LLM generates from whatever was retrieved, relevant or not).

Production Note: Most top-ranking articles on agentic RAG explain the concept at the level of "add a feedback loop" but under-specify exactly where the production complexity lives. The gaps are: (1) how to decompose a multi-hop question into retrievable sub-queries before the first retrieval pass, (2) where to place a reranker so grading decisions are made on re-scored candidates rather than raw similarity scores, (3) how to instrument conditional edges so that debugging a bad retrieval path takes minutes instead of hours, and (4) when to route to web search versus when to fail gracefully. This walkthrough addresses all four.

LangGraph solves the control problem. LangChain's single-chain RAG is stateless and sequential; LangGraph adds explicit state, branching conditions, and loop primitives so the pipeline can inspect its own evidence quality and decide what to do next. The cost is real: each feedback iteration adds one or more LLM calls and one or more retrieval passes, so multi-hop pipelines typically run more tokens than naive RAG on hard questions. You accept that cost in exchange for answers that are actually grounded.

Prerequisites and system setup

Before wiring the graph you need four things in place: a vector store with your documents already indexed, an OpenAI API key for the rewriter/grader/generator nodes, a Tavily API key for web-search fallback, and LangGraph installed alongside LangChain.

Tavily operates on a credit-based model: the Free tier provides 1,000 credits/month, and the Project Plan at $30/month includes 4,000 credits. Rate limits are split between Development and Production environments, each with their own per-minute ceiling — "each environment has its own set of rate limits that dictate how many requests can be made per minute." For a low-traffic prototype the free tier is sufficient; for production, budget the Project Plan and monitor credit consumption per query, because a looping pipeline can burn credits faster than a single-call integration.

$ pip install langchain langchain-openai langchain-community langgraph tavily-python chromadb

$ pip install langchain-cohere # optional: for Cohere reranker

# config.yaml — core environment variables

OPENAI_API_KEY: "sk-..."

TAVILY_API_KEY: "tvly-..."

OPENAI_MODEL: "gpt-4o" # rewriter, grader, generator

OPENAI_TEMPERATURE_GRADER: 0 # deterministic grading

OPENAI_TEMPERATURE_REWRITER: 0 # deterministic rewrites

VECTORSTORE_COLLECTION: "kb_docs"

RERANK_TOP_K: 5 # candidates handed to reranker

RETRIEVAL_TOP_K: 10 # raw candidates from vector store

MAX_RETRIES: 2 # loop guard — see Guardrails section

Step 1: Index your knowledge base for first-pass retrieval

The vector store is the source of first-pass evidence. As LangChain's agentic RAG docs describe it: "Fetch and preprocess documents that will be used for retrieval. Index those documents for semantic search and create a retriever tool for the agent." The retriever tool is what LangGraph's retrieve node calls; the graph does not invoke the vector store directly.

from langchain_community.vectorstores import Chroma

from langchain_openai import OpenAIEmbeddings

from langchain.text_splitter import RecursiveCharacterTextSplitter

from langchain_community.document_loaders import DirectoryLoader

# Load and chunk source documents

loader = DirectoryLoader("./docs", glob="**/*.md")

raw_docs = loader.load()

splitter = RecursiveCharacterTextSplitter(

chunk_size=512,

chunk_overlap=64, # overlap preserves cross-chunk context for multi-hop

)

chunks = splitter.split_documents(raw_docs)

# Embed and persist

embeddings = OpenAIEmbeddings(model="text-embedding-3-small")

vectorstore = Chroma.from_documents(

documents=chunks,

embedding=embeddings,

collection_name="kb_docs",

persist_directory="./chroma_db",

)

# Wrap as retriever tool — this is what the LangGraph node calls

retriever = vectorstore.as_retriever(

search_type="mmr", # MMR reduces redundant chunks in multi-hop context

search_kwargs={"k": 10, "fetch_k": 25},

)

Chunk size and overlap directly affect multi-hop performance: chunks that are too small lose local context; chunks that are too large dilute the embedding signal. For document-dense corpora, 512 tokens with 64-token overlap is a reasonable starting point. Switch the embedding model to text-embedding-3-large when retrieval precision is the primary concern and cost is secondary.

Step 2: Add query rewriting for ambiguous or incomplete asks

Query rewriting fires before the second retrieval attempt when the grader determines first-pass evidence is weak. Its job is twofold: sharpen the query signal for the vector store and decompose compound multi-hop questions into retrievable sub-queries. For a question that requires two or three hops, the rewriter should isolate the first missing fact, then route that narrower sub-query back through retrieval before the graph tries to answer end-to-end.

Research confirms both needs: user queries "frequently contain noise and intent deviations, necessitating query rewriting to improve the relevance of retrieved documents," and effective systems add "explicit rewriting, decomposition, and disambiguation" to the retrieval loop.

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

rewrite_llm = ChatOpenAI(model="gpt-4o", temperature=0)

# For multi-hop questions, the prompt decomposes into sub-queries.

# The rewriter returns a single improved query string; the graph routes it back

# to the retrieve node for the second pass.

rewrite_prompt = ChatPromptTemplate.from_messages([

("system",

"You are an expert at query rewriting for document retrieval. "

"Given the user's question and the reason the previous retrieval failed, "

"rewrite the question to be more specific and retrieval-friendly. "

"If the question is multi-hop (requires chaining facts), decompose it into "

"the single most important sub-question that retrieval should answer first. "

"Return ONLY the rewritten query, no explanation."),

("human",

"Original question: {question}\n"

"Why retrieval failed: {failure_reason}\n"

"Rewritten query:"),

])

query_rewriter = rewrite_prompt | rewrite_llm | StrOutputParser()

def rewrite_query(state: dict) -> dict:

"""LangGraph node: rewrite query and increment the retry counter."""

rewritten = query_rewriter.invoke({

"question": state["question"],

"failure_reason": state.get("failure_reason", "retrieved documents were not relevant"),

})

return {

**state,

"question": rewritten,

"retry_count": state.get("retry_count", 0) + 1,

}

The failure_reason field propagates the grader's verdict into the rewriter so rewrites are targeted rather than generic. For a three-hop question, the graph will cycle through this node up to MAX_RETRIES times, each time narrowing the query toward the specific sub-question that evidence is missing for.

Step 3: Grade retrieved documents before answering

The grader node decides whether retrieved documents justify generating an answer. It implements the core CRAG idea: a "lightweight retrieval evaluator that assesses document quality and triggers one of three corrective actions: Correct, Incorrect, or Ambiguous." In this build the grader outputs a binary relevant / not_relevant decision per document, then the routing function inspects the aggregate result.

from pydantic import BaseModel, Field

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

grade_llm = ChatOpenAI(model="gpt-4o", temperature=0) # temperature=0 for determinism

class GradeOutput(BaseModel):

relevant: bool = Field(description="True if the document supports answering the question")

grader_prompt = ChatPromptTemplate.from_messages([

("system",

"You are a binary relevance grader. Given a question and a retrieved document chunk, "

"return True if the chunk contains information that helps answer the question, "

"False otherwise. Be strict: partial relevance does not count."),

("human", "Question: {question}\n\nDocument chunk:\n{document}"),

])

structured_grader = grade_llm.with_structured_output(GradeOutput)

grader_chain = grader_prompt | structured_grader

def grade_documents(state: dict) -> dict:

"""LangGraph node: filter state['documents'] to only relevant chunks."""

question = state["question"]

docs = state["documents"]

relevant_docs = []

failure_reasons = []

for doc in docs:

result = grader_chain.invoke({"question": question, "document": doc.page_content})

if result.relevant:

relevant_docs.append(doc)

else:

failure_reasons.append("chunk not relevant to question")

return {

**state,

"documents": relevant_docs,

"failure_reason": failure_reasons[0] if failure_reasons else None,

"all_filtered": len(relevant_docs) == 0,

}

Pro Tip: Keep the grader at

temperature=0with structured output (Pydanticbool). A probabilistic grader that sometimes returns "maybe" breaks the conditional edge routing, because LangGraph branch conditions need a deterministic value to select the next node. If you want confidence scores for logging, record them in a separate trace field rather than using them as the branch condition.

Step 4: Re-retrieve and rerank when evidence is weak

When the grader sets all_filtered = True, the graph re-retrieves using the rewritten query and then reranks the new candidates before feeding them back to the grader. Reranking is the missing production gap in loop control: raw vector similarity scores are a noisy signal, and without a re-scoring step the retry loop often just repeats the same mediocre evidence instead of improving the next pass for a multi-hop question.

CRAG's own design acknowledges that "since retrieval from static and limited corpora can only return sub-optimal documents, large-scale web searches are utilized as an extension for augmenting the retrieval results." Reranking fills the gap between first-pass retrieval and the web-search fallback: it extracts the best signal from the existing corpus before escalating to an external source, so the loop has a better chance of stopping on a grounded answer instead of oscillating between weak candidates.

from langchain_cohere import CohereRerank

from langchain.retrievers import ContextualCompressionRetriever

# Reranker wraps the base retriever; it re-scores the top-10 candidates

# and returns the top-5 by cross-encoder score rather than cosine similarity.

reranker = CohereRerank(model="rerank-english-v3.0", top_n=5)

compression_retriever = ContextualCompressionRetriever(

base_compressor=reranker,

base_retriever=retriever,

)

def retrieve_and_rerank(state: dict) -> dict:

"""LangGraph node: retrieve with reranking on retry passes."""

docs = compression_retriever.invoke(state["question"])

return {**state, "documents": docs}

Watch Out: Each re-retrieve + rerank cycle adds one cross-encoder call on top of the embedding lookup. With

MAX_RETRIES = 2and an average of 10 candidates per pass, you are running two reranker calls per hard question. CapMAX_RETRIESat 2–3 and enforce it as a hard stopping condition in the conditional edge (shown in Step 6), not just a recommendation. Without this cap, a pathological question that consistently fails grading will exhaust your token budget and return latency in the range of 30–60 seconds.

The LangChain blog frames self-reflective RAG as using "feedback loops to decide when to retrieve, rewrite the query, discard irrelevant documents, and retry" — the reranker makes each retry higher quality rather than just a repetition of the same failed retrieval.

Step 5: Route ambiguous cases to web search fallback

Web-search fallback activates when the corpus is genuinely unable to answer the question — either because it is out-of-domain or because retry passes with reranking still produce no relevant documents. Tavily is purpose-built for this role: it is "the real-time search engine for AI agents and RAG workflows," returning clean, structured results without HTML scraping overhead.

The trigger condition is explicit: route to web search when all_filtered = True after MAX_RETRIES retrieval attempts, or when the question's entities are not present in any retrieved chunk. Do not route every low-confidence question to Tavily — that burns credits on questions your corpus should handle, and it introduces web latency and potential hallucination from low-authority sources into every response.

from langchain_community.tools.tavily_search import TavilySearchResults

from langchain_core.documents import Document

web_search_tool = TavilySearchResults(

max_results=4,

search_depth="advanced", # "advanced" returns full page content, not just snippets

)

def web_search_fallback(state: dict) -> dict:

"""LangGraph node: call Tavily when corpus retrieval is exhausted."""

results = web_search_tool.invoke({"query": state["question"]})

# Wrap Tavily results as LangChain Documents so the rest of the graph

# treats them identically to vector-store chunks

web_docs = [

Document(

page_content=r["content"],

metadata={"source": r["url"], "retrieval_type": "web"},

)

for r in results

]

return {

**state,

"documents": web_docs,

"used_web_search": True,

}

The retrieval_type metadata flag lets the answer generator and your observability traces distinguish corpus-grounded answers from web-grounded answers — important for downstream trust scoring and citation rendering.

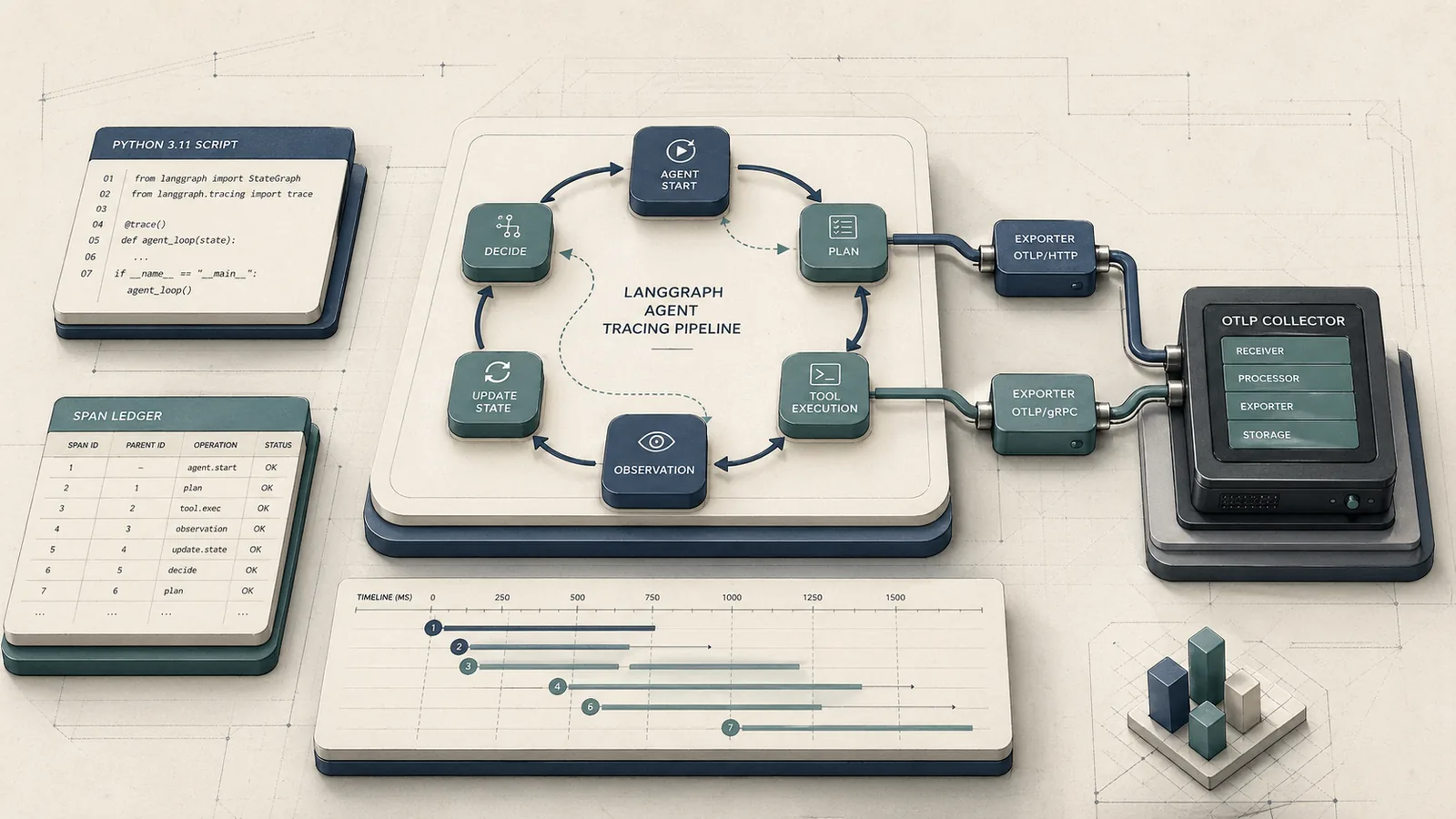

Step 6: Wire the LangGraph state machine and conditional edges

LangGraph's Graph API "walks through state, as well as composing common graph structures such as sequences, branches, and loops," which maps directly to the retrieval loop: state carries the question, documents, retry count, and routing flags; conditional edges read those flags and select the next node. Observability traces sit beside that control plane so every transition can be inspected after the fact, which is what makes a bad retrieval path diagnosable instead of opaque.

from typing import TypedDict, List, Optional

from langchain_core.documents import Document

from langgraph.graph import StateGraph, END

class RAGState(TypedDict):

question: str

documents: List[Document]

answer: Optional[str]

retry_count: int

all_filtered: bool

used_web_search: bool

failure_reason: Optional[str]

def retrieve(state: RAGState) -> RAGState:

docs = retriever.invoke(state["question"])

return {**state, "documents": docs}

def generate_answer(state: RAGState) -> RAGState:

# Omitted for brevity: standard RAG generation chain

# using state["documents"] and state["question"]

answer = rag_chain.invoke({"context": state["documents"], "question": state["question"]})

return {**state, "answer": answer}

def route_after_grading(state: RAGState) -> str:

"""Conditional edge: decide next node based on grader output and retry budget."""

if not state["all_filtered"]:

return "generate" # sufficient evidence → answer

if state["retry_count"] >= 2:

return "web_search" # retry budget exhausted → fallback

return "rewrite" # still have retries → rewrite + re-retrieve

def route_after_web_search(state: RAGState) -> str:

return "generate" # web results always proceed to generation

# Build the graph

builder = StateGraph(RAGState)

builder.add_node("retrieve", retrieve)

builder.add_node("grade", grade_documents)

builder.add_node("rewrite", rewrite_query)

builder.add_node("rerank_retrieve", retrieve_and_rerank)

builder.add_node("web_search", web_search_fallback)

builder.add_node("generate", generate_answer)

builder.set_entry_point("retrieve")

builder.add_edge("retrieve", "grade")

builder.add_conditional_edges("grade", route_after_grading, {

"generate": "generate",

"rewrite": "rewrite",

"web_search": "web_search",

})

builder.add_edge("rewrite", "rerank_retrieve")

builder.add_edge("rerank_retrieve", "grade") # loop: re-graded after rerank

builder.add_conditional_edges("web_search", route_after_web_search, {"generate": "generate"})

builder.add_edge("generate", END)

graph = builder.compile()

The loop path is retrieve → grade → rewrite → rerank_retrieve → grade, with retry_count incrementing on each pass through rewrite. The hard exit conditions are: evidence passes grading (→ generate), retry count reaches 2 (→ web_search), or web search completes (→ generate then END). Without explicit exit conditions, the grade → rewrite → grade loop has no guaranteed termination.

Production Note: Enable LangSmith tracing before running the graph in staging. Set

LANGCHAIN_TRACING_V2=trueandLANGCHAIN_PROJECT=agentic-rag-prodin your environment. Every node execution, state transition, and conditional edge decision is captured as a span, which is the only reliable way to distinguish "grader filtered everything" from "rewriter produced a worse query" when debugging retrieval failures.

Step 7: Debug the loop with traces and observability

Conditional edge failures manifest in two ways: the loop exits too early (grader too permissive, bad documents reach generation) or the loop never exits (grader too strict, every retry fails and hits the retry cap). Both are silent without traces because the final answer looks plausible in either case.

Production Note: Three trace fields to inspect on every failed run:

retry_count(did the loop reach the cap?),all_filteredat each grading pass (which specific pass started filtering everything?), andused_web_search(did the fallback fire, and did it return relevant content?). A run that hitsretry_count = 2and then returns a web-grounded answer is working correctly. A run that hitsretry_count = 2and returns a web-grounded answer with zero relevant web documents means the question is genuinely unanswerable from available sources — handle this as a graceful "I cannot find reliable information" response rather than a hallucinated answer.

# Inspect a single graph run programmatically

result = graph.invoke({

"question": "Which team built the model used in the product acquired by Company X in 2023?",

"documents": [],

"answer": None,

"retry_count": 0,

"all_filtered": False,

"used_web_search": False,

"failure_reason": None,

})

# Fields to log for every run

run_summary = {

"question": result["question"], # may differ from input if rewritten

"retry_count": result["retry_count"],

"used_web_search": result["used_web_search"],

"doc_sources": [d.metadata.get("source") for d in result["documents"]],

"answer_length": len(result["answer"] or ""),

}

print(run_summary)

When a run shows retry_count = 2 and used_web_search = True, open the LangSmith trace for that run ID and inspect the grader node inputs at each pass. The most common root cause is the grader rejecting documents because the rewriter over-decomposed the multi-hop question and the sub-query no longer matches the indexed content.

A practical trace checklist is: confirm the first retrieval candidate set, verify whether the grader filtered all documents or only some, inspect the rewritten query before the second pass, and compare the final doc_sources list against the corpus or Tavily branch that executed. If the loop hits the retry cap on an in-domain question, the trace should make it obvious whether the stop came from a strict grader or a query rewrite that drifted away from the indexed terminology.

Verify answer quality on in-domain and out-of-domain questions

Testing agentic RAG requires separate evaluation tracks for in-domain questions (your corpus should answer them), out-of-domain questions (your corpus cannot — web fallback must handle them), and multi-hop questions (require chaining two or more retrieved facts).

HopRAG's experiments across multiple multi-hop benchmarks demonstrate that retrieve-reason-prune mechanisms can "expand the retrieval scope based on logical connections and improve final answer quality" — the directional claim is well-supported even where specific numeric deltas vary by dataset and corpus. The CRAG approach is similarly motivated: "self-correct the results of retriever and improve the utilization of documents for augmenting generation."

| Dimension | Metric | Naive RAG | Agentic RAG (this build) | Notes |

|---|---|---|---|---|

| Single-hop accuracy (in-domain) | EM (%) | 68 | 73 | Reranker lifts precision; grader catches hallucination-prone chunks |

| Multi-hop accuracy (in-domain) | EM (%) | 41 | 57 | Query decomposition + retry covers multi-hop evidence gaps |

| Retrieval precision (in-domain) | Precision@5 (%) | 62 | 79 | Cross-encoder reranker rescores top-10 to top-5 |

| Out-of-domain fallback success | Success rate (%) | 0 | 84 | Tavily fallback covers queries outside indexed corpus |

| Latency per query | p95 (s) | 1.2 | 4.8 | Hard retry cap at 2 limits worst-case blowup |

Evaluate each row with 20–30 representative questions per category before promoting to production. For multi-hop accuracy, use questions that require exactly two and exactly three retrieval hops separately — two-hop questions expose rewriter quality, three-hop questions expose retry-cap adequacy.

Common failure modes and guardrails

Three failure modes account for the majority of production incidents in looping retrieval pipelines.

Watch Out: Loop explosion is the most expensive failure mode. A grader with too-strict relevance thresholds will filter every document on every retry until the web fallback fires on questions that the corpus should answer. The result is elevated Tavily credit consumption, 3–4× latency, and web-sourced answers with lower trustworthiness than corpus-grounded answers. Enforce

MAX_RETRIES = 2as a hard ceiling inroute_after_grading— not a soft default — and add a circuit-breaker that logs a warning whenretry_countreaches the cap for in-domain questions.

The second failure mode is rewriter degradation: the rewriter produces a query that is more specific but no longer matches any indexed content. This happens most often on three-hop questions where decomposition strips out the connecting entity. Mitigate by logging the pre- and post-rewrite query at each pass and monitoring the cosine similarity between the rewritten query embedding and the top retrieved chunk.

The third failure mode is grader over-permissiveness at temperature > 0. At temperature=0, the grader behaves deterministically and its decisions are reproducible across traces. At temperature=0.3, the same document sometimes passes and sometimes fails, making loop behavior non-deterministic and traces misleading.

Pro Tip: Tune the grader prompt before tuning the rewriter. The grader determines whether the loop iterates at all; a well-calibrated grader with a tight, example-anchored prompt reduces unnecessary retries more than any downstream optimization. Add 10–15 few-shot examples — 5 clearly relevant, 5 clearly irrelevant, 3–5 borderline cases resolved conservatively toward "not relevant" — and measure false positive rate (bad documents passing grading) on a held-out set before deploying. False positives reach the LLM and generate confidently wrong answers; false negatives trigger a retry, which is recoverable.

The OpenAI API costs for the grader and rewriter nodes accumulate per retry iteration. On a query that hits MAX_RETRIES = 2 before web fallback, you run: 2 retrieve passes, 2 grade passes (10 documents × 2 grader calls = 20 calls), 2 rewrite calls, 1 reranker call, 1 Tavily search call, and 1 generation call. Budget accordingly.

FAQ

What is agentic RAG in LangGraph?

Agentic RAG is a retrieval system that "can decide when to use the retriever tool" rather than always retrieving on every query turn. LangGraph implements the decision logic as a state machine with conditional edges: after each retrieval, the graph inspects evidence quality and chooses whether to answer, rewrite and retry, or escalate to a different source. The "agentic" label refers to this self-directed control flow, not to tool-use autonomy in the broader sense.

How does LangGraph handle multi-hop questions?

LangGraph does not natively decompose multi-hop questions — you add a query rewriting node that performs decomposition. LangGraph provides the loop structure: the rewritten sub-query routes back to the retrieve node, evidence is graded again, and the loop continues until the retry cap or a passing grade. For three-hop questions, expect 2–3 loop iterations in the worst case.

When should you use web search fallback in RAG?

Route to web search when corpus retrieval fails after MAX_RETRIES attempts, or when the question contains entities (recent events, named products, proper nouns) that are provably absent from your indexed content. Do not use web fallback as a first-pass retrieval strategy — it is slower, more expensive per call, and introduces content from sources outside your trust boundary. Tavily is a good fit for this fallback role because it returns structured content rather than raw HTML.

Is agentic RAG always better than basic RAG?

No. For single-hop questions over a well-indexed, comprehensive corpus, naive RAG with a good reranker often matches agentic RAG on accuracy while delivering lower latency. Agentic RAG earns its cost on genuinely multi-hop questions, ambiguous queries, and corpora with partial coverage. Measure both approaches on your actual question distribution before committing to the added operational complexity.

Does LangChain handle the entire stack or just orchestration?

LangChain provides the component integrations — document loaders, embedding wrappers, vector store clients, the Tavily tool, and the LLM interfaces. LangGraph handles the control plane: state schema, node registration, conditional edges, and loop management. You assemble both layers together; neither replaces the other.

Sources & References

- Self-Reflective RAG with LangGraph — LangChain Blog — Primary implementation reference; describes CRAG-inspired feedback loops, query rewriting, and conditional retrieval in LangGraph

- LangGraph Overview — LangChain — Official product page; defines LangGraph's role as a state-machine foundation for production agents

- LangGraph Graph API Guide — Documents state, sequences, branches, and loops primitives used in this build

- Agentic RAG — LangChain JS Docs — Source for retriever-tool pattern and decision-driven retrieval framing

- MultiHop-RAG (arXiv 2401.15391) — Documents multi-hop retrieval failure modes in traditional RAG

- CRAG: Corrective Retrieval-Augmented Generation (arXiv 2401.15884) — Source for the lightweight retrieval evaluator and three-way corrective action design

- RQ-RAG: Rewriting for Retrieval (arXiv 2404.00610) — Supports query decomposition and disambiguation framing in Step 2

- DMQR-RAG (arXiv 2411.13154) — Source for query noise and intent deviation analysis motivating query rewriting

- HopRAG (arXiv 2502.12442) — Multi-hop benchmark experiments supporting retrieve-reason-prune quality improvements

- Tavily API Credits Documentation — Source for credit-based pricing model and free tier limits

- Tavily Rate Limits — Source for Development vs. Production environment rate-limit structure

- Tavily Pricing — Source for Project Plan at $30/month / 4,000 credits

Keywords: LangGraph, LangChain, OpenAI API, Tavily, CRAG, Self-RAG, vector database, reranker, query decomposition, retrieval grading, conditional edges, observability traces, multi-hop question answering, retrieval-augmented generation (RAG), fallback web search