At a Glance: Time: 4–8 hours for a full ablation on a corpus of 200–500 documents · Prereqs: Python 3.10+, LangChain or LlamaIndex, a vector database (FAISS local or Qdrant/Pinecone hosted), an LLM endpoint for answer generation, a gold query-answer set of at least 50 QA pairs · Hardware: CPU-only is sufficient for local embedding models ≤1B params; NVIDIA H100 or equivalent accelerates batch embedding of large corpora significantly · Cost: $0 for self-hosted models (BAAI/bge-m3, nomic-embed-text-v1.5, E5-large-v2); $5–$30 for a full ablation corpus re-embedded via OpenAI text-embedding-3-large or Cohere Embed v3 depending on token count

At a Glance: what to measure before you change chunking or embeddings

Chunking configuration can move retrieval quality enough to change which configuration ranks first on the same corpus. That finding comes from Vectara's NAACL 2025 study, which tested 25 chunking configurations across 48 embedding models. Premai's 2026 synthesis of that study concludes that "chunking configuration had as much or more influence on retrieval quality as the choice of embedding model." Most RAG practitioners optimize embedding models while treating chunking as a one-time setup decision. That is backwards.

Before running any ablation, fix four things: the corpus, the query set, the metric stack, and your evaluation cost budget. Changing any of these mid-experiment invalidates comparisons between runs.

| Dimension | What to fix |
|---|---|
| Corpus | Real domain documents, not toy snippets; 200–500 docs minimum |
| Query set | ≥50 gold QA pairs with document-level relevance labels; freeze query IDs |
| Metric stack | Retrieval recall@10, MRR, end-to-end answer accuracy, each tracked separately |
| Evaluation cost | Per-run re-embedding cost (tokens × model rate) + vector DB write time |

The RAG evaluation stack must separate retrieval metrics from generation metrics because they can disagree. An embedding model swap that improves recall@10 by 4 points can simultaneously reduce answer accuracy if the retrieved chunks lose contextual coherence. Your vector database configuration — index type, distance metric, top-k — must also stay constant across all chunking and embedding runs.

What this benchmark must prove on a real corpus

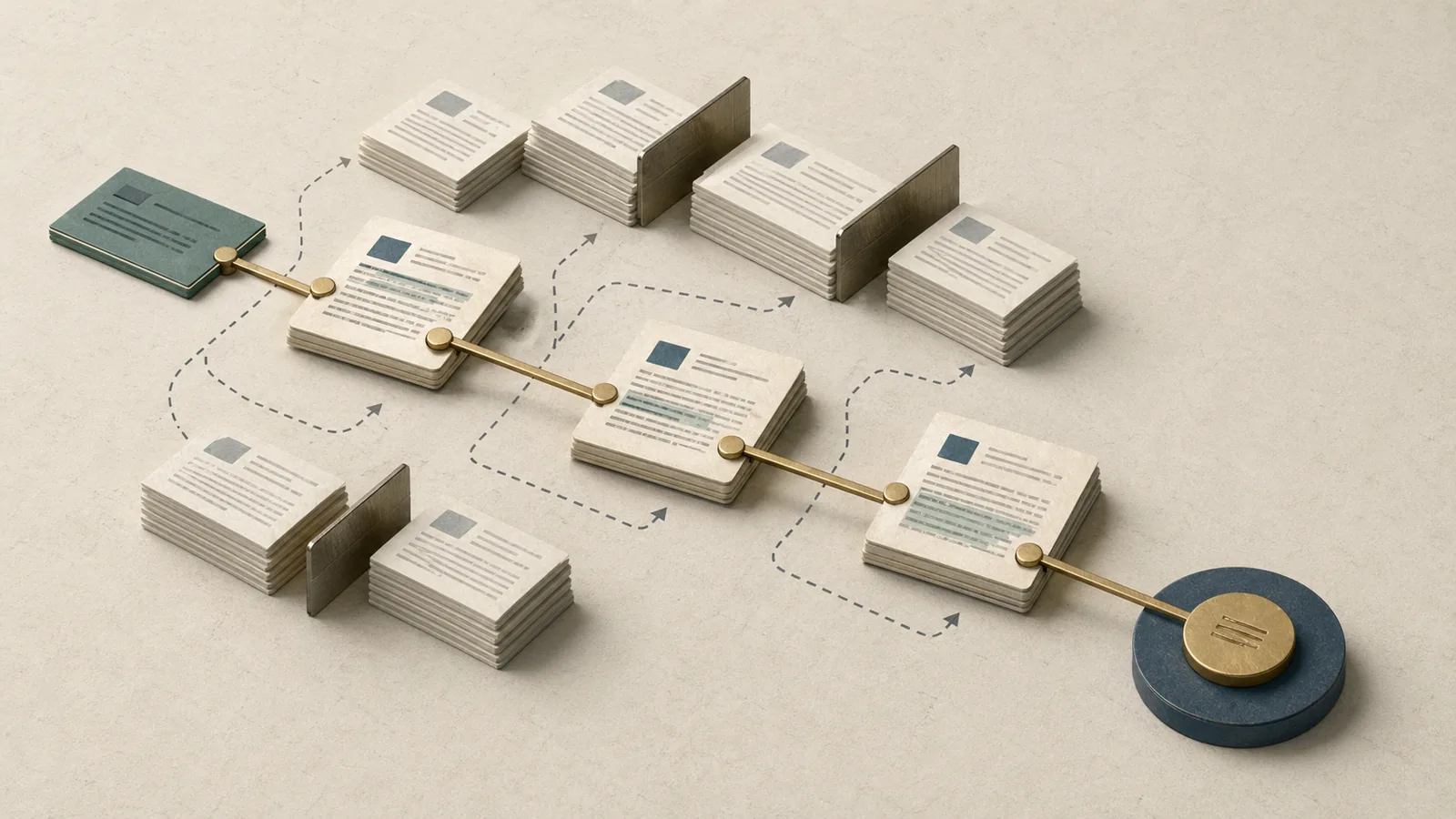

The benchmark exists to answer one question that generic advice cannot: for your corpus and query distribution, what combination of chunking strategy and embedding model maximizes both retrieval recall and downstream answer quality simultaneously? Those two objectives do not always point to the same configuration.

Production Note: The corpus, query set, document labels, and query IDs must stay identical across every trial. Resampling documents between chunking sweeps confounds the chunking effect with data selection. Version your corpus split and lock it before the first run.

Premai's 2026 benchmark guide reports recursive 512-token splitting at 69% answer accuracy on 50 academic papers — approximately 15 percentage points above semantic chunking on the identical corpus. That result came from the same RAG evaluation summary that tracked retrieval and generation separately. Had the team stopped at retrieval-only metrics, they would have shipped semantic chunking and shipped a worse system. The benchmark's job is to surface that kind of inversion before it reaches production.

The gap in most published guidance is that authors recommend a chunk size in isolation ("try 512 tokens") without showing what happens when you cross that chunk size with a specific embedding model or a structured versus flat document layout. A rigorous ablation tests chunking and embedding jointly on the same frozen dataset.

Why toy text hides the chunking effect

Real corpora contain heterogeneous structure: dense technical prose, bullet lists, tables, code blocks, section headers, and footnotes. Toy passages are uniformly formatted, which means all chunking strategies perform similarly because no strategy is actually stressed.

Watch Out: Chunking experiments on short synthetic passages overfit to passage length, not to document structure. When you move to production documents, the ranking of strategies can invert. Premai's 2026 guide reports that semantic chunking produced an average fragment size of just 43 tokens in one benchmark — a size that destroys cross-sentence context in real long-form documents even though it looks fine on short synthetic inputs.

Use a real corpus with heterogeneous document types. If your production system ingests research PDFs, use research PDFs. If it ingests support tickets interleaved with knowledge-base articles, use that mix. Toy text will not tell you what you need to know.

Which metrics to track separately

Report retrieval recall@10, MRR, and end-to-end answer accuracy as three independent columns. Collapsing them into a composite score hides the inversion risk that makes RAG evaluation non-trivial.

| Metric | What it measures | Failure mode if optimized alone |
|---|---|---|
| Recall@10 | Fraction of queries where a gold passage appears in the top-10 retrieved chunks | Retrieval improves but answers degrade (fragmented chunks) |
| MRR | Mean over queries of the reciprocal rank (1/rank) of the first relevant chunk; a first hit at rank 3 scores 0.33 versus 1.0 at rank 1 | Can inflate with semantic chunking while answer accuracy falls |
| End-to-end answer accuracy | LLM-graded or human-graded correctness of the final generated answer | Lagging indicator; expensive to compute at scale |

As Premai's 2026 guide states directly: "retrieval-only metrics can make semantic chunking look best while end-to-end answer accuracy can favor larger, structure-respecting chunks." Preserve the same gold answers and grading rubric — LLM-as-judge or human — across all ablation runs. Changing the rubric mid-experiment is equivalent to changing the dataset.
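To make the two retrieval columns concrete, the sketch below scores two hand-made ranked lists. The query IDs, document IDs, and the helper names `hit_at_k` and `reciprocal_rank` are illustrative assumptions, not part of any library.

```python
def hit_at_k(retrieved: list[str], gold: set[str], k: int = 10) -> int:
    """Return 1 if any gold document appears in the top-k results, else 0."""
    return int(any(doc_id in gold for doc_id in retrieved[:k]))


def reciprocal_rank(retrieved: list[str], gold: set[str]) -> float:
    """Return 1/rank of the first gold document, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in gold:
            return 1.0 / rank
    return 0.0


# Hypothetical ranked results for two queries (document IDs are made up).
runs = {
    "q1": (["d7", "d2", "d9"], {"d2"}),  # first gold hit at rank 2
    "q2": (["d4", "d5", "d6"], {"d1"}),  # gold document never retrieved
}

recall_at_10 = sum(hit_at_k(r, g) for r, g in runs.values()) / len(runs)
mrr = sum(reciprocal_rank(r, g) for r, g in runs.values()) / len(runs)
print(f"recall@10={recall_at_10:.2f}  mrr={mrr:.2f}")
```

Here one of two queries has a hit, so recall@10 is 0.50, and the single hit at rank 2 contributes 0.5 to an MRR of 0.25. Answer accuracy needs a grader and is deliberately left out of this sketch.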

Fix the evaluation harness before comparing strategies

Lock the harness before touching a single splitter setting. This means: fixed query IDs, fixed corpus slice, identical scoring code, and identical vector database parameters across every run. Engineers frequently change index parameters (HNSW ef_construction, number of neighbors) between chunking experiments, which introduces a confound that makes chunking appear responsible for a change that was actually index-configuration-driven.
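One way to catch this kind of confound is to diff the run configs and flag every changed field that is not the variable under test. The following is a minimal sketch under that assumption; the config layout and the helper names `changed_keys` and `confounds` are hypothetical.

```python
def changed_keys(a: dict, b: dict, prefix: str = "") -> set[str]:
    """Return dotted paths of every leaf value that differs between two nested dicts."""
    diffs = set()
    for key in a.keys() | b.keys():
        path = f"{prefix}{key}"
        va, vb = a.get(key), b.get(key)
        if isinstance(va, dict) and isinstance(vb, dict):
            diffs |= changed_keys(va, vb, prefix=f"{path}.")
        elif va != vb:
            diffs.add(path)
    return diffs


def confounds(baseline: dict, candidate: dict, allowed_prefixes: tuple[str, ...]) -> set[str]:
    """Fields that changed but are not part of the variable under test."""
    return {p for p in changed_keys(baseline, candidate) if not p.startswith(allowed_prefixes)}


# Hypothetical configs: the splitter is the variable under test, the index is not.
run_a = {"splitter": {"chunk_size": 512}, "vector_db": {"index_type": "HNSW", "ef_construction": 200}}
run_b = {"splitter": {"chunk_size": 256}, "vector_db": {"index_type": "HNSW", "ef_construction": 400}}

print(confounds(run_a, run_b, allowed_prefixes=("splitter.",)))
```

A non-empty result means the two runs are not comparable as a pure chunking experiment; here the check reports `vector_db.ef_construction`.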

Step 1: Run the benchmark harness

The harness below ingests a chunked corpus into FAISS, retrieves the top-k candidates per query (k=10 by default), and computes recall@10 and MRR against gold document-level relevance labels. Answer-accuracy grading with an LLM is not part of this function; run it as a separate step against the same frozen queries. Run the harness identically for every ablation: only the chunks and embed_fn arguments change.

$ python benchmark_rag_ablation.py --chunks chunks_v1.json --queries queries_v1.json --config ablation_config.yaml --output metrics_ablation_003.json

import json
from typing import Callable

import faiss
import numpy as np


def run_ablation(
    chunks: list[dict],   # [{"id": str, "text": str, "doc_id": str}]
    queries: list[dict],  # [{"id": str, "text": str, "gold_doc_ids": list[str]}]
    embed_fn: Callable[[list[str]], np.ndarray],  # returns (N, D) float32 array
    k: int = 10,
) -> dict:
    texts = [c["text"] for c in chunks]
    doc_ids = [c["doc_id"] for c in chunks]

    # Embed the corpus once; L2-normalizing makes inner product equal cosine similarity.
    corpus_vecs = embed_fn(texts).astype("float32")
    faiss.normalize_L2(corpus_vecs)
    index = faiss.IndexFlatIP(corpus_vecs.shape[1])  # exact search, no ANN parameters
    index.add(corpus_vecs)

    recall_hits, reciprocal_ranks = [], []
    for q in queries:
        q_vec = embed_fn([q["text"]]).astype("float32")
        faiss.normalize_L2(q_vec)
        _, indices = index.search(q_vec, k)
        # FAISS pads results with -1 when the index holds fewer than k vectors.
        retrieved_doc_ids = [doc_ids[i] for i in indices[0] if i >= 0]
        gold = set(q["gold_doc_ids"])

        # Recall@k as a hit rate: 1 if any gold document appears in the top k.
        recall_hits.append(int(any(d in gold for d in retrieved_doc_ids)))

        # Reciprocal rank of the first gold hit; 0.0 if none is retrieved.
        rr = 0.0
        for rank, d in enumerate(retrieved_doc_ids, start=1):
            if d in gold:
                rr = 1.0 / rank
                break
        reciprocal_ranks.append(rr)

    return {
        f"recall_at_{k}": float(np.mean(recall_hits)),
        "mrr": float(np.mean(reciprocal_ranks)),
        "n_chunks": len(chunks),
        "n_queries": len(queries),
    }

Freeze the corpus slices and query set

Assign every document and every query a deterministic ID before the first run. Write both to disk in versioned JSON files. Every ablation loads those files from disk — it never resamples.

Production Note: Use identical document subsets, labels, and query IDs across every trial. A common error is regenerating the query set after changing the chunking strategy. Even if the queries look the same, any change to gold labels or query wording invalidates cross-run comparison. Lock corpus_v1.json and queries_v1.json and treat them as immutable artifacts for the duration of the ablation.
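A simple way to enforce immutability is to record a content hash for each frozen file before the first run and re-check it at the start of every later run. The sketch below assumes that approach; the helper names `fingerprint` and `verify_frozen` are hypothetical, and the demo writes throwaway files to a temporary directory rather than touching real data.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def fingerprint(path: Path) -> str:
    """SHA-256 of the file's bytes; any edit to the file changes the digest."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def verify_frozen(manifest: dict[str, str], root: Path) -> list[str]:
    """Return the names of files whose current digest no longer matches the manifest."""
    return [name for name, digest in manifest.items() if fingerprint(root / name) != digest]


# Demo in a temporary directory so the sketch runs without real data.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "corpus_v1.json").write_text(json.dumps([{"id": "doc-001", "text": "example"}]))
    (root / "queries_v1.json").write_text(json.dumps([{"id": "q-001", "text": "example?"}]))

    # Record digests once, before the first run.
    manifest = {name: fingerprint(root / name) for name in ("corpus_v1.json", "queries_v1.json")}
    unchanged = verify_frozen(manifest, root)

    # Simulate someone rewording a query after the ablation started.
    (root / "queries_v1.json").write_text(json.dumps([{"id": "q-001", "text": "reworded?"}]))
    drifted = verify_frozen(manifest, root)

print(unchanged, drifted)
```

Storing the manifest next to the metrics output lets a later reader confirm that two runs really used the same corpus and queries.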

Record splitter settings with enough detail to reproduce

Every ablation run must emit a config file alongside its metric output. The config must capture the splitter class, its parameters, the embedding model identifier, and the tokenizer — because the tokenizer controls effective chunk length and a mismatch between splitter tokenizer and model tokenizer changes what "512 tokens" actually means.

# ablation_config.yaml: emit one file per run, store alongside metrics output
run_id: "ablation_003"
splitter:
  class: "RecursiveCharacterTextSplitter"    # LangChain class name
  chunk_size: 512                            # in tokens
  chunk_overlap: 64                          # in tokens
  separators: ["\n\n", "\n", ". ", " ", ""]  # separator hierarchy
  tokenizer: "cl100k_base"                   # tiktoken encoding; must match embed model
embedding:
  model: "text-embedding-3-large"
  provider: "openai"
  version: "2024-02-15"                      # pin API version
  dimensions: 3072
vector_db:
  backend: "faiss"
  index_type: "IndexFlatIP"
  distance: "cosine"                         # inner product over L2-normalized vectors
  top_k: 10
corpus:
  file: "corpus_v1.json"
  query_file: "queries_v1.json"

Benchmark the chunking strategies on the same corpus

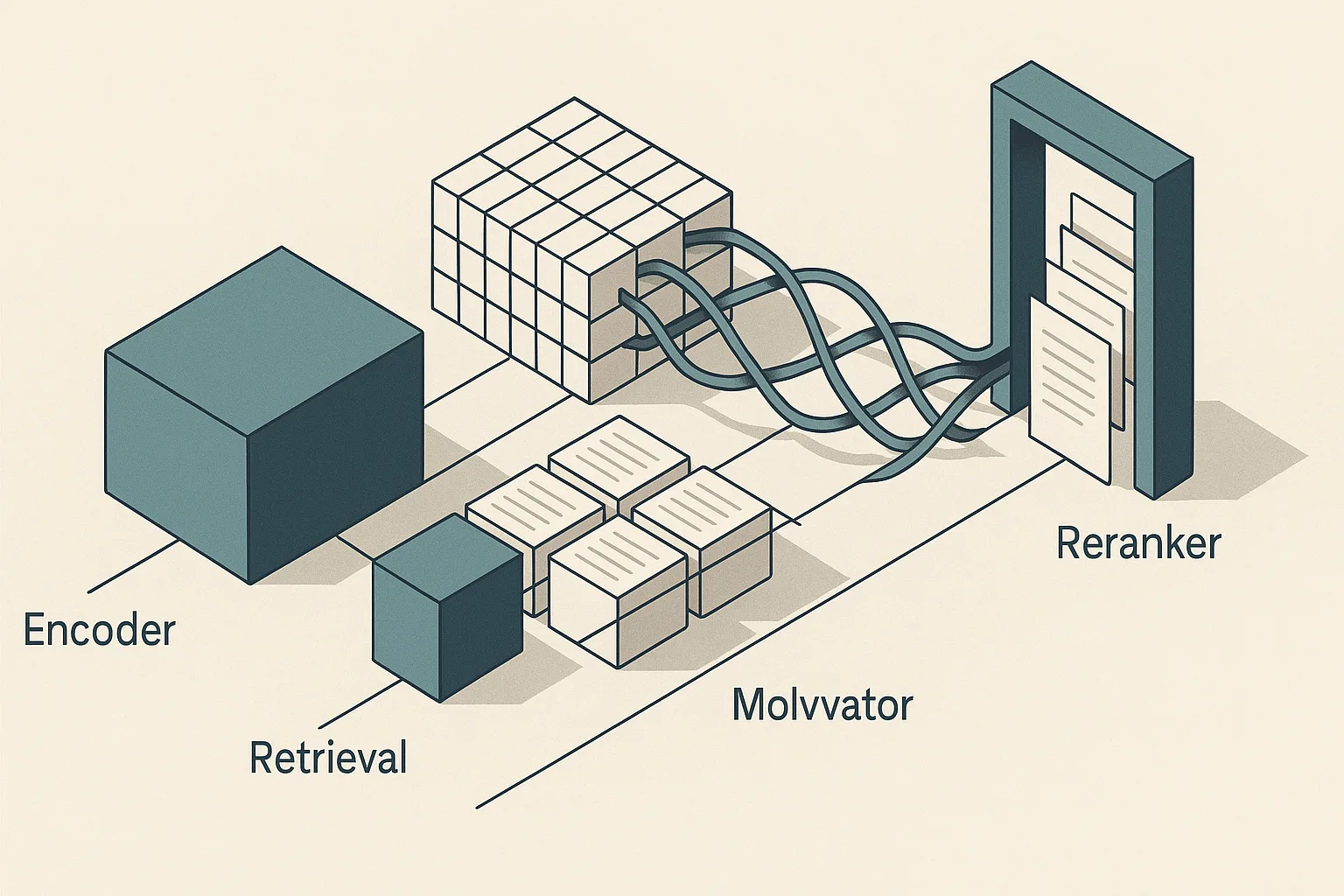

Vectara's NAACL 2025 study — which tested 25 chunking configurations across 48 embedding models — established that chunking and embedding must be evaluated together, not sequentially in isolation. The protocol: fix one embedding model (a mid-tier open model like BAAI/bge-m3 works well as a control), sweep chunking strategies, identify the top two or three configurations by recall@10 and answer accuracy, then sweep embedding models against those candidates.

| Chunking strategy | Description | Typical chunk count (200-doc corpus) |
|---|---|---|
| Recursive character split (512t / 64t overlap) | Splits on "\n\n", "\n", ". ", " " in priority order | ~1,800 |
| Fixed-size (256t / 0t overlap) | Hard token boundaries, no overlap | ~3,200 |
| Semantic (embedding-based boundary detection) | Splits at cosine-similarity drop points | ~2,600 (variable) |
| Structure-aware (header/section boundaries) | Splits on document structural markers | ~900 |

Keep the RAG evaluation harness and the embedding model fixed across this table. Changing either variable while comparing strategies introduces a confound.

Recursive character splitting as the baseline

Recursive character splitting at 512 tokens with 50–100 tokens of overlap is the right starting point for most corpora because it respects natural sentence and paragraph boundaries without requiring a structural parse of the document. It is also the configuration for which the most benchmark evidence exists.

Pro Tip: Treat the 512-token / 50–100-token-overlap setting as a validated baseline, not a universal answer. Premai's 2026 guide calls this "the benchmark-validated default for most RAG applications" and reports 69% answer accuracy on an academic-paper corpus — but that number came from a specific corpus and query distribution. Validate the default against your own data before promoting it to production.

When using LangChain's RecursiveCharacterTextSplitter, keep length_function=len only if you intend chunk_size to be measured in characters. For subword-tokenized models (GPT-family, BERT-family), pass a length_function that counts tokens with tiktoken or the model's HuggingFace tokenizer, so that "512 tokens" means 512 model tokens, not 512 characters.
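The difference between measuring in characters and measuring in tokens is easy to see in a small sketch. The regex tokenizer here is a stand-in assumption so the example runs with the standard library only; it is not any model's real tokenizer, and `approx_token_len` and `fits` are hypothetical helper names.

```python
import re
from typing import Callable

# Stand-in tokenizer (assumption): words and punctuation marks count as tokens.
# A real run would count tokens with the embedding model's own tokenizer.
_TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def approx_token_len(text: str) -> int:
    """Count tokens under the stand-in tokenizer."""
    return len(_TOKEN_PATTERN.findall(text))


def fits(text: str, chunk_size: int, length_function: Callable[[str], int]) -> bool:
    """True if the text is within chunk_size under the given length measure."""
    return length_function(text) <= chunk_size


sample = "Chunk size is measured by whatever length_function returns."
print("characters:", len(sample), "tokens:", approx_token_len(sample))
print("fits 20 by characters:", fits(sample, 20, len))
print("fits 20 by tokens:", fits(sample, 20, approx_token_len))
```

The same sentence is rejected under a 20-character budget and accepted under a 20-token budget. In a real pipeline the equivalent step is to hand the splitter a token-counting callable in place of `len`; check the LangChain documentation for the tokenizer-aware constructors available in your installed version.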

Semantic, sentence, and structure-aware splits

Semantic chunking splits documents at embedding-space similarity drop points, which tends to produce topically coherent fragments. On retrieval-only metrics, semantic chunks frequently outperform fixed-size splits because each chunk is more topically pure — the embedding of the query finds a close match without noise from adjacent topics.
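The boundary rule can be sketched in a few lines. This is a toy illustration under stated assumptions: `toy_embed` is a bag-of-words stand-in for a real sentence-embedding model, and the 0.3 threshold and the sample sentences are arbitrary choices for the demo.

```python
import math
from collections import Counter


def toy_embed(sentence: str) -> Counter:
    """Bag-of-words vector; a stand-in for a real sentence-embedding model."""
    return Counter(sentence.lower().replace(".", "").split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def semantic_chunks(sentences: list[str], threshold: float) -> list[list[str]]:
    """Start a new chunk wherever adjacent-sentence similarity drops below threshold."""
    if not sentences:
        return []
    chunks = [[sentences[0]]]
    for prev, curr in zip(sentences, sentences[1:]):
        if cosine(toy_embed(prev), toy_embed(curr)) < threshold:
            chunks.append([curr])
        else:
            chunks[-1].append(curr)
    return chunks


sentences = [
    "The index stores chunk vectors.",
    "The index returns the nearest chunk vectors.",
    "Invoices are due in thirty days.",
]
for chunk in semantic_chunks(sentences, threshold=0.3):
    print(chunk)
```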

The problem is end-to-end answer accuracy. Premai's 2026 guide reports a 15-point accuracy gap between recursive splitting and semantic splitting on the same 50-paper corpus, with recursive splitting winning. Semantic chunking fragmented multi-step arguments across chunk boundaries, leaving the LLM without the full evidence chain needed to answer correctly.

Watch Out: Metric inversion is the dominant failure mode for semantic chunking. A configuration that maximizes recall@10 and MRR can simultaneously reduce answer accuracy if the retrieved chunks are topically precise but too short to contain complete evidence. As Premai's 2026 guide documents: "retrieval-only metrics can make semantic chunking look best while end-to-end answer accuracy can favor larger, structure-respecting chunks." Always compute answer accuracy before declaring a winner.

Structure-aware splits — splitting at section headers, <h2> tags, or document schema boundaries — work well on highly structured corpora (API documentation, legal contracts, standardized reports). On heterogeneous prose, structural markers are sparse, and the strategy degrades to large, poorly bounded chunks.

Chunk size and overlap sweeps that actually matter

A full factorial sweep of chunk sizes × overlap values × chunking strategies produces hundreds of combinations. Prune the grid by running recall@10 and MRR first, then computing answer accuracy only for configurations that clear a recall threshold.

| Chunk size / overlap (tokens) | Role | Rationale for inclusion |
|---|---|---|
| 256 / 0 | High-fragmentation baseline | Isolates the cost of lost context |
| 256 / 32 | Short chunk with minimal overlap | Tests whether overlap rescues short-chunk recall |
| 512 / 64 | Primary baseline | Benchmark-validated default |
| 512 / 128 | Heavier overlap | Tests overlap-driven recall gain |
| 1024 / 128 | Large chunk | Tests answer-accuracy ceiling for long context |

Pruning rule: drop any configuration where recall@10 falls more than 5 points below the best configuration in its size class. Run answer accuracy only on the survivors — typically two or three configurations. This keeps the embedding model re-embedding cost bounded because you run fewer full-corpus re-index cycles.
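The pruning rule translates directly into code. In this sketch the recall values are made up for illustration, "size class" is taken to mean configurations sharing a chunk size, and `prune` is a hypothetical helper name.

```python
from collections import defaultdict

# Hypothetical recall@10 results keyed by (chunk_size, overlap); values are made up.
recall_at_10 = {
    (256, 0): 0.70,
    (256, 32): 0.78,
    (512, 64): 0.81,
    (512, 128): 0.82,
    (1024, 128): 0.77,
}


def prune(results: dict[tuple[int, int], float], max_gap: float = 0.05) -> list[tuple[int, int]]:
    """Keep configs within max_gap of the best recall in their chunk-size class."""
    by_size = defaultdict(dict)
    for (size, overlap), recall in results.items():
        by_size[size][(size, overlap)] = recall

    survivors = []
    for configs in by_size.values():
        best = max(configs.values())
        # Small epsilon so a gap of exactly max_gap is not dropped by float rounding.
        survivors += [cfg for cfg, r in configs.items() if best - r <= max_gap + 1e-9]
    return sorted(survivors)


print(prune(recall_at_10))
```

With these numbers only 256 / 0 is dropped, because it trails 256 / 32 by 8 points. Answer accuracy would then be computed for the remaining configurations only.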

Benchmark the embedding models against the same chunks

Once you have identified the top one or two chunking configurations by the joint recall + accuracy criterion, freeze those chunks and sweep embedding models. OpenAI's text-embedding-3-large is described by OpenAI as their "Most capable embedding model" and produces 3072-dimensional vectors. Cohere Embed v3 adds document-type metadata to the embedding process. Open models — BAAI/bge-m3, nomic-embed-text-v1.5, and E5-large-v2 — run self-hosted with no per-token billing.

| Model | Dims | MTEB avg | Hosted cost | Re-embed 100k tokens |
|---|---|---|---|---|
| text-embedding-3-large | 3072 | ~64.6 | ~$0.13/M tokens | ~$0.013 |
| Cohere Embed v3 | 1024 | ~64.5 | ~$0.10/M tokens | ~$0.010 |
| BAAI/bge-m3 | 1024 | ~63.7 | $0 (self-hosted) | GPU amortized |
| nomic-embed-text-v1.5 | 768 | ~62.0 | $0 (self-hosted) | GPU amortized |
| E5-large-v2 | 1024 | ~61.5 | $0 (self-hosted) | GPU amortized |

Important: MTEB averages are drawn from public leaderboard positions as of May 2026. These scores reflect benchmark suite performance, not your corpus. Treat them as a starting prior, not a final ranking.

Fixing your chunking configuration and varying the embedding model while keeping the vector database index type constant lets you attribute recall differences to the model, not to index behavior. Rebuild the index from scratch for each model — do not reuse an index built with a different model's vectors.

How to rank models beyond MTEB scores

MTEB aggregate scores measure performance across dozens of heterogeneous tasks. Your corpus is one task, and MTEB performance on that task can deviate materially from the aggregate, especially in specialized domains (biomedical, legal, code, multilingual).

Pro Tip: Rank embedding models on your own corpus recall@10, domain vocabulary coverage, and inference latency — in that order. A model that ranks 5th on MTEB but was pre-trained on domain-adjacent text can outperform the MTEB leader on your retrieval task. Always run at minimum two models against your frozen query set before committing to an embedding model for production.

Latency matters operationally: bge-m3 on an NVIDIA H100 sustains high batch throughput at zero per-token cost. text-embedding-3-large adds network round-trip time and per-token billing but eliminates infrastructure management.

The open-model path is also easier to validate with tools from the sentence-transformers ecosystem when you need local evaluation, cosine-similarity inspection, or quick swaps between encoder checkpoints.

Batch cost and re-embedding time when switching models

Every model switch requires a full corpus re-embed and vector database reindex. For hosted models, OpenAI and Cohere bill by input token, so the cost is deterministic given your corpus token count. Estimate time and cost before committing to an experiment plan.

# Estimate re-embedding duration and cost for a hosted model
$ python - <<'EOF'
corpus_tokens = 8_500_000        # total tokens across all chunks; measure with your tokenizer
measured_throughput = 1_200_000  # tokens per second; replace with an empirically measured sustained throughput for your model and hardware
price_per_million = 0.13         # USD per 1M tokens; approximate rate quoted in this article, verify at the official pricing source before budgeting
duration_sec = corpus_tokens / measured_throughput
cost_usd = corpus_tokens / 1_000_000 * price_per_million
print(f"Estimated duration: {duration_sec:.1f}s ({duration_sec/60:.1f} min)")
print(f"Estimated cost: ${cost_usd:.2f}")
EOF

For self-hosted models on an H100, the marginal re-embedding cost is compute time, not API dollars. The trade-off is infrastructure setup and maintenance. Include vector database write time (Pinecone upserts, Qdrant batch inserts) in the estimate — for corpora above 5M tokens, write time can exceed embedding time on hosted vector DBs with rate limits.

Read the results as a trade-off, not a single score

Retrieval recall@10 and end-to-end answer accuracy measure different things, and optimizing one without watching the other produces a pipeline that looks good in retrieval evaluation and fails in production. Premai's 2026 guide explicitly separates these metrics and shows they can diverge across the same corpus.

| Configuration | Recall@10 | MRR | Answer Acc. | Re-embed cost |
|---|---|---|---|---|
| Semantic / bge-m3 | 0.84 | 0.71 | 0.54 | $0 |
| 256t-fixed / bge-m3 | 0.79 | 0.66 | 0.58 | $0 |
| 512t-recursive / bge-m3 | 0.81 | 0.68 | 0.69 | $0 |
| 512t-recursive / text-emb-3-large | 0.85 | 0.73 | 0.72 | ~$1.10 |

Note: Numbers above are illustrative of the inversion pattern documented in Premai's 2026 guide and Vectara's NAACL 2025 study. Run your own ablation on your corpus — do not use these as production targets.

Decision Matrix — top combinations:

- Choose semantic / any model when your application demands high retrieval precision (MRR matters most) and the LLM can reconstruct complete answers from fragmented context (e.g., fact-lookup, named-entity extraction).

- Choose 512t-recursive / open model (bge-m3) when answer accuracy is the primary production metric, corpus re-embedding cost matters, and latency is constrained. This is the best-cost configuration for most corpora.

- Choose 512t-recursive / text-embedding-3-large when both recall@10 and answer accuracy must be maximized and per-token embedding model cost is acceptable.

- Choose structure-aware splits / any model when your corpus is highly structured (API docs, legal contracts) and section-level answers are expected — validate recall@10 carefully because chunk count drops substantially.

When larger chunks help answer accuracy

Larger, structure-respecting chunks improve answer accuracy when the correct answer depends on multiple adjacent facts — a derivation, a multi-step procedure, a clause-dependent legal interpretation. The RAG evaluation finding from Premai's 2026 guide is direct: "end-to-end answer accuracy can favor larger, structure-respecting chunks."

Pro Tip: Before reducing chunk size to improve recall@10, verify whether your corpus has section-level dependencies — answers that require two or three consecutive paragraphs to be complete. If it does, shrinking chunks below 400 tokens will fragment evidence and degrade answer correctness even if retrieval metrics improve. Long-context evidence capture is the mechanism; validate it against your query set before changing the chunk size.

When smaller or smarter chunks help recall@10

Tighter segmentation improves recall@10 when queries are narrow and the relevant passage is a single sentence or short fact embedded in a long document. The embedding model then matches the query to a focused chunk without noise from surrounding text.

Watch Out: Optimizing only for recall@10 on a chunking ablation is dangerous. If recall@10 rises while answer accuracy falls, the chunking configuration is too aggressive — it retrieves topically relevant fragments but strips the surrounding context the LLM needs to generate a correct answer. A 4-point recall gain that costs 10 points of answer accuracy is not a net improvement for a user-facing system.
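This check is easy to automate once both metrics sit side by side. The sketch below reuses the illustrative numbers from the results table earlier in this article (they are not measurements), and `inversions` is a hypothetical helper name.

```python
# Illustrative numbers copied from the results table in this article, not measurements.
results = {
    "semantic / bge-m3":       {"recall_at_10": 0.84, "answer_acc": 0.54},
    "256t-fixed / bge-m3":     {"recall_at_10": 0.79, "answer_acc": 0.58},
    "512t-recursive / bge-m3": {"recall_at_10": 0.81, "answer_acc": 0.69},
}


def inversions(results: dict, baseline: str) -> list[str]:
    """Configs that beat the baseline on recall@10 but lose to it on answer accuracy."""
    base = results[baseline]
    return [
        name
        for name, m in results.items()
        if name != baseline
        and m["recall_at_10"] > base["recall_at_10"]
        and m["answer_acc"] < base["answer_acc"]
    ]


print(inversions(results, baseline="512t-recursive / bge-m3"))
```

Against the 512t-recursive baseline, only the semantic configuration is flagged: it gains 3 points of recall@10 and loses 15 points of answer accuracy.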

Choose the chunking plus embedding setup for production

Select the production configuration by weighing recall@10, answer accuracy, re-embedding cost, inference latency, and corpus update frequency together. A vector database that requires full reindex on embedding model changes (most ANN indexes do) makes model switching expensive at scale — factor that into the decision before choosing a hosted embedding model that may be deprecated or repriced.

Decision Matrix — by corpus type and constraints:

- General mixed-format corpus, no strict latency requirement: 512t-recursive + bge-m3 or nomic-embed-text-v1.5 (self-hosted). Best cost/accuracy ratio; no per-token ingestion cost.

- Technical or scientific corpus, answer accuracy is critical: 512t-recursive + text-embedding-3-large or Cohere Embed v3. Pay for hosted embeddings; re-embed budget per corpus refresh.

- Highly structured corpus (docs, contracts): Structure-aware splits + bge-m3. Validate chunk count and recall@10 carefully.

- Multilingual corpus: bge-m3 (natively multilingual) + recursive split. text-embedding-3-large also supports multilingual text, but at a per-token cost.

- High-throughput ingestion pipeline (>50M tokens/day): Self-hosted bge-m3 or E5-large-v2 on H100 to eliminate per-token API cost at scale.

A practical default for most corpora

Recursive character splitting at 512 tokens with 50–100 tokens of overlap, combined with a strong open embedding model like BAAI/bge-m3, is the safest starting point for a new RAG pipeline.

Bottom Line: Start with LangChain's RecursiveCharacterTextSplitter at chunk_size=512, chunk_overlap=64, separator hierarchy ["\n\n", "\n", ". ", " ", ""], and BAAI/bge-m3 for embeddings. This combination matches the benchmark-validated default cited above and carries no per-token API cost when self-hosted. Override it when your corpus has strong structural boundaries (use structure-aware splits), when queries depend on multi-paragraph evidence chains (increase chunk size to 768–1024), or when domain-specific recall is materially below baseline on your own query set (sweep hosted models). Always validate against your frozen query set before shipping.

When to pay for hosted embeddings versus open models

The decision is primarily about corpus size, update frequency, and operational complexity — not raw quality, because the quality gap between top hosted and top open models is narrow on most corpora.

| Factor | Hosted (text-embedding-3-large, Cohere Embed v3) | Self-hosted (bge-m3, E5-large-v2, nomic) |
|---|---|---|
| Per-token ingestion cost | ~$0.10–$0.13 per million tokens | $0 marginal; GPU infra cost amortized |
| Re-embedding on corpus refresh | Predictable API cost; no infra ops | GPU time; requires serving pipeline |
| Operational complexity | Low; no model serving | Higher; batching, versioning, hardware |
| Model deprecation risk | High; API pricing/versions change | Low; weights are pinned locally |
| Throughput ceiling | API rate limits apply | H100 batch throughput, no external limit |

For a 5M-token corpus re-embedded weekly, hosted embedding cost is approximately $0.65/week at the ~$0.13 per million tokens quoted above for text-embedding-3-large, which is negligible. At 500M tokens weekly, the bill is about $65/week, the scale at which self-hosted infrastructure becomes worth evaluating. The vector database reindex cost (Pinecone, Qdrant) is separate and scales with upsert volume, not token count.
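The weekly figure is simply tokens divided by one million, multiplied by the per-million rate. A minimal sketch of that arithmetic follows, assuming the approximate $0.13 per million tokens listed for text-embedding-3-large in the table above; `weekly_embedding_cost` is a hypothetical helper name, and the rate should be verified against the provider's current pricing page.

```python
def weekly_embedding_cost(tokens_per_week: int, usd_per_million_tokens: float) -> float:
    """API cost in USD for re-embedding tokens_per_week tokens once per week."""
    return tokens_per_week / 1_000_000 * usd_per_million_tokens


# Assumed rate: the approximate text-embedding-3-large price quoted in this article.
# Verify against the provider's current pricing page before budgeting.
RATE = 0.13

for tokens in (5_000_000, 500_000_000):
    print(f"{tokens:>11,} tokens/week -> ${weekly_embedding_cost(tokens, RATE):,.2f}")
```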

FAQ on chunking and embedding benchmarks

What is the best chunk size for RAG? There is no universal optimum. Premai's 2026 guide recommends 512 tokens with 50–100 tokens of overlap as a benchmark-validated starting point, but the right size depends on your corpus structure and whether answer accuracy or retrieval recall is your primary metric. Larger chunks (768–1024 tokens) tend to improve answer accuracy on evidence-dense corpora; smaller chunks (256 tokens) can improve recall@10 on fact-lookup tasks.

How do you evaluate chunking strategies in RAG? Fix the corpus, query set, and scoring pipeline, then measure recall@10, MRR, and end-to-end answer accuracy independently for each chunking configuration while holding the embedding model constant. Do not select a winner from retrieval metrics alone — check answer accuracy before promoting any configuration.

Pro Tip: Use at least 50 gold QA pairs per corpus type, and treat that number as a floor rather than a target. With 50 queries or fewer, a 5-point recall difference is typically within sampling noise. Vectara's NAACL 2025 study used large-scale query sets precisely because small deltas in retrieval quality only become statistically meaningful at scale.
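A rough sense of the noise floor comes from the standard error of a proportion. The sketch below uses the normal approximation and an assumed observed recall@10 of 0.80. It treats each query as an independent hit-or-miss trial, which is a simplification, and `recall_margin` is a hypothetical helper name.

```python
import math


def recall_margin(recall: float, n_queries: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a hit-rate recall (normal approximation)."""
    return z * math.sqrt(recall * (1.0 - recall) / n_queries)


# Assumed observed recall@10 of 0.80; only the query-set size varies.
for n in (50, 200, 1000):
    print(f"n={n:>4}: 0.80 +/- {recall_margin(0.80, n):.3f}")
```

At 50 queries the margin is roughly 11 points, at 200 roughly 5.5 points, and at 1,000 roughly 2.5 points. Comparing two configurations on the same frozen queries is usually somewhat tighter than these single-run margins suggest, but the direction of the conclusion holds: small query sets cannot resolve small deltas.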

Does chunk size affect embedding quality? Yes, indirectly. Most embedding models have a context window of 512–8192 tokens. Chunks that exceed the model's effective window get truncated, which degrades the embedding's representation of the full chunk. Chunks that are very short (under 50 tokens) lose contextual signal. Match chunk size to the model's effective context range, and verify with your tokenizer that measured token counts align with model token counts.

Which embedding model is best for RAG? No single model dominates every domain. MTEB leaderboard positions are a useful prior: text-embedding-3-large and Cohere Embed v3 lead on aggregate benchmarks as of May 2026, while BAAI/bge-m3 is the strongest self-hosted option. The reliable answer for your pipeline comes from running at least two models against your own frozen query set on your own corpus — not from reading a leaderboard.

Sources & References

- Premai 2026 RAG Chunking Benchmark Guide — Primary synthesis of Vectara's NAACL 2025 study; source for the 9-point retrieval quality delta, metric inversion findings, and 512-token default recommendation.

- Premai 2026 Production RAG Architecture Guide — Source for the 69% accuracy / 15-point gap between recursive and semantic chunking on 50 academic papers; 43-token average fragment size for semantic chunking.

- OpenAI Embeddings Guide — Official documentation for text-embedding-3-large; billing model (per input token) and API versioning.

- OpenAI Model Page: text-embedding-3-large — Source for "Most capable embedding model" designation and dimension specification.

- OpenAI API Pricing — Per-token pricing for embedding models; authoritative reference for cost estimates.

- BAAI/bge-m3 on HuggingFace — Model card for the BAAI multilingual embedding model.

- nomic-embed-text-v1.5 on HuggingFace — Model card for the Nomic self-hosted embedding model.

- E5-large-v2 on HuggingFace — Model card for the E5 embedding model.

Keywords: LangChain, LlamaIndex, sentence-transformers, OpenAI text-embedding-3-large, Cohere Embed v3, BAAI bge-m3, nomic-embed-text-v1.5, E5-large-v2, MTEB, recall@10, MRR, NVIDIA H100, FAISS, Pinecone, Qdrant