What BGE-M3 and BGE Reranker claim to solve

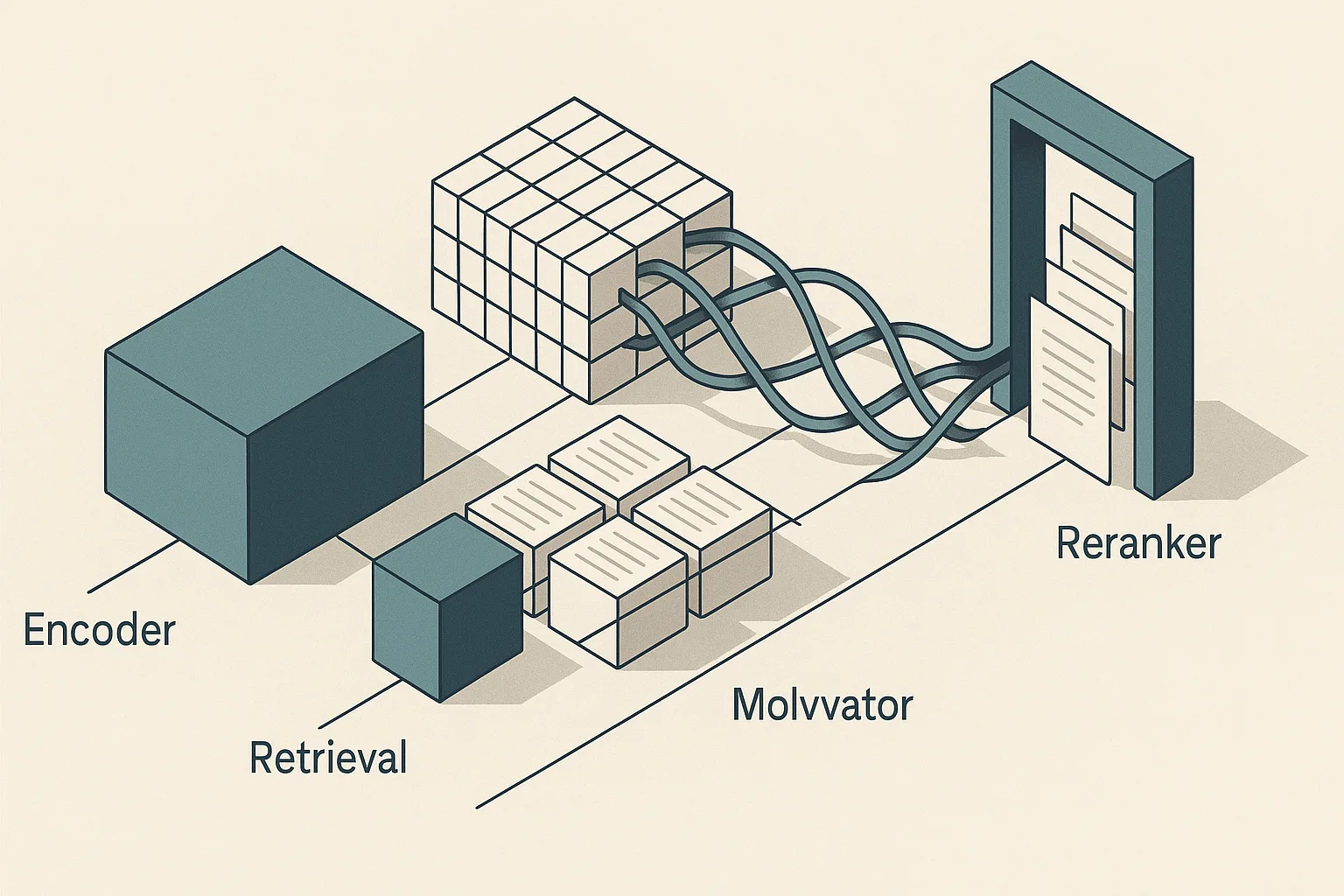

Bottom Line: BGE-M3 is a single embedding model that simultaneously produces dense vectors, sparse/lexical signals, and multi-vector (ColBERT-style) token representations from the same encoder, over 100+ languages and inputs up to 8,192 tokens. The BGE Reranker is a distinct second-stage model that re-scores a candidate set already retrieved by the first stage. The benchmark story only becomes actionable when you treat these two components as addressing different failure modes — dense retrieval misses exact-match entities, lexical retrieval misses semantic paraphrases, and reranking cannot recover candidates that were never retrieved. Using any one component as a substitute for the others leaves precision or recall on the table.

BGE-M3 is used for first-stage retrieval when you need one encoder to cover semantic, lexical, and token-level matching. The model card frames BGE-M3's design philosophy directly: "M3 stands for multilinguality, multi-granularity, and multi-functionality." — BAAI BGE-M3 model card. The paper extends that claim: the model "can simultaneously accomplish the three common retrieval functionalities: dense retrieval, multi-vector retrieval, and sparse retrieval" — BGE-M3, arXiv:2402.03216. That sentence does real architectural work: it means a single inference call can feed three parallel indexing strategies rather than requiring three separate models.

The BGE Reranker family (base and large variants) is positioned orthogonally. Its model card identifies it as a reranking model distinct from the embedding retrieval line, designed to re-rank candidate passages rather than generate the first-stage index. Nothing in the official documentation frames the reranker as an alternative to strong first-stage retrieval; the framing throughout is second-stage precision refinement after candidate generation.

How the benchmark evidence was framed

The benchmark evidence for BGE-M3 and BGE Reranker comes from five evaluation families cited in the model card: BEIR, C-MTEB, MIRACL, MKQA, and a LlamaIndex evaluation. Each measures a different slice of retrieval quality, and conflating their scores produces misleading conclusions. These are benchmark-family results, not a production deployment evaluation, and independent replication is needed to validate them on any specific domain corpus.

BEIR was designed precisely as "A Heterogenous Benchmark for Zero-shot Evaluation of Information Retrieval Models" — its 18 datasets span document types, query styles, and domains that most retrieval models were not fine-tuned on, making it a meaningful stress test for generalization. MTEB, the parent framework from which C-MTEB is derived, "spans 8 embedding tasks covering a total of 58 datasets and 112 languages" — providing cross-lingual and cross-task coverage that a single-domain evaluation cannot. MIRACL and MKQA extend coverage to multilingual and cross-lingual retrieval specifically.

| Benchmark family | Primary scope | Key metric | What it tests for BGE-M3 |
|---|---|---|---|
| BEIR (18 datasets) | Zero-shot IR generalization | nDCG@10 | Dense + sparse generalization to unseen domains |
| C-MTEB/Retrieval | Chinese + multilingual embedding tasks | nDCG@10 | Cross-lingual retrieval quality |
| MIRACL | Multilingual retrieval (16+ languages) | nDCG@10 | Non-English first-stage recall |
| MKQA | Cross-lingual open-domain QA | Recall@k | Multilingual answer retrieval |
| LlamaIndex eval | RAG pipeline quality | Various | End-to-end answer relevance in RAG context |

No single benchmark in this table proves production-level system performance. BEIR scores, for instance, reflect zero-shot retrieval without domain-specific fine-tuning — a condition that rarely holds in a production deployment where labeled data usually exists. BGE Reranker benchmark reporting is primarily anchored to C-MTEB, and the reranker documentation ties it to that evaluation frame rather than to BEIR directly.

Which retrieval tasks and languages matter most

BGE-M3 supports more than 100 working languages, as stated in both the model card and the paper abstract — "It can support more than 100 working languages" — BGE-M3, arXiv:2402.03216. The benchmark selection in the model card reflects that: MIRACL covers 16+ languages specifically chosen for low-resource and non-Latin-script coverage, while C-MTEB includes Chinese retrieval tasks absent from English-centric BEIR.

The relevance of language breadth to benchmark selection is architectural, not cosmetic. MTEB's 112-language span means that a model claiming multilingual parity must be evaluated across script diversity, morphological complexity, and resource availability — not just on high-resource European languages where most embedding models already perform well. "Particularly, M3-Embedding is proficient in multi-linguality..." — BGE-M3, arXiv:2402.03216.

Pro Tip: When evaluating BGE-M3 for a multilingual deployment, prioritize reproducing MIRACL results on the specific languages in your corpus first. The 100+ language coverage claim represents model-card and benchmark scope; performance variance across languages within that set can be substantial, and low-resource languages near the tail of the training distribution will not match high-resource performance.

Why nDCG@10 and recall curves are the right lens here

nDCG@10 and recall@k measure different retrieval stages and should not be treated as interchangeable. Recall@k captures whether the relevant document appears anywhere in the top-k candidates — it is the right lens for evaluating first-stage retrieval, because if a relevant document is absent from the candidate set, no reranker can recover it. nDCG@10 captures ranked precision within the top 10 — it is the right lens for evaluating how well a reranker re-orders a candidate set where relevant documents are already present.

Normalized Discounted Cumulative Gain at cutoff $k$ is defined as:

$$\text{nDCG@}k = \frac{\text{DCG@}k}{\text{IDCG@}k}, \quad \text{where } \text{DCG@}k = \sum_{i=1}^{k} \frac{2^{rel_i} - 1}{\log_2(i+1)}$$

Here $rel_i$ is the graded relevance of the document at rank $i$, and IDCG@$k$ is the DCG of the ideal ranking. The logarithmic discount penalizes relevant documents that appear lower in the ranked list, which is precisely why a reranker that moves a relevant document from rank 8 to rank 2 registers as a substantial nDCG@10 improvement without affecting recall@k at all.
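To make the rank-8-to-rank-2 case concrete, here is a minimal Python sketch of nDCG@k and recall@k following the definition above. The two ranked lists are hypothetical, and for brevity the ideal ranking is computed from the retrieved list only, which assumes every relevant document is already in that list.

```python
import math

def dcg_at_k(relevances, k):
    """DCG@k with the exponential gain (2^rel - 1) and log2(rank + 1) discount."""
    return sum(
        (2 ** rel - 1) / math.log2(rank + 1)
        for rank, rel in enumerate(relevances[:k], start=1)
    )

def ndcg_at_k(relevances, k):
    """nDCG@k: DCG of the given ranking divided by DCG of the ideal ordering.

    Simplification: the ideal ordering is built from the retrieved list itself.
    """
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0

def recall_at_k(relevances, k, total_relevant):
    """Fraction of all relevant documents that appear in the top k."""
    if total_relevant == 0:
        return 0.0
    return sum(1 for rel in relevances[:k] if rel > 0) / total_relevant

# Hypothetical top-10 list with one relevant document at rank 8.
before = [0, 0, 0, 0, 0, 0, 0, 1, 0, 0]
# Same candidates after reranking: the relevant document is now at rank 2.
after = [0, 1, 0, 0, 0, 0, 0, 0, 0, 0]

print("nDCG@10  :", ndcg_at_k(before, 10), "->", ndcg_at_k(after, 10))
print("recall@10:", recall_at_k(before, 10, 1), "->", recall_at_k(after, 10, 1))
```

Under these assumptions nDCG@10 doubles (from about 0.32 to about 0.63) while recall@10 stays at 1.0, which is the signature of a pure reordering gain.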

BEIR reports nDCG@10 as its primary metric across datasets, making it the correct frame for comparing first-stage retrieval methods against each other and for measuring the additive lift of a reranker. A benchmark delta visible only in nDCG@10 but not in recall@k means the model improves ranking precision, not candidate coverage. A delta visible in both means the model actually retrieves more relevant documents in the first place.

What the benchmark story says about BGE-M3

BGE-M3's central claim is that three retrieval modes — dense, sparse/lexical, and multi-vector — emerge from one encoder without requiring separate models or fine-tuning per mode. "It can simultaneously accomplish the three common retrieval functionalities: dense retrieval, multi-vector retrieval, and sparse retrieval" — BGE-M3, arXiv:2402.03216. The practical implication is that a single inference call generates all three representations, which means hybrid retrieval pipelines can draw on dense vectors, sparse token weights, and token-level ColBERT scores without a zoo of specialized models.
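As a rough illustration of the single-call claim, the sketch below requests all three representations through the `FlagEmbedding` package's `BGEM3FlagModel`. The parameter names and output keys reflect my understanding of that package's documented interface; treat them as assumptions and check them against the version you install. The helper names `load_bge_m3` and `encode_all_modes` are hypothetical.

```python
def load_bge_m3():
    """Load BGE-M3 via FlagEmbedding (downloads the weights on first use)."""
    # Imported lazily because the dependency is heavy and optional here.
    from FlagEmbedding import BGEM3FlagModel
    return BGEM3FlagModel("BAAI/bge-m3", use_fp16=True)

def encode_all_modes(model, texts):
    """Ask for dense, sparse, and multi-vector outputs in one encode call."""
    out = model.encode(
        texts,
        return_dense=True,
        return_sparse=True,
        return_colbert_vecs=True,
    )
    return {
        "dense": out["dense_vecs"],           # one vector per text
        "sparse": out["lexical_weights"],     # per-text mapping of token id to weight
        "multi_vector": out["colbert_vecs"],  # one vector per token, per text
    }

# Usage (requires the model download, so it is left commented out):
# reps = encode_all_modes(load_bge_m3(), ["What is BGE-M3?"])
```

Each of the three outputs can then feed its own index, for example an ANN index for the dense vectors and an inverted index for the sparse weights.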

The long-input capability matters for retrieval quality on chunked documents. The model card describes it as "Multi-Granularity: It is able to process inputs of different granularity, spanning from short sentences to long documents of up to 8192 tokens" (BAAI BGE-M3 model card). Many dense embedding models cap inputs at 512 tokens; at 8,192 tokens, BGE-M3 can encode whole documents or long passages as single units, reducing the chunking artifacts that degrade retrieval on technical documentation, legal text, or scientific papers.

| Benchmark family | Retrieval mode(s) | Verified claim | What it tests |
|---|---|---|---|
| BEIR | Dense + sparse | Zero-shot generalization across 18 datasets | Whether one encoder remains robust on unseen IR tasks |
| C-MTEB | Dense + sparse + reranking-adjacent retrieval framing | Chinese and multilingual retrieval tasks | Cross-lingual retrieval quality and ranking stability |
| MIRACL | Dense + sparse | 16+ multilingual retrieval settings | Non-English first-stage recall |
| MKQA | Dense retrieval | Cross-lingual open-domain QA | Multilingual answer retrieval |
| Model card | Multi-vector + long input | Inputs up to 8,192 tokens | Whether long documents can be encoded without aggressive chunking |

The benchmark families cited in the model card (BEIR, C-MTEB, MIRACL, MKQA, and the LlamaIndex evaluation) collectively test these modes across zero-shot generalization, multilingual retrieval, and RAG-style evaluation. The model card reports new SOTA on multilingual and cross-lingual benchmarks, but these claims come from BAAI's own benchmark reporting and warrant independent reproduction before being treated as universal.

Dense retrieval strengths and where it still misses

Dense retrieval with BGE-M3 targets semantic similarity — queries and documents are projected into a shared embedding space, and approximate nearest-neighbor search surfaces semantically related passages regardless of surface-level lexical overlap. This is exactly where BM25 fails: a query like "cardiac arrest resuscitation protocol" may miss documents that use "CPR" or "defibrillation sequence" exclusively, because BM25 scores term frequency directly without modeling synonymy or paraphrase.

The paper frames dense retrieval as one of three retrieval functions rather than a complete substitute for lexical or multi-vector modes — BGE-M3, arXiv:2402.03216 — which is itself a signal that BAAI's own evaluation found cases where dense retrieval alone is insufficient. The failure modes are consistent with the broader IR literature: dense models struggle with rare entities (product serial numbers, gene identifiers, legal citation codes) where the query is an exact string rather than a semantic concept, and they can exhibit term drift on short, highly specific queries where the embedding space smears signal across neighbors.

| Query type | BGE-M3 dense | BM25 | Winner |
|---|---|---|---|
| Semantic paraphrase ("heart attack" → "myocardial infarction") | Strong: embedding captures synonymy | Weak: term mismatch | Dense |
| Exact entity match ("CVE-2023-44487") | Weak: rare token, low training signal | Strong: exact term match | Lexical |
| Multilingual query → English corpus | Strong: cross-lingual embedding | Fails: no term overlap | Dense |
| Short exact-match product code | Weak: embedding space dilutes signal | Strong: TF scoring | Lexical |
| Long-passage semantic similarity | Moderate at 8k tokens | Fails on synonym-heavy passages | Dense |

The honest read: dense retrieval with BGE-M3 outperforms BM25 on semantic and cross-lingual tasks; BM25 retains an advantage on exact-match entity queries. Neither dominates every regime — which is the core argument for hybrid first-stage retrieval.

Lexical retrieval and sparse signals in the same model

BGE-M3's sparse retrieval mode generates learned term weights rather than raw BM25 term frequencies. The key distinction is that these are not the static inverse document frequency weights of classical BM25 — they are contextualized sparse weights produced by the same encoder that generates dense vectors, meaning the sparse representation can upweight semantically important terms even if their raw frequency is low.

This has a specific practical benefit: the sparse signals live alongside the dense vectors in a single model call, enabling a hybrid index (inverted + ANN) from one set of model outputs. For exact-match entities, rare technical terms, or queries where the user has strong lexical intent, the sparse channel captures signal that the dense channel dilutes.
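One way to picture how the two channels combine is a weighted sum of a dense cosine score and a sparse lexical-match score. The sketch below is an illustration under stated assumptions, not BGE-M3's own fusion method: the vectors, token weights, and the `alpha` mixing weight are all hypothetical toy values, and the sparse score is taken as the sum of weight products over shared tokens.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def lexical_match(query_weights, doc_weights):
    """Sum of weight products over tokens present in both query and document."""
    return sum(w * doc_weights[tok] for tok, w in query_weights.items() if tok in doc_weights)

def hybrid_score(q_dense, d_dense, q_sparse, d_sparse, alpha=0.5):
    """Weighted sum of dense and sparse scores; alpha is a hypothetical tuning knob."""
    return alpha * cosine(q_dense, d_dense) + (1 - alpha) * lexical_match(q_sparse, d_sparse)

# Toy query containing an exact identifier.
q_dense, q_sparse = [1.0, 0.0], {"cve-2023-44487": 0.9}
# doc_a is semantically close but never mentions the identifier.
doc_a = {"dense": [0.9, 0.1], "sparse": {"vulnerability": 0.4}}
# doc_b is less similar in embedding space but contains the exact token.
doc_b = {"dense": [0.6, 0.8], "sparse": {"cve-2023-44487": 0.8}}

for name, doc in (("doc_a", doc_a), ("doc_b", doc_b)):
    print(name,
          "dense:", round(cosine(q_dense, doc["dense"]), 3),
          "hybrid:", round(hybrid_score(q_dense, doc["dense"], q_sparse, doc["sparse"]), 3))
```

In this toy setup dense-only scoring prefers `doc_a`, while the hybrid score prefers `doc_b`. The two score types live on different scales, so the mixing weight (or a normalization step) would need tuning on your own data.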

Pro Tip: When the primary failure mode in your retrieval evaluation is exact-match recall on rare or domain-specific terms (product SKUs, clinical codes, proper names), run BGE-M3's sparse mode in hybrid with dense before adding a reranker. Sparse signals in the same model avoid the tokenization mismatches that arise when combining a separate BM25 index (which uses a different tokenizer vocabulary) with a neural embedding index.

Official sources do not quantify per-dataset sparse-only scores separately from the hybrid results, so any claim about the isolated lift from BGE-M3's sparse mode versus classical BM25 requires local evaluation against your own corpus.

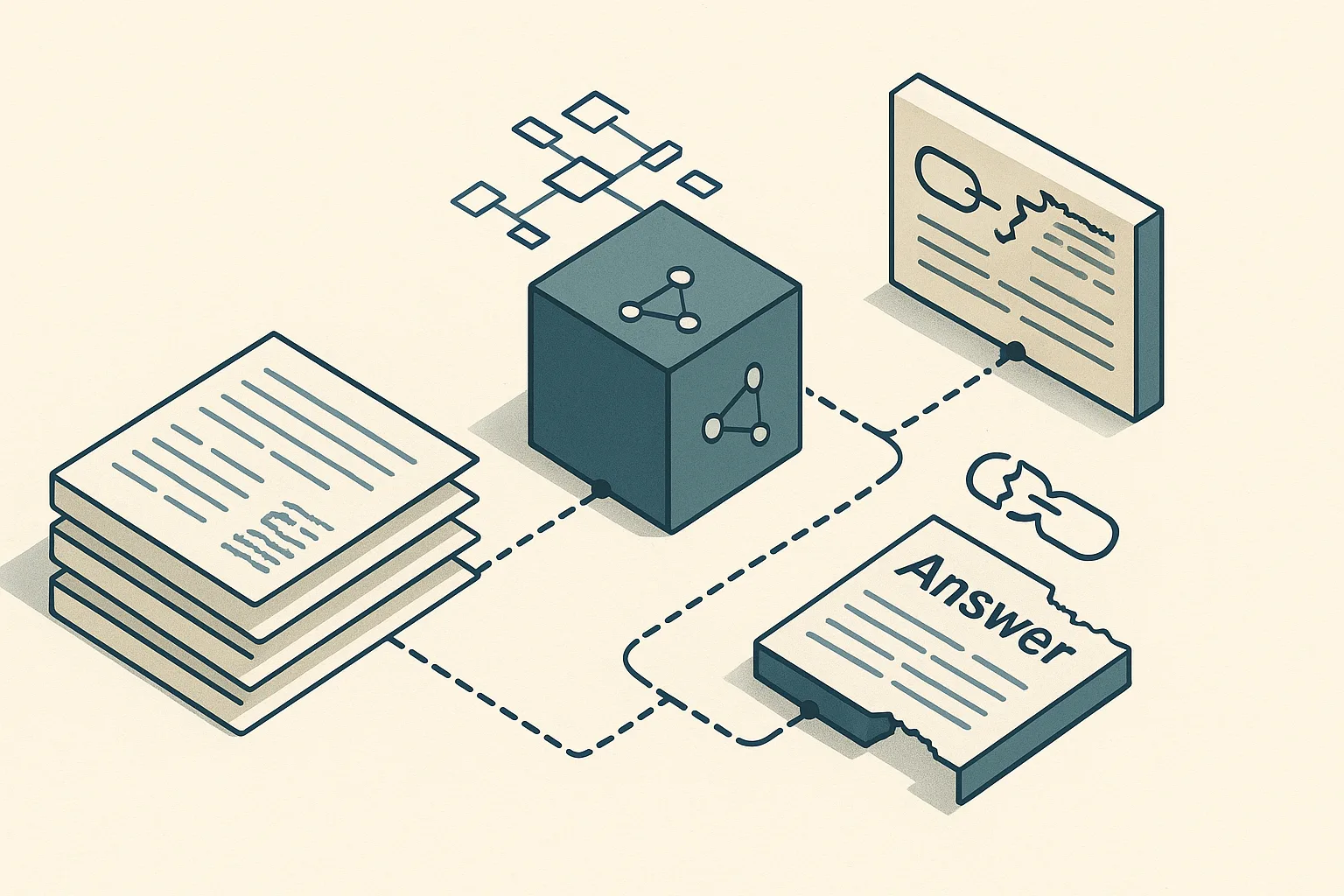

Multi-vector retrieval and ColBERT-style matching

Multi-vector retrieval stores one vector per token in the input rather than one vector per document. At query time, relevance is computed as a MaxSim score: for each query token, find the maximum similarity across all document token vectors, then sum those per-token maxima across the query.

$$\text{score}(q, d) = \sum_{i=1}^{|q|} \max_{j=1}^{|d|} \cos\left(E_q^{(i)}, E_d^{(j)}\right)$$

The model card explicitly cites ColBERT as the canonical example: "Multi-vector retrieval: use multiple vectors to represent a text, e.g., ColBERT" — BAAI BGE-M3 model card. The MaxSim operator preserves token-level alignment, which means a long document can match a short query through a subset of its tokens rather than requiring the document's single compressed vector to capture the query's semantics globally.
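The MaxSim formula above can be sketched in a few lines of plain Python. The token vectors here are hypothetical two-dimensional toys; real token embeddings have far more dimensions and would normally be scored with a vectorized library.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def maxsim_score(query_vecs, doc_vecs):
    """For each query token take its best-matching document token, then sum."""
    if not doc_vecs:
        return 0.0
    return sum(max(cosine(q, d) for d in doc_vecs) for q in query_vecs)

# Hypothetical token embeddings: 2 query tokens, 3 document tokens.
query_vecs = [[1.0, 0.0], [0.0, 1.0]]
doc_vecs = [[1.0, 0.0], [0.6, 0.8], [0.0, -1.0]]

# Query token 1 matches document token 1 exactly (1.0);
# query token 2 is best matched by document token 2 (0.8).
print(maxsim_score(query_vecs, doc_vecs))
```

Note that the third document token contributes nothing: only the best match per query token counts, which is how a long document can score well on a short query through a small subset of its tokens.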

At 8,192 tokens, the multi-vector mode becomes particularly relevant for long documents. A single-vector dense representation of an 8k-token technical document must compress all semantic content into one vector; multi-vector representation lets the retriever match query tokens against the specific passage sections that are actually relevant. The trade-off is indexing cost: multi-vector indexes require substantially more storage and a compatible MaxSim-capable retrieval backend (e.g., PLAID-style indexing). This is not a reason to avoid the mode — it is a constraint to account for before choosing it for production.
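A back-of-the-envelope calculation shows why the storage constraint deserves attention. All numbers below are assumptions chosen for illustration: a corpus of one million documents, 512 tokens per document on average, 1024-dimensional vectors, and uncompressed fp16 storage. Real multi-vector backends typically compress or quantize, so actual footprints can be much smaller.

```python
def index_bytes(num_docs, vectors_per_doc, dim, bytes_per_value):
    """Raw, uncompressed vector storage for an index."""
    return num_docs * vectors_per_doc * dim * bytes_per_value

# Hypothetical corpus and storage settings (assumptions, not measured values).
NUM_DOCS = 1_000_000
AVG_TOKENS_PER_DOC = 512
DIM = 1024
FP16_BYTES = 2

single = index_bytes(NUM_DOCS, 1, DIM, FP16_BYTES)
multi = index_bytes(NUM_DOCS, AVG_TOKENS_PER_DOC, DIM, FP16_BYTES)

print(f"single-vector: {single / 1e9:.1f} GB")
print(f"multi-vector:  {multi / 1e9:.1f} GB ({multi // single}x)")
```

Before compression, the multi-vector index grows in proportion to the average number of tokens per document, which is the factor to estimate first for your own corpus.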

Multi-vector retrieval is a first-stage retrieval mechanism, not a form of reranking. Conflating them leads to incorrect pipeline design: multi-vector narrows candidates via token-level similarity; reranking re-scores those candidates with a cross-encoder that reads both query and passage together.

What the benchmark story says about BGE Reranker

The BGE Reranker is a cross-encoder family — base and large variants — that takes a query-passage pair as joint input and produces a relevance score, rather than encoding them independently and comparing in vector space. Its benchmark references are tied primarily to C-MTEB reranking tasks, and the model card frames it explicitly as a second-stage model: "Reranker Model: llm rerankers, BGE Reranker" — BAAI/bge-reranker-large, Hugging Face. The LLM reranker variants (built on larger generative backbones) represent a higher-capacity tier in the same family. BGE Reranker is therefore complementary to embedding retrieval rather than interchangeable with it.

| Model family | Benchmark family | What it tests | Why it matters |
|---|---|---|---|
| bge-reranker-base | C-MTEB reranking | Re-ordering within a fixed candidate pool | Measures second-stage precision on short lists |
| bge-reranker-large | C-MTEB reranking | Higher-capacity re-ordering within a fixed candidate pool | Measures whether larger cross-encoder capacity improves ranking quality |
| LLM reranker variants | C-MTEB reranking + BEIR context | Reranking under a larger backbone and broader evaluation framing | Useful when top-k is small and precision is more important than throughput |
| First-stage retriever context | BEIR / candidate generation | Candidate recall before reranking | Establishes whether the reranker receives the right documents at all |

The benchmark narrative for the reranker cannot be read in isolation from the first-stage retrieval results, because reranking precision is only meaningful relative to the recall of the candidate set it receives. Consider a reranker operating on top-100 candidate sets with 60% recall@100: assuming one relevant document per query, the relevant document is absent for 40% of queries, and no ranking model recovers that. The C-MTEB reranking tasks assume a fixed candidate pool, so their scores reflect how well the model re-orders within that pool, not how well it compensates for a poor first stage.
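The ceiling that first-stage recall places on any reranker can be computed directly. The sketch below uses hypothetical query IDs, document IDs, and relevance judgments; it returns the fraction of queries for which even a perfect reranker could place a relevant document at rank 1.

```python
def rerank_ceiling(candidates_by_query, relevant_by_query):
    """Fraction of queries whose candidate set holds at least one relevant document.

    No reranker can exceed this value on Success@1, because it only
    reorders the candidates it is given.
    """
    if not candidates_by_query:
        return 0.0
    hits = sum(
        1
        for qid, candidates in candidates_by_query.items()
        if set(candidates) & relevant_by_query.get(qid, set())
    )
    return hits / len(candidates_by_query)

# Hypothetical first-stage output for five queries.
candidates_by_query = {
    "q1": ["d1", "d7"],
    "q2": ["d3", "d4"],
    "q3": ["d9", "d2"],
    "q4": ["d5", "d6"],
    "q5": ["d8", "d0"],
}
# Hypothetical relevance judgments: q4 and q5 have their relevant doc missing.
relevant_by_query = {
    "q1": {"d1"},
    "q2": {"d4"},
    "q3": {"d2"},
    "q4": {"d11"},
    "q5": {"d12"},
}

print(rerank_ceiling(candidates_by_query, relevant_by_query))
```

Here the ceiling is 0.6: for two of the five queries the relevant document never reached the candidate set, so reranker quality is irrelevant for them.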

| Reranker variant | Backbone type | Primary eval | Typical deployment position |
|---|---|---|---|
| bge-reranker-base | Encoder-only (smaller) | C-MTEB reranking | Low-latency second stage, small candidate sets |
| bge-reranker-large | Encoder-only (larger) | C-MTEB reranking | Higher-quality second stage, moderate latency |
| LLM reranker variants | Generative backbone | C-MTEB + BEIR | Highest quality, highest latency, top-k ≤ 20 |

This review found no verified per-dataset nDCG@10 deltas for BGE Reranker over BGE-M3 dense retrieval in the model card documentation. Practitioners must treat the benchmark framing as indicative of relative ordering among reranker tiers rather than as a precise lift estimate for their own query distribution.

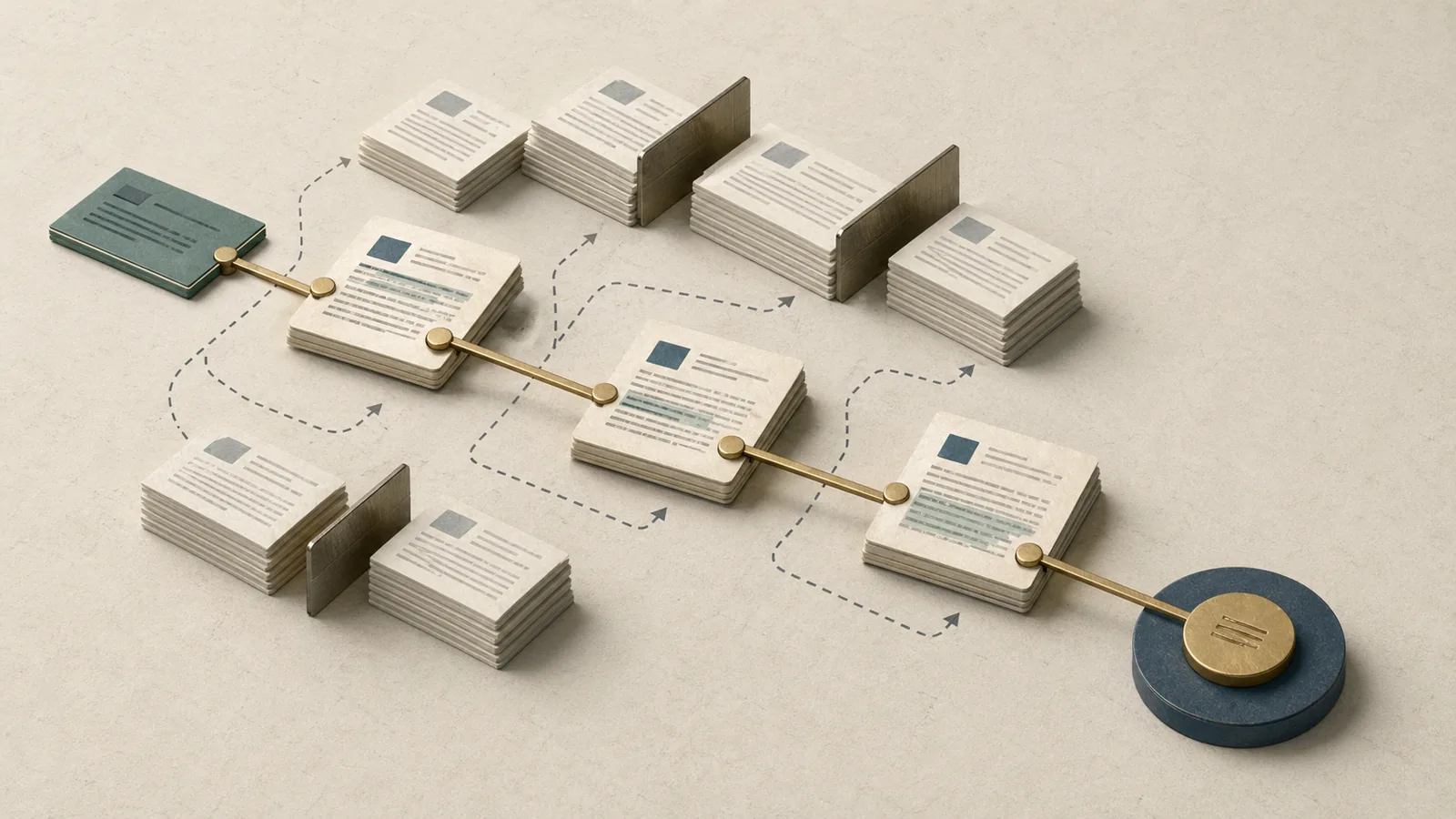

Where reranking improves precision after first-stage recall

Dense retrieval and reranking solve different problems at different pipeline stages. Dense retrieval maximizes recall — getting relevant documents into the candidate set at all. Reranking maximizes precision — ensuring that the highest-ranked documents in the final output are actually the most relevant ones.

The distinction matters for evaluation: improvements visible in recall@k but not nDCG@10 indicate first-stage gains; improvements visible in nDCG@10 but not recall@k indicate reranker gains. A system that improves both metrics simultaneously has both better candidate generation and better re-ranking.
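The reading rule in the paragraph above can be written down as a small helper. This is only a sketch of the heuristic as stated here: the function name, labels, and the `tol` noise threshold are hypothetical, and a real comparison would also need significance testing.

```python
def attribute_gain(recall_delta, ndcg_delta, tol=0.005):
    """Map metric deltas between two system versions to the likely pipeline stage.

    tol is a hypothetical threshold below which a delta is treated as noise.
    """
    recall_up = recall_delta > tol
    ndcg_up = ndcg_delta > tol
    if recall_up and ndcg_up:
        return "candidate generation and ranking both improved"
    if recall_up:
        return "first-stage gain (candidate coverage)"
    if ndcg_up:
        return "reranker gain (ordering only)"
    return "no measurable gain"

# Hypothetical deltas from an A/B comparison of two pipeline versions.
print(attribute_gain(recall_delta=0.00, ndcg_delta=0.04))
print(attribute_gain(recall_delta=0.06, ndcg_delta=0.00))
```

Both metrics should be computed at the cutoffs you actually deploy with, since a gain at recall@100 says little about a pipeline that only passes the top 20 to the reranker.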

| Dimension | First-stage dense retrieval | BGE Reranker (second stage) |
|---|---|---|
| Input | Query embedding vs. index | Query + passage text (joint) |
| Output | Top-k candidate documents | Re-scored ranking of candidates |
| Failure mode | Misses relevant docs (recall gap) | Misranks present-but-buried relevant docs |
| Metric sensitivity | Recall@k, MRR | nDCG@10, Precision@1 |
| Latency profile | Sub-10ms (ANN search) | Scales with candidate set × model size |
| Can recover from first-stage miss? | N/A | No: cannot score absent candidates |

The literature recognizes the sequential nature of this bottleneck explicitly: "To bypass the sequential reranking bottleneck..." — Reranker-Guided Search, arXiv:2509.07163 — indicating active research interest in reducing the latency tax that cross-encoder reranking imposes. The BGE Reranker family does not bypass this bottleneck; it occupies it by design. Engineers choosing a reranker variant must account for the per-candidate inference cost scaling with candidate set size.

How the reranker changes BEIR and C-MTEB interpretation

The separation of BGE Reranker's benchmark references (primarily C-MTEB) from BGE-M3's (BEIR, C-MTEB, MIRACL, MKQA) is itself informative. C-MTEB's reranking tasks supply a fixed candidate set to the reranker and measure how well it re-orders that set — the retrieval quality entering the reranker is controlled. BEIR zero-shot tasks measure full end-to-end retrieval quality including candidate generation.

| Benchmark | What changes with reranker added | Metric most affected | Interpretation risk |
|---|---|---|---|
| BEIR (end-to-end) | Full pipeline nDCG@10 including first stage | nDCG@10 | Cannot isolate reranker lift from retrieval model change |
| C-MTEB reranking tasks | Re-ranking within fixed candidate pool | nDCG@10, MRR | Controlled: isolates ranking quality from retrieval quality |
| MIRACL (first stage) | Not directly applicable to reranker | Recall@k | Reranker benchmark not reported here |

When BGE Reranker documentation reports C-MTEB improvements, those numbers reflect re-ranking quality given an assumed candidate pool (BAAI/bge-reranker-large, Hugging Face). Comparing C-MTEB reranker scores against BEIR first-stage retrieval scores as if they were on the same axis is a category error that shows up frequently in informal benchmark comparisons.

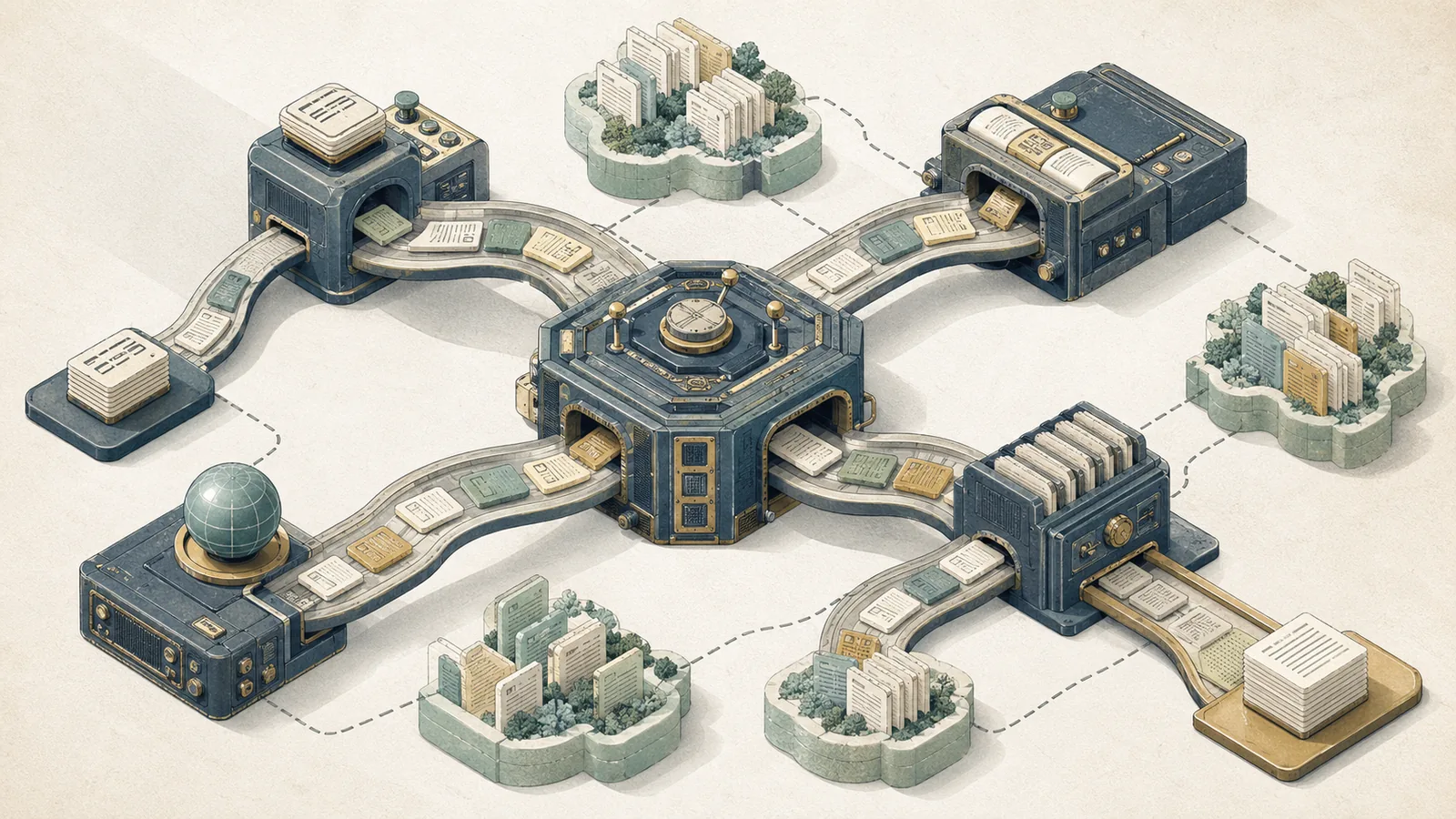

When the benchmark evidence points to a hybrid retrieval stack

What most benchmark summaries miss is that dense retrieval, BGE-M3's sparse signals, and BGE Reranker are not competing substitutes: they address structurally different failure modes at different pipeline stages. BGE-M3's model card documents all three retrieval modes in a single model precisely because no single mode dominates every query type on BEIR or MIRACL. The reranker is documented separately because its value is conditional on what the first stage already retrieved.

| Option | When to use it | Why it fits | Main limitation |
|---|---|---|---|
| Dense-only | High query volume, low latency budget, semantically rich paraphrases | Fast ANN search and strong semantic recall | Misses exact-match entities and codes |
| Dense + sparse hybrid | Mixed semantic and exact-match queries, or complementary recall patterns | Dense covers paraphrases while sparse recovers lexical hits | Higher index complexity than dense-only |
| Reranker added | High first-stage recall, need for better nDCG@10 or Precision@1 | Improves ordering inside an already good candidate pool | Cannot rescue missing candidates |
| Multi-vector retrieval | Long, semantically heterogeneous documents with MaxSim-capable infrastructure | Token-level matching preserves local relevance in long text | Higher storage and retrieval cost |

A pure dense-only pipeline leaves recall gaps on exact-match entity queries. Adding BGE-M3's sparse signals in a hybrid first stage recovers those candidates without requiring a separate BM25 index. BGE Reranker then refines the precision of whatever candidates survived the first stage — but it cannot compensate for candidates that were never retrieved. The BEIR benchmark's heterogeneous design is what makes this architecture argument empirically grounded: BEIR's 18 datasets include argument retrieval, fact verification, question answering, and scientific claim verification — exactly the breadth that exposes single-mode retrieval weaknesses.

Which query patterns favor dense, lexical, or hybrid first-stage retrieval

BGE-M3's triple-mode design allows query-pattern-aware retrieval routing, but the BEIR benchmark evidence does not supply per-query-pattern score splits. The taxonomy below derives from the model's documented capabilities and the established IR literature on failure modes, not from a single numeric BGE-specific benchmark.

| Query pattern | Best first-stage mode | Reason | Failure risk of wrong mode |
|---|---|---|---|
| Semantic drift ("cardiovascular event" → "heart attack docs") | Dense | Embedding space captures synonymy | Lexical: term mismatch, zero BM25 score |
| Exact entity lookup (CVE IDs, ISBNs, gene symbols) | Sparse/lexical | Term weight preserves exact token | Dense: rare token embedding diluted |
| Cross-lingual (French query → English corpus) | Dense | Cross-lingual embedding alignment | Lexical: no token overlap |
| Long document, multi-topic passage | Multi-vector | Token-level MaxSim matches query to relevant passage segment | Dense: single vector misses sub-topic |
| Mixed semantic + entity (drug name + symptom) | Hybrid (dense + sparse) | Each mode covers one failure mode | Either alone leaves gaps |

The BEIR benchmark is an appropriate evaluation frame for stress-testing most of these failure modes, because its heterogeneous dataset composition forces a retrieval model to generalize across query styles and domains (BEIR, arXiv:2104.08663). BEIR's datasets are in English, so the cross-lingual pattern is better tested with MIRACL or MKQA. A model that scores well on BEIR's average nDCG@10 is demonstrating robustness across several query styles, not just one.
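A query-pattern-aware router of the kind described in this section could start as a simple heuristic. The sketch below is a deliberately crude illustration: the function names and the rule (a token of four or more characters that contains a digit counts as identifier-like) are assumptions, and it covers only the dense, sparse, and hybrid rows of the table, not the cross-lingual or multi-vector ones.

```python
def is_identifier_like(token):
    """Crude heuristic: 4+ characters and at least one digit (e.g. CVE IDs, SKUs)."""
    token = token.strip(".,;:!?()\"'")
    return len(token) >= 4 and any(ch.isdigit() for ch in token)

def route_query(query):
    """Pick a first-stage mode from the share of identifier-like tokens."""
    tokens = query.split()
    id_count = sum(1 for t in tokens if is_identifier_like(t))
    if id_count == 0:
        return "dense"    # purely natural-language query
    if id_count == len(tokens):
        return "sparse"   # exact-lookup query
    return "hybrid"       # mixed semantic + entity query

for q in ("symptoms of myocardial infarction",
          "CVE-2023-44487",
          "patch for CVE-2023-44487 in nginx"):
    print(q, "->", route_query(q))
```

A rule like this misfires on queries such as years or version numbers, so in practice it would be validated against retrieval error analysis, or replaced by always running the hybrid mode when latency allows.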

Where the reranker tax is worth paying

The sequential reranking bottleneck is real: the cross-encoder must process every query-candidate pair jointly, meaning latency scales with candidate set size. The literature explicitly identifies this as a design constraint — "To bypass the sequential reranking bottleneck..." — Reranker-Guided Search, arXiv:2509.07163. No verified per-millisecond benchmark for BGE Reranker variants was found in the official model documentation, so any fixed latency figure should come from your own hardware measurement, not from a published claim.
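Since no published latency figure should be trusted for your hardware, a small timing harness is the practical route. The sketch below is generic: `score_pairs` stands for any callable that scores a list of (query, passage) pairs, such as a wrapper around whichever reranker you deploy. The batch size and the stub scorer are hypothetical placeholders.

```python
import math
import time

def rerank_batches(num_candidates, batch_size):
    """Number of cross-encoder forward batches needed for one query."""
    return math.ceil(num_candidates / batch_size)

def timed_rerank(score_pairs, query, passages, batch_size=16):
    """Score every (query, passage) pair in batches and report wall-clock time."""
    start = time.perf_counter()
    scores = []
    for i in range(0, len(passages), batch_size):
        batch = [(query, p) for p in passages[i:i + batch_size]]
        scores.extend(score_pairs(batch))
    elapsed = time.perf_counter() - start
    return scores, elapsed

def stub_scorer(pairs):
    """Placeholder scorer (word overlap); swap in a real reranker to measure it."""
    return [len(set(q.split()) & set(p.split())) for q, p in pairs]

passages = [f"passage number {i} about routers" for i in range(100)]
scores, elapsed = timed_rerank(stub_scorer, "routers", passages)
print(f"{len(scores)} pairs in {rerank_batches(len(passages), 16)} batches, {elapsed:.4f}s")
```

Running the harness at several candidate set sizes (for example 20, 50, and 100) gives the cost curve needed to pick a top-k that fits the latency budget.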

| When to pay the reranker tax | Why it is justified | When not to pay it | Why not |
|---|---|---|---|
| Ranked search results, QA passage selection, or other precision-sensitive outputs | nDCG@10 and Precision@1 directly affect user outcomes | Bulk document processing or unranked extraction | Precision on a top-k list is not the output metric |
| First-stage recall@100 is already high enough that relevant docs are present | Reranker can refine ordering instead of compensating for missing candidates | First-stage recall is poor | A reranker cannot recover absent candidates |
| Candidate set is kept small (top-20 to top-50) | Keeps inference cost manageable | Candidate set is large | Cross-encoder cost scales with every pair |
| Application tolerates second-pass latency within SLA | Quality gain can justify the extra pass | Latency must stay at single-pass retrieval levels | The reranker adds a sequential bottleneck |

- BGE Reranker is worth the latency cost when: The application presents a ranked list (search results, QA passage selection) where Precision@1 or nDCG@10 directly affects user outcomes, first-stage recall@100 is confirmed to be high enough that relevant documents are reliably present in the candidate set, and the candidate set passed to the reranker is kept small (top-20 to top-50) to manage inference cost.

- BGE Reranker is not worth the cost when: Latency is hard-constrained below the time required for a second inference pass, first-stage recall is poor (reranking a bad candidate set returns a marginally better-ordered bad candidate set), or the application is a bulk document processing pipeline where ranked precision is not the output metric.

- Use the smaller reranker variant (bge-reranker-base) when: Latency sensitivity is high and the quality delta between base and large has not been verified on your query distribution.

- Use the LLM-backbone reranker variant when: Candidate set size is small (top-10 to top-20) and maximum precision on each result matters more than throughput.

Limitations and caveats in the 2026 benchmark narrative

Watch Out: The benchmark families cited in the BGE-M3 model card (BEIR, C-MTEB, MIRACL, MKQA, LlamaIndex eval) collectively represent benchmark reporting conditions (zero-shot generalization, controlled multilingual splits, fixed candidate pools) that will not map directly onto a production corpus with domain-specific terminology, private document collections, or non-standard query distributions. BEIR's 18 datasets were selected to expose zero-shot generalization, and a model at the top of the BEIR leaderboard earned that position under exactly those conditions. Production retrieval on legal contracts, biomedical literature, or internal enterprise knowledge bases introduces domain shift that benchmark scores cannot predict.

BEIR's heterogeneous design (arXiv:2104.08663) is a strength for comparative evaluation but also a source of dataset selection sensitivity. Average nDCG@10 across 18 datasets obscures per-dataset variance that can be 20+ points between the best- and worst-performing datasets for any given model. A model that excels on NQ and MS MARCO but struggles on TREC-COVID or Touché-2020 can still report a high average.
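A short calculation illustrates how an average can hide this spread. The per-dataset scores below are invented for illustration and are not published results for any model; the dataset names are placeholders.

```python
from statistics import mean

def summarize(scores):
    """Report the mean alongside the min, max, and spread it can hide."""
    values = list(scores.values())
    return {
        "mean": mean(values),
        "min": min(values),
        "max": max(values),
        "spread": max(values) - min(values),
    }

# Hypothetical nDCG@10 per dataset (NOT real benchmark numbers).
hypothetical_scores = {
    "dataset_a": 0.72,
    "dataset_b": 0.68,
    "dataset_c": 0.41,
    "dataset_d": 0.55,
}

summary = summarize(hypothetical_scores)
print({k: round(v, 2) for k, v in summary.items()})
```

With these made-up values the mean is 0.59 while the best and worst datasets differ by 31 points, so the dataset closest to your own domain is a better guide than the average.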

MTEB's 112-language coverage — "MTEB spans 8 embedding tasks covering a total of 58 datasets and 112 languages" — arXiv:2210.07316 — demonstrates breadth, but language representation in the benchmark is uneven. High-resource languages (English, Chinese, German, French) dominate training data and evaluation datasets. BGE-M3's 100+ language coverage claim should be interpreted as benchmark and model-card scope, not as a guarantee of equal retrieval quality across every language in the set.

No verified 2026 production telemetry was found in the official sources for either BGE-M3 or BGE Reranker. The evidence supports a benchmark narrative, and it should be treated as exactly that rather than as production validation.

How to read claims without overfitting to a leaderboard

BEIR leaderboard rankings change as new models are submitted and as evaluation protocols are refined. A model that held SOTA at time of paper submission may have been superseded by the time a practitioner reads the model card. More critically, BEIR's zero-shot framing — "A Heterogenous Benchmark for Zero-shot Evaluation of Information Retrieval Models" — arXiv:2104.08663 — means it measures retrieval quality on data the model was not fine-tuned on, which is a different condition from a practitioner who has labeled data and can fine-tune.

Pro Tip: Treat BEIR and C-MTEB scores as a prior on model quality, not as a prediction of domain performance. Before committing to BGE-M3 as the embedding layer in a production pipeline, run nDCG@10 and recall@100 on a held-out sample of your own query-document pairs. A model with a lower BEIR average score may outperform BGE-M3 on your specific corpus if that corpus's domain distribution better matches the lower-scoring model's training data.

The correct relationship between leaderboard evidence and deployment decisions: leaderboard performance establishes which models are worth evaluating locally. It does not eliminate the local evaluation step.

What to reproduce before adopting the model pair

Before treating the BGE-M3 + BGE Reranker combination as a validated retrieval stack for a specific application, reproduce the following benchmark-family conditions against your own query and document distribution:

| Reproduction target | Benchmark family | Metric | What it validates |
|---|---|---|---|
| First-stage dense recall | BEIR subset closest to your domain | Recall@100 | Whether relevant docs enter candidate set |
| First-stage sparse/hybrid lift | BEIR or local held-out set | Recall@100 delta over dense-only | Whether sparse signals add coverage |
| Multi-vector benefit | Local long-document corpus | nDCG@10 vs. dense-only | Whether ColBERT-style matching helps your doc lengths |
| Reranker precision lift | C-MTEB style fixed candidate pool | nDCG@10 delta | Whether reranker improves ranking given your candidates |
| Multilingual transfer | MIRACL language subset matching your corpus | nDCG@10 | Whether 100+ language claim holds for your languages |

The model card acknowledges "Benchmarks from the open-source community" — BAAI/bge-m3 model card — meaning the benchmark table reflects aggregated community evaluations under varying conditions. BGE Reranker documentation anchors to C-MTEB — BAAI/bge-reranker-large, Hugging Face — which provides a controlled reranking evaluation but does not cover every domain or language pair. Reproduction should be treated as a prerequisite for production adoption, not an optional validation step.
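For the local reproduction step, a minimal evaluation loop over your own judgments might look like the sketch below. It assumes a hypothetical data layout: `run` maps each query ID to a ranked list of document IDs, and `qrels` maps each query ID to graded relevance labels. Established evaluation tooling would be preferable for anything beyond a quick check.

```python
import math

def _dcg(gains):
    """DCG with exponential gain and log2(rank + 1) discount."""
    return sum((2 ** g - 1) / math.log2(i + 1) for i, g in enumerate(gains, start=1))

def evaluate_run(run, qrels, k_recall=100, k_ndcg=10):
    """Mean recall@k_recall and nDCG@k_ndcg over queries with a relevant document."""
    recalls, ndcgs = [], []
    for qid, judged in qrels.items():
        relevant = {doc for doc, grade in judged.items() if grade > 0}
        if not relevant:
            continue  # skip queries without any relevant judgment
        ranked = run.get(qid, [])
        recalls.append(len(relevant & set(ranked[:k_recall])) / len(relevant))
        gains = [judged.get(doc, 0) for doc in ranked[:k_ndcg]]
        ideal = sorted(judged.values(), reverse=True)[:k_ndcg]
        ndcgs.append(_dcg(gains) / _dcg(ideal))
    n = len(recalls)
    return {
        "recall": sum(recalls) / n if n else 0.0,
        "ndcg": sum(ndcgs) / n if n else 0.0,
    }

# Hypothetical judgments and system output for two queries.
qrels = {"q1": {"d1": 1}, "q2": {"d9": 1}}
run = {"q1": ["d1", "d2"], "q2": ["d3", "d4"]}
print(evaluate_run(run, qrels))
```

Running the same function on dense-only, hybrid, and reranked outputs yields the deltas listed in the reproduction table, using one consistent metric definition.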

Implications for search and RAG practitioners

The benchmark story translates to a concrete retrieval architecture recommendation: BGE-M3 belongs at the first stage as a multi-mode retrieval component, and BGE Reranker belongs at the second stage as a precision refinement layer — BGE-M3, arXiv:2402.03216; BGE Reranker, Hugging Face. These are not competing options for the same slot in the pipeline.

For RAG specifically, the failure-mode taxonomy from the benchmark evidence maps directly to pipeline stages. RAG quality degrades when the retriever fails to surface the relevant passage (recall failure) or when the generator receives a top-k context window in which the relevant passage is buried at rank 5–10 (precision failure). BGE-M3's hybrid retrieval modes address the recall failure; BGE Reranker addresses the precision failure. A RAG pipeline that applies the reranker before measuring first-stage recall is treating a precision tool as a recall fix, which the benchmark evidence does not support.
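The recall-versus-precision split can be made operational during error analysis. The sketch below labels a single query by where its relevant passage landed; the function name and the `candidate_k` and `context_k` defaults are hypothetical and should be set to your own candidate pool size and context window size.

```python
def classify_retrieval_failure(ranked_ids, relevant_ids, candidate_k=50, context_k=3):
    """Label one query as ok, a precision failure, or a recall failure."""
    relevant = set(relevant_ids)
    # A relevant passage reached the generator's context window.
    if relevant & set(ranked_ids[:context_k]):
        return "ok"
    # Relevant passage was retrieved but ranked too low: a reranker can help.
    if relevant & set(ranked_ids[:candidate_k]):
        return "precision_failure"
    # Relevant passage never entered the candidate set: a reranker cannot help.
    return "recall_failure"
```

Counting the labels over a query log shows which stage to invest in: a majority of `recall_failure` labels points at first-stage retrieval, while a majority of `precision_failure` labels is the case a reranker is built for.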

| Architecture choice | When to use it | Why it fits | Main risk |
|---|---|---|---|
| BGE-M3 dense-only | Semantic search over a monolingual English corpus with well-formed queries | Minimum viable first stage with low latency | Misses exact-match entities and codes |
| BGE-M3 dense+sparse hybrid | Corpus includes identifiers, codes, or proper names where exact-match failures appear | Reclaims lexical hits without a separate BM25 index | More moving parts than dense-only |
| BGE Reranker | nDCG@10, Precision@1, or answer accuracy is below threshold and recall is already sufficient | Precision refinement after candidate generation | Adds sequential latency |
| Multi-vector retrieval | Documents are long and single-vector compression is measurably reducing recall | Token-level matching on long, heterogeneous passages | Higher storage and indexing cost |

- BGE-M3 as first stage, dense-only, is the minimum viable configuration for semantic search over a monolingual English corpus with well-formed queries.

- Add BGE-M3's sparse signals in hybrid mode when your corpus includes identifiers, codes, or proper names where exact-match failures appear in retrieval error analysis.

- Add BGE Reranker when downstream quality metrics (nDCG@10, Precision@1, answer accuracy in RAG evaluation) are below threshold and first-stage recall is confirmed to be sufficient.

- Add multi-vector retrieval when documents are long (approaching 8k tokens) and single-vector compression is measurably reducing recall on semantically heterogeneous passages.
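To see why the dense+sparse hybrid helps with identifiers, here is a minimal sketch of combining the two signals for one query-document pair. It assumes the sparse output is available as a token-to-weight mapping and that lexical overlap is scored as the sum of weight products over shared tokens; the 0.7/0.3 mixing weights and all vectors are made-up illustration values, not recommended settings.

```python
import math


def cosine(u, v):
    """Cosine similarity between two dense vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def lexical_score(query_weights, doc_weights):
    """Sum of weight products over tokens present in both query and document."""
    return sum(w * doc_weights[tok] for tok, w in query_weights.items() if tok in doc_weights)


def hybrid_score(q_dense, d_dense, q_sparse, d_sparse, w_dense=0.7, w_sparse=0.3):
    """Weighted sum of dense and lexical scores (weights are assumptions to tune)."""
    return w_dense * cosine(q_dense, d_dense) + w_sparse * lexical_score(q_sparse, d_sparse)


# Two documents that look nearly identical in dense space; only doc_b contains the identifier.
query_dense, query_sparse = [1.0, 0.0], {"cve-2024-0001": 0.9, "patch": 0.3}
doc_a = ([0.98, 0.2], {"patch": 0.4, "release": 0.2})
doc_b = ([0.95, 0.3], {"cve-2024-0001": 0.8, "patch": 0.3})
score_a = hybrid_score(query_dense, doc_a[0], query_sparse, doc_a[1])
score_b = hybrid_score(query_dense, doc_b[0], query_sparse, doc_b[1])
```

In this toy case the dense score alone slightly prefers `doc_a`, while the hybrid score prefers `doc_b` because of the exact identifier match. The mixing weights should be tuned on a held-out set rather than copied.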

What to try first in OpenSearch or Elasticsearch DSL

The model card confirms: "For embedding retrieval, you can employ the BGE-M3 model using the same approach as BGE" — BAAI/bge-m3, Hugging Face. Both OpenSearch and Elasticsearch support kNN dense vector fields natively, making the dense mode the lowest-friction starting point. The model card also documents sparse and multi-vector modes, implying hybrid indexing configurations are supported — but no official integration tutorial for OpenSearch/Elasticsearch DSL was verified in the reviewed sources.
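As a starting point for the dense mode, the request bodies can be built as plain Python dictionaries and sent with whichever client you already use. This is a sketch under assumptions: the field name `embedding` is hypothetical, the 1024 dimension matches BGE-M3's dense output, and the mapping and query shapes follow the OpenSearch k-NN plugin and Elasticsearch 8.x kNN search conventions, which you should verify against your cluster version.

```python
def opensearch_knn_index(field="embedding", dims=1024):
    """Index body for an OpenSearch k-NN vector field (field name is hypothetical)."""
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {"properties": {field: {"type": "knn_vector", "dimension": dims}}},
    }


def opensearch_knn_query(vector, field="embedding", k=100):
    """OpenSearch k-NN query body returning the k nearest documents."""
    return {"size": k, "query": {"knn": {field: {"vector": list(vector), "k": k}}}}


def elasticsearch_knn_index(field="embedding", dims=1024):
    """Index body for an Elasticsearch dense_vector field with cosine similarity."""
    return {
        "mappings": {
            "properties": {
                field: {"type": "dense_vector", "dims": dims, "index": True, "similarity": "cosine"}
            }
        }
    }


def elasticsearch_knn_query(vector, field="embedding", k=100, num_candidates=1000):
    """Elasticsearch top-level kNN search body; num_candidates trades recall for speed."""
    return {
        "size": k,
        "knn": {
            "field": field,
            "query_vector": list(vector),
            "k": k,
            "num_candidates": num_candidates,
        },
    }
```

Setting `k` to 100 here mirrors the Recall@100 measurement used elsewhere in this article, so the same request can serve both evaluation and the candidate pool for a later reranking stage.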

This should be sequenced as an evaluation pipeline, not a single-shot deployment decision: start with a BM25 or dense baseline, add hybrid retrieval when recall gaps show up, then add reranking only after first-stage recall is proven adequate.

| Pipeline configuration | First-stage method | Second stage | When to try it |
|---|---|---|---|
| Baseline | BGE-M3 dense (kNN) | None | Start here; establishes recall and latency baseline |
| Hybrid v1 | BGE-M3 dense + sparse (hybrid kNN + inverted index) | None | When exact-match recall gaps appear in error analysis |
| Hybrid v2 | BGE-M3 dense + BM25 (score fusion) | None | When BGE-M3 sparse mode is not yet supported in your search stack version |
| Reranker added | BGE-M3 dense (or hybrid) | BGE Reranker (top-50 → top-10) | When nDCG@10 is below target and recall@50 is confirmed sufficient |
| Full hybrid + rerank | BGE-M3 dense + sparse | BGE Reranker | Maximum quality; highest latency; validate against your SLA |

Measure each configuration above against your own held-out query set with nDCG@10 and recall@k before advancing to the next tier.
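For the dense + BM25 configuration, the two result lists need to be merged client-side if your search stack does not do it for you. The sketch below uses reciprocal rank fusion, a rank-based alternative to raw score fusion that avoids normalizing dense similarities against BM25 scores. It is one common choice rather than the method the BGE documentation prescribes, and the constant `k=60` is a conventional default, not a tuned value.

```python
def reciprocal_rank_fusion(rankings, k=60, top_n=None):
    """Merge several ranked ID lists; each list contributes 1 / (k + rank) per document."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by fused score, breaking ties by document ID for deterministic output.
    fused = sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))
    return fused if top_n is None else fused[:top_n]


dense_hits = ["a", "b", "c"]   # hypothetical kNN results
bm25_hits = ["c", "a", "d"]    # hypothetical BM25 results
candidates = reciprocal_rank_fusion([dense_hits, bm25_hits], top_n=50)
```

The fused list can then be scored with recall@k like any other first-stage output, which keeps the tier-by-tier comparison consistent.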

How to measure whether the reranker is worth its latency

The measurement protocol follows directly from the metric split established earlier: nDCG@10 captures reranker value; recall@k captures first-stage adequacy. BEIR provides the benchmark frame for zero-shot retrieval quality comparison — arXiv:2104.08663 — and MTEB provides broader cross-task validation across 58 datasets and 112 languages — arXiv:2210.07316.

| Measurement step | Metric | Tool | Decision gate |
|---|---|---|---|
| Measure first-stage recall | Recall@50, Recall@100 | BEIR harness or local eval | If recall@100 < 60%, fix retrieval first |
| Measure nDCG@10 without reranker | nDCG@10 | BEIR/MTEB or local eval | Establishes baseline precision |
| Add reranker, measure nDCG@10 delta | ΔnDCG@10 | Same harness, reranked output | If delta < 0.02 absolute, reranker unlikely to justify cost |
| Measure per-query reranker latency | ms per query at your candidate set size | Profiled inference on target hardware | Compare against your application's p95 latency SLA |
| Check latency × quality trade-off | ΔnDCG@10 per added ms | Derived from above | Reranker is worth it when the nDCG@10 gain clears your threshold and the added latency fits within your SLA headroom |

No fixed latency threshold from official BGE documentation was verified; the 100–300ms range cited in search practitioner discussions is hardware- and batch-size-dependent and should be measured rather than assumed. The correct decision process is corpus-specific nDCG@10 improvement measured against your own p95 latency budget — not a universal payback rule.
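The decision gates in the measurement table can be chained into a single check. This is a minimal sketch, assuming per-query latencies have been profiled on target hardware and that the 60% recall and 0.02 nDCG@10 gates from the table are used as defaults; the function names and return labels are hypothetical.

```python
def p95(latencies_ms):
    """Nearest-rank 95th percentile of a non-empty list of latencies."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    # Integer ceiling of 0.95 * n, converted to a zero-based index.
    index = (95 * len(ordered) + 99) // 100 - 1
    return ordered[index]


def reranker_decision(recall_at_100, ndcg_before, ndcg_after,
                      first_stage_ms, rerank_ms, sla_p95_ms,
                      min_recall=0.60, min_delta=0.02):
    """Apply the gates in order: recall, then quality delta, then latency budget."""
    if recall_at_100 < min_recall:
        return "fix retrieval first"
    if ndcg_after - ndcg_before < min_delta:
        return "reranker gain too small"
    # End-to-end latency per query is first stage plus reranking.
    total_ms = [a + b for a, b in zip(first_stage_ms, rerank_ms)]
    if p95(total_ms) > sla_p95_ms:
        return "reranker exceeds latency budget"
    return "adopt reranker"
```

The ordering matters: a recall problem short-circuits the check before any reranker numbers are considered, which matches the sequencing argued for above.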

FAQ

What is BGE-M3 used for?

BGE-M3 is an embedding model designed for information retrieval tasks requiring multilingual, multi-granularity, and multi-functional support. Concretely, it generates dense vectors for semantic similarity search, sparse/lexical weights for exact-match retrieval, and multi-vector (ColBERT-style) token representations for fine-grained passage matching — all from a single encoder pass. It is used as the first-stage retrieval component in search systems, RAG pipelines, and multilingual document retrieval applications. "M3 stands for multilinguality, multi-granularity, and multi-functionality" — BAAI BGE-M3 model card.

How does BGE-M3 compare to BM25?

BGE-M3's dense retrieval mode outperforms BM25 on semantic and cross-lingual queries where surface-level term matching fails — synonym handling, paraphrase retrieval, and cross-lingual query-to-document matching. BM25 retains an advantage on exact-match entity queries (product codes, CVE identifiers, gene symbols) where the query is a precise string and dense embedding space compression dilutes the signal. The BEIR benchmark, framed as "A Heterogenous Benchmark for Zero-shot Evaluation of Information Retrieval Models" — arXiv:2104.08663 — is the appropriate frame for head-to-head comparison, but practitioners should reproduce the comparison on their own corpus before drawing conclusions.

Is BGE Reranker better than embedding retrieval?

BGE Reranker is not a replacement for embedding retrieval — it is a second-stage precision model that operates on candidates already retrieved by the first stage. It improves nDCG@10 when relevant documents are present in the candidate set but ranked below the top positions. If first-stage recall is low, the reranker cannot compensate for absent candidates. The right question is not "which is better" but "does my first stage retrieve the right candidates, and does the reranker meaningfully improve their ordering for my use case?"

What is the difference between dense retrieval and reranking?

Dense retrieval encodes query and documents independently into vectors and uses approximate nearest-neighbor search to find top-k candidates — it maximizes recall efficiently at scale. Reranking takes a small candidate set and scores each query-candidate pair jointly with a cross-encoder, which reads both texts together and produces a more accurate relevance score — it maximizes precision within the candidate set. Dense retrieval operates at index search speed; reranking scales with candidate set size and model inference time.
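The division of labor can be illustrated with a toy two-stage pipeline. In this sketch the dense stage is a dot product over precomputed vectors and the pair scorer is a simple token-overlap function standing in for a cross-encoder; both are placeholders for illustration, not how BGE-M3 or BGE Reranker compute scores.

```python
def dense_top_k(query_vec, doc_vecs, k):
    """First stage: score every document independently, keep the k best IDs."""
    scored = [
        (sum(q * d for q, d in zip(query_vec, vec)), doc_id)
        for doc_id, vec in doc_vecs.items()
    ]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [doc_id for _, doc_id in scored[:k]]


def rerank(query, candidates, texts, pair_scorer, top_n):
    """Second stage: score each (query, document) pair jointly, reorder candidates only."""
    ordered = sorted(candidates, key=lambda doc_id: -pair_scorer(query, texts[doc_id]))
    return ordered[:top_n]


def overlap_scorer(query, text):
    """Stand-in for a cross-encoder: number of shared lowercase tokens."""
    return len(set(query.lower().split()) & set(text.lower().split()))


doc_vecs = {"d1": [0.9, 0.1], "d2": [0.8, 0.2], "d3": [0.1, 0.9]}
texts = {
    "d1": "shipping policy overview",
    "d2": "refund policy for damaged items",
    "d3": "refund policy damaged items returns",
}
candidates = dense_top_k([1.0, 0.0], doc_vecs, k=2)
final = rerank("refund policy damaged items", candidates, texts, overlap_scorer, top_n=2)
```

Here the reranker promotes `d2` above `d1`, but `d3` never appears in the final list because the first stage did not retrieve it, which is the recall ceiling described in the previous answer.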

What languages does BGE-M3 support?

BGE-M3 supports more than 100 working languages, as documented in both the paper abstract and the model card: "It can support more than 100 working languages" (BGE-M3, arXiv:2402.03216). Benchmark coverage beyond English includes MIRACL (multilingual retrieval across 18 languages), C-MTEB (Chinese-language embedding tasks), and MKQA (cross-lingual open-domain QA). Performance parity across all 100+ languages is not guaranteed; languages near the tail of the training distribution are likely to perform below high-resource languages, so evaluate on the specific languages in your corpus.

Pro Tip: For rapid validation before committing to BGE-M3, run it on the MTEB retrieval tasks closest to your domain and language. MTEB spans 58 datasets and 112 languages (arXiv:2210.07316), so a targeted subset gives you a controlled evaluation harness that is faster to run than a full BEIR sweep and returns task-specific metrics rather than a single aggregate score.

Sources & References

Production Note: All benchmark claims cited in this article originate from model cards, paper abstracts, and established benchmark framework papers. They represent controlled evaluation conditions (zero-shot retrieval, fixed candidate pools, specific dataset mixes) that do not automatically transfer to production deployments on private or domain-specific corpora. Before relying on any nDCG@10 or recall figure cited here for an architecture decision, reproduce the relevant benchmark on a representative held-out sample of your own data.

- BAAI/bge-m3 — Hugging Face model card — Primary source for BGE-M3 capabilities, supported retrieval modes, language coverage, and benchmark references

- BGE-M3: Multi-Lingual, Multi-Functionality, Multi-Granularity Text Embeddings — arXiv:2402.03216 — Paper abstract and architecture description for BGE-M3

- BAAI/bge-reranker-large — Hugging Face model card — Primary source for BGE Reranker large variant, benchmark framing, and model family description

- BAAI/bge-reranker-base — Hugging Face model card — BGE Reranker base variant documentation

- BEIR: A Heterogenous Benchmark for Zero-shot Evaluation of Information Retrieval Models — arXiv:2104.08663 — Benchmark design, dataset composition, and evaluation framing for zero-shot IR

- MTEB: Massive Text Embedding Benchmark — arXiv:2210.07316 — Benchmark framework spanning 58 datasets and 112 languages used to derive C-MTEB

- Reranker-Guided Search — arXiv:2509.07163 — Source for sequential reranking bottleneck characterization

Keywords: BGE-M3, BGE Reranker, BEIR, C-MTEB, MIRACL, MKQA, LlamaIndex, FlagEmbedding, BM25, ColBERT, OpenSearch, Elasticsearch DSL, nDCG@10, MTEB