What MultiHop-RAG proves about naive retrieval in multi-hop QA

Bottom Line: MultiHop-RAG (arXiv:2401.15391) demonstrates that naive top-k retrieval is structurally insufficient for questions whose answers require chaining evidence across multiple documents. A system can score well on local relevance while still failing to compose a correct answer — because the evidence chain is incomplete, not because any single retrieved chunk is wrong. The benchmark's value is diagnostic: it names and isolates the failure mode rather than proving that any one architecture universally fixes it.

MultiHop-RAG, introduced by Yixuan Tang and Yi Yang, is a benchmark dataset of 2,556 queries built on an English news article corpus, where the evidence for each query is intentionally distributed across two to four documents. As the repository README states, it "contains 2556 queries, with evidence for each query distributed across 2 to 4 documents." That structural choice is the benchmark's core design decision: no single document contains the complete answer. A retrieval pipeline must surface the right combination of chunks across multiple sources, and the answer generation step must then compose across that evidence set coherently.

As Tang and Yang state directly: "existing RAG systems are inadequate in answering multi-hop queries, which require retrieving and reasoning over multiple pieces of supporting evidence." The benchmark operationalizes that inadequacy by making incomplete evidence chains the primary failure mode under examination. Multi-hop QA in this context is not about semantic similarity to a query — it is about whether a retrieval pipeline can recover the full set of supporting evidence across documents, not just the most locally relevant chunk.

Why this benchmark matters for RAG evaluation

The MultiHop-RAG benchmark fills a specific gap in RAG evaluation: it separates retrieval quality from answer quality, and it does so in a regime where the two can diverge sharply. Tang and Yang "demonstrate the benchmarking utility of MultiHop-RAG in two experiments" — the first comparing embedding models on evidence retrieval, the second evaluating end-to-end QA correctness. That two-layer structure is deliberate: it lets researchers isolate where a pipeline breaks down.

What the benchmark proves is narrow but important: when evidence is distributed across multiple documents, retrieval recall and final answer correctness are not interchangeable metrics. What it does not prove is broader: it does not show that any one retriever, generator, or graph-based method is best across all workloads. The evaluation lesson is that a system can look strong on retrieval metrics while still failing to produce correct answers because the evidence set is incomplete.

The benchmark is an evaluation tool, not a deployment specification. It reports no production latency, no throughput figures, and no hardware sizing — because those are not the questions it is designed to answer. Architects who read the paper expecting infrastructure guidance will find nothing useful. Architects who read it to understand where their retrieval assumptions are wrong will find a precise instrument.

| Evaluation regime | Query structure | Evidence scope | What it measures |
|---|---|---|---|
| Single-hop retrieval | One document contains the answer | Single document | Local relevance accuracy |
| Naive top-k RAG | Query → top-k chunks → answer | Typically 1–3 chunks, same or different docs | Chunk-level recall |
| MultiHop-RAG evaluation | Answer requires 2–4 documents | Distributed across corpus | Evidence chain completeness + answer composition |

This difference matters for RAG evaluation design. A system that achieves high recall-at-5 on a single-hop dataset may be scoring well on a fundamentally easier problem than the one your enterprise knowledge base actually presents.

Where single-hop datasets mislead architects

Single-hop benchmarks overstate production readiness for any knowledge base where answers require cross-document reasoning. When your evaluation corpus assumes a one-to-one mapping between query and document, you are not measuring the failure mode that actually breaks RAG in production.

MultiHop-RAG targets questions whose evidence spans two to four documents — a materially different regime from single-document QA. A retrieval pipeline tuned on single-hop data can achieve high recall numbers and still fail consistently on the class of questions an enterprise support system will receive daily: "What changed between version 2.1 and 2.3 of the API?" or "Which two teams shipped the features that caused this regression?" Both require chaining evidence across distinct documents.

Pro Tip: A high recall-at-k score on a single-hop evaluation dataset does not transfer to multi-hop query accuracy. Before accepting a retrieval benchmark as validation, confirm whether its queries require evidence from more than one document. If they do not, the benchmark cannot surface the evidence-chaining failure mode that MultiHop-RAG was built to expose.
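That check can be automated. The sketch below counts how many distinct evidence documents each query draws on. It assumes each query record (for example, one entry after `json.load` on a query file) is a dict with an `evidence_list` whose items carry a `url` identifying the source document. Treat those field names as assumptions to verify against the files you actually load, and change `doc_key` for other benchmarks.

```python
from collections import Counter


def evidence_spread(records, doc_key="url"):
    """Map 'number of distinct evidence documents' -> number of queries with that spread.

    Assumes each record looks like {"evidence_list": [{"url": ...}, ...]}; the field
    names are an assumption to verify against the dataset files you actually load.
    """
    spread = Counter()
    for record in records:
        docs = {item[doc_key] for item in record.get("evidence_list", [])}
        spread[len(docs)] += 1
    return spread


def multi_doc_fraction(records, doc_key="url"):
    """Fraction of queries whose evidence spans more than one distinct document."""
    spread = evidence_spread(records, doc_key)
    total = sum(spread.values())
    multi = sum(count for n_docs, count in spread.items() if n_docs > 1)
    return multi / total if total else 0.0


# Hand-made records for illustration only (not benchmark data).
sample = [
    {"evidence_list": [{"url": "a"}, {"url": "b"}]},
    {"evidence_list": [{"url": "a"}, {"url": "a"}]},  # two facts, one document
    {"evidence_list": [{"url": "c"}, {"url": "d"}, {"url": "e"}]},
]
print(evidence_spread(sample))
print(multi_doc_fraction(sample))
```

If this fraction is near zero for a benchmark, that benchmark cannot exercise evidence chaining, however high its recall numbers are.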

What counts as a multi-hop question in practice

MultiHop-RAG defines multi-hop questions operationally through three query types (Inference, Comparison, and Temporal) plus a Null type, according to the OpenReview version of the paper. Each of the three answerable types requires combining evidence from distinct sources rather than finding a single semantically close passage.

- Inference queries require deriving a conclusion from facts distributed across documents.

- Comparison queries require retrieving attributes of two or more entities from separate sources and comparing them.

- Temporal queries require ordering or relating events across documents where no single document contains the full timeline.

- Null queries are a control class: their answers cannot be derived from the knowledge base, so evaluation can check whether a system declines to answer instead of fabricating a cross-document chain.

Watch Out: Multi-hop QA is not the same as high semantic similarity across a topic. Two documents about the same company can both score highly against an embedding-based query without either one containing the specific comparative or temporal relationship the question requires. Topic proximity and evidence chaining are orthogonal properties — conflating them produces retrieval pipelines that look competent but compose answers incorrectly.

How the MultiHop-RAG dataset was built

The MultiHop-RAG paper describes a deliberate construction process. In Tang and Yang's words: "We detail the procedure of building the dataset, utilizing an English news article dataset as the underlying RAG knowledge base." News articles are a natural fit for multi-hop evaluation because they reference shared entities across stories. A person, company, or event can appear in multiple articles with complementary or conflicting details, so retrieval must recover several sources to answer accurately.

The GitHub repository confirms the dataset contains 2,556 queries with evidence distributed across two to four documents per query, and that document metadata is included in the benchmark artifacts. That metadata is not incidental: pipelines that strip it during ingestion will break the evidence-linking mechanism the benchmark depends on, even if the raw text chunks still look plausible.
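One way to keep that link intact is to copy each document's metadata onto every chunk produced from it. The sketch below does this with simple overlapping word windows. The chunking strategy, the `size`/`overlap` defaults, and the `chunk_index` field are illustrative assumptions, not the benchmark's own preprocessing.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_with_metadata(text, metadata, size=200, overlap=50):
    """Split text into overlapping word windows and copy document metadata onto every chunk.

    The copied metadata (for example a source URL or publication date) is what lets a
    later step map retrieved chunks back to the benchmark's evidence documents.
    """
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, max(len(words), 1), step):
        window = words[start:start + size]
        if not window:
            break
        # Merge rather than replace, so no source field is dropped during ingestion.
        chunks.append(Chunk(" ".join(window), {**metadata, "chunk_index": len(chunks)}))
        if start + size >= len(words):
            break
    return chunks


doc_meta = {"url": "https://example.com/article-1", "published_at": "2023-10-01"}  # hypothetical
for chunk in chunk_with_metadata(" ".join(f"w{i}" for i in range(10)), doc_meta, size=4, overlap=1):
    print(chunk.metadata["chunk_index"], chunk.metadata["url"], chunk.text)
```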

| Dataset property | Value / Description |
|---|---|
| Data source | English news article corpus |
| Total queries | 2,556 |
| Evidence distribution | 2–4 documents per query |
| Query types | Inference, Comparison, Temporal, Null |
| Evaluation artifacts | Queries, evidence mappings, document metadata |
| Repository | yixuantt/MultiHop-RAG |

The paper's two experiments address retrieval and QA separately: Experiment 1 compares embedding models on evidence retrieval quality; Experiment 2 evaluates end-to-end answer correctness. This two-stage structure reflects the benchmark's core thesis — a pipeline can fail at retrieval, or it can retrieve adequately but fail at composition, and the benchmark makes both failure modes visible.
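A minimal harness that mirrors this two-layer split might report the layers as two separate numbers rather than one blended score. The sketch below assumes hypothetical per-query result dicts and uses normalized exact match as a stand-in answer metric. The paper's own answer-correctness measure may differ, so check the repository's evaluation code before comparing numbers.

```python
import re
import string


def normalize(text):
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def evidence_recall(retrieved_docs, gold_docs):
    """Fraction of gold evidence documents that appear anywhere in the retrieved set."""
    gold = set(gold_docs)
    return len(gold & set(retrieved_docs)) / len(gold) if gold else 1.0


def two_layer_report(results):
    """Score the retrieval layer and the QA layer separately.

    Each result is a dict with hypothetical keys:
    retrieved_docs, gold_docs, prediction, answer.
    """
    if not results:
        return {"mean_evidence_recall": 0.0, "answer_accuracy": 0.0}
    n = len(results)
    recall = sum(evidence_recall(r["retrieved_docs"], r["gold_docs"]) for r in results) / n
    accuracy = sum(normalize(r["prediction"]) == normalize(r["answer"]) for r in results) / n
    return {"mean_evidence_recall": recall, "answer_accuracy": accuracy}


results = [
    {"retrieved_docs": ["d1", "d2"], "gold_docs": ["d1", "d2"], "prediction": "Yes", "answer": "yes"},
    {"retrieved_docs": ["d3", "d4"], "gold_docs": ["d3", "d4", "d5"], "prediction": "The Globex", "answer": "Acme"},
]
print(two_layer_report(results))
```

In this toy run, mean evidence recall is about 0.83 while answer accuracy is 0.5. The gap between those two numbers is the signal the benchmark is built to surface.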

What the repository adds beyond the paper

The GitHub repository yixuantt/MultiHop-RAG, which describes itself as the "Repository for 'MultiHop-RAG...'", is the canonical source for benchmark assets. The paper describes methodology; the repository provides the evaluation artifacts needed to reproduce and extend it. The README confirms the 2,556-query count, the evidence distribution structure, and the metadata requirements that pipelines must satisfy.

Pro Tip: Treat the GitHub repository as the ground truth for benchmark assets, not the arXiv PDF. The repository contains the query splits, evidence mappings, and metadata structures that determine whether your evaluation run is actually measuring what the paper measured. If your run diverges from those artifacts, you are running a different benchmark.

Why the evidence is distributed across multiple documents

The two-to-four-document evidence distribution is not incidental to how the dataset was built; it is the stress condition the benchmark is designed to apply. As the repository README states: "it contains 2556 queries, with evidence for each query distributed across 2 to 4 documents."

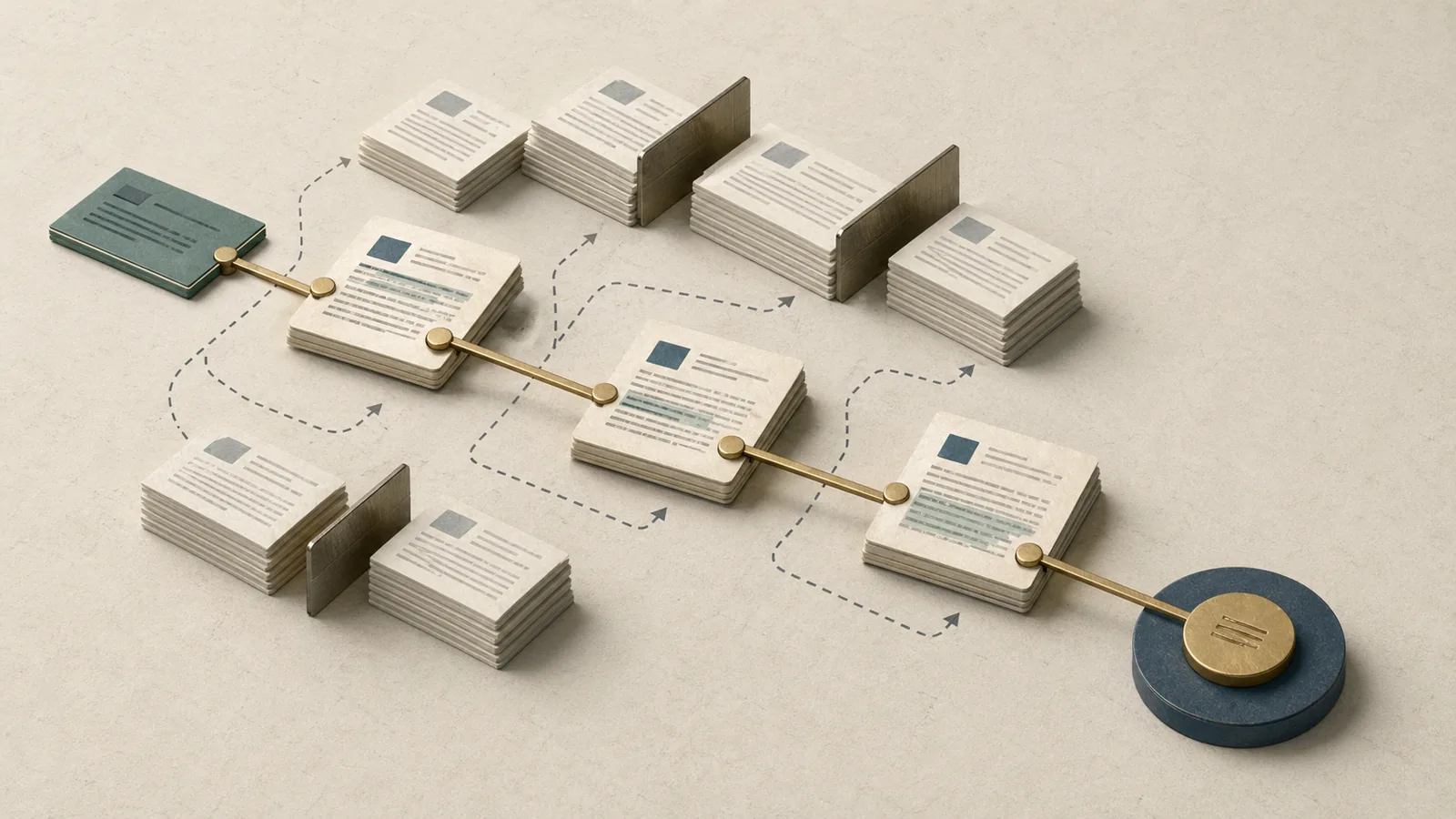

Naive top-k retrieval fails here for a structural reason: it optimizes for chunk-level semantic relevance to the query, not for coverage of a distributed evidence set. A k=5 retrieval pass may return five chunks from two related documents while missing the third document that contains the temporal or comparative fact needed to complete the answer. The system has not made a retrieval error in the local sense — each returned chunk is genuinely relevant — but it has failed to cover the full evidence chain.

This is the precise failure mode the benchmark was built to expose: partial evidence coverage masquerading as successful retrieval, visible only when you measure end-to-end answer correctness rather than chunk-level recall.
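The k=5 scenario above can be reproduced for a single query by scoring the same retrieved list two ways. In the sketch below, each retrieved chunk is represented only by its source document id. Counting a chunk as "relevant" whenever it comes from a gold document is a simplifying assumption made for illustration.

```python
def coverage_report(retrieved_chunk_docs, gold_docs):
    """Contrast chunk-level relevance with document-level coverage for one query.

    retrieved_chunk_docs: source document id of each retrieved chunk, in rank order.
    gold_docs: the documents the evidence chain needs.
    """
    gold = set(gold_docs)
    relevant_chunks = sum(doc in gold for doc in retrieved_chunk_docs)
    covered = gold & set(retrieved_chunk_docs)
    return {
        "chunk_precision": relevant_chunks / len(retrieved_chunk_docs) if retrieved_chunk_docs else 0.0,
        "doc_coverage": len(covered) / len(gold) if gold else 1.0,
        "chain_complete": covered == gold,
        "missing_docs": sorted(gold - covered),
    }


# k=5: every chunk comes from a relevant document, yet document C is never retrieved.
report = coverage_report(["A", "A", "B", "A", "B"], {"A", "B", "C"})
print(report)  # chunk_precision 1.0, chain_complete False, missing_docs ['C']
```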

Watch Out: Over-relying on top-k chunk retrieval for multi-hop QA produces a specific class of silent failure. Retrieval metrics look acceptable; answer accuracy does not. If your RAG evaluation pipeline only measures retrieval recall and not final answer correctness, you will not detect this failure mode at all. MultiHop-RAG requires both layers to be evaluated.

Benchmark results and what they mean for retrieval pipelines

The MultiHop-RAG paper's two experiments produce findings at two distinct levels: embedding model performance on evidence retrieval, and end-to-end QA correctness across the full pipeline. The central finding across both levels is consistent with the benchmark's design thesis — "existing RAG systems are inadequate in answering multi-hop queries, which require retrieving and reasoning over multiple pieces of supporting evidence."

The public-facing summaries of the paper do not reproduce its full numeric score tables. The table below therefore describes the comparative patterns the paper reports rather than specific accuracy deltas; consult the paper itself for the numeric results.

| Evaluation layer | What MultiHop-RAG measures | Observed pattern |
|---|---|---|
| Retrieval (Experiment 1) | Embedding model recall across multi-hop evidence sets | Models vary; no single embedding dominates across all query types |
| QA correctness (Experiment 2) | End-to-end answer accuracy requiring composed evidence | Lower than retrieval metrics alone would predict |
| Gap between layers | Difference between retrieval recall and answer correctness | Material gap: retrieval success does not imply answer success |
| Query type sensitivity | Performance variance across Inference / Comparison / Temporal | Temporal and Comparison types show higher failure rates than Inference |

The practical implication for RAG evaluation design is that measuring only one layer is insufficient. A pipeline that retrieves well but composes poorly does not have a retrieval problem; it has a reasoning or context-assembly problem. A pipeline that fails retrieval will usually fail composition too (any correct answer is then down to parametric knowledge or chance), but successful retrieval does not guarantee correct composition.
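To tell those cases apart per query, you can cross two booleans: whether the full evidence chain was retrieved and whether the final answer was correct. The bucket names below are illustrative labels of my own, not categories defined by the paper.

```python
from collections import Counter


def classify(chain_complete, answer_correct):
    """Bucket one query by which layer failed (labels are illustrative, not from the paper)."""
    if chain_complete and answer_correct:
        return "success"
    if chain_complete:
        # Evidence was present; the generator did not compose it correctly.
        return "composition_failure"
    if answer_correct:
        # Right answer without the full chain: parametric knowledge, partial evidence, or luck.
        return "correct_without_full_evidence"
    return "retrieval_failure"


def failure_profile(outcomes):
    """outcomes: iterable of (chain_complete, answer_correct) pairs, one per query."""
    return Counter(classify(chain, correct) for chain, correct in outcomes)


print(failure_profile([(True, True), (True, False), (False, False), (False, True), (True, False)]))
```

A profile dominated by `composition_failure` points at context assembly or the generator. A profile dominated by `retrieval_failure` points at the retriever.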

Reading the retrieval metrics without overclaiming

The benchmark's first experiment uses retrieval metrics — precision, recall, and related measures over the evidence set — to compare embedding models. These scores are meaningful within the benchmark's scope: they tell you which embedding approach is more likely to recover the complete evidence set for a given multi-hop query type.

They do not tell you whether the downstream answer generator will use that evidence correctly. Retrieval and RAG evaluation are nested problems, and conflating them produces overconfident assessments.
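Ranking metrics such as Hits@k and MRR@k are common choices for this retrieval layer. The sketch below implements one reasonable document-level definition of each: the fraction of gold items in the top k, and the reciprocal rank of the first gold item. Take the paper's exact metric definitions and cut-offs from the paper and repository, not from this code.

```python
def hits_at_k(ranked, gold, k):
    """Fraction of gold evidence items that appear in the top-k ranked items."""
    gold = set(gold)
    if not gold:
        return 0.0
    return len(gold & set(ranked[:k])) / len(gold)


def mrr_at_k(ranked, gold, k):
    """Reciprocal rank of the first gold item within the top k (0.0 if none appears)."""
    gold = set(gold)
    for rank, item in enumerate(ranked[:k], start=1):
        if item in gold:
            return 1.0 / rank
    return 0.0


def mean_metric(metric, runs, k):
    """Average a per-query metric over (ranked, gold) pairs."""
    return sum(metric(ranked, gold, k) for ranked, gold in runs) / len(runs) if runs else 0.0


ranked = ["x", "A", "y", "B", "z", "C"]
gold = {"A", "B", "C"}
print(hits_at_k(ranked, gold, 4), mrr_at_k(ranked, gold, 4))
```

MRR@k rewards an early first hit and is silent about whether the rest of the chain arrived. Neither number says whether the generator then used the evidence correctly.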

Pro Tip: When reporting MultiHop-RAG retrieval results, always pair them with end-to-end QA accuracy. A retrieval recall score in isolation overstates pipeline quality for multi-hop queries. The benchmark is designed to make both layers measurable — use both.

What the results say about naive top-k retrieval

The benchmark design directly stress-tests naive top-k retrieval by distributing evidence across two to four documents per query. The weakness is structural. A fixed-k retrieval window that is not query-type-aware has no mechanism for ensuring that every required document is represented, so it can miss part of the evidence chain whenever the evidence is not co-located, which the benchmark makes the norm rather than the exception.

"Existing RAG systems are inadequate in answering multi-hop queries" — and the benchmark makes the mechanism explicit. A k=5 retrieval pass can return five chunks that each score highly on embedding similarity while still missing the second or third document that completes the reasoning chain. The error is invisible at the chunk level and only appears at the answer level.

Watch Out: Recall-at-k on individual chunks is an optimistic proxy for end-to-end multi-hop accuracy. A system with recall-at-5 of 0.85 can still fail to retrieve the complete evidence set for queries requiring three distinct documents, because the probability of covering all required documents within a fixed-k window decreases with evidence spread. Do not use recall-at-k as a proxy for multi-hop QA readiness without also measuring composed answer correctness.
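A simplified worked example makes that decay concrete. Assume, as a strong simplification, that each required document lands in the top-k window independently with probability p equal to the per-document recall. The chance of covering an n-document chain is then p^n. Real retrieval outcomes are correlated, so treat this as an illustration of the trend rather than a prediction.

```python
def full_chain_probability(per_doc_recall, n_docs):
    """P(all n required documents retrieved), assuming independent per-document hits."""
    if not 0.0 <= per_doc_recall <= 1.0:
        raise ValueError("per_doc_recall must be in [0, 1]")
    return per_doc_recall ** n_docs


for n in (1, 2, 3, 4):
    print(n, f"{full_chain_probability(0.85, n):.2f}")
# 1 0.85
# 2 0.72
# 3 0.61
# 4 0.52
```

Under this assumption, a per-document recall of 0.85 leaves roughly a 39% chance of an incomplete chain for three-document queries.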

When GraphRAG or other graph-augmented approaches are worth the complexity

The MultiHop-RAG benchmark establishes that naive retrieval fails on multi-hop queries — it does not establish that any single architecture fixes all cases. The decision to adopt GraphRAG or another graph-augmented approach requires a workload-driven analysis, not a benchmark score read in isolation.

Microsoft's GraphRAG project states explicitly that "GraphRAG uses knowledge graphs to provide substantial improvements in question-and-answer performance when reasoning about complex information." The mechanism is relevant here: knowledge graphs encode entity relationships across documents explicitly, so multi-hop traversal can follow named relationships rather than relying on embedding similarity to infer a connection. For Comparison and Temporal query types, where the relationship between entities matters as much as the entities themselves, graph structure can provide a direct advantage.
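To make "follow named relationships" concrete, here is a toy sketch of entity-based expansion. Documents are linked when they mention a shared entity, and retrieval expands outward from seed documents for a fixed number of hops. This is not how Microsoft's GraphRAG is implemented: it uses an LLM to extract entities and relationships and builds community summaries. The hand-written entity sets and the `max_hops` limit are assumptions for illustration.

```python
from collections import defaultdict, deque


def build_entity_index(doc_entities):
    """Map each entity to the set of documents that mention it."""
    index = defaultdict(set)
    for doc, entities in doc_entities.items():
        for entity in entities:
            index[entity].add(doc)
    return index


def expand_via_entities(seed_docs, doc_entities, max_hops=1):
    """Breadth-first expansion doc -> shared entity -> doc, up to max_hops document hops."""
    index = build_entity_index(doc_entities)
    seen = set(seed_docs)
    frontier = deque((doc, 0) for doc in seed_docs)
    while frontier:
        doc, depth = frontier.popleft()
        if depth == max_hops:
            continue
        for entity in doc_entities.get(doc, ()):
            for neighbor in index[entity]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    frontier.append((neighbor, depth + 1))
    return seen


doc_entities = {  # hypothetical entity annotations
    "d1": {"Acme", "Q3 earnings"},
    "d2": {"Acme", "Globex"},
    "d3": {"Globex", "lawsuit"},
    "d4": {"weather"},
}
print(sorted(expand_via_entities({"d1"}, doc_entities, max_hops=2)))
```

Starting from `d1`, two hops reach `d3` through the shared entities Acme and Globex, even though `d3` never mentions Acme. A single embedding lookup has no comparable path to that document.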

Choose naive top-k RAG when:

- Queries are primarily single-document in nature (FAQ retrieval, clause lookup, factual lookups with a clear single source)

- Latency and infrastructure simplicity are primary constraints

- The evaluation corpus confirms that evidence rarely spans more than one document per query

- The team needs a reproducible baseline to measure any improvement from added complexity

Choose graph-augmented retrieval (GraphRAG or equivalent) when:

- Queries require multi-hop reasoning across named entities, particularly Comparison and Temporal types

- The knowledge base contains documents that share entities with distinct, complementary facts

- Answer quality is the primary production metric and the latency budget accommodates graph traversal

- Your MultiHop-RAG evaluation scores show a material gap between retrieval recall and answer accuracy

Choose a hybrid pipeline when:

- The query distribution mixes single-hop and multi-hop questions at production volume

- You want the speed of top-k retrieval for simple queries, with graph traversal as a fallback for detected multi-hop patterns

- Operational teams can maintain graph index freshness alongside the embedding index
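A query-type router for a hybrid pipeline can start as simple pattern matching before anything learned is justified. The cue lists below are hypothetical heuristics keyed to Comparison and Temporal phrasing. Validate any router like this against labeled queries from your own traffic before trusting it.

```python
import re

# Hypothetical cue patterns; tune and validate them against labeled production queries.
COMPARISON_CUES = re.compile(r"\b(compare|compared|versus|vs|than|both|difference between)\b", re.IGNORECASE)
TEMPORAL_CUES = re.compile(r"\b(before|after|earlier|later|since|changed|timeline)\b", re.IGNORECASE)


def route(query):
    """Return 'graph' for queries whose wording suggests multi-hop evidence, else 'top_k'."""
    if COMPARISON_CUES.search(query) or TEMPORAL_CUES.search(query):
        return "graph"
    return "top_k"


print(route("What changed between version 2.1 and 2.3 of the API?"))
print(route("Which two teams shipped the features that caused this regression?"))
```

The second example question from earlier in this article contains none of these cues and is routed to top-k, although it needs evidence from several documents. Keyword routing misses multi-hop queries that do not announce themselves, which is why the router's own miss rate has to be measured.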

Decision signals from your workload

The most reliable signal for architecture selection is empirical: evaluate your current pipeline on MultiHop-RAG, and on a labeled sample of your own production queries with known evidence documents, and measure where it breaks.

| Question complexity | Evidence spread | Latency tolerance | Recommended retrieval strategy |
|---|---|---|---|
| Single-hop, factual | 1 document | Low (< 500 ms) | Naive top-k |
| Single-hop, comparative | 1–2 documents | Medium | Top-k with re-ranking |
| Multi-hop, inferential | 2–3 documents | Medium–High | Graph-augmented or iterative retrieval |
| Multi-hop, temporal/comparative | 3–4 documents | High | GraphRAG or hybrid |
| Mixed workload | Variable | Mixed | Hybrid with query-type routing |

Pro Tip: Before committing to graph infrastructure, use the MultiHop-RAG dataset to measure your current system's gap between retrieval recall and answer accuracy on the query types that match your production distribution. If the gap is small, naive retrieval with better re-ranking may close it without the operational cost of a graph index.
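Measuring that gap per query type is a small aggregation over per-query results. The sketch below assumes hypothetical records with a `type`, an `evidence_recall` between 0 and 1, and a boolean `correct`. The 0.10 threshold is an illustrative cut-off, not a value from the paper.

```python
from collections import defaultdict


def gap_by_type(records, threshold=0.10):
    """Per query type: mean evidence recall, answer accuracy, and whether the gap exceeds threshold.

    records: dicts with hypothetical keys 'type', 'evidence_recall' (0..1), 'correct' (bool).
    threshold: illustrative cut-off for flagging a type for investigation.
    """
    grouped = defaultdict(list)
    for record in records:
        grouped[record["type"]].append(record)
    summary = {}
    for qtype, rows in grouped.items():
        recall = sum(r["evidence_recall"] for r in rows) / len(rows)
        accuracy = sum(bool(r["correct"]) for r in rows) / len(rows)
        summary[qtype] = {
            "recall": recall,
            "accuracy": accuracy,
            "gap": recall - accuracy,
            "investigate": recall - accuracy > threshold,
        }
    return summary


recs = [
    {"type": "comparison", "evidence_recall": 1.0, "correct": False},
    {"type": "comparison", "evidence_recall": 0.9, "correct": True},
    {"type": "inference", "evidence_recall": 1.0, "correct": True},
    {"type": "inference", "evidence_recall": 0.8, "correct": True},
]
for qtype, stats in gap_by_type(recs).items():
    print(qtype, stats)
```

Types that are not flagged are candidates for cheaper fixes such as re-ranking. Flagged types are where graph-augmented retrieval or better context assembly is worth testing.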

When naive retrieval is still the right baseline

Graph-augmented systems are not always superior — they are superior for specific workload characteristics. The MultiHop-RAG benchmark is a RAG evaluation instrument; it does not prove that GraphRAG wins in all production deployments.

Production Note: Keep naive top-k RAG as an instrumented, measurable baseline before adding graph complexity. Graph indexes introduce additional infrastructure components (index build pipelines, graph query layers, freshness maintenance), and their improvements should be quantified against a stable baseline rather than assumed. A system that shows no material improvement over baseline on your specific query distribution does not justify the added operational surface area.
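One standard way to quantify an improvement over a baseline, rather than assume it, is a paired bootstrap over per-query correctness on the same query set. The sketch below uses that technique; it is a common statistical choice, not a procedure the MultiHop-RAG paper prescribes. The resample count and seed are arbitrary defaults.

```python
import random


def paired_bootstrap_ci(baseline, candidate, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile interval for accuracy(candidate) - accuracy(baseline) on the same queries.

    baseline, candidate: equal-length lists of 0/1 correctness, aligned by query.
    Resampling whole queries keeps each pair together, which keeps the comparison fair.
    """
    if len(baseline) != len(candidate) or not baseline:
        raise ValueError("need two non-empty, aligned correctness lists")
    rng = random.Random(seed)
    n = len(baseline)
    diffs = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        diffs.append(sum(candidate[i] - baseline[i] for i in idx) / n)
    diffs.sort()
    lo = diffs[int((alpha / 2) * n_resamples)]
    hi = diffs[int((1 - alpha / 2) * n_resamples) - 1]
    return lo, hi


baseline = [0, 1, 0, 1, 1, 0, 1, 0]   # hypothetical naive top-k results
candidate = [1, 1, 0, 1, 1, 1, 1, 0]  # hypothetical graph-augmented results
print(paired_bootstrap_ci(baseline, candidate))
```

If the interval includes zero, your query set does not support a claimed improvement, and the added graph infrastructure is not yet justified by the data.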

Practical reproduction notes for architects and reviewers

To run a valid reproduction of the MultiHop-RAG benchmark, the minimum artifact set comes from two sources: the arXiv paper for methodology and the GitHub repository for evaluation assets.

| Artifact | Source | Notes |
|---|---|---|
| Benchmark paper | arXiv:2401.15391 | Methodology, query types, experiment design |
| Query dataset (2,556 queries) | yixuantt/MultiHop-RAG | Canonical query splits; do not regenerate |
| Evidence mappings | GitHub repository | 2–4 documents per query; must match paper splits |
| Document metadata | GitHub repository | Required for pipeline preprocessing |
| Evaluation metrics | Paper + repository | Separate retrieval and QA correctness metrics |

Preprocessing must preserve document metadata — the benchmark's evidence-linking mechanism depends on it. Pipelines that strip metadata during chunking or ingestion will break the evidence mappings and produce results that cannot be compared to the paper's findings.
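A quick post-ingestion check can catch this before any metrics are computed. For each query, the sketch below lists the evidence documents that no ingested chunk still points to. It assumes query records with an `evidence_list` of items keyed by `url`, and chunks stored as dicts with a `metadata` dict. Both layouts are assumptions to adapt to your own store.

```python
def unmapped_evidence(queries, chunks, doc_key="url"):
    """List (query_index, doc_id) pairs whose evidence document has no chunk carrying that id.

    queries: dicts with an 'evidence_list' of {doc_key: ...} items (assumed layout).
    chunks: dicts whose 'metadata' should still contain doc_key after ingestion.
    """
    ingested = {c["metadata"].get(doc_key) for c in chunks if c.get("metadata")}
    missing = []
    for i, query in enumerate(queries):
        for item in query.get("evidence_list", []):
            doc_id = item.get(doc_key)
            if doc_id not in ingested:
                missing.append((i, doc_id))
    return missing


queries = [{"evidence_list": [{"url": "u1"}, {"url": "u2"}]}]
chunks = [
    {"text": "...", "metadata": {"url": "u1"}},
    {"text": "...", "metadata": {}},  # metadata stripped during ingestion
]
print(unmapped_evidence(queries, chunks))
```

A non-empty result means some evidence chains cannot be completed by any retriever, so low scores on those queries would measure the ingestion bug rather than retrieval.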

What to verify before trusting your own run

Small deviations in dataset handling compound into significant metric differences for multi-hop evaluation, because the evaluation depends on exact evidence set coverage per query.

Watch Out: Before accepting your reproduction run as valid, verify three things: (1) Dataset version — confirm your query files match the repository's current canonical splits, not an earlier or locally modified version. (2) Query split handling — train/dev/test splits must match exactly; cross-contamination will inflate scores. (3) Metric implementation — retrieval recall and answer correctness must be computed on the same evidence set definitions used in the paper. Discrepancies in any of these three areas will produce metrics that look plausible but are not comparable to published results.

A fourth check belongs on the same list: confirm that preprocessing preserved the metadata structure exactly. If it did not, the evidence-linking path no longer matches the repository's canonical artifacts, even when the text chunks are unchanged.
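The version and split checks are easy to script. The sketch below hashes a dataset file so the exact version can be recorded next to your results, and reports any query text shared between the splits your run uses. The function names are hypothetical, and comparing raw query strings is a simplifying assumption; near-duplicates would need fuzzier matching.

```python
import hashlib
from itertools import combinations


def file_sha256(path, block_size=1 << 20):
    """Hex SHA-256 of a file; record it alongside results to pin the dataset version."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for block in iter(lambda: handle.read(block_size), b""):
            digest.update(block)
    return digest.hexdigest()


def split_overlap(splits):
    """Return {(split_a, split_b): shared queries} for every pair of splits that share query text."""
    overlaps = {}
    for (name_a, qs_a), (name_b, qs_b) in combinations(splits.items(), 2):
        shared = set(qs_a) & set(qs_b)
        if shared:
            overlaps[(name_a, name_b)] = shared
    return overlaps


splits = {"dev": ["q1", "q2"], "test": ["q2", "q3"], "train": ["q4"]}
print(split_overlap(splits))
```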

FAQ: MultiHop-RAG, multi-hop QA, and RAG evaluation

What is MultiHop-RAG?

MultiHop-RAG is a benchmark dataset and evaluation framework for retrieval-augmented generation systems, specifically designed to stress retrieval pipelines on questions whose answers require combining evidence from two to four documents. It contains 2,556 queries across four types (Inference, Comparison, Temporal, Null) and is available through the yixuantt/MultiHop-RAG repository.

How does MultiHop-RAG benchmark retrieval-augmented generation?

The benchmark runs two evaluation layers: a retrieval layer that compares embedding models on evidence recall, and a QA layer that measures end-to-end answer correctness requiring multi-document composition. The gap between these two layers is the core diagnostic signal.

Why does naive retrieval fail in multi-hop question answering?

Naive top-k retrieval optimizes for chunk-level semantic relevance to the query. When evidence is distributed across two to four documents, a fixed-k retrieval window has no guarantee of covering every required document, so it can miss parts of the evidence chain. The chunks it retrieves are not wrong; the set is incomplete.

Is GraphRAG better than naive RAG for multi-hop QA?

GraphRAG, which uses knowledge graphs to encode entity relationships across documents, addresses multi-hop QA structurally by making cross-document relationships traversable without relying on embedding proximity. Microsoft's project page states that it provides "substantial improvements in question-and-answer performance when reasoning about complex information." However, MultiHop-RAG does not prove that graph augmentation is universally superior. The right architecture depends on your query distribution, evidence spread, and operational constraints.

How do you evaluate RAG systems on multi-hop queries?

Measure retrieval recall and end-to-end answer accuracy separately, using a benchmark where evidence is known to be distributed across multiple documents. MultiHop-RAG provides that structure with typed queries and explicit evidence mappings. Running only retrieval metrics is insufficient.

Bottom Line: MultiHop-RAG proves that naive top-k retrieval is structurally inadequate for multi-hop QA — not because it retrieves irrelevant chunks, but because it cannot guarantee complete evidence coverage when answers require chaining across two to four documents. Use the benchmark to measure the gap between retrieval recall and answer accuracy in your own pipeline before choosing between naive RAG, GraphRAG, or a hybrid approach.

Sources and references

| Source | Type | Why it matters |
|---|---|---|
| MultiHop-RAG: Benchmarking Retrieval-Augmented Generation for Multi-Hop Queries (arXiv:2401.15391) | Primary paper | Methodology, query types, and benchmark experiments |
| yixuantt/MultiHop-RAG (GitHub) | Canonical repository | Benchmark dataset, query splits, evidence mappings, and metadata artifacts |
| MultiHop-RAG on Hugging Face Papers | Reference artifact | Paper page with community discussion and links |
| MultiHop-RAG on OpenReview | Reference artifact | Peer-reviewed version with query type taxonomy detail |
| Microsoft GraphRAG project page | Reference artifact | Official documentation for graph-augmented retrieval approach; source for GraphRAG capability claims |

Keywords: MultiHop-RAG, arXiv:2401.15391, yixuantt/MultiHop-RAG, multi-hop question answering, retrieval-augmented generation, top-k retrieval, GraphRAG, embedding models, evidence chaining, supporting evidence, RAG evaluation, news article dataset, OpenReview, Hugging Face Papers
