Bottom line: buy when time-to-value and shared observability matter most

Bottom Line: Default to a managed LLM evaluation platform if your team is shipping a production RAG system in the next quarter, stakeholders need shared dashboards, or compliance requires audit-ready trace retention. Build your own pipeline only when your CI workflow is already mature, your datasets are internally owned and stable, and you have engineers who can absorb the ongoing cost of golden-set refresh, regression-gate tuning, and production monitoring — costs that compound every month and are invisible in any library's README. For most teams, the evaluator library itself (RAGAS, DeepEval, Vectara's Open RAG Eval) is the cheap part. The expensive part is everything around it.

RAG observability covers the full runtime picture of a retrieval-augmented generation system: tracing requests through retrieval and generation, evaluating answer quality against ground truth or an LLM judge, and monitoring production traffic for drift and regressions. Vectara's Open RAG Eval, released in April 2025 under Apache 2.0, frames the core pitch cleanly: it "lets teams evaluate RAG systems without needing predefined answers, making it faster and easier to compare solutions or configurations." That is a genuine capability advantage — but it is also exactly the kind of framing that makes the build path look cheaper than it is, because the evaluator is not the expensive part.

What actually drives the build-vs-buy decision in RAG eval

The decision is not which metric library you prefer. It is who owns the full operating model: dataset curation, regression gates, trace storage, alert review, and stakeholder-facing dashboards. A library like RAGAS gives you metrics; a managed LLM evaluation platform gives you a workflow. Those are different products solving different problems.

LangSmith documentation explicitly separates offline evaluation on curated datasets during development from online evaluation on production traces — confirming that evaluation is not a single artifact but a split workflow spanning pre-release and live traffic. When you build, you own both halves. When you buy, the platform unifies them.

| Factor | Build | Buy |
|---|---|---|
| Ownership model | Full internal ownership: code, datasets, infra, dashboards | Vendor owns infra and workflow; team owns datasets and review |
| Operating burden | High: golden-set refresh, gate tuning, trace storage, alert review | Moderate: configuration, retention policy, subscription management |
| Time to first metric | Weeks to months (CI integration + dataset bootstrap) | Days (instrument SDK, enable tracing, run first eval) |
| RAG CI pipeline integration | Native: you define the harness | Requires SDK/API instrumentation per platform |
| Collaboration | Manual (shared repo, Slack screenshots) | Built-in (shared traces, annotations, history) |
| Compliance / audit trail | Self-managed log retention | Platform-managed with configurable retention |

Why the evaluator is not the expensive part

The evaluator — the code that scores a retrieval step or grades a generated answer — is typically a few hundred lines, installable from PyPI, and straightforward to run in CI. What is not straightforward is everything the evaluator depends on:

| Cost item | One-time setup | Recurring maintenance |
|---|---|---|
| Golden-set creation | High (initial labeling sprint) | Ongoing: label drift as docs and intents change |
| Regression gates | Moderate (threshold decisions) | Ongoing: retune when model or retrieval params change |
| RAG CI pipeline wiring | Moderate (CI config, secrets) | Low once stable, but breaks on dependency upgrades |
| Dashboard / trace storage | Moderate (infra provisioning) | Ongoing: storage growth, retention policy enforcement |
| Production incident review | None (deferred) | High: triage time per alert, per regression cycle |

LangSmith's online-evaluator documentation notes that traces meeting evaluation criteria are preserved for investigation, and that evaluation activity can affect trace pricing — meaning recurring storage and execution costs are baked into the production-monitoring workflow regardless of whether you use their platform or run your own. Phoenix defaults to indefinite trace retention in self-hosted mode, which means teams that run their own observability stack still accumulate long-lived storage obligations; the cost shifts from a subscription line to an infrastructure bill.

Which teams usually underestimate the recurring work

Small teams underestimate recurring work most severely, because they bootstrap evaluation during a sprint and then never staff the maintenance cycle. A 2025 survey of RAG evaluation methodology (arXiv:2504.14891) identifies the root cause explicitly: enterprise RAG evaluation must span retrieval quality, factual accuracy, safety, latency, and cost — not a single metric — and "the high costs of data construction" make this a sustained burden rather than a one-time project.

Pro Tip: Map your team to the ownership risk before choosing a path.

- Small team (1–3 ML engineers, no dedicated platform eng): Build cost is underpriced; recurring work will crowd out feature work within 6 months. Start with a managed platform.

- Mid-size team (4–10 engineers, a QA or eval focus): Hybrid is viable — own CI-side evaluation in RAGAS or DeepEval, buy production RAG observability from a platform.

- Platform-heavy team (dedicated infra/ML platform org): Build is justified if datasets are proprietary, compliance is strict, and the team has bandwidth to absorb golden-set refresh and incident review as an ongoing function.

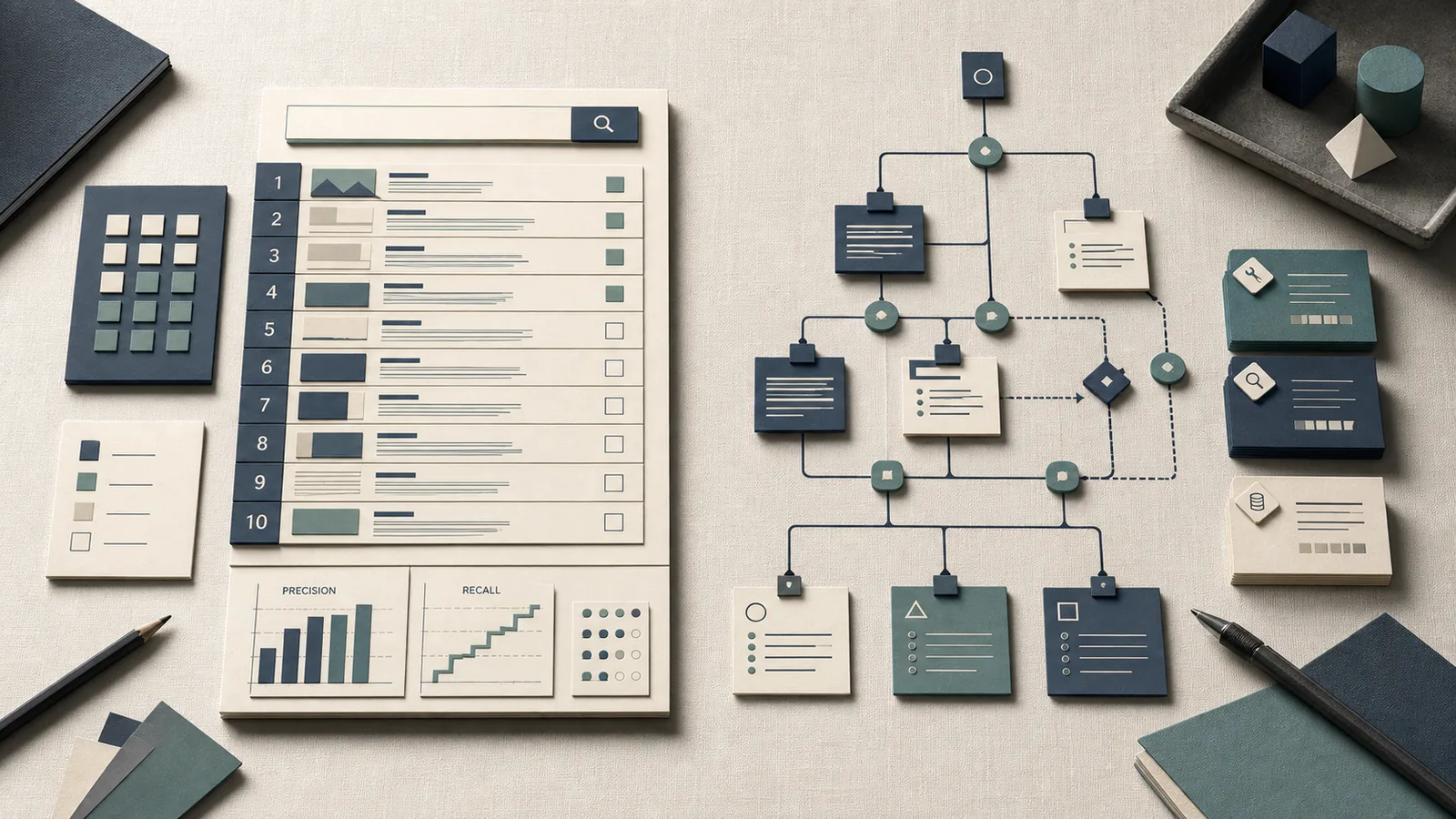

How the market splits across libraries, hosted platforms, and hybrid stacks

The RAG observability and evaluation tool market divides into three tiers that differ primarily in operating model, not feature count.

| Tier | Tools | Who owns infra | Who owns datasets | Retention |
|---|---|---|---|---|
| Open-source libraries | RAGAS, TruLens, DeepEval, Open RAG Eval, Promptfoo | You | You | You |
| Managed LLM evaluation platform | LangSmith, Arize Phoenix, Langfuse, Braintrust | Vendor (or self-hosted with your infra) | You | Vendor-managed or configurable |
| Hybrid | Library for CI + platform for production | Split | You | Split |

Open-source evaluation libraries

RAGAS positions itself as the tool that moves teams "from 'vibe checks' to systematic evaluation loops," which accurately describes its scope: it handles metric computation at development time or in CI, not production tracing. DeepEval adds retriever-specific metrics and test-case infrastructure. Vectara's Open RAG Eval targets configuration comparison without predefined answers, which lowers the dataset bootstrap cost for teams that cannot afford a manual labeling sprint. Promptfoo fits adjacent CI work such as prompt-level testing and red-teaming, and it pairs naturally with library-based eval when the team wants a lightweight gate before traces ever reach production.

| Library | Primary use case | Ownership boundary | Maintenance load |
|---|---|---|---|
| RAGAS | Offline / CI metric scoring | All internal | Dataset refresh, metric config |
| TruLens | LLM app evaluation with feedback functions | All internal | Feedback logic, storage |
| DeepEval | Test-case eval + retriever metrics | All internal | Test harness, metric tuning |
| Vectara Open RAG Eval | Config comparison without predefined answers | All internal | Metric selection, scoring cadence |
| Promptfoo | Prompt-level testing and red-teaming | All internal | Test cases, assertions, model routing |

All five push the full RAG CI pipeline burden — harness engineering, dataset management, reporting, and production follow-up — onto the internal team. They are best treated as CI-layer tools unless paired with separate trace infrastructure.

Managed observability platforms

Managed platforms shift the operating model: instead of owning trace storage and the evaluation workflow UI, teams configure a vendor product that already provides those capabilities.

LangSmith bundles "observability, tracing, and evaluations in the UI and API" and supports both offline evaluation on curated datasets and online evaluation against live production traces. Arize Phoenix provides trace-centric RAG observability with configurable retention, defaulting to indefinite in self-hosted mode. Langfuse and Braintrust target similar workflows with different collaboration and prompt-management features.

| Platform | Tracing | Collaboration | Retention responsibility | Self-host option |
|---|---|---|---|---|
| LangSmith | Yes | Annotations, shared runs | Vendor or self-hosted | Yes |
| Arize Phoenix | Yes | Team dashboards | Vendor or self-hosted (indefinite default) | Yes |
| Langfuse | Yes | Prompt versioning, team scores | Vendor or self-hosted | Yes |
| Braintrust | Yes | Dataset management, scoring UI | Vendor | Limited |

Existing LangSmith users became billable in July 2024, so adopting it means budgeting for a recurring subscription line; check current pricing for the other vendors in this tier. On the judge side, teams commonly compare GPT-4o and Claude 3.5 Sonnet for rubric-based scoring. On the infra side, self-hosted stacks pick up storage costs as trace volume grows, plus GPU compute (for example, an NVIDIA H100) when local model execution is part of the design.

Hybrid setups that often win in practice

The most operationally sustainable configuration for mid-size teams is to keep CI-side evaluation in an open-source library and production monitoring in a managed platform. RAGAS or DeepEval handles the RAG CI pipeline gate on every pull request; LangSmith or Phoenix handles trace retention, alerting, and stakeholder dashboards in production.

| Model | CI eval | Production RAG observability | Who owns each side |
|---|---|---|---|
| CI-only | Open-source library | None (gap) | Fully internal |
| Production-only | None (gap) | Managed platform | Vendor-managed |
| Full-stack hybrid | Open-source library | Managed platform | Split ownership |

The main constraint is operational: two systems mean two maintenance surfaces and a handoff point between test-time scores and production traces that teams must actively bridge.

What the money looks like over 12 months

No vendor publishes a universal price card that supports a precise side-by-side table. LangSmith confirmed that billing started for existing users in July 2024 and that evaluation activity can increase trace costs, but specific per-seat or per-trace rates have to be confirmed with each vendor. The 12-month view below therefore compares cost categories rather than dollar figures, on the premise that judge calls, retention, and engineering time all grow with usage.

| Cost bucket | Build (DIY) | Buy (managed platform) |
|---|---|---|
| Platform subscription | $0 (software) | Vendor subscription, usage, or seat pricing |
| Cloud hosting / infra | Self-managed trace storage and compute | Included or add-on, depending on deployment |
| LLM judge / API usage | Depends on sample rate and reruns | Often separate; can exceed the subscription |
| Engineering time: initial build | Full internal setup and instrumentation | Faster rollout through SDKs and UI setup |
| Engineering time: ongoing maintenance | Continuous ownership of gates, drift, and review | Lower day-to-day overhead, but still requires configuration and triage |
| Dashboard / trace retention | Self-managed storage policy | Included or configurable |
| Production incident review | Owned internally | Shared with platform tooling |

One-time build costs versus recurring buy costs

| Cost | Type | Build | Buy |
|---|---|---|---|
| Evaluator code | One-off | Internal implementation | SDK integration |
| CI wiring | One-off | Internal test harness | Platform configuration |
| Initial golden set | One-off (but drifts) | Internal labeling effort | Platform may assist, still your data |
| Platform subscription | Monthly (buy only) | N/A | Recurring |
| Trace storage | Monthly | Your infra bill | Included or add-on |
| RAG CI pipeline maintenance | Recurring | Ongoing engineering | Ongoing configuration |
| Incident triage | Incident-driven | Unbounded | Partially tooled via platform alerts |

The asymmetry is structural: build front-loads engineering cost but leaves recurring maintenance open-ended; buy inverts the profile with a predictable subscription but caps how much you customize the evaluation workflow.
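The asymmetry can be made concrete with a small cumulative-cost comparison. The sketch below is illustrative only: the function names and every figure are placeholders (assumptions, not vendor prices or measured costs), and you would substitute your own estimates for each cost bucket.

```python
from __future__ import annotations

from itertools import accumulate


def cumulative_costs(one_time: float, monthly: list[float]) -> list[float]:
    """Running total at the end of each month, starting from the one-time cost."""
    return list(accumulate(monthly, initial=one_time))[1:]


def first_month_cheaper(build: list[float], buy: list[float]) -> int | None:
    """First 1-indexed month where cumulative build cost drops below buy, else None."""
    for month, (build_total, buy_total) in enumerate(zip(build, buy), start=1):
        if build_total < buy_total:
            return month
    return None


# Placeholder inputs in thousands of dollars; replace with your own estimates.
MONTHS = 24
build = cumulative_costs(one_time=60.0, monthly=[5.0] * MONTHS)  # large setup, lower run rate
buy = cumulative_costs(one_time=10.0, monthly=[8.0] * MONTHS)    # small setup, subscription

print(first_month_cheaper(build[:12], buy[:12]))  # crossover within the first year, if any
print(first_month_cheaper(build, buy))            # crossover within two years, if any
```

With these placeholder inputs the build path does not catch up inside 12 months. The result is driven almost entirely by the recurring maintenance figure, which is the input teams tend to underestimate.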

The real cost of golden sets and regression gates

Golden sets are the most underestimated recurring cost in any DIY RAG CI pipeline budget. The RAG evaluation survey (arXiv:2504.14891) cites "the high costs of data construction" as an explicit barrier to systematic enterprise evaluation, and the same survey enumerates correctness, factuality, latency, cost, and safety as distinct evaluation dimensions — each requiring labeled examples or rubrics.

| Maintenance task | Trigger | Estimated labor per cycle |
|---|---|---|
| Golden-set label refresh | Document corpus update, product change | Labeling and review work |
| Drift review | Quarterly or post-deploy | Re-scoring and analysis |
| Regression gate threshold tuning | Model upgrade, retrieval param change | Threshold adjustment work |
| Rubric update for LLM judge | New failure mode or user complaint | Prompt and rubric revision |
| Gate failure triage | Every CI failure | Engineering investigation |

These costs accrue whether you use RAGAS, DeepEval, or a custom evaluator. A managed platform may provide tooling that accelerates triage and annotation, but it does not eliminate the underlying data-curation work.
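One way to make golden-set refresh less ad hoc is to record, at labeling time, a hash of the source document each example was written against, then flag examples whose source has since changed or disappeared. The following is a minimal sketch under that assumption; `GoldenExample`, `find_stale_examples`, and the corpus layout (a dict of document ID to text) are hypothetical and not part of RAGAS, DeepEval, or any platform.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenExample:
    question: str
    expected_answer: str
    source_doc_id: str
    source_doc_sha256: str  # hash of the document text at labeling time


def sha256_text(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def find_stale_examples(
    golden_set: list[GoldenExample],
    current_corpus: dict[str, str],  # doc_id -> current document text
) -> list[GoldenExample]:
    """Return examples whose source document was edited or removed since labeling."""
    stale = []
    for example in golden_set:
        current_text = current_corpus.get(example.source_doc_id)
        if current_text is None or sha256_text(current_text) != example.source_doc_sha256:
            stale.append(example)
    return stale


# Tiny illustrative corpus: the refund policy changed after the label was written.
old_policy = "Refunds are available within 30 days."
golden = [
    GoldenExample("What is the refund window?", "30 days", "refunds", sha256_text(old_policy)),
    GoldenExample("Where is support located?", "Lisbon", "support", sha256_text("Support is in Lisbon.")),
]
corpus = {
    "refunds": "Refunds are available within 14 days.",
    "support": "Support is in Lisbon.",
}
for example in find_stale_examples(golden, corpus):
    print("needs relabeling:", example.question)
```

A check like this only detects that the source changed. Deciding whether the expected answer is still correct remains labeling and review work.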

When model-judge spend becomes the hidden tax

Automated evaluation at scale — the core promise of tools like Vectara's Open RAG Eval and online evaluators in LangSmith — replaces expensive human labeling with LLM-judge API calls. The trade-off is that judge spend scales with sample volume and rerun frequency, and LangSmith's documentation explicitly notes that evaluation activity can increase trace pricing.

| Judge workload driver | Cost behavior | Notes |
|---|---|---|
| Per-trace online eval | Linear with traffic | Judge calls on production traces add up over time |
| CI reruns on regression | Bursty | Each failed gate can trigger full re-evaluation of the golden set |
| Alert-triggered investigations | Incident-driven | A single latency spike can trigger many re-scored traces |
| Model used as judge | Varies by model | GPT-4o and Claude 3.5 Sonnet token rates differ; verify current pricing before budgeting |

At low sample rates, judge spend is negligible. At production scale, the API bill from the LLM evaluation platform or from your own OpenAI/Anthropic account can exceed the platform subscription cost itself.
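A back-of-the-envelope estimate helps locate the point where judge spend stops being negligible. The sketch below assumes a simple model (calls times tokens times price per token) and uses placeholder token counts and rates. None of the numbers are real provider prices, and `JudgeWorkload` is a hypothetical name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JudgeWorkload:
    """Inputs for a rough monthly LLM-judge budget. All values are placeholders."""
    traces_per_month: int          # production traces captured
    sample_rate: float             # fraction of traces sent to the judge, 0.0 to 1.0
    input_tokens_per_call: int     # rubric + question + retrieved context + answer
    output_tokens_per_call: int    # judge verdict and rationale
    usd_per_million_input: float   # look up the provider's current rate
    usd_per_million_output: float  # look up the provider's current rate
    reruns_per_month: int = 0      # full golden-set reruns triggered by failed gates
    golden_set_size: int = 0       # examples scored on each rerun


def monthly_judge_cost(w: JudgeWorkload) -> float:
    """Estimated monthly judge spend in USD for online eval plus CI reruns."""
    if not 0.0 <= w.sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    online_calls = w.traces_per_month * w.sample_rate
    ci_calls = w.reruns_per_month * w.golden_set_size
    cost_per_call = (
        w.input_tokens_per_call * w.usd_per_million_input
        + w.output_tokens_per_call * w.usd_per_million_output
    ) / 1_000_000
    return (online_calls + ci_calls) * cost_per_call


# Same traffic, two sampling policies; rates here are illustrative only.
base = dict(
    traces_per_month=1_000_000,
    input_tokens_per_call=2_000,
    output_tokens_per_call=200,
    usd_per_million_input=1.0,
    usd_per_million_output=2.0,
)
for rate in (0.01, 0.10):
    cost = monthly_judge_cost(JudgeWorkload(sample_rate=rate, **base))
    print(f"sample_rate={rate:.2f}: ${cost:,.2f}/month")
```

Because cost is linear in `sample_rate`, the sampling policy is usually the first lever to adjust before switching judge models.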

Decision framework by team maturity and operating constraints

| Signal | Lean buy | Lean build | Lean hybrid |
|---|---|---|---|
| Team size | 1–3 ML engineers | Dedicated platform org | 4–10 engineers |
| CI maturity | Early / ad-hoc | Mature, stable pipelines | Intermediate |
| Dataset ownership | External / evolving | Internal, curated, stable | Mixed |
| Compliance / audit | Required | Optional | Selective |
| Stakeholder dashboards | Needed now | Not required | Partial |
| RAG observability budget | Subscription tolerable | Minimized | Bounded |
| Incident rate | High / unknown | Low and characterized | Moderate |
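
The table can be applied as a simple tally: record which column each signal falls into and see which leaning collects the most votes. The sketch below encodes a subset of the rows. The key names and the equal weighting of signals are assumptions made for illustration, and a real decision would weight compliance or data-residency constraints far more heavily than a vote count does.

```python
from collections import Counter

# Signal -> observed value -> leaning, transcribed from a subset of the table rows.
LEANINGS = {
    "ci_maturity": {"early": "buy", "mature": "build", "intermediate": "hybrid"},
    "dataset_ownership": {"external": "buy", "internal": "build", "mixed": "hybrid"},
    "compliance": {"required": "buy", "optional": "build", "selective": "hybrid"},
    "dashboards": {"needed_now": "buy", "not_required": "build", "partial": "hybrid"},
    "incident_rate": {"high_or_unknown": "buy", "low": "build", "moderate": "hybrid"},
}


def tally_leanings(signals: dict[str, str]) -> Counter:
    """Count how many observed signals point to buy, build, or hybrid."""
    votes = Counter()
    for signal, value in signals.items():
        votes[LEANINGS[signal][value]] += 1  # KeyError on an unknown signal or value
    return votes


team = {
    "ci_maturity": "intermediate",
    "dataset_ownership": "internal",
    "compliance": "selective",
    "dashboards": "needed_now",
    "incident_rate": "moderate",
}
print(tally_leanings(team).most_common())
```

Treat the output as a conversation starter rather than a verdict: a near-even split is itself a signal that the hybrid path deserves a closer look.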

Choose buy when you need fast rollout and shared visibility

Buy wins decisively when multiple stakeholders need shared trace history, when evaluation results must feed a reporting workflow outside the engineering team, or when the team cannot absorb the initial build cost without delaying the product.

According to its self-hosted documentation, LangSmith bundles observability, tracing, and evaluations into a single UI and API, which removes the need to stitch together separate tools for each layer. Its offline and online evaluation modes cover both pre-release validation and production monitoring from a single configuration surface.

| Condition | Managed platform advantage |
|---|---|
| Distributed team needing shared annotation | Built-in collaboration, no shared-repo friction |
| Stakeholder-facing quality reporting | Dashboard out of the box |
| Compliance trace retention required | Configurable retention, access control |
| Fast time-to-value | Days to first dashboard vs. weeks for DIY |
| High incident rate | Alerting and triage tooling included |

Choose build when your workflow is stable and infra ownership is cheap

Build is rational when CI is already mature, datasets are proprietary and internally maintained, compliance does not require third-party trace storage, and the engineering team treats platform ownership as a normal function rather than a tax.

RAGAS and DeepEval both fit this model well — they provide evaluation logic as a library that plugs into an existing RAG CI pipeline without requiring a managed backend.

| Condition | DIY advantage |
|---|---|
| Proprietary datasets that cannot leave the network | Full data residency control |
| Mature CI with existing test infra | Incremental integration, no new vendor dependency |
| Modest trace retention needs | Self-managed storage can be cheaper than a subscription |
| Stable retrieval and generation stack | Regression gate tuning is infrequent |
| Compliance that prohibits third-party data access | Build is the only option |

Choose hybrid when product risk is high but budgets are bounded

Hybrid ownership assigns RAG CI pipeline evaluation (per-PR gates, offline benchmarks) to an open-source library and production RAG observability (traces, alerts, stakeholder dashboards) to a managed platform. This confines subscription cost to the production side while preserving production visibility.

| Layer | Ownership | Tooling example |
|---|---|---|
| Offline / dev-time eval | Internal | RAGAS, DeepEval, Open RAG Eval, Promptfoo |
| CI regression gate | Internal | RAGAS + pytest + CI runner |
| Production trace capture | Vendor | LangSmith, Phoenix, Langfuse |
| Production eval / alerting | Vendor | LangSmith online evaluators |
| Incident review | Shared | Platform UI + internal triage process |

The operational cost of hybrid is maintaining two systems and a handoff: CI scores do not automatically feed production dashboards unless you build that bridge, which reintroduces engineering overhead.
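One lightweight form of that bridge is to stamp both CI eval runs and production traces with the same release identifier, then join on it. The sketch below assumes you can export per-trace scores from your platform and per-run scores from CI as plain records. The record types and field names are hypothetical and do not correspond to any vendor's export format.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CiRun:
    release: str         # e.g. a git commit SHA recorded by the CI job
    faithfulness: float  # score on the golden set at merge time


@dataclass(frozen=True)
class ProdTraceScore:
    release: str         # same identifier, attached as metadata on each trace
    faithfulness: float  # online judge score for one production trace


def ci_vs_production(
    ci_runs: list[CiRun], prod_scores: list[ProdTraceScore]
) -> dict[str, tuple[float, float]]:
    """Map release -> (CI score, mean production score) for releases seen in both."""
    by_release: dict[str, list[float]] = {}
    for score in prod_scores:
        by_release.setdefault(score.release, []).append(score.faithfulness)
    return {
        run.release: (run.faithfulness, mean(by_release[run.release]))
        for run in ci_runs
        if run.release in by_release
    }


ci = [CiRun("a1b2c3", 0.90), CiRun("d4e5f6", 0.92)]
prod = [ProdTraceScore("a1b2c3", 0.80), ProdTraceScore("a1b2c3", 0.60)]
for release, (ci_score, prod_score) in ci_vs_production(ci, prod).items():
    print(f"{release}: CI={ci_score:.2f} production={prod_score:.2f}")
```

A persistent gap between the two columns for the same release is a hint that the golden set no longer resembles production traffic.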

Risks, objections, and where automated eval still lies

Automated evaluation across any stack — library or platform — carries systematic blind spots that compound when teams treat metric coverage as a proxy for answer quality.

Watch Out: Two failure modes recur regardless of which tool you choose. First, a high retrieval score (precision, recall, NDCG) does not guarantee user task success or satisfaction; retrieval metrics measure what was returned, not whether the answer was useful. Second, stale golden sets create false confidence — if your labeled examples were written against an older version of the corpus or product, your CI gate can pass cleanly while production quality degrades. Both failure modes are invisible to the evaluator unless you actively manage golden-set freshness and supplement retrieval scores with generation and end-to-end metrics.

The RAG evaluation survey (arXiv:2504.14891) is explicit: enterprise RAG evaluation must account for retrieval quality, factual accuracy, safety, latency, and cost simultaneously, and answer quality cannot be collapsed to a single metric. Vectara's Open RAG Eval reports metrics such as UMBRELA and Hallucination, which are meaningful signals, but they remain metrics, not guarantees of user outcome.

Why metric coverage can look better than answer quality

A RAG observability dashboard that shows green retrieval scores can mask generation failures. Retrieval precision tells you the retrieved chunks were topically relevant; it does not tell you the generated answer was factually correct, safe, or responsive to the user's actual intent.

Watch Out: Single-number dashboards that aggregate retrieval and generation scores into one composite metric are especially dangerous. They collapse multiple independent failure modes — chunk relevance, answer faithfulness, safety — into a figure that can improve on one dimension while degrading on another. Track retrieval, generation, and end-to-end metrics separately and set independent gates for each.
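The difference between a composite score and independent gates is easy to demonstrate. In the sketch below, the metric names, thresholds, and scores are made-up placeholders. It is a generic check that could sit inside a pytest test or CI script, not the API of any particular eval library.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Gate:
    metric: str
    minimum: float


def failed_gates(scores: dict[str, float], gates: list[Gate]) -> list[str]:
    """Return one message per gate that is missing a score or falls below its minimum."""
    failures = []
    for gate in gates:
        value = scores.get(gate.metric)
        if value is None:
            failures.append(f"{gate.metric}: missing score")
        elif value < gate.minimum:
            failures.append(f"{gate.metric}: {value:.3f} < {gate.minimum:.3f}")
    return failures


# Placeholder scores: retrieval looks healthy while generation has regressed.
scores = {"retrieval_precision": 0.95, "faithfulness": 0.60, "end_to_end": 0.85}
gates = [
    Gate("retrieval_precision", 0.80),
    Gate("faithfulness", 0.80),
    Gate("end_to_end", 0.80),
]

composite_passes = mean(scores.values()) >= 0.75  # a single averaged gate
print("composite gate passes:", composite_passes)
print("independent gate failures:", failed_gates(scores, gates))
```

Here the averaged score clears its threshold while the faithfulness gate fails, which is exactly the masking effect a composite dashboard produces.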

The survey identifies factual accuracy and safety as distinct evaluation dimensions that retrieval scores cannot proxy. Teams that gate CI on retrieval precision alone risk shipping factual errors at scale.

Where production monitoring changes the ownership decision

Production monitoring introduces costs that do not appear in any library's feature list: alert noise reduction, triage workflows, trace retention policies, and incident review time. LangSmith's online evaluators preserve traces that meet evaluation criteria for investigation, which creates a recurring retention obligation that grows with production traffic. Phoenix's indefinite default retention in self-hosted mode means storage accumulates without a ceiling unless you configure explicit policies.
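The effect of a retention ceiling on storage is simple arithmetic, sketched below. The traffic volume and average trace size are placeholders (assumptions, not measurements from any platform), and the model ignores compression, indexes, and replication.

```python
from __future__ import annotations


def stored_gib(
    traces_per_day: int,
    avg_trace_kib: float,
    days_elapsed: int,
    retention_days: int | None = None,  # None models indefinite retention
) -> float:
    """Approximate trace storage in GiB after `days_elapsed` days of steady traffic."""
    kept_days = days_elapsed if retention_days is None else min(days_elapsed, retention_days)
    return traces_per_day * avg_trace_kib * kept_days / (1024 * 1024)


# Placeholder workload: 100k traces per day at 10 KiB each.
for day in (30, 180, 365):
    unlimited = stored_gib(100_000, 10, day)
    capped = stored_gib(100_000, 10, day, retention_days=30)
    print(f"day {day:>3}: indefinite={unlimited:7.1f} GiB  30-day policy={capped:5.1f} GiB")
```

With indefinite retention the footprint grows linearly with elapsed time. With an explicit policy it plateaus once the retention window fills.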

Production Note: The moment you enable production monitoring — regardless of whether you use a managed platform or self-hosted tooling — you commit to: (1) a storage cost that scales with traffic, (2) an alert noise calibration problem (too sensitive = alert fatigue; too loose = missed regressions), and (3) an incident review process that someone must own. These responsibilities do not disappear by choosing build over buy; they only shift from a vendor's platform to your own infrastructure and engineering time. If no one on the team currently owns the production incident review function, buying a platform does not create that ownership — it only provides the tooling.

The ownership decision permanently changes once trace retention, auditability, and stakeholder-facing reporting become requirements. At that point, the question is not whether to have a platform, but whether to buy one or operate one yourself.

FAQ: build vs buy RAG observability and evaluation

What is RAG observability?

RAG observability is the practice of capturing, evaluating, and monitoring the full request lifecycle of a RAG system in production — from query receipt through document retrieval to generated response delivery. It is distinct from offline evaluation in that it operates on live traffic rather than curated test sets.

Tracing, evaluation, and monitoring are related but not interchangeable. A team that only runs offline evaluation has no production observability. A team that only traces has no quality signal.

How do you evaluate a RAG pipeline?

A complete evaluation program spans three layers: an offline benchmark, a CI regression gate, and production monitoring. The RAG CI pipeline covers the first two; production monitoring covers the third.

Evaluation should cover both retrieval (chunk relevance, recall) and generation (faithfulness, factuality, safety) independently. Merging them into a single score creates the false-confidence problem described in the risks section.

What are the best tools for RAG evaluation?

Tool choice follows ownership model. The best tool for CI is not the best tool for production monitoring; libraries and platforms serve different functions.

RAGAS is strong for CI-time metric scoring, DeepEval for test-case eval and retriever metrics, and Vectara Open RAG Eval for configuration comparison without predefined answers. LangSmith fits combined dev and production evaluation, Arize Phoenix fits trace-centric observability with retention control, Langfuse fits prompt versioning and team scoring, Braintrust fits dataset management and scoring UI, and Promptfoo fits prompt-level testing and red-teaming.

No tool is "best" in the abstract. Fit to your ownership model and retention requirements determines which tool wins for your team.

How much does an LLM observability platform cost?

Platform cost is not a single line item. Existing LangSmith users became billable in July 2024, evaluation activity can increase trace pricing, and self-hosted retention adds storage and compute costs. On top of that, judge-model spend varies by provider; GPT-4o and Claude 3.5 Sonnet are common choices for rubric-based scoring, and local or self-hosted infrastructure may require hardware such as an NVIDIA H100 if you run heavier workloads in-house.

Trace storage, judge API usage, and engineering time often matter more than the nominal platform fee. Verify current token pricing before finalizing a budget.

Sources & References

- Vectara: Introducing Open RAG Eval — primary source for Open RAG Eval framing and automated evaluation without predefined answers

- LangSmith evaluation documentation — offline and online evaluation workflow definitions

- LangSmith self-hosted documentation — observability, tracing, and evaluations in a unified UI/API

- LangSmith online evaluators documentation — trace preservation and pricing impact of evaluation activity

- LangSmith pricing FAQ — confirms existing users became billable in July 2024

- Arize Phoenix data retention documentation — indefinite default retention in self-hosted mode

- RAGAS documentation — library-first framing; "vibe checks to systematic evaluation loops"

- DeepEval documentation — open-source LLM evaluation framework with retriever metrics

- Promptfoo documentation — prompt-level testing and red-teaming

- arXiv:2504.14891 — RAG evaluation survey — enterprise RAG evaluation dimensions, high costs of data construction

Keywords: Vectara Open RAG Eval, RAGAS, TruLens, DeepEval, LangSmith, Arize Phoenix, Langfuse, Braintrust, Promptfoo, Claude 3.5 Sonnet, OpenAI GPT-4o, NVIDIA H100, RAG CI pipeline, golden sets, LLM judge