Why reference-free RAG evaluation matters now

Curating golden answers at scale breaks production evaluation cycles. The traditional path — write a question, write the canonical answer, store the authoritative supporting chunks, run your RAG system, score the delta — collapses under real-world query volumes. A corpus of ten thousand production questions needs ten thousand human-verified answers before a single metric fires, which means teams either under-test or run on stale golden sets that no longer reflect the retrieval index.

Open RAG Eval, released by Vectara under Apache 2.0, sidesteps this bottleneck. Its README is direct: "The core metrics (UMBRELA, AutoNuggetizer) do not require golden chunks or golden answers, making RAG evaluation easy and scalable." The toolkit implements the metrics used in the TREC 2024 RAG Track, adds optional hallucination scoring via the vectara/hallucination_evaluation_model or the commercial Vectara API, and ships with connectors for reporting and visualization.

Bottom Line: Open RAG Eval makes large-scale benchmarking tractable by eliminating the gold-label requirement, but that scalability comes at a defined cost — UMBRELA and AutoNuggetizer are proxy metrics optimized for repeatability and comparative ranking, not proofs of factual correctness. Teams that treat a high UMBRELA score as evidence their system is factually accurate are misreading what the metric can guarantee.

How Open RAG Eval fits the benchmark lineage

RAG evaluation has a longer lineage than the current tooling ecosystem suggests. The TREC 2024 RAG Track paper (arxiv:2411.09607) frames the problem plainly: "We have identified RAG evaluation as a barrier to continued progress in information access (and more broadly, natural language processing and artificial intelligence)." That paper introduced the nugget-evaluation methodology as a path forward, and Open RAG Eval operationalizes that methodology in a pip-installable toolkit.

Positioning Open RAG Eval inside the broader evaluation ecosystem clarifies what it is and what it is not:

| Era / System | Scoring method | Requires gold labels? | Primary goal |
|---|---|---|---|
| Classic QA benchmarks (SQuAD, NQ) | Exact match / F1 vs. gold answer | Yes (gold answers required) | Measure reading comprehension on fixed datasets |
| TREC AutoJudge (pre-2024) | LLM judge replaces manual relevance assessors | Partially (pooled judgments) | Scale relevance assessment for ad-hoc IR |
| TREC 2024 RAG Track | Nugget extraction + automated scoring | No gold answers; topics only | Benchmark RAG systems at track scale |
| Open RAG Eval | UMBRELA + AutoNuggetizer + hallucination score | No gold chunks or gold answers | Portable, repeatable RAG benchmarking |
| RAGAS / TruLens / DeepEval | LLM-judge triad (relevance, groundedness, answer relevance) | No gold answers; uses LLM self-evaluation | Production observability and regression detection |

Pro Tip: Benchmark scoring and production diagnosis are not the same problem. TREC-style metrics tell you how a system ranks against other systems on a shared topic set; production harnesses tell you why a specific query failed. Open RAG Eval is the former. Use it for comparative benchmarking and regression gates, not as the primary instrument for root-cause analysis on a live system.

Why golden answers became the bottleneck

Gold-answer evaluation requires someone — a human, or a trusted model whose outputs are treated as ground truth — to write the authoritative response to every query before evaluation can proceed. At benchmark scale that means thousands of answers. At production scale it means continuously updating those answers as the retrieval corpus changes.

The three dominant evaluation paradigms, compared on their core constraint:

| Evaluation mode | What you must provide | Scalability | Factual-correctness signal |
|---|---|---|---|
| Gold-answer | Query + canonical answer | Low (linear annotation cost) | Strong, if annotations are fresh |
| Gold-chunk | Query + authoritative source passages | Medium (less annotation than full answers) | Partial (retrieval correctness, not answer correctness) |
| Reference-free (UMBRELA, AutoNuggetizer) | Query + retrieved passages + generated answer | High (no human annotation required) | Proxy only (judge-model dependent) |

Open RAG Eval eliminates the annotation step entirely for its core metrics. That decision enables continuous evaluation in CI pipelines, but it also means the correctness signal is indirect. Reference-free methods should be treated as proxy metrics, not truth labels.

What TREC-RAG changed about evaluation

The TREC 2024 RAG Track's initial nugget evaluation covered 45 submitted runs across 21 topics and produced a result that validated the automated nugget approach: "Based on initial results across 21 topics from 45 runs, we observe a strong correlation between scores derived from a fully automatic nugget evaluation and a (mostly) manual nugget evaluation by human assessors." (arxiv:2411.09607)

That correlation result gave automated nugget scoring its credibility as a benchmark instrument. UMBRELA and AutoNuggetizer in Open RAG Eval inherit that lineage directly.

Pro Tip: Strong correlation between automated and human scores on 21 TREC topics does not mean the correlation holds in your domain. TREC topics are carefully selected for coverage and ambiguity balance. Proprietary corpora with narrow technical vocabulary, strong temporal sensitivity, or significant jargon drift can weaken the automated-human correlation substantially. Treat the TREC correlation number as an upper-bound estimate until you measure it on your own data.

What UMBRELA measures and what it does not

UMBRELA is a judge-model-driven relevance assessor: an open-source reproduction of the LLM-based relevance assessor used at Bing. Given a query and a retrieved passage, UMBRELA prompts a language model judge to grade how relevant the passage is to the query, without consulting any pre-defined gold answer. In Open RAG Eval it is the core retrieval-side metric, and alongside AutoNuggetizer it is one of the two pillars of TREC 2024 RAG evaluation.

The README states: "The core metrics (UMBRELA, AutoNuggetizer) do not require golden chunks or golden answers, making RAG evaluation easy and scalable." (Open RAG Eval repository)

| Metric | Inputs required | Judge model needed | What it proxies | What it cannot verify |
|---|---|---|---|---|
| UMBRELA | Query + retrieved passages | Yes (OpenAI API by default) | Relevance of each retrieved passage to the query | Factual correctness of the passages, or whether the answer uses them faithfully |
| AutoNuggetizer | Query + retrieved passages + generated answer | Yes | Information completeness at nugget level | Whether extracted nuggets are factually accurate |
| Hallucination score (HHEM) | Generated answer + source passages | No LLM judge (HHEM model run locally, or the Vectara API) | Factual consistency between answer and passages | Whether the passages themselves are correct |
| TruLens RAG Triad | Query + context + answer | Yes (LLM judge) | Relevance, groundedness, answer relevance | External factual correctness |

UMBRELA does not require golden chunks or golden answers, which makes it deployable at scale, but its scores shift with judge model version and prompt wording. That dependence is explicit in the repository notes and launch materials (Open RAG Eval repository notes, Vectara launch post).

Input signals, judge model, and scoring shape

Each UMBRELA judgment ingests two signals: the original query and a single retrieved passage. The judge model (OpenAI's API by default, per the Open RAG Eval repository) grades how relevant that passage is to the query on the 0–3 scale inherited from the original UMBRELA formulation, and the per-passage grades can then be aggregated across the retrieved set. The score shape is a relevance judgment, not a factual audit.
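To make that scoring shape concrete, here is a minimal sketch of an UMBRELA-style passage grader. It assumes the 0–3 relevance scale of the original UMBRELA formulation; the prompt wording, the `judge` callable, and every helper name are hypothetical illustrations, not Open RAG Eval's API or its actual prompt. A stub judge stands in for the OpenAI call.

```python
import re
from typing import Callable, List

# Hypothetical prompt template; the real UMBRELA prompt is longer and worded differently.
PROMPT_TEMPLATE = (
    "Given a query and a passage, grade the passage's relevance on a 0-3 scale:\n"
    "0 = unrelated, 1 = related but does not answer, "
    "2 = partially answers, 3 = dedicated to the query and answers it.\n"
    "Query: {query}\nPassage: {passage}\n"
    "Respond with 'Final score: <0-3>'."
)


def build_prompt(query: str, passage: str) -> str:
    """Fill the grading template for one query-passage pair."""
    return PROMPT_TEMPLATE.format(query=query, passage=passage)


def parse_grade(raw: str) -> int:
    """Extract the integer grade from raw judge output; raise if it is missing."""
    match = re.search(r"Final score:\s*([0-3])", raw)
    if match is None:
        raise ValueError(f"unparseable judge output: {raw!r}")
    return int(match.group(1))


def grade_passages(query: str, passages: List[str],
                   judge: Callable[[str], str]) -> List[int]:
    """Grade each retrieved passage independently; no gold answer is consulted."""
    return [parse_grade(judge(build_prompt(query, p))) for p in passages]


if __name__ == "__main__":
    # Stub judge for illustration only; a real setup would call an LLM here.
    def stub_judge(prompt: str) -> str:
        return "Final score: 3" if "Apache 2.0" in prompt else "Final score: 0"

    grades = grade_passages(
        "What license is Open RAG Eval released under?",
        ["Open RAG Eval is released under Apache 2.0.", "Bananas are yellow."],
        stub_judge,
    )
    print(grades)  # [3, 0]
```

Making `parse_grade` raise on unparseable output, rather than silently defaulting to 0, keeps parser failures from masquerading as low relevance.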

From the repository notes: "OpenAI API Key: Required for the default LLM judge model used in some metrics." For hallucination detection, the repository exposes two distinct paths: an open-source path using vectara/hallucination_evaluation_model (accessed via Hugging Face token) and a commercial path using the Vectara hallucination API. These are separate connector choices, not fallbacks.

Pro Tip: Judge-based metrics correlate most reliably with human judgment when the judge model has strong domain coverage and the scoring prompt is tightly scoped. On well-represented general-knowledge queries, GPT-4-class judges tend to agree closely with human assessors. In narrow technical domains (biomedical ontology, securities regulation, firmware specifications), judge models can lack the vocabulary to distinguish plausible-sounding but wrong content from correct content. Calibrate against human review before trusting UMBRELA scores in specialized verticals.

Failure modes when UMBRELA is treated as truth

The Vectara launch post frames Open RAG Eval as offering "transparent, efficient, and flexible evaluation" (Vectara launch post), but transparency about the scoring process does not make the scores truth labels. Three failure modes are worth guarding against when UMBRELA is treated as a correctness oracle:

Hallucination pass-through. UMBRELA grades whether retrieved passages are relevant to the query, not whether those passages contain accurate information. A retriever that surfaces plausible-but-wrong documents will earn strong UMBRELA grades while the end-to-end answer is factually incorrect.

Citation-quality blindness. UMBRELA grades passages, not the generated answer, so a high UMBRELA score says nothing about whether the answer cites those passages faithfully. Attribution fidelity needs a separate check.

Prompt sensitivity. Because the judge model's behavior is shaped by its system prompt, small changes to the scoring template can shift UMBRELA scores by non-trivial margins without any change to the underlying RAG system. This makes cross-run comparisons unreliable unless the judge prompt is version-controlled as strictly as the judge model version.
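One way to enforce that discipline is to fingerprint the judge configuration and refuse to compare runs whose fingerprints differ. Here is a minimal sketch, assuming you record the judge model identifier and the exact prompt template for every run. The `JudgeConfig` fields, the function names, and the model identifier string are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class JudgeConfig:
    """Everything that can move a judge-based score without the RAG system changing."""
    model_id: str          # a pinned, dated model snapshot name
    prompt_template: str   # the exact template text, not a file path
    temperature: float = 0.0


def fingerprint(cfg: JudgeConfig) -> str:
    """Stable SHA-256 over the canonical (key-sorted) JSON form of the config."""
    canonical = json.dumps(asdict(cfg), sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def comparable(run_a: dict, run_b: dict) -> bool:
    """Two runs are comparable only if they used the same judge fingerprint."""
    return run_a["judge_fingerprint"] == run_b["judge_fingerprint"]


if __name__ == "__main__":
    base = JudgeConfig("judge-model-2024-08-06", "Grade the passage 0-3 ...")
    tweaked = JudgeConfig("judge-model-2024-08-06", "Grade the passage from 0 to 3 ...")
    # A wording change alone yields a new fingerprint, i.e. a new judge.
    print(fingerprint(base) == fingerprint(tweaked))  # False
```

Hashing the template text itself, rather than a file name or version tag, means that even a silent one-word edit to the prompt breaks comparability.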

Watch Out: Never promote a RAG configuration to production based solely on UMBRELA improvement. A score increase may reflect judge-prompt drift or retrieval-index changes that happen to align with the judge's priors rather than better answers. Also, because UMBRELA grades passages, it cannot tell you whether the generator actually used them well. UMBRELA is a comparison instrument, not a deployment gate.

How AutoNuggetizer supports scalable nugget-level evaluation

AutoNuggetizer evaluates RAG output at the information-unit level rather than the whole-answer level. A "nugget" in TREC terminology is a discrete, independently assessable factual claim that a good answer should contain. AutoNuggetizer automates the extraction of those nuggets from the retrieved passages, then scores how completely the generated answer covers the expected information units.

The TREC 2024 RAG Track paper states: "Our central hypothesis is that the nugget evaluation methodology … provides a solid foundation for evaluating RAG systems." (arxiv:2411.09607) Open RAG Eval ships AutoNuggetizer as the scalable implementation of that hypothesis, and the same paper reports strong automated-versus-human correlation across 21 topics and 45 runs (arxiv:2411.09607).

| AutoNuggetizer stage | Input | Output | Benchmark value |
|---|---|---|---|
| Nugget extraction | Retrieved passages + query | List of discrete factual claims | Defines the information space for a topic |
| Nugget coverage scoring | Generated answer + nugget list | Per-nugget coverage signal | Measures completeness of the answer |
| Aggregation | Coverage signals across nuggets | Single topic-level score | Enables cross-system ranking at benchmark scale |
| Human calibration (optional) | Human assessor judgments | Correlation coefficient vs. automated score | Validates automated nugget quality |

Because nugget extraction is automated, AutoNuggetizer scales to the full production query distribution, a task that would require thousands of annotator-hours with manual nugget creation. What separates it from an ad hoc LLM-judge setup is that the TREC 2024 pipeline was checked against human assessors across 45 runs, not simply assumed to agree with them.
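The coverage-scoring and aggregation stages can be sketched as follows. This sketch assumes the labeling scheme described for the TREC 2024 RAG Track nugget evaluation: each nugget is marked vital or okay, and each assignment is support, partial support, or not supported. The specific weights here are assumptions for illustration: partial support earns 0.5 in non-strict scoring, and okay nuggets weigh 0.5 in the weighted score. Check them against the paper and Open RAG Eval's implementation before comparing numbers. All class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List

# Assumed credit per assignment label in non-strict scoring.
PARTIAL_CREDIT = {"support": 1.0, "partial_support": 0.5, "not_support": 0.0}


@dataclass
class Nugget:
    text: str
    importance: str  # "vital" or "okay"
    assignment: str  # "support", "partial_support", or "not_support"


def credit(nugget: Nugget, strict: bool) -> float:
    """Strict scoring gives credit only for full support."""
    if strict:
        return 1.0 if nugget.assignment == "support" else 0.0
    return PARTIAL_CREDIT[nugget.assignment]


def score_all(nuggets: List[Nugget], strict: bool = False) -> float:
    """Mean credit over every nugget for one answer."""
    if not nuggets:
        return 0.0
    return sum(credit(n, strict) for n in nuggets) / len(nuggets)


def score_vital(nuggets: List[Nugget], strict: bool = False) -> float:
    """Mean credit over vital nuggets only."""
    return score_all([n for n in nuggets if n.importance == "vital"], strict)


def score_weighted(nuggets: List[Nugget], okay_weight: float = 0.5,
                   strict: bool = False) -> float:
    """Weighted mean in which an okay nugget counts okay_weight of a vital one."""
    weights = [1.0 if n.importance == "vital" else okay_weight for n in nuggets]
    total = sum(weights)
    if total == 0:
        return 0.0
    return sum(w * credit(n, strict) for w, n in zip(weights, nuggets)) / total


if __name__ == "__main__":
    answer_nuggets = [
        Nugget("released under Apache 2.0", "vital", "support"),
        Nugget("implements TREC 2024 RAG metrics", "vital", "partial_support"),
        Nugget("requires Python 3.9+", "okay", "not_support"),
        Nugget("developed by Vectara", "okay", "support"),
    ]
    print(score_all(answer_nuggets))                 # 0.625
    print(score_vital(answer_nuggets, strict=True))  # 0.5
```

In this sketch, averaging the per-answer scores across all topics would give the single run-level number used for cross-system ranking.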

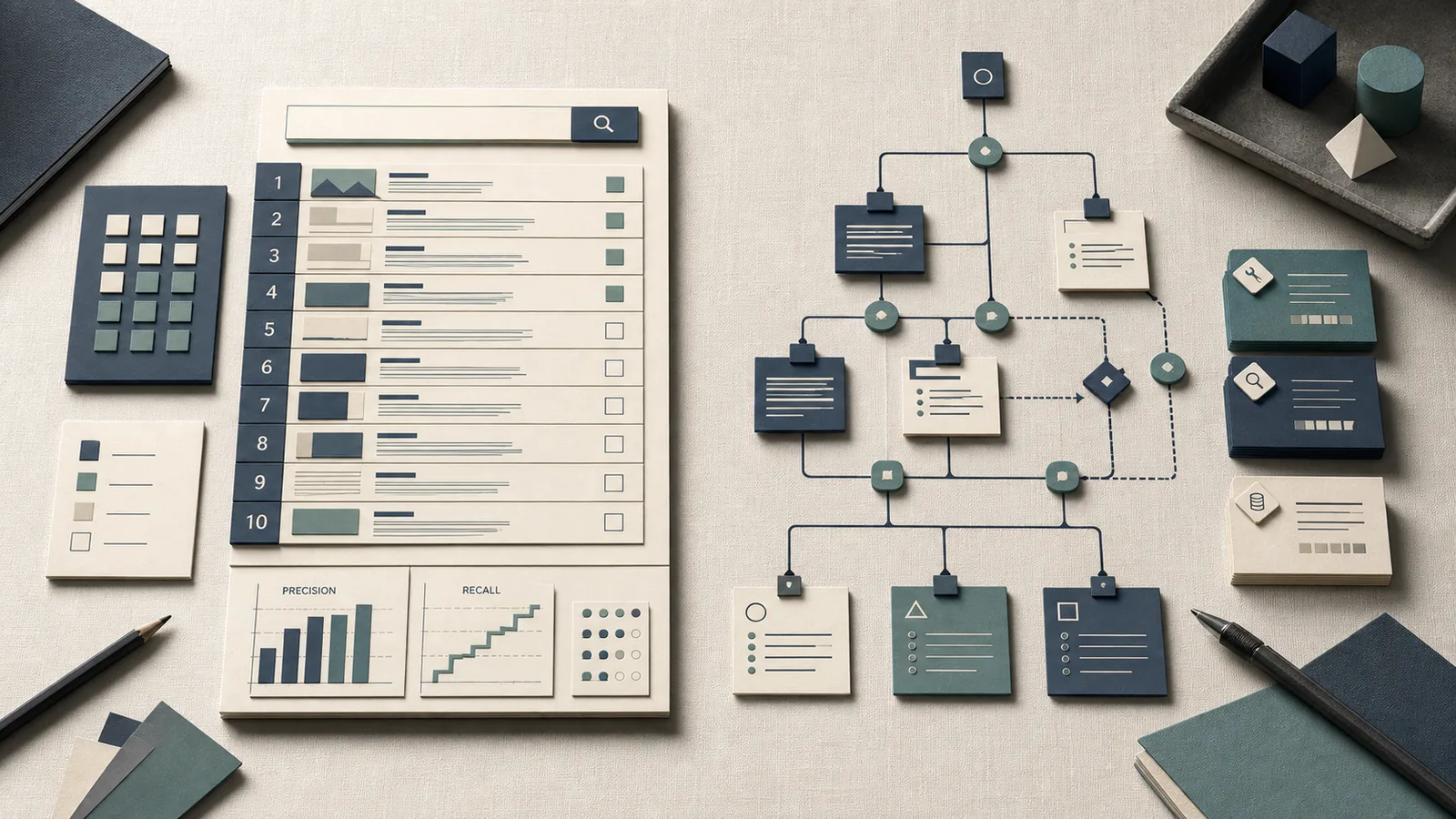

Why nuggetization helps separate retrieval from generation

Nugget-level scoring gives diagnostic resolution that whole-answer metrics cannot. If a topic has eight nuggets and the generated answer covers three, the question becomes: were the missing five nuggets present in the retrieved passages? If yes, the generator dropped information. If no, the retriever failed to surface it. That split is the diagnostic value of AutoNuggetizer.

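The TREC 2024 strong-correlation finding supports this use. Automated nugget evaluation correlated with human assessment across 21 topics from 45 runs, which suggests the nugget coverage signal can serve as a diagnostic layer, not just a ranking instrument. Per-query diagnoses still deserve spot checks against the logged passages, because a correlation measured across runs does not guarantee that every individual judgment is right.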

Pro Tip: To use AutoNuggetizer as a production diagnostic rather than just a benchmark score, log the nugget-level coverage breakdown per query rather than only the aggregated score. A batch where 90% of uncovered nuggets are missing from retrieved passages points to a retrieval problem — chunking strategy, embedding model, or top-k cutoff. A batch where 90% of uncovered nuggets appear in retrieved passages but not in the generated answer points to a generator issue — context-window utilization, instruction-following, or summarization compression.
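The per-query split and the batch-level breakdown described above can be sketched together. This sketch assumes you already have, for each uncovered nugget, a boolean indicating whether its content appears in the retrieved passages (for example, from a second judge pass over the passages). The record layout, labels, and default 0.9 threshold are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

# Hypothetical record: (query_id, nugget_text, present_in_retrieved_passages)
UncoveredNugget = Tuple[str, str, bool]


def classify(present_in_passages: bool) -> str:
    """An uncovered nugget that was retrieved was dropped by the generator."""
    return "generation_drop" if present_in_passages else "retrieval_miss"


def batch_breakdown(uncovered: Iterable[UncoveredNugget]) -> Dict[str, float]:
    """Share of uncovered nuggets attributable to retrieval vs. generation."""
    counts = Counter(classify(present) for _, _, present in uncovered)
    total = sum(counts.values())
    labels = ("retrieval_miss", "generation_drop")
    if total == 0:
        return {label: 0.0 for label in labels}
    return {label: counts[label] / total for label in labels}


def diagnose(breakdown: Dict[str, float], threshold: float = 0.9) -> str:
    """Name the dominant failure layer, or report a mixed batch."""
    if breakdown["retrieval_miss"] >= threshold:
        return "retrieval: check chunking, embedding model, top-k cutoff"
    if breakdown["generation_drop"] >= threshold:
        return "generation: check context use, instruction-following, compression"
    return "mixed: inspect per-query nugget logs"
```

Keeping the per-nugget records, rather than only the shares, lets you drill from a "retrieval" diagnosis back to the specific queries and nuggets that drove it.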

Where nugget scoring can still mislead teams

AutoNuggetizer's reference-free design means the nuggets themselves are extracted by a language model rather than defined by a domain expert. Three failure modes follow directly from that design choice.

Proxy drift. When the judge model used for nugget extraction is updated, the nugget set for a fixed query can change, making scores from different evaluation runs non-comparable even when the RAG system is identical. Open RAG Eval's reference-free core enables scale but does not eliminate this drift.

Annotation leakage. If the language model used for nugget extraction was trained on data that overlaps with the evaluation topics, the nuggets it extracts may reflect training-set priors rather than the actual information needs of the query. This inflates scores on topics the model has seen and deflates them on genuinely novel queries.

Judge bias toward surface overlap. Nugget coverage scoring rewards answers that use language similar to the source passages. A generator that paraphrases extensively (which may be necessary for readability) can receive lower coverage scores than one that copies passage text verbatim, even when the paraphrase is more accurate.
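Proxy drift, in particular, can be measured directly. Re-run nugget extraction for a fixed set of queries under the old and new judge, then compare the resulting nugget sets. The sketch below compares sets after simple normalization (lowercasing and collapsing whitespace). Because real nugget text varies in wording, exact matching understates overlap, and a semantic-similarity match would be a stronger but heavier choice. The function names and the 0.8 overlap threshold are hypothetical.

```python
from typing import Dict, List, Set


def normalize(nugget: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not counted as drift."""
    return " ".join(nugget.lower().split())


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Set overlap in [0, 1]; two empty sets count as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def nugget_drift(old: Dict[str, List[str]], new: Dict[str, List[str]],
                 min_overlap: float = 0.8) -> Dict[str, float]:
    """Return the queries (and their overlap) whose nugget sets changed too much."""
    drifted = {}
    for qid in old.keys() & new.keys():
        overlap = jaccard({normalize(n) for n in old[qid]},
                          {normalize(n) for n in new[qid]})
        if overlap < min_overlap:
            drifted[qid] = overlap
    return drifted
```

A non-empty result after a judge upgrade indicates that scores from before and after the upgrade should not be compared directly for the affected queries.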

Watch Out: Reference-free evaluation with AutoNuggetizer is reliable only to the extent that its proxies correlate with the target quality dimension in the domain being measured. On broad, factual queries with well-represented topics, the TREC correlation result applies reasonably well. On narrow-domain, temporally sensitive, or multi-hop reasoning queries, proxy drift and judge bias can make AutoNuggetizer scores actively misleading. Maintain a small human-verified calibration set and measure automated-human correlation on your own query distribution before relying on nugget scores as a regression gate.
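Measuring that correlation on your own calibration set takes little machinery. A common way to quantify agreement between two rankings of systems or configurations is Kendall's tau. The sketch below computes tau-a over paired scores in pure Python. It applies no tie correction, which is a simplification; in practice `scipy.stats.kendalltau` (tau-b, with tie handling) is the usual choice. The toy scores and the 0.7 gate threshold are hypothetical, not recommendations from the TREC paper.

```python
from itertools import combinations
from typing import Sequence


def kendall_tau_a(x: Sequence[float], y: Sequence[float]) -> float:
    """Kendall's tau-a: (concordant - discordant) / total pairs; ties count as neither."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    concordant = discordant = 0
    for i, j in combinations(range(len(x)), 2):
        sign = (x[i] - x[j]) * (y[i] - y[j])
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    n_pairs = len(x) * (len(x) - 1) // 2
    return (concordant - discordant) / n_pairs


def calibration_ok(automated: Sequence[float], human: Sequence[float],
                   min_tau: float = 0.7) -> bool:
    """Hypothetical gate: trust automated scores only if the rankings agree enough."""
    return kendall_tau_a(automated, human) >= min_tau


if __name__ == "__main__":
    # Toy per-configuration mean scores, for illustration only.
    automated = [0.62, 0.55, 0.48, 0.30]
    human = [0.70, 0.50, 0.52, 0.25]
    print(round(kendall_tau_a(automated, human), 3))  # 0.667
    print(calibration_ok(automated, human))           # False
```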

Open RAG Eval versus RAGAS, TruLens, and DIY eval stacks

Open RAG Eval, RAGAS, and TruLens address related but distinct problems. Open RAG Eval is a benchmark-oriented toolkit — it implements research-grade metrics from the TREC lineage and is designed for comparative system evaluation. RAGAS and TruLens are production observability frameworks that instrument live applications with continuous evaluation feedback.

| Tool | Primary orientation | Reference-free? | Human-eval support | Best fit |
|---|---|---|---|---|
| Open RAG Eval | Benchmark / comparative scoring | Yes (UMBRELA, AutoNuggetizer) | Optional | System comparison, CI regression gates |
| RAGAS | Production eval / metric library | Yes (LLM-as-judge) | Limited | Metric-level regression in dev/test |
| TruLens | Production tracing + eval | Yes (RAG Triad) | Feedback functions | App-level observability in staging/prod |
| DeepEval | Pytest-native CI eval | Yes (LLM-as-judge) | Dataset management | CI-integrated test suite with pass/fail thresholds |
| DIY stack | Custom | Depends | Fully custom | Teams with specific domain metrics not covered above |

| Tool | Choose it when | Signals to prioritize | Trade-off |
|---|---|---|---|
| Open RAG Eval | You need TREC-style comparative scoring, repeatable offline benchmarking, or a gold-free regression gate aligned with the 2024 RAG Track | UMBRELA, AutoNuggetizer, topic-level ranking | Less direct root-cause visibility than tracing tools |
| RAGAS | You want a metric library that you can embed into a custom harness with minimal infrastructure overhead | Context relevance, faithfulness, answer relevance | You must build the orchestration and logging yourself |
| TruLens | You need live trace inspection, app-level observability, and feedback functions across retrieval and tool use | Context relevance, groundedness, answer relevance, traces | Less aligned to benchmark-style offline comparison |
| DIY | You need domain-specific metrics, custom labels, or internal governance requirements not covered by packaged tools | Bespoke annotations, business KPIs, domain heuristics | Highest maintenance burden and lowest portability |
| Open RAG Eval as a production regression gate | You want a repeatable CI gate that compares runs against a fixed topic set and surfaces score drift before release | Stable judge model, frozen prompts, run-to-run deltas | Requires disciplined versioning of topics, prompts, and connectors |

TruLens defines its core evaluation unit as the RAG triad: "context relevance, groundedness and answer relevance", with the claim that "satisfactory evaluations on each provides us confidence that our LLM app is free from hallucination." (TruLens docs) That framing is production-observability-first; the triad fires on every query in a live trace, not on a curated benchmark topic set.

Open RAG Eval occupies a different position: it is the right tool when you need to compare multiple RAG configurations on a common topic set, reproduce TREC-RAG numbers, or run a structured offline evaluation sweep before promoting a configuration to production.

When a judge-driven stack is the right choice

Judge-driven evaluation stacks — whether Open RAG Eval, DeepEval, or TruLens — are appropriate when you need repeatable, automated scoring that does not require a human in the loop for every run.

| Choice | Use it when | Why it fits |
|---|---|---|
| Open RAG Eval | The goal is comparative benchmarking across RAG system variants, or you want to align with the TREC 2024 RAG Track methodology for reproducibility | It is the most research-aligned option and gives benchmark-style scores without gold answers |
| DeepEval | You need pytest-native pass/fail thresholds integrated into CI | Its test-assertion model maps directly to standard software QA workflows and supports G-Eval, DAG-based metrics, and dataset regression |
| TruLens | You need continuous eval instrumentation in a live application | TruLens documents evaluation of "retrieved context, tool calls, plans, and more" as part of the app execution flow, which Open RAG Eval does not provide |
| RAGAS | You need a metric library with minimal infrastructure overhead and are comfortable composing your own eval harness around individual metric functions | It is lightweight and composable for dev/test metric composition |
| Any judge-driven stack with trace logging | You need fast regression testing in a production RAG system, with a CI gate that logs query, retrieved passages, generated answer, and judge verdict so regressions surface before promotion | Logging at sufficient granularity gives you both the score and the evidence needed to debug a failure |


When to keep golden sets anyway

Automated evaluation cannot prove factual correctness across all domains and queries. TruLens frames its RAG triad as confidence-building rather than certainty-proving — "satisfactory evaluations on each provides us confidence that our LLM app is free from hallucination" — and that framing is accurate: automated scores are probabilistic quality indicators, not guarantees.

Watch Out: Discontinuing a golden evaluation set entirely because reference-free metrics are available is a mistake with a predictable failure mode. Automated scores can drift silently when the judge model is updated, when the retrieval index changes character, or when the generator shifts its output distribution. A small human-verified set — 50 to 200 queries with expert-reviewed answers — gives you a calibration signal that catches metric drift before it becomes a production incident. Retain the golden set; run it on every major model update and every index rebuild.

The same hybrid approach applies whichever automated layer you run, whether Open RAG Eval, RAGAS, or TruLens. Automated metrics handle the volume, and human-verified examples handle the calibration.

Reproducing Open RAG Eval in a production-like setup

Open RAG Eval is pip-installable and requires Python 3.9+, an OpenAI API key for the default judge-driven metrics, and a connector choice for hallucination evaluation. The supported setup and version constraints are documented in the repository notes (Open RAG Eval repository notes).

Minimal setup requirements and connector choices

The repository is explicit about prerequisites. Python 3.9 or higher is required. The default LLM judge path requires an OpenAI API key. Hallucination detection runs on one of two paths: the open-source HHEM path using vectara/hallucination_evaluation_model on Hugging Face (requires a Hugging Face token), or the commercial Vectara hallucination API (requires Vectara API credentials).

$ pip install open-rag-eval

For development or contribution, install from source:

$ git clone https://github.com/vectara/open-rag-eval.git
$ cd open-rag-eval
$ pip install -e .

Production Note: The open-source hallucination path (vectara/hallucination_evaluation_model via Hugging Face) and the commercial Vectara API path are distinct connector choices with different latency and cost profiles. The HHEM model runs locally with no per-call fee, but it consumes local compute and memory, and a GPU helps at evaluation-scale throughput. The Vectara API path is managed and requires a paid API key, but it adds no local hardware dependency. Choose based on your infrastructure constraints, not on which is easier to configure initially, because swapping connectors later requires updating your evaluation pipeline configuration.
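Because the connector is a deliberate configuration choice, a preflight check that fails fast when the chosen path lacks credentials can prevent a wasted evaluation run. In the minimal sketch below, the connector identifiers and the `VECTARA_API_KEY` variable name are hypothetical placeholders, and Open RAG Eval's own configuration keys may differ. `OPENAI_API_KEY` and `HF_TOKEN` are the conventional environment variables read by the OpenAI and Hugging Face clients.

```python
import os
from typing import List, Mapping, Optional

# Hypothetical connector identifiers mapped to the credentials each path needs.
REQUIRED_ENV = {
    "hhem_open_source": ["HF_TOKEN"],    # vectara/hallucination_evaluation_model via Hugging Face
    "vectara_api": ["VECTARA_API_KEY"],  # hypothetical variable name for the commercial API
}
JUDGE_ENV = ["OPENAI_API_KEY"]           # default LLM judge path


def missing_credentials(connector: str,
                        env: Optional[Mapping[str, str]] = None) -> List[str]:
    """List credentials missing for the judge plus the chosen hallucination connector."""
    env = os.environ if env is None else env
    if connector not in REQUIRED_ENV:
        raise ValueError(f"unknown connector {connector!r}; choose one of {sorted(REQUIRED_ENV)}")
    needed = JUDGE_ENV + REQUIRED_ENV[connector]
    return [name for name in needed if not env.get(name)]


if __name__ == "__main__":
    # With an empty environment, both the judge key and the HHEM token are reported.
    print(missing_credentials("hhem_open_source", env={}))  # ['OPENAI_API_KEY', 'HF_TOKEN']
```

Running this check at the start of a CI job, and aborting when the list is non-empty, surfaces configuration errors before any judge calls are spent.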

What to log so the scores stay diagnosable

Benchmark scores become useless for debugging the moment you lose the evidence trail that produced them. Open RAG Eval computes scores from the query, retrieved passages, generated answer, and judge outputs — all of which should be captured in a structured log alongside the final metric values.

Pro Tip: Log five fields for every evaluated query: (1) the verbatim query, (2) the retrieved passages with their source identifiers and retrieval scores, (3) the exact prompt sent to the judge model, (4) the raw judge output before score extraction, and (5) the final metric values. Without the judge prompt and raw output, a score regression is nearly impossible to attribute — you cannot determine whether the system changed, the judge model changed, or the prompt template changed. Store these logs with a run identifier and the judge model version so post-hoc audit is possible.
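Here is a minimal sketch of such a record, written as one JSON line per evaluated query. The field names and the JSON Lines layout are hypothetical choices, not an Open RAG Eval output format. The point is that every score travels with the evidence that produced it, tagged with the run identifier and judge model version.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class RetrievedPassage:
    source_id: str
    text: str
    retrieval_score: float


@dataclass
class EvalRecord:
    run_id: str
    judge_model: str                    # exact judge model version string
    query: str                          # (1) verbatim query
    passages: List[RetrievedPassage]    # (2) passages with source ids and retrieval scores
    judge_prompt: str                   # (3) exact prompt sent to the judge
    judge_raw_output: str               # (4) raw judge output before score extraction
    metrics: Dict[str, float] = field(default_factory=dict)  # (5) final metric values


def to_jsonl_line(record: EvalRecord) -> str:
    """Serialize one record as a single JSON line; asdict recurses into nested dataclasses."""
    return json.dumps(asdict(record), ensure_ascii=False, sort_keys=True)


def append_record(path: str, record: EvalRecord) -> None:
    """Append-only log, so earlier runs stay auditable."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(to_jsonl_line(record) + "\n")
```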

Limitations and caveats for practitioners

Open RAG Eval is transparent about its scope: UMBRELA and AutoNuggetizer are reference-free proxies, not ground-truth validators. The Vectara launch post accurately describes the framework as offering "transparent, efficient, and flexible evaluation" (Vectara launch post) — efficient precisely because it avoids the annotation cost of gold-label creation.

Watch Out: Reference-free metrics are proxies for evaluation scalability, not proofs of factual correctness. A RAG system that scores well on UMBRELA and AutoNuggetizer may still hallucinate on queries outside the benchmark topic distribution, fail on temporally sensitive questions where the judge model lacks recency, or produce citation artifacts the metrics cannot detect. These are not bugs in Open RAG Eval — they are structural properties of proxy evaluation. Use the scores to rank configurations and catch regressions; validate factual correctness through a separate, human-calibrated process.

Correlation with human judgment is context-dependent

The strongest evidence for automated RAG evaluation comes from the TREC 2024 RAG Track, which found strong correlation between fully automatic and mostly manual nugget evaluation across 21 topics and 45 runs (arxiv:2411.09607). That result is meaningful, but its generalization boundary matters. Only the first row of the table below reflects a measured result. The remaining rows are qualitative expectations based on the failure modes described above and should be confirmed on your own data.

| Context | Expected automated-human correlation | Key risk factor |
|---|---|---|
| Broad factual queries, TREC-style topics | Strong (TREC 2024 paper result) | Limited; the methodology is well-calibrated here |
| General knowledge QA, well-represented domains | Moderate to strong | Judge model must have domain coverage |
| Narrow technical domains (biomedical, legal, firmware) | Weak to moderate | Judge model vocabulary mismatch |
| Temporally sensitive queries (recent events, regulatory changes) | Weak | Training cutoff effects in judge model |
| Multi-hop reasoning queries | Weak | Nugget extraction misses implicit inference chains |
| Ambiguous or multi-intent queries | Moderate | Nugget coverage scoring underestimates valid partial answers |

How to combine automated and human evaluation

The right architecture for production RAG evaluation combines automated scoring for volume with human review for calibration and escalation.

| Review trigger | Condition | Recommended action | Frequency |
|---|---|---|---|
| Automated regression on the benchmark set | UMBRELA or AutoNuggetizer drops below the release threshold | Block promotion and inspect judge logs before merge | Every CI run |
| Calibration divergence | Automated and human scores diverge on the 50–200 query calibration set | Re-calibrate thresholds and review the judge prompt/template | Per model update |
| New query category | A new topic bucket moves into the top-10 by volume | Add representative examples to the calibration set | Monthly |
| Complaint cluster | Users report a concentrated failure pattern on a query bucket | Escalate to a manual audit of the affected bucket | On-demand |
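The first trigger, blocking promotion on a benchmark regression, can be sketched as a small CI check. This sketch assumes that each run produces per-metric scores on the same frozen topic set and records an identifier for the judge configuration. Following the prompt-sensitivity caveat above, it refuses to compare runs whose judge configurations differ. The metric names, tolerance, and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RunSummary:
    judge_fingerprint: str    # identifies judge model + prompt version
    topic_set_id: str         # identifies the frozen benchmark topic set
    scores: Dict[str, float]  # e.g. {"umbrela": ..., "nugget_vital": ...}


def regression_gate(baseline: RunSummary, candidate: RunSummary,
                    max_drop: float = 0.02) -> List[str]:
    """Return blocking reasons; an empty list means the candidate may be promoted."""
    if baseline.judge_fingerprint != candidate.judge_fingerprint:
        return ["judge configuration changed: re-baseline before comparing"]
    if baseline.topic_set_id != candidate.topic_set_id:
        return ["topic set changed: scores are not comparable"]
    reasons = []
    for metric, base_value in baseline.scores.items():
        if metric not in candidate.scores:
            reasons.append(f"{metric}: missing from candidate run")
        elif base_value - candidate.scores[metric] > max_drop:
            drop = base_value - candidate.scores[metric]
            reasons.append(f"{metric}: dropped {drop:.3f} (limit {max_drop})")
    return reasons


if __name__ == "__main__":
    base = RunSummary("fp1", "topics-v3", {"umbrela": 0.71, "nugget_vital": 0.55})
    cand = RunSummary("fp1", "topics-v3", {"umbrela": 0.72, "nugget_vital": 0.50})
    print(regression_gate(base, cand))  # ['nugget_vital: dropped 0.050 (limit 0.02)']
```

Returning reasons instead of a bare boolean means that a CI failure already points at the metric to investigate in the judge logs.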

FAQ

What is Open RAG Eval?

Open RAG Eval is an open-source Python toolkit (Apache 2.0, Python 3.9+) developed by Vectara for evaluating RAG systems. Its core metrics — UMBRELA and AutoNuggetizer — implement the methodology from the TREC 2024 RAG Track and require no golden chunks or golden answers, making large-scale automated evaluation practical.

How do you evaluate a RAG system without golden answers?

Reference-free evaluation replaces human-curated gold labels with a language model judge that scores system outputs against the original query and the retrieved passages. UMBRELA applies this to retrieval, grading each retrieved passage's relevance to the query. AutoNuggetizer applies it to generation, extracting nuggets from the passages and checking how completely the generated answer covers them. The trade-off is that the resulting scores are proxies tied to the judge model's quality and domain coverage, not direct factual-correctness measurements.

What is the difference between UMBRELA and AutoNuggetizer?

UMBRELA evaluates retrieval: it judges how relevant each retrieved passage is to the query. AutoNuggetizer evaluates generation at the information-unit level: it extracts discrete factual claims (nuggets) from the retrieved passages and measures how completely the generated answer covers them. UMBRELA tells you whether retrieval surfaced useful material, while AutoNuggetizer provides finer diagnostic resolution on the answer itself.

Is reference-free RAG evaluation reliable?

Reliable for comparative ranking and regression detection under stable judge configurations — the TREC 2024 RAG Track demonstrated strong automated-human correlation on 21 topics. Not reliable as a substitute for human-verified factual correctness, particularly in narrow technical domains, on temporally sensitive queries, or when judge models are updated without recalibration.

How does Open RAG Eval compare to RAGAS or TruLens?

Open RAG Eval is benchmark-oriented: it implements TREC 2024 RAG Track metrics for comparative system evaluation. RAGAS is a metric library for composing eval functions into custom harnesses. TruLens is a production observability framework that instruments live application traces with continuous evaluation. The tools are complementary rather than competitive — Open RAG Eval for offline benchmarking, TruLens for production tracing, RAGAS for metric composition.

Sources and references

- Open RAG Eval — GitHub Repository — Canonical implementation reference; source for UMBRELA, AutoNuggetizer, and connector documentation

- Introducing Open RAG Eval — Vectara Launch Post — Framework framing and design intent from Vectara

- Initial Nugget Evaluation Results for the TREC 2024 RAG Track with the AutoNuggetizer Framework — Primary research source; reports 21-topic, 45-run correlation results for automated vs. manual nugget evaluation

- TruLens RAG Triad Documentation — Source for RAG triad definitions (context relevance, groundedness, answer relevance)

- TruLens Homepage — Source for production tracing and agent evaluation framing

Keywords: Open RAG Eval, UMBRELA, AutoNuggetizer, reference-free RAG evaluation, TREC 2024 RAG Track, TREC AutoJudge, RAG benchmark lineage, judge-based metrics, RAGAS, TruLens, DeepEval, hallucination evaluation, Vectara, hallucination_evaluation_model, Hugging Face, OpenAI API, Python 3.9+, nugget evaluation, proxy evaluation metrics, RAG system quality