How we compared KeyDiff, H2O, and StreamingLLM

At a Glance: This comparison covers three inference-time KV cache policies for long-context serving: KeyDiff (key-space diversity), H2O (heavy-hitter retention), and StreamingLLM (sink tokens plus a fixed recent window). The practical trade-offs show up in memory ceiling, retrieval fidelity, and selection overhead across stacks built on vLLM, SGLang, PagedAttention, and FlashAttention on hardware like the NVIDIA H100.

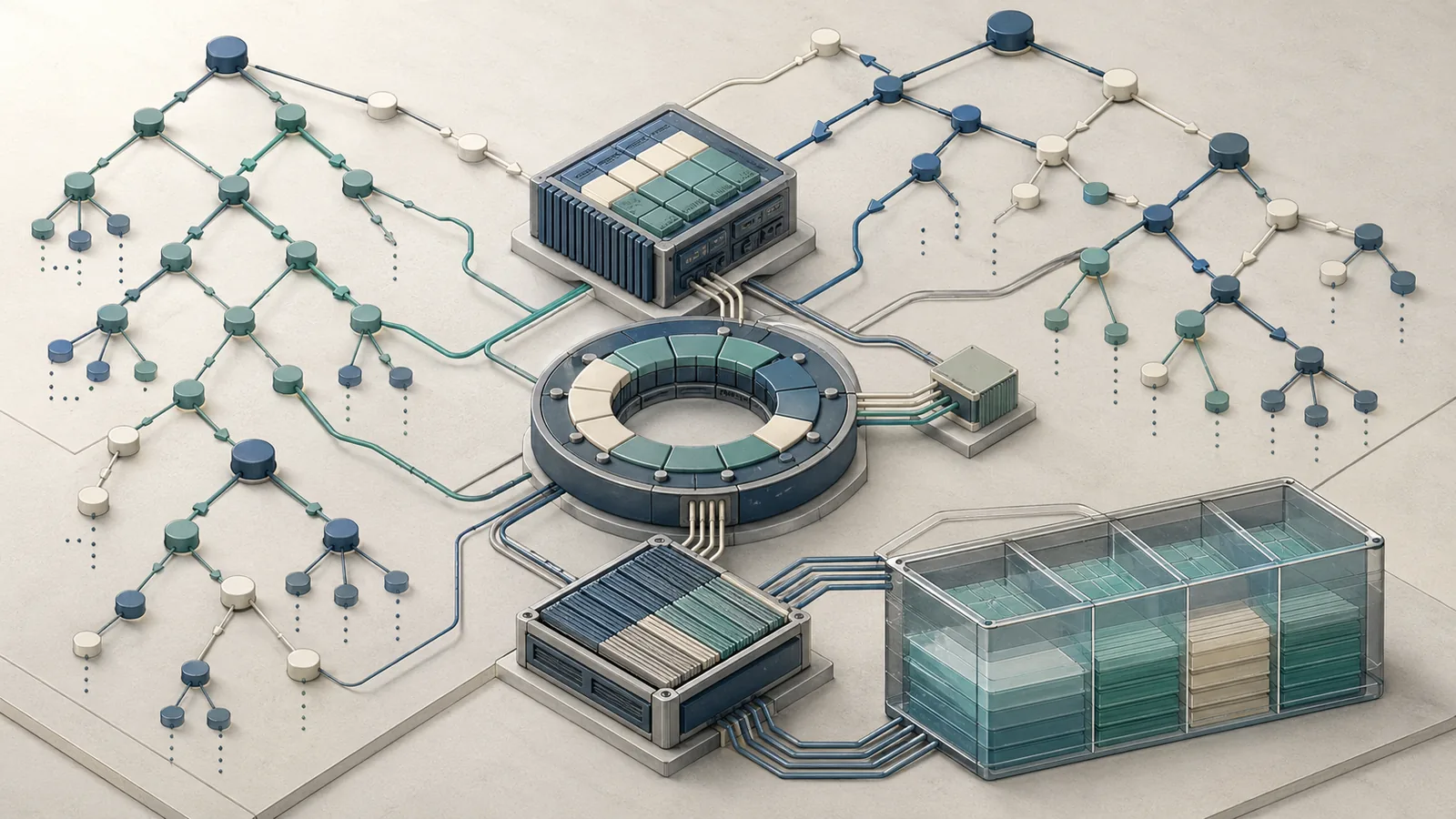

KV cache eviction is the practice of selectively discarding key-value pairs from the transformer attention cache before the cache exhausts available HBM, so that long-context inference can continue without recomputation or OOM failure. Each policy answers the same question differently: which tokens are safe to drop? KeyDiff evicts tokens whose keys are redundant — measured by pairwise cosine similarity — keeping the cache maximally diverse. H2O scores tokens by accumulated attention weight ("heavy hitters") and retains the ones the model has most attended to, discarding the rest. StreamingLLM anchors on a small set of attention-sink tokens at the sequence start and appends a fixed recent window, discarding everything in between.

These are not interchangeable compressions of the same idea. Each policy makes a different bet on what the model needs, and each fails in different regimes.

| Property | KeyDiff | H2O | StreamingLLM |
|---|---|---|---|
| Eviction signal | Key cosine similarity | Accumulated attention score | Positional (sink + window) |
| Training required? | No | No | No (sink token optional at pre-train) |
| Handles arbitrary prompt length | Yes (paper claim) | Yes | Yes |
| Preserves mid-context tokens | Diversity-driven | Score-driven | No (gap is dropped) |
| Primary accuracy claim | 1.5% / 0.04% drop vs baseline | Not verified in this fact set | Stable generation, not retrieval |

Comparison criteria that matter in serving

Three criteria separate a useful eviction policy from a dangerous one in production: memory ceiling (how aggressively you can compress the cache without OOM), accuracy retention (how much the evicted cache degrades downstream task quality vs full-cache baseline), and latency impact (does the eviction computation add to TTFT or per-token decode time).

KeyDiff's selection criterion is similarity-based: it minimises pairwise cosine similarity among retained keys, which maximises diversity. As the KeyDiff abstract states, "We justify KEYDIFF by showing that it minimizes the pairwise cosine similarity among keys in the KV cache, maximizing the aforementioned diversity." This makes eviction cost a function of cache size: you pay a similarity-computation overhead in exchange for better retention fidelity. H2O's selection is based on score aggregation. It accumulates attention weights across decoding steps, so the eviction signal sharpens as generation proceeds but is noisier during prefill-heavy workloads. StreamingLLM's selection is O(1) in the sense that no per-token score is needed; its design target is streaming generation continuity, not retrieval precision.

| Criterion | KeyDiff | H2O | StreamingLLM |
|---|---|---|---|
| Memory ceiling under budget | High: arbitrarily long prompts claimed | High: score-driven budget adherence | High: fixed window enforces ceiling |
| Accuracy retention (long retrieval) | Strong (paper-reported low degradation) | Unverified in this fact set; consult the H2O paper directly | Weak: mid-context is structurally dropped |
| Eviction compute overhead | Moderate (similarity matrix) | Low-moderate (score accumulation) | Minimal (window pointer) |
| Effective for streaming dialogue | Overkill | Overkill | Purpose-built |

What search results get wrong or leave vague

Paper mirrors and topic aggregators consistently flatten these three methods into a generic bucket called "KV cache eviction." That framing is operationally useless. The KeyDiff paper is specifically distinguished as key-similarity-based eviction, not score-based or window-based — a distinction that directly predicts accuracy under long-context retrieval tasks. Aggregator summaries often label StreamingLLM as "infinite context," which misrepresents its guarantee: the paper frames it as "stable and efficient streaming language modeling" with attention sinks, not universal full-history retention. StreamingLLM does not preserve mid-sequence tokens by design.

Similarly, H2O is sometimes presented as the conservative, always-safe default. It is not universally safer — it depends on the workload. In generation tasks where attention weight concentration is a reliable signal for token importance, H2O's heavy-hitter logic works well. In tasks where important tokens receive diffuse attention (multi-hop retrieval, instruction-following across a long document), score aggregation can evict tokens that matter. When teams prototype on vLLM or SGLang, the same caveat applies whether the backend uses PagedAttention or FlashAttention kernels: serving throughput changes, but the eviction policy still determines what information survives in cache.

Pro Tip: Before picking an eviction policy, run your specific task through a full-cache baseline and measure accuracy. The eviction policy that loses least accuracy on your task is the right policy — paper rankings on one benchmark family do not transfer automatically.
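The baseline-first check in the tip above can be sketched in a few lines. This is a minimal illustration, assuming you already have per-example correctness flags from your own eval harness. The function names and the sample numbers are hypothetical and are not results from any of the three papers.

```python
def accuracy(correct_flags):
    """Fraction of eval examples answered correctly (flags are booleans)."""
    if not correct_flags:
        raise ValueError("need at least one eval example")
    return sum(correct_flags) / len(correct_flags)


def rank_by_accuracy_drop(baseline_flags, policy_flags):
    """Return (policy, drop) pairs sorted by accuracy drop vs the full-cache run.

    Drop is in percentage points: 100 * (baseline accuracy - policy accuracy).
    """
    base = accuracy(baseline_flags)
    drops = {
        name: 100.0 * (base - accuracy(flags))
        for name, flags in policy_flags.items()
    }
    return sorted(drops.items(), key=lambda item: item[1])


# Hypothetical eval results on 10 examples; replace with your own task data.
baseline = [True] * 9 + [False]
runs = {
    "policy_a": [True] * 8 + [False] * 2,
    "policy_b": [True] * 6 + [False] * 4,
}
ranking = rank_by_accuracy_drop(baseline, runs)
print(ranking)
```

The first entry in `ranking` is the policy that loses the least accuracy on this particular eval set, which is the only ranking that matters for your workload.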

At a glance: memory, latency, and accuracy trade-offs

KeyDiff reports the strongest accuracy retention of the three methods under strict cache budgets, with the KeyDiff paper claiming it "significantly outperforms state-of-the-art KV cache eviction methods under similar memory constraints, with only a 1.5% and 0.04% accuracy drop from the non-evicting baseline." StreamingLLM's headline number is a different kind of win: up to 22.2× speedup over sliding-window recomputation in streaming settings, with demonstrated stability up to 4 million tokens. H2O is marked unverified here because the accessible fact set does not surface benchmark numbers from the H2O paper, so it should be treated as a qualitative option until you inspect the primary source. In practice, teams comparing inference stacks on NVIDIA H100s often weigh those trade-offs alongside vLLM or SGLang integration effort and whether the serving path is already built around PagedAttention or FlashAttention.

| Method | Reported accuracy drop vs baseline | Latency characteristic | Sequence length target |
|---|---|---|---|
| KeyDiff | 1.5% / 0.04% (task-dependent) | Moderate overhead (similarity compute) | Arbitrarily long prompts |
| H2O | Not verified in this fact set; see the H2O paper | Low-moderate (score accumulation) | Long-context generation |
| StreamingLLM | Not measured (generation quality, not retrieval) | Up to 22.2× faster than window recompute | 4M+ tokens streaming |

What each row means for a serving engineer

KeyDiff's claim that it "can process arbitrarily long prompts within strict resource constraints and efficiently generate responses" (arXiv:2504.15364) means it targets the hardest regime: you have a fixed KV budget (e.g., whatever HBM remains after model weights and activation memory on an H100 80GB), and the prompt keeps growing. KeyDiff's diversity-maximising selection means the retained cache is informationally richer per token slot than a window or score-based policy, which is the mechanism behind its lower accuracy drop.

For engineers sizing concurrent request capacity: lower accuracy-drop-per-compression-ratio means you can push the cache budget tighter before quality degrades — directly translating to more concurrent requests per GPU at the same quality floor.
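The capacity argument above is simple arithmetic, sketched here under stated assumptions. The per-token formula (keys plus values, for every layer and KV head) is the standard KV cache sizing rule; the model shape, the 40 GiB budget, and the per-request caps are hypothetical values chosen for illustration, not figures from any of the papers.

```python
def kv_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem):
    """Bytes of KV cache one token occupies: keys and values for every layer."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def max_concurrent_requests(kv_budget_bytes, bytes_per_token, tokens_per_request):
    """How many requests fit if each is capped at tokens_per_request cache slots."""
    return kv_budget_bytes // (bytes_per_token * tokens_per_request)


# Hypothetical model shape (not taken from any paper): 32 layers, 8 KV heads,
# head dimension 128, fp16 cache entries (2 bytes each).
per_token = kv_bytes_per_token(32, 8, 128, 2)

# Hypothetical HBM left for the KV cache after weights and activations.
budget = 40 * 2**30

for cap in (8192, 4096, 2048):
    print(cap, max_concurrent_requests(budget, per_token, cap))
```

Halving the per-request cache cap doubles the number of requests that fit, which is why the accuracy you keep at a given cap decides how far you can push concurrency.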

StreamingLLM's 22.2× speedup is measured against sliding-window recomputation, not against full-cache serving. Engineers who previously dealt with context overflow by re-encoding the sliding window on each step will see wall-clock wins from StreamingLLM. Engineers comparing against other eviction policies (where no recomputation occurs) will not see that headline speedup.

| Workload | Implication |
|---|---|
| Long-document Q&A, multi-hop retrieval | KeyDiff or H2O: retention fidelity matters |
| Streaming chat, session continuation | StreamingLLM: lowest overhead, stable generation |
| Strict HBM budget with many concurrent requests | KeyDiff: best accuracy per token slot |
| Score-signal is reliable (attention concentrates on key tokens) | H2O: heavy-hitter logic works well |

KeyDiff: when key-similarity-aware eviction wins

KeyDiff's mechanism is the most principled of the three if you care about minimizing redundancy in the retained cache. When two keys in the cache are near-identical in cosine distance, one of them carries redundant information — the attention output computed from either key is approximately the same. KeyDiff removes the redundant key, maximising the diversity — and therefore the representational coverage — of the retained cache. The paper explicitly justifies this: "We justify KEYDIFF by showing that it minimizes the pairwise cosine similarity among keys in the KV cache, maximizing the aforementioned diversity." (arXiv:2504.15364)

This is distinct from H2O's importance-score logic and from StreamingLLM's positional logic. KeyDiff does not ask "which tokens did the model attend to most?" or "which tokens are recent?" — it asks "which retained set spans the most distinct regions of key space?"
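To make the "drop the redundant key" idea concrete, here is a naive greedy sketch: it repeatedly finds the most similar pair of retained keys and drops one of them until the budget is met. This is an illustration of the diversity criterion described above, not the KeyDiff paper's algorithm. The function names, the tie-breaking rule (drop the later token), and the toy 2-D keys are all assumptions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def evict_redundant_keys(keys, budget):
    """Greedy sketch: drop one key of the most similar pair until `budget` remain.

    Returns the indices of the retained keys in their original order.
    Cost is quadratic per eviction, which is fine for a toy but not for serving.
    """
    if budget < 1:
        raise ValueError("budget must be at least 1")
    kept = list(range(len(keys)))
    while len(kept) > budget:
        best_sim, drop_pos = None, None
        for i in range(len(kept)):
            for j in range(i + 1, len(kept)):
                sim = cosine(keys[kept[i]], keys[kept[j]])
                if best_sim is None or sim > best_sim:
                    best_sim, drop_pos = sim, j
        kept.pop(drop_pos)  # assumption: drop the later token of the pair
    return kept


# Toy keys: index 1 is almost a copy of index 0, the others point elsewhere.
toy_keys = [[1.0, 0.0], [0.99, 0.1], [0.0, 1.0], [-1.0, 0.0]]
retained = evict_redundant_keys(toy_keys, budget=3)
print(retained)
```

With the toy keys, the near-duplicate at index 1 is the one removed, while the three keys that point in distinct directions survive.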

When teams evaluate this behavior inside vLLM or SGLang, the deployment detail that matters is not the framework brand but the cache path: PagedAttention and FlashAttention change how attention is executed, while KeyDiff changes which key-value pairs remain available to those kernels.

| Design axis | KeyDiff | H2O | StreamingLLM |
|---|---|---|---|
| Eviction criterion | Key-space diversity (cosine similarity) | Attention-score importance | Positional (sink + recency window) |
| Adapts to content? | Yes: similarity is data-dependent | Yes: score is data-dependent | No: window is fixed |
| Requires score accumulation? | No | Yes | No |
| Metric: cosine vs alternatives | Cosine outperforms other metrics (Table 16 of paper) | N/A | N/A |

Strengths in long-context retention

KeyDiff achieves the lowest published accuracy degradation of the methods compared here under memory-constrained inference. The abstract reports 1.5% and 0.04% accuracy drops from the non-evicting baseline across two benchmark settings — both while maintaining cache compression. The 0.04% figure indicates near-lossless compression on at least one task class.

The intuition is structural: a diverse cache covers more of the input's semantic content. A score-based method can over-retain whatever tokens happen to attract high attention mass early in decoding (often syntactic anchors), while a key-similarity method avoids this bias by measuring redundancy rather than salience.

| Metric | KeyDiff | Baseline (no eviction) |
|---|---|---|
| Accuracy drop (setting A) | ~1.5% | 0% |
| Accuracy drop (setting B) | ~0.04% | 0% |
| Cache budget | Compressed (strict) | Full |
| Eviction criterion | Cosine similarity | N/A |

Source: KeyDiff paper abstract, arXiv:2504.15364. Exact benchmark names and model configurations require the full paper tables.

Weak spots and operational constraints

Key-similarity computation is not free. To select the most redundant key at eviction time, KeyDiff must compute pairwise (or approximate nearest-neighbour) similarity across the retained cache. At long sequence lengths — the exact regime where you need eviction — this overhead grows with cache size. The paper's accessible snippets confirm that cosine similarity is the chosen metric (and outperforms alternatives per Table 16), but they do not expose wall-clock TTFT numbers for the similarity computation step. Before deploying KeyDiff in a latency-sensitive stack, measure the eviction overhead in your specific prefill-length distribution.

Watch Out: KeyDiff's similarity computation adds overhead proportional to cache occupancy. On long-prefill workloads (document ingestion, RAG with large retrieved chunks), this overhead can appear as a TTFT spike. Profile with your actual prompt-length distribution — abstract-level accuracy numbers do not include this cost.

Additionally, the verified fact set does not expose the specific benchmark tasks behind the 1.5% and 0.04% figures. If your production task sits outside those benchmark families, assume degradation could be higher until you validate on your own eval set.

H2O: when importance-aware eviction is the conservative choice

H2O (Heavy Hitter Oracle) retains the tokens that accumulated the most attention mass across decoding steps, plus a small set of recent tokens. The heavy-hitter logic assumes that high cumulative attention weight is a proxy for semantic importance — tokens the model repeatedly attends to are likely load-bearing for generation quality. This assumption holds well in tasks with clear focal tokens: factual question answering over short documents, code generation with a dominant function signature, summarisation where a few named entities drive the output.
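The retention rule described above (heavy hitters plus a recent window) can be sketched as follows. This is a simplified single-head, offline illustration, not the H2O reference implementation: the real method updates scores online during decoding, and the function name, the budget parameters, and the toy attention rows here are assumptions.

```python
def h2o_keep_indices(attn_rows, n_tokens, heavy_budget, recent_budget):
    """Sketch: keep the most-attended older tokens plus the most recent ones.

    attn_rows: one list of attention weights per decoding step, indexed by
               the cached token position being attended to.
    Returns retained token positions in ascending order.
    """
    # Accumulate the attention mass each cached token has received so far.
    acc = [0.0] * n_tokens
    for row in attn_rows:
        for pos, weight in enumerate(row):
            acc[pos] += weight

    # The recency window is always kept.
    recent_start = max(0, n_tokens - recent_budget)
    recent = list(range(recent_start, n_tokens))

    # Heavy hitters are picked from the tokens outside the recency window.
    older = sorted(range(recent_start), key=lambda pos: acc[pos], reverse=True)
    heavy = sorted(older[:heavy_budget])
    return heavy + recent


# Toy example: token 0 attracts most of the attention across two steps.
rows = [
    [0.70, 0.10, 0.10, 0.10],
    [0.60, 0.05, 0.30, 0.05],
]
kept = h2o_keep_indices(rows, n_tokens=4, heavy_budget=1, recent_budget=1)
print(kept)
```

Token 0 is retained as the heavy hitter and token 3 as the recent token; tokens 1 and 2 are evicted even though token 2 received noticeable attention, which is the kind of loss that hurts on diffuse-attention tasks.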

H2O is a research baseline built around a simple, interpretable retention rule rather than a more elaborate similarity metric, which makes it a useful comparison point when evaluating long-context serving policies in vLLM or SGLang. The KeyDiff abstract claims that "KEYDIFF significantly outperforms state-of-the-art KV cache eviction methods under similar memory constraints" (arXiv:2504.15364). The quoted sentence does not name those methods, so treat H2O as a representative score-based baseline for that claim rather than a confirmed head-to-head result.

| Property | H2O |
|---|---|
| Eviction signal | Accumulated attention score (heavy hitters) |
| Recent token retention | Yes (hybrid: heavy hitters + recency) |
| Score accumulation overhead | Low-moderate (online update per step) |
| Workload fit | Generation tasks with concentrated attention |
| Primary risk | Evicts diffusely-attended but important tokens |

Why H2O often feels safer in retrieval-heavy workloads

In workloads where important tokens attract disproportionate attention mass — named entities being cross-referenced, a key constraint in a code problem, a date being reasoned about — H2O's score accumulation correctly identifies those tokens and retains them. This makes H2O predictably conservative: it loses tokens the model genuinely ignored, not tokens it needed diffusely.

For SREs who need a policy with interpretable failure modes, H2O is easier to reason about than KeyDiff. When H2O fails, it is usually because the task requires a token that never attracted high attention during prefill — a symptom you can sometimes detect by watching attention heatmaps on validation samples. If the serving stack is already tuned around PagedAttention or FlashAttention in vLLM or SGLang, H2O can fit into the existing runtime without changing model weights, but the cache-selection rule still needs task-level validation.

| Workload signal | H2O retention quality |
|---|---|
| Attention concentrates on few tokens (factual QA, summarisation) | High: heavy-hitter logic fires correctly |
| Attention diffuses across many tokens (multi-hop, instruction-following) | Moderate to low: scores spread, eviction less precise |
| Short-to-medium context (< 32K tokens) | Strong: score accumulation has less noise |
| Long context with many relevant tokens | Degrades: score budget thins across candidates |

Note: No primary-source H2O benchmark table was verified in the accessible fact set. Quantitative H2O figures require direct consultation of the H2O paper.

Where H2O loses to key-similarity selection

H2O's score-aggregation logic is blind to key-space redundancy. Two tokens can both accumulate high attention scores yet carry nearly identical information in key space — H2O retains both; KeyDiff drops one. Under tight cache budgets, this redundancy wastes slot capacity that could hold genuinely distinct tokens. As cache compression ratios increase, this inefficiency compounds: H2O's retained set becomes increasingly saturated with high-score-but-redundant tokens, and the accuracy drop accelerates.

Pro Tip: If you are running H2O and observing accuracy degradation at aggressive compression ratios, check whether your retained tokens cluster in key space. High key-cosine-similarity within the retained set is a signal that H2O is wasting budget on redundant tokens — exactly the regime where switching to KeyDiff or a hybrid policy pays off.
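The clustering check in the tip above can be approximated with a single number: the mean pairwise cosine similarity of the retained keys. The sketch below is a hypothetical diagnostic, not part of H2O or KeyDiff. How you extract key vectors from your serving stack is left out, and any threshold you apply to the result is your own assumption.

```python
import math
from itertools import combinations


def mean_pairwise_cosine(keys):
    """Average cosine similarity over all pairs of retained key vectors.

    Values near 1.0 suggest the retained set is clustered (redundant);
    values near 0.0 suggest the keys point in mostly unrelated directions.
    """
    if len(keys) < 2:
        return 0.0
    sims = []
    for a, b in combinations(keys, 2):
        dot = sum(x * y for x, y in zip(a, b))
        na = math.sqrt(sum(x * x for x in a))
        nb = math.sqrt(sum(y * y for y in b))
        sims.append(dot / (na * nb) if na and nb else 0.0)
    return sum(sims) / len(sims)


# Toy retained set: two identical keys and one orthogonal key.
redundancy = mean_pairwise_cosine([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
print(round(redundancy, 3))
```

Tracking this value per layer at several compression ratios shows whether redundancy rises as the budget tightens.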

StreamingLLM: when sliding-window retention is enough

StreamingLLM solves a different problem from H2O and KeyDiff. It does not attempt to identify which historical tokens are important — it discards everything outside a fixed window except a small set of attention-sink tokens at the sequence start. This design makes StreamingLLM purpose-built for streaming generation: conversations that grow indefinitely, agent loops, continuous transcription — workloads where generation quality depends on recent context and where mid-conversation retrieval of distant tokens is not required.

The paper demonstrates stable and efficient language modeling on Llama-2, MPT, Falcon, and Pythia up to 4 million tokens and more, with a reported 22.2× speedup over sliding-window recomputation in streaming settings. On practical deployments, that makes it a cleaner fit for recency-dominant chat or transcription services than a long-context retrieval policy, especially when the backend is optimized around vLLM or SGLang rather than custom cache logic.
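The sink-plus-window rule is small enough to write out directly. This is a minimal sketch of which positions survive, assuming hypothetical defaults for the sink count and window size; it does not cover how a real implementation handles positional encodings inside the cache.

```python
def streaming_keep_indices(seq_len, n_sinks=4, window=1020):
    """Positions retained by a sink + recent-window policy.

    Keeps the first n_sinks tokens and the last `window` tokens; everything
    in between is discarded. Defaults are illustrative assumptions.
    """
    sinks = list(range(min(n_sinks, seq_len)))
    start = max(len(sinks), seq_len - window)
    return sinks + list(range(start, seq_len))


# Short sequence: 2 sinks and a 3-token window over 10 tokens.
print(streaming_keep_indices(10, n_sinks=2, window=3))

# The retained count stops growing once the sequence exceeds sinks + window.
for n in (100, 10_000, 1_000_000):
    print(n, len(streaming_keep_indices(n)))
```

Once the sequence is longer than `n_sinks + window`, the retained count is fixed at that sum, which is the constant-memory property the policy is built around.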

| Property | StreamingLLM |
|---|---|
| Retention strategy | Attention sinks (few) + recency window (fixed) |
| Mid-context token retention | None (gap is discarded) |
| Compute overhead | Minimal (no scoring, no similarity) |
| Token length target | 4M+ tokens demonstrated |
| Primary use case | Streaming generation, continuous dialogue |
| Not suitable for | Long-document retrieval, multi-hop over distant tokens |

Why attention sinks help streaming stability

Without attention-sink retention, naive sliding-window attention produces model instability: the attention mechanism loses the anchoring tokens that absorb probability mass during softmax normalisation. StreamingLLM discovered that a small set of initial tokens act as these sinks — they attract disproportionate attention regardless of semantic content — and that retaining them is sufficient to stabilise generation across arbitrarily long sequences. The paper states: "We discover that adding a placeholder token as a dedicated attention sink during pre-training can further improve streaming deployment." (arXiv:2309.17453)

| Configuration | Behaviour |
|---|---|
| Sliding window only (no sinks) | Model instability at long sequences |
| StreamingLLM (sinks + window) | Stable generation up to 4M+ tokens |
| vs. sliding-window recomputation | Up to 22.2× speedup |
| vs. content-aware eviction (KeyDiff, H2O) | Lower accuracy on retrieval tasks |

Source: StreamingLLM paper abstract, arXiv:2309.17453. Full configuration details require the paper's experimental section.

Where StreamingLLM breaks down

StreamingLLM's structural limitation is not a bug — it is a design consequence. Discarding mid-context tokens is how it achieves constant-memory streaming. The paper's own framing is "stable and efficient language modeling" — not retrieval accuracy, not multi-hop reasoning fidelity. Any task that requires the model to recall a fact, instruction, or entity from outside the recency window will degrade as sequence length grows, because that information no longer exists in the cache.

Watch Out: StreamingLLM cannot substitute for H2O or KeyDiff on long-document retrieval tasks. If your workload asks "what did the user say 200 turns ago?" or "find the constraint stated in paragraph 3 of a 50-page document," StreamingLLM will fail structurally — the token is gone. Use it exclusively for workloads where only recent context and initial anchors drive output quality.

Benchmarks that separate the three policies

The verified benchmark data available from accessible paper abstracts is asymmetric: KeyDiff and StreamingLLM both publish headline numbers; H2O's quantitative profile requires consulting the primary paper directly. The table below integrates verified figures and marks unverified slots explicitly.

| Method | Best reported accuracy drop vs baseline | Latency metric | Sequence scale |
|---|---|---|---|
| KeyDiff | 1.5% / 0.04% (two settings) | Not published in accessible source | Arbitrarily long (paper claim) |
| H2O | Not verified in this source set | Not verified in this source set | Long-context generation |
| StreamingLLM | Not measured (generation stability) | Up to 22.2× vs window recompute | 4M+ tokens demonstrated |

Sources: KeyDiff arXiv:2504.15364, StreamingLLM arXiv:2309.17453. H2O figures require arXiv:2306.14048.

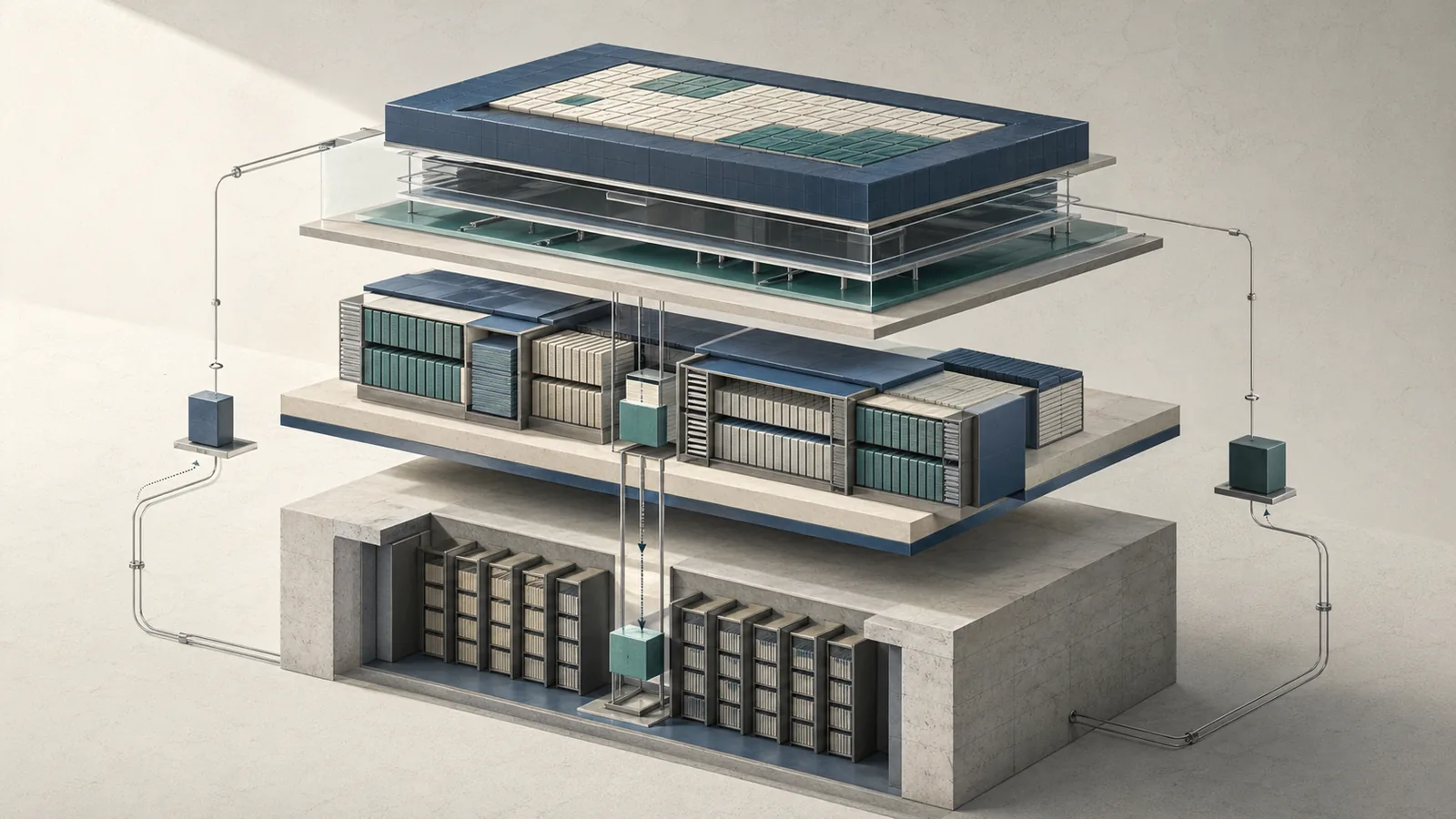

Memory ceiling versus compression ratio

KeyDiff's paper states it "can process arbitrarily long prompts within strict resource constraints and efficiently generate responses" (arXiv:2504.15364), which is the strongest claim on memory-ceiling behaviour. The mechanism — evicting redundant keys — means each retained token carries maximal marginal information, so the usable quality per token slot is higher than score-based or window-based retention at the same compression ratio.

StreamingLLM achieves its memory ceiling trivially by fixing window size — memory footprint is O(window size + sink count) regardless of sequence length. This is the tightest possible ceiling, but the cost is complete loss of mid-context content.

| Method | Memory growth with sequence length | Compression mechanism |
|---|---|---|
| KeyDiff | Bounded by cache budget (eviction enforces ceiling) | Similarity-based redundancy removal |
| H2O | Bounded by cache budget (score-based budget) | Score-threshold eviction |
| StreamingLLM | O(constant): fixed window + sink count | Positional discard |

H2O compression ratio formula not verified in accessible sources; consult arXiv:2306.14048 for exact budget mechanics.

Latency impact on TTFT and end-to-end serving

The only verified latency statistic in the accessible source set is StreamingLLM's 22.2× speedup over sliding-window recomputation — a baseline that involves re-encoding the window on each generation step, which is expensive. Against other eviction methods that do not recompute, StreamingLLM's latency advantage collapses to the difference in selection overhead: positional pointer (StreamingLLM) < score accumulation (H2O) < similarity matrix (KeyDiff).

| Method | Eviction selection cost | TTFT impact (qualitative) | Relevant baseline |
|---|---|---|---|
| KeyDiff | Similarity computation over cache | Moderate: grows with cache size | Non-evicting full cache |
| H2O | Score accumulation (online, per step) | Low-moderate | Non-evicting full cache |
| StreamingLLM | Pointer increment (O(1)) | Minimal | Sliding-window recomputation |

No verified TTFT numbers for KeyDiff or H2O were available in the accessible source set. Measure on your own prefill distribution before committing to a policy.
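Since no published numbers cover this, a small timing harness is the quickest way to see how a selection step scales with cache size. The sketch below times a stand-in top-k selection on the CPU. The function names and sizes are hypothetical, and a real measurement on GPU would need device synchronisation around the timed call because kernels launch asynchronously.

```python
import random
import statistics
import time


def median_latency_s(fn, *args, repeats=5):
    """Median wall-clock seconds of fn(*args) over `repeats` runs."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def top_k_by_score(scores, k):
    """Stand-in selection step: positions of the k highest scores."""
    order = sorted(range(len(scores)), key=scores.__getitem__, reverse=True)
    return order[:k]


random.seed(0)
for cache_size in (1_000, 10_000, 100_000):
    scores = [random.random() for _ in range(cache_size)]
    t = median_latency_s(top_k_by_score, scores, 256)
    print(f"cache_size={cache_size:>7}  median={t * 1e3:.3f} ms")
```

Swap `top_k_by_score` for the selection routine of the policy you are evaluating and feed it cache sizes drawn from your own prefill-length distribution.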

Accuracy retention under long-context retrieval tasks

Accuracy retention is where the policies diverge most sharply and where the serving engineer's choice has the largest consequence. KeyDiff's abstract-reported numbers — 1.5% and 0.04% drops from baseline — are the only verified figures in this comparison. StreamingLLM does not report retrieval accuracy because its design does not target retrieval; it targets generation stability.

| Method | Retrieval task accuracy (vs full cache) | Generation stability |
|---|---|---|
| KeyDiff | ~1.5% or ~0.04% drop (task-dependent) | High: eviction preserves diverse context |
| H2O | Not verified in this source set | High: score retention preserves salient tokens |
| StreamingLLM | Degrades structurally beyond window | High: design target is stable streaming |

Decision matrix for choosing the right eviction policy

Policy selection is a function of three variables: whether your task requires mid-context retrieval, how aggressively you need to compress the cache, and whether eviction compute overhead matters in your TTFT budget. No single policy dominates across all three. For teams working in vLLM or SGLang, the choice often comes down to whether the stack already depends on PagedAttention or FlashAttention and how much operational churn you can tolerate when moving from a full-cache baseline on an NVIDIA H100 deployment.

| Workload characteristic | KeyDiff | H2O | StreamingLLM |
|---|---|---|---|
| Long-doc retrieval / multi-hop | ✅ Best retention | ✅ Good if attention concentrates | ❌ Structural failure |
| Streaming dialogue / agent loop | ✅ Works, overkill | ✅ Works, overkill | ✅ Purpose-built |
| Aggressive cache compression | ✅ Best accuracy/slot | ⚠️ Redundancy wastes slots | ✅ Constant memory |
| Minimal eviction overhead | ⚠️ Similarity cost | ✅ Score accumulation | ✅ O(1) pointer |
| Attention diffuses across many tokens | ✅ Unaffected | ⚠️ Score signal degrades | ❌ N/A |
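One way to read the matrix is as a short decision function. The sketch below is one possible encoding under simplifying assumptions: it collapses the workload into three boolean traits and returns a starting point to validate, not a verdict. The function and parameter names are hypothetical.

```python
def recommend_policy(needs_mid_context, attention_concentrated, overhead_sensitive):
    """Map coarse workload traits to a first policy to evaluate.

    needs_mid_context:      the task must recall tokens outside a recent window
    attention_concentrated: attention mass lands on a small set of tokens
    overhead_sensitive:     eviction compute must stay low in the TTFT budget
    """
    if not needs_mid_context:
        # Recency-dominant streaming: positional retention is sufficient.
        return "StreamingLLM"
    if attention_concentrated and overhead_sensitive:
        # Score signal is reliable and cheaper than similarity computation.
        return "H2O"
    # Diffuse attention or accuracy-first: favour diversity-based retention.
    return "KeyDiff"


print(recommend_policy(needs_mid_context=False, attention_concentrated=False, overhead_sensitive=True))
print(recommend_policy(needs_mid_context=True, attention_concentrated=True, overhead_sensitive=True))
print(recommend_policy(needs_mid_context=True, attention_concentrated=False, overhead_sensitive=False))
```

Whatever the function returns, the accuracy and latency checks on your own task distribution still decide the final choice.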

Choose KeyDiff when

- Your task requires mid-sequence retrieval accuracy and you cannot afford more than ~2% degradation from a full-cache baseline.

- Cache compression ratio is high — you are pushing token budgets tight and need each retained slot to carry maximum information.

- Attention patterns are diffuse — the model does not concentrate on a small set of heavy-hitter tokens, which would neutralise H2O's advantage.

- You have CPU/GPU budget for similarity computation during eviction and can absorb the overhead in TTFT.

| Signal | Recommendation |
|---|---|
| Accuracy drop < 2% from baseline required | Choose KeyDiff |
| Task: long-document QA, RAG, instruction following | Choose KeyDiff |
| Attention is spread across many tokens | Choose KeyDiff |

Choose H2O when

- Your task's attention patterns concentrate reliably on a small set of semantically important tokens — factual QA, summarisation, code generation.

- You need a well-studied baseline with a broad research literature to compare against.

- Eviction compute budget is tighter than KeyDiff allows but you need better mid-context retention than StreamingLLM provides.

- You are benchmarking a new eviction method and need a standard comparison point.

| Signal | Recommendation |
|---|---|
| Task: factual QA, summarisation, code gen | Choose H2O |
| Need interpretable failure mode | Choose H2O |
| Eviction overhead must stay low | Choose H2O |

Choose StreamingLLM when

- Your workload is streaming generation — chatbot dialogue, continuous transcription, indefinitely-growing agent sessions — where only recent context and initial anchors drive output quality.

- Memory ceiling must be O(constant) regardless of sequence length.

- You are replacing a sliding-window recomputation baseline and want the latency savings (up to 22.2× speedup demonstrated in streaming settings).

- Mid-context retrieval is explicitly out of scope for the product requirement.

| Signal | Recommendation |
|---|---|
| Streaming dialogue, agent loop | Choose StreamingLLM |
| Memory must not grow with sequence length | Choose StreamingLLM |
| Replacing sliding-window recomputation | Choose StreamingLLM |

FAQ: the questions engineers ask before shipping KV-cache eviction

Engineers evaluating these policies in production tend to hit the same decision points. The table below maps the most common questions to a direct answer.

| Question | Short answer |
|---|---|
| Which policy has the lowest accuracy cost? | KeyDiff: 1.5%/0.04% drop reported vs baseline |
| Which policy has the lowest runtime overhead? | StreamingLLM: O(1) positional selection |
| Which policy works for 4M+ token streams? | StreamingLLM (demonstrated); KeyDiff (claimed for arbitrary length) |
| Which policy is safest for retrieval tasks? | KeyDiff > H2O > StreamingLLM |
| Which is the most-studied baseline? | H2O |
| Do any require fine-tuning or training changes? | None: all three are training-free at inference |

Is KeyDiff better than H2O for long-context serving?

On accuracy retention under strict memory constraints, KeyDiff's abstract-level claim is unambiguous: it "significantly outperforms state-of-the-art KV cache eviction methods under similar memory constraints" (arXiv:2504.15364). The 1.5%/0.04% accuracy-drop figures, if they hold on your task distribution, leave little room for any policy to do better at equivalent cache budgets. No H2O figures were verified here, so the size of the gap between the two is not quantified in this comparison.

The caveat is the similarity computation overhead. H2O's score accumulation is cheaper per eviction step than KeyDiff's pairwise similarity, so in TTFT-sensitive deployments with small cache budgets, H2O may be the practical choice even if KeyDiff's accuracy ceiling is higher. The answer depends on whether your bottleneck is accuracy or prefill latency.

| Criterion | KeyDiff wins | H2O wins |
|---|---|---|
| Accuracy retention at tight cache budget | ✅ | |
| Eviction compute overhead | | ✅ |
| Research literature depth | | ✅ |
| Diffuse attention tasks | ✅ | |

Can StreamingLLM replace eviction methods for all workloads?

No. StreamingLLM's paper demonstrates "stable and efficient language modeling with up to 4 million tokens and more" (arXiv:2309.17453) — but stability of generation is not the same as retention of arbitrary mid-sequence information. StreamingLLM's memory efficiency comes from discarding mid-context entirely. Any workload that requires the model to reference tokens outside the recency window will fail with StreamingLLM, and that failure is structural, not tunable.

Watch Out: Deploying StreamingLLM on a retrieval-augmented or long-document workload and then tuning the window size upward to "fix" retrieval failures just converts StreamingLLM into an expensive sliding window — you lose the constant-memory property without gaining KeyDiff's or H2O's principled selection. Use StreamingLLM only when the workload is genuinely streaming and recency-dominant.

What should I measure before adopting KeyDiff or H2O?

Run these four measurements on your production prompt distribution before committing to either policy:

| Measurement | Why it matters |
|---|---|
| Accuracy on your task vs full-cache baseline | Sets your degradation budget ceiling |
| TTFT at your p95 prefill length | Determines whether similarity overhead (KeyDiff) or score overhead (H2O) is acceptable |
| Attention weight distribution across sequence positions | High concentration → H2O fits; diffuse → KeyDiff fits |
| Cache occupancy at your target compression ratio | Validates that the claimed accuracy drops hold at your specific budget |

KeyDiff's selection criterion is "pairwise cosine similarity among keys" (arXiv:2504.15364) — so key-space clustering in your model's attention layers is the core diagnostic. StreamingLLM's reference point is the 22.2× speedup it achieves "over the sliding window recomputation baseline" (arXiv:2309.17453) — measure whether you are comparing against that baseline or against a zero-recomputation eviction policy, because the speedup figure does not carry over to the latter comparison.
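The attention-distribution measurement in the table can be reduced to one number per query: the share of attention mass captured by the top-k positions. The sketch below is a hypothetical diagnostic. The function name, the choice of k, and any cut-off you use to call a distribution "concentrated" are assumptions to calibrate on your own data.

```python
def top_k_mass(attn_weights, k):
    """Fraction of total attention mass held by the k most-attended positions."""
    total = sum(attn_weights)
    if total == 0:
        return 0.0
    top = sorted(attn_weights, reverse=True)[:k]
    return sum(top) / total


# Toy distributions over four cached tokens.
concentrated = [0.70, 0.10, 0.10, 0.10]
diffuse = [0.25, 0.25, 0.25, 0.25]

print(top_k_mass(concentrated, 1))
print(top_k_mass(diffuse, 1))
```

Averaging this value over layers, heads, and a sample of production prompts gives a rough signal: consistently high values point toward a score-based policy, while low values point toward a diversity-based one.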

Sources & References

Pro Tip: The KeyDiff paper (arXiv:2504.15364) is a preprint as of April 2026. Verify that the version you implement matches the version whose numbers you cite — the abstract's 1.5%/0.04% figures are from v4 of the PDF. H2O benchmark tables require a separate retrieval pass against the H2O primary paper; do not rely on aggregator summaries for production-grade accuracy figures.

- KeyDiff: Training-free KV Cache Eviction via Key Similarity (arXiv:2504.15364) — Primary source for KeyDiff method description, cosine-similarity criterion, and reported accuracy figures

- StreamingLLM: Efficient Streaming Language Models with Attention Sinks (arXiv:2309.17453) — Primary source for attention-sink mechanism, 22.2× speedup, and 4M+ token stability claims

- H2O: Heavy-Hitter Oracle for Efficient Generative Inference of Large Language Models (arXiv:2306.14048) — Primary source for H2O method; benchmark tables require direct paper access

- KeyDiff PDF (arXiv:2504.15364) — Full paper including Table 16 (cosine vs alternative distance metrics) and extended benchmark results
