What Megatron-LM is solving at cluster scale

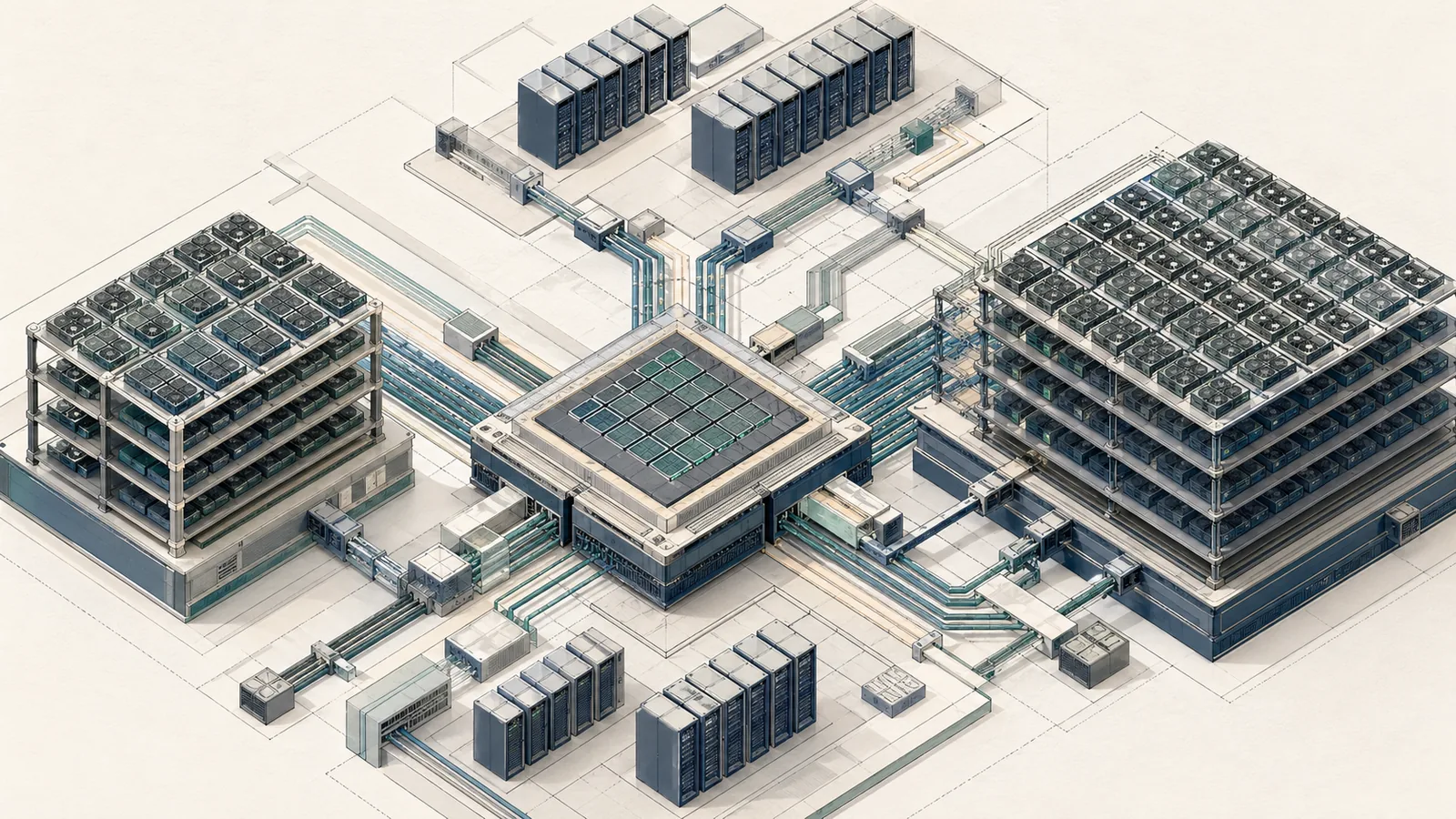

Training large transformers requires splitting model state, optimizer state, gradients, and activations across multiple GPUs so that memory and communication remain manageable. Megatron-LM exists to solve that distributed-training problem by composing multiple parallelism strategies so that no single GPU needs to hold the full model, the full optimizer state, or the full activation buffer.

The repository exposes two distinct components. Megatron-LM is the reference example; in the repo's own words: "Megatron-LM is a reference example that includes Megatron Core plus pre-configured training scripts." Megatron Core is the modular substrate underneath it: a GPU-optimized library of composable building blocks that any training framework can consume.

Megatron-LM can use tensor parallelism and pipeline parallelism simultaneously, and it is designed to. Megatron Core composes TP, PP, DP, expert parallelism (EP), and context parallelism (CP) as orthogonal process-group dimensions, meaning you can dial each one independently and the intersection forms the actual device mesh.

Bottom Line: Megatron-LM's value is not any single parallelism strategy — it is the ability to compose tensor parallelism, pipeline parallelism, data parallelism, expert parallelism, and sequence/context parallelism simultaneously inside one training job, with mathematical equivalence to single-GPU training preserved at every point. The cost is added communication overhead, scheduling complexity, and a steep configuration surface that punishes incorrect partitioning choices.

How Megatron Core layers TP, PP, DP, EP, and CP

Megatron Core treats each parallelism axis as a separate process-group dimension. When you launch a job with TP, PP, and DP values chosen for your cluster, the global process count is the product of those dimensions, and NCCL initializes separate communicators for each axis. Collectives within the TP group use the fastest available intra-node links where the topology allows it. Collectives within the PP group use point-to-point sends and receives between pipeline stages. DP gradient reductions follow the data-parallel topology and can be bucketed and overlapped with backward computation.

The official Megatron Core parallelism documentation adds EP for mixture-of-experts token routing and CP for long-context attention partitioning as first-class axes in the same composable mesh.

flowchart LR
  subgraph DP_Replica_0["DP Replica 0"]
    subgraph PP_Stage_0["PP Stage 0 (Layers 0–11)"]
      TP0["GPU 0\nTP rank 0"]
      TP1["GPU 1\nTP rank 1"]
      TP2["GPU 2\nTP rank 2"]
      TP3["GPU 3\nTP rank 3"]
      TP0 <-->|"all-reduce / reduce-scatter\n(NVLink)"| TP1
      TP1 <-->|NVLink| TP2
      TP2 <-->|NVLink| TP3
    end
    subgraph PP_Stage_1["PP Stage 1 (Layers 12–23)"]
      TP4["GPU 4\nTP rank 0"]
      TP5["GPU 5\nTP rank 1"]
      TP6["GPU 6\nTP rank 2"]
      TP7["GPU 7\nTP rank 3"]
      TP4 <-->|NVLink| TP5
      TP5 <-->|NVLink| TP6
      TP6 <-->|NVLink| TP7
    end
    PP_Stage_0 -->|"activation send\n(InfiniBand)"| PP_Stage_1
  end
  subgraph DP_Replica_1["DP Replica 1"]
    PP_Stage_2["PP Stage 0 (mirror)"]
    PP_Stage_3["PP Stage 1 (mirror)"]
  end
  DP_Replica_0 <-->|"gradient all-reduce\n(InfiniBand)"| DP_Replica_1

The diagram above represents TP=4, PP=2, DP=2, which is 16 GPUs in total. Sequence parallelism needs no additional process group: SP reuses the TP communicator to shard the activations that TP leaves replicated. Context parallelism is different: CP adds its own communicator for ring attention across long sequences, and the CP degree is a further factor in the total GPU count.
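The TP=4, PP=2, DP=2 layout can be sketched as a rank-to-coordinate mapping. This is a minimal illustration that assumes TP varies fastest, then PP, then DP, which matches the GPU numbering in the diagram. The function names are hypothetical, and Megatron Core's actual rank ordering is configurable and may differ.

```python
def world_size(tp: int, pp: int, dp: int) -> int:
    """Total number of processes for a TP x PP x DP mesh."""
    return tp * pp * dp


def rank_to_coords(rank: int, tp: int, pp: int, dp: int) -> dict:
    """Map a global rank to its coordinate on each parallelism axis.

    Assumed ordering (illustrative only): TP varies fastest, then PP, then DP.
    """
    if not 0 <= rank < world_size(tp, pp, dp):
        raise ValueError("rank out of range for this mesh")
    return {
        "tp": rank % tp,
        "pp": (rank // tp) % pp,
        "dp": rank // (tp * pp),
    }


# GPU 4 in the diagram: TP rank 0 of PP stage 1 in DP replica 0.
gpu4 = rank_to_coords(4, tp=4, pp=2, dp=2)
```

Ranks that share the same `pp` and `dp` coordinates form one TP group, and the same reasoning gives the PP and DP groups.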

Parallelism domains and what each one splits

Each parallelism axis splits a different tensor dimension:

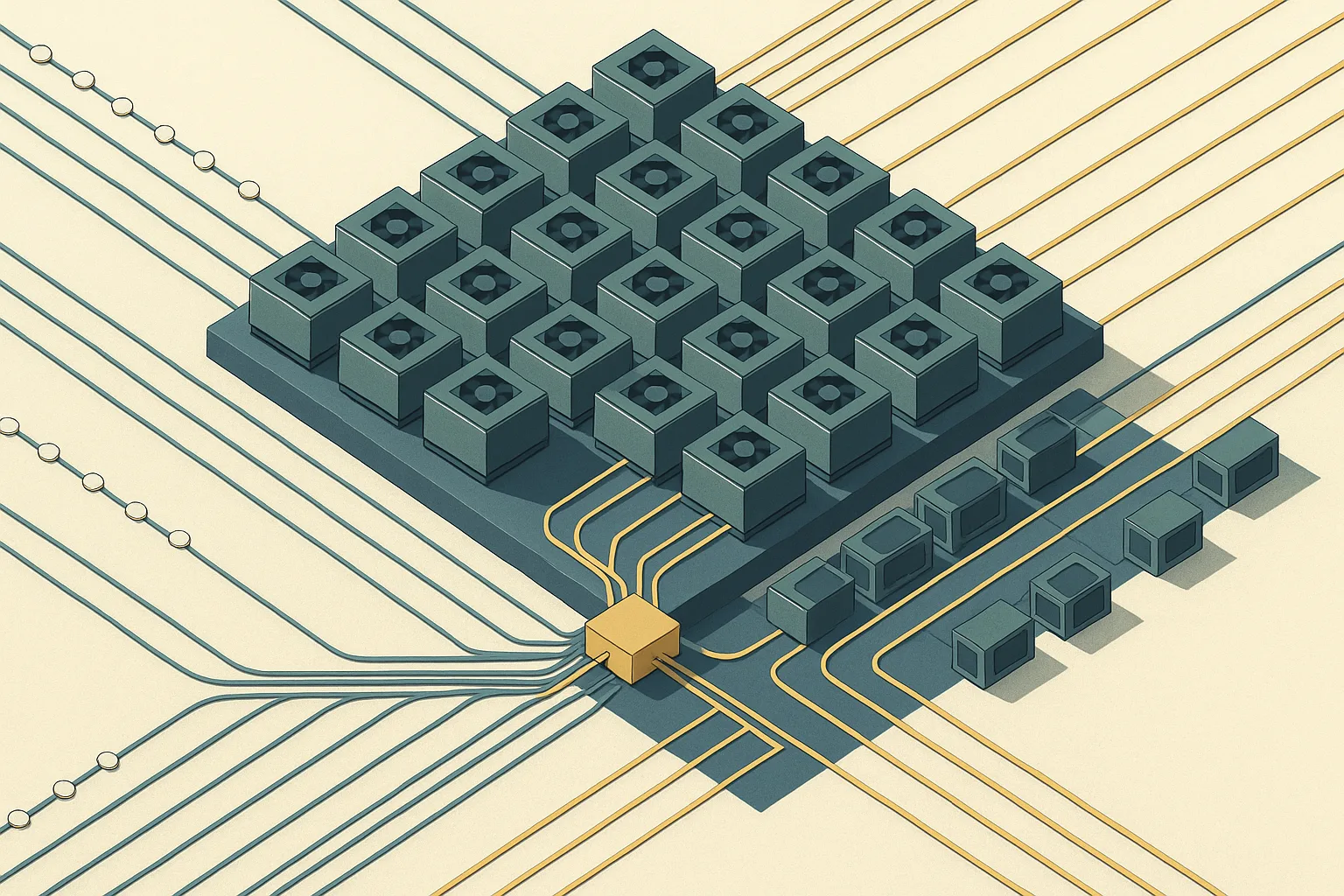

Tensor parallelism (TP) splits individual weight matrices and the corresponding activation tensors within a single transformer layer across GPUs. A matrix multiply that would normally produce a full output on one GPU instead produces a partial result on each TP rank; a collective then reconstructs the full result. TP issues these collectives inside every transformer layer on every forward and backward step, which is why it is best contained within one NVLink domain.

Pipeline parallelism (PP) assigns complete transformer layers to GPU stages. With $L$ layers and $P$ stages, stage 0 holds layers 0 through $L/P - 1$, stage 1 holds layers $L/P$ through $2L/P - 1$, and so on. Microbatches flow through the stages sequentially, with activation tensors transmitted between stages as ordinary NCCL point-to-point sends.

Data parallelism (DP) replicates the model across PP × TP device groups and synchronizes gradients after each backward pass. At large scale, DP becomes the axis where the distributed optimizer shards optimizer states.

Expert parallelism (EP) partitions MoE expert weights across EP ranks, with NCCL all-to-all collectives routing tokens to the correct experts.
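To make the routing step concrete, here is a toy sketch of how tokens could be bucketed by destination EP rank before an all-to-all. It assumes experts are assigned to ranks in contiguous blocks and that each token has already been given a single expert by the router. The helper names are hypothetical, and this is not Megatron Core's token dispatcher.

```python
from collections import defaultdict


def expert_owner(expert_id: int, num_experts: int, ep: int) -> int:
    """EP rank that holds a given expert, assuming contiguous blocks."""
    if num_experts % ep != 0:
        raise ValueError("num_experts must be divisible by ep")
    experts_per_rank = num_experts // ep
    return expert_id // experts_per_rank


def bucket_tokens(token_experts: list, num_experts: int, ep: int) -> dict:
    """Group token indices by the EP rank they must be sent to."""
    buckets = defaultdict(list)
    for token_idx, expert_id in enumerate(token_experts):
        buckets[expert_owner(expert_id, num_experts, ep)].append(token_idx)
    return dict(buckets)


# Four tokens routed to experts 0, 7, 3, 2 with 8 experts over 4 EP ranks.
send_plan = bucket_tokens([0, 7, 3, 2], num_experts=8, ep=4)
```

Each bucket is what one rank would send to one peer in the all-to-all; a second all-to-all returns the expert outputs to the tokens' original ranks.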

Context parallelism (CP) splits the sequence dimension specifically for the attention computation, enabling ring attention across long sequences where each CP rank holds a contiguous sequence chunk.

The Megatron Bridge parallelism guide states: "Enable sequence parallelism when using tensor parallelism to reduce activation memory. Use context parallelism for long sequence training." The Hugging Face Megatron-LM integration guide adds, "As all-reduce = reduce-scatter + all-gather, this saves a ton of activation memory at no added communication cost."

Pro Tip: Keep TP degree ≤ the number of GPUs within a single NVLink domain (typically 8 on an H100 SXM node). Crossing NVLink boundaries with TP collectives increases latency by an order of magnitude and can erase any memory benefit the sharding provides.

Why Megatron Core is composable instead of monolithic

Megatron Core exposes transformer building blocks — attention, MLP, normalization, embedding — as individually instantiable modules that accept a TransformerConfig carrying the parallelism parameters. A custom training framework imports these modules directly without inheriting the full Megatron-LM training loop.

As the official repository states: "Megatron Core is a composable library with GPU-optimized building blocks for custom training frameworks." This is architecturally distinct from DeepSpeed or FSDP, which wrap an existing model rather than providing model building blocks.

Production Note: Megatron Core's composability is a double-edged property. It gives infrastructure teams explicit control over every parallelism decision, but it means you own the integration: data loading, checkpointing, logging, and learning-rate scheduling are not included. Teams migrating from an opinionated training script should budget significant engineering time for the integration layer before claiming Megatron Core's performance at scale.

Tensor parallelism inside a transformer block

Tensor parallelism preserves the transformer's mathematical behavior by splitting weight matrices in a way that makes partial results computable on each rank and then reconstructable via a single collective. In general linear algebra, a matrix multiply $Y = XW$ can be decomposed as a column-partitioned or row-partitioned operation without changing the final result.

For an MLP with input $X \in \mathbb{R}^{s \times d}$ ($s$ tokens, hidden size $d$), weight matrices $W_1 \in \mathbb{R}^{d \times 4d}$ and $W_2 \in \mathbb{R}^{4d \times d}$, and TP degree $t$, the decomposition is:

$Y = \text{GeLU}(X W_1) W_2 = \left[\text{GeLU}(X W_1^{(1)}) \;\Big|\; \cdots \;\Big|\; \text{GeLU}(X W_1^{(t)})\right] \begin{bmatrix} W_2^{(1)} \\ \vdots \\ W_2^{(t)} \end{bmatrix} = \sum_{i=1}^{t} \text{GeLU}(X W_1^{(i)}) W_2^{(i)}$

Each GPU $i$ holds $W_1^{(i)} \in \mathbb{R}^{d \times 4d/t}$ (column partition) and $W_2^{(i)} \in \mathbb{R}^{4d/t \times d}$ (row partition). The column split of $W_1$ is valid because GeLU is applied elementwise, so each rank can apply it to its own slice without seeing the others. GPU $i$ computes its partial output $Y^{(i)} = \text{GeLU}(X W_1^{(i)}) W_2^{(i)} \in \mathbb{R}^{s \times d}$, and an all-reduce across the TP group sums the $t$ partial outputs to recover $Y$. The result matches the single-GPU computation up to floating-point summation order.
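The decomposition can be checked numerically. The sketch below is a pure-Python illustration with tiny random matrices, not Megatron Core code: it computes the MLP once on a single "device" and once with the column and row partitions, using a running sum in place of the all-reduce. All names are hypothetical.

```python
import math
import random


def gelu(x: float) -> float:
    """Exact GeLU using the Gaussian error function."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def mlp(x, w1, w2):
    """Reference MLP: GeLU(X W1) W2."""
    hidden = [[gelu(v) for v in row] for row in matmul(x, w1)]
    return matmul(hidden, w2)


def tp_mlp(x, w1, w2, t):
    """Same MLP with W1 split by columns and W2 split by rows over t ranks."""
    ffn = len(w2)
    assert ffn % t == 0, "intermediate size must be divisible by t"
    width = ffn // t
    total = None
    for i in range(t):
        cols = slice(i * width, (i + 1) * width)
        w1_i = [row[cols] for row in w1]  # column partition of W1
        w2_i = w2[cols]                   # row partition of W2
        partial = mlp(x, w1_i, w2_i)      # rank-local work, no communication
        # The running sum stands in for the all-reduce over the TP group.
        total = partial if total is None else [
            [a + b for a, b in zip(r1, r2)] for r1, r2 in zip(total, partial)
        ]
    return total


random.seed(0)
s, d, t = 3, 4, 2
x = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(s)]
w1 = [[random.uniform(-1, 1) for _ in range(4 * d)] for _ in range(d)]
w2 = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(4 * d)]

ref = mlp(x, w1, w2)
par = tp_mlp(x, w1, w2, t)
max_diff = max(abs(a - b) for r1, r2 in zip(ref, par) for a, b in zip(r1, r2))
```

`max_diff` should sit at floating-point rounding level, because the only difference between the two paths is the order in which partial products are summed.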

Megatron Core implements this in the tensor-parallel linear layers in megatron/core/tensor_parallel/layers.py, using collectives such as reduce_scatter_to_sequence_parallel_region.

Column-wise and row-wise sharding in attention and MLP

Column-wise sharding partitions a weight matrix along its output (column) dimension: each rank computes a subset of output features independently and holds the full input. Row-wise sharding partitions along the input (row) dimension: each rank holds a subset of input features and accumulates partial dot products that must be summed. Chaining column-wise → row-wise within a single layer means the replicated input feeds the column layer without communication, the partial results flow through the activation locally, and a single all-reduce after the row layer produces the output. The backward pass adds one more all-reduce for the input gradient at the column layer, so an MLP block costs two collectives per training step (one forward, one backward) regardless of $t$.

For multi-head attention, the QKV projection is column-partitioned (each rank holds a subset of heads), and the output projection is row-partitioned. Attention computation per head is local to each rank. The Hugging Face Megatron-LM integration guide describes the sequence-parallel variant: "To put it simply, it shards the outputs of each transformer layer along sequence dimension."

Pro Tip: With sequence parallelism enabled, the forward all-reduce after the row-wise linear is replaced by a reduce-scatter (producing sequence-sharded output), and an all-gather before the column-wise linear reassembles the full sequence. The Hugging Face guide notes: "As all-reduce = reduce-scatter + all-gather, this saves a ton of activation memory at no added communication cost." The key insight is that the sequence-sharded activations between transformer sub-layers need not be reassembled until a collective is already required.
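The identity quoted above can be demonstrated with a toy simulation in which each "rank" is just a Python list. This is an illustrative model of the collectives' semantics, not NCCL or Megatron Core code, and the function names are chosen here for readability.

```python
def all_reduce(buffers):
    """Every rank ends with the elementwise sum of all buffers."""
    total = [sum(vals) for vals in zip(*buffers)]
    return [list(total) for _ in buffers]


def reduce_scatter(buffers):
    """Rank r ends with only the r-th chunk of the elementwise sum."""
    n = len(buffers)
    total = [sum(vals) for vals in zip(*buffers)]
    assert len(total) % n == 0, "buffer length must be divisible by rank count"
    chunk = len(total) // n
    return [total[r * chunk:(r + 1) * chunk] for r in range(n)]


def all_gather(chunks):
    """Every rank ends with the concatenation of all chunks."""
    full = [v for c in chunks for v in c]
    return [list(full) for _ in chunks]


ranks = [[1, 2, 3, 4], [10, 20, 30, 40]]  # one buffer per TP rank
sharded = reduce_scatter(ranks)           # what SP keeps between sub-layers
restored = all_gather(sharded)            # issued only when the full tensor is needed
```

Between the two calls each rank holds only its chunk of the summed tensor, which is where the activation-memory saving comes from.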

Communication cost: all-reduce, reduce-scatter, and overlap

Every TP forward pass issues two collective operations per transformer layer (or four in the SP variant — reduce-scatter and all-gather in each direction). NCCL provides the underlying implementations: "NCCL provides efficient implementations of all-reduce, all-gather, reduce-scatter, broadcast, and reduce" and selects algorithms based on hardware topology and message size automatically.

When TP is confined to a single NVLink node, NVSwitch bandwidth (up to 900 GB/s bidirectional on H100 SXM) absorbs these collectives cheaply. Crossing InfiniBand with TP is where latency compounds: each layer now waits on a cross-node fabric round trip per collective, and at large $t$ the per-layer communication can exceed the compute time for moderate hidden dimensions.
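A rough bandwidth-only estimate shows why the fabric matters. The sketch below uses the standard ring all-reduce volume, in which each rank sends and receives $2(t-1)/t$ of the message, and ignores latency, overlap, and NCCL's real algorithm choices. The bandwidth constants and the message size are assumptions for illustration, not measurements.

```python
def ring_all_reduce_seconds(message_bytes: float, ranks: int,
                            bandwidth_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of a ring all-reduce over `ranks` devices."""
    if ranks < 2:
        return 0.0
    return 2.0 * (ranks - 1) / ranks * message_bytes / bandwidth_bytes_per_s


# Assumed per-direction NVLink bandwidth: half of the 900 GB/s bidirectional figure.
NVLINK_BW = 450e9
# Assumed bandwidth of one 400 Gb/s InfiniBand port (400e9 bits/s = 50e9 bytes/s).
IB_BW = 50e9

msg = 64 * 2**20  # hypothetical 64 MiB activation buffer
intra = ring_all_reduce_seconds(msg, ranks=8, bandwidth_bytes_per_s=NVLINK_BW)
inter = ring_all_reduce_seconds(msg, ranks=16, bandwidth_bytes_per_s=IB_BW)
slowdown = inter / intra
```

Under these assumptions the cross-node collective is close to an order of magnitude slower per call, and that cost is paid in every transformer layer.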

Watch Out: Increasing TP degree beyond your NVLink domain boundary does not just add communication; it moves every TP collective from the intra-node NVLink/NVSwitch fabric onto InfiniBand, which has higher latency and far lower per-GPU bandwidth. At TP=16 across two nodes, each transformer layer's forward pass issues collectives that cross InfiniBand four times (with SP). Inspect NCCL's topology and algorithm choices with NCCL_DEBUG=INFO and compare measured step times before assuming TP=16 outperforms TP=8 with more DP replicas.

Pipeline parallelism and stage scheduling

Pipeline parallelism partitions the transformer's layers into stages so that no single GPU holds the full model depth, and it splits each batch into microbatches to reduce pipeline bubbles. Stage 0 executes layers 0 through $L/P - 1$ and sends its output activation tensor to stage 1, which executes the next block of layers, and so on. The activation transfer between stages is a point-to-point send/receive over the cluster fabric, which is significantly cheaper than a collective but introduces a sequential dependency that creates pipeline bubbles.
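The uniform layer split described above can be written down directly. This is a minimal sketch that assumes the layer count divides evenly by the number of stages; the function name is hypothetical and it does not model embeddings, the output layer, or non-uniform splits.

```python
def stage_layers(num_layers: int, pp: int, stage: int) -> range:
    """Layer indices owned by a pipeline stage under a uniform split."""
    if num_layers % pp != 0:
        raise ValueError("this sketch assumes num_layers is divisible by pp")
    if not 0 <= stage < pp:
        raise ValueError("stage out of range")
    per_stage = num_layers // pp
    return range(stage * per_stage, (stage + 1) * per_stage)


# A 24-layer model over two stages, as in the earlier diagram.
first = stage_layers(24, pp=2, stage=0)
second = stage_layers(24, pp=2, stage=1)
```

For 24 layers and two stages this gives layers 0 to 11 on stage 0 and layers 12 to 23 on stage 1.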

Bottom Line: Pipeline parallelism is the correct axis when the model does not fit on one TP group even at the maximum feasible TP degree. The Megatron Bridge parallelism guide is direct: "Consider pipeline parallelism for very large models that don't fit on a single GPU." The bubble overhead is real but manageable if you schedule enough microbatches relative to pipeline depth.

Microbatching, bubbles, and schedule choices

A pipeline bubble is idle time on a stage GPU while it waits for its upstream stage to produce activations. For a simple 1F1B (one-forward-one-backward) schedule with $P$ stages, the bubble fraction is approximately $(P-1)/(m + P - 1)$ where $m$ is the number of microbatches per global batch. With $P = 8$ stages and $m = 8$ microbatches, the bubble fraction is $\approx 47\%$. Increasing to $m = 64$ drops it to $\approx 10\%$.

Megatron-LM supports interleaved pipeline schedules (virtual stages) that split each physical stage into $v$ virtual stages, reducing the bubble fraction to approximately $(P-1)/(mv + P - 1)$. Interleaving trades the smaller bubble for more stage-to-stage communication, since each microbatch crosses stage boundaries $v$ times as often, and for higher activation memory because more microbatches must be live simultaneously.
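Both bubble formulas are easy to evaluate for a candidate configuration. The helper below is a small sketch of the approximations given above, with a hypothetical function name; it says nothing about communication or memory cost.

```python
def bubble_fraction(stages: int, microbatches: int, virtual_stages: int = 1) -> float:
    """Approximate pipeline bubble fraction (P-1) / (m*v + P-1)."""
    idle = stages - 1
    return idle / (microbatches * virtual_stages + idle)


# Sweep the microbatch count for P=8, then add interleaving with v=2.
for m in (4, 8, 64):
    print(f"P=8, m={m}: bubble = {bubble_fraction(8, m):.1%}")
print(f"P=8, m=8, v=2: bubble = {bubble_fraction(8, 8, 2):.1%}")
```

Pipeline utilization is one minus this fraction, so the sweep shows directly how much of each step a given microbatch count leaves idle.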

Watch Out: Choosing $m$ too small in order to fit the memory budget, while assuming the bubble is acceptable, is a common misconfiguration. At PP=8 with $m=4$, the bubble fraction is $7/11 \approx 64\%$, so you are running at roughly 36% pipeline utilization before accounting for any other overhead. The right trade-off is a function of your global batch size requirement: if your batch size forces $m < P$, reconsider the PP depth.

When pipeline depth helps and when it hurts

Pipeline depth helps precisely when the model exceeds single-node memory even after applying maximum TP and optimizer sharding. A 530B parameter model at BF16 occupies roughly 1 TB of weight memory alone; an 8-GPU H100 node with 80 GB per GPU has 640 GB of HBM in total, so no TP setting within one node can hold it and PP is unavoidable.

Pipeline depth hurts when:

- Stages are imbalanced (embedding layers are lightweight; later layers with heavy attention at long sequences are heavier), creating a bottleneck stage that stalls all others.

- The number of microbatches is constrained by the global batch size, driving bubble overhead above 30%.

- The activation tensors crossing stage boundaries are large (long sequences, large hidden dimensions), saturating the InfiniBand link that also carries DP gradient traffic.

Production Note: Virtual pipeline stages (interleaving) are Megatron-LM's primary tool for attacking bubble overhead without increasing global batch size. Setting --num-layers-per-virtual-pipeline-stage reduces the effective bubble but increases peak activation memory per pipeline rank. At scale on NVIDIA H100 clusters, the combination PP=8 with $v=2$ virtual stages and $m=16$ microbatches is a common starting configuration, but the right answer depends on model depth, sequence length, and network topology.

Sequence parallelism for activation memory reduction

Sequence parallelism addresses an activation-memory problem that tensor parallelism alone does not solve. TP shards weight matrices but, in the regions between sub-layers (after layer norm, before the next column-wise linear), the full-sequence activation tensors still exist unsharded on each TP rank. This is the memory pressure that SP is designed to reduce.

The Megatron Bridge guide states: "Sequence Parallelism distributes the sequence dimension across multiple GPUs, reducing activation memory." The arXiv paper 2405.07719 formally defines this as dividing the sequence dimension of input tensors across multiple computational devices.

How SP complements tensor parallelism

Sequence parallelism is not a standalone strategy — it is an augmentation of the TP process group. The same TP communicator handles both the weight-parallel collectives (reduce-scatter/all-gather for the linear layers) and the sequence-dimension transitions between sub-layers. There is no new process group, no new communication topology.

When SP is active, the activation between sub-layers is sequence-sharded: each TP rank holds contiguous sequence positions. Before a column-wise linear (which needs the full sequence to compute its partial output features), Megatron Core issues an all-gather on the TP group to reconstitute the full sequence. After the row-wise linear, it issues a reduce-scatter to both sum partial outputs and immediately re-shard along the sequence axis, leaving the next sub-layer with sequence-sharded activations again.

As the Megatron Bridge guide recommends: "Enable sequence parallelism when using tensor parallelism to reduce activation memory."

Pro Tip: SP's memory benefit scales linearly with TP degree: at TP=8, the activations that TP alone leaves replicated (the layer-norm and dropout regions) are 8× smaller per rank. The throughput cost is near-zero because reduce-scatter and all-gather together move the same total data as a standalone all-reduce. Enabling SP whenever TP ≥ 4 is almost always the right call unless you have a specific reason to keep activations unsharded.

Sequence parallelism versus context parallelism

SP and CP are frequently confused because both operate on the sequence dimension, but they solve fundamentally different problems at different sequence-length regimes.

| Dimension | Sequence Parallelism (SP) | Context Parallelism (CP) |
|---|---|---|
| What it shards | Activations between sub-layers | Attention computation across sequence |
| Primary benefit | Activation memory reduction | Enables longer sequences per GPU |
| Works with | TP process group (no new communicator) | Separate CP ring communicator |
| Collective pattern | all-gather + reduce-scatter per linear | Ring attention send/recv |
| Recommended when | Always with TP ≥ 4 | Sequence length > NVLink-node capacity |
| NVIDIA guidance | Activation memory reduction | Long sequence training |

The Megatron Core developer docs and the Megatron Bridge guide are explicit about this split: "Use context parallelism for long sequence training." CP partitions the key/value tensors and the attention computation across CP ranks using a ring pattern, which is architecturally distinct from SP's activation-sharding role. The arXiv paper 2405.07719 also notes that different SP approaches carry different communication costs and robustness characteristics, which is consistent with Megatron Core keeping CP as a separate axis rather than extending SP to cover long-context attention.

Optimizer sharding, recomputation, and memory economics

A 70B parameter model in BF16 occupies ~140 GB of weight memory. With mixed-precision Adam, optimizer states (FP32 master weights, first moment, second moment) add another ~840 GB. Activations for a 4,096-token sequence at 80 layers without recomputation add tens of GB more. No combination of TP and PP alone eliminates this; optimizer sharding and activation recomputation are required as complementary tools.
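The figures above follow from simple per-parameter byte counts. The sketch below reproduces them under the stated assumptions: 2 bytes per BF16 weight and 12 bytes per parameter for the three FP32 optimizer tensors. The function name is hypothetical, and gradients and activations are deliberately left out.

```python
def training_state_gb(params: float) -> dict:
    """Weight and Adam optimizer-state totals in GB (1 GB = 1e9 bytes).

    Assumes BF16 weights (2 bytes) plus FP32 master weights, first moment,
    and second moment (4 bytes each, 12 bytes total per parameter).
    """
    return {
        "weights_gb": params * 2 / 1e9,
        "optimizer_gb": params * 12 / 1e9,
    }


totals = training_state_gb(70e9)
```

For 70B parameters this gives 140 GB of weights and 840 GB of optimizer state, which is why the optimizer state, not the weights, sets the memory floor.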

| Strategy | Memory saved | Compute cost | Communication added |
|---|---|---|---|
| TP=8 (weights only) | Weights / 8 per rank | None (local) | 2 collectives per layer |
| SP enabled | Non-TP activations / 8 per rank | None | Absorbed into TP collectives |
| PP=4 | Weights / 4 (across stages) | Bubble overhead | P2P activation transfers |
| Distributed optimizer (DP sharding) | Optimizer states / DP degree | Gather at update | Scatter/gather at step |
| Full activation recomputation | All activations (keep only inputs) | ~33% extra FLOPs | None |
| Selective recomputation | Expensive activations only | ~5–10% extra FLOPs | None |

Megatron Core's distributed data parallel implementation (megatron/core/distributed/distributed_data_parallel.py) works with the distributed optimizer to shard optimizer states across the DP group, mirroring ZeRO-1/ZeRO-2 semantics. This is separate from and composable with TP and PP.

Activation recomputation versus throughput

Activation recomputation (checkpoint recomputation) discards intermediate activations during the forward pass and recomputes them from layer inputs during the backward pass. As confirmed by Megatron-LM benchmarking references: "To reduce GPU memory usage when training a large model, we support various forms of activation checkpointing and recomputation."

Full recomputation adds roughly one additional forward pass of compute per training step, approximately 33% overhead in a compute-balanced regime. Selective recomputation targets only the activations with the highest memory-to-recompute-cost ratio (typically attention softmax intermediates and dropout masks), reducing overhead to 5–10% at the cost of keeping more activations resident than full recomputation does.
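The 33% figure follows from a common rule of thumb, assumed here rather than taken from the source, that the backward pass costs about twice the forward pass. Writing $F$ for the cost of one forward pass:

$$
C_{\text{step}} = C_{\text{fwd}} + C_{\text{bwd}} \approx F + 2F = 3F, \qquad
C_{\text{recompute}} \approx 3F + F = 4F, \qquad
\frac{C_{\text{recompute}} - C_{\text{step}}}{C_{\text{step}}} \approx \frac{F}{3F} \approx 33\%.
$$

Selective recomputation replays only a fraction of the forward pass, which is why its overhead is a fraction of this bound.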

Watch Out: Full recomputation at TP=8 + PP=8 becomes counterproductive when the job is already compute-bound, that is, when MFU (model FLOPs utilization) is already high. You are paying ~33% compute overhead to recover memory that could instead be freed by enabling SP or reducing microbatch size. Profile MFU with and without recomputation before committing to it as a default.

Where distributed optimizer sharding fits in

Megatron Core's distributed optimizer shards FP32 optimizer states across the DP group, so each DP rank holds optimizer states only for the parameters it owns within the DP partition. This is the primary tool for attacking the optimizer-state memory floor that neither TP nor PP addresses.

The interaction with TP and PP is indirect but important: TP shards weight tensors, so each TP rank holds a different weight slice; the DP group then shards optimizer states over copies of those slices. Increasing TP degree reduces weight memory per rank AND reduces optimizer-state memory per rank in the distributed optimizer, making TP × distributed optimizer the highest-leverage combination for parameter memory.
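The compounding effect can be sketched with a simplified per-rank model. It assumes weights are split evenly over TP × PP and that optimizer state is additionally split over DP, and it ignores gradients, activations, and communication buffers. The function name and the example degrees are illustrative only.

```python
def per_rank_state_gb(params: float, tp: int, pp: int, dp: int) -> dict:
    """Per-rank parameter-state memory in GB under a simplified model.

    Assumptions: weights (2 bytes/param) are split evenly over TP x PP;
    optimizer state (12 bytes/param) is additionally split over DP.
    """
    shard = params / (tp * pp)
    return {
        "weights_gb": shard * 2 / 1e9,
        "optimizer_gb": shard * 12 / dp / 1e9,
    }


small_tp = per_rank_state_gb(70e9, tp=4, pp=4, dp=8)
large_tp = per_rank_state_gb(70e9, tp=8, pp=4, dp=8)
```

Doubling TP halves both entries, while changing DP alone would only shrink the optimizer entry.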

Production Note: Distributed optimizer state sharding interacts with gradient bucket sizing and communication overlap scheduling. Setting bucket sizes too large delays optimizer updates; too small increases collective overhead. The default Megatron Core configuration balances these, but multi-node deployments with heterogeneous network fabric (e.g., mixed NVLink and HDR InfiniBand) may require explicit bucket tuning to maintain compute/communication overlap during optimizer steps.

Practical engineering constraints from the official repo

The official NVIDIA/Megatron-LM repository identifies two installation paths and one migration tool that practitioners need to know before touching a cluster.

Bottom Line: PyPI installation is faster for evaluation; source builds are required for custom CUDA extensions and Transformer Engine integration. Megatron Bridge is the official checkpoint conversion path when moving models between Hugging Face and Megatron format — mismatched parallelism layouts during conversion silently produce incorrect weights.

PyPI install versus source build

# PyPI install: fastest path, no custom kernel compilation
$ pip install megatron-core

# Source build: required for Transformer Engine, custom fused kernels
$ git clone https://github.com/NVIDIA/Megatron-LM.git
$ cd Megatron-LM
# Limit parallel compilation jobs to avoid OOM during build
$ MAX_JOBS=4 pip install -e .

The repo is explicit: "Building from source can be memory-intensive." On a machine with limited CPU RAM (under 64 GB), compilation of CUDA extensions with the default job count can exhaust system memory. Setting MAX_JOBS=4 or lower prevents OOM kills during pip install. The PyPI package (megatron-core) provides the core library without the reference training scripts and does not compile CUDA extensions by default, making it suitable for framework integration work but insufficient for production training runs that require fused kernels.

Checkpoint conversion with Megatron Bridge

Megatron Bridge is NVIDIA's bidirectional conversion tooling for checkpoints between Hugging Face format and Megatron's sharded format.

The following configuration example shows the required parallelism layout for conversion.

# Example Megatron Bridge conversion config (megatron_bridge_config.yaml)
# Direction: Hugging Face → Megatron Core sharded checkpoint
source_format: huggingface
target_format: megatron_core
model_type: llama            # architecture identifier
tensor_parallel_size: 8      # must match target training config
pipeline_parallel_size: 4    # must match target training config
sequence_parallel: true      # must match target SP setting
bf16: true                   # precision must be consistent

The critical constraint is that parallelism parameters in the conversion config must exactly match the target training configuration. A mismatch between tensor_parallel_size in the Bridge config and the value used at training launch does not raise an error during conversion — it produces weight tensors with wrong shard boundaries that cause silent numerical errors during training. The Bridge docs also carry configuration guidance for sequence_parallel and context parallelism settings, reinforcing that conversion is layout-aware, not just weight-format-aware.
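Because a mismatch is silent, a cheap pre-flight comparison of the two configurations is worth having. The sketch below is a hypothetical helper, not part of Megatron Bridge: it assumes both configs have been loaded into plain dictionaries and reuses the key names from the example config above.

```python
# Keys that define the shard layout in the example config above.
LAYOUT_KEYS = (
    "tensor_parallel_size",
    "pipeline_parallel_size",
    "sequence_parallel",
    "bf16",
)


def layout_mismatches(conversion_cfg: dict, training_cfg: dict) -> dict:
    """Return {key: (conversion_value, training_value)} for keys that differ."""
    return {
        key: (conversion_cfg.get(key), training_cfg.get(key))
        for key in LAYOUT_KEYS
        if conversion_cfg.get(key) != training_cfg.get(key)
    }


conversion = {"tensor_parallel_size": 8, "pipeline_parallel_size": 4,
              "sequence_parallel": True, "bf16": True}
training = dict(conversion, tensor_parallel_size=4)  # deliberately wrong TP

problems = layout_mismatches(conversion, training)
if problems:
    print("Refusing to launch, layout differs:", problems)
```

Running a check like this in the launch script turns a silent numerical error into an explicit failure before any GPU time is spent.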

Where Megatron-LM fits against FSDP and DeepSpeed

| Dimension | Megatron-LM / Megatron Core | PyTorch FSDP2 | DeepSpeed ZeRO |
|---|---|---|---|
| Parallelism model | TP + PP + DP + EP + CP composable | Pure sharding (ZeRO-3 style) | ZeRO-1/2/3 + optional TP/PP via Megatron-DeepSpeed |
| Memory strategy | Shard weights by axis; optimizer sharding per DP | Shard all: weights, grads, optimizer | Shard weights/grads/optimizer; optional offload |
| Communication pattern | Multi-collective (TP), P2P (PP), all-reduce (DP) | All-gather + reduce-scatter per param | All-gather + reduce-scatter (ZeRO-3), all-reduce (ZeRO-1/2) |
| Framework coupling | Megatron Core integrates; Megatron-LM is opinionated | PyTorch-native, low integration cost | Wraps existing models; engine API |
| Best regime | Large pre-training, MoE, long context | Fine-tuning, moderate scale | Fine-tuning to mid-scale pre-training |

The arXiv study 2311.03687 directly compares DeepSpeed and Megatron-LM training, evaluating ZeRO-2, ZeRO-3, offloading, activation recomputation, and FlashAttention across training configurations. The Megatron-DeepSpeed fork (github.com/deepspeedai/Megatron-DeepSpeed) combines both stacks: "DeepSpeed version of NVIDIA's Megatron-LM that adds additional support for several features such as MoE model training, Curriculum Learning, 3D Parallelism, and others." This is a distinct codebase from NVIDIA's Megatron-LM.

Best fit by workload, cluster, and team maturity

- Choose Megatron Core when you are running pre-training at scale (>100B parameters), need explicit TP/PP/CP composition, run on an NVIDIA NVLink cluster, and your team can invest in the integration layer. The Megatron Core documentation confirms its design target is custom training frameworks requiring GPU-optimized building blocks.

- Choose FSDP2 when you are fine-tuning an existing Hugging Face model at up to ~70B parameters, your team is PyTorch-native, and you want the lowest integration cost. FSDP2's all-gather/reduce-scatter pattern is simpler to reason about and debug than Megatron's multi-axis collective graph.

- Choose DeepSpeed ZeRO when memory is the binding constraint and you need ZeRO-3 CPU offload to train a model that does not fit on GPU memory even with sharding. DeepSpeed's offload path has no Megatron Core equivalent.

- Choose Megatron-DeepSpeed when you need MoE with curriculum learning or want 3D parallelism without building the Megatron Core integration from scratch.

- Choose Megatron-LM (the reference scripts) when you want NVIDIA's exact pre-training configuration for GPT-style models and are willing to stay inside the script's assumptions.

Failure modes and scaling gotchas

Watch Out: Mathematical correctness and operational correctness are independent properties of a Megatron configuration. A configuration with correct TP/PP/DP sizes, valid layer partitioning, and matching SP flags can still run at 30% of theoretical MFU if NCCL collectives are not overlapped with compute, InfiniBand is contended, or microbatch count is too low for the pipeline depth. Preserving model math does not guarantee throughput.

NCCL collective imbalance is the most common failure mode at scale. At TP=8 + PP=8 across 512 GPUs, NCCL manages multiple distinct communicators: the TP all-reduce/reduce-scatter group, the PP point-to-point group, and the DP gradient-sync group. If any of these contend on the same InfiniBand rail without proper affinity pinning, step time inflates non-deterministically.

Diagnosing communication hot spots

Pro Tip: Set NCCL_DEBUG=INFO and NCCL_DEBUG_SUBSYS=COLL to log every collective operation with its size and algorithm selection. Send the output to per-process log files (for example NCCL_DEBUG_FILE=/tmp/nccl.%h.%p.log, where NCCL expands %h to the hostname and %p to the process ID) and grep for RING versus TREE algorithm selection. NCCL choosing TREE for large TP collectives over InfiniBand can indicate that it has detected a sub-optimal topology and is adapting, which is a signal to check NCCL_IB_HCA binding and NCCL_SOCKET_IFNAME for correct interface selection.
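One way to apply these settings consistently is to build the environment in the launcher rather than exporting variables by hand. The sketch below is a hypothetical helper; it uses the %h (hostname) and %p (process ID) placeholders that NCCL documents for NCCL_DEBUG_FILE so that each process writes its own log.

```python
import os


def nccl_debug_env(log_dir: str) -> dict:
    """Environment variables that turn on NCCL collective logging."""
    return {
        "NCCL_DEBUG": "INFO",
        "NCCL_DEBUG_SUBSYS": "COLL",
        # NCCL expands %h to the hostname and %p to the process ID.
        "NCCL_DEBUG_FILE": os.path.join(log_dir, "nccl.%h.%p.log"),
    }


# Merge into the current environment; pass `env` to the process launcher.
env = dict(os.environ, **nccl_debug_env("/tmp"))
```

The merged `env` can be handed to `subprocess.run(..., env=env)` or to whatever launcher starts the training processes. Keep this logging to short diagnostic runs, since per-collective output grows quickly.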

On multi-node deployments over InfiniBand, verify that each GPU maps to the InfiniBand HCA on the same PCIe root complex. Mismatched GPU-to-HCA affinity can halve effective bandwidth for TP collectives that must cross the InfiniBand fabric. Use nvidia-smi topo -m to confirm GPU–NIC topology before launching any multi-node Megatron job.

NCCL's algorithm selection is aware of the hardware topology but not of runtime fabric conditions: it cannot know that your InfiniBand fabric has a particular congestion bottleneck. At very large scales (1,000+ GPUs), collective hot spots typically appear only in production runs, not in single-node or small-scale tests. Monitoring step time variance across training steps is a more reliable indicator than any single profiling run.

When model math is unchanged but performance collapses

Three configuration mistakes consistently produce mathematically valid but operationally poor runs:

Stage imbalance in PP: Assigning the embedding layer and first two transformer layers to stage 0 while assigning 20 heavy layers to stage 1 creates a bottleneck stage. The pipeline stalls on stage 1's compute, and all other stages idle. Megatron-LM's layer assignment is uniform by default; non-uniform layer counts require explicit configuration.

TP width exceeding NVLink domain: At TP=16 on a cluster where each node has 8 GPUs, TP collectives cross InfiniBand. Collective latency for a 512 MB all-gather at InfiniBand speeds is measurable in milliseconds — long enough to stall the compute stream on a layer with moderate FLOPs. The model math remains correct; throughput collapses.

Over-recomputation at high MFU: Enabling full activation recomputation when the cluster is compute-bound rather than memory-bound adds ~33% extra compute per step with no useful memory headroom gain (since memory was not the constraint). Selective recomputation targeting only attention softmax intermediates is the correct default for a GPU running above 50% MFU.
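Of the three mistakes, stage imbalance is the easiest to quantify before launch. The sketch below is a rough model with a hypothetical function name: it treats per-stage cost as proportional to layer count and ignores embeddings, the output layer, and bubbles.

```python
def pipeline_balance(stage_costs: list) -> float:
    """Ratio of mean to max per-stage cost; 1.0 means perfectly balanced.

    In steady state every stage advances at the pace of the slowest one,
    so this ratio approximates the fraction of time stages spend busy.
    """
    return sum(stage_costs) / (len(stage_costs) * max(stage_costs))


balanced = pipeline_balance([12, 12])  # 12 layers on each of two stages
skewed = pipeline_balance([2, 20])     # 2 layers on stage 0, 20 on stage 1
```

A value well below 1.0 means the pipeline is paced by one overloaded stage. Replacing layer counts with measured per-stage step times gives a more honest picture.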

Watch Out: Always profile MFU (model FLOP utilization) before and after enabling recomputation, SP, or changing TP/PP configuration. A configuration change that improves memory headroom but drops MFU from 45% to 30% is a net loss for training cost per token.

FAQ on Megatron-LM parallelism

What is tensor parallelism in Megatron-LM?

Tensor parallelism shards individual weight matrices (QKV projections, MLP weights) across a group of GPUs within a single layer. Each GPU computes a partial result; a collective (all-reduce or reduce-scatter) assembles the full result. The transformer's mathematical output is identical to single-GPU computation.

How does pipeline parallelism work in Megatron-LM?

Pipeline parallelism assigns contiguous groups of transformer layers to distinct GPU stages. Microbatches stream through stages sequentially. Stage-to-stage activation tensors transfer via NCCL point-to-point sends. Bubble overhead is managed by increasing microbatch count or using interleaved (virtual) pipeline schedules.

What is sequence parallelism in Megatron-LM?

Sequence parallelism shards activation tensors along the sequence dimension between transformer sub-layers, using the same process group as tensor parallelism. It is enabled alongside TP to reduce activation memory. It does not partition the attention computation — that is context parallelism's role.

How does Megatron-LM differ from DeepSpeed?

Megatron-LM (and Megatron Core) use model parallelism — TP splits weight tensors, PP splits layers. DeepSpeed ZeRO uses pure weight/gradient/optimizer-state sharding across all data-parallel ranks without changing the forward-pass computation graph. Megatron Core is composable building blocks; DeepSpeed wraps existing models. They can be combined in the Megatron-DeepSpeed fork.

Can Megatron-LM use tensor and pipeline parallelism together?

Yes. Megatron Core treats TP and PP as orthogonal process-group dimensions. A job with TP, PP, and DP values chosen for the cluster uses separate NCCL communicators for each axis. SP can be layered on top by reusing the TP group, while CP adds its own communicator.

Bottom Line: Sequence parallelism does not replace tensor parallelism — it is an activation-memory augmentation that runs within the TP process group. Context parallelism is the separate axis for long-sequence attention scaling. Megatron Core's composability lets you combine all five axes (TP, PP, DP, EP, CP) simultaneously, but correct throughput requires balancing all five against your hardware fabric and batch-size constraints.

Sources and references

Production Note: The NVIDIA/Megatron-LM GitHub repository and NVIDIA's developer documentation are the authoritative sources for Megatron Core's current API and parallelism semantics. ArXiv papers cover the algorithmic foundations and comparative evaluations. Megatron Bridge documentation is the canonical reference for checkpoint conversion. All URLs below were current as of May 2026.

- NVIDIA/Megatron-LM GitHub Repository — Official source for Megatron-LM reference scripts and Megatron Core library; contains README, architecture notes, and installation instructions

- Megatron Core Parallelism Guide — NVIDIA developer documentation covering TP, PP, DP, EP, and CP axes in Megatron Core

- Megatron Bridge Parallelisms Documentation — NVIDIA NeMo/Bridge guide covering SP, CP, and configuration recommendations for checkpoint conversion

- Megatron Core Tensor Parallel Layers (layers.py) — Source implementation of column-wise and row-wise tensor-parallel linear layers with reduce-scatter collectives

- Megatron Core Distributed Data Parallel — Source implementation of distributed optimizer state sharding

- arXiv 2405.07719 — Sequence Parallelism Survey — Formal definition and comparative analysis of sequence parallelism variants and their communication costs

- arXiv 2311.03687 — DeepSpeed vs. Megatron-LM Runtime Comparison — Empirical comparison evaluating ZeRO-2/3, offloading, activation recomputation, and FlashAttention across frameworks

- Hugging Face Accelerate — Megatron-LM Integration Guide — Integration documentation covering SP activation-sharding mechanism and TP collective decomposition

- Megatron-DeepSpeed Repository — DeepSpeed fork of Megatron-LM with MoE, curriculum learning, and 3D parallelism additions

- DeepSpeed Megatron Tutorial — DeepSpeed documentation on combined Megatron-DeepSpeed multi-node training

- NCCL Communication Optimization Overview — Coverage of NCCL collective algorithms, topology selection, and overlap strategies

Keywords: Megatron-LM, Megatron Core, Megatron Bridge, tensor parallelism, pipeline parallelism, sequence parallelism, context parallelism, expert parallelism, FSDP, DeepSpeed, NCCL, InfiniBand, NVIDIA H100, Transformer Engine, BF16