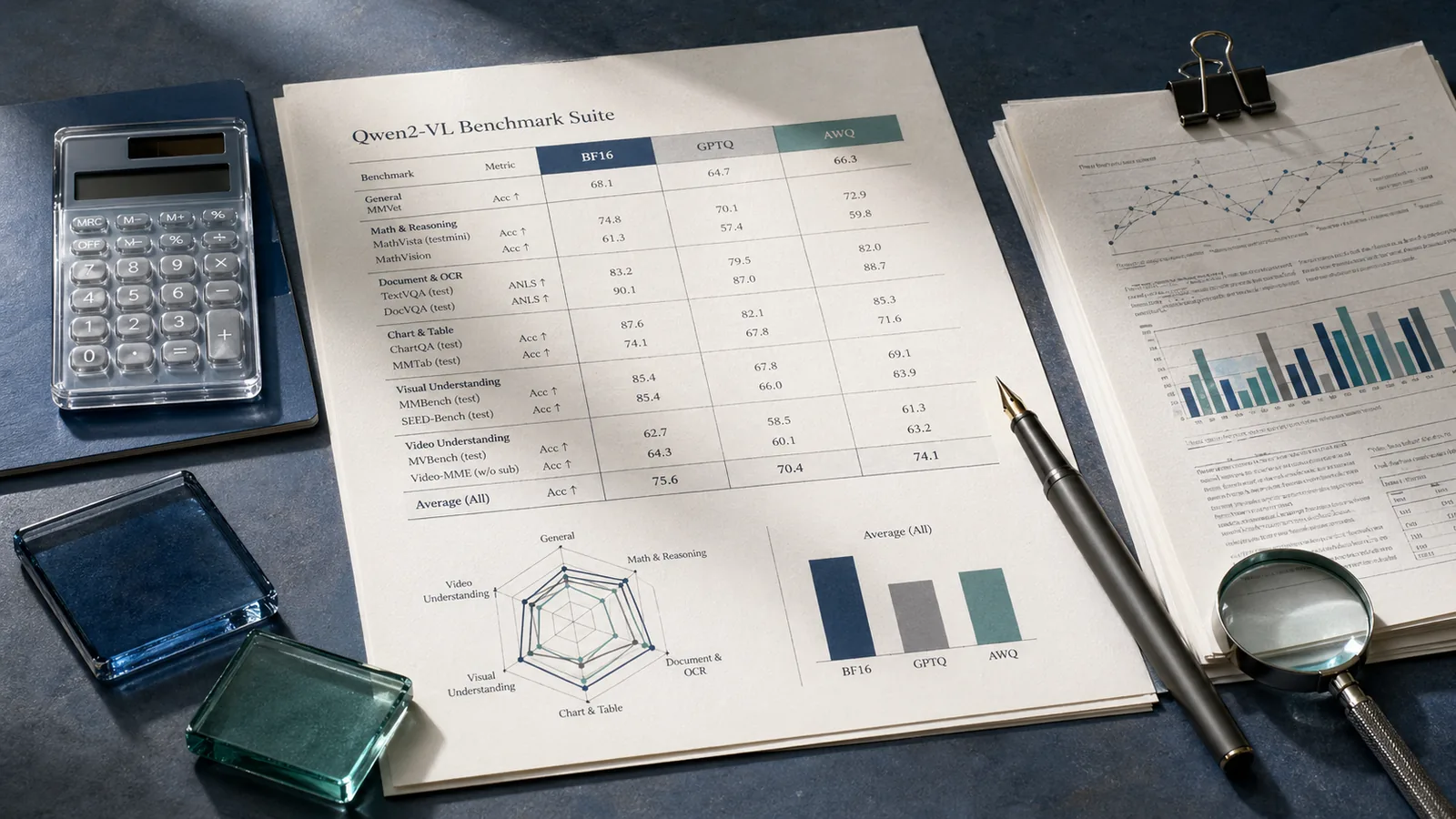

Why these Qwen2-VL quantization results matter

Bottom Line: Quantization does affect multimodal accuracy on Qwen2-VL-2B-Instruct, but the magnitude of that effect is strongly task-dependent. GPTQ-Int4 drops 2.66 points on MMMU and 2.71 points on MathVista versus BF16, while DocVQA loses only 1.13 points. AWQ presents an inverted pattern: it stays within 0.55 points of BF16 on MMMU but loses 4.50 points on MathVista. The practical implication is that choosing a quantized checkpoint based on bit-width label alone — without knowing the task sensitivity of your workload — is the wrong approach. The published model card for Qwen2-VL-2B-Instruct-GPTQ-Int4 reports scores for all four precision settings across four benchmarks, and the cross-task delta pattern is the signal that most existing pages omit.

The model card states: "This section reports the generation performance of quantized models (including GPTQ and AWQ) of the Qwen2-VL series." What it does not do is synthesize which benchmark categories are most exposed to precision loss. That synthesis is this article's purpose.

At BF16, Qwen2-VL-2B-Instruct scores 41.88 on MMMU, 88.34 on DocVQA, 72.07 on MMBench, and 44.40 on MathVista. GPTQ-Int4 brings those numbers to 39.22, 87.21, 70.87, and 41.69. AWQ produces 41.33, 86.96, 71.64, and 39.90. GPTQ-Int8 remains closest to BF16 at 41.55, 88.28, 71.99, and 44.60. The headline finding is not that quantization hurts — it is that the pain is concentrated in reasoning-heavy benchmarks, not in document-reading tasks.

How the benchmark results were measured

| Evaluation subset | Abbreviation | Task domain | Evaluation stack |
|---|---|---|---|
| MMMU_VAL | MMMU | Multidisciplinary multimodal reasoning | VLMEvalkit |
| DocVQA_VAL | DocVQA | Document visual question answering | VLMEvalkit |
| MMBench_DEV_EN | MMBench | General vision-language perception | VLMEvalkit |
| MathVista_MINI | MathVista | Mathematical visual reasoning | VLMEvalkit |

The Qwen2-VL quantization benchmarks were produced using VLMEvalkit across four named evaluation subsets: MMMU_VAL, DocVQA_VAL, MMBench_DEV_EN, and MathVista_MINI. These subsets are widely used for vision-language model comparisons and cover multidisciplinary multimodal reasoning (MMMU), document visual question answering (DocVQA), multi-ability vision-language evaluation (MMBench), and mathematical visual reasoning (MathVista). The model card presents all four precision variants (the BF16 baseline plus the quantized GPTQ-Int8, GPTQ-Int4, and AWQ checkpoints) within a single comparison table, which suggests the numbers come from one consistent evaluation setup rather than from separate evaluation runs.

The card also documents a separate speed benchmarking section covering throughput and generation performance by variant. This article focuses exclusively on the accuracy benchmarks.

| Benchmark | Subset Used | Task Domain |
|---|---|---|
| MMMU | MMMU_VAL | Multidisciplinary multimodal reasoning |
| DocVQA | DocVQA_VAL | Document visual question answering |
| MMBench | MMBench_DEV_EN | General vision-language perception |
| MathVista | MathVista_MINI | Mathematical visual reasoning |

Model variants and precision settings in scope

| Variant | Bits | HuggingFace Repo |
|---|---|---|
| BF16 | 16 | Qwen/Qwen2-VL-2B-Instruct |
| GPTQ-Int8 | 8 | Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8 |
| GPTQ-Int4 | 4 | Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int4 |
| AWQ | 4 | Qwen/Qwen2-VL-2B-Instruct-AWQ |

The comparison covers exactly four precision configurations of Qwen2-VL-2B-Instruct: the unquantized BF16 baseline, GPTQ-Int8, GPTQ-Int4, and AWQ. As the model card states: "This repo contains the quantized version of the instruction-tuned 2B Qwen2-VL model." The GPTQ-Int4 and AWQ variants are both 4-bit, but they use different quantization algorithms and, critically, produce different benchmark outcomes on the same tasks.

These numbers apply only to the 2B instruction-tuned family. The model card does not publish equivalent quantization delta tables for larger Qwen2-VL sizes, and extrapolating these sensitivity patterns to 7B or 72B variants without separate evidence is unsupported.

Evaluation stack and reproduction context

Speed and accuracy benchmarking was performed on an NVIDIA A100 80GB using CUDA 11.8, PyTorch 2.2.1+cu118, Flash Attention 2.6.1, Transformers 4.38.2, AutoGPTQ 0.6.0+cu118, and AutoAWQ 0.2.5+cu118. The visual-token range used was the model default: 4–16384 tokens per image.

Pro Tip: The model card notes: "The code of Qwen2-VL has been in the latest Hugging Face transformers and we advise you to build from source with command pip install git+https://github.com/huggingface/transformers." If you attempt to reproduce these benchmarks using a pip-installed release of Transformers that predates Qwen2-VL support, you will encounter errors before the first forward pass. Build from source before running VLMEvalkit on any Qwen2-VL variant.

The card also recommends enabling Flash Attention 2: "We recommend enabling flash_attention_2 for better acceleration and memory saving, especially in multi-image and video scenarios." Benchmarks run without Flash Attention 2 on a different GPU or memory configuration may yield different accuracy and throughput numbers than those published.
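The loading advice above can be sketched as follows. This is a minimal illustration, not the card's exact snippet: the helper names `build_load_kwargs` and `load_variant` are hypothetical, and the sketch assumes a Transformers build that includes `Qwen2VLForConditionalGeneration`, plus the flash-attn package when `attn_implementation="flash_attention_2"` is requested.

```python
from typing import Any, Dict


def build_load_kwargs(use_flash_attention: bool = True) -> Dict[str, Any]:
    """Keyword arguments for from_pretrained (hypothetical helper)."""
    kwargs: Dict[str, Any] = {"torch_dtype": "auto", "device_map": "auto"}
    if use_flash_attention:
        # Needs the flash-attn package and a GPU that supports it.
        kwargs["attn_implementation"] = "flash_attention_2"
    return kwargs


def load_variant(repo_id: str, use_flash_attention: bool = True):
    """Load one Qwen2-VL checkpoint and its processor.

    Downloads weights on first call. The import is lazy so that
    build_load_kwargs can be used without transformers installed.
    """
    from transformers import AutoProcessor, Qwen2VLForConditionalGeneration

    model = Qwen2VLForConditionalGeneration.from_pretrained(
        repo_id, **build_load_kwargs(use_flash_attention)
    )
    processor = AutoProcessor.from_pretrained(repo_id)
    return model, processor
```

Calling `load_variant("Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int4")` would fetch the 4-bit GPTQ checkpoint. Passing `use_flash_attention=False` falls back to the default attention implementation, which is one of the configuration differences that may shift results away from the published numbers.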

Benchmark score table for BF16, GPTQ-Int8, GPTQ-Int4, and AWQ

The table below reproduces the four-variant accuracy comparison from the Qwen2-VL-2B-Instruct-GPTQ-Int4 model card, with absolute deltas versus BF16 added for each quantized variant.

| Variant | MMMU_VAL | DocVQA_VAL | MMBench_DEV_EN | MathVista_MINI |
|---|---|---|---|---|
| BF16 | 41.88 | 88.34 | 72.07 | 44.40 |
| GPTQ-Int8 | 41.55 (−0.33) | 88.28 (−0.06) | 71.99 (−0.08) | 44.60 (+0.20) |
| GPTQ-Int4 | 39.22 (−2.66) | 87.21 (−1.13) | 70.87 (−1.20) | 41.69 (−2.71) |
| AWQ | 41.33 (−0.55) | 86.96 (−1.38) | 71.64 (−0.43) | 39.90 (−4.50) |

GPTQ-Int8 is effectively lossless at this model size: no delta exceeds 0.33 points, and MathVista actually registers +0.20, likely within evaluation noise. The gap opens materially when moving to 4-bit compression. Both GPTQ-Int4 and AWQ lose more than 1 point on at least two tasks, and the distribution of those losses differs by algorithm.
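The deltas in the table are plain subtraction against the BF16 row. A short sketch that recomputes them from the published scores is below; the dictionary layout and the function name `deltas_vs_baseline` are illustrative choices, not anything the model card provides.

```python
# Published scores from the model card, keyed by variant and benchmark.
SCORES = {
    "BF16":      {"MMMU_VAL": 41.88, "DocVQA_VAL": 88.34, "MMBench_DEV_EN": 72.07, "MathVista_MINI": 44.40},
    "GPTQ-Int8": {"MMMU_VAL": 41.55, "DocVQA_VAL": 88.28, "MMBench_DEV_EN": 71.99, "MathVista_MINI": 44.60},
    "GPTQ-Int4": {"MMMU_VAL": 39.22, "DocVQA_VAL": 87.21, "MMBench_DEV_EN": 70.87, "MathVista_MINI": 41.69},
    "AWQ":       {"MMMU_VAL": 41.33, "DocVQA_VAL": 86.96, "MMBench_DEV_EN": 71.64, "MathVista_MINI": 39.90},
}


def deltas_vs_baseline(scores, baseline="BF16"):
    """Return {variant: {benchmark: score - baseline_score}} rounded to 2 decimals."""
    base = scores[baseline]
    return {
        variant: {bench: round(row[bench] - base[bench], 2) for bench in base}
        for variant, row in scores.items()
        if variant != baseline
    }


DELTAS = deltas_vs_baseline(SCORES)

for variant, row in DELTAS.items():
    worst = min(row, key=row.get)  # benchmark with the most negative delta
    print(f"{variant}: worst case {worst} ({row[worst]:+.2f})")
```

Keeping the scores in one structure makes it easy to add rows from your own evaluation runs and compare them against the card's numbers with the same arithmetic.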

MMMU and MathVista show the sharpest degradation

Reasoning-heavy benchmarks absorb the largest accuracy hits from 4-bit quantization. On MMMU_VAL, GPTQ-Int4 falls from 41.88 to 39.22, a drop of 2.66 points. On MathVista_MINI, it falls from 44.40 to 41.69, a drop of 2.71 points — nearly identical magnitude on two distinct reasoning-oriented tasks.

AWQ tells a different story: it preserves MMMU well at 41.33 (only −0.55 versus BF16), but MathVista collapses to 39.90, a loss of 4.50 points. That single-task degradation of 4.50 is the largest absolute drop in the entire published table, and it occurs under AWQ on the one benchmark most demanding of numerical visual reasoning.

| Task | BF16 | GPTQ-Int4 | Delta (Int4) | AWQ | Delta (AWQ) |
|---|---|---|---|---|---|
| MMMU_VAL | 41.88 | 39.22 | −2.66 | 41.33 | −0.55 |
| MathVista_MINI | 44.40 | 41.69 | −2.71 | 39.90 | −4.50 |

The asymmetry between MMMU and MathVista in the AWQ column is particularly notable: AWQ loses almost nothing on MMMU yet loses the most of any variant on MathVista. This rules out a simple "AWQ is closer to BF16" generalization. Both MMMU and MathVista require multi-step reasoning over visual content, but MathVista's tighter mathematical structure appears more sensitive to the specific weight approximation AWQ applies. The model card does not expose the calibration recipe, so a root-cause explanation beyond the observed delta is not supported by the source data.

DocVQA stays comparatively stable under quantization

Relative to its baseline score, DocVQA is the most quantization-tolerant benchmark in this set. BF16 scores 88.34; GPTQ-Int8 holds at 88.28; GPTQ-Int4 drops to 87.21; AWQ falls to 86.96. Across all four variants, DocVQA stays above 86.9, a spread of only 1.38 points, or about 1.6% of the BF16 score. By contrast, MathVista spans from 44.60 (GPTQ-Int8) down to 39.90 (AWQ), a spread of 4.70 points.

DocVQA tests extractive reading comprehension over document images — a task that can be answered by recognizing and localizing text patterns without extended compositional reasoning. That property likely explains its relative resilience: quantization compresses weight precision, but OCR-like pattern matching depends less on fine-grained weight magnitudes than multi-step numerical inference does.

Watch Out: DocVQA's stability under quantization does not generalize to other document tasks that require layout reasoning, table arithmetic, or comparative analysis across sections. The reported DocVQA_VAL score covers short-answer extraction. If your production workload involves cross-page aggregation or multi-hop document reasoning, treating DocVQA stability as a proxy for overall document task safety is an overreach the model card does not support.

MMBench sits between the extremes

MMBench_DEV_EN lands in the middle of the sensitivity spectrum. GPTQ-Int8 loses 0.08 points versus BF16. GPTQ-Int4 drops 1.20 points. AWQ drops 0.43 points. No variant loses more than 1.20 points on this benchmark, making MMBench less volatile than MathVista but marginally more volatile than DocVQA under GPTQ-Int4.

| Variant | MMBench_DEV_EN | Delta vs BF16 |
|---|---|---|
| BF16 | 72.07 | n/a |
| GPTQ-Int8 | 71.99 | −0.08 |
| AWQ | 71.64 | −0.43 |
| GPTQ-Int4 | 70.87 | −1.20 |

On MMBench, GPTQ-Int4 underperforms AWQ by 0.77 points, which is the opposite ordering from MathVista (where GPTQ-Int4 leads AWQ by 1.79 points). This reversal further confirms that neither 4-bit algorithm dominates uniformly across all task types.

What the GPTQ-Int4 versus AWQ pattern suggests

Neither GPTQ-Int4 nor AWQ is the better choice for Qwen2-VL-2B-Instruct in the general case. The published benchmark table shows each algorithm outperforming the other on two of the four benchmarks, and the head-to-head margin swings from 2.11 points in AWQ's favor on MMMU to 1.79 points in GPTQ-Int4's favor on MathVista, a total swing of 3.90 points. The "which is better" question only has a clean answer when you specify the task.

| Benchmark | GPTQ-Int4 leads? | AWQ leads? | Margin (pts) |
|---|---|---|---|
| MMMU_VAL | No | Yes | AWQ +2.11 |
| DocVQA_VAL | Yes | No | GPTQ +0.25 |
| MMBench_DEV_EN | No | Yes | AWQ +0.77 |
| MathVista_MINI | Yes | No | GPTQ +1.79 |

AWQ wins on MMMU and MMBench. GPTQ-Int4 wins on DocVQA and MathVista. On MathVista — the benchmark most adversely affected overall by AWQ — GPTQ-Int4's advantage over AWQ is 1.79 points, a meaningful gap on a task where even BF16 scores only 44.40.
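The head-to-head margins can be reproduced with a few lines. This sketch uses only the two 4-bit rows from the published table; the function name `head_to_head` is illustrative.

```python
# Published 4-bit scores from the model card.
GPTQ_INT4 = {"MMMU_VAL": 39.22, "DocVQA_VAL": 87.21, "MMBench_DEV_EN": 70.87, "MathVista_MINI": 41.69}
AWQ = {"MMMU_VAL": 41.33, "DocVQA_VAL": 86.96, "MMBench_DEV_EN": 71.64, "MathVista_MINI": 39.90}


def head_to_head(a, b, a_name, b_name):
    """Return {benchmark: (leader, margin_in_points)} for two score rows."""
    result = {}
    for bench in a:
        margin = round(a[bench] - b[bench], 2)
        if margin > 0:
            leader = a_name
        elif margin < 0:
            leader = b_name
        else:
            leader = "tie"
        result[bench] = (leader, abs(margin))
    return result


COMPARISON = head_to_head(GPTQ_INT4, AWQ, "GPTQ-Int4", "AWQ")

for bench, (leader, margin) in COMPARISON.items():
    print(f"{bench}: {leader} leads by {margin:.2f}")
```

The output splits two benchmarks each way, which is the basis for treating the choice between the two 4-bit checkpoints as a per-task decision.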

Where GPTQ-Int4 preserves more accuracy

GPTQ-Int4 preserves more accuracy than AWQ on DocVQA (87.21 vs 86.96, +0.25) and MathVista (41.69 vs 39.90, +1.79). On MMBench the ordering flips, with AWQ ahead at 71.64 vs 70.87. The MathVista advantage is the most practically significant: applications involving visual arithmetic, chart interpretation, or geometric reasoning should prefer GPTQ-Int4 over AWQ when 4-bit compression is required.

Versus BF16, GPTQ-Int4's DocVQA loss of 1.13 points and MMBench loss of 1.20 points are in a range many production pipelines can absorb. The harder question is whether the 2.66-point MMMU and 2.71-point MathVista losses are acceptable — that depends on whether those reasoning tasks appear in your workload.

| Task | GPTQ-Int4 | AWQ | Difference |
|---|---|---|---|
| DocVQA_VAL | 87.21 | 86.96 | +0.25 |
| MathVista_MINI | 41.69 | 39.90 | +1.79 |

Where AWQ trails despite similar bit width

AWQ underperforms BF16 on all four published benchmarks — MMMU −0.55, DocVQA −1.38, MMBench −0.43, MathVista −4.50 — even though it shares the same 4-bit weight precision as GPTQ-Int4. The 4.50-point MathVista loss is substantially larger than GPTQ-Int4's 2.71-point loss on the same task.

AWQ's calibration approach, which uses per-channel activation-aware scaling to minimize quantization error on salient weights, appears to protect MMMU and MMBench more effectively than it protects mathematical reasoning on MathVista. The model card does not expose the calibration dataset or per-layer sensitivity statistics, so the mechanism behind this asymmetry cannot be verified from the source. What is verifiable is the score: AWQ loses 10.1% of MathVista performance relative to BF16, versus GPTQ-Int4's 6.1% loss on the same task.
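The relative-loss percentages quoted above are the absolute drop divided by the BF16 score. A minimal sketch of that arithmetic, with an illustrative function name:

```python
def relative_loss_pct(baseline: float, quantized: float) -> float:
    """Percentage of the baseline score lost after quantization."""
    return (baseline - quantized) / baseline * 100.0


# MathVista_MINI scores from the published table.
BF16_MATHVISTA = 44.40
AWQ_LOSS = relative_loss_pct(BF16_MATHVISTA, 39.90)
GPTQ_INT4_LOSS = relative_loss_pct(BF16_MATHVISTA, 41.69)

print(f"AWQ: {AWQ_LOSS:.1f}%  GPTQ-Int4: {GPTQ_INT4_LOSS:.1f}%")
```

Relative loss is a useful companion to the point deltas because the four benchmarks have very different baselines: a 1.38-point drop on a benchmark scoring 88 is a much smaller fraction than a 4.50-point drop on one scoring 44.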

| Task | BF16 | AWQ | Delta vs BF16 |
|---|---|---|---|
| MMMU_VAL | 41.88 | 41.33 | −0.55 |
| DocVQA_VAL | 88.34 | 86.96 | −1.38 |
| MMBench_DEV_EN | 72.07 | 71.64 | −0.43 |
| MathVista_MINI | 44.40 | 39.90 | −4.50 |

A practitioner decision matrix for choosing a quantized Qwen2-VL variant

| Choice | Choose when | Avoid when |
|---|---|---|
| BF16 | MMMU-like reasoning or MathVista-like visual math is the primary workload and GPU memory is not the binding constraint | Memory savings matter more than fractional accuracy gains |
| GPTQ-Int8 | You want near-BF16 accuracy across all four task categories and a moderate memory reduction is sufficient | You need the smallest possible checkpoint size |
| GPTQ-Int4 | Your workload is dominated by DocVQA-like document extraction or general VQA, and MathVista-level reasoning is not a primary requirement | Your production workload includes hard visual math or broad academic reasoning |
| AWQ | Your primary task is MMMU-style multimodal reasoning and memory is the binding constraint | Your workload involves mathematical or quantitative visual reasoning |

The matrix above maps precision choice to task sensitivity and GPU constraint, based directly on the published model-card scores for Qwen2-VL-2B-Instruct. The paragraphs below expand each row.

Choose BF16 when your primary workload is MMMU-like multidisciplinary reasoning or MathVista-like visual math, GPU memory is not the binding constraint, and every fraction of accuracy point matters for your evaluation SLO.

Choose GPTQ-Int8 when you want near-BF16 accuracy across all four task categories (maximum loss: 0.33 points on MMMU) and a moderate memory reduction is sufficient. GPTQ-Int8 is the safest quantized option in this card: it slightly exceeds BF16 on MathVista (+0.20) and loses no more than 0.33 points elsewhere.

Choose GPTQ-Int4 when your workload is dominated by DocVQA-like document extraction or general VQA (MMBench-style), memory pressure is significant, and MathVista-level mathematical reasoning is not a primary requirement. Among the two 4-bit options, GPTQ-Int4 is the stronger choice for math-adjacent tasks.

Choose AWQ when your primary task is MMMU-style multimodal reasoning (where AWQ sits within 0.55 points of BF16), memory is the binding constraint, and MathVista-style numerical reasoning is absent from the workload.

Do not choose AWQ if your production workload involves mathematical or quantitative visual reasoning. A 4.50-point MathVista loss is not recoverable by post-processing.
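One way to apply the guidance above to a mixed workload is to weight the published deltas by how often each task type appears. The sketch below is a hypothetical heuristic, not something the model card proposes: the function names and the idea of a linear weighted average are assumptions, and the benchmark weights stand in for your own traffic mix.

```python
# Published deltas versus BF16, in points.
DELTAS = {
    "GPTQ-Int8": {"MMMU_VAL": -0.33, "DocVQA_VAL": -0.06, "MMBench_DEV_EN": -0.08, "MathVista_MINI": 0.20},
    "GPTQ-Int4": {"MMMU_VAL": -2.66, "DocVQA_VAL": -1.13, "MMBench_DEV_EN": -1.20, "MathVista_MINI": -2.71},
    "AWQ":       {"MMMU_VAL": -0.55, "DocVQA_VAL": -1.38, "MMBench_DEV_EN": -0.43, "MathVista_MINI": -4.50},
}


def expected_delta(variant: str, weights: dict) -> float:
    """Weighted average delta for a workload mix (weights need not sum to 1)."""
    total = sum(weights.values())
    return sum(DELTAS[variant][bench] * w for bench, w in weights.items()) / total


def pick_variant(weights: dict, candidates=("GPTQ-Int4", "AWQ")) -> str:
    """Return the candidate with the smallest expected loss for this mix."""
    return max(candidates, key=lambda v: expected_delta(v, weights))


# Hypothetical mix: mostly document extraction with some chart math.
mix = {"DocVQA_VAL": 0.8, "MathVista_MINI": 0.2}
print(pick_variant(mix), round(expected_delta(pick_variant(mix), mix), 3))
```

Restricting `candidates` to the two 4-bit checkpoints mirrors the case where memory rules out Int8. A weighted average only approximates real workloads, so it is best treated as a first filter before task-level validation.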

Methodological caveats that change how to read the numbers

Watch Out: All benchmark scores discussed in this article come from vendor-published model cards, not an independently audited third-party evaluation. No confidence intervals, multiple-run averages, or calibration-set descriptions appear in the surfaced card text. Score differences below approximately 0.5 points should be treated as potentially within evaluation noise, particularly on the smaller evaluation subsets, where a handful of examples can shift the reported percentage. The 4.50-point AWQ drop on MathVista and the 2.66-point GPTQ-Int4 drop on MMMU are large enough to clear this threshold; sub-1-point deltas should be treated with more skepticism.
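As a rough way to size evaluation noise, the sampling error of an accuracy score can be approximated with a binomial standard error. This is a sketch under stated assumptions: items are treated as independent, and the subset size of 1,000 is a hypothetical placeholder because the surfaced card text does not state subset sizes. Variants scored on the same items are correlated, so this unpaired estimate may overstate the noise in a variant-to-variant comparison.

```python
import math


def binomial_standard_error_pts(score_pct: float, n_items: int) -> float:
    """Standard error of an accuracy score, in percentage points.

    Treats each benchmark item as an independent Bernoulli trial.
    """
    p = score_pct / 100.0
    return math.sqrt(p * (1.0 - p) / n_items) * 100.0


# n_items is an assumed, illustrative subset size, not a figure from the card.
ASSUMED_N_ITEMS = 1000
se = binomial_standard_error_pts(44.40, ASSUMED_N_ITEMS)
print(f"standard error at 44.40% with n={ASSUMED_N_ITEMS}: {se:.2f} points")
```

Substituting the actual item count of each subset gives a quick sense of which deltas deserve a rerun before they drive a deployment decision.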

The numbers are internally consistent — all four variants were evaluated under the same harness (VLMEvalkit), the same validation subsets (MMMU_VAL, DocVQA_VAL, MMBench_DEV_EN, MathVista_MINI), and the same hardware stack — which makes cross-variant comparison valid within this card. What they do not provide is an independently reproduced result or error statistics.

Single-model-card reporting versus broader cross-model comparison

This article compares only the four precision variants of Qwen2-VL-2B-Instruct. The evidence does not extend to Qwen2-VL-7B, Qwen2-VL-72B, or any other VLM family. The model card notes that Qwen2-VL "achieves state-of-the-art performance on visual understanding benchmarks, including MathVista, DocVQA, RealWorldQA, MTVQA, etc." — but that claim applies to BF16. The quantization sensitivity patterns observed at 2B may not hold at larger scales, where quantization typically becomes more benign as model capacity increases.

Pro Tip: If your target deployment is the 7B or 72B Qwen2-VL variant, run your own VLMEvalkit sweep at both GPTQ-Int8 and GPTQ-Int4 before committing to a precision tier. The delta pattern documented here — reasoning tasks degrading more than extraction tasks — is plausible at larger scales, but the magnitude of those deltas is not predictable from 2B results alone.

Hardware and software stack constraints on generalization

The benchmarked environment is NVIDIA A100 80GB, CUDA 11.8, PyTorch 2.2.1+cu118, Flash Attention 2.6.1, Transformers 4.38.2, AutoGPTQ 0.6.0+cu118, and AutoAWQ 0.2.5+cu118. This is a highly specific stack.

Watch Out: Any change in CUDA version, AutoGPTQ version, or AutoAWQ version can affect kernel selection for the quantized matmuls, which in turn affects both throughput and output quality. Newer CUDA or library versions do not automatically reproduce the same numbers. The A100 80GB environment also says nothing about other GPUs, whether consumer-class (RTX 4090) or datacenter-class (H100 PCIe, A10G), where quantization kernels may behave differently. Cross-hardware portability of these scores is not guaranteed, and the card's recommendation to build Transformers from source ("pip install git+https://github.com/huggingface/transformers") adds a further version-sensitivity constraint.

The default visual-token range of 4–16384 per image means that high-resolution inputs or multi-image prompts will increase memory pressure and potentially shift accuracy relative to single standard-resolution images. Benchmarks using the MINI or VAL subsets represent the evaluated regime; production prompts that push token counts higher may see different degradation patterns.
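To keep production inputs closer to a known token regime, the visual-token range can be narrowed through the processor's pixel limits. The sketch below assumes the Qwen2-VL convention that one visual token covers a 28×28 pixel area and that `AutoProcessor.from_pretrained` accepts `min_pixels` and `max_pixels` for this model; both come from Qwen2-VL usage documentation rather than from this article, and the helper names are hypothetical.

```python
# Assumption: one visual token corresponds to a 28x28 pixel area in Qwen2-VL.
PIXELS_PER_VISUAL_TOKEN = 28 * 28


def pixel_budget(min_tokens: int, max_tokens: int) -> dict:
    """Translate a visual-token range into min_pixels / max_pixels."""
    return {
        "min_pixels": min_tokens * PIXELS_PER_VISUAL_TOKEN,
        "max_pixels": max_tokens * PIXELS_PER_VISUAL_TOKEN,
    }


def load_processor(repo_id: str, min_tokens: int, max_tokens: int):
    """Load a processor that resizes images into the given token range."""
    # Lazy import so pixel_budget can be used without transformers installed.
    from transformers import AutoProcessor

    return AutoProcessor.from_pretrained(repo_id, **pixel_budget(min_tokens, max_tokens))


print(pixel_budget(256, 1280))
```

A narrower range such as 256 to 1280 tokens caps memory per image. Any accuracy comparison against the published table should note the range used, since the card's numbers were produced with the default.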

What these benchmark deltas mean for inference engineers

Bottom Line: The accuracy cost of 4-bit quantization on Qwen2-VL-2B-Instruct is real but workload-dependent. GPTQ-Int8 is effectively free: its maximum delta across all four benchmarks is 0.33 points, and it actually gains 0.20 points on MathVista. For 4-bit options, the decision is not "GPTQ-Int4 or AWQ" but "what does my task distribution look like?" GPTQ-Int4 is the safer 4-bit choice for math-heavy or document-heavy workloads. AWQ is the safer 4-bit choice for general multimodal reasoning tasks that resemble MMMU. Neither 4-bit option should be deployed on MathVista-category tasks without first measuring task-level degradation in the production environment.

The card documents both accuracy and speed benchmarking, but precise memory footprints by variant are not surfaced in the available text. 4-bit weights shrink the checkpoint, but throughput gains depend on the kernel and hardware, and the accuracy trade-off must be weighed against the specific task distribution rather than a generic "4-bit is close enough" assumption.

When a small drop is acceptable

Document extraction and general visual QA workloads, analogous to DocVQA and MMBench, show the most tolerance for 4-bit quantization. DocVQA stays at or above 86.96 for every quantized variant in the published table. MMBench stays within 1.20 points of BF16 across all variants.

Bottom Line: For production systems primarily handling document image parsing, receipt processing, form extraction, or general visual classification, both GPTQ-Int4 and AWQ deliver accuracy within a range that is unlikely to produce user-facing quality regressions. The 1.13-point DocVQA loss under GPTQ-Int4 and the 1.38-point loss under AWQ are small relative to inter-model variance across VLM families.

When you should keep a higher-precision checkpoint

MathVista is the clearest signal for higher precision. BF16 scores 44.40, GPTQ-Int8 scores 44.60, GPTQ-Int4 scores 41.69, and AWQ scores 39.90. The gap between GPTQ-Int8 and AWQ on this single benchmark is 4.70 points — larger than most inter-model gaps on the same task among models of comparable size.

Watch Out: If your application involves chart question answering, geometry problem solving, statistical figure interpretation, or any task that rewards numerical precision in visual reasoning, deploying AWQ without task-level validation is not justified by these numbers. MMMU similarly shows a 2.66-point drop under GPTQ-Int4, which is meaningful for systems evaluated on multidisciplinary academic benchmarks. In both cases, GPTQ-Int8 is the correct precision tier when memory allows: it slightly exceeds BF16 on MathVista and comes within 0.33 points on MMMU, with a smaller weight footprint than BF16.

The published numbers support keeping BF16 or GPTQ-Int8 for any task where the difference between a 39.9 and a 44.4 MathVista score translates to user-facing error rates. That threshold is a product decision, but the score data makes the quantization cost explicit.

Questions readers still ask about Qwen2-VL quantization

Does quantization affect multimodal accuracy?

Yes, measurably, but not uniformly. GPTQ-Int8 has negligible impact. GPTQ-Int4 and AWQ both show task-dependent losses, ranging from 0.43 to 4.50 points across the four reported benchmarks.

Which is better, GPTQ or AWQ?

The answer depends on the benchmark. AWQ outperforms GPTQ-Int4 on MMMU (by 2.11 points) and MMBench (by 0.77 points). GPTQ-Int4 outperforms AWQ on MathVista (by 1.79 points) and DocVQA (by 0.25 points). There is no universal winner at this model size and task set.

How much accuracy do you lose with 4-bit quantization?

On Qwen2-VL-2B-Instruct, GPTQ-Int4 loses 2.66 MMMU points, 1.13 DocVQA points, 1.20 MMBench points, and 2.71 MathVista points versus BF16. AWQ loses 0.55, 1.38, 0.43, and 4.50 respectively. The range across tasks spans from under 0.5 points to 4.50 points depending on method and task.

Is GPTQ better than AWQ for vision-language models?

For the Qwen2-VL-2B-Instruct model card benchmarks specifically: GPTQ-Int4 is the better 4-bit choice for math-adjacent tasks, AWQ is the better choice for general multimodal reasoning. Neither algorithm dominates on all four benchmarks. GPTQ-Int8 is the best-accuracy quantized option for this model regardless of task.

What benchmarks are used for Qwen2-VL evaluation?

The model card reports MMMU_VAL, DocVQA_VAL, MMBench_DEV_EN, and MathVista_MINI, all evaluated via VLMEvalkit.

Pro Tip: GPTQ-Int8 is the under-discussed option in most Qwen2-VL quantization threads. Its published deltas — never exceeding 0.33 points below BF16 across four tasks — suggest it should be the default quantized tier for accuracy-sensitive deployments where 8-bit memory savings are sufficient. Only move to 4-bit when the memory reduction from Int8 is insufficient for your target hardware.

Sources & References

- Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int4 — Primary source; vendor model card reporting BF16, GPTQ-Int8, GPTQ-Int4, and AWQ benchmark scores with VLMEvalkit evaluation environment details

- Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8 — Model card for the 8-bit GPTQ variant; benchmark scores cross-referenced for GPTQ-Int8 deltas

- Qwen/Qwen2-VL-2B-Instruct-AWQ — Model card for the AWQ variant; benchmark scores cross-referenced for AWQ deltas
