Bottom line: when enterprises should stay on naive RAG or migrate

Bottom Line: Stay on naive RAG when your workload is primarily single-hop fact lookup: support deflection, docs Q&A, and internal knowledge bases where each question resolves within one or two chunks. Move to modular RAG when your pipeline's retrieval precision or routing flexibility is the bottleneck, not the absence of relationship semantics. Justify GraphRAG only when multi-hop reasoning, cross-document entity relationships, or explicit audit lineage materially determine answer quality, and only after validating that the indexing, ontology engineering, and ongoing maintenance costs are funded and staffed. Microsoft's own GraphRAG repository warns that "GraphRAG indexing can be an expensive operation, please read all of the documentation to understand the process and costs involved, and start small." That is not a caveat; it is the decision rule.

A 2025 systematic evaluation published on arXiv confirms the split: standard RAG outperforms GraphRAG on single-hop, detail-oriented factual queries; GraphRAG outperforms standard RAG on multi-hop, reasoning-intensive questions. Neither dominates universally, which means the architecture choice is a workload-classification problem, not a technology preference.

What actually changes as RAG gets more modular or graph-aware

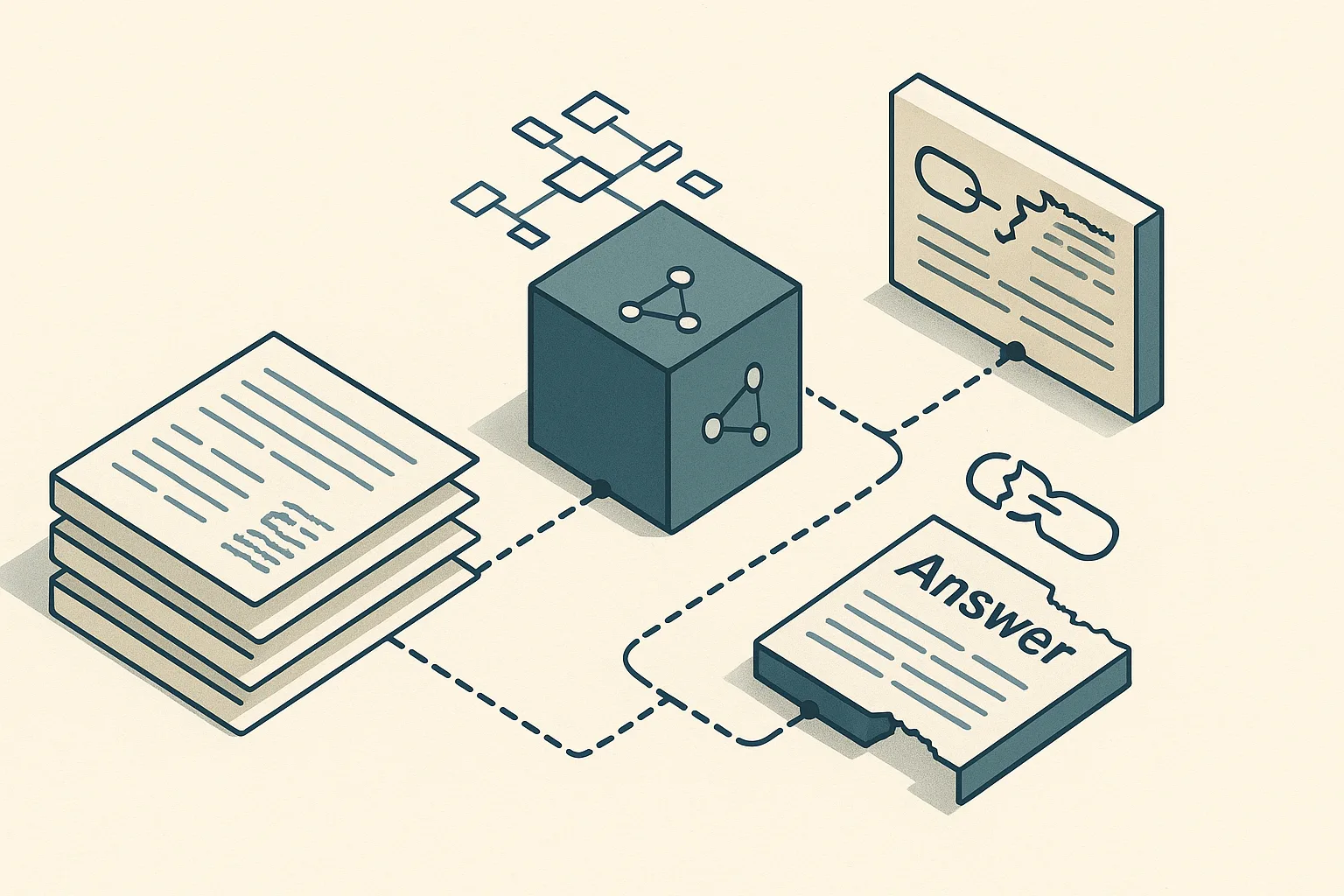

The retrieval unit is where the architectures diverge most sharply. Naive RAG is anchored on a vector database: it splits documents into chunks, embeds each chunk, and stores the embeddings. At query time it retrieves the top-K nearest chunks by embedding similarity and hands them to an LLM in a single call. The system is stateless between queries and requires no domain model beyond a chunking strategy and an embedding choice.
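The chunk, embed, and top-K flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the bag-of-words `embed` function is a toy stand-in for a real embedding model, the in-memory list stands in for a vector database, and the sample chunks and all function names are invented for the example.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term count."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def top_k(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query (stateless, single pass)."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]

# Invented sample corpus; a real system would store embeddings in a vector DB.
chunks = [
    "Refunds are issued within 30 days of purchase.",
    "The authentication endpoint is POST /v1/login.",
    "Support hours are 9am to 5pm on weekdays.",
]
context = top_k("refunds within how many days", chunks, k=1)
```

The retrieved `context` would then be placed into a single LLM prompt. Nothing is remembered between calls, which is what keeps this architecture cheap to operate.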

Modular RAG keeps the vector database as its retrieval anchor but wraps it with orchestration: a query router that directs traffic to appropriate sub-pipelines, hybrid retrieval that fuses dense and sparse signals, reranking that reorders candidates before the LLM call, and guardrails or evaluation gates that enforce quality thresholds. The retrieval unit is still the chunk, but the path to that chunk is no longer fixed.

GraphRAG replaces or augments the chunk as the primary retrieval unit with nodes and edges. Microsoft's GraphRAG extracts entities and relationships from source documents during indexing, groups them into communities, and exposes two distinct search modes: local search, which starts from specific entities and expands into their graph neighborhood, and global search, which reasons over community summaries spanning the whole graph. GraphRAG-Bench evaluates this architecture across graph construction, retrieval, and multi-hop answer generation as a unified pipeline, a materially broader surface than single-step vector lookup.

| Dimension | Naive RAG | Modular RAG | GraphRAG |
|---|---|---|---|
| Retrieval unit | Text chunk (vector DB) | Chunk + routing/rerank layer | Entity + relationship + community |
| Reasoning depth | Single-hop | Single-hop to shallow multi-hop | Multi-hop, relationship-traversal |
| Governance fit | Low (opaque similarity) | Medium (auditable routing) | High (explicit entity lineage) |
| Operating cost | Low | Medium | High |
| Build complexity | Low | Medium | High |

Why naive RAG wins for localized FAQ-style workloads

For questions that resolve within one or two retrieved chunks — "What is our refund policy?", "Which API endpoint handles authentication?" — naive RAG delivers accurate answers at the lowest operational cost. The 2025 RAG vs. GraphRAG evaluation confirms that standard RAG outperforms GraphRAG on single-hop and detail-oriented factual queries, precisely the query class that dominates support deflection and documentation search.

Failure modes that naive RAG carries — missed entity links, multi-step answer stitching, long-context brittleness — are largely dormant when the question scope is narrow and the corpus is well-chunked. Adding graph infrastructure to solve problems that do not appear in production is pure overhead.

Pro Tip: A vector database with well-tuned chunking (semantic boundary detection, appropriate chunk size, overlap strategy) remains the correct default for any enterprise workload until query failure analysis in production reveals that the answer requires cross-document entity traversal or relationship reasoning. Do not migrate on architectural ambition; migrate on measured failure rates.

Why modular RAG is the middle ground for enterprise teams

Modular RAG addresses the fragility of a fixed retrieval pipeline without incurring the ontology and graph-maintenance tax of GraphRAG. The 2026 enterprise RAG blueprint from stAI tuned captures the pattern: "Route early, retrieve hybrid, fuse with RRF, rerank sparingly, guardrail always, evaluate continuously."

That framing encodes the core value proposition: enterprises typically serve heterogeneous query mixes — some factual, some comparative, some requiring external tool calls — and a single flat retrieval path serves all of them poorly. Routing lets each query class reach an appropriately tuned sub-pipeline.
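The "fuse with RRF" step in that pattern refers to reciprocal rank fusion, which merges ranked lists by summing `1 / (k + rank)` for each item across lists. The sketch below assumes two hypothetical retrievers (one dense, one sparse) that each return an ordered list of chunk IDs. The constant `k = 60` is a commonly used default and is an assumption here, not a value taken from the blueprint.

```python
def rrf_fuse(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal rank fusion: score each ID by the sum of 1 / (k + rank)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for deterministic output.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical outputs from a dense (embedding) and a sparse (keyword) retriever.
dense = ["c3", "c1", "c2"]
sparse = ["c1", "c4", "c3"]
fused = rrf_fuse([dense, sparse])
```

Because RRF uses only rank positions, it does not require the dense and sparse scores to be on comparable scales, which is one reason it is a convenient default for hybrid retrieval.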

| Capability | Naive RAG | Modular RAG |
|---|---|---|
| Query-type routing | ✗ | ✓ |
| Hybrid (dense + sparse) retrieval | Optional | First-class |
| Reranking | ✗ | ✓ |
| Evaluation gates / guardrails | ✗ | ✓ |
| Graph/ontology structures | ✗ | ✗ |
| FTE overhead vs. naive RAG | Baseline | Additional routing and evaluation work |

The main cost modular RAG adds is orchestration complexity: routing logic must be maintained, reranking models must be selected and monitored, and evaluation gates must be tuned over time. These costs are real but contained — they do not require ontology engineers or graph database expertise.

Why GraphRAG matters when relationships are the product

GraphRAG earns its overhead when the answer to a question depends on traversing relationships rather than finding semantically proximate text. In pharmaceutical regulatory compliance, for example, a question like "Which adverse event reports cite the same mechanism-of-action as compound X across all submissions filed in 2024?" cannot be answered by semantic similarity alone — it requires entity-linked traversal across a structured corpus.

Microsoft's GraphRAG repository centers its architecture on exactly this: entity extraction from source documents, relationship construction between entities, community detection, and separate local and global search modes that traverse this structure rather than performing a flat vector lookup. The 2025 systematic evaluation validates the design: GraphRAG is more effective than standard RAG on multi-hop, reasoning-intensive questions.
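To make the difference between relationship traversal and flat vector lookup concrete, the sketch below walks a toy entity graph hop by hop. It is an illustration of the general idea only and does not use Microsoft's GraphRAG API. The graph contents, relationship names, and the `neighborhood` function are all hypothetical.

```python
from collections import deque

# Hypothetical entity graph: entity -> list of (relationship, neighbor).
GRAPH = {
    "Compound X": [("has_mechanism", "Kinase inhibition")],
    "Kinase inhibition": [("cited_by", "Report 17"), ("cited_by", "Report 42")],
    "Report 17": [("filed_in", "Submission A")],
    "Report 42": [("filed_in", "Submission B")],
}

def neighborhood(start: str, max_hops: int) -> dict[str, int]:
    """Breadth-first walk returning each reachable entity and its hop distance."""
    seen = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if seen[node] == max_hops:
            continue  # do not expand beyond the hop budget
        for _relation, neighbor in GRAPH.get(node, []):
            if neighbor not in seen:
                seen[neighbor] = seen[node] + 1
                queue.append(neighbor)
    return seen

# Two hops from the compound reaches the reports that share its mechanism.
reachable = neighborhood("Compound X", max_hops=2)
```

A top-K similarity search has no equivalent of the hop budget: it can return passages that mention the compound or the reports, but it does not follow the link between them.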

Pro Tip: GraphRAG becomes the right architecture when multi-hop reasoning is not an edge case but the primary query class — and when the entities and relationships in your corpus are stable enough that maintaining the graph does not outpace the quality gains. Domains with high entity-relationship density and low corpus churn (legal contracts, clinical trial networks, supply chain dependencies) are where GraphRAG delivers the clearest ROI.

The migration threshold: triggers that justify moving off naive RAG

Most comparisons of RAG architectures describe what each system does but stop before naming the specific conditions that make migration worth the investment. That gap matters for enterprise planning. Three concrete triggers should drive the migration decision, and each maps to a different architectural response.

GraphRAG-Bench was created specifically to stress-test graph construction, retrieval, and multi-hop answer generation as a unified pipeline — the benchmark exists because single-hop retrieval quality is no longer sufficient to characterize production-grade systems. When your own production evaluations start revealing the same inadequacy, that is the migration signal.

Decision matrix: stay, modularize, or graphify

| Query complexity | Auditability need | Operational budget | Recommendation |
|---|---|---|---|
| Single-hop lookup dominates | Low | Any | Stay on naive RAG |
| Mixed query types, routing needed | Low–Medium | Moderate | Move to modular RAG |
| Multi-hop failures in production | Medium | Moderate | Move to modular RAG first; evaluate GraphRAG |
| Cross-document entity reasoning required | High | High | Adopt GraphRAG |
| Regulated domain, audit lineage required | High | High | Adopt GraphRAG |
| Corpus changes frequently | Any | Low–Moderate | Avoid GraphRAG; modular RAG preferred |

Trigger 1: multi-hop or cross-document questions start failing

When production queries require assembling evidence from multiple documents — connecting an entity mentioned in a contract to a regulatory filing to a support ticket — naive RAG fails structurally. Top-K chunk retrieval can surface individually relevant passages while missing the relationship that makes them collectively meaningful. The result is partial answers, hallucinated links, or silently dropped evidence.

The 2025 RAG vs. GraphRAG evaluation distinguishes single-hop factual QA from multi-hop reasoning-intensive QA and finds GraphRAG consistently stronger in the latter category. This is the empirical basis for treating multi-hop failure rate as the primary migration trigger.

Watch Out: The failure modes are often invisible in standard accuracy metrics. Missed entity links produce fluent but factually incomplete answers that pass casual review. Answer stitching errors (where the model fabricates a connection between chunks that don't actually relate) are harder to catch without structured evaluation. Long-context brittleness — where the LLM ignores evidence buried mid-context — compounds both. Monitor for partial-answer rates and cross-document citation accuracy separately from overall ROUGE or semantic similarity scores.
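One way to track these signals separately is sketched below. The definitions are assumptions made for illustration: a "partial answer" is a multi-document question where the system cited some but not all of the required source documents, and "citation precision" is the share of cited documents that appear in the gold set. The `EvalRecord` structure and both function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class EvalRecord:
    required_docs: set[str]  # gold label: documents the answer must draw on
    cited_docs: set[str]     # documents the system actually cited

def partial_answer_rate(records: list[EvalRecord]) -> float:
    """Share of multi-document questions answered from only part of the evidence."""
    multi = [r for r in records if len(r.required_docs) > 1]
    if not multi:
        return 0.0
    partial = [
        r for r in multi
        if r.required_docs & r.cited_docs and not r.required_docs <= r.cited_docs
    ]
    return len(partial) / len(multi)

def citation_precision(records: list[EvalRecord]) -> float:
    """Share of cited documents that are in the gold set, pooled over records."""
    cited = sum(len(r.cited_docs) for r in records)
    correct = sum(len(r.cited_docs & r.required_docs) for r in records)
    return correct / cited if cited else 0.0

# Invented evaluation records for illustration.
records = [
    EvalRecord({"contract", "filing"}, {"contract"}),            # partial
    EvalRecord({"contract", "ticket"}, {"contract", "ticket"}),  # complete
    EvalRecord({"faq"}, {"faq", "blog"}),                        # one spurious citation
]
```

Both metrics depend on gold-labeled source documents per question, so they require a curated evaluation set rather than raw production logs.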

Trigger 2: auditability and governance become procurement blockers

GraphRAG's intermediate structure — explicit entities, relationships, and community summaries — creates a reviewable evidence layer that opaque chunk similarity cannot provide. In financial services, healthcare, and legal tech, procurement teams increasingly require that AI systems be able to show which source documents and which specific relationships contributed to an answer.

Modular RAG improves auditability over naive RAG by making routing decisions inspectable and by adding citation tracking at the retrieval layer. But it does not provide knowledge-graph lineage. If the audit requirement is "show me which entities and relationships grounded this answer," only GraphRAG satisfies it structurally.

Production Note: Governance requirements manifest differently across enterprise contexts. Lineage (which documents were retrieved) is satisfiable by modular RAG. Reviewability of reasoning steps (which entity relationships were traversed) requires GraphRAG. Policy enforcement (blocking certain retrieval paths based on access control or classification) can be implemented in either architecture but is cleaner with explicit graph nodes that carry metadata. Before defaulting to GraphRAG for governance, confirm which specific audit requirement is actually blocking procurement — lineage alone may not require a full graph stack.
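The policy-enforcement case can be illustrated with a metadata filter applied to retrieved chunks before they reach the LLM. This is a minimal sketch under stated assumptions: the three classification levels, the `Chunk` structure, and the `enforce_policy` function are hypothetical, and a real deployment would typically enforce access control inside the retrieval store as well.

```python
from dataclasses import dataclass

# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "restricted": 2}

@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    classification: str

def enforce_policy(chunks: list[Chunk], clearance: str) -> list[Chunk]:
    """Drop any retrieved chunk classified above the caller's clearance."""
    allowed = LEVELS[clearance]
    return [c for c in chunks if LEVELS[c.classification] <= allowed]

# Invented retrieval results for illustration.
retrieved = [
    Chunk("handbook", "Expense policy overview.", "public"),
    Chunk("audit-2024", "Internal audit findings.", "restricted"),
    Chunk("wiki", "Team onboarding notes.", "internal"),
]
visible = enforce_policy(retrieved, clearance="internal")
```

The same check could be attached to graph nodes instead of chunks, which is the variant the note above describes as cleaner when nodes carry metadata.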

Trigger 3: the cost per query still has to work at enterprise scale

GraphRAG's indexing cost is non-trivial and front-loaded. Microsoft's documentation states explicitly: "GraphRAG indexing can be an expensive operation, please read all of the documentation to understand the process and costs involved, and start small." GitHub discussions in the Microsoft GraphRAG repository also report that incremental updates are harder than in baseline RAG because relationship structure can force broader graph rebuilds when new documents arrive.

Enterprise cost modeling must account for more than inference spend. The table below uses qualitative ranges since per-query costs vary by corpus size, LLM provider pricing, and infrastructure configuration — but the relative ordering is consistent across deployment reports.

| Cost dimension | Naive RAG | Modular RAG | GraphRAG |
|---|---|---|---|
| Initial build effort | Low (days) | Moderate (weeks) | High (months) |
| Cost per query | Lowest baseline | Higher than naive RAG due to orchestration | Highest due to graph traversal and summarization |
| Data engineering FTE burden | Minimal | Moderate | Highest |
| Ongoing ingestion / maintenance | Low | Low–Moderate | High (graph rebuilds) |
| Ontology / schema upkeep | None | None | Ongoing |

How the enterprise landscape breaks down in 2026

The 2026 enterprise RAG deployment picture is not a winner-take-all transition to GraphRAG. The 2025 systematic evaluation and GraphRAG-Bench together confirm that RAG and GraphRAG each hold distinct advantages across query types and summarization tasks, and current engineering blogs and production teams are signaling a mixed-adoption pattern rather than a wholesale replacement of vector search. The production landscape reflects this split directly.

| Architecture | Primary use case | Complexity | Governance overhead |
|---|---|---|---|
| Naive RAG | Support deflection, docs Q&A, FAQ search | Low | Low |
| Modular RAG | Mixed enterprise workloads, multi-tenant systems | Medium | Medium |
| GraphRAG | Regulated domains, interconnected corpora, multi-hop QA | High | High (but auditable) |

Naive RAG as the baseline infrastructure choice

Naive RAG remains the dominant architecture in production support deflection and documentation search because it is the easiest system to operate correctly. The 2025 evaluation confirms it outperforms GraphRAG on single-hop, detail-oriented factual queries — which describes the majority of enterprise knowledge-base traffic in most verticals.

Pro Tip: A vector database with semantic chunking is still the lowest-friction starting point for any enterprise RAG deployment. Optimize chunking strategy, embedding model selection, and retrieval parameters before assuming the architecture needs to change. Many production failures attributed to "RAG limitations" are chunking or embedding failures, not structural limitations of the retrieval paradigm.

Modular RAG as the orchestration layer for mixed enterprise workloads

Modular RAG earns its place when the enterprise serves query mixes that a single retrieval pipeline handles poorly. A legal tech platform might field factual lookups ("What is the penalty clause in contract 4821?"), comparative questions ("How do our standard NDA terms differ from the version proposed by this vendor?"), and summarization requests — each class benefiting from a different retrieval and generation path.

The stAI tuned Enterprise RAG Blueprint (2026) frames this as a continuous-evaluation architecture: routing, hybrid retrieval, RRF fusion, reranking, guardrails, and evaluation as modular components rather than a fixed pipeline. This beats naive RAG on precision for heterogeneous workloads while stopping short of the graph stack.
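The routing component can be illustrated with the legal tech query classes mentioned above. The sketch below is a deliberately simple keyword router. The route names and trigger words are assumptions for illustration, and production routers often use a trained classifier or an LLM call instead.

```python
def route(query: str) -> str:
    """Pick a sub-pipeline name from simple keyword cues in the query."""
    q = query.lower()
    if any(word in q for word in ("differ", "compare", "versus")):
        return "comparative"
    if any(word in q for word in ("summarize", "summary", "overview")):
        return "summarization"
    # Default path: plain chunk retrieval for single-fact questions.
    return "factual_lookup"

examples = [
    "What is the penalty clause in contract 4821?",
    "How do our standard NDA terms differ from the version proposed by this vendor?",
    "Summarize the indemnification section.",
]
routes = [route(q) for q in examples]
```

Each returned label would map to a separately tuned retrieval and generation path, which is what lets evaluation be run per query class rather than only in aggregate.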

| Where modular RAG adds value | Where it stops short |
|---|---|
| Routes different query types to optimal sub-pipelines | Does not encode entity relationships |
| Hybrid retrieval improves recall on keyword-sensitive queries | Cannot traverse cross-document entity links |
| Reranking improves precision without graph overhead | No inherent audit lineage beyond retrieval citations |
| Evaluation gates catch quality regressions early | Orchestration complexity requires ongoing maintenance |

GraphRAG as the relationship-aware option for regulated or complex domains

GraphRAG is the architecture of choice when the source corpus is inherently relational — clinical trial networks, supply chain dependency graphs, financial entity webs, legal contract clause hierarchies — and when questions require reasoning over those relationships rather than proximity matching.

Microsoft's GraphRAG exposes local search (anchored on specific entities and their graph neighborhood) and global search (across all community summaries) as distinct retrieval modes, which means the architecture can answer both narrow entity-specific questions and broad thematic queries over an interconnected corpus. The 2025 evaluation confirms the multi-hop advantage.

Watch Out: Ontology engineering is not a one-time cost. Entity extraction quality depends on prompt design, LLM capability, and domain-specific entity schemas. As the source corpus evolves, entity definitions drift and relationship schemas require updates. Teams that underestimate the ongoing maintenance burden consistently find that graph quality degrades faster than expected, eroding the quality advantage that justified the migration.

Cost, ROI, and staffing implications of each path

The total cost of each RAG architecture includes build effort, FTE burden, maintenance load, and the quality uplift that justifies the spend. Microsoft's explicit warning about GraphRAG indexing cost — and community reports that incremental updates can require broader graph rebuilds — establish that the operational cost curve for GraphRAG is steeper than its infrastructure cost alone suggests.

| Dimension | Naive RAG | Modular RAG | GraphRAG |
|---|---|---|---|
| Build effort | Days to weeks | Weeks | Months |
| FTE to operate (steady state) | Minimal | Additional routing and evaluation work | Data engineering and ontology ownership |
| Maintenance load | Low | Moderate | High |
| Quality uplift (single-hop QA) | Baseline | Qualitatively better precision on routed queries | No consistent uplift on single-hop factual QA |
| Quality uplift (multi-hop QA) | Baseline | Better than naive RAG on some routed workloads | Stronger than standard RAG on multi-hop reasoning |
| Governance support | Minimal | Partial | Structural |

Quality uplift should be measured against the actual query mix, not assumed from the architecture label.

Where the spend goes: data engineering, ontology work, and maintenance

For GraphRAG, the majority of the non-infrastructure cost falls in three categories. First, ingestion: each document must be processed through entity extraction (typically multiple LLM calls per document), entity linking against existing graph nodes, and relationship construction. On large corpora, this is the primary cost driver Microsoft flags. Second, ontology and schema upkeep: as the business domain evolves, entity types, relationship types, and community definitions require revision. Third, graph rebuild cycles: unlike a vector index where adding new documents is an append operation, relationship structure changes in a GraphRAG corpus can require partial or full rebuilds.

Production Note: The GitHub discussion history for the Microsoft GraphRAG repository consistently surfaces incremental update difficulty as the most underestimated operational challenge. Teams planning GraphRAG deployments should design an explicit graph versioning and rebuild strategy before committing to the architecture — corpus churn rate is a primary input to that design.

How to estimate ROI before a migration

ROI estimation for a RAG migration should start from the query distribution, not the architecture. Sample a representative set of production queries, classify them by reasoning depth (single-hop factual, shallow multi-hop, deep multi-hop, relationship-traversal), and measure current failure rates in each class.
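The per-class measurement described above reduces to a simple tally once each sampled query has been labeled. The sketch below assumes each sample is a `(reasoning_depth, failed)` pair produced by manual review. The labels, the sample data, and the `failure_rates` function are hypothetical.

```python
from collections import defaultdict

def failure_rates(samples: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the failure rate for each reasoning-depth class."""
    totals: dict[str, int] = defaultdict(int)
    failures: dict[str, int] = defaultdict(int)
    for depth, failed in samples:
        totals[depth] += 1
        failures[depth] += int(failed)
    return {depth: failures[depth] / totals[depth] for depth in totals}

# Invented labeled sample: (reasoning depth, whether the answer failed review).
samples = [
    ("single_hop", False),
    ("single_hop", False),
    ("single_hop", True),
    ("single_hop", False),
    ("deep_multi_hop", True),
    ("deep_multi_hop", False),
]
rates = failure_rates(samples)
```

Reporting the class share alongside the failure rate matters: a high failure rate in a class that makes up a small fraction of traffic is a weaker migration signal than a moderate rate in a dominant class.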

| Signal | Recommendation |
|---|---|
| >80% single-hop queries, low failure rate | Stay on naive RAG |
| >80% single-hop, high failure rate | Fix chunking/embedding before migrating |
| Mixed query types, precision problems | Modular RAG first |
| >30% multi-hop queries with measurable failure rate | Evaluate modular RAG; prototype GraphRAG on a sub-corpus |
| Multi-hop failures + governance requirements | GraphRAG justified if FTE and budget are available |
| Frequent corpus updates (daily/weekly) | Avoid GraphRAG; modular RAG preferred |

The incremental value of GraphRAG is a function of the multi-hop query fraction multiplied by the quality gain on those queries, offset by the added operational cost. The math rarely closes when the workload is dominated by straightforward lookup and FAQ traffic.
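That relationship can be written as a back-of-the-envelope calculation. The sketch below is one possible formalization, not a validated cost model: converting quality gain into money via a per-answer value is an assumption, and every number in the example is invented purely to show how the terms interact.

```python
def graphrag_net_value(
    multi_hop_fraction: float,       # share of queries that are multi-hop (0 to 1)
    quality_gain: float,             # added share of those queries answered correctly
    value_per_correct_answer: float, # assumed business value of one extra correct answer
    queries_per_year: int,
    added_annual_cost: float,        # extra indexing, maintenance, and staffing cost
) -> float:
    """Benefit from extra correct multi-hop answers minus added operating cost."""
    benefit = (
        multi_hop_fraction * quality_gain * queries_per_year * value_per_correct_answer
    )
    return benefit - added_annual_cost

# Illustrative inputs only: same volume and cost, different multi-hop share.
lookup_heavy = graphrag_net_value(0.05, 0.20, 2.0, 1_000_000, 150_000.0)
multi_hop_heavy = graphrag_net_value(0.40, 0.20, 2.0, 1_000_000, 150_000.0)
```

With these made-up inputs the lookup-heavy workload comes out negative and the multi-hop-heavy one positive, which mirrors the point above: the multi-hop fraction is the term that moves the result.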

Decision matrix: accuracy lift versus governance and staffing cost

| Outcome | Accuracy lift | Governance overhead | Staffing cost | Recommendation |
|---|---|---|---|---|
| Stay on naive RAG | Low to none on single-hop lookup | Lowest | Lowest | Keep vector search baseline |
| Move to modular RAG | Moderate on mixed query mixes | Moderate | Moderate | Use when routing and reranking are the bottleneck |
| Adopt GraphRAG | Highest only when relationships drive the answer | Highest, but auditable | Highest | Use when multi-hop reasoning and lineage are required |

Decision framework for enterprise architects and tech leads

The migration question is a workload-classification and resource-allocation decision. The 2025 systematic evaluation and GraphRAG-Bench together establish that no single RAG architecture dominates across query types — the decision requires mapping workload traits to architecture capabilities and validating against available staffing and budget.

| Workload trait | Vector DB baseline sufficient? | Modular RAG adds value? | GraphRAG justified? |
|---|---|---|---|
| FAQ / fact lookup | Yes | No | No |
| Mixed query types | Partially | Yes | No |
| Multi-hop reasoning required | No | Partially | Yes |
| Cross-document entity reasoning | No | No | Yes |
| Audit lineage required | No | Partially | Yes |
| High corpus churn | Yes | Yes | Risky |
| Limited ML/data eng team | Yes | Sometimes | Rarely |

Stay on naive RAG when the workload is mostly lookup

Do not migrate because GraphRAG is architecturally richer. Migrate because production query failure analysis shows a specific inadequacy that a richer architecture addresses.

Bottom Line: A vector database with good chunking solves the majority of enterprise FAQ, support deflection, and documentation search workloads. The 2025 evaluation confirms naive RAG's superiority on single-hop factual queries. Premature migration to GraphRAG adds ontology engineering, graph maintenance, and relationship-extraction cost without improving the answers that make up most of the query volume.

Move to modular RAG when orchestration is the bottleneck

When the failure mode is not "the answer requires relationship traversal" but "the same query typed differently gets worse results" or "keyword-sensitive queries fall through semantic search," the problem is retrieval pipeline rigidity, not the absence of a knowledge graph. Modular RAG — with query routing, hybrid retrieval, and reranking — addresses that problem directly.

| Condition | Modular RAG response |
|---|---|
| Keyword-sensitive queries underperform | Add sparse retrieval (BM25) to hybrid pipeline |
| Top-K retrieval returns off-topic chunks | Add cross-encoder reranking |
| Different document types need different paths | Add query router |
| Quality regressions go undetected | Add evaluation gates |

Modular RAG is also the safer intermediate step for teams considering GraphRAG — building the orchestration infrastructure first means that if GraphRAG adoption occurs later, the routing and evaluation layers are already in place.

Adopt GraphRAG only when relationships drive answer quality

Pro Tip: The clearest signal that GraphRAG is justified is that your production evaluation shows multi-hop questions failing specifically because entity relationships are not being resolved — not because chunks are too large, embeddings are weak, or reranking is absent. Fix the simpler problems first. When those are fixed and multi-hop failure persists, GraphRAG addresses the structural gap. Pair the adoption decision with a funded ontology engineering effort and an explicit graph maintenance budget — the architecture does not pay for itself without both.

GraphRAG's audit lineage is a secondary justification that can strengthen the case in regulated domains: explicit entities and relationships make the retrieval path reviewable in a way that opaque vector similarity cannot match.

Risks, counterarguments, and migration pitfalls

The risks that most explainers omit are on the downside: over-engineering a system whose query distribution does not justify graph semantics, deploying a graph that becomes stale as the corpus evolves, and developing false confidence in answers because the graph structure implies precision that the actual entity extraction quality does not support.

Microsoft's documentation and the GitHub discussion history for GraphRAG collectively emphasize expensive indexing and nontrivial update burden — these are not edge cases but the default operating conditions for any non-trivial corpus.

Watch Out: The three most common migration pitfalls are: (1) over-engineering — committing to GraphRAG based on ambitious use cases that don't materialize in production query volume; (2) stale graphs — deploying without a defined graph rebuild cadence, then operating on an ontology that no longer reflects the corpus; (3) false precision — trusting graph-grounded answers more than the entity extraction quality warrants, creating an audit trail that looks rigorous but reflects extraction errors rather than ground truth.

When GraphRAG adds complexity without improving answers

GraphRAG's quality advantage is workload-dependent. The 2025 evaluation shows that on single-hop factual queries, GraphRAG matches or underperforms standard RAG. For a corpus of flat policy documents where questions are of the form "What is the deductible on plan type B?", graph infrastructure adds indexing cost, rebuild overhead, and ontology maintenance with no quality return.

Watch Out: Ontology drift is the most persistent maintenance failure in GraphRAG deployments. Entity types defined during initial schema design become misaligned with corpus evolution — new product names don't get added to the entity schema, relationship types become semantically overloaded, and community detection starts grouping unrelated entities. The result is a retrieval layer that appears structured but returns answers grounded in an increasingly inaccurate representation of the source corpus.

Why modular RAG can become an under-designed compromise

Modularity is not a quality guarantee. A modular RAG pipeline with undefined routing logic, no evaluation gates, and no success metrics per module is operationally more complex than naive RAG but not materially better in quality. The router-first blueprint is explicit that continuous evaluation is a required component — not optional.

Pro Tip: Before deploying modular RAG, define explicit success metrics for each module boundary: what does the router need to get right to justify its existence, what precision threshold makes reranking worth its latency cost, and what evaluation gate logic is required at the final output stage. Without those definitions, modular RAG becomes an overly complex version of naive RAG that is harder to debug and no easier to improve.
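Per-module success metrics can be enforced with a small gate that compares measured values against agreed floors. The sketch below is illustrative only: the metric names and threshold values are hypothetical placeholders, and the right thresholds depend on the workload.

```python
def evaluation_gate(metrics: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return the names of metrics that are missing or below their threshold."""
    return [
        name for name, floor in thresholds.items()
        if metrics.get(name, 0.0) < floor
    ]

# Hypothetical per-module floors agreed before deployment.
thresholds = {
    "router_accuracy": 0.90,
    "rerank_precision_at_5": 0.70,
    "answer_groundedness": 0.85,
}
# Hypothetical measurements from the latest evaluation run.
measured = {
    "router_accuracy": 0.93,
    "rerank_precision_at_5": 0.64,
    "answer_groundedness": 0.88,
}
failing = evaluation_gate(measured, thresholds)
```

A release or configuration change would be blocked whenever `failing` is non-empty, and the failing metric name points at the module that needs attention.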

FAQ

What is the difference between modular RAG and GraphRAG?

Modular RAG is an orchestration architecture: it adds query routing, hybrid retrieval, reranking, and evaluation gates around a vector database retrieval core. The retrieval unit remains the text chunk. GraphRAG is a relational retrieval architecture: it replaces or augments chunk retrieval with entity- and relationship-based traversal over a knowledge graph constructed from the source corpus.

| Dimension | Modular RAG | GraphRAG |
|---|---|---|
| Core innovation | Pipeline flexibility and routing | Relational structure and entity traversal |
| Retrieval unit | Chunk (vector DB) | Entity + relationship + community |
| Build complexity | Moderate | High |
| Maintenance requirement | Routing logic, eval gates | Ontology, entity extraction, graph rebuilds |
| Best fit | Mixed query types, precision gaps | Multi-hop reasoning, audit lineage |

When should I use GraphRAG instead of vector search?

Use GraphRAG instead of vector search when the answer depends on traversing explicit relationships across documents, not on finding the nearest semantically similar chunk. If the workload is mostly FAQ, support deflection, or local document lookup, vector search remains the better baseline. If the workload is multi-hop, entity-linked, or audit-sensitive, GraphRAG is the better fit.

Is GraphRAG worth the complexity for enterprise applications?

Bottom Line: Conditionally yes — when multi-hop reasoning or relationship-based auditability materially determines answer quality and when the organization has funded both the build effort and the ongoing maintenance. Unconditionally no when the workload is primarily single-hop factual lookup, when the corpus changes frequently, or when the engineering team cannot sustain the maintenance burden. The 2025 systematic evaluation confirms GraphRAG's advantage is workload-specific, not universal.

How do you decide between modular RAG and GraphRAG?

Use query complexity and governance requirements as the primary axes:

- Choose modular RAG when the problem is pipeline rigidity — different query types need different retrieval paths — but answers do not require traversing entity relationships across documents.

- Choose GraphRAG when production evaluation confirms that multi-hop entity reasoning is a primary failure mode, and when the organization requires knowledge-graph audit lineage for compliance.

- Start with modular RAG if the decision is unclear — it is the better intermediate step, and its orchestration infrastructure is reusable if GraphRAG adoption follows.

What are the costs of implementing GraphRAG?

Microsoft explicitly warns that GraphRAG indexing is an expensive operation. Enterprise cost should be modeled across four categories:

| Cost category | Nature | Relative magnitude |
|---|---|---|
| Initial graph build | Data engineering, entity extraction LLM calls, relationship construction | High (weeks to months) |
| Ongoing ingestion | Re-extraction and re-linking for new documents | Moderate–High |
| Graph maintenance | Ontology updates, schema revisions, partial rebuilds | Moderate (persistent) |
| Operational FTE | Data engineers + ontology specialists | Ongoing, dedicated capacity |

Incremental updates are harder than standard RAG because relationship structure can require broader rebuilds when the corpus changes — a cost that compounds over time for high-churn corpora.

Sources & References

- AIQuinta: Naive RAG vs. Graph RAG vs. Agentic RAG — Engineering blog framing naive RAG as the low-cost baseline and GraphRAG as the relationship-aware alternative with significant upfront engineering cost

- Microsoft GraphRAG GitHub Repository — Official repository for Microsoft's GraphRAG implementation; primary source for indexing cost warnings, local/global search modes, and entity-relationship architecture

- Microsoft GraphRAG GitHub Discussions — Incremental Updates — Community discussion documenting incremental update difficulty and graph rebuild overhead

- arXiv: RAG vs. GraphRAG: A Systematic Evaluation and Key Insights (2025) — Primary empirical source for the single-hop vs. multi-hop performance split between RAG and GraphRAG

- GraphRAG-Bench — Benchmark framework covering graph construction, retrieval, and multi-hop answer generation as an integrated evaluation surface

- stAI tuned: RAG Reference Architecture 2026 — Router-First Design — 2026 enterprise RAG blueprint providing the "route early, retrieve hybrid, fuse with RRF, rerank sparingly, guardrail always, evaluate continuously" pattern for modular RAG

Keywords: GraphRAG, modular RAG, naive RAG, vector database, Microsoft GraphRAG, knowledge graph, entity extraction, multi-hop reasoning, ontology, RAG evaluation, query routing