How We Compared These Embedding Models

Choosing an embedding model for a production RAG pipeline is a sticky decision: once you've embedded a large corpus, switching models means rebuilding every vector from scratch. This comparison forces a decision rather than listing options. We evaluated models across six criteria — retrieval quality, multilingual coverage, vector dimensions, cost per 1M tokens, context limit, and Matryoshka/truncation support — with migration burden as a seventh, often-underweighted factor.

The three anchor positions in 2026 are OpenAI text-embedding-3-small as the cost-effective managed default at ~$0.02/1M tokens, BGE-M3 as the common self-hosted multilingual pick, and Voyage AI as the premium retrieval-accuracy play. Every other model in this comparison earns its place by beating one of these three on a specific criterion.

| Criterion | What we measured |
|---|---|
| Retrieval quality | MTEB retrieval subset score or corpus-native Recall@10 where available |
| Multilingual coverage | MIRACL / MKQA benchmark claims; language count from model card |
| Dimensions | Default and min/max configurable output size |
| Cost per 1M tokens | Official pricing; secondary sources labeled |
| Context limit | Maximum input tokens before truncation or error |
| Matryoshka / truncation | Whether dimension reduction is supported without full re-embedding |
| Migration burden | Full re-embedding required on model switch; index incompatibility across families |

Migration burden deserves emphasis up front: vector spaces are model-specific. A 1,536-dim OpenAI vector and a 1,024-dim BGE-M3 vector occupy different latent geometries. You cannot mix them in one index, and switching families triggers a full re-embedding pass plus an ANN index rebuild. At $0.02/1M tokens, embedding 500M tokens costs $10 — but the compute and downtime costs of a parallel dual-write migration at scale dwarf that number for most teams.

At-a-Glance Model Comparison

OpenAI text-embedding-3-small is not universally better than BGE-M3 — the right answer is workload-dependent. For English-dominant, cost-sensitive, managed deployments, text-embedding-3-small wins on operational simplicity. For multilingual self-hosted corpora, BGE-M3 wins on language coverage and retrieval method flexibility. Voyage wins on retrieval accuracy when you can absorb a 3× price premium.

| Model | Dimensions | Context (tokens) | Cost / 1M tokens | Matryoshka / truncation | Host |
|---|---|---|---|---|---|
| OpenAI text-embedding-3-small | 1,536 (default) | 8,191 | ~$0.02 (official) | Yes (size control param) | Managed API |
| BGE-M3 | 1,024 (fixed) | 8,192 | Infra cost only | No (fixed dim) | Self-hosted |
| Voyage AI voyage-3-large | ~1,024–1,536 | 128,000 (secondary¹) | ~$0.06 (secondary¹) | Yes (truncation config) | Managed API |
| Cohere embed-v4+ | 256 / 512 / 1,024 / 1,536 | max_tokens param | Contact / tiered | Yes (selectable dims) | Managed API |
| Qwen3 Embedding-8B | Up to 4,096 | 32,000 | Infra cost only | Yes (user-defined) | Self-hosted |
| E5-Mistral | ~4,096 (varies by ckpt) | ~32,768 | Infra cost only | Partial | Self-hosted |

¹ Voyage pricing and context figures from secondary comparison source (Prem AI blog, 2026); verify against Voyage pricing docs before committing.

Default pick by task:

- General English RAG, managed ops → text-embedding-3-small
- Multilingual self-hosted → BGE-M3
- Max retrieval accuracy, long docs → Voyage AI voyage-3-large
- Enterprise dimension control, managed → Cohere embed-v4
- Long-context multilingual self-hosted → Qwen3 Embedding-8B

Retrieval quality signals that matter more than headline averages

MTEB averages aggregate across classification, clustering, reranking, STS, and retrieval tasks. A model that scores 2 points higher on the leaderboard may rank lower on your domain-specific Recall@10 because the average is diluted by tasks irrelevant to retrieval.

The signals that actually predict RAG performance: MTEB retrieval subset score (not overall average), MIRACL for multilingual recall, MKQA for cross-lingual question answering, and — most importantly — your own corpus-native ablation. FlagEmbedding's repository explicitly claims BGE-M3 achieves "new SOTA on multi-lingual (MIRACL) and cross-lingual (MKQA) benchmarks." OpenAI's docs state text-embedding-3-small delivers "higher multilingual performance" versus ada-002 — but that is a relative improvement claim, not an absolute leaderboard position versus BGE-M3.

Pro Tip: MTEB averages can hide corpus-specific wins. A model that leads on MIRACL may underperform on a code-heavy English corpus where BM25 hybrid retrieval and a cross-encoder reranker contribute more lift than the base embedding. Always report the MTEB retrieval subset score separately from the overall average, and run at minimum a Recall@10 probe on 500 representative queries from your own index before committing to a model.
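A Recall@10 probe of the kind described above can be sketched in a few lines. This is a minimal illustration, not a full evaluation harness: the function names are hypothetical, and it assumes you already have, for each query, the ranked document IDs returned by your index and a hand-labeled set of relevant IDs. It uses the "fraction of relevant documents found in the top k" definition; some teams divide by min(k, number of relevant documents) instead, so state which one you report.

```python
def recall_at_k(retrieved_ids, relevant_ids, k=10):
    """Fraction of the relevant documents that appear in the top-k results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("each query needs at least one relevant document")
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(results, k=10):
    """Average Recall@k over (retrieved_ids, relevant_ids) pairs, one per query."""
    scores = [recall_at_k(retrieved, relevant, k) for retrieved, relevant in results]
    if not scores:
        raise ValueError("no queries to evaluate")
    return sum(scores) / len(scores)
```

Running the same query set through each candidate model and comparing `mean_recall_at_k` gives the corpus-native number that the leaderboard average cannot.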

Dimension count, storage footprint, and index cost

Dimension count determines the per-vector memory footprint in your ANN index. A 1M-vector index at 1,536 dims (float32) costs ~6 GB of RAM before HNSW graph overhead; the same index at 1,024 dims costs ~4 GB. Switching from text-embedding-3-small (1,536) to BGE-M3 (1,024) cuts index memory by roughly 33% — but only after a full re-embedding.
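The footprint figures above follow from simple arithmetic, sketched here for checking your own index sizes. The helper names are illustrative, the estimate covers raw float32 vectors only, and HNSW graph overhead is deliberately left out.

```python
def raw_vector_bytes(num_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    """Bytes needed to store the raw vectors (float32 by default), no index overhead."""
    return num_vectors * dims * bytes_per_value


def gigabytes(num_bytes: int) -> float:
    """Convert bytes to decimal gigabytes."""
    return num_bytes / 1e9


openai_small = raw_vector_bytes(1_000_000, 1536)
bge_m3 = raw_vector_bytes(1_000_000, 1024)
savings = 1 - bge_m3 / openai_small

print(f"{gigabytes(openai_small):.2f} GB vs {gigabytes(bge_m3):.2f} GB, {savings:.0%} smaller")
# prints: 6.14 GB vs 4.10 GB, 33% smaller
```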

| Model | Default dims | Min dims | Max dims | Dim control mechanism |
|---|---|---|---|---|
| OpenAI text-embedding-3-small | 1,536 | 256 | 1,536 | dimensions API param (Matryoshka) |
| BGE-M3 | 1,024 | 1,024 | 1,024 | None |
| Cohere embed-v4+ | 1,536 | 256 | 1,536 | output_dimension API param |
| Qwen3 Embedding-8B | 4,096 | User-defined | 4,096 | User-defined at inference |
| Voyage AI | Model-dependent | Truncation config | Model-dependent | truncation boolean |

Watch Out: Smaller dimensions do not automatically degrade retrieval quality. OpenAI's Matryoshka training and Cohere's selectable output dimensions both preserve most retrieval signal at reduced sizes. BGE-M3 at 1,024 dims consistently appears on retrieval leaderboards alongside models with 1,536 dims. Validate Recall@10 at your target dimension before assuming you must use the maximum.
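For models trained with a Matryoshka-style objective, reducing dimensions client-side amounts to keeping the leading components and re-normalizing to unit length. The sketch below shows that operation in plain Python. The function name is hypothetical, and the approach is an assumption that only holds for models whose leading dimensions were trained to carry most of the signal; applying it to a fixed-dimension model such as BGE-M3 is not supported by anything in this comparison.

```python
import math


def truncate_and_normalize(vector, dims):
    """Keep the first `dims` components and rescale the result to unit L2 norm."""
    if not 0 < dims <= len(vector):
        raise ValueError("dims must be between 1 and the original vector length")
    head = list(vector[:dims])
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return head  # an all-zero prefix cannot be normalized
    return [x / norm for x in head]
```

Re-normalizing matters because cosine and dot-product scores are only interchangeable on unit-length vectors. Whichever route you take (API parameter or client-side truncation), measure Recall@10 at the reduced size before adopting it.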

OpenAI text-embedding-3-small

Text-embedding-3-small is the right starting model for most new RAG projects because it eliminates infrastructure decisions and keeps costs low enough to prototype without a budget conversation. OpenAI describes it as "our new highly efficient embedding model" that delivers "stronger performance" over ada-002 at a 5× price reduction — from $0.0001/1k tokens to $0.00002/1k tokens (~$0.02/1M tokens). It produces 1,536-dimensional vectors by default with an 8,191-token context window (cl100k_base tokenizer). Inputs exceeding that limit return an error; you must chunk or truncate beforehand.

| Factor | text-embedding-3-small | Self-hosted alternative |
|---|---|---|
| Ops overhead | Near-zero (managed API) | GPU/CPU serving, model updates, uptime |
| Cost at 1B tokens | ~$20 one-time embed | Infra-dependent, potentially lower at scale |
| Latency | API round-trip | Local inference, lower p99 at scale |
| Dim control | Yes (Matryoshka param) | BGE-M3: No; Qwen3: Yes |
| Multilingual | Improved vs ada-002 | BGE-M3: SOTA on MIRACL/MKQA |
| Vendor lock-in | High | None |

Production Note: The one-time re-embedding cost of switching away from text-embedding-3-small seems low at $0.02/1M tokens, but a 10B-token corpus costs $200 in API spend — plus the operational cost of coordinating a dual-write migration, rebuilding your ANN index, and validating Recall@10 before cutover. Model selection is sticky. Account for migration cost in your initial model choice, not as an afterthought.

Where text-embedding-3-small wins in production

Text-embedding-3-small wins when the priority is shipping a retrieval system fast with low operational overhead. At ~$0.02/1M tokens, it is cheaper than every managed alternative in this comparison. The managed API removes model serving, version management, and hardware provisioning from your stack entirely.

For English-dominant corpora in typical RAG domains (documentation, support tickets, product catalogs), the retrieval quality is competitive without corpus-specific tuning. The Matryoshka size-control parameter lets you experiment with reduced dimensions — say 512 — without rebuilding from a different checkpoint.

Pro Tip: If you're embedding a new corpus with no prior production traffic, start with text-embedding-3-small at 1,536 dims and run your Recall@10 baseline. The cost of an initial embed pass is a rounding error compared to the engineering time you'd spend setting up a self-hosted alternative. Only migrate when your baseline measurement reveals a retrieval gap that a specialized model demonstrably closes on your specific data.

Where it can fall behind specialized models

Text-embedding-3-small shows its limits on multilingual corpora, code-heavy indexes, and long-document retrieval. BGE-M3 explicitly targets multilingual and cross-lingual retrieval with SOTA claims on MIRACL and MKQA. Voyage AI positions its models for top retrieval accuracy with reranking support and (per Prem AI's 2026 comparison) a 128k-token context window on voyage-3-large.

| Weakness | text-embedding-3-small | BGE-M3 | Voyage AI |
|---|---|---|---|
| Multilingual recall (MIRACL) | Improved vs ada-002; no SOTA claim | SOTA claim (FlagEmbedding) | Not a primary multilingual focus |
| Cross-lingual QA (MKQA) | No specific claim | SOTA claim (FlagEmbedding) | — |
| Long-doc retrieval | 8,191-token hard limit | 8,192-token limit (BAAI model card) | ~128k (secondary¹) |
| Reranking pipeline | External reranker needed | ColBERT/sparse hybrid built-in (FlagEmbedding) | Native reranker offered (Voyage docs) |
| Code-heavy corpora | General-purpose training | General-purpose training | — |

For truly multilingual corpora (10+ languages, mixed-script queries), the practical choice is BGE-M3 self-hosted or Qwen3 Embedding for broader language support and longer context. For retrieval accuracy on English legal, medical, or financial text where precision matters more than cost, Voyage AI's reranking pipeline is the argument.

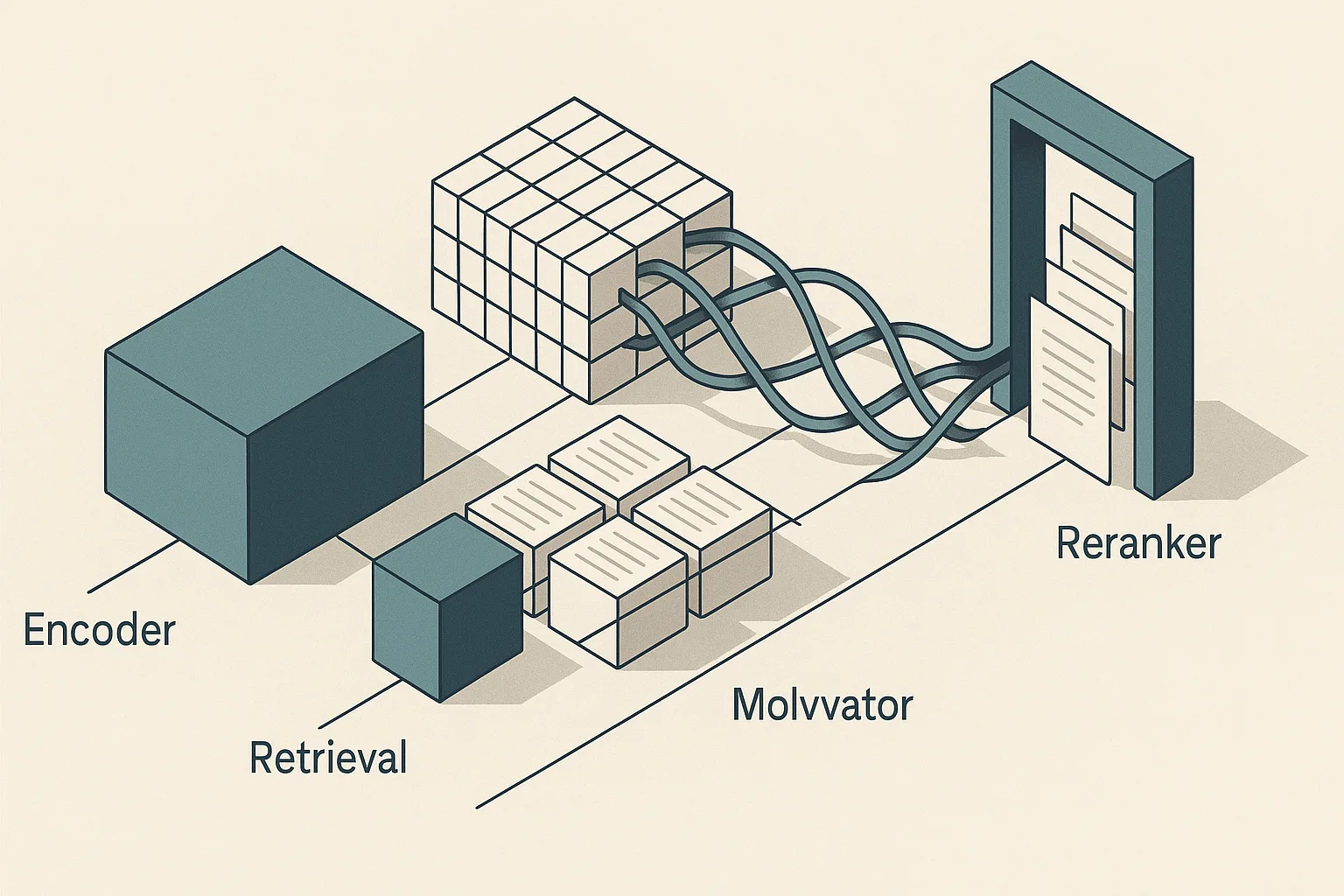

BGE-M3 and other self-hosted BGE options

BGE-M3 is the dominant self-hosted multilingual embedding model in 2026 because it combines three retrieval methods in a single checkpoint: dense, sparse (learned lexical weights that fill the role BM25 plays in a hybrid stack), and multi-vector (ColBERT-style). The FlagEmbedding repository states it is "the first embedding model which supports all three retrieval methods, achieving new SOTA on multi-lingual (MIRACL) and cross-lingual (MKQA) benchmarks." The Hugging Face model card lists 1,024 dimensions and 8,192 sequence length, and the BGE-M3 paper provides the technical basis for that architecture.
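To make the three retrieval methods concrete, here is a rough sketch of how a dense score, a sparse lexical score, and a multi-vector score could be computed and blended for one query-document pair. It is plain Python over already-computed outputs, not the FlagEmbedding API; the function names and the fusion weights are illustrative assumptions that you would tune on your own corpus.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def dense_score(query_vec, doc_vec):
    """Single-vector similarity; equals cosine similarity for unit-length vectors."""
    return dot(query_vec, doc_vec)


def sparse_score(query_weights, doc_weights):
    """Lexical match: sum of weight products over tokens present in both sides."""
    return sum(w * doc_weights[tok] for tok, w in query_weights.items() if tok in doc_weights)


def multi_vector_score(query_vecs, doc_vecs):
    """ColBERT-style late interaction: best document match per query token, averaged."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs) / len(query_vecs)


def hybrid_score(dense, sparse, multi, weights=(0.4, 0.2, 0.4)):
    """Weighted sum of the three scores; the default weights are placeholders."""
    w_dense, w_sparse, w_multi = weights
    return w_dense * dense + w_sparse * sparse + w_multi * multi
```

The sparse term is what helps on exact identifiers and rare tokens, while the multi-vector term rewards token-level alignment that a single pooled vector can blur.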

| Factor | BGE-M3 | BAAI/bge-large-en-v1.5 |
|---|---|---|
| Dimensions | 1,024 | 1,024 |
| Context | 8,192 tokens | 512 tokens |
| Languages | 100+ | English-focused |
| Retrieval methods | Dense + sparse + multi-vector | Dense only |
| Reranker compatibility | BGE reranker (BAAI) | BGE reranker |
| Deployment | GPU recommended | GPU or CPU |
| MIRACL performance | SOTA claim | Not evaluated |

Self-hosting shifts cost from API spend to infrastructure: GPU memory for inference, model update cycles, and serving uptime. BGE-M3 runs on a single A100 40GB for batch inference, though the exact hardware minimum varies by batch size and sequence length. If you need reranking, pair with a BGE reranker from BAAI for the strongest same-family evaluation alignment.

Why BGE-M3 is attractive for multilingual corpora

BGE-M3 is the default answer to "which embedding model is best for multilingual search?" among self-hosted deployments because it was explicitly trained and evaluated across 100+ languages, and its MIRACL and MKQA SOTA claims are the most specific multilingual retrieval evidence available from any model in this comparison. For broader language coverage with a longer context window, Qwen3 Embedding-8B extends to 32k tokens and user-defined output dimensions up to 4,096.

| Scenario | Recommended model | Rationale |
|---|---|---|
| Multilingual RAG, self-hosted, ≤8k tokens | BGE-M3 | SOTA multilingual claims, all three retrieval methods |
| Multilingual RAG, long docs (>8k tokens) | Qwen3 Embedding-8B | 32k context, 100+ languages |
| Low-budget multilingual, CPU-only | BAAI/bge-large-en-v1.5 (if English-only) or BGE-M3 small | Smaller footprint |
| Code-heavy multilingual corpus | BGE-M3 + BM25 hybrid | Sparse retrieval handles lexical code tokens |
| Managed multilingual | Cohere embed-v4 | Selectable dims, enterprise API |

Evaluate BGE-M3 on your actual language mix with corpus-native queries. MIRACL covers 18 languages; if your corpus spans 40+, run your own Recall@10 probe before treating leaderboard averages as predictive.

Operational cost of self-hosting and updating indexes

Switching from OpenAI text-embedding-3-small (1,536 dims, 8,191-token context) to BGE-M3 (1,024 dims, 8,192-token context) requires a complete re-embedding of your corpus. The dimension mismatch alone makes mixed-index retrieval impossible — a query embedded with BGE-M3 cannot be compared to document vectors from text-embedding-3-small using dot product or cosine similarity.

Watch Out: Re-embedding is never just the API cost. At $0.02/1M tokens for OpenAI, embedding 1B tokens costs $20 — but the production migration involves: (1) provisioning a parallel index while keeping the old one live, (2) dual-writing new documents to both indexes during the transition window, (3) running Recall@10 validation on the new index before cutover, and (4) rebuilding ANN graph structures (HNSW or IVF-PQ) which can take hours on billion-scale indexes. Cache invalidation compounds this: if you use semantic caching on query embeddings, the cache becomes stale the moment you switch models. Plan migration as a multi-sprint project, not a weekend task.
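The migration steps above can be sketched as a small state machine. This is a toy in-memory model, assuming two hypothetical embedding callables and a Recall@10 figure you measured separately; a real migration would wrap your vector database client and ANN rebuild jobs instead of Python dicts.

```python
class DualWriteIndex:
    """Toy stand-in for an old and a new vector index during a model migration."""

    def __init__(self, embed_old, embed_new):
        self.embed_old = embed_old  # hypothetical callable: text -> vector (old model)
        self.embed_new = embed_new  # hypothetical callable: text -> vector (new model)
        self.old, self.new = {}, {}
        self.serving = "old"        # the old index stays live until cutover

    def upsert(self, doc_id, text):
        """Dual-write: every new or changed document lands in both indexes."""
        self.old[doc_id] = self.embed_old(text)
        self.new[doc_id] = self.embed_new(text)

    def backfill(self, corpus):
        """Re-embed existing documents into the new index without touching the old one."""
        for doc_id, text in corpus.items():
            if doc_id not in self.new:
                self.new[doc_id] = self.embed_new(text)

    def cut_over(self, new_recall, baseline_recall):
        """Switch reads to the new index only if validation meets the baseline."""
        if new_recall < baseline_recall:
            raise RuntimeError("new index failed Recall@10 validation; staying on old index")
        self.serving = "new"

    def active_index(self):
        return self.new if self.serving == "new" else self.old


def cache_key(model_id, query):
    """Namespace semantic-cache entries by model so a switch cannot serve stale vectors."""
    return f"{model_id}:{query}"
```

Keeping the old index readable until `cut_over` succeeds is what makes the migration reversible, and keying the cache by model ID sidesteps the stale-cache problem instead of requiring a flush at cutover time.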

For BGE-M3 self-hosting specifically, model version updates (when BAAI releases an improved checkpoint) also require re-embedding if the latent space shifts. Pinning to a specific commit hash in your serving config and validating before upgrading is standard practice.

Voyage AI, Cohere, E5, and Qwen3 Embedding

Beyond the three anchor models, four alternatives earn consideration for specific workloads. Voyage AI targets maximum retrieval accuracy with native reranker support. Cohere embed-v4 offers the most flexible dimension control of any managed API. Qwen3 Embedding-8B delivers 32k context and 100+ language coverage for self-hosted long-document retrieval. E5-Mistral provides a portable open checkpoint for teams that need embedding portability across deployment environments.

| Model | Dims | Context | Cost / 1M tokens | Multilingual | Reranker | Host |
|---|---|---|---|---|---|---|
| Voyage voyage-3-large | Model-dep. | 128k (secondary¹) | ~$0.06 (secondary¹) | Moderate | Native | Managed |
| Cohere embed-v4+ | 256–1,536 | max_tokens param | Tiered (contact) | Strong | Cohere Rerank | Managed |
| Qwen3 Embedding-8B | Up to 4,096 | 32,000 | Infra only | 100+ languages | External | Self-hosted |
| E5-Mistral | ~4,096 | ~32,768 | Infra only | Moderate | External | Self-hosted |

¹ Secondary source; verify at docs.voyageai.com.

Voyage AI for top-end retrieval accuracy

Voyage AI is positioned as the answer to "are Voyage embeddings better than OpenAI?" — and for retrieval-accuracy-first use cases, the answer is likely yes, at a cost. Voyage describes its platform as providing "cutting-edge embedding models and rerankers", and Prem AI's 2026 comparison consistently places voyage-3-large among the top retrieval performers. The native reranker integration matters: embedding quality and reranker quality compound, and Voyage's paired design means both are optimized for the same retrieval objective.

The price differential versus text-embedding-3-small is roughly 3× (~$0.06 vs ~$0.02/1M tokens, secondary figures). For a 10B-token corpus, that gap is $400 on the initial embed pass. In production the recurring cost matters more: 10M queries/day at an average of 128 tokens per query is 1.28B tokens/day, which costs ~$77/day at Voyage vs ~$26/day at OpenAI. Over a year, that ~$51/day difference adds up to roughly $18,700, enough to fund meaningful engineering time.
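The one-time and recurring costs are easy to recompute for your own traffic. The sketch below uses hypothetical helper names and takes the per-1M-token prices as inputs; the Voyage price is the secondary figure quoted in this article and should be replaced with the current official rate.

```python
def embed_cost_usd(total_tokens, price_per_million_usd):
    """API spend for embedding `total_tokens` at a flat per-1M-token price."""
    return total_tokens / 1_000_000 * price_per_million_usd


def daily_query_cost_usd(queries_per_day, avg_tokens_per_query, price_per_million_usd):
    """Recurring spend for embedding incoming queries, per day."""
    return embed_cost_usd(queries_per_day * avg_tokens_per_query, price_per_million_usd)


OPENAI_PRICE = 0.02  # USD per 1M tokens (official figure cited in this article)
VOYAGE_PRICE = 0.06  # USD per 1M tokens (secondary figure; verify before relying on it)

initial_gap = embed_cost_usd(10_000_000_000, VOYAGE_PRICE - OPENAI_PRICE)
daily_gap = (daily_query_cost_usd(10_000_000, 128, VOYAGE_PRICE)
             - daily_query_cost_usd(10_000_000, 128, OPENAI_PRICE))

print(f"initial embed gap: ${initial_gap:,.0f}")
print(f"daily query gap:   ${daily_gap:,.2f}")
print(f"annual query gap:  ${daily_gap * 365:,.0f}")
```

Swapping in your own query volume and average query length shows quickly whether the recurring gap or the one-time gap dominates for your workload.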

Pro Tip: Voyage's premium is justified when your retrieval quality directly drives revenue — legal discovery, medical literature search, financial document retrieval — and you can measure a Recall@10 improvement that translates to user outcomes. For internal tooling or developer documentation RAG, the gap in accuracy relative to text-embedding-3-small is unlikely to be perceptible to end users, and the cost premium is hard to justify.

Cohere and Qwen3 for multilingual and enterprise search

Cohere embed-v4 and newer is the managed API choice when you need fine-grained control over output dimensions alongside enterprise governance. The API exposes selectable output dimensions of 256, 512, 1,024, and 1,536 and a max_tokens parameter to control per-input length. This dimension flexibility means you can tune the storage/quality trade-off without switching models — an operationally significant advantage for teams managing index cost at scale.

Qwen3 Embedding-8B covers 100+ languages with a 32k-token context window and user-defined output dimensions up to 4,096. For corpora with long multilingual documents, such as regulatory filings in multiple languages or international technical standards, the context window advantage over BGE-M3 (8,192 tokens) is decisive. The trade-off is serving cost: an 8B-parameter model demands significantly more GPU memory than BGE-M3.

| Model | Multilingual strength | Context | Dim flexibility | Enterprise fit |
|---|---|---|---|---|
| Cohere embed-v4+ | Strong (managed) | max_tokens | 256 / 512 / 1,024 / 1,536 | High (SLAs, governance) |
| Qwen3 Embedding-8B | 100+ languages | 32,000 | Up to 4,096 | Moderate (self-hosted) |

E5-style open models when you want portability

E5-Mistral and the broader E5 family (via sentence-transformers) represent the portability-first path: open checkpoints you can run on any hardware, quantize for edge deployment, and version-pin without vendor dependency. The sentence-transformers library makes loading and batching these models straightforward in Python production pipelines.

A verified primary-source MTEB score for E5-Mistral against the 2026 leaderboard was not available in the evidence collected for this article. E5-Mistral has historically performed competitively on MTEB retrieval subsets, but treat any specific number from secondary sources as directional until you verify against the official MTEB leaderboard.

Watch Out: Benchmark fairness breaks down when you compare a hosted managed model (Voyage, OpenAI) against a self-hosted open checkpoint (E5-Mistral, BGE-M3) without matching evaluation protocol. Managed APIs often serve the base model without reranking; your self-hosted setup may include a cross-encoder reranker that inflates Recall@10. Always label whether your benchmark reflects embedding-only or reranked retrieval, and whether you're comparing API latency versus local inference latency — these are different systems.

Benchmark numbers that are actually comparable

The table below presents only claims grounded in primary sources or clearly labeled secondary sources. There is no apples-to-apples official Recall@10 table covering all five models on the same dataset — such a comparison requires running evaluations on a shared corpus with matching protocol.

| Model | Benchmark | Metric | Score | Source type |
|---|---|---|---|---|
| BGE-M3 | MIRACL (multilingual retrieval) | NDCG@10 (claimed SOTA) | New SOTA (no abs. # in official repo) | Primary — FlagEmbedding repo |
| BGE-M3 | MKQA (cross-lingual QA) | Recall (claimed SOTA) | New SOTA (no abs. # in official repo) | Primary — FlagEmbedding repo |
| OpenAI text-embedding-3-small | MTEB multilingual | Relative improvement | Better than ada-002 | Primary — OpenAI docs |
| Voyage voyage-3-large | MTEB retrieval | Not disclosed publicly | Top-tier positioning | Primary — Voyage docs |
| Qwen3 Embedding-8B | Multilingual coverage | Language count | 100+ languages | Primary — model card |

The honest position: the benchmark evidence for this decision set is directional, not definitive. No vendor publishes a head-to-head table against all competitors on the same retrieval corpus. Run your own Recall@10 evaluation on a random 1,000-query sample from your index before committing to any model at scale.

How to read MTEB averages without overfitting to them

MTEB is a suite of tasks (retrieval, classification, clustering, STS, reranking, summarization), and the overall average weights all tasks equally. A model tuned for semantic textual similarity can post a high MTEB average while underperforming on retrieval-specific subsets. When selecting an embedding model for RAG, filter to the MTEB retrieval subset (BEIR benchmark family) and the multilingual retrieval tasks (MIRACL, MKQA) that match your corpus.

Language subset matters as much as task subset. A model may lead on English BEIR while ranking below BGE-M3 on Korean or Arabic MIRACL. Domain mix matters too: legal text, code, and conversational queries have different token distributions that shift which model's training data composition provides an advantage.

Pro Tip: Pull the per-task MTEB breakdown, not just the overall average. Two models within 1 point of each other on the leaderboard can be 5+ points apart on the retrieval subset tasks you actually care about. If your corpus is code-heavy, check whether the model was evaluated on CodeSearchNet or similar code retrieval benchmarks — the MTEB standard suite underweights code retrieval relative to its importance for developer tooling RAG.
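A small helper makes the gap between the overall average and the retrieval subset visible once you have per-task scores in hand. The sketch below is illustrative only: the function names are hypothetical, and the scores are synthetic placeholders chosen to show the effect, not real leaderboard numbers for any model.

```python
from statistics import mean


def overall_average(scores):
    """Mean over every task score, regardless of task type."""
    return mean(v for tasks in scores.values() for v in tasks)


def subset_average(scores, task_type):
    """Mean over the scores of a single task type, e.g. 'retrieval'."""
    return mean(scores[task_type])


# SYNTHETIC placeholder scores for two imaginary models (not real MTEB results).
model_a = {"retrieval": [50, 52], "classification": [70, 72], "sts": [80, 82]}
model_b = {"retrieval": [56, 58], "classification": [68, 70], "sts": [76, 78]}

for name, scores in (("model_a", model_a), ("model_b", model_b)):
    print(name, round(overall_average(scores), 2), subset_average(scores, "retrieval"))
```

With these placeholder values the two models tie on the overall average while differing by several points on retrieval, which is the pattern the per-task breakdown is meant to expose.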

What the scores imply for RAG ablations

Benchmark differences between embedding models interact with your chunking strategy. Text-embedding-3-small at 8,191 tokens and BGE-M3 at 8,192 tokens both accommodate chunks up to roughly 6,000 tokens with a 25% overlap buffer — but that does not mean large chunks are optimal. Embedding quality typically degrades as chunk size increases because the model must compress more semantic content into a fixed-dimension vector, dispersing the signal that retrieval queries are targeting.

| Corpus type | Recommended chunk size | Overlap | Model implication |
|---|---|---|---|
| Documentation / prose | 400–800 tokens | 100–200 tokens | All models perform acceptably |
| Legal / long-form documents | 800–1,500 tokens | 200–400 tokens | Voyage (128k context) reduces chunk count |
| Code (function-level) | Function boundaries | None | Lexical hybrid (BGE-M3 sparse) helps |
| Multilingual mixed | 400–600 tokens | 100 tokens | BGE-M3 or Qwen3 Embedding |

If you change your embedding model, revisit chunk size. The optimal granularity is model-dependent: a model with stronger positional encoding for long sequences may sustain retrieval quality at larger chunks where another model degrades. Run Recall@10 at chunk sizes of 256, 512, and 1,024 tokens for each candidate model on your corpus before treating any default as correct.
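A chunk-size sweep of this kind can be sketched with a sliding-window chunker and a pluggable evaluation step. The names below are hypothetical, the input is assumed to be an already-tokenized document (a list of token IDs from whichever tokenizer your model uses), and `evaluate` stands in for your own embed, index, and Recall@10 routine.

```python
def chunk_tokens(tokens, chunk_size, overlap):
    """Split a token list into windows of `chunk_size` that share `overlap` tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks


def sweep_chunk_sizes(tokens, evaluate, sizes=(256, 512, 1024), overlap_ratio=0.25):
    """Run `evaluate(chunks)` once per chunk size and collect the results by size."""
    results = {}
    for size in sizes:
        chunks = chunk_tokens(tokens, size, int(size * overlap_ratio))
        results[size] = evaluate(chunks)
    return results


# `len` is only a stand-in evaluator that counts chunks; pass your Recall@10 routine instead.
print(sweep_chunk_sizes(list(range(2000)), evaluate=len))
```

Keep `chunk_size` below the model's context limit, and rerun the sweep for each candidate model, since the best size for one model is not guaranteed to carry over to another.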

Decision matrix: which embedding model to choose

| Criterion | text-embedding-3-small | BGE-M3 | Voyage AI | Cohere embed-v4 | Qwen3 Embedding-8B |
|---|---|---|---|---|---|
| Budget (managed) | Lowest (~$0.02/1M) | N/A (self-hosted) | ~$0.06/1M (secondary) | Tiered | N/A |
| Multilingual | Improved vs ada-002 | SOTA (MIRACL/MKQA) | Moderate | Strong | 100+ languages |
| Context window | 8,191 tokens | 8,192 tokens | 128k (secondary) | max_tokens param | 32,000 tokens |
| Dimension control | Yes (Matryoshka) | No | Yes (truncation) | Yes (256–1,536) | Yes (up to 4,096) |
| Reranker | External | BGE Reranker (BAAI) | Native | Cohere Rerank | External |
| Migration tolerance | Low needed | High (self-host setup) | Low needed | Low needed | High (self-host setup) |
| Self-hosting required | No | Yes | No | No | Yes |

Choose OpenAI text-embedding-3-small if you need the simplest default

Text-embedding-3-small is the correct starting point when: you are building a new RAG system, your corpus is English-dominant or lightly multilingual, you do not want to manage GPU infrastructure, and you need to validate retrieval quality before committing to a specialized model.

Bottom Line: Start with text-embedding-3-small at 1,536 dims. Measure Recall@10 on your corpus. Only migrate to a specialized model if the measurement reveals a gap — because migration requires full re-embedding, index reconstruction, and operational coordination that costs far more than the token price differential.

Choose BGE-M3 if multilingual self-hosting matters

BGE-M3 is the correct choice when: your corpus spans multiple languages (especially non-Latin scripts), you need dense + sparse hybrid retrieval from a single model, you have GPU infrastructure to self-host, and you need to keep data on-premises.

Bottom Line: BGE-M3 on a GPU node with a BGE reranker is the strongest open-source multilingual retrieval stack available in 2026. Ideal corpus profile: 5M+ documents, 10+ languages, queries that mix languages within a session, and a team that can manage model serving. Evaluate on MIRACL language subsets that match your corpus before treating leaderboard claims as your production benchmark.

Choose Voyage AI or Cohere when accuracy or enterprise constraints dominate

Voyage AI is the right choice when retrieval precision directly drives revenue and you can absorb the ~3× cost premium versus text-embedding-3-small. The native reranker integration is the differentiator — paired embedding and reranking models optimized together outperform mix-and-match stacks on precision-sensitive retrieval tasks.

Cohere embed-v4 is the right choice when your organization needs a managed API with enterprise SLAs, fine-grained dimension control, and a vendor with explicit data processing agreements. The selectable output dimensions (256/512/1,024/1,536) make it uniquely suited for deployments where storage cost is a first-class constraint alongside retrieval quality.

Bottom Line: Choose Voyage when Recall@10 on your domain-specific corpus, measured after enabling the Voyage reranker, beats your current baseline by a margin that justifies the cost delta. Choose Cohere when governance, data handling agreements, and dimension flexibility matter more than raw leaderboard positioning.

FAQ

What is the best embedding model for RAG?

Start with OpenAI text-embedding-3-small for the simplest managed baseline. If your corpus is multilingual and self-hosted, BGE-M3 is the stronger open-source option. If retrieval precision directly drives revenue and you can absorb a premium, Voyage AI is the managed specialization to test next.

Is OpenAI text-embedding-3-small better than BGE-M3?

It depends on corpus language, hosting constraints, and retrieval method. OpenAI text-embedding-3-small wins on operational simplicity and cost. BGE-M3 wins on multilingual coverage, hybrid dense+sparse retrieval, and self-hosted control.

Are Voyage embeddings better than OpenAI?

On retrieval accuracy, Voyage AI is often the better test candidate, especially when paired with its native reranker. The trade-off is cost: secondary 2026 comparisons such as Prem AI's benchmark roundup place Voyage at roughly 3× the price of OpenAI text-embedding-3-small.

Which embedding model is best for multilingual search?

For self-hosted deployments, BGE-M3 is the default choice. For longer multilingual documents, Qwen3 Embedding-8B extends context to 32k tokens. For managed enterprise search, Cohere embed-v4 gives you dimension control and governance.

Do I need to re-embed my corpus if I switch embedding models?

Yes, always. Vectors from OpenAI text-embedding-3-small, BGE-M3, Voyage AI, and Cohere embed-v4 are not interoperable across model families, so the index must be rebuilt when you switch.

Sources & References

- Primary — OpenAI: New embedding models and API updates — pricing and model performance claims for text-embedding-3-small

- Primary — OpenAI API docs: Embeddings guide — multilingual performance statements and usage guidance

- Primary — OpenAI Cookbook: Embedding long inputs — context handling guidance for long inputs

- Primary — BAAI/bge-m3 model card — dimensions, sequence length, and model metadata

- Primary — FlagOpen/FlagEmbedding GitHub repository — BGE-M3 retrieval-method support and benchmark claims

- Primary — Voyage AI documentation — embedding and reranker positioning

- Primary — Voyage AI multimodal embeddings docs — truncation configuration reference

- Primary — Cohere Embed API reference — output dimensions and max_tokens parameter

- Supplementary — Cohere embed documentation — additional product context

- Primary — Qwen3 Embedding-8B model page — 32k context, 100+ languages, and dimension options

- Reference — MTEB benchmark index on CodeSOTA — benchmark suite reference

- Secondary — My Engineering Path embeddings comparison — comparison framing

- Secondary — Prem AI blog: Best embedding models for RAG 2026 — secondary Voyage pricing and context figures

- Primary — BGE-M3 paper — technical basis for BGE-M3 architecture

Keywords: OpenAI text-embedding-3-small, BGE-M3, Voyage AI, Cohere embed-v4, Qwen3 Embedding, E5-Mistral, BAAI/bge-large-en-v1.5, FlagEmbedding, sentence-transformers, MTEB, Recall@10, BM25, cross-encoder reranker, Chunking, Matryoshka Representation Learning