How We Compared LangChain, LlamaIndex, and LangGraph

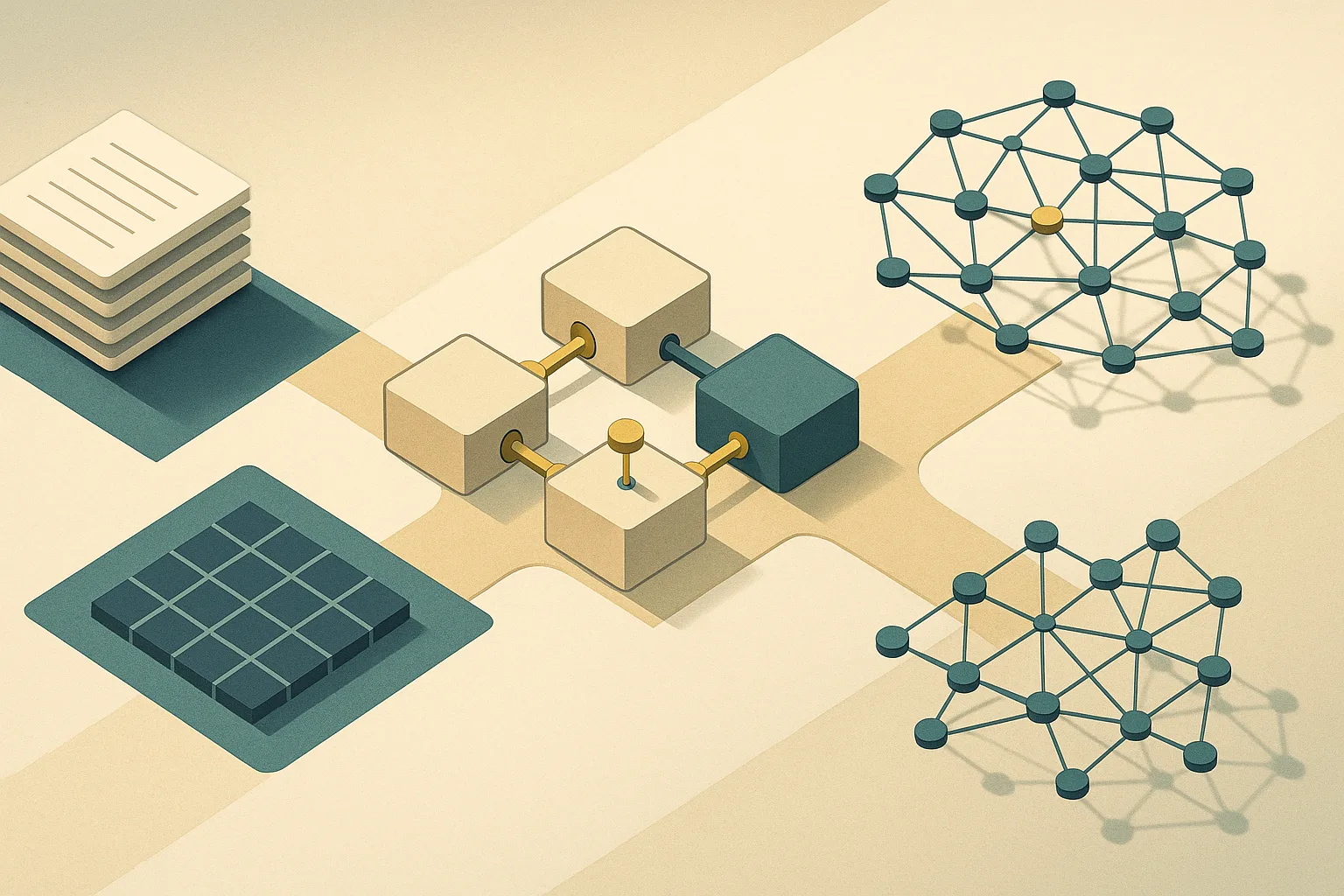

LangChain, LlamaIndex, and LangGraph solve different problems in a production RAG stack, and conflating them produces bad architectural decisions. This comparison holds each framework against five production criteria: retrieval depth, orchestration and statefulness, observability, code volume, and upgrade stability.

The frameworks' own documentation clarifies the boundaries. LangChain describes itself as "a framework for building agents and LLM-powered applications" with "composable tools and integrations for working with language models" and "off-the-shelf chains" for higher-level tasks. LlamaIndex positions its high-level API as enabling users to "ingest and query their data in 5 lines of code," with lower-level customization of indices, retrievers, query engines, and reranking modules. LangGraph describes itself as the graph-based stateful runtime in the LangChain ecosystem — one that "can be used standalone, [and] also integrates seamlessly with any LangChain product."

Critically, the production comparison is not LangChain versus LlamaIndex in isolation. The real choice is LangChain + LangGraph + LangSmith (the full production stack) versus LlamaIndex + Workflows + an external observability tool such as Langfuse or Arize Phoenix.

| Criterion | LangChain (base) | LangGraph | LlamaIndex 0.10 |

|---|---|---|---|

| Retrieval depth | Generic chain assembly | Delegates to retrieval tool | Purpose-built indices + query engines |

| Orchestration / statefulness | Stateless chains | Stateful graph with checkpointing | Lightweight event-driven Workflows |

| Observability | LangSmith (first-party) | LangSmith (first-party) | Langfuse / Arize Phoenix (third-party) |

| Code volume for pure RAG | High (assembly overhead) | High + graph wiring | Low (5-line baseline) |

| Upgrade stability | Stable 0.3 core | Maturing 0.2 | Stable 0.10 core |

At-a-Glance Comparison for Production RAG Teams

LlamaIndex is the faster path when retrieval is the primary workload. LangGraph is the stronger choice once the application requires stateful orchestration, branching control flow, or human review gates.

| Stack | Fastest path to working RAG | Operational risk | Best-fit use case |

|---|---|---|---|

| LangChain + LangGraph | Medium — requires graph wiring | Stack surface area (three products) | Stateful agents, approval workflows, multi-step branching |

| LlamaIndex 0.10 | Lowest — purpose-built abstractions | Observability toolchain fragmentation | Document-centric Q&A, ingestion-heavy pipelines, multi-index search |

| LangGraph | Medium — stateful runtime, not retrieval-first | Persistence setup and graph design overhead | Human-in-the-loop workflows, fault-tolerant branching, resumable agents |

| Hybrid (LlamaIndex retrieval + LangGraph orchestration) | Medium — clean boundary required | Integration seam between the two stacks | High-volume RAG with downstream stateful decision gates |

As LlamaIndex's documentation states, its high-level API "allows beginner users to use LlamaIndex to ingest and query their data in 5 lines of code." LangGraph's persistence layer, on the other hand, "enables human-in-the-loop workflows, conversational memory, time travel debugging, and fault-tolerant execution." The LlamaIndex Workflows documentation reviewed for this comparison describes no direct equivalent for those capabilities.

LangChain: When the Stack Needs Orchestration

LangChain is the right foundation when the application is an agent or a multi-step workflow rather than a pure retrieval pipeline. As its README states, "LangChain is a framework for building agents and LLM-powered applications" — and in 2026, that production story runs through LangGraph for the runtime and LangSmith for observability.


LangSmith's role is explicit in the official documentation: "Helpful for agent evals and observability. Debug poor-performing LLM app runs, evaluate agent trajectories, gain visibility in production, and improve performance over time." That tight first-party integration between the agent runtime (LangGraph) and the observability layer (LangSmith) is the primary operational advantage of the LangChain stack.

Pro Tip: In production, treat LangChain as three co-deployed products: LangChain 0.3 for component interfaces and integrations, LangGraph 0.2 as the stateful graph runtime, and LangSmith for tracing and eval. Evaluating LangChain without LangGraph and LangSmith understates the stack's production readiness and overstates its complexity per feature.

Where LangChain/LangGraph Wins

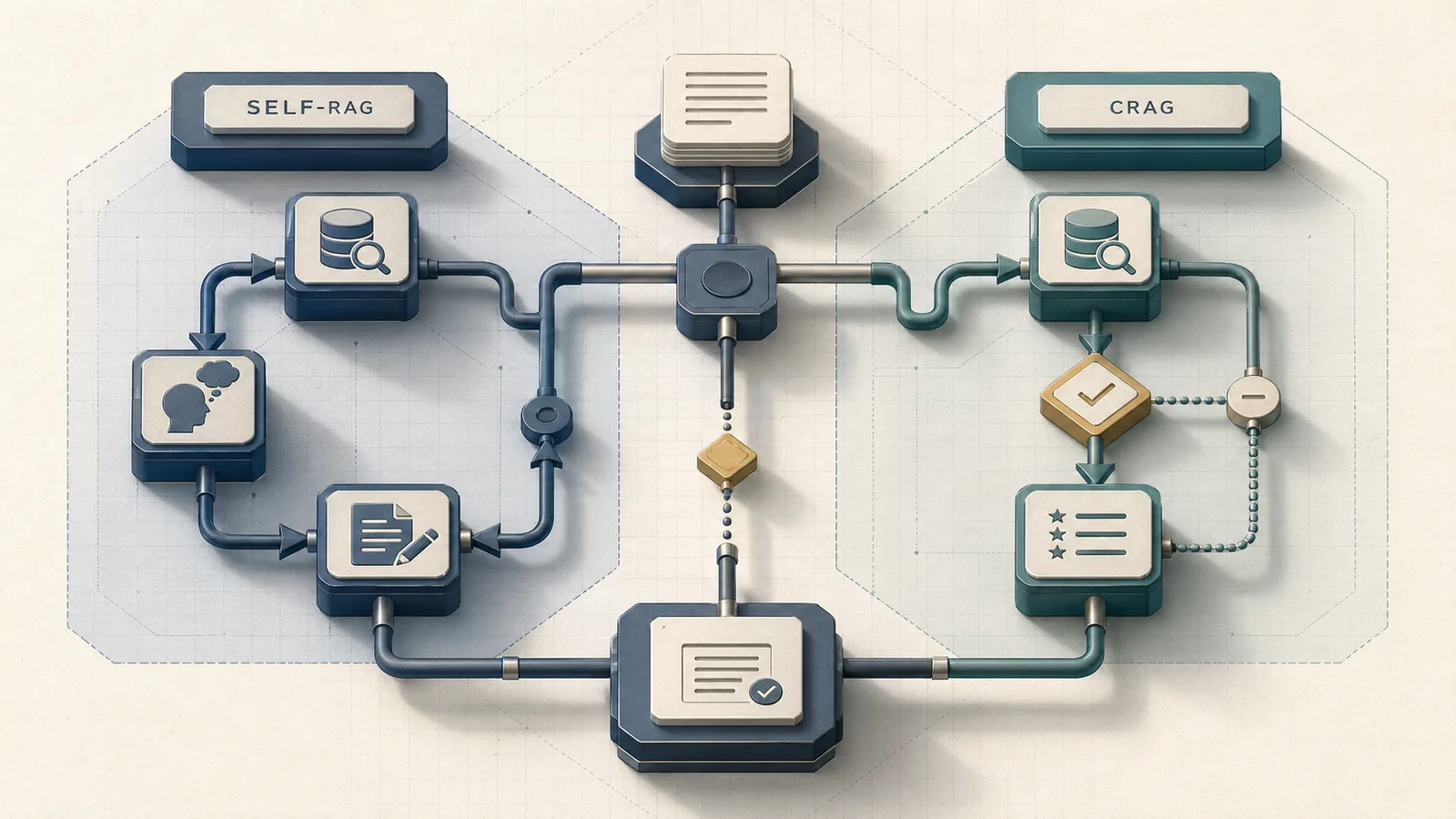

LangGraph's checkpointing is the architectural feature that separates it from plain chain assembly and from LlamaIndex Workflows. The persistence layer "enables human-in-the-loop workflows, conversational memory, time travel debugging, and fault-tolerant execution" — specifically because LangGraph saves a checkpoint at every superstep, giving the runtime a full state snapshot to resume from after a failure or a human review pause.

For RAG systems with approval gates — a compliance review before a generated response is sent, a routing decision based on retrieved document classification, or a multi-turn agent that must resume sessions across days — LangGraph's checkpointing is not a nice-to-have. It is the mechanism that makes SLA recovery deterministic rather than retry-dependent.

Production Note: LangGraph's Agent Server handles checkpointing automatically, which means teams using the managed deployment path may not need to configure persistence manually. For self-hosted deployments, checkpointers must be explicitly wired; without a configured checkpointer, state persistence and recovery are unavailable. This distinction matters when scoping incident response runbooks — confirm your deployment path before writing SLA commitments around recovery time.
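The pause-and-resume behavior behind an approval gate can be pictured without any framework. The following is a minimal, framework-agnostic sketch in plain Python, not LangGraph's API: `CheckpointStore`, `run_until_gate`, `resume`, and the thread ID are hypothetical names chosen for illustration. It shows only the underlying idea that a snapshot saved at the gate lets a later call continue without redoing retrieval or generation.

```python
import json
from typing import Any


class CheckpointStore:
    """Hypothetical in-memory store; a real deployment would use a database."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def save(self, thread_id: str, state: dict[str, Any]) -> None:
        # Serialize so the snapshot is independent of later mutations.
        self._data[thread_id] = json.dumps(state)

    def load(self, thread_id: str) -> dict[str, Any]:
        return json.loads(self._data[thread_id])


def run_until_gate(store: CheckpointStore, thread_id: str, question: str) -> dict[str, Any]:
    """Run retrieval and drafting, then pause for human review."""
    state: dict[str, Any] = {"question": question, "status": "running"}
    state["context"] = f"retrieved passages for: {question}"  # stand-in for retrieval
    state["draft"] = f"Draft answer based on {state['context']}"  # stand-in for generation
    state["status"] = "awaiting_approval"
    store.save(thread_id, state)
    return state


def resume(store: CheckpointStore, thread_id: str, approved: bool) -> dict[str, Any]:
    """Continue from the saved snapshot once a reviewer has decided."""
    state = store.load(thread_id)
    if state["status"] != "awaiting_approval":
        raise ValueError("thread is not paused at the approval gate")
    state["status"] = "sent" if approved else "rejected"
    store.save(thread_id, state)
    return state


store = CheckpointStore()
paused = run_until_gate(store, "thread-1", "What is our refund policy?")
final = resume(store, "thread-1", approved=True)
print(paused["status"], "->", final["status"])
```

Because `resume` reads only from the store, the reviewer's decision can arrive minutes or days later, in a different process, as long as the store itself is durable.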

Where LangChain Adds Friction

LangChain's composability is a liability for retrieval-heavy pipelines. Its architecture centers on "composable tools and integrations" and "off-the-shelf chains" — which means building a retrieval pipeline requires assembling document loaders, text splitters, embedding wrappers, vector store integrations, and retrieval chains from separate components, each with its own configuration surface and update cadence.

For an application where retrieval is the product — high-volume Q&A over large document corpora, multi-index routing, or aggressive reranking — every additional composable part is a maintenance liability. Each new retriever, store, splitter, or prompt chain adds another dependency boundary, another release track, and another place where a minor version bump can alter behavior.

Watch Out: The maintenance cost of a retrieval-heavy LangChain pipeline scales with the number of composable parts, not with the complexity of the retrieval logic itself. A pipeline that replaces an embedding model, swaps a vector store from Qdrant to Weaviate, or adds a reranking step touches multiple independently-versioned components. LlamaIndex's purpose-built retrieval abstractions concentrate that change surface in fewer modules.

LlamaIndex: When Retrieval Is the Product

LlamaIndex wins on retrieval-heavy workloads because its architecture is built around retrieval primitives rather than assembled from them. The high-level API delivers a working ingest-and-query pipeline in 5 lines; the low-level API exposes "data connectors, indices, retrievers, query engines, and reranking modules" as individually customizable extension points — not as a composition of generic components.

LlamaIndex Workflows add lightweight event-driven orchestration for agents and RAG flows. They reduce boilerplate for common patterns without requiring teams to adopt the full LangGraph graph model for applications where statefulness is not a core requirement.

Pro Tip: If your application's primary loop is ingest → index → retrieve → generate, start with LlamaIndex 0.10. Its retrieval primitives (VectorStoreIndex, QueryEngine, and configurable reranking via Hugging Face sentence-transformers) map directly to that loop with minimal translation overhead. Reserve LangGraph for the application layer above retrieval when you need state, branching, or human review.

Why the Retrieval Primitives Matter

The structural difference between LlamaIndex and LangChain-style assembly is not philosophical; it shows up in codepath length and in the number of abstraction layers a change propagates through.

LlamaIndex exposes indices, retrievers, query engines, and reranking modules as first-class retrieval primitives. A "Workflow in LlamaIndex is a lightweight, event-driven abstraction used to chain together several events" — meaning orchestration composes over retrieval primitives rather than wrapping them in generic chain objects.

| Capability | LlamaIndex 0.10 | LangChain-style assembly | Operational effect |

|---|---|---|---|

| Index type abstraction | VectorStoreIndex, SummaryIndex, KnowledgeGraphIndex built-in | Requires manual retriever configuration per store | Fewer modules to rewire when the corpus or store changes |

| Query engine | Native QueryEngine with reranking hooks | Assembled from retriever + prompt + LLM chain | Less codepath length for the common ingest-query loop |

| Reranking | First-class module, drop-in | Requires custom chain step or community package | Lower maintenance overhead when ranking strategy changes |

| Workflow orchestration | Lightweight event-driven Workflows | LangGraph graph nodes (heavier, but stateful) | Less boilerplate for non-stateful flows |

| Beginner baseline | 5 lines (documented) | Significantly more; composable by design | Faster time-to-first-working-RAG |

For applications where retrieval logic changes frequently — new corpora, updated embedding models scored against RAGAS metrics, A/B testing of reranking strategies — LlamaIndex's purpose-built layer reduces the blast radius of each change.
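The blast-radius argument is easier to see with the reranker treated as a swappable step. The sketch below is a toy in plain Python, not LlamaIndex's reranking module: the `retrieve`, `no_rerank`, `shortest_first`, and `answer_context` names are hypothetical, and the scoring is simple word overlap rather than an embedding or cross-encoder model. It illustrates only that an A/B test of ranking strategies can touch a single argument.

```python
from typing import Callable

Document = str
Reranker = Callable[[str, list[Document]], list[Document]]


def retrieve(query: str, corpus: list[Document], top_k: int = 2) -> list[Document]:
    """Toy first-stage retriever: rank by number of shared lowercase words."""
    terms = set(query.lower().split())
    ranked = sorted(
        corpus,
        key=lambda doc: len(terms & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:top_k]


def no_rerank(query: str, docs: list[Document]) -> list[Document]:
    """Baseline arm of the A/B test: keep first-stage order."""
    return docs


def shortest_first(query: str, docs: list[Document]) -> list[Document]:
    """Toy reranking strategy: prefer concise passages."""
    return sorted(docs, key=len)


def answer_context(
    query: str, corpus: list[Document], reranker: Reranker = no_rerank
) -> list[Document]:
    # Swapping the strategy changes one argument, not the pipeline.
    return reranker(query, retrieve(query, corpus))


corpus = [
    "refund policy details covering every plan tier and regional exception in full",
    "refund policy is thirty days",
    "shipping takes five business days",
]
baseline = answer_context("refund policy", corpus)
reranked = answer_context("refund policy", corpus, reranker=shortest_first)
print(baseline[0])
print(reranked[0])
```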

Observability in a LlamaIndex Stack

LlamaIndex's documentation states that it "provides callbacks to help debug, track, and trace the inner workings of the library"; these callbacks can also collect durations and event counts. For production-grade tracing, teams either use the OpenInference callback integration to connect with Arize Phoenix (branded as LlamaTrace in the hosted version) or use Langfuse's native LlamaIndex integration.

This multi-tool approach works, but it introduces a configuration choice that the LangChain/LangSmith stack avoids by design.

Watch Out: LlamaIndex's observability is multi-tool by architecture: native callbacks, OpenInference/Arize Phoenix, and Langfuse are all valid but separately configured. If your team doesn't standardize on one pipeline at project start, you risk trace fragmentation — spans from ingestion appearing in one tool while query-engine spans land in another. Establish the observability stack in the infrastructure layer before writing application code, not as a post-launch retrofit.
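The kind of data that callback-style instrumentation collects, durations and event counts per event type, can be sketched in a few lines. This is a generic illustration in plain Python, not LlamaIndex's callback API: `TimingCollector` and the event names are hypothetical. Whatever tool a team standardizes on, this is roughly the information each span contributes.

```python
import time
from collections import Counter, defaultdict
from contextlib import contextmanager


class TimingCollector:
    """Hypothetical collector: records how often and how long each event type ran."""

    def __init__(self) -> None:
        self.counts = Counter()
        self.total_seconds = defaultdict(float)

    @contextmanager
    def event(self, event_type: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the event even if the wrapped block raised.
            self.counts[event_type] += 1
            self.total_seconds[event_type] += time.perf_counter() - start


collector = TimingCollector()

with collector.event("retrieve"):
    sum(range(10_000))  # stand-in for a retrieval call
with collector.event("retrieve"):
    sum(range(10_000))
with collector.event("llm"):
    sum(range(10_000))  # stand-in for a model call

for name, count in collector.counts.items():
    print(name, count, f"{collector.total_seconds[name]:.6f}s")
```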

Benchmarking the Production Trade-Offs

No single authoritative third-party benchmark covers code volume, recovery time, and observability overhead across these three stacks simultaneously. The tables in this section synthesize what the official documentation supports, using qualitative ranges where numeric benchmarks are not independently verified.

Code Volume and Time-to-First-Working-RAG

LlamaIndex's documented 5-line baseline is the clearest indicator of lower initial implementation overhead for retrieval-only pipelines. LangChain's design is explicitly composable — that composability is a feature for complex agents and a cost for pure retrieval.

Official docs support a qualitative conclusion rather than a numeric benchmark: LlamaIndex reduces assembly overhead because retrieval primitives are first-class, while LangChain requires more wiring when the task is primarily ingest, index, and query.

| Scenario | LlamaIndex 0.10 | LangChain 0.3 (assembly) | LangGraph 0.2 |

|---|---|---|---|

| Ingest + query (baseline) | ~5 lines (documented) | ~15–25 lines (estimated, composable) | Not the right tool alone |

| Add reranking | Drop-in module | Additional chain component | Not the right tool alone |

| Add stateful multi-step | Requires LangGraph or custom | Add LangGraph | Native |

| Human-in-the-loop | Not native in Workflows | LangGraph checkpoint | Native |

Statefulness, Checkpointing, and Human-in-the-Loop Control

LangGraph is unambiguously the production layer for stateful orchestration. Its persistence docs enumerate exactly what checkpointing delivers: "human-in-the-loop workflows, conversational memory, time travel debugging, and fault-tolerant execution." LangGraph checkpointers "save a checkpoint at every superstep" — providing a recoverable state snapshot at each discrete execution step.

Checkpointers provide the persistence layer for LangGraph. LlamaIndex Workflows, by contrast, are event-driven and lightweight. They handle multi-step RAG flows with low boilerplate, but the documentation reviewed for this comparison does not describe checkpoint semantics equivalent to LangGraph's persisted graph state.

| Capability | LangGraph 0.2 | LlamaIndex Workflows | Plain LangChain |

|---|---|---|---|

| Persistent state across requests | Yes (via checkpointers) | No — event-driven, in-memory | No |

| Resumable execution after failure | Yes — checkpoint at every superstep | No native mechanism | No |

| Human-in-the-loop pause/resume | Yes — documented, first-class | Not documented | No |

| Time-travel debugging | Yes — replay from any checkpoint | No | No |

| Orchestration overhead | Graph wiring required | Minimal boilerplate | Chain assembly |

For SLA recovery, the operational implication is direct: a LangGraph workflow that fails mid-execution resumes from its last checkpoint. A LlamaIndex Workflow or plain LangChain chain that fails mid-execution restarts from the beginning unless the application layer implements its own checkpointing.
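The difference between resuming and restarting can be made concrete with a toy pipeline. The following is a minimal sketch in plain Python, not LangGraph's checkpointer interface: the step names, the `run_from` helper, and the simulated one-time failure are all hypothetical. It saves a snapshot after every successful step and, on failure, continues from the last snapshot instead of step 0.

```python
import copy

calls = {"retrieve": 0, "generate": 0, "send": 0}
failures_left = {"send": 1}  # simulate: the send step fails once, then succeeds


def make_step(name):
    def step(state):
        calls[name] += 1
        if failures_left.get(name, 0) > 0:
            failures_left[name] -= 1
            raise RuntimeError(f"{name} failed")
        return {**state, name: "done"}

    return step


STEPS = [(name, make_step(name)) for name in ("retrieve", "generate", "send")]


def run_from(index, state, checkpoint):
    """Run steps starting at `index`, saving a snapshot after each success."""
    for i in range(index, len(STEPS)):
        _, fn = STEPS[i]
        state = fn(state)
        checkpoint["next_index"] = i + 1
        checkpoint["state"] = copy.deepcopy(state)
    return state


checkpoint = {"next_index": 0, "state": {}}
try:
    final_state = run_from(0, {}, checkpoint)
except RuntimeError:
    # Resume from the last saved snapshot rather than restarting at step 0.
    final_state = run_from(checkpoint["next_index"], checkpoint["state"], checkpoint)

print(calls)
print(final_state)
```

In this run the retrieval and generation steps execute once each and only the failed step is retried; a restart-from-beginning strategy would have executed all three steps twice.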

Observability and Debugging in Production

The observability gap between the two stacks is architectural, not incidental. LangSmith provides first-party tracing, eval, and trajectory inspection for the entire LangChain/LangGraph stack in one product. LlamaIndex routes observability through callbacks, OpenInference, and external platforms.

| Dimension | LangSmith (LangChain/LangGraph) | Langfuse (LlamaIndex integration) | Arize Phoenix / LlamaTrace |

|---|---|---|---|

| Integration depth | First-party, auto-instrumented | Native LlamaIndex integration | OpenInference callback |

| Agent trajectory eval | Yes (documented) | Query/span tracing | Span tracing, Phoenix eval suite |

| Stack coverage | Full LangChain + LangGraph | LlamaIndex + other frameworks | LlamaIndex + other frameworks |

| Hosting model | Managed SaaS (LangSmith) | Self-hosted or managed | Managed (LlamaTrace) or OSS |

| Configuration surface | Single product | Separate setup per project | Separate setup per project |

For incident response, first-party observability can reduce mean time to diagnosis because trace context, eval results, and agent trajectories live in one system correlated by run ID. Multi-tool stacks require teams to join traces across systems manually, which adds friction under SLA pressure.

When to Choose LangChain, LlamaIndex, or a Hybrid Stack

LangChain and LlamaIndex can be used together, and for applications where retrieval and orchestration have distinct complexity profiles, the hybrid pattern is the strongest production architecture. The official LangGraph README confirms it "integrates seamlessly with any LangChain product," and LlamaIndex's modular design makes its query engines and indices straightforward to call from within a LangGraph node.

| Use case | Recommended stack | Rationale |

|---|---|---|

| Document Q&A, multi-index search, ingestion-heavy | LlamaIndex 0.10 | Purpose-built retrieval, lowest code volume, 5-line baseline |

| Stateful agents, approval workflows, multi-step branching | LangChain + LangGraph 0.2 | Checkpointing, human-in-the-loop, fault-tolerant execution |

| High-volume RAG with downstream stateful decision gates | LlamaIndex retrieval + LangGraph orchestration | Clean retrieval/orchestration split, best-of-stack |

| LLM app needing agent evals and integrated tracing | LangChain + LangSmith | First-party observability, trajectory eval built-in |

Choose LlamaIndex First

Start with LlamaIndex when retrieval is the dominant complexity axis: document-centric Q&A systems, pipelines that ingest large corpora with frequent updates, or applications that route queries across multiple indices with reranking. The 5-line documented baseline means time-to-first-working-RAG is the lowest of any option, and the purpose-built retrieval primitives keep the codebase maintainable as retrieval logic evolves.

| Signal | LlamaIndex fit |

|---|---|

| Retrieval is the primary product feature | Strong |

| Multiple index types needed | Strong |

| Reranking required | Strong (native module) |

| Stateful multi-step orchestration required | Weak — consider adding LangGraph |

Choose LangChain plus LangGraph First

Start with LangChain and LangGraph when the application's dominant complexity is orchestration: stateful agents that branch on retrieved content, workflows with human review gates, or systems that must survive partial failures and resume deterministically. LangGraph's checkpointing "enables human-in-the-loop workflows, conversational memory, time travel debugging, and fault-tolerant execution" — these are the features that matter when the cost of a dropped workflow is measured in compliance risk or user-facing errors.

| Signal | LangChain + LangGraph fit |

|---|---|

| Human approval gates required | Strong |

| Multi-turn agent with session persistence | Strong |

| Complex branching control flow | Strong |

| Pure retrieval, no statefulness needed | Weak — LlamaIndex is faster to ship |

Use a Hybrid Stack When Retrieval and Orchestration Split Cleanly

The hybrid pattern — LlamaIndex handling retrieval, LangGraph handling orchestration — is the right architecture when both complexity axes are present. LlamaIndex's QueryEngine or VectorStoreIndex runs inside a LangGraph node, providing context to downstream nodes that perform routing, approval, or output generation.

The integration boundary is clear: LlamaIndex is responsible for producing retrieved context; LangGraph is responsible for deciding what to do with it. The boundary is architecturally straightforward but is not standardized by a single official shared adapter in the current documentation — teams must write the node wrapper themselves.
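A node wrapper of the kind teams must write themselves might look like the sketch below. It rests on two assumptions: that a graph node is a function receiving the current state and returning a partial update, and that the retrieval side exposes a `query(str)` method whose result can be converted with `str()`. `QueryEngineLike`, `RagState`, `FakeQueryEngine`, `make_retrieval_node`, and `routing_node` are hypothetical names, and the stub engine stands in for a real query engine so the example runs with no framework installed.

```python
from typing import Protocol, TypedDict


class QueryEngineLike(Protocol):
    """Structural type for anything with a query(str) method (assumed interface)."""

    def query(self, question: str) -> object: ...


class RagState(TypedDict, total=False):
    question: str
    context: str
    route: str


class FakeQueryEngine:
    """Stand-in so the sketch runs without any framework installed."""

    def query(self, question: str) -> str:
        return f"[stub context for: {question}]"


def make_retrieval_node(engine: QueryEngineLike):
    """Build a node that owns retrieval and nothing else."""

    def retrieval_node(state: RagState) -> RagState:
        response = engine.query(state["question"])
        # str() so response objects and plain strings are handled alike.
        return {"context": str(response)}

    return retrieval_node


def routing_node(state: RagState) -> RagState:
    """Orchestration side: decide what to do with the retrieved context."""
    needs_review = "refund" in state["question"].lower()
    return {"route": "human_review" if needs_review else "auto_send"}


retrieval_node = make_retrieval_node(FakeQueryEngine())
state: RagState = {"question": "How do I request a refund?"}
state.update(retrieval_node(state))  # applied by hand here; a graph runtime would merge updates
state.update(routing_node(state))
print(state["route"], state["context"])
```

Keeping the engine behind a narrow protocol means the seam can be unit-tested with a stub and re-verified whenever either framework is upgraded.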

Production Note: In the hybrid pattern, instrument both halves independently. LlamaIndex spans (via Langfuse or Arize Phoenix) cover retrieval latency and relevance signals; LangSmith traces cover graph execution and agent trajectory. Correlate the two using a shared trace_id passed through the LangGraph state object into the LlamaIndex query call. Without this correlation, retrieval latency and orchestration failures appear in separate systems with no causal link.
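The correlation idea can be sketched independently of any tracing product. In the plain-Python example below, the `spans` list stands in for two separate observability backends, and `record_span`, `retrieve`, `orchestrate`, and the tracer labels are hypothetical names; no LangSmith, Langfuse, or Phoenix API is used. The point is only that one identifier, created once and carried in the state, is attached to spans on both sides.

```python
import uuid

spans = []  # stand-in for two separate tracing backends


def record_span(system: str, name: str, trace_id: str) -> None:
    spans.append({"system": system, "name": name, "trace_id": trace_id})


def retrieve(question: str, trace_id: str) -> str:
    """Retrieval half: tags its span with the ID it was handed."""
    record_span("retrieval-tracer", "retrieve", trace_id)
    return f"context for {question}"


def orchestrate(question: str) -> dict:
    """Orchestration half: creates the ID once and carries it in the state."""
    state = {"question": question, "trace_id": uuid.uuid4().hex}
    record_span("orchestration-tracer", "graph_run", state["trace_id"])
    state["context"] = retrieve(state["question"], state["trace_id"])
    return state


state = orchestrate("What changed in the policy?")
linked = [span for span in spans if span["trace_id"] == state["trace_id"]]
print(len(linked), sorted(span["system"] for span in linked))
```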

What the Current SERP Leaves Out

Most published comparisons reduce the decision to a slogan: "LangChain equals orchestration, LlamaIndex equals data." That framing is not wrong, but it is operationally incomplete. What it omits are the criteria that actually determine SLA impact.

Upgrade stability is unaddressed in most comparison posts. Both stacks have active release cadences, and neither official documentation set provides regression-rate statistics or compatibility guarantees across minor versions. Teams shipping to production need canary deployment practices for both, not a framework recommendation that assumes stability.

Observability cost is treated as a footnote. The LangSmith-versus-third-party-stack choice affects how quickly engineers diagnose production incidents. Joining traces across Langfuse and LangSmith for a hybrid stack requires explicit tooling decisions at project start — not a retrofit.

Hybrid deployment cost is ignored. The hybrid architecture is the right answer for many production systems, but it carries real overhead: two SDK dependency trees, two observability configurations, and an integration seam that must be tested across both frameworks' release cycles.

Watch Out: No unified SLA table exists across LangChain, LlamaIndex, and LangGraph in official documentation. Teams publishing SLA commitments for production RAG systems must build their own recovery and latency baselines through load testing rather than relying on framework documentation. The framework choice determines which recovery mechanisms are available (checkpointing, event replay, retry logic) — but the SLA numbers themselves come from your infrastructure, not the README.
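Building a latency baseline starts with percentile measurements taken against your own pipeline. The sketch below uses only the Python standard library; `measure_latencies`, `summarize`, and `fake_pipeline` are hypothetical names, and the fake pipeline is a placeholder for a real RAG call. A real load test would also need concurrency, warm-up, and realistic request mixes, none of which are modeled here.

```python
import statistics
import time


def measure_latencies(fn, requests):
    """Call fn once per request and return wall-clock latencies in seconds."""
    latencies = []
    for request in requests:
        start = time.perf_counter()
        fn(request)
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    # n=100 yields 99 cut points; index 49 is p50, 94 is p95, 98 is p99.
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98], "max": max(latencies)}


def fake_pipeline(question):
    """Placeholder for a real retrieve-and-generate call."""
    return sum(len(word) for word in question.split())


latencies = measure_latencies(fake_pipeline, ["how do refunds work"] * 200)
summary = summarize(latencies)
for name, value in summary.items():
    print(f"{name}: {value * 1000:.4f} ms")
```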

FAQ

Is LangGraph part of LangChain?

LangGraph is a distinct package in the LangChain ecosystem. It "can be used standalone, [and] also integrates seamlessly with any LangChain product." It is not bundled with the langchain package — teams install langgraph separately. The relationship is ecosystem membership, not inheritance: LangGraph is the stateful graph runtime, LangChain provides component interfaces and integrations, and LangSmith provides observability across both.

Can LangChain and LlamaIndex be used together?

Yes. The most common integration pattern routes LlamaIndex's QueryEngine or VectorStoreIndex as a retrieval tool inside a LangGraph node. LlamaIndex handles ingest, indexing, and retrieval; LangGraph handles control flow, checkpointing, and human-in-the-loop gates. The integration boundary is explicit and stable, though no official shared adapter standardizes it — teams implement the node wrapper directly.

What is the difference between LangChain and LlamaIndex?

LangChain is a composable component framework for agents and LLM-powered applications; its production value is in orchestration, chaining, and the broader LangGraph/LangSmith ecosystem. LlamaIndex is a retrieval-centric framework with purpose-built index types, query engines, and lightweight Workflows; its production value is in reducing the code surface for retrieval-heavy pipelines. LangChain assembles retrieval from generic components; LlamaIndex treats retrieval primitives as first-class objects.

Is LangChain better than LlamaIndex?

Neither framework dominates the other unconditionally. LangChain/LangGraph is the stronger choice when stateful orchestration, human-in-the-loop control, or fault-tolerant multi-step execution is the primary requirement. LlamaIndex is the stronger choice when retrieval depth and ingest-query pipeline maintainability are the primary requirements. For production systems where both matter, the hybrid pattern is the correct answer rather than a single-framework commitment.

Sources & References

- LangChain GitHub Repository — Official README describing LangChain's component model, LangSmith observability, and LangGraph ecosystem integration

- LangGraph GitHub Repository — Official README confirming standalone use and seamless LangChain product integration

- LangGraph Persistence and Checkpointing Documentation — Canonical source for checkpointing semantics, fault-tolerant execution, and human-in-the-loop capabilities

- LangGraph.js Checkpoint API Reference — Technical reference confirming per-superstep checkpoint behavior

- LlamaIndex Python Framework Documentation — Canonical source for high-level API, retrieval primitives, and module extension points

- LlamaIndex TypeScript Workflows Documentation — Event-driven Workflow abstraction specification

- LlamaIndex Callbacks and Observability Documentation — Native callback system for tracing and debugging

- LlamaIndex OpenInference Callback Documentation — Integration path to Arize Phoenix and observability platforms

- Langfuse LlamaIndex Integration — Third-party observability integration documentation

- PremAI: LangChain vs LlamaIndex 2026 Production RAG Comparison — External practitioner comparison that is not used as factual evidence here

Pro Tip: For orchestration decisions — checkpointing semantics, graph node design, human-in-the-loop patterns — the LangGraph persistence docs and LangGraph GitHub README are the authoritative sources. For retrieval decisions — index types, query engine configuration, reranking — the LlamaIndex Python framework docs are canonical. Neither framework's documentation adequately covers the hybrid integration boundary; treat the node-wrapper implementation as your team's responsibility to test and version.
